Is the Prompt Outdated? GPT-5.5 Now Has Intuition—Just Specify the Goal and AI Takes Over Automatically

04/27 2026

Edited by | Key Focus Editor

Recently, OpenAI President and Co-founder Greg Brockman disclosed several core details about GPT-5.5 for the first time in a special interview on the Big Technology Podcast.

Greg Brockman stated that the AI industry's two-year phase of piling theoretical intelligence into models has come to an end, and that AI is now ready to take over concrete execution tasks. AI is shifting from a mere "brain" into a new form of intelligent application.

Greg Brockman claimed that in practical applications, GPT-5.5 demonstrates extremely strong intuition and contextual understanding capabilities, allowing humans to completely bid farewell to cumbersome prompt engineering. This signifies a fundamental change in human-computer interaction: users now only need to set overall goals, and the model can automatically take over and solve problems end-to-end.

Below are the core highlights we have compiled from this in-depth interview:

1. GPT-5.5's breakthrough lies in truly surpassing the practicality threshold for commercial tasks

In the past, large models relied heavily on complex prompt engineering for step-by-step guidance. Now, with deeper contextual and intuitive understanding, users only need to state an overall goal, and the model can autonomously operate a browser, handle complex spreadsheets, or create presentations. In this new workflow, AI forms the backbone and brain of execution, while humans are freed from individual clicks and writing and become "supervisors." Personal productivity will be dramatically amplified, with each individual effectively managing a fully automated digital enterprise.

2. Open-source distillation cannot replicate end-to-end system capabilities

Open-source models are using distillation to catch up quickly, but OpenAI's real competitive edge does not lie in the parameters of any single model. Brockman stated that distillation alone cannot replicate GPT-5.5's real-world performance. The real barrier is "end-to-end system co-design": the seamless coordination of compute cluster scheduling, data pipelines, organizational structure, and safety alignment. This systems-engineering capability for continuously testing and iterating on frontier AI ("the machine that builds machines") represents a generational gap that the open-source community cannot easily bridge.

3. Scalable deployment must be strongly tied to enterprise-level IT risk control

As AI systems gain more operational permissions, safety and controllability become core issues. Unlike Anthropic, which has withheld its latest model from public release, OpenAI adheres to "iterative deployment" and advocates putting models in the hands of network defenders first to strengthen the resilience of the real-world ecosystem. A more critical challenge is management at scale: when the autonomous agents inside an enterprise grow from a handful to hundreds of thousands, existing management models will inevitably fail. Large-scale agent autonomy must therefore be tightly coupled with strict observability and enterprise-level IT governance frameworks, so that the work of digital employees stays within sandboxes under human supervision.

4. Trading compute scale for speed in solving humanity's challenges

The world is entering a new phase driven by compute: the more compute invested, the faster problems are solved. Humanity's upper limits in science and engineering will depend directly on the ceiling of available compute. Taking healthcare as an example, dedicated compute from gigawatt-scale data centers could in future allow AI to reason continuously, consult expert data, and design experiments over months in order to tackle complex diseases like Alzheimer's. Compute will replace traditional resources as the core infrastructure for handling everyday business tasks and major scientific problems, and global demand for compute faces long-term structural growth.

Below is the transcript from Greg Brockman's interview:

1. OpenAI's Agent Roadmap

Alex: In this episode, OpenAI President and Co-founder Greg Brockman joins us for a deep dive into GPT-5.5, the widely discussed Spud model, looking at its capabilities and what it means for OpenAI. Greg, good to see you. Welcome back to the show.

Greg Brockman: Thanks for having me. Hope this isn't too urgent a situation.

Alex: Let's start here. Can you confirm that GPT-5.5 is Spud?

Greg Brockman: Yes. GPT-5.5 is an amazing model. I think in many ways, it's a step toward a new way of using computers to get work done. It's a whole new category of intelligence. It's extremely useful in all aspects of programming and debugging, showing great initiative in solving very difficult and tricky problems, truly able to solve problems end-to-end with minimal instructions.

But what's most striking to me isn't necessarily the improvement in its programming capability; I think that was expected. What's most striking is that it now truly surpasses the practicality threshold for all kinds of general-purpose applications. It is better at creating slides and spreadsheets, and far stronger at operating a computer: using browsers and clicking through applications that were previously hard for AI to run. So I think we're indeed witnessing the rise of this new way of using computers, and it all starts with this core intelligence.

Alex: Last time we spoke, you mentioned this was actually the culmination of a two-year research process. Was this planned two years ago? Does OpenAI have such long planning cycles?

Greg Brockman: Yes, our planning does have a very long-term vision. Note that we stack many research ideas and bets across various timescales, achieving continuous progress at every level of the technology stack. So what GPT-5.5 represents isn't the endpoint; in many ways, it's a starting point. This is actually a step toward the kind of models we foresee coming in the next few months. Everyone can expect even more significant capability improvements across broader areas, covering all aspects of tasks models can accomplish. This will be very exciting. We're constantly thinking about how to make the products we produce more useful for real-world applications, real users, and practical uses.

Alex: Can you specifically share what aspects we should focus on in the coming months? If this is just a beginning, what is it the beginning of?

Greg Brockman: Our grand vision is reflected in many things, not just models. You can view the model as the brain, and the systems, testing frameworks, and super app as the body built around it, making it a useful AI. This is the transformation currently happening: shifting from the language models produced by labs like ours to truly practical AI, an assistant that can actually help you based on your instructions, strive to achieve your goals, and take action. You can see that Codex is no longer limited to programmers; it's applicable to anyone who uses a computer. It's not perfect yet. It still gets some tasks wrong that it should be able to handle, and sometimes its personality isn't exactly what you want. It's extremely powerful and does many amazing things, but you still need to spend some time carefully reading what it communicates to confirm how it solved a problem. We're very clear on how to make these aspects better. From 5.4 to 5.5, we've already made very significant progress. Going forward, we'll achieve even more significant improvements in various aspects, making these models even more practical. Internally, we've been thinking deeply about end applications.

One thing that has changed over the past twelve to eighteen months is that we used to focus solely on improving benchmarks, making these models more powerful at the brain level. Our current focus is putting them into real-world applications: thinking about how people in finance, sales, marketing, and every other function use computers, and how we can assist with that work. We're thinking about how to make models helpful not just in theory but with practical experience, able to recognize what an excellent outcome looks like.

I think we're moving toward a situation where workers will become supervisors. You're almost the CEO of this automated company, operating according to your goals. You still hold the dominant position and bear responsibility, needing to think about whether this is what you want and whether the work meets standards. But regarding which specific buttons were clicked, the specific way code was written, or the specific mechanics of spreadsheet operations, if these aren't important to you, you can abstract yourself from them and focus solely on evaluating whether the outcomes meet expectations. So it's like adding leverage for every worker.

2. End-to-End Co-Design is Worth Investing In

Alex: Okay. As you mentioned, this is the crystallization of two years' work. For our audience, let me explain that there are two different types of AI training. The first is pre-training, where you simply have the model predict the next word to make it a generalist and intelligent; the second is reinforcement learning, allowing it to truly execute and attempt to complete different tasks, rewarding it when it does so outstandingly and effectively, and to some extent, it learns how to complete these tasks. So are you basically saying that during this period, OpenAI loaded a large amount of task-specific reinforcement learning content into this model, and this is what produced the results you described?

Greg Brockman: I would express it in a slightly different way. There are many steps in the entire process, including pre-training, mid-training, reinforcement learning, and data collection. These different links work together to ultimately produce results and determine how the model connects with the world. This is also key to making it practical. We've been investing in each of these aspects, not just focusing on the individual capabilities dedicated to each link, but truly having a team that comes together to examine the entire technology stack, discussing how we can make it more useful for real-world applications.

So this isn't determined by any single thing we did. This is actually about overall effort. Like building a car, it's not just about whether you have a better engine. You can build a great engine, but if the rest of the car doesn't reach the engine's quality level, it's useless. This is the real innovation: it's end-to-end co-design, with all links combined in a repeatable way to make the model better and better to serve our users.

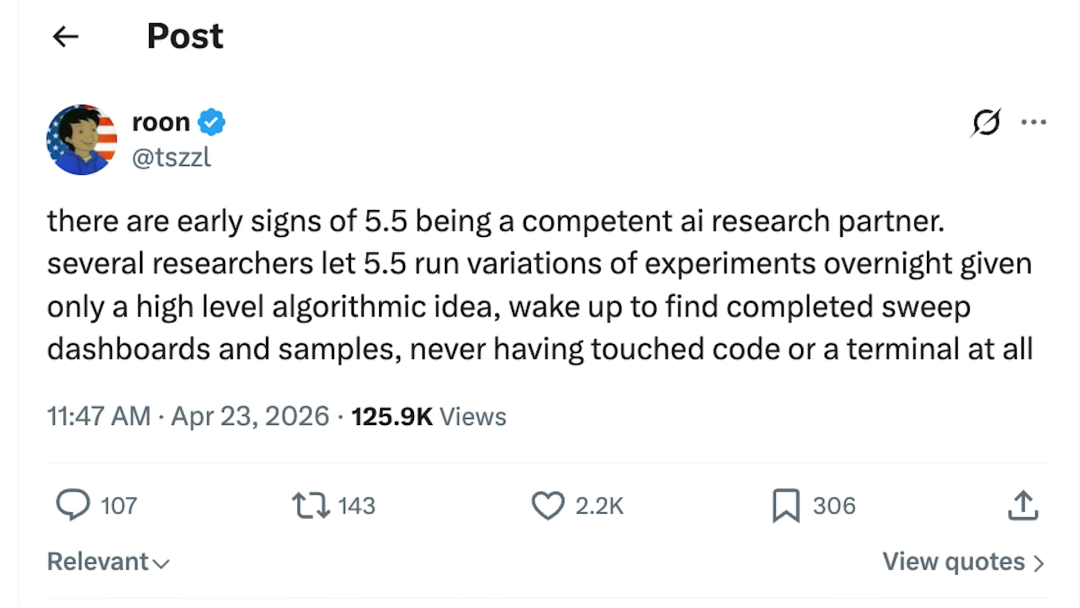

Alex: You joined me in a conference call with many media members earlier. One interesting thing was that you started by saying the model could understand your needs more intuitively, without needing detailed explanations word for word as in the past. Here's a tweet from roon: Early signs indicate that 5.5 is a competent AI research partner. Several researchers had 5.5 run various variant experiments overnight, providing only high-level algorithmic concepts, and woke up to see complete experimental groups, dashboards, and samples without ever touching code or terminals. Regarding this, it's a two-part question: how did you achieve this? Does this mean prompt engineering is outdated?

Greg Brockman: First, when we say there's a new category of intelligence, this is our true intention. Models are becoming easier to use intuitively because they have deeper understanding capabilities, able to truly examine the context and figure out what they're being asked to do.

As for the second part, is prompt engineering outdated? I actually think in some ways, prompt engineering might be even more dynamic than before. Today you spend a lot of time trying to explain to the computer exactly what you want, stuffing in all kinds of context about the current situation and requirements. You wonder why you have to explain these things to the computer. The point is that the computer should help me with my work. I don't want to break down tasks and teach it step by step; I just want to point it in a direction and have it handle the details and deliver results, giving some form of feedback along the way and driving the underlying execution. So the future of prompt engineering lies in getting more from the model with less effort, while the same effort still has a multiplier effect and yields even greater improvements. We're now at the frontier of what current models can do.

Alex: Okay. Let me briefly talk about the economics of building such a model. Although you haven't said how much funding or compute went into training this massive model, we can safely assume it was a huge investment. There has always been a pattern: after these giant models are released, open-source model creators distill them, and open-source models end up only a few months behind the leading foundation models. Given such a huge investment, and given that the model's capabilities improve quite dramatically as you keep advancing, how do you maintain your competitive edge? In the long run, if this pattern just repeats over and over, what's the point?

Greg Brockman: My perspective is slightly different. I think the real investment lies in end-to-end co-design, building a system and a collaborative working method that combines developers and technology, part of which involves how to use massive supercomputers to produce these models.

Now, it's not about obtaining model outputs and distilling them to simply get models with completely identical capabilities but smaller sizes and faster operation. If that were the case, we would have done it already, and providing services would be much easier. Although distillation techniques involve a lot of brilliant tricks, the main point I want to express is that what we're truly investing in is the machine that builds machines.

On the deployment side, we've thought deeply about safety guarantees and mitigation measures, conducting extensive testing in real-world scenarios for various aspects where the model might be misused. We've been working on this for years, thinking deeply about these issues in cybersecurity and biology. This effort is reflected in our publicly available Preparedness Framework, which stipulates how we handle model uses and how we attempt to maximize benefits and reduce risks. So everything we do needs to be closely linked, concerning how to ensure continuous progress while making models widely accessible. Because we deeply believe this technology can empower people, benefit humanity, and raise everyone's living standards.

3. Model Moat and Distilled Models

Alex: Going back to the earlier topic, the pricing for this model is, to my knowledge, double that of the previous model, GPT-5.4. From an economic or business standpoint, since you’ve already invested so much infrastructure into training models, how do you plan to address the threat if open-source models can deliver slightly inferior but nearly comparable performance at a lower cost?

Greg Brockman: Looking back at our history, progress hasn’t been driven by competition but by our own desire to improve. At comparable levels of intelligence, our prices have dropped significantly year-over-year—sometimes even by two orders of magnitude, or 100 times. Yet the typical Jevons Paradox has been at play: when you reduce the cost of something, it sparks far more activity than before.

We continually see that intelligence pays off—even small improvements in the model’s capabilities can have an enormous impact on the types of tasks it can now accomplish. That’s really the heart of what version 5.5 is about. People might see this as just an incremental improvement in intelligence, but I believe it will lead to a massive leap in practical utility. In fact, calling it an “incremental update” is quite conservative. While it’s only a 0.1 version change, it vastly underestimates the magic this model demonstrates in real-world applications.

I’d push back against the idea that these numbers mean OpenAI is under pressure to go public and that the era of free lunches is over. Our business model is actually quite simple: we lease and build compute resources, then resell them with a margin. As long as there’s scalable demand for intelligence and problems to solve, this model works. At every stage, we’ve seen demand far outstrip supply, allowing us to keep scaling up our compute capacity.

My core directive is to have the team think about how to add value above raw compute and ensure we maintain a positive operating margin. This isn’t about competition in the market; it’s about whether we can efficiently turn compute into intelligence, where the output value exceeds the input cost. We’re constantly striving to build more efficient models, and market competition drives tremendous innovation, leading to greater usage and overall ecosystem growth. You can see this in our revenue numbers and those of others in the industry.

4. Cybersecurity Risks of Models

Alex: Greg, I’d like to ask about the cybersecurity implications. OpenAI and Anthropic have taken very different approaches. Anthropic’s latest giant model, Mythos, hasn’t been released to the public, whereas your Spud, or 5.5, model is out in the open. I’ll ask you directly: Could releasing such a powerful model without a gradual, step-by-step rollout lead to significant cyberattacks?

Greg Brockman: I’d take issue with the premise of the question. As part of our Preparedness Framework, we’ve been investing in cybersecurity defenses for years. We were proactive long before we saw various capabilities emerging. We’ve always taken a very deliberate, step-by-step approach. Over the past few weeks, we’ve expanded our trusted access programs for cybersecurity. Overall, we believe in the resilience of the ecosystem while also recognizing the need for gradual progress.

As models continue to get more powerful, we want to put superior models in the hands of defenders to ensure critical infrastructure can be protected. When models are put into people’s hands, they explore them in ways that no one could have imagined without that access. So a step-by-step approach must be used, constantly advancing down the pipeline while introducing additional safeguards to maximize benefits and reduce risks.

Our team has been thinking deeply about the cybersecurity implications of models. We believe in iterative deployment as part of bringing improved models into practical use as they advance. We strongly believe in democratizing access—the ultimate purpose of creating this technology is to empower people and ensure it benefits all of humanity. So we’ve been working hard to figure out how to safely and responsibly make this technology widely available in the world.

Alex: Right. It seems your team doesn’t favor Anthropic’s approach to deploying Mythos. To quote Sam, claiming to have built a bomb and preparing to drop it, then selling bomb shelters to select customers for $100 million is excellent marketing. But the alternative is that developers can’t consider everything, and vulnerabilities will inevitably emerge that only real-world deployment can uncover. So starting with a small group of trusted testers before widespread deployment might make sense. What do you think?

Greg Brockman: The right answer here is nuanced and rooted in the technical details and the many factors at play. We need to think about the model evolution process for ourselves and other participants in the ecosystem. Having a small group with access might allow for high-leverage discovery and patch generation, but then how do you coordinate disclosure of that information across the industry?

I think moving to either extreme is rarely accurate; you need to apply the right tools for the specific situation. This isn’t the first time we’ve thought about this, nor will it be the last. Notably, our models have been in the hands of defenders for some time, and we’ve been building trusted access programs. The models we release have multiple safeguards built in and are actually not allowed to be used for cyberattacks.

In short, this reflects a difference in values. Do you want to put models in people’s hands and empower them, or do you want to centralize control and keep them out of the public’s hands? That’s probably the underlying tension in the debate. Any reflexive, extreme strategy is unlikely to bring the best outcomes for the world.

5. How to Trust Agents

Alex: Okay, I’d like to shift gears and talk about agents. To some extent, agents work best when given a high degree of autonomy. That makes sense on some level. But I’m curious, as agents are able to perform more tasks, access more files, and work across programs in the future, what level of trust is appropriate to place in them at this point?

Greg Brockman: Agents today are actually trending toward being quite reliable. While issues like prompt injection still exist as vulnerabilities, we’re actively patching them, and the models are becoming more resilient.

As models are given more responsibility and access to important context, it’s similar to managing employees. Having five trustworthy employees isn’t a problem, but if you have fifty thousand, you have to think about how to achieve good governance and oversight. As this super app becomes easier to use for anyone working on a computer, we’re also investing heavily in governance and oversight. For example, in our recently released Workspace Agents, businesses can define agents in the cloud and get a hosted Codex security sandbox to connect them to Slack for work. Watching it go viral within organizations has been really cool. When you see someone else’s agent, you can just copy it to create your own version. This provides an opportunity for excellent governance, where IT departments can see all the agents that have been created and their conversations, allowing them to set guardrails precisely. You need to gradually increase the responsibility given to agents and the diversity of tasks they collaborate on, while also maintaining security, reliability, observability, and oversight. If you don’t weave these together tightly, things will get out of balance.

Alex: Yeah, basically just go for it, but be cautious.

Greg Brockman: But you also have to be truly all-in. As you scale, the nature of prototyping and scaling makes you think about whether you still have the ability to oversee and understand the big picture. So you need to make sure you’re tuned in at every step and fully aware of what the team is doing.

6. The Future of the Compute Economy

Alex: Greg, let’s close with this. You mentioned a compute-driven economy. What does that specifically mean?

Greg Brockman: We’re moving toward a world where the more compute you throw at problems, the faster they get solved, and the upper bound on solving problems is determined by how much compute is available. Take drug discovery, for example—tackling complex diseases like Alzheimer’s is currently beyond human capability. But imagine you could leverage a gigawatt-scale data center and spend months or even a year dedicated to thinking through how to crack it. It wouldn’t just think at the brain level; it would consult world-class experts and even suggest wet lab experiments. This would undoubtedly have a profound, positive, and transformative impact on humanity.

Everyday tasks could be solved in the same way. The smartphone in your pocket would become a trusted agent that knows you, has context about your profile, and that you could turn to for health advice and reliable information. You could just talk to it, and it would proactively understand your goals and interests and help you. Compute will become the core resource at every scale, which shows just how much work computers can do on behalf of humans. This is the future we’re all collectively building.

Alex: Yeah, I guess that explains why you’re leading these massive infrastructure investments and gambles.

Greg Brockman: It’s still not enough; we’re going to feel the scarcity acutely. Already, people trying to use agents are hitting rate limits and feeling it. We’re working on behalf of all the companies in this space and those who want to use agents to do our best to ensure there’s enough to go around. We’re heading toward a world where compute is scarce, and we all have a role to play in trying to make it more available.

Alex: Greg, thanks for taking the time amidst your busy schedule. It’s been great talking to you. Thanks again for coming.

Greg Brockman: Likewise, it’s been a pleasure.