DeepSeek-V4: The Singularity of a Cambrian Explosion in China's AI Applications Is Approaching

04/27 2026

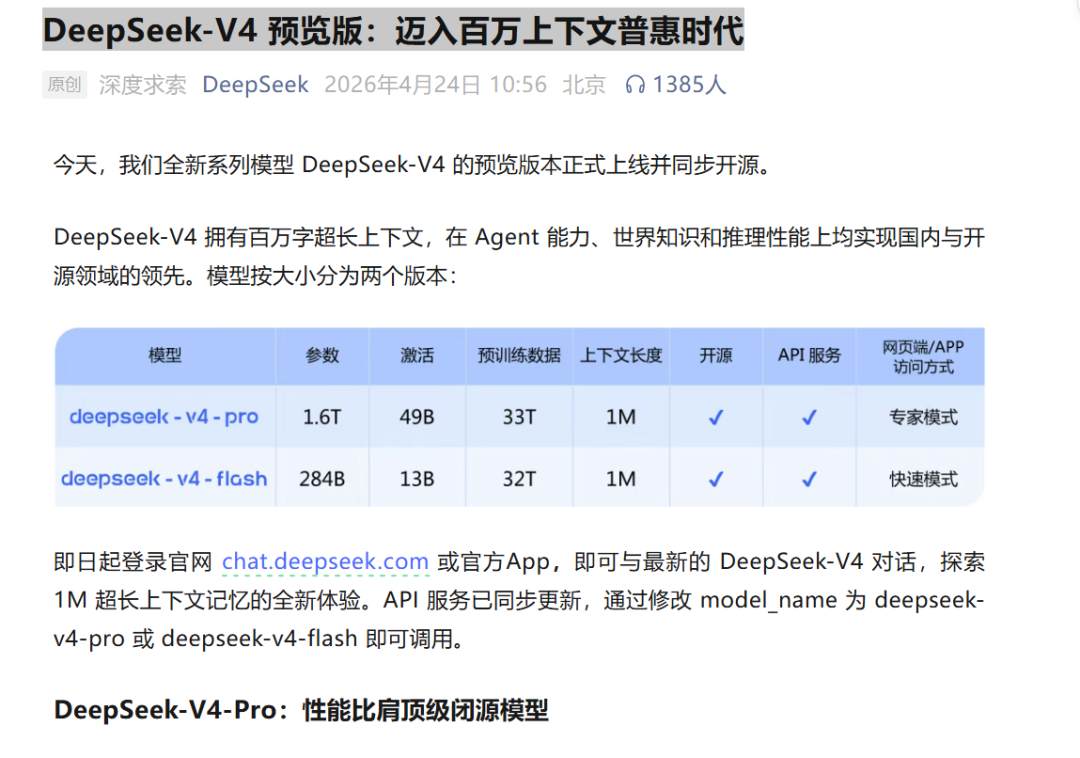

As noon approached on April 24, DeepSeek's official WeChat account released an announcement: 'DeepSeek-V4 Preview: Entering the Era of Universal Access to Million-Context AI.' The long-awaited V4 has finally arrived.

In my view, the sentence written at the end of this announcement article matters more than all the benchmark data above it:

'Not seduced by praise, not intimidated by criticism, following the path, staying upright.'

This was the organization's only public response after fifteen months of speculation, doubt, and negative predictions. Read in a broader context, its subtext is roughly: 'We know what we're doing, and we don't care what you say.'

And the answer V4 provides is indeed not a conventional iteration.

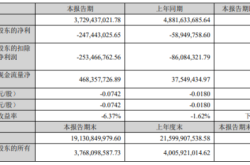

In my view, V4's core significance does not lie in its benchmark scores, even though V4-Pro scored 90.2% on Apex Shortlist and reached a Codeforces rating of 3206, putting it ahead of every other open-source model. The real watershed lies in three signals:

First, cost. At a 1M-token context, V4-Pro's per-token inference FLOPs are only 27% of V3.2's, and its KV cache is just 10% of V3.2's; V4-Flash goes further, at 10% and 7%, respectively. Expanding the context from 128K to 1M tokens should in principle raise the load nearly eightfold, yet per-token compute at that length is now a fraction of what the previous generation needed. In AI, capability gains usually cost more compute. V4 breaks this rule. This inverted efficiency curve makes many Agent scenarios that once existed only in whitepapers economically feasible.
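
The ratios above lend themselves to quick arithmetic. The Python sketch below is illustrative only: it normalizes V3.2 at a 1M-token context to 1.0, plugs in the 27%/10% and 10%/7% figures quoted in the article, and derives two things: the context growth factor (treating 128K as 128,000 tokens; with 131,072 it is about 7.6x, still "nearly eightfold") and, under the simplifying assumption that KV-cache memory is the only binding constraint, how many 1M-token sessions fit in the memory one V3.2 session would use. The function names are hypothetical, not part of any DeepSeek tooling.

```python
# Per-token inference FLOPs and KV-cache size quoted in the article,
# normalized so that V3.2 at a 1M-token context = 1.0.
RELATIVE_TO_V32 = {
    "V4-Pro": {"flops_per_token": 0.27, "kv_cache": 0.10},
    "V4-Flash": {"flops_per_token": 0.10, "kv_cache": 0.07},
}


def context_growth(old_tokens: int, new_tokens: int) -> float:
    """Factor by which the context window grows."""
    return new_tokens / old_tokens


def sessions_per_v32_kv_budget(kv_fraction: float) -> float:
    """How many 1M-token sessions fit in the KV-cache memory of one V3.2 session.

    ASSUMPTION: KV-cache memory is the only binding constraint (a simplification).
    """
    return 1.0 / kv_fraction


if __name__ == "__main__":
    print(f"128K -> 1M context: {context_growth(128_000, 1_000_000):.2f}x")
    for name, ratios in RELATIVE_TO_V32.items():
        print(
            f"{name}: {ratios['flops_per_token']:.0%} of V3.2 FLOPs per token, "
            f"~{sessions_per_v32_kv_budget(ratios['kv_cache']):.1f} sessions per V3.2 KV budget"
        )
```

Real serving capacity also depends on weights, activations, and batching, so the session counts are an upper-bound intuition rather than a capacity plan.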

Second, chips. V4 is deployed entirely on domestic chips such as Huawei's Ascend and Cambricon's, with its software stack moving from CUDA to the CANN framework. This is the world's first trillion-parameter MoE model deployed purely on domestic computing power. As Jensen Huang put it: 'This is a terrible outcome for the U.S.' V4 proves one thing: China's AI can sustain its underlying compute cycle without CUDA. For the industrial chain, this signal is far more disruptive than any benchmark score.

Third, Agent. V4-Pro already ranks as the best open-source model in Agentic Coding evaluations; in internal use, its experience surpasses Sonnet 4.5, and its delivery quality approaches Opus 4.6 in non-thinking mode. V4 has also been specially optimized for mainstream Agent frameworks such as Claude Code, OpenClaw, and CodeBuddy. This is not a model that 'just chats' but one that 'gets work done.' Starting with V4, DeepSeek's positioning clearly shifts toward Agent infrastructure.

Together, these three signals point to a more fundamental conclusion: after V4, the singularity of a Cambrian explosion in China's AI applications has arrived.

01

Singularity

This conclusion requires explanation.

Roughly 540 million years ago, the Cambrian explosion occurred. In what was nearly an instant on a geological timescale, a vast array of animal phyla appeared in the oceans; more precisely, identifiable fossil assemblages suddenly show up in the fossil record. Scholars broadly agree that the Cambrian explosion was not triggered by a single factor but by several conditions being met at once: oxygen levels, ocean chemistry, available ecological niches, and Hox gene evolution. Species diversity surged because the underlying environment had crossed a critical threshold.

Today, the underlying environment of the AI industry is reaching a similar threshold.

First is the cost threshold. V4-Flash is priced at 1 yuan per million tokens for input (cache miss) and 2 yuan for output. V4's full adaptation to domestic chips essentially proves that during the Agent era, reliance on NVIDIA's high-end GPUs for inference is not inevitable.

At these prices, a developer can process a context as long as 'The Three-Body Problem' for just a few yuan. Once costs fall this low, the question shifts from 'what can be done' to 'why not try,' and that shift is the true foundation for Agent deployment.
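
To make the pricing concrete, here is a minimal Python sketch of per-request cost using the V4-Flash list prices quoted above (1 yuan per million input tokens on a cache miss, 2 yuan per million output tokens). It assumes, pessimistically, that every input token is billed at the cache-miss rate; the workload size in the example is hypothetical.

```python
# V4-Flash list prices quoted in the article, in yuan per million tokens.
INPUT_CACHE_MISS_YUAN_PER_M = 1.0
OUTPUT_YUAN_PER_M = 2.0


def request_cost_yuan(input_tokens: int, output_tokens: int) -> float:
    """Cost of one request, billing every input token at the cache-miss rate."""
    return (input_tokens * INPUT_CACHE_MISS_YUAN_PER_M
            + output_tokens * OUTPUT_YUAN_PER_M) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: a full 1M-token context plus a 50K-token answer.
    print(f"{request_cost_yuan(1_000_000, 50_000):.2f} yuan")
```

Even a request that fills the entire 1M-token window stays in the range of about one yuan under these assumptions.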

Second is the performance threshold. In Agentic Coding evaluations, V4-Pro is already the strongest open-source model, with internal user experience surpassing Sonnet 4.5. At a 1M-token context, V4-Pro's per-token inference FLOPs are only 27% of V3.2's, a globally leading efficiency result. In mathematics, STEM, and competitive coding evaluations, V4-Pro surpasses every open-source model with published benchmark results and rivals the world's top closed-source models.

Taken together, these facts mean the model's 'intelligence density' (the effective intelligence produced per unit of compute) has crossed a critical point.

Finally is the toolchain threshold. V4 has undergone special optimizations for mainstream Agent frameworks like Claude Code, OpenClaw, and CodeBuddy, with improvements in code tasks and document generation. Million-context support has become standard across all official services.

This is precisely the prerequisite for Agents that work autonomously over long periods. The model is no longer a 'toy'; it can be deployed directly in production environments.

All three thresholds have been breached at once: costs are low enough to scale, performance is strong enough to handle real work, and the ecosystem is ready for deployment. This is not a linear improvement but a phase change.

An interesting detail: in V4's technical report, DeepSeek itself admits that the model's capabilities still trail GPT-5.4 and Gemini-3.1-Pro by roughly 3 to 6 months. Far from undercutting V4, this admission shows that its significance lies not in catching up but in solidifying a foundation of 'basic intelligence.' Once that foundation is in place, applications will emerge on top of it spontaneously.

History is clear on this point: every qualitative change in underlying infrastructure has triggered a Cambrian-style explosion of applications. Amazon Web Services pushed computing costs below a threshold and ignited a global SaaS startup boom; 4G data prices falling below a threshold opened the era of short video and live-streaming e-commerce. Today, DeepSeek V4 is pushing the cost of basic intelligence below a similar threshold, and this time, what is being disrupted is intelligence itself.

02

Earthquake

Let's zoom in to examine the first signals of this Cambrian explosion.

Most noteworthy is the speed of industrial chain restructuring: After V4 adapted to Huawei's Ascend 950PR, domestic chip companies like Cambricon, Hygon Information, and Moore Threads accelerated their adaptations in sync, while giants like Alibaba, ByteDance, and Tencent ramped up procurement of Ascend chips.

This is not just a model release by one company but the activation of an entire domestic computing power industrial chain.

The chain reactions at the application layer are just as intense. The most direct impact is a qualitative change in Agent economics. At V4-Flash's API price per million tokens, a task that requires reading an entire medium-sized code repository costs just a few yuan. Under this cost structure, 'letting Agents learn by trial and error' becomes reasonable from an engineering standpoint for the first time, laying the economic foundation for large-scale Agent deployment.
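
The trial-and-error argument can be sketched the same way. The Python below estimates the worst-case cost of an Agent that re-reads a whole repository on every attempt, using the V4-Flash prices quoted earlier and no caching. Two loudly stated assumptions: the ~4-characters-per-token ratio is a rough heuristic (real tokenizers vary, especially on code and Chinese text), and the repository size, attempt count, and output length in the example are hypothetical.

```python
# V4-Flash list prices quoted in the article (yuan per million tokens).
INPUT_CACHE_MISS_YUAN_PER_M = 1.0
OUTPUT_YUAN_PER_M = 2.0

# ASSUMPTION: ~4 characters per token. Real tokenizers vary widely,
# especially on source code and Chinese text.
CHARS_PER_TOKEN = 4.0


def estimate_tokens(num_chars: int) -> int:
    """Rough token estimate from a character count."""
    return int(num_chars / CHARS_PER_TOKEN)


def trial_and_error_cost_yuan(repo_chars: int, attempts: int,
                              output_tokens_per_attempt: int) -> float:
    """Worst-case cost when every attempt re-reads the whole repository.

    Every input token is billed at the cache-miss rate (no caching assumed).
    """
    input_tokens = estimate_tokens(repo_chars) * attempts
    output_tokens = output_tokens_per_attempt * attempts
    return (input_tokens * INPUT_CACHE_MISS_YUAN_PER_M
            + output_tokens * OUTPUT_YUAN_PER_M) / 1_000_000


if __name__ == "__main__":
    # Hypothetical repo: 2 million characters of source (~500K tokens),
    # five attempts, 20K output tokens per attempt.
    cost = trial_and_error_cost_yuan(2_000_000, attempts=5, output_tokens_per_attempt=20_000)
    print(f"{cost:.2f} yuan")
```

Because cost grows linearly with the number of attempts, a low per-token price is what turns "try five times and keep the best result" from a luxury into a default strategy.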

Moreover, V4-Flash's starting price of 0.2 yuan per million input tokens pushes AI inference close to a new, utility-like phase. As the marginal cost of intelligence approaches zero, the entire application layer (customer service, e-commerce, education, healthcare, legal) will be redefined at a pace that is hard to imagine.
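
The gap between the 1-yuan cache-miss price and the 0.2-yuan starting price matters most for Agents, which tend to resend the same context repeatedly. The sketch below computes a blended input price for a given cache-hit ratio. It rests on an ASSUMPTION the article does not state outright: that the 0.2-yuan starting price is the cache-hit input rate. The function name is hypothetical.

```python
# Article's figure: 1 yuan per million input tokens on a cache miss.
CACHE_MISS_YUAN_PER_M = 1.0
# ASSUMPTION: the 0.2-yuan "starting price" is the cache-hit input rate.
CACHE_HIT_YUAN_PER_M = 0.2


def blended_input_price(hit_ratio: float) -> float:
    """Effective yuan per million input tokens for a given cache-hit ratio."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * CACHE_HIT_YUAN_PER_M + (1.0 - hit_ratio) * CACHE_MISS_YUAN_PER_M


if __name__ == "__main__":
    for ratio in (0.0, 0.5, 0.9):
        print(f"hit ratio {ratio:.0%}: {blended_input_price(ratio):.2f} yuan per million input tokens")
```

If caching works this way, an Agent that reuses most of its context across turns would pay close to the starting price rather than the cache-miss price.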

Another easily overlooked signal: before V4's release, DeepSeek sought external financing for the first time, reportedly at a target valuation above $20 billion. In my view, this looks like an organization signaling that it is ready for large-scale deployment now that its core infrastructure is built.

03

Paradigm Shift

Placing V4's industrial impact in a broader context reveals a deeper transformation taking shape: China's AI is shifting from 'catching up on model capabilities' to 'ecosystem circulation,' with models, chips, and applications forming a positive feedback loop.

V4 is the first to prove that domestic chips can support trillion-parameter models, directly driving growth at companies such as Cambricon, Hygon, and Moore Threads. With market validation, these chip companies gain the confidence to invest in next-generation products.

Next-gen chips, being more powerful and cost-effective, will further reduce model inference costs, fostering more developers and application scenarios. Expanded application scenarios generate more data and feedback, further enhancing model capabilities. China's 'model-chip-cloud' closed loop is moving from 'logically viable' to 'factually established.'

At the chip-ecosystem level, NVIDIA and CUDA remain the best, or only, option for competing globally in pre-training. But V4, the first trillion-scale model to run inference independently of NVIDIA's CUDA, signals a turning point: China's AI is evolving from isolated breakthroughs to system-wide generational advancement. The new narrative should be that China's AI is building a complete technical loop from chips to models to applications, independent of NVIDIA's ecosystem.

Once this loop runs smoothly, its significance far exceeds any single model's capability breakthrough.

DeepSeek V4 is becoming infrastructure, and its open-source ecosystem, cost-based pricing strategy, and chip adaptation path are set to rapidly reshape China's entire AI application ecosystem.

04

Cambrian

Let's zoom out further.

For years, the AI industry has been answering one question: What is the upper limit of model capabilities? From GPT-4 to GPT-5.4, from Claude to Gemini, everyone has sprinted along the single path of 'larger parameters, higher intelligence.' But this framework has a blind spot: Once model intelligence reaches a certain level, what determines the industrial landscape is no longer 'whose model is smarter' but 'whose model can scale wider and penetrate deeper.'

V4's emergence is shifting AI industry competition from 'capability races' to 'ecosystem races.'

This is not the victory of a single model but the triumph of open-source ecosystems over closed-source barriers, of cost restructuring over computing-power thresholds, of domestic tech stacks over technological monopolies, and of developers and users over the pricing power of a few giants.

Because DeepSeek V4 is open-sourced under the MIT license, any developer worldwide can deploy it locally, use it commercially, and build derivative work on it freely. This level of openness is accelerating the erosion of closed-source giants' moats.

05

Conclusion

'Not seduced by praise, not intimidated by criticism.'

In an industry filled with noise and gamesmanship, one ability is scarce: When everyone doubts, staying silent and continuing to code; when everyone predicts decline, opening the terminal and training the next version. V4 arrived fifteen months late.

But these fifteen months were not wasted. They were spent migrating from CUDA to CANN, expanding context from 128K to 1M while driving costs down, and steadily approaching the global first tier in Agent capabilities.

These silent engineering feats won't appear in any sensational headlines. But they are becoming the bedrock of AI applications for the next decade.

You will find that the richest soil for China's AI applications is now ready. A surge of intelligent species is accumulating energy beneath the surface.