Ali’s HappyHorse Makes Its Debut, Overseas Users Advocate for Open Source

04/29 2026

The much-anticipated HappyHorse has finally made its grand entrance.

On April 27th, Alibaba officially began a gradual (gray-scale) rollout of HappyHorse 1.0. During this initial phase, creators can register and use the tool on the official HappyHorse website and on Alibaba Cloud’s BaiLian platform, while regular users can access it through the latest version of the QianWen App or the official creative platform.

When HappyHorse first emerged, it appeared on leaderboards anonymously and quickly drew widespread acclaim within the industry. As it rapidly climbed various rankings, like a wild horse breaking free of its reins, one couldn’t help but wonder: which team was behind it?

Initially, few suspected Alibaba, since the company had never released a highly acclaimed video generation model. Moreover, Alibaba lacks the built-in advantages that content platforms such as Douyin and Kuaishou enjoy.

Eventually, the newly established ATH Business Group stepped forward to claim the model as its own, a moment of triumph.

The timing of HappyHorse’s arrival couldn’t have been more opportune. Just as Lin Junyang’s departure raised concerns about talent drain and R&D prospects, Alibaba swiftly countered with a top-tier model, seemingly silencing the critics.

Of course, these two events were not directly linked. Model development typically requires several months—it cannot be initiated in March and completed by April.

After HappyHorse’s release, people dug into the background of its leader, Zhang Di. They found that he began his career at Alibaba as an “Alibaba Star” hire and later became the technical lead for Kling at Kuaishou. He returned to Alibaba only last year to head Taotian’s Future Life Lab.

Meanwhile, Zhou Chang, previously in charge of QianWen, was recruited by ByteDance and led the team that created Seedance 2.0. Even Kai Kun, now working on Kling, was once an Alibaba Star.

Seen this way, these moves are part of the same pattern. Talent comes and goes, yet the turnover has not significantly hindered Alibaba’s ability, as an organization, to keep pace with technological change.

The open-source versus closed-source debate sparked by Lin Junyang’s departure, however, now shows signs of a shift.

Wu Yongming responded that Alibaba would stick with its open-source model strategy, an answer that seemed to sidestep the core issue.

Alibaba will not go fully closed-source. Instead, it will limit open-source releases to smaller models and reserve the larger versions for sale through its own MaaS offerings.

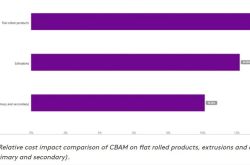

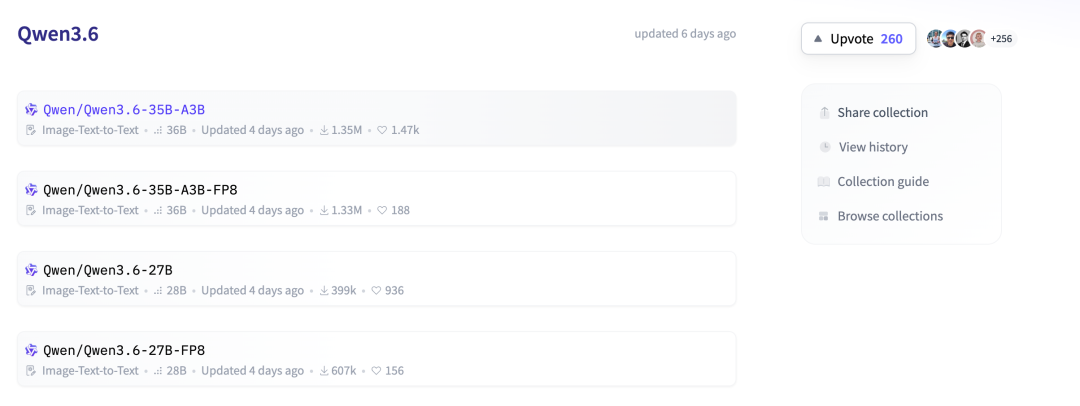

In the latest Qwen 3.6 series, the open-sourced models have 35B or 27B parameters, while Qwen 3.6-plus is available exclusively through Alibaba’s platform. The official release page states, “We will open-source smaller model versions to reaffirm our commitment to technological inclusivity and community-driven innovation.”

Lin Junyang’s departure was not the catalyst for these adjustments but rather reduced internal resistance to their implementation.

Earlier this year, he said at a forum that he had wanted to open-source the large Qwen 3-Max model but had been unable to.

Video generation models went through a similar process. Alibaba’s Tongyi Wanxiang open-sourced its models up through wan2.2. Earlier this year, however, it kept wan2.6 closed-source, which sparked even more controversy.

Video models such as Sora and Veo differ significantly from text models in terms of usability. Large language models can now generate highly coherent articles or novels. Video models, by contrast, often fail to produce a satisfactory 10-second clip even after numerous attempts.

Consequently, the open-source community plays a more vital role in video model development.

This is evident in the wan ecosystem.

The early wan2.1 models, both text-to-video (T2V) and image-to-video (I2V), were relatively rudimentary, even though they outperformed other open-source models. The community contributed substantially to improving the user experience.

Projects like lightx2v reworked the inference pipeline, from sampling strategies to memory optimization. This reduced latency and cost while improving stability.

Numerous LoRAs and lightweight variants added a wide range of features on top of the base model.

Even Meituan worked with academic partners to build InfiniteTalk on top of the wan2.1 base model. The result significantly improved audio-driven animation, lip-sync, and long-video consistency.

Fortunately, the food-delivery war between Meituan and Alibaba had not yet begun, so this was not seen as consorting with the enemy.

These community contributions were open-source and easily replicable, leading everyone to assume Alibaba would incorporate them into future releases.

Had Alibaba remained fully open-source, this would have been a mutually beneficial scenario driving technological progress.

However, when Alibaba shifted from open-source to closed-source, complaints arose that it had exploited the open-source community’s engineering practices and ideas before abandoning them.

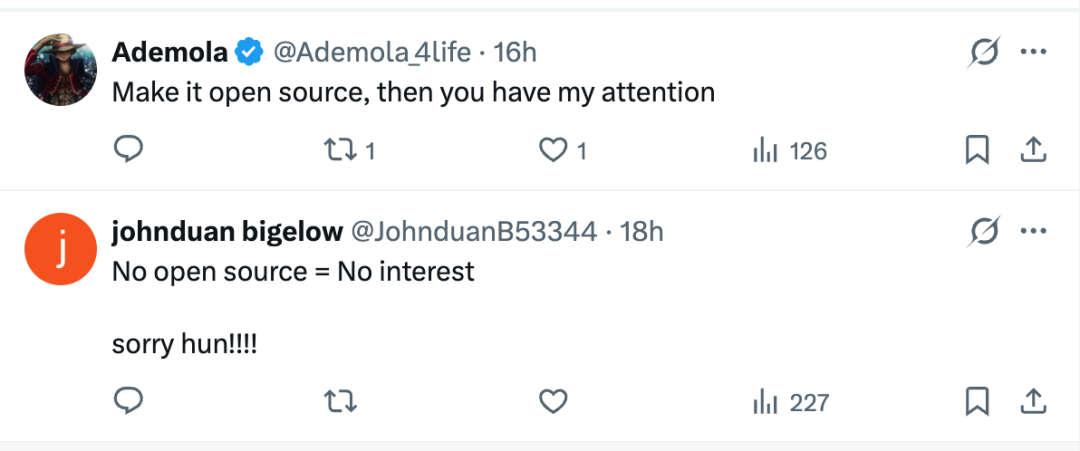

Even now, in the replies under HappyHorse’s latest post, overseas users are still calling for it to be open-sourced. Frankly, that expectation seems somewhat out of touch with reality.

Now for my hands-on comparison of HappyHorse, Seedance 2.0, Kling 3.0, and Veo 3.1.

Prompt:

First-person perspective (owner’s viewpoint), with no part of the owner’s body visible in the frame. Two golden retrievers sit on the ground in front of the camera, intently watching a sesame seed cake that is about to be thrown. The owner tosses the cake forward from just in front of the camera, and it traces a clear parabolic arc through the air.

The left golden retriever leaps the instant the cake is thrown and opens its mouth to catch it, but misjudges in mid-air and misses. The cake brushes past its mouth and keeps falling. The right golden retriever stays calm, waiting on the ground instead of jumping. After the cake lands, the right retriever quickly grabs it. Both dogs move naturally and react authentically, without exaggeration or stuttering.

I adapted this prompt from the videos of a short-video blogger I watch often. He has two golden retrievers, and one is noticeably smarter. It always waits for the other to jump for the cake, then picks it up from the ground after the other misses.

Top-left: HappyHorse; Top-right: Seedance 2.0; Bottom-left: Kling 3.0; Bottom-right: Veo 3.1.

Ranking the generation quality: Seedance 2.0 ≈ Kling 3.0 > HappyHorse > Veo 3.1.

Veo 3.1 was laughably bad: the cake simply materialized in the dog’s mouth. What a surprise, truly amazing.

Seedance 2.0 and Kling 3.0 were similarly realistic but had minor flaws.

Seedance 2.0 had the cake bounce too high when it landed. Kling 3.0 showed the waiting dog hesitating for several seconds before grabbing the cake, contrary to the “quickly grabs it” in my prompt.

HappyHorse had more issues. The footage looked fake, and the dogs resembled CG. There were continuity errors: the cake first landed on a dog’s nose, then jumped into its mouth a second later. The cake landing on the ground was also never shown, which left the scene incomplete.

This ranking reflects my own judgment, but I believe it is reasonably objective.

Prompt:

First-person perspective, with no owner visible. Realistic environment with natural lighting. A cat stands on a table beside a glass of water. The cat appears guilty, gently pushing the glass toward the table’s edge with its front paw while slowly retreating, frequently looking up at the camera with hesitant, probing movements. The glass moves slowly across the table at first.

The pushing involves brief pauses and repeated actions. After the glass crosses the edge, it begins falling, accelerating due to gravity. Upon landing, the glass tips over or shatters, spilling water naturally in all directions without abnormal adhesion or deformation. The cat quickly looks down after the glass falls, remaining vigilant. All movements are continuous and natural, without teleportation, object disappearance, or repetition, adhering to basic physics.

All models performed equally poorly here, shattering my faith in reality.

Seriously, who keeps saying reality no longer exists? Every new model release brings claims that “reality is broken,” yet here we all still are. Parallel-universe theory confirmed.

None of the four models accurately depicted the cat pushing the glass.

In HappyHorse’s video, the cat’s paw seemed glued to the glass and pulled it outward on contact. As the cat pulled, the glass turned upside down, but the water defied gravity and stayed inside. Physics, it seems, ceased to exist. The glass also shattered on landing, yet reappeared intact moments later.

In Seedance 2.0’s video, the cat never touched the glass. The glass slid off the level table on its own, as if moved by telekinesis. After landing, it stood upright like a 60-year-old man at a community gate.

Kling 3.0 had the same problem. The glass seemed to come alive and staged a fake collision with my cat bro, toppling without any contact. The landing was not rendered either.

Veo 3.1 fared slightly better: the cat’s paw brushed the glass rather than pushing it. The glass tipped over without spilling any water, again defying gravity. The shattering effect did appear, but there were far too many shards for a single glass.

These videos may not accurately reflect the models’ capabilities. My sample was small, and AI video generation usually takes many attempts to get a good result.

Still, based on these preliminary tests, HappyHorse does not appear to lag significantly behind the other models.

Alibaba is currently offering steep discounts. With a monthly Professional membership, generation costs as little as 0.44 yuan per second, which makes it very cost-effective.

Of course, all of these evaluations assume HappyHorse stays closed-source. If it is ever open-sourced, I’ll immediately hail it as the true god.