Beijing Auto Show Highlights: Physics-Based AI Sparks New Era of Computing Power Revolution, Automotive Industry Enters 'Superbrain' Competition

04/29 2026

Source: Zhiche Technology

At the 2026 Beijing Auto Show, the most eye-catching keywords on display boards were 'AI,' 'integrated cockpit-driving systems,' 'large models,' and 'L3,' replacing the earlier focus on driving range and LiDAR counts. Volkswagen unveiled its 'Omnidirectional Intelligent Agent AI' roadmap, XPENG described itself as a 'physics-based AI technology company,' and NIO incorporated 'embodied intelligence' into its vehicle positioning. Automakers seemed to have collectively 'transformed' into AI companies overnight.

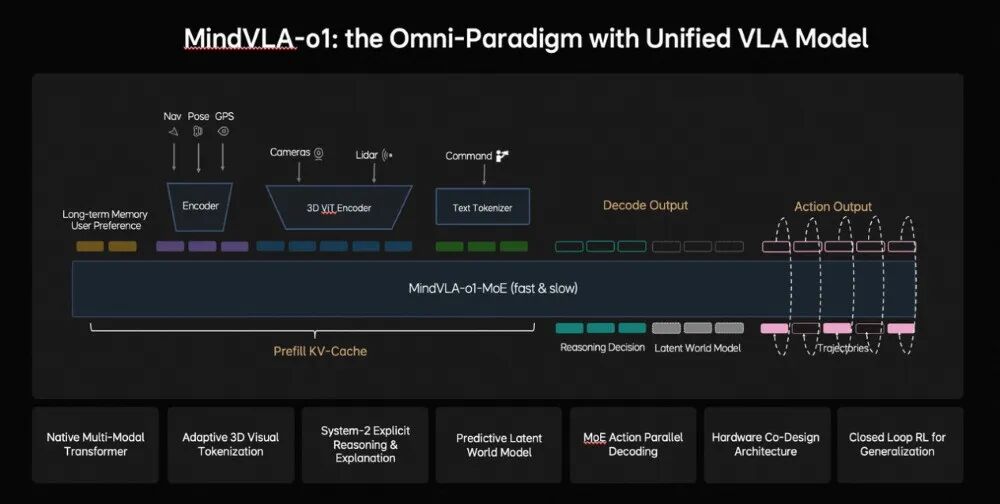

In March 2026, at NVIDIA's GTC conference, NIO released the MindVLAo1 model while Geely introduced WAM (World Behavior Model). The contest between two technical routes, VLA (Vision-Language-Action) models and world models, moved from behind the scenes to center stage, and the industry debate shifted from 'whether to pursue AI' to 'what kind of AI to develop.'

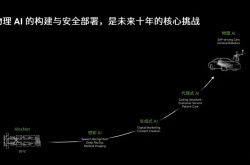

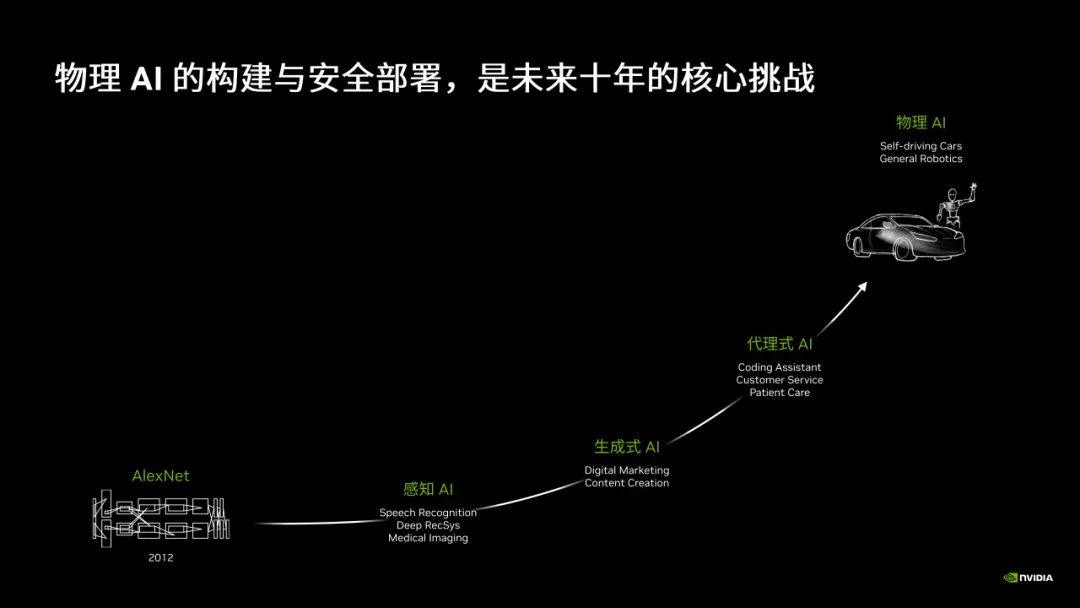

Why has physics-based AI suddenly taken center stage? Traditional AI excels at information-world tasks such as image recognition, text understanding, and video recommendation, where inputs and outputs stay in the informational realm and never act directly on the physical world. Physics-based AI is fundamentally different: it must understand physical laws, object properties, and causal relationships. Take autonomous driving as an example. The system must not only recognize a ball rolling into the road but also predict that a child chasing it is likely to follow; it must not only detect that the vehicle ahead is braking hard but also understand whether the cause is an accident or a distracted driver.

Physics-based AI has two core dimensions: first, enabling AI to grasp basic physical-world common sense such as gravity, friction, and causality; second, giving AI the ability to act on that common sense and interact with the real world safely and efficiently. This marks AI's shift from virtual intelligence toward actionable intelligence.
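To make the rolling-ball example concrete, here is a hand-written toy of the kind of physical reasoning described above. It assumes a ball decelerating under constant rolling resistance on flat ground, so its stopping distance is v²/(2·μ·g). The function names, the rolling coefficient, and the "expect a pedestrian" rule are illustrative assumptions; a physics-based AI system is expected to learn regularities like these from data rather than have them hard-coded.

```python
G = 9.81  # gravitational acceleration, m/s^2


def stopping_distance_m(speed_mps: float, rolling_coeff: float) -> float:
    """Distance a ball rolls before stopping under constant rolling resistance.

    Assumes flat ground and deceleration a = rolling_coeff * G, so d = v^2 / (2a).
    """
    decel = rolling_coeff * G
    return speed_mps ** 2 / (2 * decel)


def assess_ball(speed_mps: float, rolling_coeff: float, distance_to_lane_m: float) -> dict:
    """Hypothetical rule: will the ball reach our lane, and should we expect a pedestrian?"""
    reach = stopping_distance_m(speed_mps, rolling_coeff)
    enters_lane = reach >= distance_to_lane_m
    return {
        "stopping_distance_m": round(reach, 2),
        "enters_lane": enters_lane,
        # Causal prior, not physics: a ball in the road often means a child is chasing it.
        "expect_pedestrian": enters_lane,
    }


print(assess_ball(speed_mps=3.0, rolling_coeff=0.05, distance_to_lane_m=4.0))
# {'stopping_distance_m': 9.17, 'enters_lane': True, 'expect_pedestrian': True}
```

The first half is pure physics (gravity and friction); the last field is the causal common sense the paragraph describes, which is the harder part to learn.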

The automobile stands as a pivotal carrier for this transformation. As the most complex mobile terminal, it also represents the largest-scale, most hardware-demanding industrial scenario for physics-based AI implementation. Whoever first creates AI that truly understands the physical world and achieves 'intelligent monetization' in vehicles will likely dominate industry competition in the next decade.

Image Source: Autonomous Driving Heart

VLA vs. World Models: Which Comes Closer to Reality?

In 2026, intelligent driving technology split into two distinct technical paths. The VLA camp, represented by NIO and XPENG, advocates endowing systems with reasoning capabilities through large language models; the world model camp, led by Huawei and Momenta, argues that predicting physical-world states constitutes the key to intelligent driving.

This divergence is no academic exercise: it directly determines where hundreds of billions in industry R&D funding flow and shapes the driving experience consumers ultimately get. Importantly, the divide is not absolute. NIO's MindVLAo1 already incorporates implicit world modeling capabilities.

The VLA architecture unifies vision, language, and action. Its core idea is for AI to understand the semantics of a driving scene the way a human does and then make decisions accordingly.

On the eve of the 2026 Beijing Auto Show, XPENG unveiled its second-generation VLA Ultra intelligent driving system, emphasizing all-scenario driving that does not depend on high-definition maps. Equipped with four Turing chips delivering 3,000 TOPS of total computing power, the system integrates perception, decision-making, and planning and control end to end. More critically, XPENG has put dual Turing AI chips and its second-generation VLA large model into the MONA model, priced at around 120,000 yuan, aiming to grow its data scale by reaching a lower price segment.

NIO debuted the MindVLAo1 model at GTC 2026, employing technologies such as MoE Transformer, 3D spatial understanding, and closed-loop reinforcement learning. This model demonstrates three-dimensional spatial perception and predictive implicit world modeling capabilities. NIO emphasizes that 'autonomous driving merely represents the starting point for physics-based AI,' with future foundational models driving new paradigms in embodied intelligence beyond driving applications.

The core tenet of the world-model approach is that autonomous driving should not merely process current visual input but simulate the world's future states seconds or even minutes ahead in the cloud, so that the car drives with 'foreknowledge.'

Huawei's ADS 5.0 epitomizes this approach. Its WEWA 2.0 architecture introduces a 'multi-agent game mechanism' for the first time, building a highly realistic digital twin of the real world in the cloud. Through multi-agent interactions, the AI trains continuously across countless extreme scenarios, boosting efficiency tenfold. More groundbreaking still, the architecture adopts an 'online reinforcement learning' mode of 'simultaneous generation, learning, and validation,' multiplying training efficiency by a further factor of ten. On the vehicle side, the system pioneers a 'safety risk field theory' that reduces collision risk by 50%.

Momenta is the first company to bring the combination of world models and reinforcement learning into mass production. At the 2026 Beijing Auto Show, Momenta officially launched the R7 reinforcement learning world model. CEO Cao Xudong broke the technical path into three layers: first, pre-training on massive amounts of real driving data to compress physical laws, common sense, and causal relationships into the model; second, using the world model for closed-loop autonomous driving simulation, so the system can predict how the world evolves in response to its actions; third, running reinforcement learning inside the world model, letting the system learn by repeated trial and error in hyper-realistic virtual environments and autonomously acquire optimal decision-making for extreme scenarios.
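The three layers Cao describes (learn dynamics from real data, query the model in closed loop, then run reinforcement learning inside it) can be sketched as a toy Dyna-style loop. Everything here is an illustrative assumption, not Momenta's R7: the "road" is a single number (the gap to a stopped obstacle), the world model is a lookup table fitted to logged transitions, and tabular Q-learning updates are computed only from that model. All function names are hypothetical.

```python
from collections import defaultdict

ACTIONS = ("cruise", "stop")


def real_step(gap, action):
    """Hypothetical 'real road': gap = cells to a stopped obstacle ahead."""
    if action == "stop":
        return gap, 0.0, True           # stop safely; episode ends
    new_gap = gap - 1
    if new_gap == 0:
        return 0, -10.0, True           # collision: large penalty
    return new_gap, 1.0, False          # progress reward


def collect_logs(max_gap=4):
    """Layer 1 stand-in: logged real transitions (state, action, next, reward, done)."""
    return [(g, a, *real_step(g, a)) for g in range(1, max_gap + 1) for a in ACTIONS]


def fit_world_model(logs):
    """Tabular world model: (state, action) -> (next_state, reward, done)."""
    return {(s, a): (s2, r, done) for s, a, s2, r, done in logs}


def learn_in_model(model, gamma=0.9, alpha=0.5, sweeps=200):
    """Layers 2-3: Q-learning on transitions queried from the model, never the real road."""
    q = defaultdict(float)
    for _ in range(sweeps):
        for (s, a), (s2, r, done) in model.items():
            target = r if done else r + gamma * max(q[(s2, b)] for b in ACTIONS)
            q[(s, a)] += alpha * (target - q[(s, a)])
    return q


def greedy_policy(q, max_gap=4):
    return {g: max(ACTIONS, key=lambda a: q[(g, a)]) for g in range(1, max_gap + 1)}


model = fit_world_model(collect_logs())
policy = greedy_policy(learn_in_model(model))
print(policy)  # {1: 'stop', 2: 'cruise', 3: 'cruise', 4: 'cruise'}
```

All value updates read from the learned model rather than calling `real_step`, which is the essence of learning inside a world model; a production system would replace the lookup table with a learned neural simulator and the tabular updates with large-scale reinforcement learning.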

The goal of this mechanism is for AI to outperform average human drivers in long-tail extreme scenarios. According to Momenta, in real-world testing, vehicles equipped with R7 autonomously predicted the rolling trajectories and scatter range of objects that unexpectedly fell from vehicles ahead, then proactively planned evasive routes, 'handling sudden road conditions with greater composure and in line with human driving logic.'

Qcraft completed a $100 million Series D funding round in March 2026, explicitly earmarking the funds for general physics-based AI R&D combining 'world models + reinforcement learning.' Its 'Chengfeng MAX' solution uses a unified VLA + world model + reinforcement learning architecture, trying to combine the strengths of multiple technical routes.

The contest between the two camps has intensified. Li Chuanhai, CTO of Geely Automobile Group, publicly questioned VLA at the WAM world behavior model launch, arguing that VLA merely matches standard answers, lacks genuine understanding of physical laws, relies on limited driving data rather than massive video datasets, and struggles to model the physical world.

Momenta's Cao Xudong was more direct: "VLA offers limited improvements for intelligent driving. Only 'world models + reinforcement learning' can deliver tenfold or even hundredfold enhancements."

However, industry consensus is evolving toward integration. Black Sesame Technologies CEO Shan Jizhang asserted: 'Combining VLA with world models represents the most probable technical route for advanced intelligent driving, with potential to surpass human driving capabilities.'

Another emerging path comes from Zhuoyu Technology's 'native multimodal foundational model.' Rather than following the traditional approach of distilling large cloud models into small vehicle-side models, Zhuoyu's foundational model is pre-trained bottom-up on general physical-world patterns and accepts unified multimodal inputs, including video, text, voice, and maps. This enables zero-shot knowledge transfer across domains and regions. Zhuoyu CEO Shen Shaojie stated plainly: 'Future surviving intelligent driving companies will all transform into mobile physics-based AI companies.' The model will debut this year in passenger vehicles and commercial heavy trucks and is intended to serve as the technological foundation for overseas expansion.

Physics-Based AI Gives Birth to Automotive 'Superbrains'

From VLA to world models, the computing demands of physics-based AI have triggered upgrades across the entire industrial chain. Baidu Vice President Shi Qinghua stated bluntly at the 2026 Smart Electric Vehicle Development Summit: 'The current competition in autonomous driving, particularly in model training and inference, has reached a stage of computing power shortage.'

He cited several notable industry-wide figures: by 2028, inference is projected to account for 73% of total computing power demand, and between 2022 and 2024, inference costs for equivalent performance dropped more than 200-fold. Meanwhile, weekly invocations of AI large models worldwide reached 27 trillion tokens, with growth in the automotive sector even more explosive: 'smart cockpits are comprehensively integrating large models for generative HMI and multimodal reasoning.'
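As a quick worked example of what the cost figure implies, assume the 2022-2024 window spans two years and the decline compounded at a constant yearly rate (both are assumptions; the quoted figure gives only the total). A 200-fold drop then corresponds to roughly a 14-fold reduction per year. The helper name is hypothetical.

```python
def annualized_factor(total_factor: float, years: float) -> float:
    """Constant per-year factor that compounds to total_factor over `years` years."""
    return total_factor ** (1 / years)


per_year = annualized_factor(200, 2)
print(f"{per_year:.1f}x cheaper per year")  # 14.1x cheaper per year
```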

How much computing power does physics-based AI require? NVIDIA's Thor chip gives a sense of the scale. A single Thor chip delivers over 1,000 TOPS, while a dual-chip configuration linked via NVLink provides up to 4,000 TFLOPS (FP4 precision) with aggregate bidirectional bandwidth of 180GB/s, tens to hundreds of times higher than traditional PCIe or Ethernet solutions.

During the Beijing Auto Show, Desay SV and Pony.ai unveiled intelligent driving domain controller solutions based on dual-Thor platforms that are already ready for L3/L4 mass production. Pony.ai's Robotaxi domain controller uses NVLink as a high-speed 'highway' between the two Thor chips so that they operate collaboratively, achieving full functional coverage for the edge computing domain.

More significant for the industry than simply stacking up computing power is the 'integrated cockpit-driving system,' which consolidates intelligent driving and smart cockpit hardware onto a single chip, breaking the longstanding hardware separation between the cockpit and driving systems.

Horizon Robotics unveiled China's first cockpit-driving fusion vehicle intelligence chip, Xingkong 6P, on the eve of the auto show. A single chip replaces the traditionally separate intelligent driving and cockpit systems, running the cockpit's AI and the large models for high-level intelligent driving on the same silicon.

The significance of this solution goes far beyond physical consolidation. Space occupancy shrinks by 50% and per-vehicle costs drop by 1,500-4,000 yuan, while the more fundamental changes are architectural: with a unified architecture, data no longer has to travel long distances between chips, enabling millisecond-level, low-latency interaction. R&D delivery cycles fall from 18 months to 8 months, a 56% reduction. For safety, Horizon's pioneering 'castle' physical isolation architecture creates partitions within the chip, preventing failures in the cockpit entertainment system from affecting the intelligent driving domain.

Volkswagen, Chery, BYD, and other automakers, along with Tier 1 suppliers such as Bosch and Denso, have already signed on as intended partners for Xingkong 6P. Horizon founder and CEO Yu Kai stated bluntly that, with breakthroughs such as 'cockpit-driving fusion,' the user inflection point for high-end intelligent driving has arrived, and that intelligent driving features will become mainstream standard equipment within three years.
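The 56% figure follows directly from the two quoted cycle lengths, since (18 − 8) / 18 ≈ 0.556. A one-line check, with a hypothetical helper name:

```python
def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


print(f"{percent_reduction(18, 8):.0f}%")  # 56%
```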

Other chip players are also embracing this trend. Qualcomm's 8775 chip offers 50-72 TOPS of cockpit-driving fusion computing power, while its flagship 8797 chip reaches 320-640 TOPS. A dual-chip configuration has already been deployed in the Leapmotor D19. Black Sesame Technologies' Huashan A2000 family covers 200-1,000 TOPS across tiers, with its A2000X providing 1,000 TOPS equivalent computing power for L3 autonomous driving and Robotaxi applications.

While the industry fixates on chip computing power, memory shortfalls are quietly emerging. At the Beijing Auto Show, in-vehicle large models became standard. Changan, Dongfeng, BAIC, BYD, Geely, Great Wall, and other automakers have all integrated Qianwen large models, and over 7 million intelligent vehicles run Doubao large models. NIO's world model NWM, Zeekr's 'Super Eva,' XPENG's second-generation VLA, and Li Auto's Mind GPT also made their in-vehicle debuts.

However, putting large models into vehicles creates immense memory pressure. Micron Technology's CEO offered a vivid comparison: today's mainstream vehicles need around 16GB of memory, while L4 autonomous driving models need more than 300GB. Supply pressure on memory chips for the automotive industry is intensifying; Meng Qingpeng, Li Auto's vice president of supply chain, warned that in 2026 the industry may face a crisis in which less than 50% of memory chip demand can be fulfilled.
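One rough way to see why memory becomes the bottleneck: a model's weights alone occupy about (parameter count) × (bits per parameter) / 8 bytes, before activations, caches, or sensor buffers are counted. The parameter counts below are hypothetical examples chosen for illustration, not figures for any model named in this article.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for model weights only (decimal GB); ignores activations and caches."""
    return num_params * bits_per_param / 8 / 1e9


# Hypothetical model sizes and precisions, for illustration only.
for params, bits in [(7e9, 16), (7e9, 4), (150e9, 16)]:
    print(f"{params / 1e9:.0f}B params @ {bits}-bit: {weight_memory_gb(params, bits):.1f} GB")
# 7B params @ 16-bit: 14.0 GB
# 7B params @ 4-bit: 3.5 GB
# 150B params @ 16-bit: 300.0 GB
```

Under these assumptions, a mid-sized model at 16-bit precision already nearly fills a 16GB budget, and reaching the 300GB range implies models with well over a hundred billion parameters; this is also why low-precision formats such as FP4 matter for in-vehicle deployment.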

Physical AI Drives Monetization of Core Automotive Business

The real barrier to physical AI lies not in computing power or the chips themselves but in the efficiency of the data feedback loop. This is precisely where Chinese automakers hold their greatest advantage in bringing large AI models to the mass market.

On the Q4 2025 earnings call, He Xiaopeng announced that R&D investment in physical AI would rise to RMB 7 billion in 2026, covering the VLA large model, the humanoid robot IRON, flying cars, and mass production of L4-grade Robotaxis with factory-installed systems. An even more significant milestone is the official renaming of "XPENG Motors" to "XPENG Group." He Xiaopeng put it bluntly: "In the last decade, XPENG was about smart electric vehicles; in this decade, it's about global physical AI." Its 800,000 mass-produced vehicles equipped with intelligent driving systems form the data flywheel for its physical AI.

As of April 2026, Huawei's ADS cumulative assisted driving mileage surpassed 10 billion kilometers, with R&D investment in intelligent driving exceeding RMB 18 billion in 2026. Massive investments and vast data volumes drive Huawei's continuous iteration of its cloud-based WEWA 2.0 architecture.

Cost reduction has become an unavoidable core demand in the automotive industry. Horizon Robotics' Xingkong 6P solution reduces per-vehicle costs by RMB 1,500-4,000 and shortens delivery time for integrated cockpit-driving hardware and software by 56%, opening a cost breakthrough for high-level intelligent driving in the mainstream RMB 100,000-200,000 market.

XPENG puts dual Turing AI chips and its second-generation VLA large model directly into the MONA model, priced at RMB 120,000, essentially betting on the long-term payoff of scalable training costs and reusable hardware and software capabilities. Once the virtuous cycle of "more data, faster iteration, better experience, more users" gets going, the cost curve will begin to trend downward.

The industry consensus is clear: competition in intelligent driving has entered a comprehensive stage centered on data feedback loops, large model training efficiency, and engineering execution. High-level intelligent driving will accelerate its penetration into mainstream RMB 100,000-200,000 models. This "standardization battle" is turning physical AI from a high-end differentiator into a core, baseline capability.

Physical AI extends naturally across platforms. Horizon Robotics' team has already extended its core computing platform, BPU, to embodied applications such as robotic vacuum cleaners and quadruped robots.

XPENG is already building experimental scenarios on more physical AI platforms: the humanoid robot IRON is scheduled to enter mass production by the end of 2026, with a target monthly capacity of over 1,000 units, and the core power system for its flying car, the "Land Aircraft Carrier," has also entered mass production.

Zhuoyu Technology's cross-category foundational model brings the intelligent driving needs of passenger cars, heavy trucks, logistics vehicles, and other mobile platforms under a single model framework. Having different mobile platforms operate in the world with the same framework for understanding physics is the most fundamental expansion of what physical AI means. The slogan "one model driving intelligent mobility for all" is moving from vision to technical reality.

The "Last 500 Meters" of Physical AI

No matter how grand the conceptual narrative of physical AI becomes, it must ultimately withstand scrutiny in the real physical world. The SenseTime Jueying team summarizes this as the "last 500 meters" dilemma—"the gap from algorithmic feasibility to onboard trustworthiness seems small but represents the hardest barrier to overcome."

This dilemma comprises at least three dimensions.

First, the explainability of large models. Traditional end-to-end solutions break through the scalability limits of rule-based systems, but AI decision-making becomes a black box: passengers do not know why the system brakes or swerves. The SenseTime Jueying team once put it bluntly: "End-to-end represents the ChatGPT moment for intelligent driving, but why don't users trust it yet? Because end-to-end solves capability but not explainability." In mobility, where safety matters more than functionality, this question remains unanswered.

Second, the tension between computing investment and commercial returns. Each additional layer of intelligence sharply increases onboard computing demand. Baidu's Shi Qinghua succinctly captured the automotive industry's dilemma: "Selling a car has a certain cost, but once the vehicle truly becomes intelligent, each added capability in the intelligent driving system escalates computing consumption." Yet automakers struggle to turn these improvements into consumer willingness to pay.

Third, compliance and data security. The large-scale data flows, localized computing, and cloud-training coordination involved in deploying large models on vehicles create entirely new pressures on data privacy and regulatory compliance. The industry is exploring explainable AI and controllable large model solutions but has yet to reach consensus standards.

The core challenge in the transition to physical AI ultimately comes down to engineering capability. Traditionally, the automotive value chain centered on manufacturing and hardware; future competition will revolve around physical AI models, data, and algorithms. As industry experts note, the focus is shifting from competing on specifications to competing on foundational platforms, and from building functions to creating intelligence. The second half of the automotive competition has just begun.

- End -

Disclaimer:

Works marked "Source: XXX (non-Smart Vehicle Technology)" in this official account are reprinted from other media, intended to disseminate and share more information. This platform does not necessarily endorse their viewpoints or verify their authenticity. Copyright belongs to the original authors. Please contact us for removal if infringement occurs.