2.7% Performance Gap: Global AI Race Enters Era of Asymmetric Competition

04/29 2026

Author|Chuan Chuan

Editor|Da Feng

In April 2026, Stanford University's Institute for Human-Centered Artificial Intelligence (Stanford HAI) released the ninth edition of its Artificial Intelligence Index Report. This 423-page annual report systematically tracks global AI technological development, research output, industrial investment, and social impact. One data point rewrites conventional perceptions of the global AI competition landscape: as of March 2026, the performance gap between the U.S.'s top model Claude Opus 4.6 and China's Dola-Seed 2.0 stands at just 2.7%.

Three years earlier, in May 2023, OpenAI's GPT-4 led with over 1,300 Arena points, while Chinese models scored below 1,000. Now the chase is nearly over: a mere 39 Arena points separate the two, a margin that could be reversed in the next model release cycle. The report describes the gap as "nearly eliminated."
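As a rough consistency check on the two figures quoted here, a 39-point margin that equals 2.7% implies a leading score of roughly 1,444 Arena points. The sketch below assumes the percentage is measured relative to the leader's score, which the article does not state; the function name is illustrative only.

```python
def implied_leader_score(point_gap: float, relative_gap: float) -> float:
    """Leader's score implied by an absolute gap and a relative gap.

    Assumes relative_gap = point_gap / leader_score. This denominator is
    an assumption; the source does not say which score the 2.7% refers to.
    """
    if relative_gap <= 0:
        raise ValueError("relative_gap must be positive")
    return point_gap / relative_gap


leader = implied_leader_score(point_gap=39, relative_gap=0.027)
trailer = leader - 39

print(f"implied leader score:   {leader:.0f}")
print(f"implied trailing score: {trailer:.0f}")
```

If the 2.7% were instead measured against the trailing model's score, both implied scores would shift up by 39 points, so the check is only good to within a few tens of points.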

Yet the true significance lies not in the number itself but in the divergent paths behind it. In 2025, U.S. private AI investment reached $285.9 billion, 23 times China's total, and the U.S. hosted 5,427 data centers, over 10 times as many as any other nation. Despite chip embargoes and capital disparities, China has pioneered an asymmetric approach: substituting efficiency for raw computing power, real-world scenarios for data, and ecosystems for standards.

This 2.7% gap reflects not just model performance but a momentary equilibrium between two technological philosophies.

Efficiency Revolution: Breaching Computational Barriers

The U.S. AI industry is awash in unprecedented capital. Global corporate AI investment surged to $581.7 billion in 2025, with the U.S. accounting for $285.9 billion—more than doubling year-on-year. Six tech giants—Microsoft, Meta, Oracle, and others—pledged $660-700 billion in 2026 capital expenditures, with three-quarters earmarked for AI infrastructure.

However, massive investments haven't fully translated into technological moats. The Stanford report exposes a structural dilemma: recent U.S. AI spending focuses on data centers and energy infrastructure—the "most certain revenue-generating segments." Yet decades of underinvestment in power grids have created physical bottlenecks. Goldman Sachs warns this will hinder U.S. AI expansion.

China, under high-end chip export restrictions, redirected resources toward efficiency. In late 2024, DeepSeek-V3 was trained in 57 days on 2,048 NVIDIA H800 GPUs at a reported cost of $5.57 million, roughly one-tenth the cost of a comparable OpenAI model. Anthropic CEO Dario Amodei has said that training a GPT-4-level model costs around $100 million, with next-generation models potentially reaching $1 billion. DeepSeek-V3 showed that raw computation is not the sole variable.
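The training figures for DeepSeek-V3 can be cross-checked with simple arithmetic: 2,048 GPUs running for 57 days come to about 2.8 million GPU-hours, which at the quoted $5.57 million works out to roughly $2 per GPU-hour. The sketch below assumes every GPU ran 24 hours a day for the full period, which the article does not state, and the function names are illustrative.

```python
def gpu_hours(num_gpus: int, days: float, hours_per_day: float = 24.0) -> float:
    """Total GPU-hours, assuming all GPUs run for the whole period."""
    return num_gpus * days * hours_per_day


def implied_hourly_rate(total_cost_usd: float, total_gpu_hours: float) -> float:
    """Cost per GPU-hour implied by a total cost and total GPU-hours."""
    if total_gpu_hours <= 0:
        raise ValueError("total_gpu_hours must be positive")
    return total_cost_usd / total_gpu_hours


# Figures quoted in the article for DeepSeek-V3.
hours = gpu_hours(num_gpus=2048, days=57)
rate = implied_hourly_rate(total_cost_usd=5.57e6, total_gpu_hours=hours)

print(f"GPU-hours:           {hours:,.0f}")
print(f"implied $/GPU-hour:  {rate:.2f}")
```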

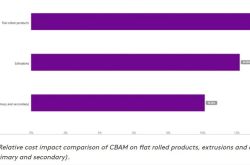

Energy costs provide another efficiency lever. China's "East Data, West Computing" initiative places data centers in western regions rich in green energy, achieving a world-leading PUE (Power Usage Effectiveness) below 1.1. In 2026, industrial electricity costs $0.048-0.061/kWh in China (as low as $0.013-0.03 in the west), versus $0.08-0.12 in the U.S. and $0.10-0.15 in Europe. Combined with ultra-high-voltage transmission, this cuts the power cost of AI inference, which accounts for 60-70% of operating expenses, to a fraction of U.S. levels.
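To see how electricity price and PUE combine into operating cost, the sketch below estimates annual energy spend as IT load × PUE × hours per year × price. The 1 MW IT load is hypothetical, the prices are midpoints of the ranges quoted in this section, and the same PUE of 1.1 is applied to every region purely to isolate the price effect (the article gives no PUE for the U.S. or Europe).

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def annual_energy_cost(it_load_kw: float, pue: float, price_per_kwh: float) -> float:
    """Annual electricity cost in USD for a facility.

    Total facility power = IT load * PUE, since PUE is the ratio of
    total facility energy to IT equipment energy.
    """
    return it_load_kw * pue * HOURS_PER_YEAR * price_per_kwh


# Midpoints of the $/kWh ranges quoted in the article.
PRICES = {
    "China (west)": (0.013 + 0.03) / 2,
    "China": (0.048 + 0.061) / 2,
    "U.S.": (0.08 + 0.12) / 2,
    "Europe": (0.10 + 0.15) / 2,
}

IT_LOAD_KW = 1000   # hypothetical 1 MW of IT equipment
ASSUMED_PUE = 1.1   # assumption: held equal across regions

costs = {
    region: annual_energy_cost(IT_LOAD_KW, ASSUMED_PUE, price)
    for region, price in PRICES.items()
}

for region, cost in costs.items():
    print(f"{region:<13} ${cost:,.0f} per year")
```

Under these assumptions the U.S. figure comes out at about 1.8 times the China-wide midpoint and several times the western-China midpoint; a higher real-world PUE outside China would widen that spread further.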

This isn't mere cost-cutting. When Chinese models reduce per-million-token costs to a fraction of those of their U.S. counterparts, developer adoption becomes a matter of economic rationality rather than technical preference. In February 2026, Chinese AI models surpassed U.S. models in weekly calls on OpenRouter for the first time, with four of the top five models being Chinese. Notably, Chinese users accounted for just 6.01% of OpenRouter's user base, indicating that the adoption was driven by overseas developers.

The U.S.'s 23x investment advantage hasn't translated into proportional performance leadership—a sign that as Moore's Law slows and chip miniaturization nears physical limits, "brute-force computing" yields diminishing returns. China's efficiency revolution, forced by embargoes, strikes at AI industrialization's core: cost.

Real-World Scenarios: Iterating Models in the Physical World

If efficiency is China's survival strategy, real-world applications are its evolutionary engine.

The Stanford report highlights China's overwhelming lead in industrial robot deployment: 295,000 units—nearly nine times the U.S. total and 54% of global installations. This dominance is no accident. China's manufacturing sector, the world's largest, offers AI deployment opportunities across port logistics, mining, and factory production lines.

In January 2026, the Ministry of Industry and Information Technology reported that AI penetration in pilot factories exceeded 70%, with over 6,000 vertical-domain models deployed, driving more than 1,700 key smart-manufacturing equipment and industrial software applications. Industrial agents capable of perception, decision-making, and execution are pushing smart manufacturing from automation to autonomy. In contrast, U.S. manufacturing AI penetration stands at just 34%, a legacy of deindustrialization since the 2010s.

This scenario density creates fundamental differences in iteration speed. Chinese AI models are refined daily in factories, ports, and mines, real physical environments that provide constant feedback. Compared with lab training on static datasets, this "combat-ready" iteration is 2-3x faster and is crucial for handling complex physical-world challenges.

The Stanford report notes a stark AI capability contradiction: "AI wins math Olympiad gold but still can't reliably read a clock." Top models achieve just 50.1% accuracy in analog clock recognition (vs. 90.1% for humans). Moving from digital to physical worlds causes sharp capability declines: robots achieve 89.4% success in software simulations but plummet to 12.4% in real-world tasks like folding clothes or washing dishes. The report describes this as a "jagged frontier"—razor-sharp in some areas, blunt in others.

Thus, accelerating AI deployment in real-world scenarios and accumulating physical-world data becomes a competitive edge. China's manufacturing base, diverse applications, and growing industrial robot fleet create structural advantages: models aren't "benchmarking" but "working."

Ecosystem Export: Redefining the AI Race's Endgame

With model performance gaps narrowing to 2.7%, competition shifts from technical duels to ecosystem battles.

China now leads in research output. In 2024, Chinese AI papers accounted for 20.6% of global citations (vs. 12.6% for the U.S.). Among the top 100 most-cited AI papers, China's count rose from 33 in 2021 to 41 in 2024, while the U.S. count fell from 64 to 46. In patents, China secured 97,206 AI grants in 2024, 74.2% of the 131,121 granted globally, while the U.S. share dropped to 12.1% from 42.8% in 2015.

More critical shifts are occurring in open-source ecosystems. Chinese open-source models now dominate among developers worldwide, particularly in "Global South" nations. DeepSeek-R1, released in early 2025, triggered download surges on Hugging Face far exceeding those of Llama. Alibaba's Qwen and ByteDance's Seed series followed with iterative open-source versions, creating a cluster effect for Chinese models in developer communities.

This open-source strategy offers strategic depth. It bypasses U.S. closed-ecosystem barriers—developers deploy locally and fine-tune freely without relying on U.S. APIs. Meanwhile, it establishes standards based on Chinese tech stacks. The Stanford report notes 44 nations now operate "nationally supported supercomputing clusters" with heavy AI infrastructure investment. As these countries build local AI capabilities, China's open-source models, frameworks, and deployment tools become more attractive than U.S. closed alternatives.

U.S. advantages remain significant. It leads in top-tier models (50 vs. China's 30), data centers (5,427 vs. China's 412), and venture capital (its AI startups raised tens of times more in 2025). Yet the Stanford report reveals troubling trends: the number of AI scholars migrating to the U.S. has plummeted by 89% since 2017, including an 80% drop last year alone. A drying talent pipeline and shrinking capital advantages are reshaping the fundamentals of the competition.

The AI race's outcome may hinge not on model size or data center count but on who converts AI into productivity at the lowest cost, fastest speed, and broadest scale. As efficiency and scenarios tear open the technological wall, that 2.7% gap could become a gateway to a new era.

Stanford's 2026 AI Index Report notes industry now develops over 90% of cutting-edge models, with AI agents handling real-world computer tasks surging from 12% to 66% success in 18 months. Technology is permeating the physical world at unprecedented speed. The depth, breadth, and cost of this penetration will ultimately determine who writes the AI era's rules. The game has changed.