From Hardware Race to Large Model Dominance in Autonomous Driving?

05/06 2026

Looking at the state of autonomous driving in 2026, a clear trend emerges. A few years ago, automakers competed fiercely at product launches over who had integrated more LiDAR sensors or whose chips delivered more computing power. Today, the discussion centers on large models. This does not mean hardware is obsolete; rather, it has become evident that simply adding sensors and computing power does not give vehicles the ability to learn and drive like humans.

Why Are Sensors No Longer the Centerpiece?

Initially, autonomous driving solutions depended heavily on hardware-based perception. Automakers aimed to outfit vehicles with the most sensitive sensors available, using high-definition cameras, ultrasonic sensors, and LiDAR to meticulously label every tree and streetlight in the vicinity. The prevailing logic was that if a vehicle could see accurately and far enough, it could reliably avoid obstacles. Consequently, hardware configuration became the primary yardstick for assessing a vehicle's intelligence.

However, as the demand for urban Navigation on Autopilot (NOA) surged, autonomous driving technology encountered a bottleneck. Despite collecting vast amounts of data through hardware, vehicles still grappled with unexpected scenarios. Situations like temporary traffic lights at construction sites, pets darting across the road, or false reflections from puddles posed significant challenges for traditional modular programs. These scenarios were endless, and no amount of code could encompass all real-world possibilities.

This highlighted a fundamental issue: traditional autonomous driving relied on manually programmed rules. Sensors could identify obstacles, but the decision-making layer often faltered because no rule had been written for the specific situation in front of it. The industry came to recognize that merely upgrading hardware was akin to patching up a shaky foundation. To enable vehicles to navigate complex environments, their reasoning logic had to evolve, shifting from manual rules to large models.

Starting with FSD V12, Tesla replaced manually programmed driving rules with a neural network, shrinking more than 300,000 lines of perception and planning code to just a few thousand. The V14.3 version released this year went further and took over the underlying control module, which was also originally composed of over 300,000 lines of C++ code, achieving for the first time a full-stack AI closed loop from perception to execution.

The Transition from Multiple-Choice to Intuitive Driving

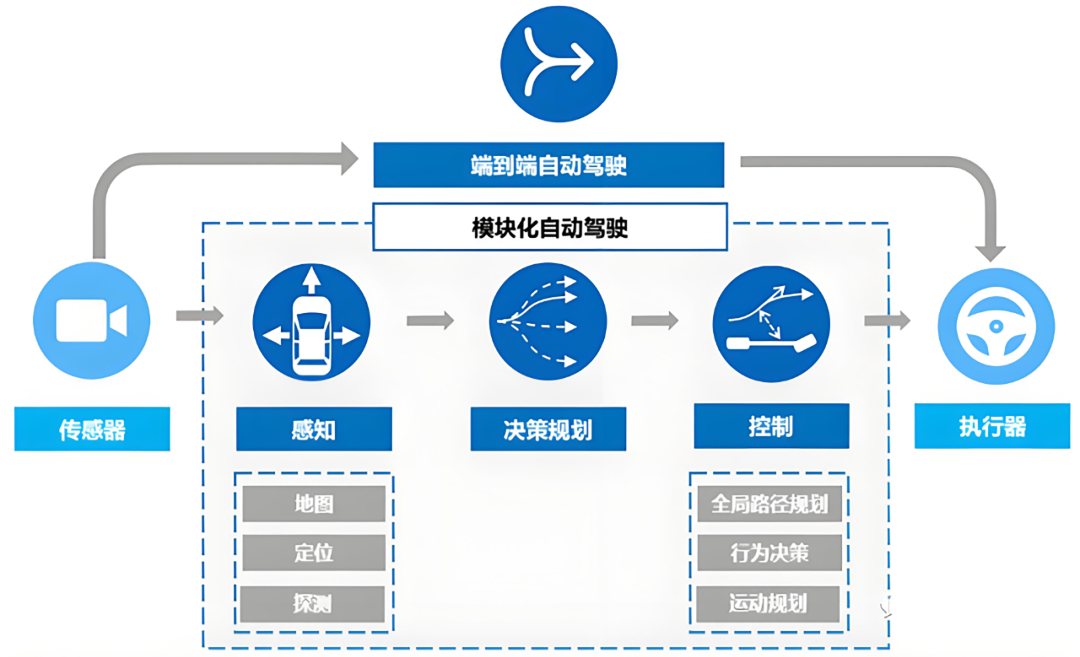

Previous autonomous driving systems operated akin to multiple-choice tests. When sensors detected an obstacle ahead, the system would consult its pre-learned knowledge. If the obstacle was a pedestrian, it would brake; if it was a plastic bag, it would proceed. However, when faced with uncertain objects, the system would hesitate or even freeze. Today's large model technology, particularly the end-to-end architectures prevalent by 2026, has entirely abandoned this segmented logic.

Image source: Internet
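The "multiple-choice" behavior described above can be sketched in a few lines. This is an illustrative toy, not any automaker's actual code: the `RULES` table, the `Action` names, and the 0.8 confidence threshold are all assumptions made for the example.

```python
from enum import Enum


class Action(Enum):
    BRAKE = "brake"
    PROCEED = "proceed"
    HOLD = "hold"  # the "hesitate or freeze" outcome


# Hypothetical hand-written rule table: one fixed answer per known object class.
RULES = {
    "pedestrian": Action.BRAKE,
    "plastic_bag": Action.PROCEED,
}


def rule_based_decision(label: str, confidence: float, threshold: float = 0.8) -> Action:
    """Look up the action for a detected object; fall back to HOLD when unsure."""
    # An unknown class or a low-confidence detection has no matching rule,
    # so the only safe answer left is to stop making progress.
    if confidence < threshold or label not in RULES:
        return Action.HOLD
    return RULES[label]
```

Calling `rule_based_decision("pedestrian", 0.95)` returns `Action.BRAKE`, while an unlisted label such as `"traffic_cone_on_truck"` returns `Action.HOLD` no matter how confident the detector is. Every new scenario needs a new table entry, which is the scaling problem the article describes.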

End-to-end systems feed camera images directly into a massive neural network, which outputs steering angles and throttle positions. They no longer rely on intermediate hand-written rules; instead, they learn human driving habits by analyzing tens of millions of hours of human driving video. The process mirrors how children learn to ride a bicycle, developing an instinctive response through countless repetitions. XPENG Motors' second-generation VLA large model officially entered mass production in the first quarter of this year, generating action commands directly from visual signals for the first time and eliminating the intermediate language translation step. It was first deployed in multiple 2026 Ultra models, including the P7+, G7, and X9.
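The core idea of learning controls from human demonstrations can be shown with a deliberately tiny example. Production systems train deep networks on raw video; the sketch below swaps that for a one-input linear model fitted by least squares, and the demonstration data (lane offset in meters paired with a human steering angle in degrees) is invented for illustration.

```python
# Hypothetical demonstrations: (lane offset in meters, human steering angle in degrees).
DEMOS = [(-1.0, 5.1), (-0.5, 2.4), (0.0, 0.1), (0.5, -2.6), (1.0, -4.9)]


def fit_policy(demos):
    """Least-squares fit of steering = gain * offset + bias from demonstrations."""
    n = len(demos)
    mean_x = sum(x for x, _ in demos) / n
    mean_y = sum(y for _, y in demos) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in demos)
    var = sum((x - mean_x) ** 2 for x, _ in demos)
    gain = cov / var
    bias = mean_y - gain * mean_x
    return gain, bias


def steer(offset, gain, bias):
    """Apply the learned policy to a new observation."""
    return gain * offset + bias


GAIN, BIAS = fit_policy(DEMOS)
```

No steering rule is written by hand here: the mapping from observation to control comes entirely from the recorded examples, and adding more demonstrations changes the behavior without touching the code. That property, scaled up to camera images and large networks, is what the end-to-end approach relies on.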

Today, smart cars handle complex road conditions with remarkable ease. For instance, when meeting oncoming traffic in a narrow alley, vehicles no longer stop awkwardly in the middle of the road; they adjust their position slightly based on the other driver's intentions, even communicating through subtle headlight movements. This human-like driving style cannot be achieved through hardware alone; it requires large models.

The mass production record of intelligent driving solution provider Momenta underscores this point. Between 2025 and 2026, deployments of its solution surged from nearly 300,000 units to over 800,000 units. With customers that include luxury brands such as Mercedes-Benz, BMW, and Audi, it took less than 40 days to deliver an additional 100,000 units, demonstrating market recognition of this technological direction.

Why Are World Models the Core Competency?

By 2026, the focus of autonomous driving competition has shifted to world models. World models enable vehicles not only to perceive the present but also to predict the future. Stacking hardware could only solve the problem of seeing, whereas large models give vehicles a form of spatial imagination. When a large truck blocks the view of the road ahead, the model reconstructs the likely scene in the blind spot from the surrounding road environment and anticipates non-motorized vehicles that might emerge.
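The predict-then-act loop behind this idea can be illustrated with a toy rollout. A real world model is a learned network that imagines rich future scenes; the sketch below substitutes a hand-written kinematic model and a single hypothesized obstacle hidden in the blind spot. The function names, candidate accelerations, 3-second horizon, and 0.1-second step are all assumptions chosen for the example.

```python
def rollout_is_safe(gap_m, speed_mps, accel_mps2, steps=30, dt=0.1):
    """Imagine the next steps*dt seconds; return False if the gap to the hazard closes."""
    for _ in range(steps):
        speed_mps = max(0.0, speed_mps + accel_mps2 * dt)  # the car cannot reverse
        gap_m -= speed_mps * dt
        if gap_m <= 0:
            return False  # predicted collision with the hypothesized hazard
    return True


def choose_accel(gap_m, speed_mps, candidates=(1.0, 0.0, -2.0, -4.0)):
    """Pick the most assertive acceleration whose imagined future stays collision-free."""
    for accel in candidates:  # ordered from most to least assertive
        if rollout_is_safe(gap_m, speed_mps, accel):
            return accel
    return candidates[-1]  # nothing is safe: fall back to the hardest braking
```

At 10 m/s with a possible hidden cyclist 100 m ahead, `choose_accel(100.0, 10.0)` keeps accelerating; with the hazard hypothesized 25 m ahead it selects gentle braking, and at 15 m it selects hard braking. The vehicle acts on a future it has simulated rather than on an obstacle it has already seen.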

This enhanced capability further diminishes the importance of hardware. Because large models have robust error correction and completion abilities, they no longer need LiDAR to map every centimeter. Even in rain or snow, when camera vision is blurred, large models can infer the road's direction and potential hazards from their understanding of physical laws. Automakers' world model deployments have also reached the mass production stage.

At this year's GTC Conference, Li Auto unveiled its next-generation autonomous driving foundation model, MindVLA-o1, built around implicit world model technology that lets vehicles imagine scenes several seconds into the future. NIO's 2026 EL7 is equipped with its self-developed 5nm chip, Thor, and the NIO World Model, bringing world model technology to the mainstream market at a starting price of RMB 179,800. SAIC Volkswagen's new flagship SUV, the ID.ERA 9X, made its global debut at the Beijing Auto Show with Momenta's R7 reinforcement learning world model, marking the official mass production of physical AI in vehicles. This deep understanding of the real world is the key to large models surpassing hardware stacking.

This also explains why automakers no longer blindly pursue computational power figures. In the past, higher TOPS (Tera Operations Per Second) values were considered superior, but now greater emphasis is placed on computational efficiency and model evolution speed. An optimally designed end-to-end large model demonstrates far smoother driving performance under the same computational power than traditional systems that merely calculate coordinates rigidly. Hardware now serves as the limbs and senses of large models, while the true soul is the neural network capable of understanding human driving logic.

What Does This Transformation Bring?

The shift from hardware to software models has directly lowered the barriers to entry for autonomous driving while raising the ceiling.

By reducing reliance on top-tier LiDAR and ultra-high computational power chips, the hardware costs of intelligent driving systems have begun to decline. This means more ordinary family vehicles can now enjoy high-level autonomous driving. In the 2026 market, many models priced around RMB 100,000 to 200,000 offer driving smoothness and safety surpassing those of expensive test vehicles from just a few years ago.

Take Horizon Robotics' latest solution as an example: it reduces hardware costs by RMB 1,500 to 4,000 per vehicle, rapidly popularizing high-level intelligent driving configurations in mainstream models priced between RMB 100,000 and 200,000. By the end of the first quarter this year, over 70 mass-produced models priced between RMB 100,000 and 150,000 offered Navigation on Autopilot (NOA) functionality, making intelligent driving accessible to all.

Meanwhile, the learning speed of autonomous driving has grown exponentially. In the hardware-stacking era, system upgrades required engineers to manually modify code, with each bug fix potentially taking months. Now, by feeding new, high-quality driving data to large models, they can learn to handle new traffic rules or special weather conditions in just a few days. This self-learning and self-evolving capability has transformed autonomous driving from a laboratory experiment into a mature product adaptable to different regions and cultural backgrounds worldwide.

Final Reflections

The move from hardware competition to large model competition represents a fundamental paradigm shift for the autonomous driving industry. To replicate human driving abilities, we must not only equip machines with good "eyes" but also endow them with a "brain" capable of thinking and predicting. This transformation marks autonomous driving's departure from its mechanistic infancy and its entry into a truly intelligent era.
