Understanding in One Read - Whether Intelligent/Self-Driving Cars Should Use LiDAR: It May Not Just Be a Technical Issue

05/06 2026

There has long been a sharp viewpoint within autonomous driving: LiDAR is the 'Marxism' of the autonomous driving world—replacing emergence with centralized measurement and understanding with data. While it appears rigorous, it is essentially lazy engineering.

That sounds good, and it's not entirely wrong. But it only tells half of the story.

On the other side, in China, the reality at the 2026 Beijing Auto Show looks like this: the Leapmotor A10 brings LiDAR to an 80,000-yuan car, BYD's Seagull-class models start offering LiDAR as an option, Changan's mass-produced LiDAR-equipped vehicles enter the 100,000-yuan price range, and Huawei's latest ADS solution features a fusion perception system with up to six LiDAR sensors. Meanwhile, Chinese companies RoboSense and Hesai Technology account for over 80% of the global automotive LiDAR market share.

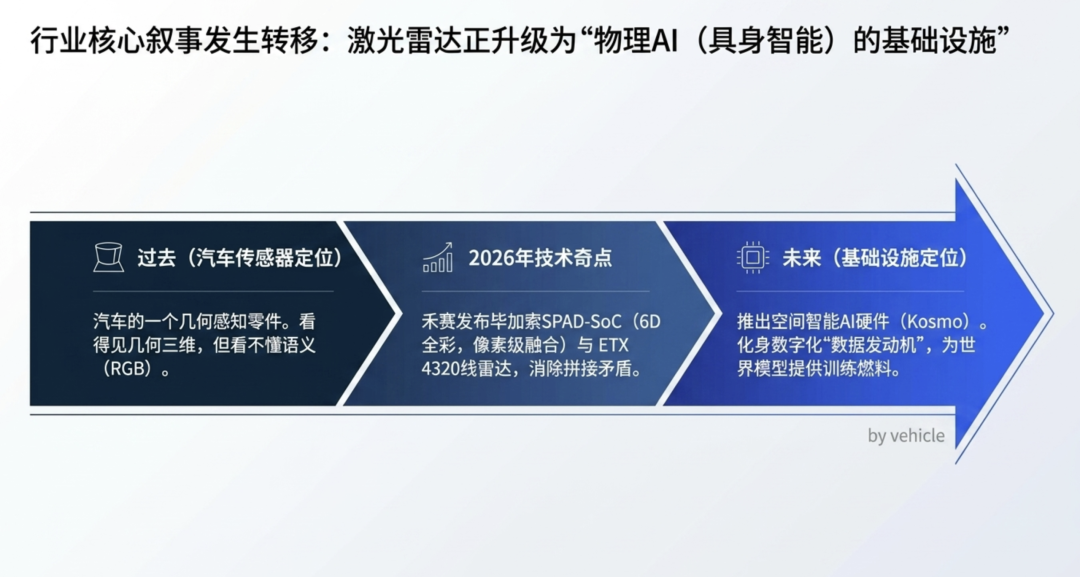

What's even more interesting is that at Hesai's recent Technology Open Day, the narrative of the entire LiDAR industry shifted from 'automotive sensors' to 'Physical AI infrastructure.' Hesai launched Picasso, the world's first 6D full-color LiDAR chip; the 4320-line ETX LiDAR; and Kosmo, spatial intelligence hardware for embodied AI. This signifies one thing: Act II of LiDAR has begun, and the protagonist of this act is not cars, but robots.

On one side, the Musk-XPeng camp declares that 'pure vision is the only correct path,' while on the other, the Huawei-BYD-Waymo camp votes with their feet by stacking hardware. Meanwhile, players like Hesai are betting on humanoid robots and world models. Who is right?

This article provides a five-dimensional analysis covering technical principles, engineering implementation, commercial aspects, geographic differentiation (the real battleground in Europe, the U.S., and Japan), and Physical AI (whether robots need LiDAR), along with projections across three time slices (now/2028/2030+). Finally, I offer my prediction: the answer to whether LiDAR should be used in cars or robots lies in the unasked half of the question.

Let's start with the conclusion, then explain why.

LiDAR is the 'crutch' of autonomous driving.

A person with a fracture needs a crutch, but once healed, they discard it. However, a severely injured patient with permanently misaligned bones—like a Robotaxi—may need crutches for life.

This doesn't mean LiDAR is 'useless,' nor does it mean 'pure vision will inevitably win.' It means: The value of a sensor depends not on the technology itself but on the product of algorithmic capability × scenario tolerance × cost structure. These three variables yield vastly different results at different times, for different vehicle models, and in different scenarios.

Keep this in mind: the five dimensions that follow expand on this point.

Dimension 1: Technical Principles—Musk Is Right, But Only 70% Right

The viewpoint quoted at the beginning of this article draws on Rich Sutton's 'The Bitter Lesson': Over the long term, general approaches that rely on scaling compute and data will always outperform handcrafted, clever architectures. This has been the core lesson of AI over the past 30 years, validated from Deep Blue to AlphaGo to GPT.

Applied to autonomous driving, this logic states: No matter how many sensors you stack, you can't outperform a sufficiently large end-to-end neural network + enough real-world data.

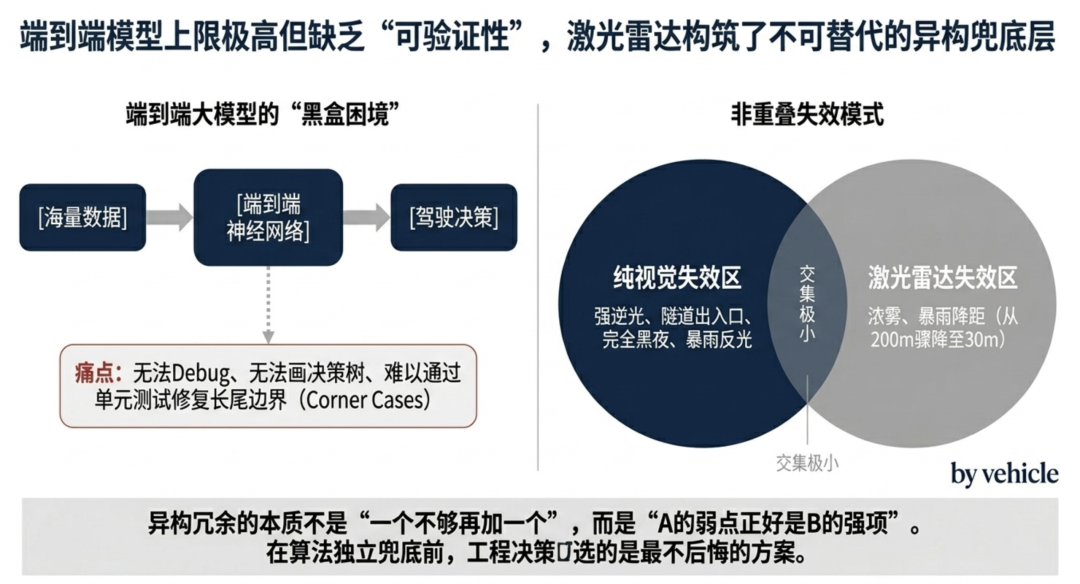

The first half of this judgment is correct. LiDAR is fundamentally geometric measurement—it tells you 'something is there' but not 'whether it's a plastic bag or a rock' or 'how it will move next.' The final 99.9999% reliability is a cognitive problem, not a perception problem. Musk is not wrong here.

But the second half contains a trap: Sutton's Bitter Lesson holds true under an implicit premise—that you can actually wait for the algorithm to outperform the hardware.

In autonomous driving, waiting can cost lives.

Here's a neat physical fact: LiDAR and cameras fail differently. Cameras 'blind' in strong backlight, tunnel entrances/exits, complete darkness, or heavy rain glare; LiDAR's point cloud quality plummets in dense fog, heavy rain, or snow (XPeng's real-world test: effective detection range drops from 200 meters to just 30 meters in heavy rain). However, their failure scenarios overlap minimally.

This is the value of 'redundancy.' It's not 'adding one more if one isn't enough,' but 'A's weakness is B's strength.'
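The redundancy argument can be made concrete with a toy calculation. The sketch below is only an illustration: the condition names follow the failure scenarios listed above, but every frequency is an invented assumption, not a measurement, and `degraded_share` is a hypothetical helper.

```python
# Toy model of complementary sensor failures.
# ASSUMPTION: all time shares below are invented for illustration only.
CONDITIONS = {
    # name: (share of driving time, camera degraded?, LiDAR degraded?)
    "clear":            (0.90, False, False),
    "strong_backlight": (0.04, True,  False),
    "tunnel_exit":      (0.02, True,  False),
    "dense_fog":        (0.02, False, True),
    "heavy_rain":       (0.02, True,  True),   # the small overlap
}


def degraded_share(use_camera: bool, use_lidar: bool) -> float:
    """Share of driving time in which every installed sensor is degraded."""
    total = 0.0
    for share, camera_bad, lidar_bad in CONDITIONS.values():
        flags = []
        if use_camera:
            flags.append(camera_bad)
        if use_lidar:
            flags.append(lidar_bad)
        # Perception is lost only when all installed sensors fail at once.
        if flags and all(flags):
            total += share
    return total


camera_only = degraded_share(use_camera=True, use_lidar=False)
lidar_only = degraded_share(use_camera=False, use_lidar=True)
fused = degraded_share(use_camera=True, use_lidar=True)

print(f"camera only: {camera_only:.0%}")  # 8%
print(f"LiDAR only:  {lidar_only:.0%}")   # 4%
print(f"fused:       {fused:.0%}")        # 2%
```

With these made-up numbers, the fused system is blind only in the one condition that defeats both sensors. The smaller that overlap, the more the second sensor is worth.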

This is why Waymo's sixth-generation system retains 16 × 17MP cameras + short-range LiDAR + imaging radar + external microphones—it addresses not perception accuracy but systemic risk from single-point failures.

This doesn't mean Tesla's pure vision approach is wrong. Tesla will likely succeed—but it's betting on a future that may take another 5-8 years to materialize. In those 5-8 years, should others give users seatbelts?

Dimension 2: Engineering Implementation—The 'Verifiability' Challenge of End-to-End Black Boxes

What engineers fear most is not 'whether this solution works' but 'how to explain it when it fails.'

End-to-end large models solve the ceiling problem of autonomous driving—Tesla's FSD V12, V13, and V14 keep getting smarter, with XPeng aiming for 'less than one disengagement per 100 km.' But they introduce a harder problem: verifiability.

When an end-to-end neural network hesitates for two seconds at an intersection or fails to brake in time for a suddenly darting child, engineers can't debug it.

They can't print intermediate variables, draw decision trees, or write unit tests to prevent recurrence. They can only feed more data, retrain, and pray the next version fixes it.

In this context, LiDAR becomes a 'failsafe layer.' It doesn't participate in the large model's intelligent decision-making—it does one thing: detect an object three meters ahead and brake, no matter what it is. This is why Li Auto's Li Xiang said the phrase that lingers in the industry: 'If Elon Musk came to China, Tesla would keep LiDAR too.' In China's complex road conditions—electric bikes, low-speed vehicles, construction barriers, sudden pedestrian crossings—the failsafe value of LiDAR against extreme long-tail scenarios is amplified to the extreme.
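A failsafe layer of this kind can be sketched in a few lines. This is a minimal illustration, not any automaker's implementation: the function name, the vehicle-frame convention, the corridor width, the height band, and the minimum hit count are all assumptions; only the three-meter threshold comes from the text above.

```python
from typing import Iterable, Tuple

# ASSUMPTION: points are in the vehicle frame, in metres:
# x forward, y left, z up, with z = 0 at road level.
Point = Tuple[float, float, float]


def should_emergency_brake(
    points: Iterable[Point],
    stop_distance_m: float = 3.0,   # "three meters ahead" from the text
    half_width_m: float = 1.0,      # hypothetical corridor half-width
    min_height_m: float = 0.2,      # ignore returns from the road surface
    max_height_m: float = 2.0,      # ignore overhead structures
    min_hits: int = 5,              # hypothetical noise threshold
) -> bool:
    """Return True if enough LiDAR returns fall inside the braking corridor.

    The check is purely geometric: it never asks what the object is.
    """
    hits = 0
    for x, y, z in points:
        in_path = 0.0 < x <= stop_distance_m and abs(y) <= half_width_m
        above_road = min_height_m <= z <= max_height_m
        if in_path and above_road:
            hits += 1
            if hits >= min_hits:
                return True
    return False


# Usage: five returns 2.5 m ahead trigger braking; a distant object does not.
near_obstacle = [(2.5, 0.1 * i - 0.2, 0.5) for i in range(5)]
far_obstacle = [(20.0, 0.0, 0.5)] * 10
print(should_emergency_brake(near_obstacle))  # True
print(should_emergency_brake(far_obstacle))   # False
```

The point of the sketch is that every line can be inspected and unit-tested, which is exactly the property the end-to-end network lacks.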

LiDAR is currently the most reliable sensor for this kind of direct detection.

After the Xiaomi SU7 highway intelligent driving accident in March 2025 that sparked nationwide discussion, Chinese automakers collectively shifted to a more conservative approach: Xiaomi YU7 comes standard with LiDAR + 4D millimeter-wave radar across all trims, while Li Auto's L-series intelligent driving refresh adds LiDAR to the Pro version too.

This doesn't mean Chinese automakers 'rely on stacking hardware due to technical inferiority.' Quite the opposite—it's about using hardware to protect users' lives before algorithms reach 'self-sufficient failsafe' levels. Engineering decisions choose not the most elegant solution but the least regrettable one.

Dimension 3: Commercial Aspects—The $200 Revolution + China's Hidden Industrial Chain Advantage

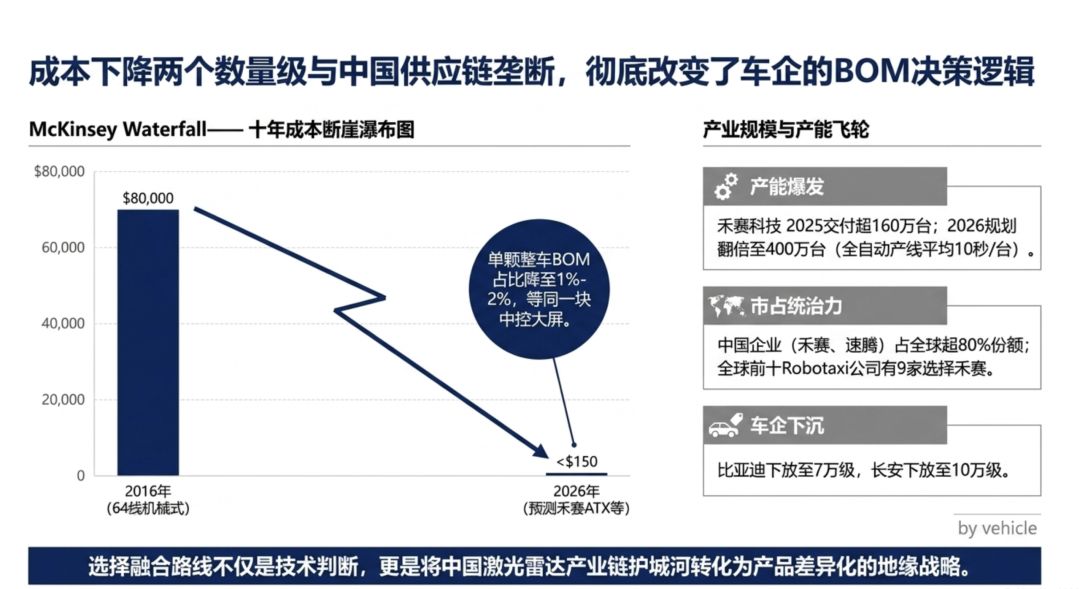

In 2016, a 64-line mechanical LiDAR cost $80,000. By 2025, mass-produced models like Hesai's ATX and RoboSense's MX dropped below $200—a 400-fold price reduction in a decade.

Even more staggeringly, Hesai's main ATX product will further drop to $150 in 2026. This is an economic variable that demands serious reconsideration. When a product's price falls by two orders of magnitude, your assessment of it must be rewritten.

This is why Musk's famous 2019 quote—'Anyone relying on lidar is doomed'—made sense when LiDAR was prohibitively expensive. He was right then. But by 2026, a single LiDAR's share of a vehicle's BOM has fallen to 1-2%, comparable to a high-end infotainment screen. When cost structures change, decisions follow.
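The arithmetic behind these figures is easy to check. The snippet below uses only the prices and BOM shares quoted above; the implied vehicle BOM range is a back-of-envelope inference from those numbers, not a figure from any automaker.

```python
import math

# Figures quoted in the text (USD).
price_2016 = 80_000   # 64-line mechanical LiDAR
price_2025 = 200      # mass-produced units such as ATX / MX
price_2026 = 150      # projected ATX price

fold_2025 = price_2016 / price_2025   # 400.0
fold_2026 = price_2016 / price_2026   # about 533
orders_of_magnitude = math.log10(fold_2025)

# Inference: if a $200 LiDAR is 1-2% of the BOM, the whole BOM is roughly:
implied_bom_at_1_percent = price_2025 * 100 // 1   # 20,000
implied_bom_at_2_percent = price_2025 * 100 // 2   # 10,000

print(f"2016 -> 2025: {fold_2025:.0f}x cheaper")
print(f"2016 -> 2026: {fold_2026:.0f}x cheaper")
print(f"orders of magnitude: {orders_of_magnitude:.2f}")
print(f"implied vehicle BOM: ${implied_bom_at_2_percent:,} to ${implied_bom_at_1_percent:,}")
```

A 400-fold drop is about 2.6 orders of magnitude, so "two orders of magnitude" is, if anything, an understatement.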

This explains why:

BYD: Invested in RoboSense, with its 'Divine Eye' system across the Dynasty and Ocean series down to 70,000 yuan

Changan: Plans to bring LiDAR to 100,000-yuan models

Huawei: Persists with a fusion route in ADS 5.0, with car BU CEO Jin Yuzhi stating publicly, 'L3/L4 requires LiDAR'—and adding more of them (our previous article, 'In-Depth Analysis of Huawei's 2026 Qiankun Tech Conference: How Industry Players Are Responding,' noted six LiDAR sensors already deployed)

Leapmotor, Geely, Chery: Follow suit by bringing LiDAR to lower-end models

Toyota's Chinese joint ventures: Choose Hesai's ATX LiDAR for 2026 models, marking traditional Japanese automakers' bet on China's LiDAR supply chain

Behind this lies an unspoken industrial subtext: China dominates the LiDAR supply chain (84% global share) but lags in process technology for automotive AI chips.

Choosing pure vision means ceding your moat to NVIDIA and Tesla's compute advantage. Choosing fusion means converting your supply chain strength into product differentiation.

Here's how the industrial scale looks:

As the global market leader, Hesai's financials best illustrate 'this business has taken off':

2025: Over 1.6 million units delivered, doubling annually for five years straight; peak monthly shipments exceed 200,000 units

2026: Capacity planning doubles to 4 million units, with fully automated lines producing one unit every 10 seconds

Cumulative deliveries exceed 2.4 million units, the world's first LiDAR maker to surpass one million annual deliveries

ATX refresh secures 4 million orders, with mass production starting April 2026

9 of the top 10 global Robotaxi companies choose Hesai—including Baidu Apollo Go, Didi Autonomous Driving, WeRide, Pony.ai, and Motional

Selected by NVIDIA as the LiDAR partner for its DRIVE AGX Hyperion 10 L4 platform

Thailand's 'Galileo' factory: Launching in early 2027, marking a global footprint
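As a sanity check on these capacity claims, the sketch below converts "one unit every 10 seconds" into annual throughput. It assumes the 10-second cycle refers to a single line running around the clock with no downtime, which is an idealization of mine, not something Hesai has stated.

```python
# Figures quoted in the text.
cycle_time_s = 10                  # one unit every 10 seconds
planned_capacity_2026 = 4_000_000  # units per year
delivered_2025 = 1_600_000         # units
peak_monthly_2025 = 200_000        # units per month

# ASSUMPTION: one line, running 24/7 with zero downtime.
SECONDS_PER_YEAR = 365 * 24 * 3600
units_per_line_year = SECONDS_PER_YEAR // cycle_time_s

# How many such idealized lines the 2026 plan would need.
line_equivalents = planned_capacity_2026 / units_per_line_year

# Peak monthly shipments, annualized, versus actual 2025 deliveries.
peak_annualized = peak_monthly_2025 * 12
capacity_vs_2025 = planned_capacity_2026 / delivered_2025

print(f"one line, nonstop: {units_per_line_year:,} units/year")
print(f"lines needed for 4M: {line_equivalents:.2f}")
print(f"peak month annualized: {peak_annualized:,} units")
print(f"2026 capacity vs 2025 deliveries: {capacity_vs_2025:.1f}x")
```

Under that assumption a single line tops out at about 3.15 million units a year, so the 4-million plan implies more than one line at the quoted cycle time.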

RoboSense's Q1 2026 data is equally staggering: 330,000 units shipped in a single quarter, with 22 automakers and 80 models secured.

These two Chinese leaders are using capacity and cost to propel the industry into a new phase.

This is why Chinese automakers collectively choose fusion—it's not just an engineering judgment but an industrial one.

This doesn't mean Chinese companies 'choose LiDAR out of protectionism.' It means technology route selection is never just about technology—it's a composite function of industrial structure, geopolitics, and supply chain security. Musk's optimal solution in the U.S. may not be Wang Chuanfu's in China.

Dimension 4: Geographic Differentiation—Latest Moves in Europe, Japan, and the U.S. Expose the False Dichotomy of 'Route Wars'

If you only compare Tesla vs. Chinese automakers, you might think this is a binary 'pure vision vs. fusion' war. But zoom out to Europe, Japan, and North America, and you'll see a quadripartite landscape where each camp offers different answers.

Europe: The Once-Firm L3+LiDAR Camp Collapses in 2026

This is the biggest news of the past six months, and many Chinese media outlets have not caught up yet:

Mercedes Drive Pilot: The world's first commercial L3 system in 2021, with 35 sensors + LiDAR, allowing 'eyes-off, hands-off' on German Autobahns—suspended in January 2026; the new S-Class switches to an L2++ scheme without LiDAR, 'MB.Drive Assist Pro'

BMW Personal Pilot L3: Launched in 2024—removed in the 7 Series refresh in April 2026, reverting to L2

Volvo: Once promised LiDAR standard across EX90/ES90—removed in 2026 models, terminating a five-year contract with Luminar and pushing it toward bankruptcy

Polestar 3: Followed Volvo, dropping standard LiDAR in 2026 models

After five years, European luxury brands collectively answered: 'L3 + LiDAR' consumer products are commercially unviable.

Why? Mercedes' Drive Pilot cost €6,000-9,000 but only worked on German highways, daytime, good weather, with a lead vehicle, and below 95 km/h. Consumers realized: 'I'm paying €7,000 for an extremely limited feature when Tesla's FSD (L2++) works in nearly all scenarios.'
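To see how narrow that operating envelope is, the conditions listed above can be written as a single gate. This is only an illustration of the restrictions as described in this article; the class, field names, and function are hypothetical and do not reflect Mercedes' actual software.

```python
from dataclasses import dataclass


@dataclass
class DrivingState:
    """Hypothetical snapshot of the conditions named in the text."""
    on_german_highway: bool
    is_daytime: bool
    good_weather: bool
    lead_vehicle_present: bool
    speed_kmh: float


MAX_SPEED_KMH = 95.0  # "below 95 km/h" from the text


def l3_feature_available(state: DrivingState) -> bool:
    """Every condition must hold at once for the L3 feature to engage."""
    return (
        state.on_german_highway
        and state.is_daytime
        and state.good_weather
        and state.lead_vehicle_present
        and state.speed_kmh < MAX_SPEED_KMH
    )


# Usage: a daytime traffic jam qualifies; the same road at night does not.
jam = DrivingState(True, True, True, True, 40.0)
night = DrivingState(True, False, True, True, 40.0)
print(l3_feature_available(jam))    # True
print(l3_feature_available(night))  # False
```

Five conditions joined by `and` means any single one failing hands control back to the driver, which is why buyers experienced the feature as rarely available.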

Mercedes CEO Ola Källenius said after a CES test drive: 'The car felt like it was on rails.'—he meant the NVIDIA-based L2++ without LiDAR, not his own L3 Drive Pilot.

A harsh truth emerges: L3 as a consumer product may have been a false premise from the start.

Japan: Conservative Progress, Betting on LiDAR but Outsourcing AI

Japan's three major automakers have chosen a consistent path—handling hardware integration in-house while outsourcing AI:

Toyota: Co-developing L4 platforms with Waymo, while Chinese joint venture models use Hesai's ATX LiDAR (2026 mass production)

Honda: Signed a multi-year deal with California AI firm Helm.ai for next-gen autonomous systems using LiDAR + AI; also invested in FMCW LiDAR company SiLC

Nissan: Ariya test cars feature 11 cameras + 5 millimeter-wave radars + next-gen LiDAR, partnering with the UK's Wayve for L4 mass production in 2027

Note Nissan's approach—a fusion perception scheme explicitly targeting L4 (skipping the awkward L3). The Japanese have learned from Europe's mistakes.

U.S.: Severely Fragmented, Three Paths Diverge

The U.S. landscape is most complex, housing both Tesla and Waymo—global benchmarks for their respective routes:

Tesla: FSD V14 rolling out widely, Cybercab mass production in April 2026—the pure vision endpoint

Waymo: Raised $16 billion in 2026 (valuation $126 billion), deploying in 20+ cities; sixth-gen system features 16 × 17MP cameras + short-range LiDAR + imaging radar + external microphones—the fusion route endpoint

GM Super Cruise: Announced a 'LiDAR + eyes-off' scheme for 2028, based on NVIDIA's platform, explicitly shifting to LiDAR + fusion

Zoox (Amazon) and Aurora: Using LiDAR across the board

Luminar: Once a rising star of U.S. LiDAR, faces bankruptcy in 2026 after losing the Volvo order, signaling the collapse of the U.S. LiDAR supply chain

The U.S. message is clear: Tesla has defined the answer for the personal vehicle market (pure vision); for Robotaxi and commercial fleets, LiDAR remains the standard solution.

Piecing Together the Four Puzzles, a Global Perspective Emerges

Overlaying the four regions, a critical conclusion previously overlooked by all becomes clear:

Every place is truly different.

L2++ (Mass Market): Vision-centric, with LiDAR adoption depending on supply chain and market preferences. The European market is still largely undeveloped, the NOA battle has just begun, and China’s adoption of LiDAR is reasonable.

L3 (Consumer Products for Highways/Restricted Scenarios): Its existence is questionable. Mercedes-Benz and BMW have exited, Huawei in China is pushing forward aggressively, and Tesla in the U.S. has skipped it.

L4 (Robotaxi/Commercial): LiDAR is a global consensus. Waymo, GM’s former Cruise, Nissan, Zoox, Aurora, Baidu Apollo Go, and XPeng Robotaxi all retain LiDAR.

The automotive industry is debating whether to advance to L3 or leap directly from L2++ to L4. The answer may no longer be purely technical—it could be another overlapping function of technology, business, and policy, much like LiDAR itself.

An Interesting Detail: Mercedes-Benz’s MB.Drive Assist Pro, developed for the Chinese market, uses a solution from Chinese company Momenta. Even European luxury brands acknowledge—China’s intelligent driving capabilities have become part of the global benchmark.

Dimension 5: Physical AI as the New Variable. Do Humanoid Robots Need LiDAR?

If you think the LiDAR story ends here, you’re wrong.

What truly re-energized the entire industry in 2026 wasn’t L3 or Robotaxi, but Physical AI—embodied intelligence represented by humanoid robots. And in this arena, the debate over “whether to use LiDAR” is even more intense than in automotive.

Turning Point: Hesai’s “Second Act” Declaration

On April 17, 2026, Hesai Technology hosted a tech open day dubbed a “watershed” by the industry. This wasn’t an ordinary product launch—it shifted the narrative of LiDAR from “automotive sensor” to “Physical AI infrastructure.”

Three announcements, each redefining the industry:

1) Picasso SPAD-SoC—The World’s First 6D Full-Color LiDAR Chip

Traditional LiDAR only perceives 3D space (XYZ). Picasso integrates RGB color data directly into the point cloud through chip-level pixel-level fusion—every point carries color data, enabling pixel-level spatiotemporal alignment and eliminating the need for “post-stitching” between cameras and LiDAR. This fundamentally resolves the core contradiction: “LiDAR sees geometry but doesn’t understand semantics.”

2) ETX 4320-Line LiDAR—The World’s First 6D Full-Color Ultra-High-Resolution Platform

The production version supports 1080/2160/4320-line configurations, with a range of 400 meters at 10% reflectivity, capable of identifying traffic cones at 300 meters and small animals at 280 meters. Mass production begins in late 2026, with deployment on flagship models in 2027–2028.

3) Kosmo Spatial Intelligence AI Hardware—Transforming Real-World Spatial Data from a “Luxury” to a “Standard Resource”

This is the biggest surprise. Kosmo isn’t for vehicles—it’s for embodied intelligence training. Its goal is to mass-collect high-fidelity 3D spatial data to feed robotic world models—akin to building a “data engine” for the Physical AI era.
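The difference between a "6D" point and the traditional "post-stitching" route described under Picasso can be sketched as a data structure. Everything here is an assumption for illustration: `Point6D` and `colorize_by_projection` are hypothetical names, the camera is a simple pinhole model, and the LiDAR and camera are assumed to share one coordinate frame. None of it describes Hesai's actual chip interface.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

RGB = Tuple[int, int, int]


@dataclass(frozen=True)
class Point6D:
    """One point carrying geometry (x, y, z) and color (r, g, b) together."""
    x: float
    y: float
    z: float
    r: int
    g: int
    b: int


def colorize_by_projection(
    points_xyz: Sequence[Tuple[float, float, float]],
    image: Sequence[Sequence[RGB]],  # image[row][col] = (r, g, b)
    fx: float, fy: float, cx: float, cy: float,  # pinhole intrinsics
) -> List[Point6D]:
    """Post-stitching: project each 3D point into a camera image to get color.

    ASSUMPTION: camera frame with x right, y down, z forward, and no
    extrinsic offset or time skew between LiDAR and camera.
    """
    height, width = len(image), len(image[0])
    colored = []
    for x, y, z in points_xyz:
        if z <= 0:
            continue  # behind the camera
        u = int(round(fx * x / z + cx))  # pixel column
        v = int(round(fy * y / z + cy))  # pixel row
        if 0 <= u < width and 0 <= v < height:
            r, g, b = image[v][u]
            colored.append(Point6D(x, y, z, r, g, b))
    return colored


# Usage with a tiny 2x2 image and unit intrinsics.
image = [[(255, 0, 0), (0, 255, 0)],
         [(0, 0, 255), (255, 255, 255)]]
points = [(1.0, 0.0, 1.0), (0.0, 2.0, 2.0), (0.0, 0.0, -1.0), (5.0, 0.0, 1.0)]
cloud = colorize_by_projection(points, image, fx=1.0, fy=1.0, cx=0.0, cy=0.0)
print(cloud[0])    # Point6D(x=1.0, y=0.0, z=1.0, r=0, g=255, b=0)
print(len(cloud))  # 2
```

In the post-stitching route, color depends on calibration and timing between two devices, and points that miss the image get no color at all. A sensor that emits `Point6D` records directly would skip this projection step.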

Hesai CEO David Li said on stage: “The core opportunity of this era is AI, especially the construction of Physical AI infrastructure and the digitization of the physical world.”

Translation: LiDAR is no longer just a car part—it’s the “eyes” of Physical AI.

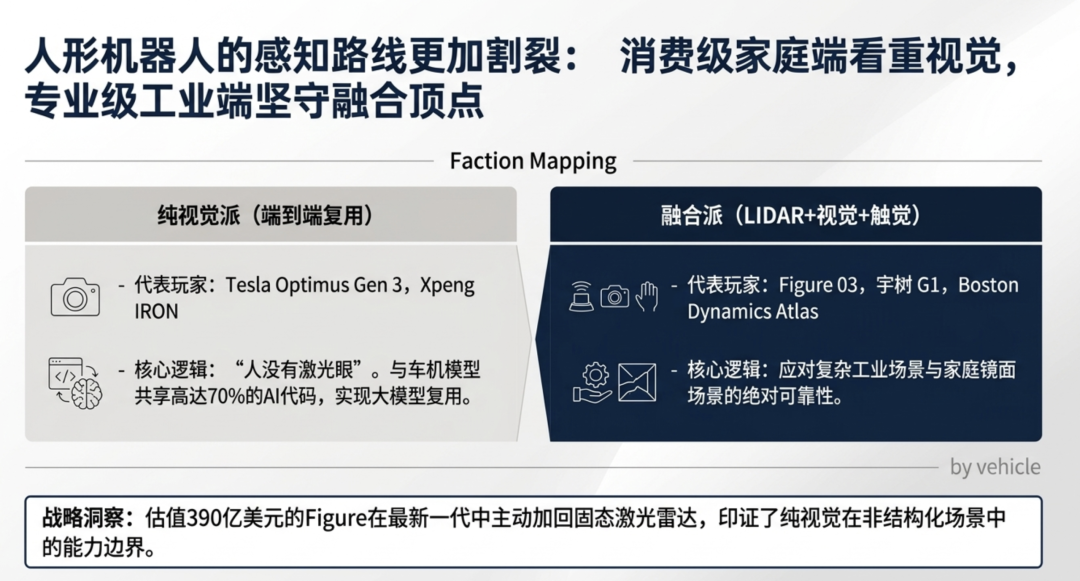

Robot Faction Wars: Pure Vision vs. Fusion—More Divided Than Automotive

The perception debate in humanoid robots is far more complex than in automotive. Let’s examine the two camps:

Pure Vision Camp (Replicating Tesla’s Automotive Approach):

Tesla Optimus: 8 cameras + FSD-derived neural network, no LiDAR. Gen 3 production begins at Fremont Factory in 2026.

XPeng IRON: Equipped with “AI Hawk Eye” 720° vision system + 3 Turing AI chips (2250 TOPS), no LiDAR. He Xiaopeng’s logic: IRON shares 70% of AI code with XPeng vehicles, so it must follow a pure vision route.

Early Figure 02: Primarily vision-dependent.

The shared philosophy of this camp: Humans don’t have laser eyes, so robots shouldn’t either. Plus, pure vision allows vehicles and robots to reuse the same end-to-end large model.

Fusion Camp (LiDAR + Cameras + Tactile):

Figure 03 (Released October 2025): 3 pairs of stereo RGB cameras + solid-state LiDAR + ToF depth + 6-axis torque sensors + fingertip tactile. This marks Figure’s reversal from the pure vision route of Figure 02.

Unitree G1: 3D LiDAR + full depth camera coverage, retail price: RMB 99,000.

Unitree Go2 Robot Dog: Standard 4D ultra-wide-angle LiDAR.

Boston Dynamics Atlas: LiDAR + stereo vision.

Honor’s First Humanoid Robot: Equipped with Hesai JT128 LiDAR, won the Beijing Robot Marathon championship at launch.

RoboSense: Launched Active Camera + 192-line Airy hemispherical LiDAR, designed specifically for robots.

A Highly Symbolic Turning Point: Figure’s shift from Figure 02 to Figure 03, actively adding solid-state LiDAR. This company, once deeply collaborating with OpenAI and now valued at $39 billion, chose the opposite path of Tesla. Why? Because home scenarios (glass, mirrors, low light, cluttered spaces) pose greater perception challenges than road scenarios—pure vision can’t keep up.

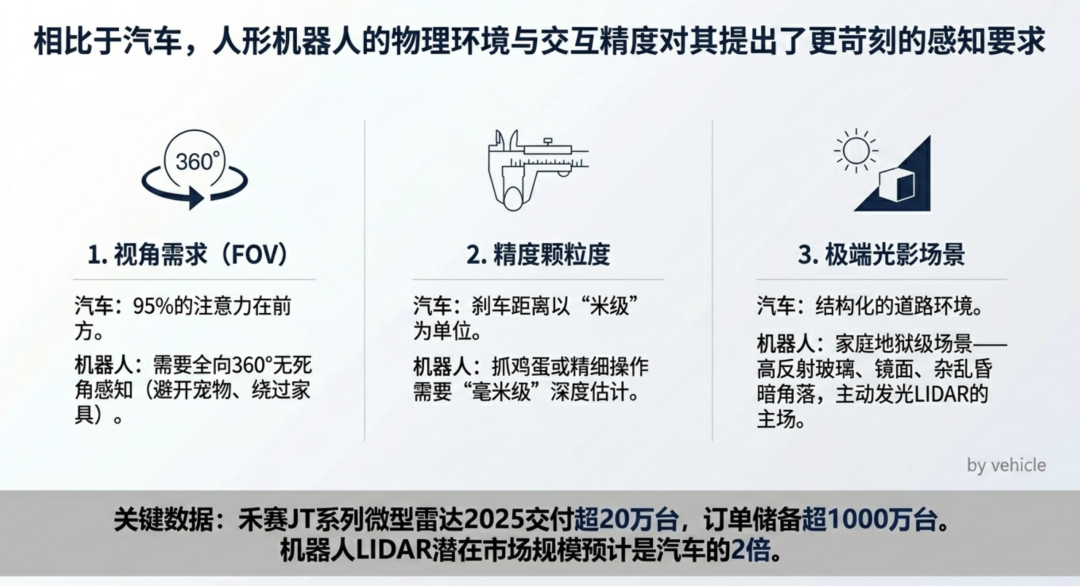

Why Do Robots Need LiDAR More Than Cars? Three Physical Reasons

You might ask: If even Tesla Optimus doesn’t use LiDAR, why do robots need it? The answer lies in the physical nature of usage scenarios:

① 360° Perception, Not Just “Front-Facing”

Cars focus 95% of attention forward. Robots need to walk, grasp objects, avoid pets, and navigate furniture—requiring omnidirectional perception. This is a natural weakness of cameras, which require stitching from multiple units, whereas LiDAR inherently provides 360° coverage.

② Millimeter-Level vs. Meter-Level Precision

Car braking distances are measured in meters; robotic egg-grasping precision is measured in millimeters. Visual depth estimation degrades sharply at close range, while LiDAR achieves centimeter-level or even millimeter-level accuracy.

③ Transparent/Reflective/Low-Light Scenarios Are a Camera Nightmare

How much glass, mirrors, and dimly lit corners are in homes? For pure vision robots, these are high-risk zones. LiDAR, which doesn’t rely on ambient light and actively emits lasers, thrives in these scenarios.

This is why Hesai’s JT series mini LiDAR, a compact 360° unit designed for robots, saw over 200,000 units delivered in 2025, secured exclusive supply agreements with leading brands like Dreame and Mova in 2026, and has over 10 million units in backlog. Industry insiders estimate the robotics LiDAR TAM (Total Addressable Market) to be twice that of automotive.

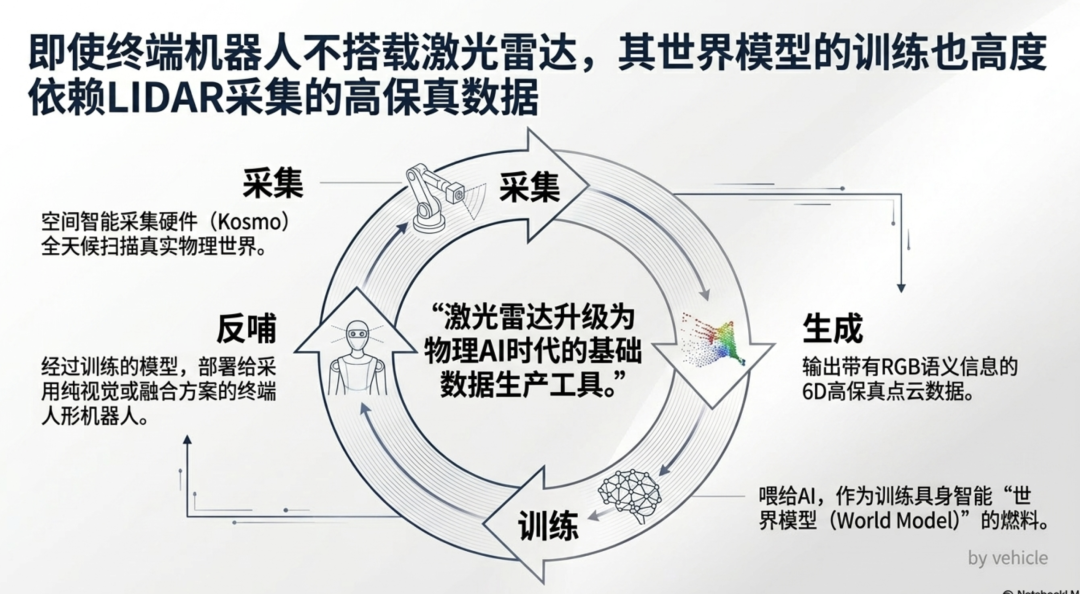

LiDAR’s Role Changes in the Physical AI Era

Here’s a counterintuitive insight: Even if the final robot product doesn’t use LiDAR, training it still requires LiDAR.

Why? Because world models need high-fidelity 3D spatial data for training. How do you collect this data? With LiDAR + camera fused point clouds. Hesai Kosmo and RoboSense’s Active Camera essentially do this—digitizing the physical world into AI training fuel.

This is why Hesai repositions LiDAR as “Physical AI infrastructure.” Even if Tesla Optimus ultimately ships without LiDAR, its training data likely comes from LiDAR-equipped data collection vehicles, robots, or dedicated devices like Kosmo.

This isn’t about “humanoid robots must have LiDAR.” It’s about LiDAR upgrading from a “car part” to a “foundational data production tool for the Physical AI era”—its market size and strategic importance have grown by an order of magnitude compared to the L2/L3 era.

Temporal Dimension: Three Time Slices of Evolution

Overlaying the five dimensions above onto a timeline clarifies the picture.

Now (2026): Four Camps Go Their Own Ways

Pure Vision Consumer Vehicle Camp: Tesla FSD V14 rolls out globally, Cybercab enters mass production in April, XPeng GX follows.

Fusion Robotaxi Camp: Waymo expands to 20+ cities (targeting 1 million rides/week by 2026), Nissan launches L4 in 2027, all-in on LiDAR.

China’s Intelligent Driving Democratization Camp: BYD/Changan/Huawei/Li Auto/Xiaomi double down on fusion routes, LiDAR aggressively penetrates the RMB 100,000 price tier.

European Luxury Surrender Camp: Mercedes-Benz/BMW/Volvo/Polestar collectively abandon L3+LiDAR, retreat to L2++ with NVIDIA compute, but keep LiDAR as an option.

Physical AI New Arena: Tesla Optimus and XPeng IRON go pure vision; Figure 03, Unitree G1, and Boston Dynamics opt for fusion; Hesai and RoboSense pivot from automotive to “Physical AI infrastructure.”

This stage isn’t about “who wins”—it’s about each camp rooting itself in its optimal ecological niche.

Mid-Term (2027–2028): L3 Consumer Products Fail Globally, Humanoid Robot Mass Production Begins

Here’s the article’s most counterintuitive prediction: By 2027–2028, L3 as a consumer product will begin to be declared dead globally.

Why? Europe has already answered (Mercedes/BMW exited), the U.S. skipped it (Tesla does L2++, Waymo does L4), and China, while touting L3, is actually shipping L2++ in disguise (so-called “L3” requiring driver readiness isn’t true L3).

Meanwhile, 2026–2028 is the critical window for humanoid robots to move from labs to mass production:

Tesla Optimus Gen 3 begins pilot production at Fremont, targeting consumer launch in 2027.

Figure 03’s BotQ factory reaches 12,000 units/year capacity, targeting 100,000 units in four years.

XPeng IRON is announced for mass production in 2026, entering factories and retail stores first.

Hesai’s ETX 6D full-color LiDAR enters mass production in H2 2026; Kosmo spatial intelligence hardware launches in late 2026.

The real races at this stage are threefold:

L2++ Ultimate Evolution: End-to-end large models push assisted driving to “nearly no takeover”—Tesla, XPeng, Horizon Robotics, Huawei, and Momenta collectively advance this path.

L4 Robotaxi Scale Expansion: Waymo targets 1 million rides/week by end-2026; XPeng, Baidu Apollo, and Nissan Wayve compete in different markets.

Physical AI Data Race: World model training demands massive 3D spatial data, opening a “second curve” for LiDAR companies. Hesai and RoboSense’s robot LiDAR shipments grow far faster than their automotive business.

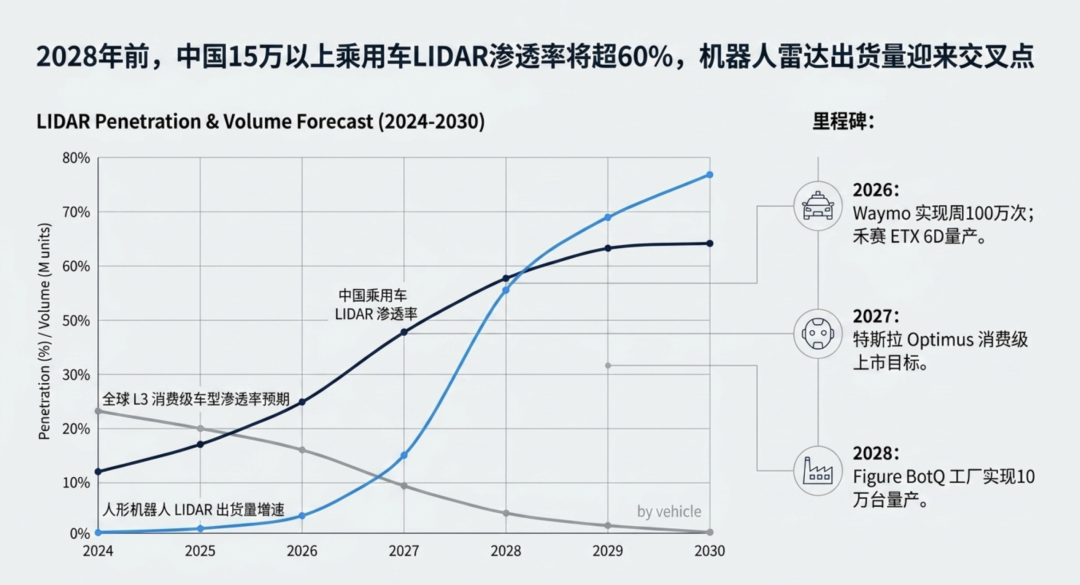

Forecast 1: By 2028, LiDAR penetration in China’s passenger vehicle market for models above RMB 150,000 will hit 60–70%.

Forecast 2: L3 “eyes-off, hands-off” consumer products will remain niche globally. The consensus will be—either L2++ or L4; the middle tier has no commercial value.

Forecast 3: Around 2028, LiDAR installations on humanoid robots will surpass automotive. Hesai’s claim of “robotics LiDAR TAM being twice that of cars” will begin to materialize.

Long-Term (2030+): Final Fork, But Not “Who Beats Who”

When end-to-end large models + sufficiently large real-world datasets + onboard compute reach a tipping point (XPeng Turing chip at 2200 TOPS, Li Auto “Schumacher” with 40 billion transistors, Tesla HW5/6), pure vision will truly catch up to fusion solutions.

But by then, the market will fragment into six segments, not a binary opposition:

Forecast 4: LiDAR won’t “die”—it will undergo a role transformation, downgrading from the “heart of intelligent driving” to a “safety belt for specific scenarios” (automotive) while upgrading to a “foundational data production tool for Physical AI” (robotics + world models). The total industry pie won’t shrink—it will grow larger and stronger.

Final Verdict: Do We Really Need LiDAR?

Back to the original question. My answer is layered—by region, by use case, and by species:

If you're asking about the ultimate technological form: Musk is right. The true endpoint for universal autonomous driving will be end-to-end and vision-centric. This philosophical victory is unshakable. But future machines must outperform humans, which means extreme scenarios will demand enhanced sensors, not just human-grade ones.

If you're asking whether Chinese smart vehicles should deploy LiDAR in the next five years: they should. Consumers accept paying more for hardware upgrades, and LiDAR supply chain costs have dropped to $150-200. Before end-to-end large models fully mature, this is cheap insurance and a mental anchor for capturing consumer trust.

If you're asking about Robotaxi adoption: it's mandatory. Nine of the world's top ten Robotaxi companies use LiDAR. When operators bear 100% liability, redundancy isn't a cost; it's a lifeline.

If you're asking about humanoid robots: it depends on the application. Consumer-grade home models may go pure vision (Tesla's path). Industrial and precision operation scenarios need LiDAR (the Figure 03/Unitree/Boston Dynamics path). But training world models for all robots requires high-fidelity 3D data from LiDAR; that's the industry's foundational logic.

If you're asking whether LiDAR will vanish post-2030: no, it will grow stronger but must evolve. It will upgrade from 'automotive component' to 'physical AI infrastructure.' Companies on the roadmap of Hesai's Picasso chip and Kosmo, or of RoboSense's Active Camera, are positioning themselves at the gateway to embodied AI for the next two decades.

In one sentence: LiDAR isn't the wrong answer. It may be a transitional solution—and the ticket to the next era.

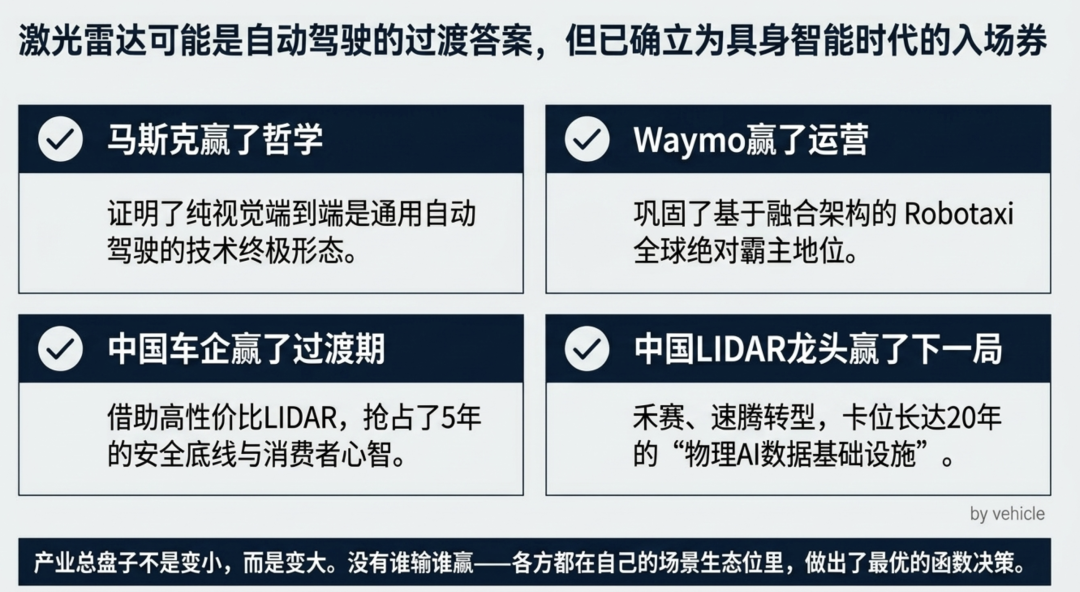

Musk won the philosophy debate. Waymo won Robotaxi. Chinese automakers won the five-year consumer transition period. European luxury brands won 'the wisdom to cut losses at the right time.' And companies like Hesai and RoboSense—they may win the longest game: data infrastructure for the physical AI era. No one lost—everyone nailed their half of the equation.

Unauthorized reproduction or excerpting is strictly prohibited.