Liang Wenfeng: Not Disrupting the Status Quo, DeepSeek Ascends to New Heights

05/06 2026

Liang Wenfeng did not set out to disrupt the status quo.

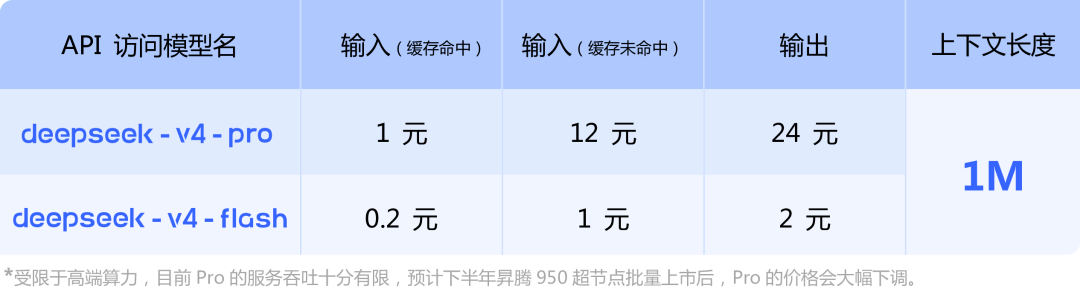

On April 24, DeepSeek-V4 (hereinafter referred to as "V4") was released with little fanfare. According to the official introduction, V4 comes in two versions: Pro and Flash, corresponding to the expert mode and rapid mode on the web and app interfaces, respectively.

The Pro version is the flagship offering, with a high capability ceiling, and is benchmarked against top-tier closed-source models such as GPT-5 and Gemini. It provides professional users with in-depth reasoning and analysis. The Flash version, by contrast, is lightweight and prioritizes speed and affordability. Its reasoning capabilities are comparable to those of the Pro version, but it falls slightly short in world knowledge, which makes it better suited to daily office tasks, simple information queries, and similar scenarios.

Not Disrupting, but Building on Existing Foundations

The updates didn't stop there. On April 30, DeepSeek began gradually rolling out an image recognition mode, closing its long-standing multimodal gap and letting the formerly "blind whale" finally see.

Many have speculated that Liang Wenfeng came to shake things up.

V4 introduces a new attention mechanism that gives it globally leading long-context capabilities. As a result, DeepSeek offers a context window of up to one million tokens as a standard feature across all versions, at no additional charge.

Previously, users seeking such capabilities had to pay extra or upgrade their subscription plans. For ordinary users, the most noticeable benefit is that cumbersome workarounds such as "splitting text and feeding it in segments" are no longer needed. Long reports, documents, and other materials can now be imported and processed in one go.
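To make the "splitting text" workaround concrete, here is a minimal sketch of how a client might decide whether a document needs to be segmented. The function name `plan_segments`, the 128,000-token comparison window, and the rough ratio of 4 characters per token are illustrative assumptions, not figures from DeepSeek; real tokenizers vary by language and content.

```python
def plan_segments(text: str, context_tokens: int,
                  chars_per_token: float = 4.0) -> list[str]:
    """Split text into pieces that each fit an assumed context window.

    chars_per_token is a crude heuristic (an assumption), not a tokenizer.
    """
    budget_chars = int(context_tokens * chars_per_token)
    if budget_chars <= 0:
        raise ValueError("context window must be positive")
    # Slice the text into consecutive pieces of at most budget_chars.
    return [text[i:i + budget_chars]
            for i in range(0, len(text), budget_chars)]


# A long report of about 1.2 million characters (~300k tokens under
# the heuristic above).
report = "x" * 1_200_000

# With a smaller, hypothetical 128k-token window the report must be split.
small_window = plan_segments(report, context_tokens=128_000)

# With a one-million-token window it fits in a single request.
large_window = plan_segments(report, context_tokens=1_000_000)
```

Under these assumptions the smaller window yields three segments, each of which loses the context of the others, while the one-million-token window handles the whole report in a single pass.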

This exemplifies Liang Wenfeng's "engineering mindset." Even when there is no generational leap in model capabilities, engineering optimizations can markedly improve the user experience.

On pricing, DeepSeek remains a "price disruptor." Based on industry estimates, its overall pricing is one-third that of mainstream competitors, or even lower. It is also compatible with both the OpenAI and Anthropic API formats, so developers can switch by modifying just one parameter, making migration nearly cost-free.
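The one-parameter switch can be pictured with a short sketch. It builds, but does not send, an OpenAI-style chat completion request using only the Python standard library. The host names, model name, and API key below are hypothetical placeholders, and the `Provider` type and `build_chat_request` helper are invented for illustration; in practice the model name and key usually change along with the endpoint.

```python
import json
from dataclasses import dataclass, replace
from urllib.request import Request


@dataclass(frozen=True)
class Provider:
    base_url: str   # the one parameter that changes in this sketch
    model: str      # placeholder model name (assumption)
    api_key: str    # placeholder credential (assumption)


def build_chat_request(provider: Provider, prompt: str) -> Request:
    """Build (without sending) an OpenAI-style chat completion request."""
    body = {
        "model": provider.model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return Request(
        url=provider.base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {provider.api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical endpoints; neither is a real service URL.
provider_a = Provider("https://api.provider-a.example/v1", "some-model", "KEY")
provider_b = replace(provider_a, base_url="https://api.provider-b.example/v1")

request_a = build_chat_request(provider_a, "Summarize this report.")
request_b = build_chat_request(provider_b, "Summarize this report.")
```

Because both providers accept the same request shape, the payload is byte-for-byte identical and only the target URL differs, which is what keeps the migration cost low.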

What defies "industry norms" is a "self-assessment" in their technical report: V4's capability level still trails behind GPT-5.4 and Gemini-3.1-Pro, with a development trajectory approximately 3 to 6 months behind leading closed-source models. In the AI industry, where companies often make monthly "blockbuster announcements" claiming "world's first" or "industry-leading" status, this seems out of place.

However, this is not a sign of weakness but a display of highly strategic restraint. Liang Wenfeng has no intention of competing with peers over "who is the strongest." He came not to disrupt but to build on existing foundations, and he has chosen a harder path for DeepSeek: a full-stack, independent, domestically built route outside NVIDIA's CUDA ecosystem.

This means DeepSeek must start from the ground up, adapting to domestic chips and building an independent training system. The price is slower iteration, external criticism, and a "technology time lag" of 3 to 6 months.

Faced with these challenges, Liang Wenfeng has chosen to let DeepSeek's capabilities speak rather than argue his case in words. He once said, "We didn't intend to become a disruptor; we just accidentally became one."

This silence was once misread as falling behind or losing its edge.

But Liang Wenfeng has a clear strategy. As "Jinduan" has observed, his background as a quantitative engineer makes him adept at breaking problems down to their fundamentals, checking whether each step is redundant, and achieving the same results with minimal resources. His reasoning for choosing open source follows the same logic: "In the face of disruptive technology, the moat formed by closed-source models is temporary."

The industry consensus is also clear: the AI race is a marathon. Short-term hype and rankings mean little; the ultimate competition lies in cost control, ecosystem barriers, and independent capabilities.

Such composure is rare in today's AI landscape.

DeepSeek Ascends to New Heights

Today, DeepSeek stands at a pivotal juncture: It has moved beyond the reckless pursuit, imitation, and overnight success of a startup but has not yet reached the pinnacle of defining industry rules.

Like a climber at the halfway point, it is midway up the AI mountain. Its current state is imperfect but authentic, lagging but resilient.

At this stage, three dimensions of tension and balance are evident:

Technically, DeepSeek is neither hasty nor sluggish, and it leads in certain areas. It is well aware of its gap with top-tier closed-source models but focuses on extending its strengths. For example, its capabilities in processing ultra-long contexts of up to one million tokens, low-cost inference, and open-source model efficiency optimization have reached the global top tier.

Commercially, DeepSeek is transitioning into a formal commercial entity. It once relied on High-Flyer Quant, seeking neither financing nor profit, like a group of idealistic geeks. But this is changing: Liang Wenfeng is now considering financing, and giants like Alibaba and Tencent are lining up to participate.

This process will not be smooth, as the old funding model has not fully wound down and the new self-sustaining system has not fully taken shape. But DeepSeek must take this step to close its commercial loop.

In terms of ecosystem, DeepSeek is rapidly catching up. For example, the image recognition mode fills its multimodal gap, while V4 redesigns the attention mechanism, optimizes agent capabilities, and achieves deep collaboration with Huawei's Ascend chips.

In other words, DeepSeek has secured its place in the AI finals and climbed to mid-mountain; the next step is the battle for the summit.

Figure | V4 Pricing

This is also a microcosm of China's current AI industry.

Over the past two to three years, global AI rules, standards, and paradigms have been almost entirely defined by Silicon Valley. Domestic AI companies have mostly played the roles of followers and imitators, buying others' GPUs and competing on others' platforms while passively adapting to industry rules.

DeepSeek, Kimi, QianWen, and others have been reshaping the game alongside industry partners over the past year.

Measured against Jensen Huang's "five-layer AI cake" theory (energy, chips, infrastructure, models, and applications), China's industry is gradually filling in the gaps layer by layer. DeepSeek's core value lies in breaking through the key barrier between domestic computing power and upper-layer large models, resolving the awkward situation in which domestic chips exist as hardware but lack adaptation, and computing power exists but goes undeployed.

Another strategically significant detail in this V4 update is that DeepSeek, for the first time in a technical report, listed Huawei's Ascend and NVIDIA GPUs side by side in its hardware validation list.

Moreover, the FP4 precision format selected by V4 happens to be the native precision supported by the Ascend 950 chip that Huawei released this year. DeepSeek has officially stated that once Ascend 950 supernodes ship at scale in the second half of the year, the usage price of V4-Pro will drop significantly.
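For readers unfamiliar with 4-bit floating point, the following sketch shows why FP4 is so compact and why hardware support matters. It assumes the common E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit), whose representable magnitudes are 0, 0.5, 1, 1.5, 2, 3, 4, and 6; the article does not say which FP4 variant V4 uses, so this is an assumption. The single floating-point scale per block is also a simplification; the function names are invented for illustration.

```python
# Representable magnitudes of an E2M1 4-bit float (assumed variant).
FP4_E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)


def quantize_fp4(values):
    """Map a block of floats to (scale, codes) on the signed E2M1 grid."""
    max_abs = max((abs(v) for v in values), default=0.0)
    # Scale the block so its largest magnitude lands on the top grid value.
    scale = max_abs / FP4_E2M1_MAGNITUDES[-1] if max_abs > 0 else 1.0
    codes = []
    for v in values:
        target = abs(v) / scale
        # Round to the nearest representable magnitude.
        nearest = min(FP4_E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        codes.append(-nearest if v < 0 else nearest)
    return scale, codes


def dequantize_fp4(scale, codes):
    """Recover approximate floats from the scaled 4-bit codes."""
    return [c * scale for c in codes]


weights = [6.0, -3.0, 0.4, 1.2, 0.0]
scale, codes = quantize_fp4(weights)
restored = dequantize_fp4(scale, codes)
```

Each value is stored in only 4 bits plus a shared scale, so memory and bandwidth fall sharply compared with 16-bit formats, at the cost of rounding error (here 0.4 becomes 0.5 and 1.2 becomes 1.0). A chip that executes this format natively avoids converting back to a wider type before computing.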

The significance is this: once the Ascend ecosystem is deeply adapted to and integrated with domestic large models, the domestic AI industry will break free from dependence on a single source of computing power, and the pricing and supply stability of computing power will be fundamentally restructured. Only then will V4's true influence become apparent.

More critically, DeepSeek has also forged a new industrial paradigm distinct from Silicon Valley's. The core logic of Silicon Valley AI is high-priced closed-source models, technological monopoly, and reaping global profits. DeepSeek instead pursues open-source inclusivity, extremely low cost, and full-stack independence, showing that AI development does not have to replicate the high-investment, high-monopoly path taken overseas. Chinese AI can rely on independent technology, inclusive ecosystems, and controllable costs to forge a differentiated global path.

At the end of DeepSeek's official announcement of V4, a small line of text stands out: "Not seduced by praise, not frightened by slander, following the path, and staying true to oneself."

This reflects DeepSeek's actual experience over the past 15 months: it has been placed on a pedestal amid hype and has also faced constant doubt and pessimism.

These sixteen characters, as the line reads in the original Chinese, can also be seen as Liang Wenfeng's response: "I know what you've said, but I will still move forward at my own pace."

Of course, during this mid-mountain preparation period, DeepSeek still faces one essential question: commercialization.

For a long time, the biggest controversy surrounding DeepSeek has been the ambiguity of its business model. This lack of transparency has made it difficult for outsiders to see what the company is actually doing, fueling speculation. What DeepSeek needs to do is complete its transformation from a technologically idealistic entity into a market-oriented commercial one. This will also help it pass through the mid-mountain stage quickly and reach the summit.

Liang Wenfeng and DeepSeek now stand at that mid-mountain point. The long-term story, which has only just begun to unfold, belongs not only to DeepSeek but also to China's AI industry.

References:

AIX Finance, 'Is DeepSeek, Which Actively "Concedes," Any Good This Time?'

DeepSeek, 'DeepSeek-V4 Preview: Entering the Era of Universal Access to Million-Context Capabilities'