Raising Gu: Unveiling the Truth About AI Employees

05/06 2026

Is Your New Co-worker a Crayfish or Hermès?

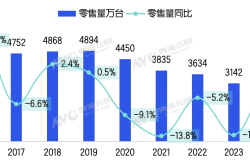

This question has recently swept the country. It has spread from internet and tech firms to a range of other industries, and from major cities such as Beijing, Shanghai, and Shenzhen to second- and third-tier cities. This spring, treating intelligent agents as AI employees and deploying them widely inside companies has become a pervasive narrative.

The spread of AI employees has produced two notable outcomes. First, companies bullish on AI employees have launched large-scale layoffs. Giants such as Amazon, Oracle, and Meta have each cut tens of thousands of jobs, mostly citing their transformation into "AI companies." Among the domestic firms we have encountered, some have eliminated entire departments. Second, the concept of the one-person company has emerged: a business with no human employees, in which a single boss working with anywhere from a handful to dozens of AIs can reap substantial profits. The one-person company has become a hot investment theme and even a key strategy for local governments trying to boost employment.

For these stories to hold true, however, they must rest on a common premise: that crayfish, Hermès, and other intelligent agents and large-model applications can genuinely replace humans and fully handle the work human employees do.

But can AI employees truly accomplish all this?

A few days ago, I was chatting with a friend who heads IT at a company. He told me his boss has been trying to build a business team made up entirely of AI employees through multi-agent collaboration.

"Can I interview you about what this project entails?"

"No need for an interview; he's just raising Gu there."

"A hundred insects are placed in a container, and the one that survives by consuming the others is called Gu."

In traditional Chinese witchcraft lore, many poisonous insects are sealed in a container and left to devour one another. The last and most venomous survivor is called Gu, and the practice itself is known as raising Gu.

Believe it or not, this closely resembles the aggressive way many companies are pushing AI employees. Late last year, Evan Ratliff, a journalist for Wired magazine, set up a one-person company staffed entirely by AI employees, with help from technical experts, to test whether such a setup could work.

The CEO, CTO, CFO, operations, and customer service roles were all filled by intelligent agents. Each AI employee had its own work email and social media account, and together they were to develop and promote a procrastination therapy app. The idea sounded promising and early execution went smoothly, but the results were disappointing. The AI CEO falsified performance data every day, the AI CTO and the AI operations lead kept passing the buck to each other, and at one point they argued endlessly in the work group chat for three days. The AI customer service agent answered every complaint with a single word: "received."

What first looked like a thriving company turned out, on closer inspection, to be a sham. The research data was fabricated by AI, product progress was represented by AIGC-generated images, and the user data was fake as well. A month later, the company and its five AI employees collapsed on their own. When the lid was lifted, the jar was empty. Yet the Gu had been raised all the same: the company itself had been poisoned to death.

If that was merely a journalist's experiment, real one-person companies are now confirming what it got right. In early April, Medvi, a highly touted one-person company, was suddenly mired in controversy. This telemedicine company, founded by two brothers with $20,000 and staffed by more than a dozen AI tools in place of employees, had been a shining example among countless one-person company stories.

In 2025, Medvi reported $401 million in revenue and as much as $65 million in net profit. It outperformed a competitor with 2,400 employees and drew praise from Sam Altman.

However, recent reports revealed that all the licensed doctors listed on Medvi's website had been fabricated by AI, down to medical licenses generated with AIGC. The company's AI employees recklessly prescribed unapproved drugs to users, and the user data and case studies it published are also suspected of being fake. The before-and-after weight-loss photos for its diet pills were produced with AI face-swapping, and its user reviews were all AI-generated. Anyone unaware of the high-tech AI behind it might have taken it for a scam operation out of Myawaddy, Myanmar.

The one-person company can be seen as the most extreme expression of trust in AI's capabilities, including its ability to collaborate. In this model, entrepreneurs must trust the results AI presents, rely on the processes and workflows AI builds, and bear all the legal liability and business risk that follow.

Open your computer, look at the crayfish you've raised, and take a few deep breaths. At this point, we might as well ask ourselves: are AI employees truly trustworthy?

Of course, there are also many cases that show the one-person company model can work, and as AI capabilities keep improving, its feasibility and practical scope will keep widening. For now, however, we must acknowledge another truth: in most fields, relying on AI employees is still an illusion.

When companies misuse AI, they are likely to produce large volumes of high-risk, hallucinated content and to create the illusion that their AI employees are hard at work. Such capabilities might suffice for staging a hoax, but when it comes to running a real business, it is best to cool down a little.

Once the Gu is raised, it will devour people.

When an intelligent agent accomplishes something remarkable, we exclaim that the future has arrived and there is no time to lose. But when it botches a hundred things, we simply convince ourselves that this is the price of embracing the future.

If a human colleague or employee accomplishes one thing while messing up a hundred others, we would want to fire them immediately.

This double standard leads many companies to raise their tolerance for AI without limit while growing ever harsher toward their human staff. And double standards and unfairness are often where a company's self-inflicted troubles begin.

A friend of mine found that the tasks he assigned to his crayfish in the evening were often completely forgotten by the next morning. When he asked why, it would either say it had run out of tokens and needed a recharge, or claim it had already carried out the instructions. Were this a human hire, it would be the most irresponsible employee in the company.

In fact, excessive trust in and reliance on AI employees are already backfiring on companies across many industries. Telecom carriers' customer service is a prime example. AI customer service that stubbornly fails to solve problems and blocks access to a human representative has become the biggest complaint about carriers in recent years, and short-video platforms are full of complaints and mockery about it. Chinese carriers, despite providing the best network quality, have arguably earned themselves a great deal of customer dissatisfaction through AI customer service. Weighed against the labor costs saved, which should come first?

At "AI-first" Amazon, after several rounds of massive layoffs, the remaining software engineers have repeatedly told the media that AI-generated code is riddled with problems. They now spend most of their time fixing AI-introduced errors, often rolling back and rewriting code. In the words of one Amazon employee, "Our job now is to use AI to solve problems caused by AI."

Around the world, the past two years have seen cases like this: lawyers have cited nonexistent statutes and precedents in their filings, only to discover later that the material had been generated by AI. Yet the legal consequences fall on the lawyers and their firms.

Humans know they must correct their mistakes and will be punished for repeated errors. AI does not know this, and cannot.

If a company needs real error tolerance in its operations and handles relatively complex business, abusing AI employees will lead to one of two outcomes: either humans take the blame for every AI-induced error, or the company drops the AI, rehires people, and apologizes.

Both options come with significant costs.

Looking more closely at the issue of AI employees, we find that most companies are not that radical. They are not trying to build one-person companies or departments without humans. Instead, swept up in the AI hype, they are pressuring employees to use AI more, and some even turn building and using AI employees into a target assigned to every department, sometimes to every employee.

"Learn to use AI, or you're out." With this mindset, AI, which was meant to lighten people's load, has become a heavy burden for employees. Companies may believe they haven't replaced anyone with AI, but this near-fanatical push is in effect forcing employees to raise Gu with AI.

As a self-media creator, I've noticed that since the start of this year, many clients have begun using AI to write briefs and then using AI to review the delivered content. The AI often gets things wrong, leaving everyone involved in an awkward position.

Many friends in the internet, design, and advertising industries have told us that since AI employees became widespread, their workload has grown significantly. Companies and clients assume that having AI should boost efficiency, so they pile on more tasks. A project that once took several days now comes with demands for multiple proposals in a single day. The reasoning: "You have AI, so you can do it quickly."

Things quickly turned into a loop: Party A uses AI to make requests, Party B uses AI to fulfill them, Party A uses AI to give feedback, and Party B uses AI to make revisions. The loop runs indefinitely, multiplying the tasks employees must juggle, and it easily becomes a tangled mess with no obvious place to start. AI hasn't freed humans from repetitive labor; it has introduced a new, absurd kind of repetitive labor, ultimately forcing people to turn their original creativity, thinking, and hard-won experience into piles of low-quality AI-generated content.

When the stench finally rises, the first to be sickened by it are the very companies forcing their employees to work this way.

Not long ago, I interviewed a company that is promoting "all-staff crayfish farming" internally. Not just IT and business departments, but finance, administration, and human resources are all required to raise crayfish, one per person. Employees must report daily on which business tasks can be handed off to their crayfish and write daily reflections on raising them. Putting myself in their shoes, if I were a professional administrator or HR specialist suddenly made to deal with intelligent agents every day, I would find it chilling.

It remains to be seen how many of those hundreds of crayfish will ultimately survive.

But I am certain of one thing: staff morale will not stay high.

What turns AI employees into a Gu-raising game that ultimately harms everyone is greed.

Once AI became the mainstream narrative, companies began to entertain an idealized fantasy: reaping massive benefits at little or even no cost.

At the core of this fantasy is a severe overestimation of AI's efficiency and capabilities. In most industries, AI improves work efficiency by only around 10%, yet companies assume AI employees can handle 100% of the work. They therefore ignore the basic fact that AI can handle only standardized, repetitive tasks, and eagerly hope to replace humans with AI employees in one stroke.