Next Time You Eat Poisonous Mushrooms, Don’t Blame Doubao for Its ‘Lack of Smarts’

05/06 2026

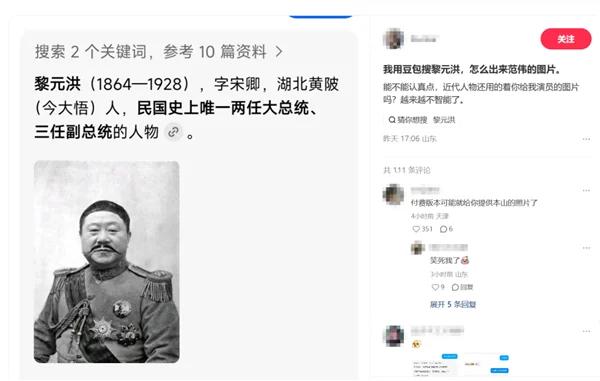

When a user searched for Li Yuanhong, Doubao mistakenly displayed a photoshopped image of Fan Wei.

(Doubao displayed a photoshopped image of Fan Wei when Li Yuanhong was searched)

This is a common kind of AI blunder; what’s striking is that this one even made it onto the trending-search list.

This isn’t the first time AI has made a 'silly' mistake, nor will it be the last. In an earlier case, a child asked an AI about a mousetrap; the AI misidentified it as a 'discarded go-kart toy,' vividly describing its 'square chassis and metal structure,' and the child’s finger got caught as a result. In another, a user showed an AI a poisonous mushroom, and the AI wrongly identified it as an edible king oyster mushroom, with serious consequences... Each time, the AI offered a sincere apology.

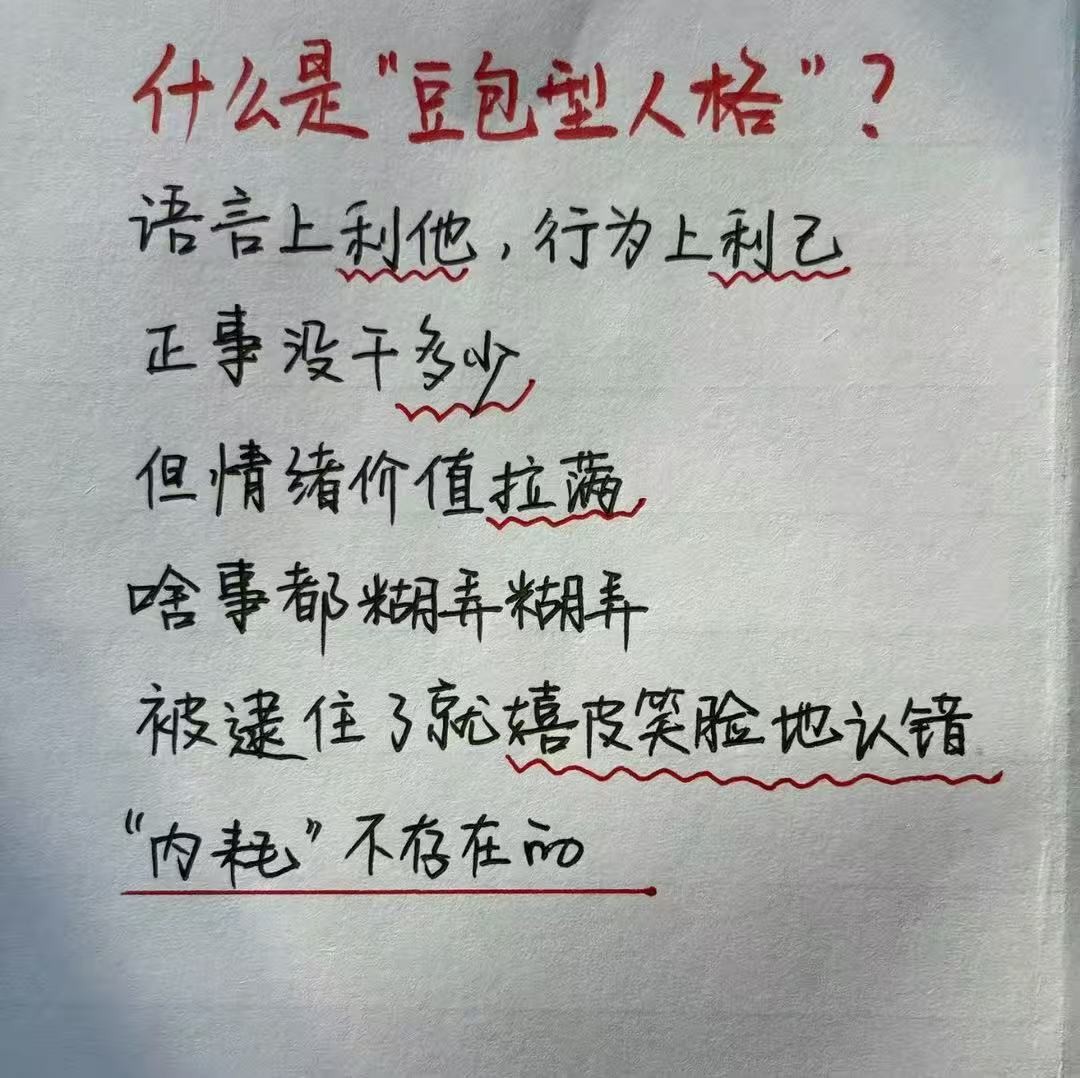

(Netizens summarize the 'Doubao personality type')

Is Doubao getting dumber? Not necessarily. Doubao’s monthly active users have surged to 345 million, so even if its error rate stays the same, the absolute number of errors grows with the user base. More slip-ups means more of them strike a chord with users and trend on social media. The proliferation of AI-failure memes is, in effect, an inverse indicator of how widely AI has been adopted.
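The arithmetic behind this is simple: at a constant per-query error rate, the number of wrong answers grows in proportion to total queries. The sketch below is a back-of-the-envelope model, not Doubao’s real data. Only the 345 million MAU figure comes from the article; the 1% error rate, the 10 queries per user per month, the 10 million "earlier" user base, and the helper name `expected_errors` are all hypothetical.

```python
def expected_errors(users: int, queries_per_user: float, error_rate: float) -> float:
    """Expected wrong answers per month, assuming a constant per-query error rate."""
    return users * queries_per_user * error_rate


# Hypothetical assumptions: only the 345 million MAU figure comes from the article.
ERROR_RATE = 0.01        # assumed, held constant as the user base grows
QUERIES_PER_USER = 10    # assumed monthly queries per user

early = expected_errors(10_000_000, QUERIES_PER_USER, ERROR_RATE)    # hypothetical earlier user base
today = expected_errors(345_000_000, QUERIES_PER_USER, ERROR_RATE)   # reported MAU

print(f"{early:,.0f} -> {today:,.0f} wrong answers per month ({today / early:.1f}x)")
```

The error rate never changes in this model, yet the number of visible mistakes grows 34.5-fold along with the user base.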

A few years ago, some users called Baidu Maps 'dumb,' while others said the same about Gaode Maps. In reality, neither is inherently smarter. Which one seems dumber depends on which you use more often, because the more you use a navigation app, the more of its errors you run into.

AI search misleads you because the internet misleads AI.

When Doubao mistook Fan Wei for Li Yuanhong, the official explanation traced the error to a film casting: Fan Wei was cast as Li Yuanhong, and the two do bear a resemblance. The photoshopped image of Fan Wei had been widely reported by the media and circulated online years ago, and some image libraries and encyclopedia pages mistakenly included it. As a result, the AI gave priority to these frequently repeated but erroneous images during retrieval.

The root cause of AI’s frequent errors is that the internet’s 'information pool' is already contaminated with incorrect data. When AI 'scoops' from this pool, it just happens to pick up the largest 'chunk' of misinformation.
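A toy model shows how this happens. Suppose a retriever uses "how often an image appears alongside the query text" as its signal of relevance. If the mislabeled image has been copied far more often than the authentic one, frequency alone will surface the wrong image. This is a hypothetical illustration, not Doubao’s actual retrieval pipeline; the file names, counts, and the `top_image` helper are invented for the example.

```python
from collections import Counter

# Hypothetical pool of (image, caption) pairs scraped from the web.
# The mislabeled image was reposted widely; the authentic one appears rarely.
pool = (
    [("fan_wei_edited.jpg", "Li Yuanhong")] * 7          # widely copied, mislabeled
    + [("li_yuanhong_archive.jpg", "Li Yuanhong")] * 2   # authentic but rare
)


def top_image(pool: list[tuple[str, str]], query: str) -> str:
    """Return the image that appears most often with the query caption.

    Frequency stands in for whatever popularity signals a real system might use.
    """
    counts = Counter(image for image, caption in pool if caption == query)
    return counts.most_common(1)[0][0]


print(top_image(pool, "Li Yuanhong"))
```

Nothing in this retriever is "wrong" in isolation; it faithfully returns the largest chunk in the pool, which here happens to be the misinformation.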

This isn’t a 'Doubao problem'; it’s an 'AI problem.' Users post AI-generated content online at massive scale, and that content flows back into the data AI draws on, so the contamination feeds on itself. NewsGuard data from August 2025 showed that 10 mainstream AI tools repeated false information on news topics 35% of the time, up from 18% a year earlier. Meanwhile, 47% of the claims in GPT-4.5’s generated answers lacked supporting evidence, and for Perplexity’s deep research tool the figure was a staggering 97.5%.

In other words, every AI search result you see statistically contains some degree of noise.

A friend recently told me that AI search is now 99% accurate, with only 1% of results being 'silly,' so we just need to spot that 1% to use it safely. The data, however, puts accuracy lower than that. According to Google’s internal tests, Gemini’s AI overviews were 91% accurate across 4,326 samples: impressive, but that still means roughly 9 wrong answers in every 100, or about 1 in 11.

The situation for Chinese-language AI search is more complicated. 'Self-media' platforms flood the internet with low-quality content, severely polluting Chinese-language web text. Many new-media platforms now resemble 'mountains of nonsense,' filled with AI-generated and AI-rewritten pseudo-original content.
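To make the gap between the friend’s claim and the test result concrete, here is the arithmetic on a batch the size of the one quoted above. The 4,326-sample size and the 99% and 91% figures come from the text; treating every answer as an independent trial with the same accuracy is a simplifying assumption, and `expected_wrong` is a hypothetical helper name.

```python
SAMPLES = 4_326  # sample size quoted in the article


def expected_wrong(samples: int, accuracy: float) -> float:
    """Expected number of wrong answers in a batch at a given accuracy."""
    return samples * (1 - accuracy)


for label, accuracy in [("friend's 99% claim", 0.99), ("reported 91% accuracy", 0.91)]:
    wrong = expected_wrong(SAMPLES, accuracy)
    one_in = 1 / (1 - accuracy)  # how many answers, on average, per wrong one
    print(f"{label}: ~{wrong:.0f} wrong of {SAMPLES}, about 1 in {one_in:.0f}")
```

At 99% the batch would contain about 43 wrong answers; at the reported 91% it contains about 389, nine times as many.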

Technically, given the quality of the data and the way large models work, 99% accuracy is out of reach for AI search, and 100% accuracy is like absolute zero: approachable but never achievable.

Moreover, some questions have no single correct answer. AI’s knowledge comes from the internet, the internet’s information comes from humans, and human knowledge isn’t always clear-cut. A 2025 Stanford paper in Nature Machine Intelligence put it bluntly: the latest large language models reach a maximum average accuracy of only 91.5% when verifying factual data, and when users hold false beliefs, the models struggle to reliably distinguish 'what the user firmly believes' from 'what is true.' That isn’t surprising. If humans themselves can’t always tell 'perceived fact' from 'actual fact,' how can we expect AI to?

Why are humans increasingly addicted to AI search despite its 'silliness'?

The truly interesting question isn’t 'why does AI make mistakes?' but 'why do people keep using it despite its errors?'

People do have alternatives. Anyone who rejects AI search can still fall back on traditional search engines like Baidu or Google and their classic lists of links. Yet Doubao, criticized daily for its errors, has 345 million monthly active users, and Qianwen, Wenxin, Yuanbao, and DeepSeek are growing rapidly too. AI search is overtaking traditional search.

Traditional search engines merely return a list of web links ranked by relevance and leave users to sift through them one by one, which is inefficient. AI search raises efficiency by orders of magnitude by delivering a single answer that is, in theory, 100% accurate.

But a 'single answer' is a low-fault-tolerance design. If users base their decisions entirely on an answer that may be wrong, the consequences can be severe, ranging from trending social-media jokes to poisoning incidents.

It’s like asking for directions. Baidu hands you a map and lets you find the way yourself; if you get lost, you blame yourself and still thank Baidu. Doubao tells you to 'head east and turn left at the third intersection'; if you end up heading west, you’ll be furious with Doubao, even though it didn’t mislead you on purpose.
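One way to see why a single answer tolerates errors so poorly is a simple probability sketch. Under two loud simplifying assumptions, that each result is independently correct with the same probability and that a user judging a list can recognize a correct link when one is present, a list of k links contains at least one correct result with probability 1 - (1 - p)^k, while a single answer is correct only with probability p. The 91% per-result figure is borrowed from the text purely for illustration, and `p_correct_available` is a hypothetical name.

```python
def p_correct_available(p_correct: float, k: int) -> float:
    """Probability that at least one of k results is correct.

    Simplifying assumption: each result is independently correct with probability p_correct.
    """
    return 1 - (1 - p_correct) ** k


P = 0.91  # assumed per-result accuracy, borrowed from the 91% figure for illustration

single_answer = p_correct_available(P, 1)   # AI search: one answer, taken as given
link_list = p_correct_available(P, 5)       # traditional search: user judges five links

print(f"single answer: {single_answer:.4f}, five links: {link_list:.6f}")
```

The list only pays off if the user actually does the judging; that extra work is exactly the inefficiency AI search removes, and with it the safety margin.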

Traditional search prioritizes recall: surfacing as many results relevant to the search terms as possible. AI search prioritizes efficiency and accuracy. With AI’s accuracy currently around 90% (going by the Google Gemini figure), why do people increasingly prefer it to traditional search, which offers multiple results for you to judge?

Here’s a provocative claim: Human demand for information precision is overestimated.

In daily life, 99% of searches aren’t life-or-death decisions. Checking the weather, planning a trip, researching skincare, organizing ideas, or gossiping: minor inaccuracies there won’t cause serious harm. Take the Li Yuanhong incident. For 99% of people it’s just after-dinner banter; nobody is going to reassess late Qing history because of it.

However, anyone who uses AI search for academic research, investment decisions, or medical plans can’t simply blame the AI when it gets things wrong. In such scenarios, information must be 100% accurate, and AI answers are 'for reference only.'

"For most search scenarios, 91% accuracy suffices. Moreover, before AI search, were the 'weight-loss notes' on Xiaohongshu or 'top dermatology hospitals' from search engines truly reliable? They might have been ads. Questions like 'Can I keep my phone under my pillow?' or 'Can I drink cold water during menstruation?' have no standard answers—AI merely reflects human knowledge chaos.

This widespread, low-risk '91% is enough' demand is what drives AI search adoption: the error rate is high, but as long as errors aren’t fatal, people put efficiency ahead of precision.

This logic breaks down only when AI is used for complex, serious, or 'physical' decisions with real-world consequences, such as confirming whether a mushroom is edible. When life, health, property, or other high-risk decisions are at stake, 91% accuracy can cost dearly.
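The "91% is enough until it isn’t" logic can be written as a simple expected-loss rule: acting on an unchecked answer costs, on average, the error rate times the cost of being wrong, and it is worth verifying only when that exceeds the cost of checking. This is a minimal sketch with hypothetical costs in arbitrary units; it also assumes, unrealistically, that checking catches every error. The function names are illustrative.

```python
def expected_loss(accuracy: float, cost_of_error: float) -> float:
    """Average cost of acting on an answer without checking it."""
    return (1 - accuracy) * cost_of_error


def should_verify(accuracy: float, cost_of_error: float, cost_of_checking: float) -> bool:
    """Verify only when the expected loss exceeds the cost of checking.

    Assumes (unrealistically) that checking always catches the error.
    """
    return expected_loss(accuracy, cost_of_error) > cost_of_checking


ACCURACY = 0.91  # figure quoted in the article

# Hypothetical costs in arbitrary units.
print(should_verify(ACCURACY, cost_of_error=1, cost_of_checking=5))        # weather check
print(should_verify(ACCURACY, cost_of_error=100_000, cost_of_checking=5))  # mushroom edibility
```

The accuracy is identical in both calls; only the stakes change, and with them the right amount of trust.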

Thus, 'high-stakes' fields like medicine, law, and finance now use 'specialized AI' (e.g., Ant’s Aifu for healthcare) instead of 'general AI.' But even they can’t guarantee 100% accuracy.

The responsibility lies with you: All systems have bugs, including AI.

Two thousand years ago, Mencius said, 'Trusting books entirely is worse than having no books.' If written knowledge isn’t always correct, how can we trust AI 100%? 'Trusting AI entirely is worse than having no AI.'

"Someone driving an electric car with autopilot takes their hands off the wheel to browse Moments on WeChat, then blames the automaker after an accident. Yet manufacturers state in user agreements that 'the driver is primarily responsible for vehicle operation.' Ignoring the 'fine print' doesn’t shift blame.

The same applies to AI search. As humans rely more on AI, we must not only expect vendors to train AI more accurately but also train our own judgment. After IQ and EQ, 'information literacy' (discerning true from false) will become vital in the AI era. No company can offer a 100% accurate AI—you must keep the 'steering wheel' firmly in your hands.

Any system has bugs; any AI output may be wrong. Remembering this is the first step to using AI correctly.

Back to Doubao. The Li Yuanhong and Fan Wei mix-up has no bearing on the debate over whether Doubao should charge. I’ve discussed why good AI must charge in another article, 'Paid Version at 68 RMB/Month! Can Doubao Sustain Itself?' The Paper has suggested that AI should 'clean house before inviting guests' before charging widely. The problem is that AI’s 'house' can never be perfectly clean: even ChatGPT’s paid version can’t guarantee 100% accuracy, and no AI can. Even in productivity scenarios such as content creation, AI output shouldn’t be used verbatim.

Judging, reviewing, and verifying results—'using AI correctly'—is humanity’s core value in the AI era.

So: Next time you eat poisonous mushrooms, don’t blame Doubao.