Voice Interaction: The New Favorite in the AI Field Overnight—GPT-RT2 Unveiled, Altman Suggests Typing is Outdated?

05/09 2026

Why type when you can speak?

In February 2024, OpenAI launched Sora, an AI model capable of generating videos, which swiftly revolutionized content creation in the mobile internet era. Even Disney showed interest, planning to invest $1 billion and integrate its core film and television intellectual property into Sora 2. However, in March 2026, OpenAI announced the closure of Sora, with related APIs ceasing operations in September.

In response, OpenAI clarified that it was "reallocating computing resources to core enterprise products."

So, what kind of products warranted such a drastic move by OpenAI? In April, OpenAI rolled out new services like GPT-Image 2.0 and GPT-5.5. On May 7, it followed up with GPT-5.5 Instant and the focus of today's discussion: the GPT-Realtime-2 model series.

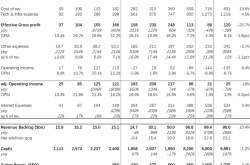

GPT RT2: AI Speaking Like a Human

In simple terms, GPT-Realtime-2 (abbreviated as GPT RT2) is a model series that comprehensively optimizes AI voice capabilities, encompassing three branches: the original version (GPT-Realtime-2), translation (GPT-Realtime-Translate), and transcription (GPT-Realtime-Whisper). GPT-Realtime-2 boasts GPT-5 level reasoning capabilities, allowing developers to customize the model's reasoning depth to balance accuracy, timeliness, and computing costs.

Image Source: OpenAI

The primary goal of these new technologies is to enable AI to converse naturally like a human.

Many AI models today already excel at TTS (text-to-speech) timbre and sound very close to human speech. Many of the nuisance calls we receive from carriers and banks, for example, are AI-generated. When call audio quality is high, it can be hard to tell whether the other party is human or AI.

However, the moment we start speaking, these AI customer service agents reveal their true nature. In Leitech's view, the capability gap between AI voice models and humans mainly lies in complex task processing. Consider a classic joke:

"Buy a watermelon on the way home from work, and if you see apples, buy two."

An AI without reasoning capabilities might interpret "buy two" as referring to either watermelons or apples, depending on its own understanding. In contrast, an AI with reasoning capabilities would recognize the ambiguity and ask for clearer instructions. Another example: when you ask the car's infotainment system to "fold the passenger seat and activate zero-gravity mode," can the system infer that you want zero-gravity mode for the seat behind the passenger seat?

The emergence of GPT-Realtime-2 equips AI with the ability to truly understand user needs.

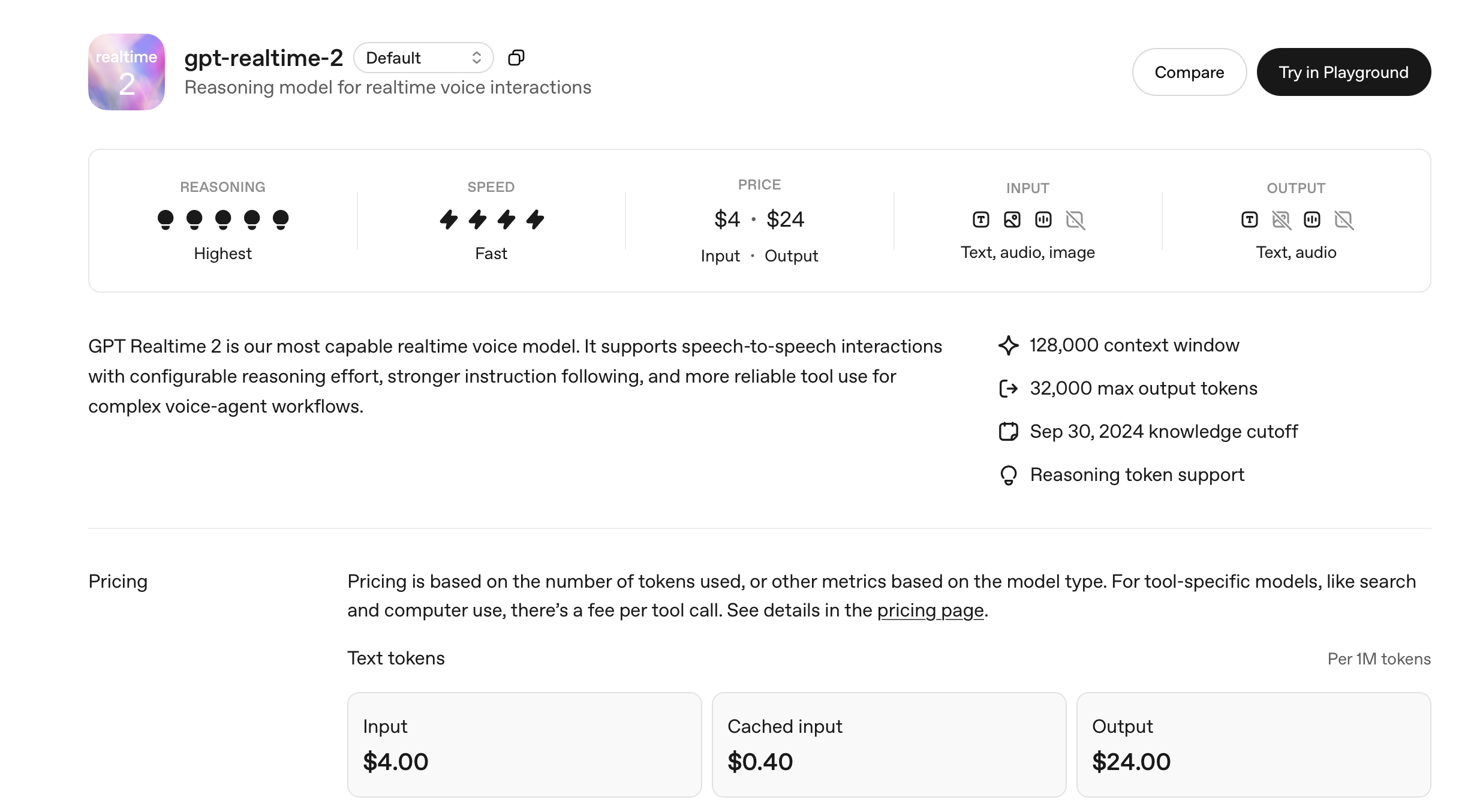

Additionally, GPT-Realtime-2's parallel tool calls can activate multiple components simultaneously to respond to complex voice commands. GPT-Realtime-Whisper can transcribe speech into documents in near real-time, enabling "real-time subtitles." GPT-Realtime-Translate's simultaneous translation function generates spoken translations while the other party is still speaking, with efficiency comparable to simultaneous interpretation.

Image Source: OpenAI
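The parallel tool calls mentioned above can be pictured with a small sketch. This is not OpenAI's API or implementation: the tool names (`fold_seat`, `set_seat_mode`), the call format, and the `dispatch` helper are all hypothetical, chosen only to show how one spoken command might fan out into several tool invocations that run concurrently rather than one after another.

```python
import asyncio
from typing import Dict, List

# Hypothetical in-car tools; names and behavior are illustrative assumptions.
async def fold_seat(position: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for actuator latency
    return f"{position} seat folded"

async def set_seat_mode(position: str, mode: str) -> str:
    await asyncio.sleep(0.01)  # stand-in for actuator latency
    return f"{position} seat set to {mode}"

TOOLS = {"fold_seat": fold_seat, "set_seat_mode": set_seat_mode}

async def run_tool_calls(calls: List[Dict]) -> List[str]:
    """Start every requested tool at once; results keep the request order."""
    tasks = [TOOLS[call["name"]](**call["args"]) for call in calls]
    return await asyncio.gather(*tasks)

def dispatch(calls: List[Dict]) -> List[str]:
    """Synchronous entry point for the sketch."""
    return asyncio.run(run_tool_calls(calls))

# One voice command, assumed to have been parsed into two tool calls.
calls = [
    {"name": "fold_seat", "args": {"position": "front passenger"}},
    {"name": "set_seat_mode",
     "args": {"position": "rear right", "mode": "zero-gravity"}},
]
results = dispatch(calls)
```

Because both coroutines are awaited together, the total wait is roughly that of the slowest tool rather than the sum of all of them, which is the practical appeal of parallel calls for long voice commands.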

It's no exaggeration to say that GPT-Realtime-2 is likely to revolutionize AI interaction, with voice becoming the primary mode for daily AI interactions (excluding work productivity AI).

Typing vs. Speaking: A Generational Divide?

Focusing on voice interaction has become a consensus in the AI field in recent years:

On May 7, Qianwen introduced AI voice input on its PC platform, creating AI use cases for work scenarios with robust semantic parsing capabilities. Prior to this, AI platforms like Doubao, Claude, ChatGPT, and Gemini had already supported desktop voice modes, enabling users to interact with AI using their voices, including for programming. On April 27, Insta360 collaborated with ByteDance's AI programming platform TRAE to launch a lapel microphone suitable for Vibe-Coding. On April 23, Tuya unveiled the PVAD self-training model and TTS enhancement engine at its global developer conference, proposing the concept of LUI (Language User Interface, analogous to the graphical user interface GUI).

Even today, Elon Musk is promoting xAI's Grok Voice Think Fast 1.0 voice assistant on X.

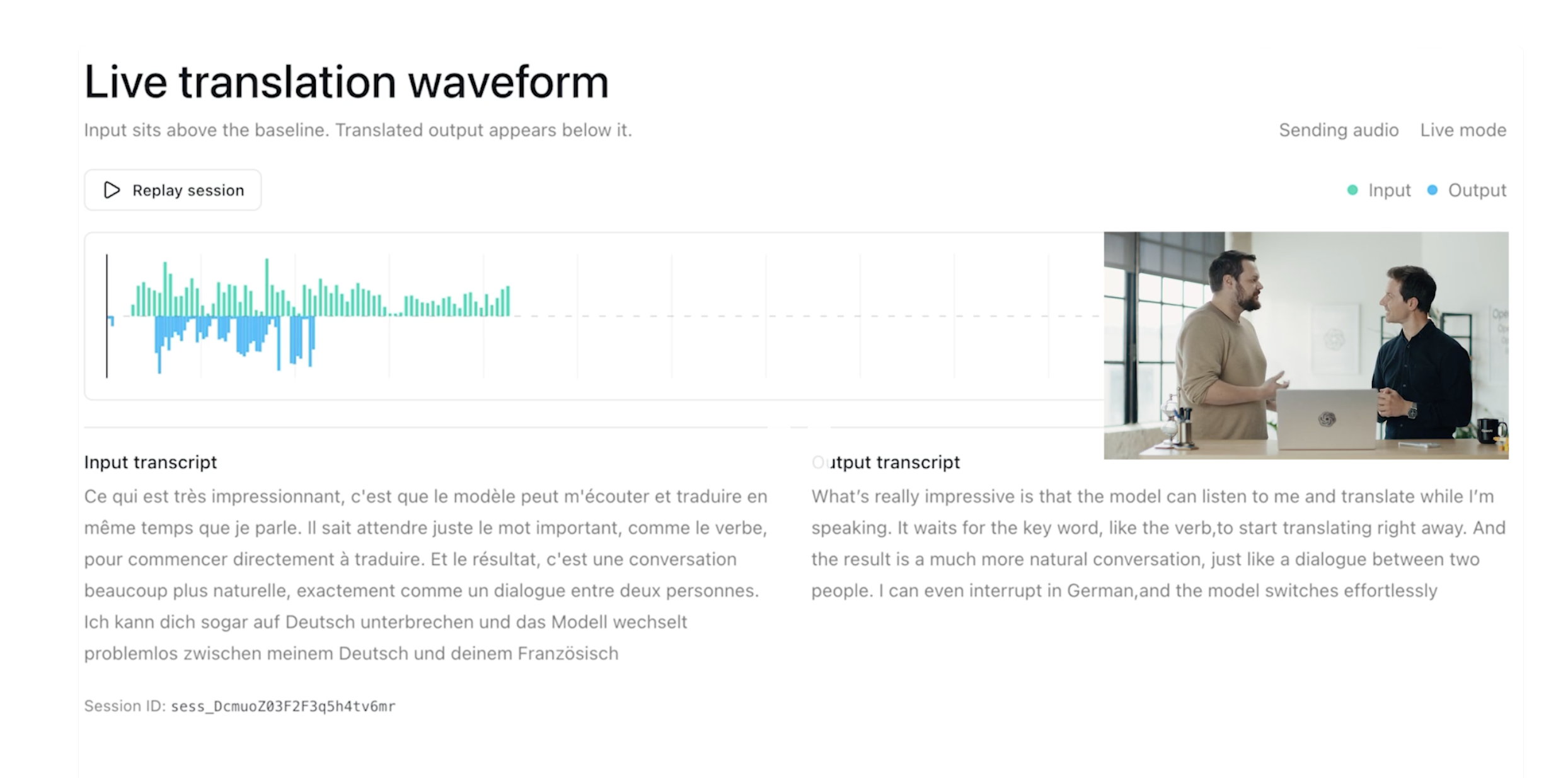

Image Source: X

So, why has the AI battlefield shifted to voice interaction interfaces in 2026? OpenAI CEO Sam Altman shared a viewpoint:

"It seems that young people prefer to interact with AI through voice, while older and middle-aged individuals prefer typing. I wonder if this will change."

In Leitech's view, this phenomenon reflects not just habits but also differences in thinking patterns between generations. For those born in the 2000s and 2010s (and the 2020s, the era of voice interaction), who grew up in an always-online, touch-screen environment, the keyboard is primarily associated with "work" and is rarely used except for gaming.

Image Source: X

Over the past 20 years, the efficient and precise keyboard-mouse input method has established the PC as a productivity tool but has also confined us to working only in front of the computer. The emergence of LUI has changed this stereotype: whether fetching a glass of water, going for a walk, or lounging in a chair, AI can keep up with our thoughts through "fragmented words," ensuring inspiration is always accessible.

Of course, voice input carries a low density of useful information, a problem that only AI can address. Take Qianwen's newly launched AI voice input on its PC platform as an example. Leitech gave it a try: beyond basic dictation, it automatically filters out meaningless interjections and filler words.

For instance, when inputting the request shown in the image, I added numerous "er" and "you know" at every pause, but Qianwen filtered them out directly.

Image Source: Leitech
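To make the idea of filler filtering concrete, here is a deliberately naive sketch. It is not how Qianwen works (the article does not describe its method, and a production system would presumably rely on a language model rather than a fixed word list); the `FILLERS` list and the `strip_fillers` function are assumptions made purely for illustration.

```python
import re

# Illustrative filler list; an assumption, not any product's actual vocabulary.
FILLERS = ["you know", "er", "um", "uh"]

# Match a filler as a whole word, plus the commas that usually surround it.
_FILLER_RE = re.compile(
    r"(?:,\s*)?\b(?:" + "|".join(re.escape(f) for f in FILLERS) + r")\b,?",
    flags=re.IGNORECASE,
)

def strip_fillers(transcript: str) -> str:
    """Remove filler words, tidy spacing, and re-capitalize the first letter."""
    cleaned = _FILLER_RE.sub("", transcript)
    cleaned = re.sub(r"\s+", " ", cleaned)            # collapse repeated spaces
    cleaned = re.sub(r"\s+([,.!?])", r"\1", cleaned)  # no space before punctuation
    cleaned = cleaned.strip()
    if cleaned:
        cleaned = cleaned[0].upper() + cleaned[1:]
    return cleaned

print(strip_fillers(
    "Er, please summarize, you know, the meeting notes um for Friday."
))
```

A word list like this breaks down quickly ("you know" is sometimes meaningful), which is exactly why the semantic filtering described above needs a model that understands context.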

Admittedly, using voice input in shared spaces like offices may disturb colleagues. However, advances in AI voice capabilities have made voice input practical for work. If, like many young people, your "mind is faster than your mouth," or you simply prefer speaking to typing, Qianwen's voice input is genuinely useful.

From this perspective, embracing voice interaction is essentially a contest among AI giants for young users. Whoever secures a firm lead in voice interaction will hold the "interaction sovereignty" over these young people's fragmented time.

This approach of catering to the habits of the next generation to build user loyalty is not new. The makers of devices like the MacBook Neo, Chromebook, and iPad have invested heavily in the overseas education market, following the same logic.

However, in Leitech's view, besides proactively positioning for the next generation, there's another driving force behind AI giants' focus on LUI: LUI and AI share the same ultimate goal of being "always on standby and readily available."

Whether it's text-based interfaces (TUI, command line) or graphical interfaces (GUI), after years of development, the scenarios these visual interactions can cover have reached their limits. However, in scenarios where "hands and feet are occupied," such as driving, exercising, cooking, and showering, the value of voice interaction has not been fully explored.

Take driving as an example: to fill the interaction gap left by the removal of physical buttons, domestic new energy vehicle brands have focused on LUI voice interfaces. Issuing ultra-long, complex commands to the infotainment system has become a staple of smart cockpit demos.

In response, many automakers have begun partnering with leading AI companies, using third-party large voice models to improve the smart cockpit experience. For example, at last year's Guangzhou Auto Show, many automakers told Leitech that their infotainment systems had "incorporated the capabilities of Doubao."

Image Source: Leitech

In the turbulent AI market of 2026, whichever AI company perfects AI voice first and leads the industry from GUI to LUI will be the first to claim a slice of the "new cake."

Voice Interaction: The Gateway to AI Hardware

Even setting aside the long-term proposition of LUI, from the perspective of users and smart hardware, voice interaction is a shortcut to accelerate the transformation of IoT devices into AIoT devices.

In the past, making smart hardware "smarter" required stacking screens and computing chips. AI voice, however, has minimal hardware requirements on the device side. All it takes is a microphone for sound pickup, a computing module for processing audio data, a computing platform for running on-device models (optional for some AI hardware), and basic network connectivity. With these in place, any formerly unremarkable IoT product can become an AIoT device.

Image Source: Leitech

The DingTalk A1 recording card, which has significantly contributed to Leitech's coverage of overseas exhibitions and executive group interviews, is a prime example. Leitech has tried many smart recording devices and even purchased smart voice recorders running on-device models. However, limited by model capabilities, the effects of such "smart voice recorders" were usually unsatisfactory.

The DingTalk A1, however, does not have this issue: with a complete large model installed on the phone, it can output translation results almost synchronously. After offloading transcription and translation tasks to the phone, the on-device small model in the A1 can allocate more resources to voice pickup, noise reduction, and other aspects, optimizing recording effects from the source.

Besides recording, transcription, and translation, the DingTalk A1 recording card leverages AI agents to convert recording content directly into meeting minutes and to-do items in standard formats, even performing secondary in-depth understanding of the content.

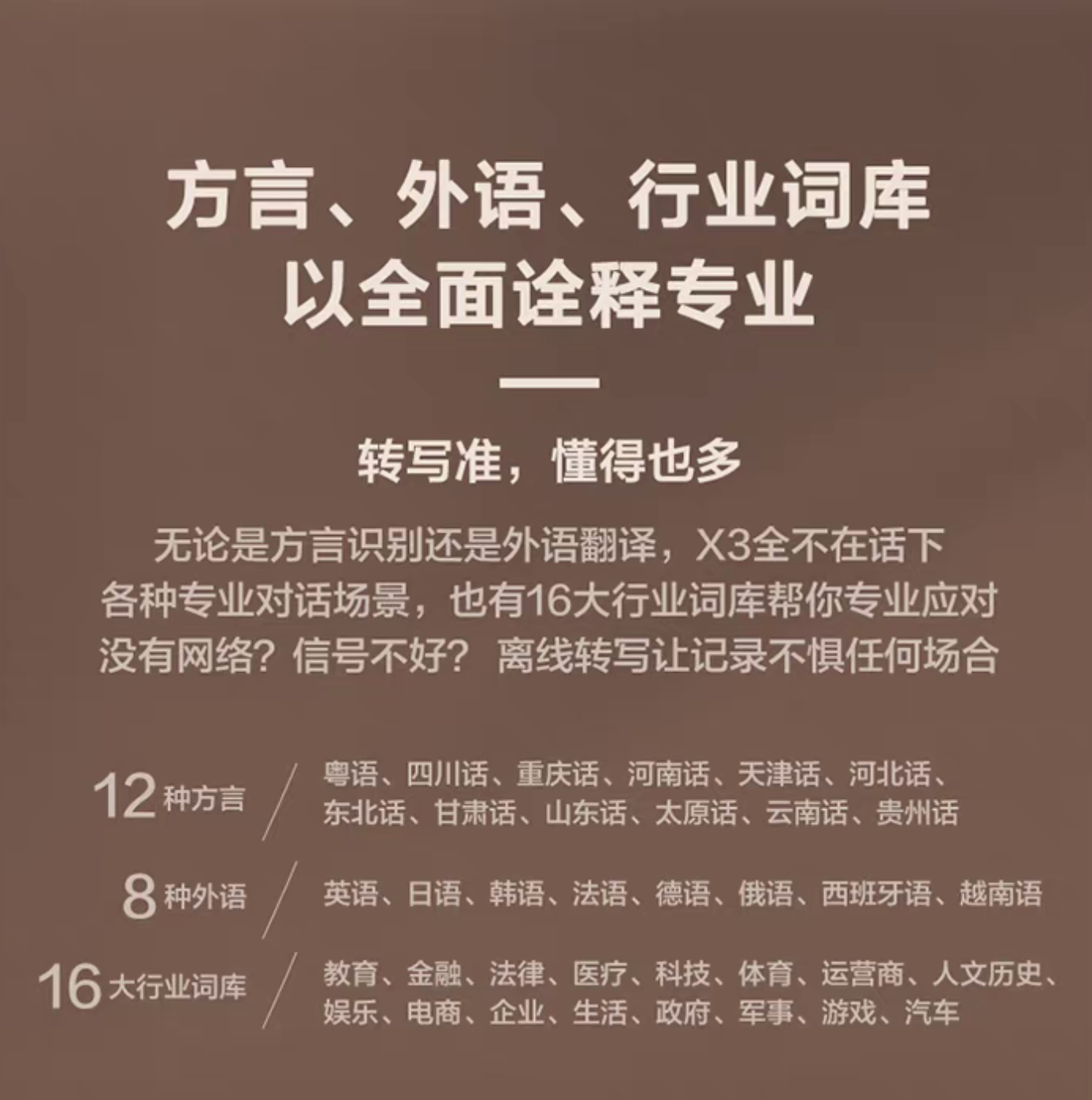

From the perspective of product diversity, besides "empowering" traditional IoT devices, the emergence of AI voice interaction has spawned many new AI concept products. For example, iFLYTEK has built a range of products based on its years of technical accumulation in speech recognition, including AI translators, AI e-ink office tablets, conference earphones, AI glasses, and even AI keyboards and AI mice.

Despite the wide variety, Leitech believes these AI products share a cross-category "mainline"—using voice AI to enrich hardware interaction methods and optimize the product experience.

Image Source: iFLYTEK

Take iFLYTEK's AI office tablet as an example. Limited by pixel response time, e-ink devices are inherently unsuitable for keyboard input. Anyone who has connected a Kindle to Wi-Fi and typed in a password knows how slow the process is. The lack of a practical input method has long relegated e-ink devices to mere "display devices" without true office capabilities.

However, the emergence of LUI has changed this. iFLYTEK's Spark large model's foreign language and dialect recognition capabilities have unlocked the input capabilities of e-ink screens, truly upgrading them from "displays" to "office tablets." Combined with multimodal input capabilities like image understanding, iFLYTEK has transformed e-ink devices into "all-in-one office devices."

From the perspectives of products, users, and AI suppliers, the importance of voice interaction for AI is undeniable.

China's AI Advantage: Mastering Chinese Listening and Speaking

Focusing on AI voice interaction also holds significance for domestic AI giants—Chinese AI companies inherently understand Chinese better.

Chinese is the most widely spoken language globally in terms of native speakers; considering total speakers (native + second language), it ranks second worldwide.

Some may believe that "knowing Chinese" is only meaningful for domestic users and not relevant internationally, but this is not the case. Leitech recently attended Dreame's product launch event in San Francisco, USA, and earlier reported on overseas exhibitions in non-English-speaking cities like Barcelona and Berlin.

From Leitech's observations, a significant number of first-generation immigrants, even when living abroad, only speak Chinese (primarily Cantonese). Many elderly Chinese, despite living for years in immigrant cities like Vancouver, San Francisco, Barcelona, and Melbourne, still struggle to meet the basic language requirements for naturalization.

It is evident that there is also a pressing need for AI among certain groups. For elderly individuals who are unable to type, voice interaction becomes their sole method of operating electronic devices. Nevertheless, whether it is Google's Gemini, OpenAI's ChatGPT, or xAI's Grok, their support for Chinese voice interaction remains notably constrained. Take ChatGPT as an illustration: during the Lunar New Year, when Leitech was assessing the support for Chinese and various dialects among mainstream AI assistants, it was found that ChatGPT struggled to consistently and reliably output Cantonese. Often, it would automatically revert to English in the middle of a conversation.

For ChatGPT, Gemini, and Grok, effectively "speaking Chinese" presents a formidable challenge. However, for domestic AI services such as Doubao, Qianwen, Kimi, and iFLYTEK, this very capability constitutes a built-in advantage.

Image source: Doubao

Compared to English, Chinese is characterized by extensive inversion, omission, and implicit meanings that can only be grasped intuitively. This complexity is further compounded by China's vast array of dialects, including Cantonese, Sichuanese, and Northeastern dialects, each embodying its own distinct cultural logic. While large models developed by overseas tech giants can translate Chinese, they frequently encounter difficulties when processing accented spoken language or slang within specific contexts.

This situation has created a natural competitive edge for leading domestic brands like Doubao, Qianwen, and iFLYTEK. For instance, as previously mentioned, Qianwen's voice input capability on the PC end can not only organize voice materials but also accurately pinpoint key points in users' speech, thereby eliminating the need for users to "beat around the bush." A few years ago, SenseTime even introduced the first AI service specifically designed for Cantonese users, named "Rinne."

It is undeniable that achieving seamless AI interaction represents the ultimate objective for all AI services. However, before such proactive AI interaction becomes ubiquitous, voice remains the most efficient and direct means of communication. By concentrating on Chinese and its various dialects, the domestic AI industry has effectively tapped into the user base of "overseas Chinese," a demographic that has long been overlooked by overseas AI giants, thereby discovering a swift path to becoming a leading global brand.

By 2026, the dynamics of AI competition have undergone a reversal: previously, AI "required people to learn it," but now, AI is "eagerly learning from humans." With the widespread adoption of concepts like voice interaction and LUI (Language User Interface), the era of painstakingly typing into input boxes should genuinely come to a close.

Source: Leitech

All images featured in this article are sourced from 123RF's licensed image library.