In 2026, Selling Data Will Be More Profitable Than Selling Robots

05/12 2026

Behind the 99% Data Gap in Embodied AI, Who is 'Selling the Shovels'?

Humanoid robots can dance at the Spring Festival Gala and run marathons, yet they struggle to open an unfamiliar bottle cap.

This is because the data they learn from lacks real-world exposure. In 2026, as capital floods into the embodied AI sector, a harsh truth is emerging: high-quality embodied data has become the biggest bottleneck restricting the evolution of embodied AI. Faced with a staggering 99% data gap, players in the field are scrambling to build data infrastructure. This year is thus considered the 'first year of data scalability' for embodied AI.

'What we mean by "the first year" is not that the problems are solved, but that the industry is transitioning from "building demos" to "creating scalable data systems" for the first time,' a representative from Lightron AI told Shuzhi Qianxian. The representative pointed to three key trends: first, million-hour-scale high-quality data has become a prerequisite for leading teams; second, data investment has shifted from a marginal to a core budget item; third, more real-world industrial scenarios are paying for the data infrastructure needed for embodied AI training, evaluation, and deployment.

In the AI circle, there's an iron law: the first to profit are always those 'selling the shovels.' In 2026, a thriving data business around embodied AI is quietly taking shape.

01

Exploding Demand for Embodied Data

In early 2026, demand for data in the embodied AI field is rapidly rising.

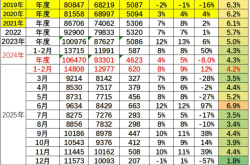

'I'd say demand is a hundred times that of last year,' revealed Yang Haibo, co-founder and president of Lightron AI, a unicorn in the embodied data space. Lightron's data business has grown significantly, securing 550 million yuan in orders in Q1 2026—exceeding its total orders for 2025 and setting an industry record. Over 80% of the simulation assets and synthetic data used by major international embodied AI teams come from the company.

Behind this surge, data is being elevated to an unprecedented strategic position. 'This year, embodied data has shifted from a secondary to a core budget item, becoming one of the fastest-growing segments in client budgets,' a Lightron representative told Shuzhi Qianxian. The industry now clearly recognizes that what determines model capability ceilings and deployment speed is not just algorithms and hardware but also a sustainable, iterative, and evaluable data supply system.

Yao Maoqing, chairman and CEO of Mifeng Technology (a Zhiyuan Robotics subsidiary), sees the same surge. In April, he revealed that data demand is concentrated among cutting-edge large model teams, domestic and foreign embodied AI giants, and startups. 'Buyers are generally in a state of "we'll buy as much as you have, and we need it as soon as you have it,"' he said.

In his view, data will become a fundamental production factor like computing power, with investment attributes and return cycles. 'Those selling shovels will profit first,' Yao believes. At this stage, the industry needs massive data for R&D and validation to drive applications. Following the logic of infrastructure-first development, data's return cycle will be faster than that of physical robots or industry-specific solutions.

The industry generally attributes this demand explosion to three main drivers:

First, 'brain' evolution demands more data 'nourishment.' The core bottleneck restricting robot scalability has shifted from hardware and low-level control to the 'brain'—the lack of advanced embodied AI models. Embodied VLA and world models are rapidly advancing into more complex task spaces, requiring massive data feeding.

Second, industrial deployment accelerates, shifting data needs from lab-scale to deployment-scale. As robots enter factories, logistics, commerce, and other real-world scenarios, their data requirements soar. 'A robot might need thousands of hours of training data for a single task, and even more for complex tasks,' Yang said.

Third, the value of non-physical data is validated, and collection efficiency jumps. Previously, embodied data collection relied on manual lab operations, yielding only dozens of hours per day—far insufficient for model training and industrial deployment. Now, technologies like VR teleoperation, exoskeletons, UMI, and Ego are maturing, enabling data collection to scale from small 'workshops' to larger, more efficient production.

However, this explosive demand contrasts sharply with a severe 'data desert.' The consensus is that training embodied models with generalizable capabilities requires at least ten million hours of data. Yet by early 2026, the global total of high-quality real-world physical interaction data was only about 500,000 hours—less than 1/20,000th of the data used to train large language models. CSDN data also shows that embodied AI needs hundreds of petabytes of physical interaction data, with a current gap exceeding 99%.

Amid opportunities and bottlenecks, a battle for embodied data has begun.

02

The Data Pyramid: Player Positioning

Faced with a 99% data gap, the supply side has moved beyond scattered experiments to rapidly build data infrastructure.

'One million hours' has become the standard entry threshold, with Lingchu AI, Luming Robotics, and Xinghaitu racing to collect million-hour-scale effective data. JD plans to collect 1 million hours of robot physical data and 10 million hours of human real-world video data within two years. Mifeng Technology announced it will achieve ten-million-hour-scale data production capacity in 2026.

Behind this industry-wide expansion lies a consensus on the 'data pyramid': at the top is real-robot data, offering the highest precision and real-world alignment but at high cost and scarce supply; in the middle is simulated synthetic data, low-cost and easy to scale but facing 'Sim-to-Real' transfer challenges; at the bottom is internet video and human behavior data, highly generalizable but low-precision, requiring extensive cleaning and action alignment. All three are indispensable, and players are positioning themselves across the pyramid.

The supply side first focused on real-robot data at the pyramid's apex. Mainstream teleoperation data, considered 'gold-standard data,' is collected by professionals remotely controlling real robots via master-slave systems or VR devices to perform precise actions. By early April 2026, 64 embodied AI data collection centers, innovation hubs, and training grounds were planned or under construction nationwide, covering at least 27 cities.

Leading companies have become the main builders: Zhiyuan has set up data collection centers in Shanghai, Chengdu, and elsewhere; Luming Robotics has built three standardized collection sites. After opening a data factory in Tianjin last April, Paxini announced plans to build four more in Suqian, Wuhan, and Ganzhou this year. JD plans to engage 600,000 people in crowdsourced data collection. Local governments like Shanghai's Zhangjiang have built the nation's first heterogeneous humanoid robot training ground, aiming to collect 5 million real-robot data entries this year.

However, constrained by collection costs and efficiency, real-robot data is hard to scale quickly. The industry is shifting to a hybrid strategy of 'strengthening mid-tier simulated data + solidifying bottom-tier human data' to reduce reliance on expensive real-robot data.

Simulated synthetic data is currently the mainstream route for scalable data production. Lightron AI predicts that simulated data will handle scalable pre-training, evaluation, and reinforcement learning tasks, with human video data providing behavioral priors and real-robot data used for scenario alignment and 1% final tuning. To this end, Lightron developed a proprietary physics simulation engine to replicate real-world object motion and deformation, building a technical system covering simulation world generation, scalable data production, and model capability evaluation around a 'world-behavior-evaluation' three-tier architecture.

Beyond real-robot and simulated data, non-physical data represented by UMI and Ego-centric data (first-person human video data, hereafter 'Ego data') is emerging as a disruptive force. Collected via wearable devices worn by operators, this data is efficient, low-cost, and highly generalizable. Yao Maoqing revealed that real-robot data costs about 500-1,000 yuan/hour domestically, while non-physical data collection is roughly two to three times more efficient. Though it was once priced higher due to limited scalability, it is expected to converge to one-third to one-half the cost of real-robot data.
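The cost figures Yao cites can be turned into a rough price range. The sketch below is only a back-of-the-envelope reading: it assumes the low end of the real-robot range pairs with the one-third ratio and the high end with the one-half ratio, which gives the widest plausible band. The variable names are illustrative.

```python
# Real-robot data cost quoted in the article, in yuan per hour.
real_robot_low = 500
real_robot_high = 1000

# Assumption: non-physical data converges to between one-third and
# one-half of the real-robot cost; pair the extremes for the widest band.
non_physical_low = real_robot_low / 3
non_physical_high = real_robot_high / 2

print(f"Expected non-physical cost: {non_physical_low:.0f} to "
      f"{non_physical_high:.0f} yuan/hour")
```

Under these assumptions the expected non-physical cost falls somewhere between roughly 170 and 500 yuan per hour.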

The UMI approach involves human-demonstrated operations using handheld grippers, with cameras recording the entire process. As long as gripper appearance and camera parameters match, the data can be reused across different robotic arms, supporting cross-hardware data sharing. Ego data is collected via head-mounted or wrist-worn devices capturing first-person views and actions. Both approaches lend themselves to crowdsourced collection.

Luming Robotics released the FastUMI non-physical data collection 'full suite,' planning to achieve over 1 million hours of UMI data production capacity in 2026. JD introduced its self-developed ultra-HD collection terminal, JoyEgoCam, for warehousing, retail, and housekeeping scenarios. Mifeng Technology launched the MEgo series of non-physical data collection devices, with 60-70% of its planned ten-million-hour-scale data capacity in 2026 coming from non-physical collection.

The embodied data market is booming, but million-hour scales are far from the finish line. The industry's real bottleneck is not a lack of single data sources but the absence of unified, tradable, and sustainable data infrastructure. JD launched a full-stack embodied AI data infrastructure and trading platform. Leju is building a data ecosystem with China Mobile, Huawei, and Alibaba Cloud. Mifeng Technology positions itself as a one-stop physical AI data service platform. Lightron AI continues to refine its embodied data engine, building simulation ecosystems and evaluation loops, planning to collaborate with over 1,000 scenario partners to produce 10 million hours of embodied data this year.

03

What Kind of Data Can 'Feed' Embodied AI?

As the battle for embodied AI data intensifies, a key question arises: what do data buyers care about most? What constitutes 'good data' that the industry urgently needs?

A Lightron AI representative told Shuzhi Qianxian that today's clients care less about 'data volume' or 'unit price' and more about whether the data can translate into actual model improvements. They're not just buying 'data quantity' but 'systemic capabilities to support training, evaluation, and deployment loops.'

Cao Yu, head of embodied data solutions at Kupasi, echoed this, saying feedback from leading companies shows that algorithms now need more than just more data—they need methods to directly feed data into models for immediate use. The focus is on working backward from commercial application scenarios to determine how to collect, annotate, train, and evaluate data, with clear performance metrics. The industry is pursuing an 'AI-ready' state.

A JD embodied AI representative noted: 'Clients first ask about data types—is it teleoperated or head-mounted? Then they care about whether it's annotated, what dimensions are labeled (e.g., hand keypoints, positions, text descriptions), and the precision (millimeter or centimeter-level).' These factors heavily influence whether embodied AI companies choose to use the data.

Industry observations suggest high-quality embodied data typically meets four criteria:

First, physical authenticity. This is non-negotiable. Unlike internet image-text data, embodied data must not only look real but also accurately reproduce key physical information like contact, force, and state changes. Without physical authenticity, trained robots will frequently miss grasps or lose balance in the real world.

Second, scalability. Data must support pre-training and continuous iteration, not just a few demos. Xie Chen, founder and CEO of Lightron AI, emphasizes that good data must be both scalable and capable of lifelong learning.

Third, high diversity. Models need to see the world in its entirety, requiring data to cover diverse scenarios, tasks, execution paths, and operational habits—especially including imperfect trajectories. Yang Haibo of Lightron stressed that failed or flawed data is highly valuable: 'We've had clients pay 1.5x for such 'less successful' case data.' Yao Maoqing of Mifeng Technology said their collections deliberately capture failures and recovery processes.

The logic is that during pre-training, data 'diversity' matters more than 'correctness.' Like infants learning to walk through trial and error, embodied AI must autonomously learn physical laws and causal logic from mixed data. The real world has no permanently standard actions; data containing 'failure-correction-success' processes is often more valuable for mirroring real-world learning paths.

Thus, Zhu Zheng, co-founder and chief scientist at Jijia, noted: 'Some industry efforts, like defining only final goals without strictly dictating collection processes and letting collectors improvise based on their understanding, are a good start.'

Huang Yongtao, chief data scientist at Ant Lingbo Technology, added that while factory assembly line data is voluminous and highly standardized, its homogeneity offers diminishing marginal returns for model improvement. High-quality embodied data prioritizes diversity over mere uniformity.

Fourth, end-to-end usability. Zhu Zheng pointed out that current embodied data annotations are overly simplistic. Traditional multimodal image-text models annotate single images with thousands of words, fully reconstructing scene context, details, and diverse perspectives. In contrast, most embodied video data today has only basic action labels, lacking environmental semantics and task process descriptions—far from meeting high-quality model training needs.

Beyond these four dimensions, the industry proposes a deeper standard: behavioral alignment. Yao Guocai, head of embodied Infra & Data at the Beijing Academy of Artificial Intelligence (BAAI), argues that embodied data's mission is to better represent human behavior and align models with it. Valuable data should capture authentic human behavioral patterns with high fidelity and diversity, including unconscious hidden behaviors such as checking whether a cup is clean before picking it up. Such details are often overlooked in current models and data systems.

Take the highly regarded Ego data: one core value lies in its 'in the wild' (natural/real-world) collection, capturing diverse daily behaviors. However, many data collectors still use pre-designed tasks, discarding the most valuable aspect of Ego data—natural behavioral capture. Additionally, to better represent behavioral intent, data modalities closely tied to human intent, such as electromyography (EMG) and electroencephalography (EEG), are becoming important exploration directions for high-quality embodied data.

From a demand structure perspective, Lightron AI told Shuzhi Qianxian that the most urgent data needs currently focus on manufacturing, warehousing, and logistics scenarios, especially flexible assembly, handling, and repetitive, dangerous, or monotonous tasks. These scenarios offer the clearest real-world value, driving stronger client willingness to pay. They also demand higher physical interaction, stability, and generalization capabilities—exactly where high-quality embodied data is most scarce.

04

What Other Bottlenecks Remain?

Despite the rising heat around embodied data, many bottlenecks persist in scaling data collection.

First, the industry lacks consensus. Yao Guocai of BAAI pointed out that the biggest issue is 'rushing too fast'—especially with the rise of Ego data in 2026, companies feel pressured to claim million-hour data goals. Yet fundamental questions remain unanswered, such as how much data AGI requires, which modalities are needed, and how to evaluate quality. He believes embodied data still faces many unresolved data science challenges, like precisely representing human behavior for model alignment—far from ready for data engineering scale-up.

Cost and efficiency are the most intuitive obstacles. Zhu Zheng, co-founder of Jijia, revealed: 'It costs about 200 yuan to collect one hour of data. At this cost, it is difficult to collect tens of billions of hours.' A report from CCID Think Tank also shows that producing 10,000 hours of real-world data from a single device can cost over a million yuan, with one person able to collect only 300-500 pieces of data per day. While new collection models like UMI and Ego have reduced costs and improved efficiency, they have also brought new challenges. Zhang Minying, a senior algorithm expert at Alibaba Cloud, suggests drawing inspiration from Tesla's Shadow mode, where data segments with discrepancies between predicted and actual behaviors are considered high-value long-tail data worthy of priority collection.
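The discrepancy-based selection Zhang describes can be illustrated with a minimal sketch. This is not Tesla's implementation or any vendor's pipeline. The `Segment` class, the action-vector encoding, the Euclidean distance metric, and the threshold are all hypothetical choices made for illustration.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Segment:
    """One recorded clip (hypothetical structure)."""
    segment_id: str
    predicted: Sequence[float]  # action the model would have taken
    actual: Sequence[float]     # action the human operator actually took


def discrepancy(seg: Segment) -> float:
    """Euclidean distance between predicted and actual action vectors."""
    return math.sqrt(sum((p - a) ** 2 for p, a in zip(seg.predicted, seg.actual)))


def select_high_value(segments: List[Segment], threshold: float) -> List[Segment]:
    """Keep segments whose discrepancy exceeds the threshold, largest first."""
    flagged = [s for s in segments if discrepancy(s) > threshold]
    return sorted(flagged, key=discrepancy, reverse=True)


# Illustrative data only: three clips with made-up action vectors.
segments = [
    Segment("grasp-cup", [0.10, 0.20, 0.30], [0.10, 0.21, 0.30]),
    Segment("open-cap", [0.50, 0.00, 0.10], [0.10, 0.40, 0.10]),
    Segment("place-box", [0.00, 0.00, 0.00], [0.00, 0.30, 0.40]),
]
priority = select_high_value(segments, threshold=0.2)
print([s.segment_id for s in priority])
```

Here the clip where the model nearly matches the operator is dropped, while the two clips with large prediction errors are queued for priority collection. A production system would likely use a task-specific error metric rather than a raw distance.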

Data utilization is also an issue. Li Yonglu, an associate professor at Shanghai Jiao Tong University, revealed that after screening approximately 120,000 hours of Ego-centric human behavior data, less than 5,000 hours were truly usable for VLA pre-training. Recently, an institution publicly released 110,000 hours of factory video data, with an optimistic estimate of only about 3% being usable. 'We need new architectures and new paradigms,' Zhu Zheng admitted. His company uses hundreds of thousands of hours of data to train models, spending tens of millions on GPUs annually. If the data scale were to expand 100 or even 1,000 times, startups simply couldn't afford it. 'That's why I strongly agree that while scaling data, we should also strive to improve model architectures and enhance operational efficiency.'
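The utilization figures above imply a steep effective cost per usable hour. The sketch below works through the arithmetic. Note the assumptions: "less than 5,000 hours" is treated as an upper bound of exactly 5,000, and Zhu Zheng's 200 yuan/hour collection cost is applied to the Ego data purely for illustration, since the article does not link the two figures.

```python
def usable_fraction(usable_hours: float, screened_hours: float) -> float:
    """Share of screened data that survives quality filtering."""
    return usable_hours / screened_hours


def cost_per_usable_hour(cost_per_raw_hour: float, fraction: float) -> float:
    """Effective cost once unusable hours are paid for but discarded."""
    return cost_per_raw_hour / fraction


# Ego-centric data: at most 5,000 usable hours out of about 120,000 screened.
ego_yield = usable_fraction(5_000, 120_000)

# Factory video: optimistic 3% of 110,000 released hours.
factory_usable_hours = 110_000 * 3 / 100

# Assumption: 200 yuan per raw hour (a figure quoted elsewhere in the article).
effective_cost = cost_per_usable_hour(200, ego_yield)

print(f"Ego yield (upper bound): {ego_yield:.1%}")
print(f"Usable factory hours (optimistic): {factory_usable_hours:.0f}")
print(f"Effective cost per usable hour: {effective_cost:.0f} yuan")
```

Under these assumptions, a yield of about 4% turns a 200 yuan raw hour into roughly 4,800 yuan per usable hour, which shows why low utilization makes scaling collection so expensive.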

Difficulty in aligning cognition and demand is a hidden bottleneck in data collection. Che Zhengping, head of Beijing Humanoid Embodied Intelligence, pointed out that during fine operations, data collectors may rely on the naked eye or VR, while robots depend on hand-eye cameras. If perspective deviations are not promptly addressed, visual gaps may directly render the data 'unusable.'

Huang Yongtao, chief data scientist at Ant Lingbo Technology, further summarized three types of 'misalignment': misalignment between learning objects and data, where the quality ceiling of teleoperation actions is far lower than human capabilities; misalignment between task distribution and data, where collected actions are singular and environmentally constrained (e.g., mostly grasping and placing actions, while users demand chopping and washing dishes); and misalignment between robot bodies, where differences in degrees of freedom, sensor layouts, and zero-position errors across robots make data unification impossible.

The lack of a data standardization system is the most fundamental pain point in the industry. Currently, there are no unified standards for data collection formats, annotation specifications, or quality assessments. Robots from different manufacturers vary in configuration and sensor layouts, resulting in vastly different data formats. Yao Guocai from the Beijing Academy of Artificial Intelligence admitted: 'We spend a significant amount of time on data format conversion when training models. After conversion, we encounter many standard definition issues, such as differing coordinate system definitions, requiring further data processing.'
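The coordinate-system mismatch Yao mentions can be made concrete with a small sketch. It assumes one dataset records positions in a camera optical frame (x right, y down, z forward) and another expects a robot body frame (x forward, y left, z up), the two conventions described in ROS REP 103. The function and variable names are hypothetical, and real pipelines must also handle translation offsets, units, and timestamps.

```python
from typing import List, Sequence

# Rotation from optical frame (x right, y down, z forward)
# to body frame (x forward, y left, z up).
OPTICAL_TO_BODY: List[List[float]] = [
    [0.0, 0.0, 1.0],   # body x (forward) = optical z
    [-1.0, 0.0, 0.0],  # body y (left)    = -optical x
    [0.0, -1.0, 0.0],  # body z (up)      = -optical y
]


def rotate(matrix: Sequence[Sequence[float]], point: Sequence[float]) -> List[float]:
    """Apply a 3x3 rotation matrix to a 3D point."""
    return [sum(row[i] * point[i] for i in range(3)) for row in matrix]


# A point 1 m to the right, 2 m down, 3 m ahead of the camera.
point_optical = [1.0, 2.0, 3.0]
point_body = rotate(OPTICAL_TO_BODY, point_optical)
print(point_body)  # [3.0, -1.0, -2.0]
```

If two datasets silently use different conventions, the same physical point gets different numbers in each, so every trajectory must be converted before the data can be pooled for training.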

The absence of standards also makes it difficult to accurately measure data value. Fan Haoqiang, co-founder of Yuanli Lingji, stated: 'Currently, browsing available data on the market is overwhelming. There's everything, but it's hard to answer what I truly need or what's missing.' He suggests using benchmarks as a driving force to form a closed loop of 'evaluation → data → model,' similar to how ImageNet served as both a dataset and an evaluation standard, propelling the previous wave of the computer vision revolution.

Domestically, standardization efforts have accelerated: in September 2025, Shanghai released standards for humanoid robot datasets; in March 2026, the Ministry of Industry and Information Technology issued the first top-level design document covering the entire industrial chain. On the enterprise side, Lightron AI enhances cross-body data reuse efficiency in industrial scenarios through a closed-loop capability of 'simulation generation, evaluation verification, and minimal real-world alignment,' reducing development cycles from 3-6 months to approximately 2 weeks in some typical projects and significantly lowering real-robot trial-and-error costs. Additionally, Mifeng Technology launched the MEgo Engine, a one-stop data governance platform, while JD released the first embodied intelligence data infrastructure covering the full lifecycle of 'collection, storage, annotation, training, evaluation, simulation, and testing.'

Industry insiders believe the sector is still far from 'data sufficiency.' What is truly scarce is not quantity itself but high-quality, reusable, evaluable data that can enter closed loops. Whoever can first establish a closed loop from data to value will gain an early advantage in the next phase.

In 2026, standing at a critical inflection point for scalability, the story of embodied intelligence data has only just begun.