Technology Innovation | Domestic Native AGI Infra Hits RMB 700 Million Funding Record, Ushering in New Productivity Barriers for AI Infrastructure

05/13 2026

Preface:

In 2026, capital is shifting its focus to a more fundamental layer of assets: the ability to consolidate GPUs, domestic chips, intelligent computing centers, power, model services, and enterprise scenarios into stable, deliverable Token production capacity.

This is the core reason native AGI Infra has surged in popularity: what it sells is a full-system capability to turn computing power into intelligent output.

RMB 700 Million Funding Bet on the [AI Refinery] of the Token Economy

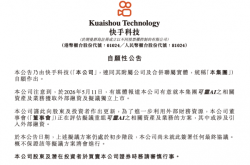

Recently, Infinitech announced it had secured over RMB 700 million in funding, a milestone hailed by multiple media outlets as the largest-ever financing round for a China-based AI-native infrastructure company.

Joint lead investors include Hangzhou Hi-Tech Financial Investment Group and Huiyuan Capital, with participation from Guoxing Capital, Chindata, GF Qianhe, Lihua Qingtong, China Insurance Investment, AEF NextGen, Tengrui Investment, Coretronic, CITIC Construction Capital, and Kuande Intelligent Learning Lab. Existing shareholders Legend Capital, Shanghai Guotou Futeng, and Yuanzhi Future also increased their investments.

The funds will go toward multi-heterogeneous computing technology, efficiency gains from software-hardware synergy, and building an enterprise-grade intelligent agent service platform.

Infinitech is often mistaken for a mere [computing power operator], a description that is only half right.

Traditional computing power services focus on the supply side, where enterprises purchase GPU servers, lease cloud resources, and build clusters. Key metrics include card count, rental costs, bandwidth, and data center conditions.

AI-native infrastructure goes a step further, addressing engineering efficiency once that computing power is actually running models.

Chips alone do not automatically translate into productivity. Model deployment, parallel inference, memory management, workload scheduling, KV Cache optimization, model routing, service stability, and tool-invocation accuracy all affect final Token costs and user experience.
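Of the factors listed above, KV Cache size is one that can be estimated with simple arithmetic, and it shows why memory management matters: the cache grows linearly with context length and batch size. The sketch below uses the standard estimate for a decoder-only transformer; the model shape is a hypothetical example, not any model served by Infinitech, and the formula ignores paging overhead and cache quantization.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int, bytes_per_elem: int = 2) -> int:
    """Estimate KV Cache size in bytes for a decoder-only transformer.

    Each layer stores one key and one value vector (hence the factor of 2)
    per KV head, per token, per sequence in the batch. bytes_per_elem=2
    corresponds to FP16/BF16 storage.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


# Hypothetical model shape and workload, chosen only for illustration.
size = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                      seq_len=8192, batch_size=16)
print(f"{size / 2**30:.1f} GiB")  # prints 16.0 GiB
```

Doubling either the context length or the number of concurrent requests doubles this figure, which is why cache management directly limits how many requests a card can serve at once.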

What enterprises actually care about is how many business requests can be processed reliably each day, the latency of each invocation, and whether unit Token costs can keep falling.
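The link between engineering efficiency and unit Token cost is simple arithmetic: for a fixed hourly price, cost per Token falls in direct proportion to sustained throughput. A minimal sketch, assuming a single accelerator billed by the hour; the price and throughput numbers are placeholders, not market data.

```python
def cost_per_million_tokens(hourly_cost: float, tokens_per_second: float) -> float:
    """Serving cost per one million generated tokens on a single accelerator.

    hourly_cost: price of running the accelerator for one hour (any currency).
    tokens_per_second: sustained output throughput while it is serving.
    """
    if tokens_per_second <= 0:
        raise ValueError("throughput must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost / tokens_per_hour * 1_000_000


# Placeholder numbers: same hourly price, throughput doubled by optimization.
baseline = cost_per_million_tokens(hourly_cost=36.0, tokens_per_second=1000)
optimized = cost_per_million_tokens(hourly_cost=36.0, tokens_per_second=2000)
print(f"{baseline:.2f} -> {optimized:.2f} per million tokens")
```

Because the hardware bill is fixed per hour, every gain in scheduling, batching, or memory efficiency that raises tokens per second shows up one-for-one in the unit price.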

Tokens are becoming a high-frequency unit of measurement in the AI industry. According to China's National Data Administration, Token volume surged to 100 trillion by the end of 2025 and exceeded 140 trillion by March 2026, an increase of over a thousandfold in two years.
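As a quick check on what "over a thousandfold in two years" implies, the sketch below computes the constant annual growth factor that compounds to that total. It treats the thousandfold figure as exact and the two-year window as two equal periods, both simplifying assumptions.

```python
def implied_periodic_growth(total_multiple: float, periods: float) -> float:
    """Constant per-period growth factor that compounds to total_multiple."""
    if total_multiple <= 0 or periods <= 0:
        raise ValueError("inputs must be positive")
    return total_multiple ** (1 / periods)


annual = implied_periodic_growth(1000, 2)  # thousandfold over two years
print(f"~{annual:.1f}x per year")
```

In other words, a thousandfold rise over two years corresponds to roughly a 31-32x increase every year.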

AI commercialization is moving from trials to high-frequency use: intelligent agents are now integrated into sales, R&D, manufacturing, financial risk control, code generation, and data analysis workflows, driving exponential growth in invocation frequency.

Once an enterprise’s internal processes are reconstructed by AI, Token consumption shifts from sporadic needs to a continuous foundational resource akin to electricity, bandwidth, or cloud storage.

This is where AGI Infra companies like Infinitech derive their value, acting as [AI refineries] within the industrial chain.

They connect diversified upstream chip suppliers, intelligent computing centers, and models with downstream enterprise applications, agent platforms, and industry clients. Through scheduling, optimization, and servitization, they turn raw computing power into measurable, callable, and deliverable intelligent resources, organizing fragmented capabilities into usable production systems.

AI Productivity Formula: Defining Token Value

Over the next few years, the AI industry will develop clearer stratification.

The top layer consists of applications and agents directly integrated into business processes; the middle layer comprises model providers offering reasoning, planning, generation, and multimodal capabilities; the bottom layer includes chips, servers, data centers, and energy; running through all layers is AI Infra.

It organizes bottom-layer resources, servitizes model capabilities, and delivers these services to applications.

This positions AI Infra as a critical [narrow waist] of the AI era.

It may be neither closest to users nor closest to hardware manufacturing, but it connects both ends and determines the efficiency, cost, and stability of intelligent production. Following the funding announcement, Infinitech unveiled a new framework for AI productivity, introducing what it calls the first AI productivity formula:

AI Productivity = Intelligent Scale × Token Production Efficiency × Token Value Conversion.

Historically, Tokens served as a mere technical metric for tracking model interactions.

According to Infinitech, the formula elevates Tokens into a core economic variable driving the AI industry and lays out a closed-loop logic for AI industrialization and value realization.

① Intelligent Scale: the scale of multi-heterogeneous computing power, as optimized through technology.

② Token Production Efficiency: The ability to efficiently convert electrical energy into Tokens (Tokens/s).

③ Token Value Conversion: The ability to efficiently transform Tokens into societal productivity (Productivity/Token).

Multiplying the units of [Token Production Efficiency] and [Token Value Conversion] yields [Productivity/s].
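Because the formula is a product, a weakness in any one factor caps the whole result. The minimal sketch below encodes it with units attached. The article gives no unit for Intelligent Scale, so the code assumes, purely for illustration, that it is a dimensionless count of compute units and that Token Production Efficiency is measured per unit; the numbers are placeholders, not Infinitech figures.

```python
def ai_productivity(intelligent_scale: float,
                    tokens_per_second_per_unit: float,
                    productivity_per_token: float) -> float:
    """AI Productivity = Intelligent Scale x Token Production Efficiency x Token Value Conversion.

    Assumed units (illustrative only):
      intelligent_scale           -> compute units (dimensionless count)
      tokens_per_second_per_unit  -> Tokens/s per compute unit
      productivity_per_token      -> Productivity/Token
    Result: Productivity/s, matching Tokens/s x Productivity/Token.
    """
    return intelligent_scale * tokens_per_second_per_unit * productivity_per_token


base = ai_productivity(100, 2000, 0.5)
# Doubling any single factor doubles the result; here, Token Production Efficiency.
doubled_efficiency = ai_productivity(100, 4000, 0.5)
print(base, doubled_efficiency)
```

A zero in any factor (idle compute, no throughput, or Tokens that produce no business value) drives the whole product to zero, which is the closed-loop point the formula makes.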

Infinitech’s value conversion logic follows the path: Input → Electrical Energy → Tokens → Productivity → Value.
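The Electrical Energy → Tokens step can be expressed as tokens produced per kilowatt-hour. The sketch below follows the chain end to end under two assumptions: throughput and power are measured on the same node or cluster, and the Productivity → Value steps are collapsed into a single hypothetical value-per-token factor.

```python
def tokens_per_kwh(tokens_per_second: float, power_kw: float) -> float:
    """Tokens produced per kilowatt-hour of electricity.

    tokens_per_second: sustained throughput of a node or cluster.
    power_kw: average electrical draw of that same node or cluster, in kW.
    """
    if power_kw <= 0:
        raise ValueError("power must be positive")
    # In one hour the system emits tokens_per_second * 3600 tokens
    # while consuming power_kw kWh of energy.
    return tokens_per_second * 3600 / power_kw


def value_from_energy(energy_kwh: float, tokens_per_kwh_rate: float,
                      value_per_token: float) -> float:
    """Energy -> Tokens -> Value; value_per_token is a hypothetical stand-in."""
    tokens = energy_kwh * tokens_per_kwh_rate
    return tokens * value_per_token


rate = tokens_per_kwh(tokens_per_second=10_000, power_kw=10.0)
value = value_from_energy(energy_kwh=1.0, tokens_per_kwh_rate=rate, value_per_token=1e-6)
print(rate, value)
```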

Three New Productivity Barriers

Infrastructure from major corporations typically serves their own ecosystems, leveraging strong technical capabilities but organizing resources around proprietary clouds, models, and client systems.

Numerous small-to-medium model companies, industry application firms, research institutions, local intelligent computing centers, and enterprises using domestic chips cannot readily reuse these highly customized internal systems.

China’s AI industry faces complex supply-side challenges. Chips span multiple architectures (GPUs, NPUs, ASICs, domestic AI chips). Models are highly fragmented (DeepSeek, Kimi, Zhipu, Tongyi, MiniMax, etc.), each with its own interfaces, performance, costs, and deployment methods. Local intelligent computing centers are expanding rapidly but face pressure on utilization rates, scheduling efficiency, and client conversion.

① Neutrality: Infinitech’s core and most difficult-to-replicate advantage lies in its neutral third-party positioning.

The AI industry adheres to the principle of letting specialists handle specialized tasks: model companies focus on algorithms and scenarios, while bottom-layer computing power optimization and Token production are delegated to professional third-party MaaS platforms.

However, infrastructure from major corporations primarily serves their internal businesses, and chip manufacturers' infrastructure is tied to their own hardware ecosystems, so neither can easily be fully fair and open to the whole industry.

Infrastructure that model companies build for themselves runs into competitive conflicts: rival model makers are reluctant to depend on a competitor's stack, so it cannot become a universal industry foundation.

In this landscape, neutrality becomes the greatest common denominator for China’s AI industrial chain.

Infinitech’s neutrality allows it to simultaneously serve multiple leading model companies, including MiniMax, Zhipu, Kimi, and DeepSeek.

Its Agentic MaaS platform hosts over 160 large models, provides optimized services for mainstream domestic models, and improves inference performance for trillion-parameter models.

By late April 2026, daily Token invocations on the platform had grown over 20-fold compared to the end of 2025.

② Multi-Heterogeneous Integration: The AI industry’s upstream features N types of AI chips with different architectures, while the downstream has M types of large models with varying structures, resulting in high adaptation costs between hardware and models.

Adapting a model to a new chip typically takes 3–6 months, which is close to unacceptable in an industry evolving this fast.

Infinitech’s multi-heterogeneous technology addresses this [M×N] adaptation challenge.

Its platform supports over 10 chip types, including NVIDIA, Huawei Ascend, and Biren. By pooling and virtualizing heterogeneous computing power, it masks underlying hardware differences.
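The M×N problem and the pooling idea can be sketched in a few lines: if every model targets one common interface and every chip implements that interface once, porting work drops from M×N to M+N, and callers no longer pick a vendor. This is a generic abstraction-layer illustration, not Infinitech's actual design; the `ComputePool` class, vendor names, and round-robin placement are all hypothetical, and the 160 models and 10 chip types are the article's approximate figures.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical shared interface: every chip backend accepts and returns a batch.
Backend = Callable[[List[float]], List[float]]


def adaptation_count(models: int, chips: int, pooled: bool) -> int:
    """Number of model-to-hardware porting efforts required.

    Point-to-point: each model is ported to each chip separately (M x N).
    Pooled: each model targets the common interface once and each chip
    implements it once (M + N).
    """
    return models + chips if pooled else models * chips


class ComputePool:
    """Hides heterogeneous backends behind a single submit() call."""

    def __init__(self, backends: Dict[str, Backend]) -> None:
        if not backends:
            raise ValueError("pool needs at least one backend")
        self._names = sorted(backends)
        self._backends = backends
        self._cursor = 0

    def submit(self, batch: List[float]) -> Tuple[str, List[float]]:
        # Simple round-robin placement; the caller never chooses a vendor.
        name = self._names[self._cursor % len(self._names)]
        self._cursor += 1
        return name, self._backends[name](batch)


def fake_kernel(xs: List[float]) -> List[float]:
    # Stand-in for vendor-specific kernels behind the shared interface.
    return [2 * x for x in xs]


pool = ComputePool({"vendor_a": fake_kernel, "vendor_b": fake_kernel})
first = pool.submit([1.0, 2.0])
second = pool.submit([3.0])
print(adaptation_count(160, 10, pooled=False), adaptation_count(160, 10, pooled=True))
```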

Through task decomposition, it enables heterogeneous mixed training and inference, allowing model providers to increase domestic chip usage without suffering 3–6 months of iteration delays.
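One common way to realize heterogeneous mixed inference is to split a workload across device types in proportion to their measured throughput, so that fast and slow chips finish at about the same time. The article does not describe Infinitech's actual task-decomposition method; the sketch below is a generic proportional load-balancing scheme, and the device names and speeds are made up.

```python
from typing import Dict


def split_by_throughput(total_items: int, throughput: Dict[str, float]) -> Dict[str, int]:
    """Split a workload across heterogeneous devices in proportion to speed.

    Faster devices receive more items so all devices finish at roughly the
    same time. Largest-remainder rounding keeps the shares summing exactly
    to total_items.
    """
    total_speed = sum(throughput.values())
    if total_speed <= 0:
        raise ValueError("total throughput must be positive")
    exact = {name: total_items * speed / total_speed for name, speed in throughput.items()}
    shares = {name: int(value) for name, value in exact.items()}
    leftover = total_items - sum(shares.values())
    # Hand any remaining items to the devices with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda n: exact[n] - shares[n], reverse=True)
    for name in by_remainder[:leftover]:
        shares[name] += 1
    return shares


# Hypothetical pool: one device type is three times faster than the other.
shares = split_by_throughput(100, {"gpu_a": 3.0, "npu_b": 1.0})
print(shares)
```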

More importantly, Infinitech has raised GPU utilization from an industry average of below 30% to over 97%.

While others use only 40% of an H100's capacity, Infinitech runs the chip at near-full capacity, cutting computing power costs by more than half.
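The "more than half" figure can be checked directly: if an accelerator is paid for by the hour but busy only part of the time, the cost of each useful hour scales with the inverse of utilization. This sketch assumes the price per card-hour is identical in both cases and that utilization is the only variable; it uses the article's 40% and 97% figures.

```python
def effective_cost_per_useful_hour(hourly_price: float, utilization: float) -> float:
    """Price paid per hour of useful accelerator work.

    If a card is busy only `utilization` of the time, every useful hour
    effectively costs hourly_price / utilization.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_price / utilization


def cost_reduction(old_util: float, new_util: float) -> float:
    """Fractional saving per useful hour when utilization rises.

    new_cost / old_cost = (p / new_util) / (p / old_util) = old_util / new_util.
    """
    return 1 - old_util / new_util


print(f"{cost_reduction(0.40, 0.97):.1%}")
```

Going from 40% to 97% utilization cuts the cost of each useful hour by roughly 59%, consistent with the claim of a more-than-half reduction.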

This frees the industry from the supply and cost constraints of relying on a single chip vendor, offering greater choice and flexibility.

③ Software-Hardware Synergy: Infinitech’s software-hardware synergy technology co-designs the software and hardware layers together to extract maximum application performance from each chip.

Its Agentic MaaS platform boosts system throughput by 2–3x through software-hardware co-optimization, reduces overall latency by 50%, and keeps time to first token (TTFT) within 500 milliseconds.
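TTFT and end-to-end latency are usually measured on the client side of a streaming response. The sketch below times any iterable of tokens; `fake_stream` is a hypothetical stand-in for a real streaming API, and its delays are arbitrary.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(tokens: Iterable[str]) -> Tuple[float, float, int]:
    """Return (time_to_first_token_s, total_latency_s, token_count) for a token stream."""
    start = time.perf_counter()
    first = None
    count = 0
    for _ in tokens:
        if first is None:
            first = time.perf_counter() - start
        count += 1
    total = time.perf_counter() - start
    # An empty stream has no first token; report total latency instead.
    return (first if first is not None else total), total, count


def fake_stream(n: int, first_delay: float, per_token: float) -> Iterator[str]:
    # Hypothetical stand-in for a streaming model response.
    time.sleep(first_delay)
    for i in range(n):
        if i:
            time.sleep(per_token)
        yield f"tok{i}"


ttft, total, n = measure_stream(fake_stream(20, first_delay=0.05, per_token=0.005))
print(f"TTFT {ttft * 1000:.0f} ms, within 500 ms target: {ttft < 0.5}")
```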

In terms of accuracy, it keeps outputs aligned with the original model's accuracy to over 99.9%, and it achieves 99.95% enterprise-grade high availability.
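To put the 99.95% availability figure in operational terms, the snippet converts it into a downtime budget. It assumes availability is measured as simple uptime over wall-clock time, which is one common definition; service-level agreements may define it differently.

```python
def allowed_downtime_minutes(availability: float, period_hours: float) -> float:
    """Maximum downtime, in minutes, permitted by an availability target over a period."""
    if not 0 <= availability <= 1:
        raise ValueError("availability must be in [0, 1]")
    return (1 - availability) * period_hours * 60


per_year = allowed_downtime_minutes(0.9995, 365 * 24)
per_month = allowed_downtime_minutes(0.9995, 30 * 24)
print(f"{per_year / 60:.2f} h/year, {per_month:.1f} min per 30-day month")
```

At 99.95%, the service may be down for about 4.4 hours per year, or roughly 22 minutes in a 30-day month.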

This extreme efficiency gain is particularly critical amid surging Token demand.

As Token prices rise, even marginal improvements in Token production efficiency translate into tangible cost savings and profit growth.

Conclusion:

Whoever can convert electricity and chips into Tokens at lower costs and then transform Tokens into enterprise productivity will wield stronger influence in the AI industrial chain.

China possesses unique advantages for developing the Token economy: a robust and stable energy structure provides a solid foundation for large-scale computing operations, while the world’s most complete AI industrial chain and largest AI application consumer market further bolster its position.

Partial sources referenced: QbitAI: “Token Demand Surges 1,000x, RMB 2.2 Billion Flows to This AGI Infra Leader”; Infinitech: “Infinitech Secures Over RMB 700 Million in Funding | Unveils AI Productivity Formula, Becomes Token Economy Hub”; Huxiu APP: “RMB 700 Million Funding Flows to Tsinghua-Affiliated [Token Factory]”