Jensen Huang's 'Work Report' for 2026

03/18 2026

On Monday, Jensen Huang, clad in his iconic leather jacket, opened NVIDIA's 2026 GTC Conference.

This year's GTC Conference was different from previous years. While the world expected faster GPUs, Jensen Huang did not deliver a product launch but rather a narrative about the industrial revolution in the AI era.

NVIDIA's new grand narrative defined the means of production, production methods, economic models, as well as the hardware foundation and operating system driving everything in this revolution.

In just two hours, Jensen Huang clearly outlined the blueprint for NVIDIA's future and that of the entire AI industry.

01

The Birth of the 'AI Factory' and the Arrival of the 'Inference Inflection Point'

Jensen Huang presented two core assessments in this speech as the strategic foundation for NVIDIA:

① Inference Inflection Point: AI moves from learning to working.

Over the past two years, AI compute consumption has been concentrated in the training phase, with major AI companies striving to build more powerful models. The Scaling Law has held during this stage: the larger the model and the more data, the better the performance.

Today, the industry has moved beyond this phase, and inference demand has entered a period of explosive growth. The rise of products like OpenClaw has brought AI models into large-scale practical use.

According to Jensen Huang, the computational load required for inference may reach tens or even hundreds of thousands of times that required for training.

Whether it's ChatGPT, Gemini, DeepSeek, or Doubao, every daily interaction and every code generation represents a complex inference.

Therefore, even though the cycle for introducing cutting-edge large models has begun to slow down, the demand for GPUs continues to surge.

The global popularity of OpenClaw, an open-source product with significant security risks, further indicates that the growth we currently see is likely just the tip of the iceberg.

② AI Factory Economics: Defining the New World's KPIs

With the arrival of the inference inflection point, Jensen Huang also proposed a new economic model for data centers:

Tokens will become the new product. Data centers will no longer be cost centers for storing data but profit centers for producing intelligence, the so-called 'AI factories.'

Computational power will become the new currency, positively correlated with tokens.

The new KPI metric will be the number of tokens generated per watt of electricity.

In the United States, electricity remains the ultimate physical bottleneck for all data centers. Maximizing token output per watt of electricity is equivalent to maximizing revenue.
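The link between this KPI and revenue can be made concrete with a little arithmetic. The sketch below is only an illustration under stated assumptions: the function names, the flat token price, and every number are hypothetical and do not come from the keynote. It treats "tokens per watt" as tokens per second per watt, which is the same as tokens per joule.

```python
def tokens_per_second(power_budget_watts: float, tokens_per_joule: float) -> float:
    """Token throughput of a power-capped facility.

    One watt is one joule per second, so watts * tokens/joule = tokens/second.
    """
    return power_budget_watts * tokens_per_joule


def revenue_per_hour(power_budget_watts: float,
                     tokens_per_joule: float,
                     price_per_million_tokens: float) -> float:
    """Hourly revenue, assuming every generated token is sold at one flat price."""
    tokens_per_hour = tokens_per_second(power_budget_watts, tokens_per_joule) * 3600
    return tokens_per_hour / 1_000_000 * price_per_million_tokens


if __name__ == "__main__":
    budget_watts = 1_000_000.0  # hypothetical 1 MW power budget
    price = 2.0                 # hypothetical price per million tokens
    for efficiency in (0.5, 1.0, 2.0):  # hypothetical tokens per joule
        print(efficiency, revenue_per_hour(budget_watts, efficiency, price))
```

With the power budget fixed, revenue in this toy model scales linearly with tokens per joule, which is why the article equates maximizing the KPI with maximizing revenue.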

This straightforward yet precise explanation not only makes abstract professional terminology in the AI industry more concrete but also firmly aligns the thinking of everyone from CEOs to developers with the track where NVIDIA holds the greatest advantage.

Under this new economic paradigm, NVIDIA's ambition is clear: it holds a significant amount of 'currency' and is unwilling to merely sell shovels to gold miners but aims to create an entire 'factory blueprint' and 'production line.'

02

The Nuclear Power of the 'AI Factory': Hardware Foundation

NVIDIA's greatest advantage lies in computational power. Except for Google, which has carved out a niche with its self-developed TPU, most companies worldwide still rely on NVIDIA's GPU supply.

The explosion in inference demand means that 'AI factories' will consume unprecedented amounts of energy.

At this juncture, NVIDIA unveiled the long-anticipated Vera Rubin.

Compared to the single GPUs showcased at previous GTC Conferences, Vera Rubin is a rack-level supercomputer.

Essentially, it is a product of extreme vertical integration. It combines seven key chips, including a new generation of GPUs, CPUs designed specifically for AI agent tasks, new-generation networking, and new-generation storage, with liquid cooling and tightly co-designed engineering, all packaged within a single rack.

From this point onward, NVIDIA's delivery unit has leaped from chips to plug-and-play computing systems.

In addition, there was another technological surprise at this GTC Conference: the integration of Groq LPU technology.

NVIDIA has noticed that current AI computing demands are beginning to show extreme trends:

First, high throughput: Vera Rubin excels at massive parallel computing, suitable for processing batch tasks.

Second, ultra-low latency: Groq LPU has extremely fast single-response speeds, crucial for interactive applications.

NVIDIA's solution is to 'divide and conquer' at the software level: high-intensity mathematical operations run on Vera Rubin, while token generation, which is extremely sensitive to latency, is delegated to Groq. This approach boosts the performance of high-value interactive applications by 35 times.
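The split described above can be pictured as a simple routing rule. The following is a minimal sketch, not NVIDIA's implementation: the `Stage`, `Pool`, `WorkItem`, and `route` names are hypothetical, and the two pools merely stand in for the throughput-oriented and latency-oriented hardware named in the article.

```python
from dataclasses import dataclass
from enum import Enum


class Stage(Enum):
    HEAVY_MATH = "heavy_math"              # compute-intensive, highly parallel work
    TOKEN_GENERATION = "token_generation"  # latency-sensitive, one token at a time


class Pool(Enum):
    THROUGHPUT = "throughput"    # stand-in for the high-throughput rack
    LOW_LATENCY = "low_latency"  # stand-in for the low-latency accelerator


@dataclass(frozen=True)
class WorkItem:
    request_id: str
    stage: Stage


def route(item: WorkItem) -> Pool:
    """Send heavy math to the throughput pool and token generation to the low-latency pool."""
    if item.stage is Stage.HEAVY_MATH:
        return Pool.THROUGHPUT
    return Pool.LOW_LATENCY


if __name__ == "__main__":
    for item in (WorkItem("r1", Stage.HEAVY_MATH), WorkItem("r1", Stage.TOKEN_GENERATION)):
        print(item.request_id, item.stage.value, "->", route(item).value)
```

The point of the sketch is that a single request can touch both pools: each stage of its work goes to the hardware best suited to it.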

The output of AI factories extends far beyond tokens in the digital world.

In Jensen Huang's view, there is vast potential in the broader physical world of embodied AI.

However, relying solely on real-world data to train robots to handle all unexpected situations will likely never be sufficient.

The solution lies in the new economic model he proposed: computational power is currency, and currency can bring data.

The approach is to generate massive amounts of high-quality synthetic data on simulation platforms, train AI in virtual worlds, and then deploy it to robots in the real world. This Sim-to-Real pathway may be the key to solving robot intelligence.

Of course, blind technological optimism is not advisable. The current foundational capabilities of AI models are insufficient to support the commercialization of embodied AI.

Nevertheless, both the general-purpose robot foundational model GR00T and the autonomous driving model Alpamayo, which possesses thinking and reasoning capabilities, demonstrate that NVIDIA is investing the productivity provided by 'AI factories' into the $50 trillion manufacturing and automotive industries.

03

The Brain of the 'AI Factory': Dynamo 1.0

With the powerful 'nuclear reactor' Vera Rubin now in place, the next question is how to keep it running efficiently around the clock, like the always-on 'crayfish' (a nickname for OpenClaw agents).

The answer to this question also lies at the software level. NVIDIA's technical blog on Dynamo 1.0, released concurrently with the GTC Conference, officially unveiled the brain driving the 'AI factory' to the public.

Dynamo is a software framework specifically designed for large-scale, multi-node, enterprise-level AI inference. If Vera Rubin is the hardware production line for tokens, then Dynamo is the controller of the production line. While it does not directly produce tokens, it ensures the efficiency, speed, and stability of the production process.

Specifically, Dynamo has achieved technological breakthroughs in the following four areas:

1. On the Vera Rubin platform, Dynamo raises a model's inference request throughput by approximately 7 times through techniques such as disaggregated serving, directly improving the core KPI of tokens per watt.

2. As AI evolves from chatbots to agents, agent workflows involve complex processes such as multi-turn dialogue, background reasoning, and tool calls. Dynamo is 'agent-aware': it reads task priorities from agent hints and schedules critical tasks first, cutting the time to first response of agent applications by a factor of 4.

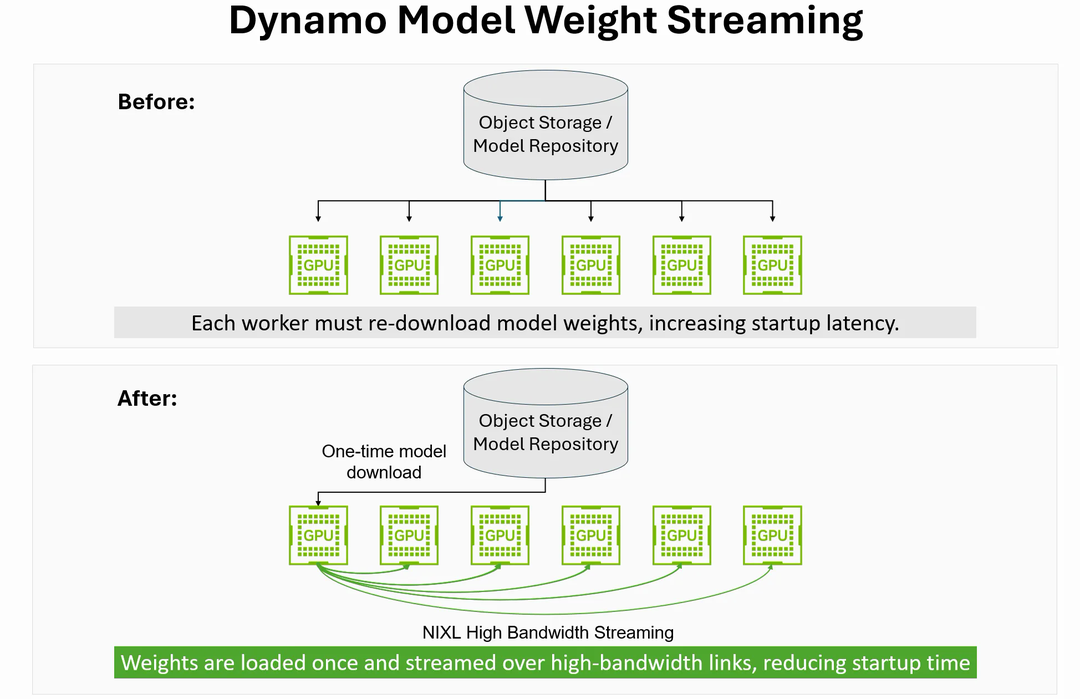

3. Modern AI applications frequently need to start new model instances, but the traditional sequence of loading, compiling, and optimizing a model is slow. Dynamo's ModelExpress technology speeds up instance startup by 7 times through methods such as checkpoint restore and model weight streaming, making 'AI factory' production more flexible.

4. Deploying large models is still beyond the reach of most teams. Dynamo's DGDR function lets developers specify only their targets, such as the model, hardware, and traffic, and the system automatically handles performance profiling, configuration, and deployment.
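The agent-aware prioritization in item 2 can be illustrated with an ordinary priority queue. This is a generic sketch of hint-based scheduling, not Dynamo's API: the hint names, the `PRIORITY_BY_HINT` table, and the `HintedQueue` class are all hypothetical.

```python
import heapq
import itertools
from dataclasses import dataclass, field

# Hypothetical hint names; a lower number means the request is served earlier.
PRIORITY_BY_HINT = {"user_facing": 0, "tool_call": 1, "background": 2}
LOWEST_PRIORITY = max(PRIORITY_BY_HINT.values())


@dataclass(order=True)
class _Entry:
    priority: int
    sequence: int  # tie-breaker: keeps first-in, first-out order within a priority
    request_id: str = field(compare=False)


class HintedQueue:
    """Serve requests by hinted priority, then by arrival order."""

    def __init__(self) -> None:
        self._heap: list[_Entry] = []
        self._counter = itertools.count()

    def submit(self, request_id: str, hint: str = "background") -> None:
        # Unknown hints fall back to the lowest priority.
        priority = PRIORITY_BY_HINT.get(hint, LOWEST_PRIORITY)
        heapq.heappush(self._heap, _Entry(priority, next(self._counter), request_id))

    def next_request(self) -> str:
        # Raises IndexError when the queue is empty.
        return heapq.heappop(self._heap).request_id

    def __len__(self) -> int:
        return len(self._heap)


if __name__ == "__main__":
    queue = HintedQueue()
    queue.submit("summarize-logs", "background")
    queue.submit("answer-user", "user_facing")
    queue.submit("call-search-tool", "tool_call")
    while len(queue):
        print(queue.next_request())
```

Serving user-facing requests ahead of background work is one simple way a scheduler could shorten the time to first response without adding hardware.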

The introduction of Dynamo perfectly demonstrates that NVIDIA's leadership is no longer limited to the hardware level but also encompasses profound software and systems engineering capabilities.

04

NVIDIA's Ecosystem Strategy

In summary, NVIDIA's moat, built through GPUs, has now extended to an ecosystem strategy.

In his speech, Jensen Huang compared the open-source agent framework OpenClaw to Linux in the AI era and predicted that it would become the next-generation operating system, inevitably ushering in a new era of 'Agent as a Service.'

As mentioned in a previous article, a platform product called NemoClaw has now been officially released. NVIDIA's goal is clear: to become the standard setter and security guardian of this technological revolution, so that every company can confidently join the wave of 'shrimp farming' (slang for running OpenClaw agents).

The same strategy applies to the foundational models underlying intelligent agents. Through the establishment of the Nemotron Alliance, NVIDIA has partnered with renowned AI companies like Mistral and Perplexity to jointly build the next generation of foundational models. This way, the software in the AI ecosystem will achieve deeper integration with NVIDIA's hardware.

This is precisely Jensen Huang's brilliance:

From chips (Rubin), systems (racks), networking (NVLink), software (Dynamo), operating systems (NemoClaw) to AI models (Nemotron), NVIDIA has achieved deep in-house development and extreme collaborative design at every level.

This vertically integrated model delivers performance and efficiency that competitors find difficult to match.

At the same time, NVIDIA is not attempting to monopolize the market.

By collaborating with major cloud providers and AI startups, NVIDIA ostensibly aims to 'empower everyone' and 'bring customers to cloud providers,' but in reality it embeds its technology stack into global computing platforms, so that the entire ecosystem is built on NVIDIA's underlying technology.

Looking back from the future, the 2026 GTC Conference may come to mark the turning point of an era.

NVIDIA has constructed a new economic cycle with tokens as commodities, computational power as currency, and 'AI factories' as the core production units.

In this cycle, NVIDIA possesses the most efficient production tools, the most intelligent production management system, and even defines production standards.

It is no longer just a GPU supplier but a builder of AI infrastructure and economies.

The future 'chokepoint' will only tighten.