Token's Great Leap Forward | Business Wave

04/24 2026

Editor|Yang Xuran

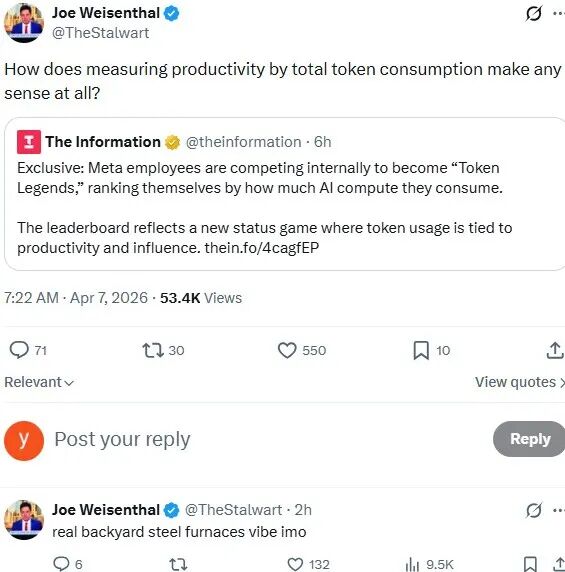

The exponential growth in Token (data unit) usage is driving up the valuations of a number of companies.

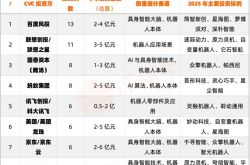

Xunce Tech, known as the "first Token stock," saw its share price surge nearly sixfold from its issue price just 108 days after listing, reaching a total market cap of HK$105 billion.

Zhipu, which listed on the Hong Kong Stock Exchange on January 8 this year, had a market cap of approximately HK$58 billion on its debut. After releasing its first annual results, its stock price quickly broke through the HK$1,000 mark, with its market cap also surpassing HK$400 billion.

Zhipu CEO Zhang Peng defined the keyword for 2026 as "Token volume," believing that breakthroughs in intelligence capabilities, coupled with exponential growth in Token consumption, jointly constitute the commercial value of the AGI era.

Among companies yet to go public, Moonshot AI (Yuezhi Anmian) completed a new financing round of more than $1 billion last month at a valuation of $18 billion. By contrast, a $500 million round at the end of last year had valued it at just $4.3 billion.

This rapid jump in valuation is directly linked to OpenClaw's announcement in February this year that it would adopt Kimi K2.5 as its official primary model. Just one month after K2.5's release, Moonshot AI's ARR (Annual Recurring Revenue) surpassed $100 million. This growth not only supported the higher valuation but also opened up the possibility of an IPO.

Both domestically and internationally, capital markets are pricing the rise of the Token economy with remarkable enthusiasm. In the coming months, we can expect to hear all kinds of get-rich stories. This booming Token economy is bringing the concept to the general public in "Great Leap Forward" fashion, while accumulating risks along the way.

This article is a deep-value piece from the Business Wave content team.

Growth

When chatbots first emerged, few could have imagined that just two years later, a single large model like Doubao would see its average daily Token usage exceed 120 trillion. Even at a relatively low price of two yuan per million Tokens, this implies that about 240 million yuan (120 million × 2 yuan) in real money is being "burned" on Doubao every day.
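The back-of-envelope figure can be checked directly. The following is a minimal sketch, assuming a single flat price per Token (real pricing usually differs between input and output Tokens and between model tiers); the function and constant names are illustrative only.

```python
# Figures quoted in the text; the flat per-token price is a simplifying assumption.
DAILY_TOKENS = 120e12            # 120 trillion tokens per day
PRICE_PER_MILLION_YUAN = 2.0     # yuan per million tokens


def daily_spend_yuan(tokens_per_day: float, price_per_million: float) -> float:
    """Daily spend implied by a flat price per million tokens."""
    return tokens_per_day / 1e6 * price_per_million


spend = daily_spend_yuan(DAILY_TOKENS, PRICE_PER_MILLION_YUAN)
print(f"Implied daily spend: {spend / 1e6:.0f} million yuan")
```

With the two quoted inputs, the product works out to about 240 million yuan per day.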

In fact, similar scenarios are playing out among large model companies both domestically and internationally, with global average daily Token consumption growing exponentially.

Data released by the China Academy of Information and Communications Technology (CAICT), under the Ministry of Industry and Information Technology, shows that as of March 2026, China's average daily Token usage had surpassed 140 trillion, a more than 1,000-fold increase from early 2024.

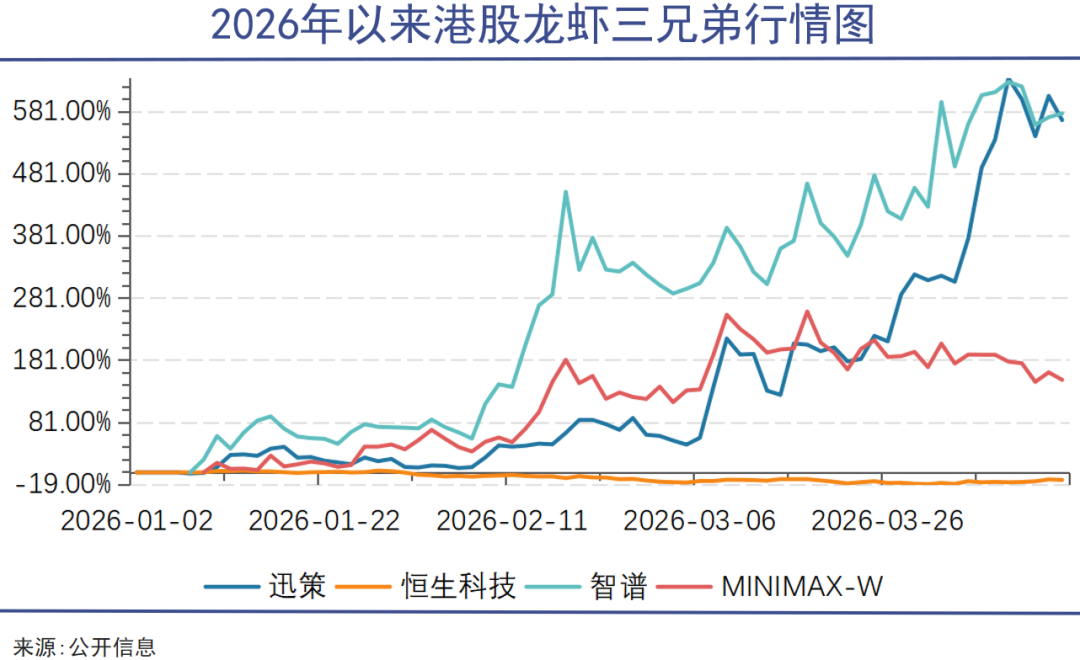

OpenRouter, the world's largest AI model API aggregation platform, reported that the number of Tokens processed weekly on its platform soared from 1.62 trillion in March 2025 to 16.90 trillion in March 2026, a more than tenfold increase in just one year.

OpenRouter connects to the API interfaces of nearly all mainstream model providers, including Anthropic, OpenAI, Google, and Meta. Its weekly Token consumption curve essentially serves as a real-time monitor of global AI application activity.

This Token consumption curve is nearly vertical, unlike the linear growth of GDP or the S-shaped curve of internet user penetration. It represents a unique development trend of the AI economy.
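To put a number on "nearly vertical," the OpenRouter figures quoted above can be converted into a growth multiple and an implied compound monthly rate. This is a rough sketch that assumes smooth compounding between the two March data points, which the source does not claim; the helper names are illustrative.

```python
# Weekly token volumes quoted in the text, in trillions of tokens.
TOKENS_MAR_2025 = 1.62
TOKENS_MAR_2026 = 16.90


def growth_multiple(start: float, end: float) -> float:
    """How many times larger the end value is than the start value."""
    return end / start


def compound_monthly_rate(start: float, end: float, months: int = 12) -> float:
    """Constant monthly growth rate that would turn start into end."""
    return (end / start) ** (1 / months) - 1


multiple = growth_multiple(TOKENS_MAR_2025, TOKENS_MAR_2026)
monthly = compound_monthly_rate(TOKENS_MAR_2025, TOKENS_MAR_2026)
print(f"{multiple:.1f}x over the year, roughly {monthly:.0%} per month if compounded evenly")
```

Under that assumption, the platform's volume grew by a little over a fifth every month for a full year.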

What is driving this explosion in Token demand? The answer lies in the technological evolution of artificial intelligence.

Early AI applications were primarily chatbots (Bots). Users input a sentence, and the model returned a response, consuming a few hundred to a few thousand Tokens per interaction. However, since the second half of last year, new application paradigms represented by Agents and Claw have spread more rapidly and widely. Their common feature is transforming AI from a simple "question-and-answer" dialogue tool into a digital employee capable of autonomous planning, tool invocation, and long-term task execution. This fundamental change in technological architecture has caused Token consumption to surge in ways unexpected by many users.

Industry estimates suggest that to achieve the same business objective, the Agent mode consumes approximately 50 to 200 times more Tokens than the Bot mode.

This is because Agents need to carry the entire historical conversation context when executing tasks, with complex tasks often accumulating hundreds of thousands of Tokens in context windows.

Moreover, each round of Agent reasoning triggers an API request and requires system configuration files and memory banks to be reloaded to maintain task consistency and a personalized experience. As a result, Token consumption in Agent mode resembles a black-box operation beyond the user's direct control.
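The mechanism described above, in which every reasoning step resends the accumulated context, can be illustrated with a toy model. This is a sketch under stated assumptions: all Token counts are hypothetical, no prompt caching or context compression is applied, and the two functions are illustrative rather than any vendor's billing logic.

```python
def bot_tokens(prompt: int, reply: int) -> int:
    """Single question-and-answer exchange: one request, one response."""
    return prompt + reply


def agent_tokens(system: int, task: int, step_output: int, steps: int) -> int:
    """Total tokens processed when every step resends the full history.

    Each step sends the system configuration, the task, and all earlier
    step outputs, then generates step_output new tokens.
    """
    total = 0
    history = task
    for _ in range(steps):
        total += system + history + step_output  # input plus output for this step
        history += step_output                   # the output joins the context
    return total


# Hypothetical sizes chosen only for illustration.
bot = bot_tokens(prompt=200, reply=800)
agent = agent_tokens(system=2000, task=200, step_output=500, steps=20)
print(f"bot: {bot} tokens, agent: {agent} tokens, ratio: {agent / bot:.0f}x")
```

With these made-up inputs, a 20-step Agent run processes 149,000 Tokens against 1,000 for a single chat exchange, a ratio of about 149 times that falls inside the 50 to 200 range cited by industry estimates. The ratio grows roughly with the square of the step count, because each new step pays again for everything that came before it.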

More alarmingly, the apparent Token consumption at this stage does not necessarily equate to genuine demand.

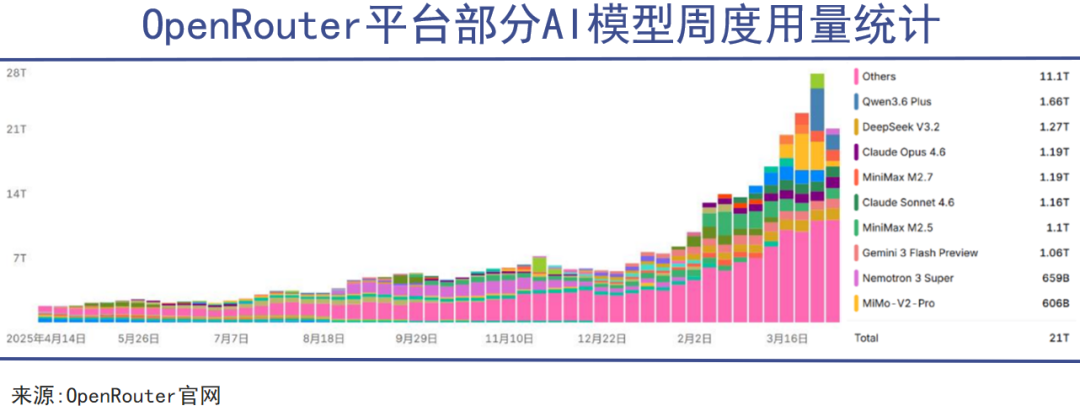

As AI adoption becomes a corporate imperative and Token consumption is increasingly included in employee performance metrics, a situation of "pseudo-demand for Tokens" has emerged.

Meta already has teams using Token consumption as a metric for AI penetration. Some employees, to "appear AI-savvy," deliberately run large numbers of redundant model invocation tasks. Similar phenomena have been reported at Chinese tech giants like Tencent, with some business lines even inventing gray-area practices like "Token inflation."

This behavior—creating excessive consumption and exaggerating non-existent performance out of fear of being left behind—is reminiscent of the absurdities of the Great Leap Forward.

Core

As Token consumption grows exponentially, a serious industrial question arises: Who will foot the bill, and who will benefit?

On April 15, the National Data Administration released a draft implementation plan for promoting high-quality industry dataset construction, publicly soliciting opinions. For the first time in an official policy document, it proposed "exploring new trading models such as Token trading and constructing a quantifiable, priceable dataset value system based on Tokens."

From the moment "Token trading" was included in national top-level design, Tokens ceased to be merely a technical concept and began evolving into a legal pricing unit for the AI economy. In a sense, Token-based pricing is the core of the AI economy.

Xunce Tech is called the "first Token stock" because it pioneered a business model adjustment, fully transitioning to a new model of Token-based consumption pricing and revenue sharing. It has built a growth model where "revenue = Token price × invocation frequency × number of module applications."
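The quoted growth formula is a simple product of three factors. The sketch below writes it out, assuming the price is per Token, the invocation frequency is Tokens invoked per module application per period, and the module count is an integer; the source does not specify these units, and the sample values are hypothetical rather than Xunce Tech's figures.

```python
def token_revenue(token_price: float, invocation_frequency: float,
                  module_applications: int) -> float:
    """Revenue = Token price x invocation frequency x number of module applications."""
    return token_price * invocation_frequency * module_applications


# Hypothetical inputs: 0.00001 yuan per token, 50 million tokens invoked
# per module application per month, 40 module applications deployed.
baseline = token_revenue(0.00001, 50_000_000, 40)
doubled_modules = token_revenue(0.00001, 50_000_000, 80)
print(f"baseline: {baseline:.0f} yuan, with twice the modules: {doubled_modules:.0f} yuan")
```

Because the three factors multiply, revenue scales in proportion to each one, so growth in usage and in the number of deployed modules compounds even when the unit price falls.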

Currently, Token-based revenue accounts for about 5% of Xunce Tech's total revenue, and the company expects this share to rise rapidly to 20-30% by the end of the year. The market's valuation logic for Xunce has therefore changed fundamentally, breaking free of traditional PSR (Price-to-Sales Ratio) constraints and leaving more room for imagination.

Xunce Tech's model also suggests that in the AI economy, large model providers will likely play the role of "refiners," processing underlying computing power and data into directly consumable "finished products" priced in Tokens, while controlling key ecological niches in the value distribution system.

Meanwhile, cloud service providers will likely play roles akin to "power plants" and "power grids." They do not directly price Tokens but determine their underlying costs.

Take Alibaba Cloud as an example. By the end of February 2026, its cumulative external commercial revenue for the 2026 fiscal year had surpassed 100 billion yuan. Revenue from AI-related products continued its high-growth trajectory, achieving double-digit percentage growth for the tenth consecutive quarter.

Rising demand has led to price hikes. Last week, Alibaba Cloud issued three product price increase notices in four days, adjusting service prices for certain model units in its BaiLian platform and free API quotas for DataWorks—highlighting cloud providers' influence on Token costs.

In traditional architectures, cloud providers' main revenue came from infrastructure billing for virtual machines, storage, and networking. Entering the Agent era, they can fully promote resource (Token)-based billing and secure long-term contract revenue through Agent platform subscriptions, developer ecosystem suites, and industry-specific solutions.

Computing power providers sit even further upstream, like suppliers of raw materials and fuel. NVIDIA's high-end GPUs remain at the core of the industrial chain, while high-bandwidth memory (HBM) is now in short supply. The three major memory manufacturers, Samsung, SK Hynix, and Micron, are seeing both tight capacity and rising gross margins.

Some may worry about potential overcapacity in computing power or inflated stock prices for NVIDIA and memory manufacturers, but none of this affects the historical progression toward Token-based pricing.

Token-based pricing provides a clear calculation basis for value distribution across the entire industrial chain.

Just as the establishment of the kilowatt-hour enabled the formation of electricity markets, and traffic/exposure metrics became pricing units for Douyin and WeChat Channels, Tokens are transforming the AI economy from something "perceived as useful" into something "accountable and taxable," becoming a tangible economic component.

Carnival

Token-based pricing clarifies the operational logic of the AI economy. However, as a transformative technological revolution, does the AI economy follow traditional economic laws?

At the very least, the traditional economic theory of supply-demand balance does not fully apply to the AI economy.

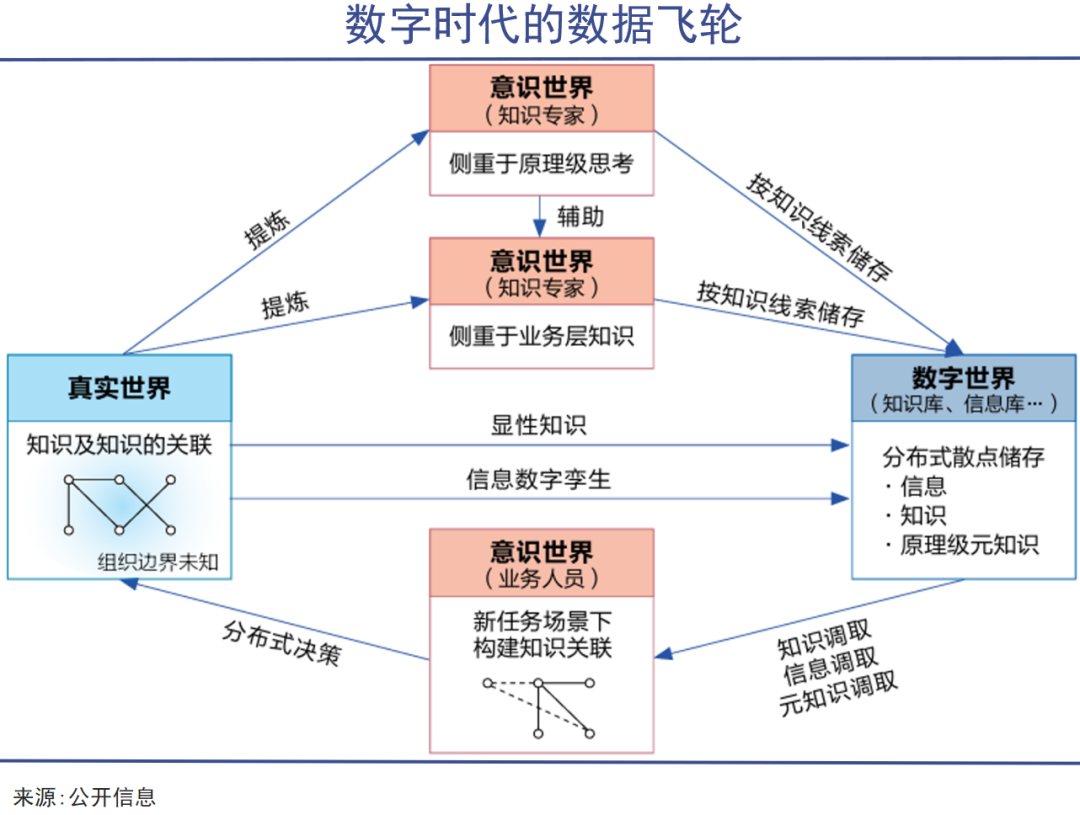

In traditional models, supply and demand are two independent curves intersecting. In the AI economy, however, supply itself improves quality through data flywheels. The rightward shift of the demand curve occurs not due to external income changes but because the supply curve itself shifts downward and to the right.

At this stage, we are more likely to witness a replay of the "Jevons Paradox" from the steam era in the AI age. The Jevons Paradox states that when steam engine efficiency improves and coal consumption per horsepower decreases, total coal consumption actually surges because cheaper steam power spurs the creation of more factories, trains, and ships.

Similarly, the lower the unit production cost of Tokens, the more groups are willing to consume them and the more scenarios there are in which Tokens are preferred over human labor. Ultimately, total spending on Tokens, and with it the total value of the AI economy, increases.

According to domestic media statistics, Token production costs have dropped by over 99% in the past two years. The cost per million Tokens for GPT-4 fell from $37.5 in 2024 to $0.14 in 2025. However, according to Silicon Valley venture capital firm Menlo Ventures, global corporate AI spending in 2025 increased 3.2 times compared to 2024.
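The two figures above can be combined into a rough Jevons-style check. This sketch assumes that "increased 3.2 times" means spending in 2025 was 3.2 times the 2024 level, and it treats the quoted GPT-4 price as a proxy for the average unit price across all spending, which is a strong simplification; the variable names are illustrative.

```python
# Figures quoted in the text.
PRICE_2024 = 37.5        # USD per million tokens
PRICE_2025 = 0.14        # USD per million tokens
SPEND_MULTIPLE = 3.2     # 2025 spending relative to 2024 (assumed reading)

# Fraction by which the unit price fell.
price_drop = 1 - PRICE_2025 / PRICE_2024

# Spending = price x volume, so the implied volume multiple is the
# spending multiple divided by the price multiple.
volume_multiple = SPEND_MULTIPLE / (PRICE_2025 / PRICE_2024)

print(f"unit price fell by {price_drop:.1%}")
print(f"implied token volume grew about {volume_multiple:.0f}x")
```

Under these assumptions, a price drop of more than 99% alongside higher total spending implies that Token volume grew by several hundred times, which is the pattern the Jevons Paradox describes.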

If this trend continues, even if the unit price of Tokens approaches zero, the total value of Tokens consumed globally (total volume × unit price) and its proportion of GDP could grow by hundreds or thousands of times.

This explains why companies like Zhipu and MiniMax, despite massive losses, are still valued by capital markets at levels exceeding many traditional internet firms—markets are pricing not today's profits but the future total value of the Token economy.

Moreover, what is produced with Tokens becomes increasingly valuable.

The value created by the same one million Tokens can vary ten thousandfold across scenarios, depending entirely on the tasks performed. Tokens used for casual chat may be worth a few cents, those for coding hundreds or thousands of yuan, and those for quantitative trading or corporate mergers and acquisitions could be worth tens of thousands of yuan.

Stanford University's 2026 AI Index Report estimated that in 2024 alone, generative AI created approximately $172 billion in consumer surplus for U.S. consumers, with users deriving far more value than they actually paid.

It is worth noting that after AI replaces a significant amount of human mental labor, traditional labor supply theories will also face challenges. The traditional demand curve will collapse as a whole due to shrinking purchasing power, while the supply side remains robust due to automation. This is what Keynes referred to as "insufficient effective demand caused by technological unemployment."

For now, though, everything surrounding the exponential growth of Token consumption is still cloaked in prosperity, fueling a carnival across the industrial chain and capital markets.

However, history has repeatedly proven that whenever a new technology is endowed with boundless imagination by the capital markets, the bubble always reaches its end before the value does.