Farewell to Price Wars: Large AI Models Usher in the Inflation Era

04/24 2026

Value Creation Outweighs Cost Control

By Chen Dengxin

Edited by Li Ji

Formatted by Annalee

Currently, price increases have emerged as a defining theme for large AI models.

Since the start of 2026, large AI models are no longer being discounted; instead, vendors of all sizes have openly embraced price hikes. Zhipu has even raised its prices twice in just three months.

Consequently, domestic large AI models have, for the first time, aligned their pricing with leading overseas counterparts.

Are the price hikes for large AI models a fleeting trend or a long-term shift? Has the strategy of competing on price to capture market share reached its end? Will value competition become the central narrative of future competition?

There was a period when price reductions were the focal point of competition for large AI models.

"Across-the-board price cuts," "two products for free," "one cent per million tokens," "90% cheaper than similar industry products," "free, completely free, permanently free"... These were common slogans.

Behind these actions, large AI models chose to sacrifice short-term profits for long-term development.

In simpler terms, to achieve AI inclusivity, the token prices of large AI models needed to continuously decrease. Only then could they empower various industries on the B2B front and become standard daily tools on the B2C side.

Tokens Take Center Stage for Large AI Models

After all, a bigger market brings economies of scale and greater momentum.

Zhu Xunyao, a senior expert at Alibaba Cloud, once stated, "Alibaba Cloud's large AI model price cuts aim to enable more users and small and medium-sized enterprises to use large AI models, accelerating the early outbreak of the AI application market."

Xin Zhou, General Manager of Baidu Smart Cloud's AI and Large Model Platform, also commented, "Large AI models are still in the market cultivation stage. Only when enterprises recognize the immense value brought by large AI models can they apply them to larger-scale and more complex business scenarios."

Notably, large AI models did not rely on blunt price cuts alone; they also passed savings on to users through pricing innovation.

Take the Doubao Large Model 1.6, released in June 2025, as an example. It broke away from previous industry pricing norms: deep thinking or multimodal capabilities did not require additional token payments, and token prices increased with input lengths across three zones (0–32K, 32K–128K, and 128K–256K), offering higher cost-effectiveness through zonal pricing.

Zonal Pricing Breaks the Mold
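To make the zonal idea concrete, here is a minimal sketch of how a request might be billed under such a scheme. Only the three zone boundaries come from the description above. The prices, the reading of "K" as 1,000 tokens, the rule that the whole request is billed at the rate of the zone its input length falls into, and the names `Zone`, `ZONES`, and `input_cost` are all assumptions made for illustration, not Doubao's published price list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    max_input_tokens: int     # inclusive upper bound of the zone
    price_per_million: float  # HYPOTHETICAL price, not taken from the article


# Zone boundaries follow the article (32K, 128K, 256K); prices are placeholders.
ZONES = [
    Zone(32_000, 1.0),
    Zone(128_000, 2.0),
    Zone(256_000, 4.0),
]


def input_cost(input_tokens: int) -> float:
    """Bill the whole request at the rate of the zone its input length falls in."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    for zone in ZONES:
        if input_tokens <= zone.max_input_tokens:
            return input_tokens / 1_000_000 * zone.price_per_million
    raise ValueError("input exceeds the largest zone")


if __name__ == "__main__":
    for n in (16_000, 100_000, 200_000):
        print(n, round(input_cost(n), 4))
```

Under these placeholder rates, short requests stay in the cheapest zone, and only long-context requests pay the higher rate.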

Unexpectedly, the tradition of large AI model prices only decreasing and never increasing has been broken.

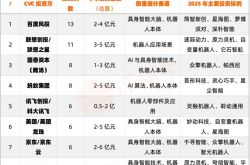

After entering 2026, large AI models such as GLM, Seedance, and HY2.0 Instruct have, to varying degrees, raised their prices. Notably, GLM-5.1's cache hit token price in Coding scenarios is close to that of Anthropic's Claude Sonnet, marking the first time a domestic large AI model has achieved price parity with leading overseas manufacturers in a core scenario.

This indicates that large AI models are increasingly unwilling to sell tokens at bargain prices.

Luo Fuli, head of the MiMo large AI model, stated, "I advise LLM companies not to blindly engage in price wars before figuring out how to price Coding solutions without causing financial losses. Selling tokens at extremely low prices while opening doors to third parties may seem attractive to users, but it's a trap—the same trap Anthropic just escaped."

In short, competing on price is inferior to competing on value.

Low token prices do not necessarily mean strong model capabilities. Insufficient model capabilities can lead to higher token consumption, resulting in greater waste and ultimately a lose-lose situation for users and large AI models.
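The arithmetic behind this point can be sketched as follows. What a user pays per task is the unit price multiplied by the tokens the model burns to finish that task. Both models and all figures below are hypothetical, chosen only to show that a lower unit price can still produce a higher bill.

```python
def cost_per_task(price_per_million: float, tokens_per_task: int) -> float:
    """Spend on one completed task: unit price times tokens consumed."""
    return price_per_million * tokens_per_task / 1_000_000


# HYPOTHETICAL figures, for illustration only.
weak_but_cheap = cost_per_task(price_per_million=1.0, tokens_per_task=500_000)
strong_but_pricey = cost_per_task(price_per_million=3.0, tokens_per_task=100_000)

if __name__ == "__main__":
    print(f"cheap model:  {weak_but_cheap:.2f} per task")
    print(f"strong model: {strong_but_pricey:.2f} per task")
```

In this made-up case the model with the lower token price costs more per task, because it needs five times as many tokens to get the job done.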

Thus, large AI models must return to value-based competition.

The shift of large AI models from price wars to value competition is driven by three main factors.

First, the supply-demand imbalance.

In the age of intelligent agents, the number of tokens used per task has jumped from tens of thousands to millions or even tens of millions. This several-hundredfold increase in consumption has become a key factor in how large AI models win customers.

This is closely related to the extension of thinking chains.

Token consumption grows linearly in question-and-answer mode but exponentially in agent mode, where a model reasons over long chains, executes multiple tasks, and makes looped calls.

This is evident from Zhipu's data.

In the first quarter of 2026, Zhipu's large AI model API call pricing increased by 83%, while token consumption grew by 400%. The price increase did not suppress demand; instead, supply fell short of demand.
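As a rough back-of-the-envelope reading of these two figures, assume that spend equals price times volume, that the 83% increase applies uniformly to every token, and that both percentages are measured against the same baseline. None of these assumptions is confirmed by Zhipu's data, and the function name is illustrative.

```python
def spend_multiple(price_increase: float, volume_increase: float) -> float:
    """Multiple of baseline spend implied by fractional price and volume increases."""
    return (1 + price_increase) * (1 + volume_increase)


# +83% price and +400% token consumption, as cited in the article.
multiple = spend_multiple(price_increase=0.83, volume_increase=4.00)

if __name__ == "__main__":
    print(f"implied spend multiple: {multiple:.2f}x")  # prints 9.15x
```

Under those assumptions, total spend on the API would be roughly nine times the baseline, which is consistent with the article's point that the price increase did not suppress demand.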

Zhang Peng, CEO of Zhipu, said, "The commercial value in the AGI era can be summed up in a simple formula: intelligence ceiling × token consumption scale. The intelligence ceiling determines pricing power, while the token consumption scale determines value volume. In the future, the standard for measuring an individual's or organization's value will no longer be how much information they possess but their ability, as token architects, to build complex Agent systems within a given budget and drive large AI models to autonomously operate these systems."

Thus, the competitive focus of large AI models has shifted.

Large AI models no longer compete solely on parameters, quantity, or benchmark rankings but instead focus on applications and ecosystems. Cost-effectiveness is no longer the sole key metric; value creation has taken center stage.

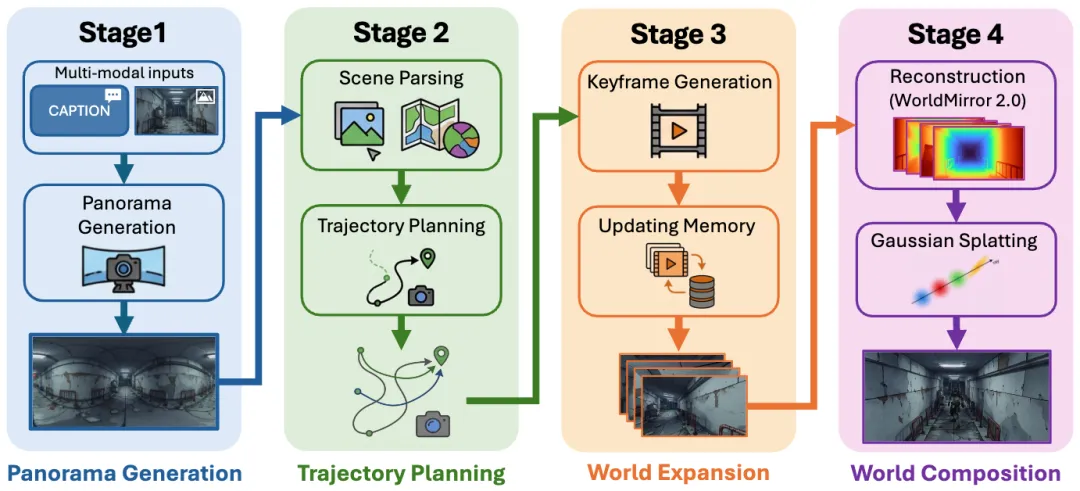

For example, the Hunyuan 3D World Model 2.0 can understand various inputs like text, images, and videos, automatically generating 3D scenes and seamlessly integrating with workflows such as game development and AI-driven comics.

Generate a 3D World in One Sentence

Another example is GLM-5.1, which can work independently and continuously on a single task for over 8 hours. It is currently the only open-source model with this capability.

Second, cost-sharing.

The deployment of large AI models relies heavily on cloud computing, but cloud computing costs are visibly increasing, making price hikes inevitable.

Take data centers as an example. On one hand, components like storage chips have become a seller's market, driving up construction costs. On the other hand, as major energy consumers, data centers face rising operational costs amid high energy prices.

It's clear that large AI model services are more expensive than traditional internet services.

More critically, as AI technology continues to iterate, large AI models must also innovate, further driving up expenses and necessitating the exploration of viable and reasonable commercialization paths.

Tan Dai, President of Volcano Engine, once said, "For the enterprise market, business models must be built on sustainable development. Any product must be profitable and cannot rely on subsidies for price reductions. If price reductions lead to losses, larger scales will result in greater losses, which is not a reasonable business model."

Third, survival of the fittest.

As the "Hundred-Model Battle" evolves, many weaker players have exited the market. Even strong players find it difficult to excel in every area and must focus on their core strengths.

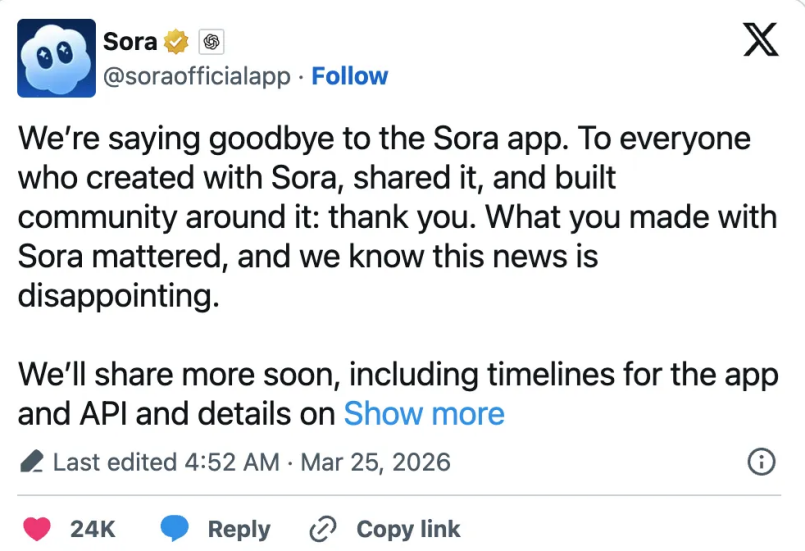

Sora is a prime example.

As an AI video generation tool under OpenAI, Sora initially received praise and was seen as a disruptive product in the AI-generated video space.

However, due to financial losses, it became a liability for OpenAI.

Sora's Demise

Public data shows that Sora's hit rate for generating commercial-grade content was only 5%–10%, with inference costs ranging from $30 to $50 per minute of high-quality video. Consequently, its 30-day user retention rate was 1%, and its 60-day retention rate was 0%.
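A quick calculation shows why these numbers are hard to live with. If only a fraction of generations is usable, the expected cost of one usable minute is the per-minute inference cost divided by the hit rate. The sketch below assumes every attempt costs the same and that failed attempts are simply discarded; the function name is illustrative.

```python
def cost_per_usable_minute(cost_per_minute: float, hit_rate: float) -> float:
    """Expected spend per usable minute when only a fraction of attempts succeeds."""
    if not 0 < hit_rate <= 1:
        raise ValueError("hit_rate must be in (0, 1]")
    return cost_per_minute / hit_rate


# Ranges cited in the article: $30 to $50 per minute, 5% to 10% hit rate.
best_case = cost_per_usable_minute(cost_per_minute=30, hit_rate=0.10)
worst_case = cost_per_usable_minute(cost_per_minute=50, hit_rate=0.05)

if __name__ == "__main__":
    print(f"${best_case:,.0f} to ${worst_case:,.0f} per usable minute")
```

Under these assumptions, a usable minute of video would cost somewhere between about $300 and $1,000 in inference alone.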

In short, Sora failed to become a productivity tool.

However, Sora's failure does not mean AI-generated video is unviable. Chinese AI video generation models like Seedance and Kling not only excel technically but also have abundant application scenarios, which has allowed them to come from behind.

"Blue Whale Tech" reported, "Before Seedance 2.0, most models could only generate a 5-second video at a time, with 3 seconds potentially being unusable. A complete shot required stitching multiple videos together. In contrast, Seedance 2.0 can generate a complete 15-second video with smooth camera transitions."

Beyond Seedance, Kling has also performed impressively.

Financial data shows that from the first to fourth quarters of 2025, Kling's revenue was 150 million yuan, 250 million yuan, 300 million yuan, and 340 million yuan, respectively. While growth has slowed, it remains on an upward trajectory.
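The slowdown can be checked directly from the four quarterly figures. The short sketch below computes quarter-over-quarter growth and the full-year total; the variable names are illustrative.

```python
# Kling's 2025 quarterly revenue in millions of yuan, as cited in the article.
revenue = [150, 250, 300, 340]

# Quarter-over-quarter growth in percent, rounded to one decimal place.
qoq_growth = [
    round((curr - prev) * 100 / prev, 1)
    for prev, curr in zip(revenue, revenue[1:])
]
full_year = sum(revenue)

if __name__ == "__main__":
    print(qoq_growth)  # [66.7, 20.0, 13.3]
    print(full_year)   # 1040
```

Growth drops from about 67% to about 13% quarter over quarter, while full-year revenue adds up to 1.04 billion yuan.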

In summary, large AI models have moved past the stage of using low-cost or even free strategies to promote AI inclusivity and have entered a phase of value creation. To better unleash productivity, they must compete not only on model efficiency but also on scenario adaptability.

Thus, the race for large AI models has entered the 'deep waters.'