Apple's Innovative Leap: Crafting Conservative 'AI Glasses'

04/14 2026

The Dawn of the 'iGlasses' Era

By VR Gyroscope

On April 12 (local time), a new report from Gurman said that Apple's display-free AI glasses project has made significant progress.

Code-named N50, the smart glasses could be unveiled as early as the end of this year or early 2027, with sales slated to begin in 2027. They have no display and are meant to rival Ray-Ban Meta glasses, with cameras for capturing photos and videos, speakers for calls and music, and a design close to that of conventional glasses.

Based on current information, Apple's first-generation AI glasses, the second product in Apple's 'spatial computing' lineup after the Apple Vision Pro, look quite 'conservative' by comparison.

01

Embracing Cellulose Acetate: Four Glasses Designs Undergoing Testing

Apple will reportedly design the N50 smart glasses in-house rather than partner with an established eyewear brand, as Meta has done with EssilorLuxottica and Google with Warby Parker.

Apple is currently testing four frame designs in colors including black, ocean blue, and light brown, and plans to launch some or all of the following styles:

① Rectangular-frame glasses reminiscent of the classic Ray-Ban Wayfarer;

② Small-frame glasses like those worn by Tim Cook himself;

③ Oval large-frame glasses;

④ Smaller, more refined oval glasses.

For the frame, Apple has chosen cellulose acetate, a material that combines light weight, low allergenicity, durability, and a premium feel. A light acetate frame puts little load on the bridge of the nose. The frame is made by cutting, shaping, and polishing multiple pieces of the material, which makes it durable, and its low allergenicity suits wearers with sensitive skin.

Ordinary glasses made from cellulose acetate sheets. Image source: Internet

The camera design is a key feature of these glasses. Apple is reportedly considering vertically oriented oval lenses encircled by indicator lights. The lights are an aesthetic choice, and they also let people nearby know when recording is in progress, in line with Apple's emphasis on privacy.

This design departs from the circular camera on Ray-Ban Meta glasses. Internally, Apple refers to this design language as 'icon'; it is meant to be as distinctive and recognizable as AirPods and the Apple Watch.

Rendering of Apple's AI glasses, not an actual product, for reference only. Image source: AppleInsider

Gurman said these smart glasses are part of Apple's 'Trident' strategy for AI wearables, with the other two prongs being a new generation of AirPods and a neck-worn device equipped with a camera. All three devices would use computer vision to interpret the user's surroundings and feed that data to an upgraded Siri and Apple Intelligence for core functions such as visual understanding and real-time translation.

02

Apple's 50th Anniversary: Cook Discusses Smart Glasses, Pays Tribute to Jobs

The AI glasses may well have been in development inside Apple for some time.

On April 1, 2026, Apple celebrated its 50th anniversary. Over the years, Apple has created many remarkable and successful products, such as the iPod, iPhone, and Apple Watch, which have reshaped much of the tech landscape.

To mark the occasion, Apple CEO Tim Cook gave a video interview to Ben Cohen, a columnist for The Wall Street Journal. In the video, Apple opened its company archives, showing representative patents and prototypes of its successful products over the years.

Ben Cohen: 'If we're still here ten years from now, what will be the next hot product on this table?'

Tim Cook: 'I believe it will be the convergence of hardware, software, and services. Because we cherish delivering a complete user experience. We don't just aim to create great products. We want to observe how others will interact with them. We hope they enrich people's lives.'

Ben Cohen: 'You mentioned wanting to observe how others will act. Will it involve wearing glasses?'

Tim Cook: 'I can't divulge that. You can't have a ship that leaks from the top.'

Interestingly, the phrase comes from a 2007 interview with Steve Jobs, in which he also said that the form mobile devices would take five years later was unpredictable. He explained that five years earlier he had not imagined map applications appearing on such devices, but that certain features grew more popular as the technology evolved.

Jobs also used the metaphor to describe the situation inside Apple when discussing former Apple CEO Gil Amelio: 'Gil is a good person, but he often said that Apple is like a leaking ship, and my job is to steer the ship in the right direction.' Now Tim Cook has invoked the 'leaking ship' metaphor again, perhaps hoping that Apple can keep moving ahead steadily. Apple has always been known for unveiling 'surprises' at pivotal moments.

03

The Evolution of Apple's Visual Intelligence: From iOS to GlassOS?

As for specific functions, Apple is expected to build in Visual Intelligence, which has already been proven on iOS.

Visual Intelligence debuted as part of Apple Intelligence in iOS 18.2, after the iPhone 16 launched in 2024. It was initially limited to that model, and Apple gradually extended it to more models starting with iOS 18.4.

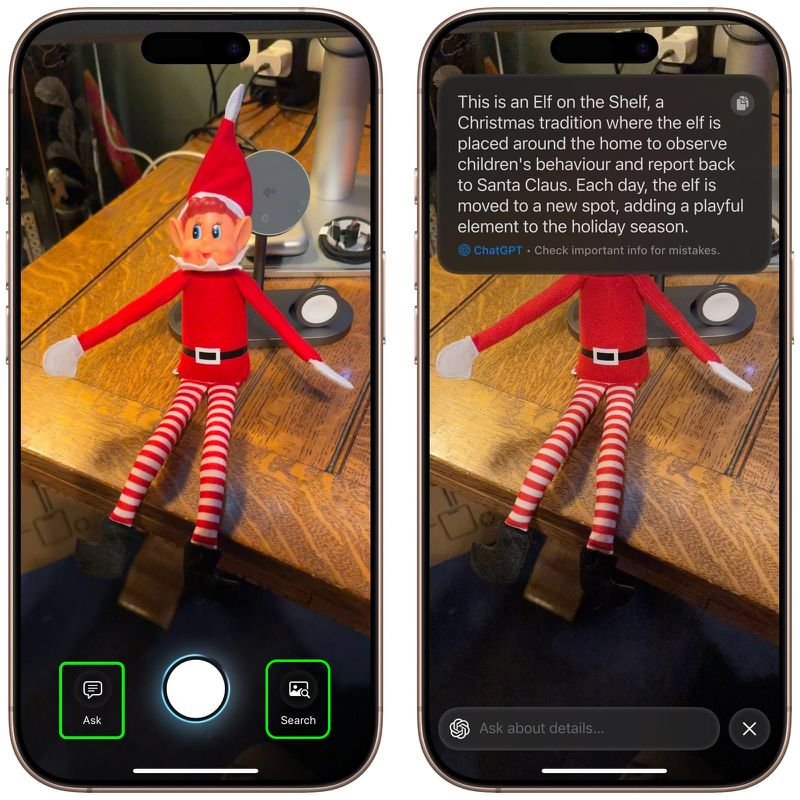

Users can ask the AI questions through the camera using Visual Intelligence. Image source: Internet

On the phone, Visual Intelligence can identify details about the place being photographed, summarize and translate text, open website links, dial phone numbers, create calendar events from posters or flyers, identify plants and animals, and more. In iOS 26, the feature was extended to on-screen search, where Visual Intelligence appears directly next to screenshots, further streamlining searches. In essence, though, it remains an enhancement tool for the phone screen.

This feature has also garnered high praise from Tim Cook, who pointed out, 'One of our most popular features is visual intelligence, which aids users in learning and interacting with content on the iPhone screen more than ever before, enabling faster searches, actions, and answers within apps.'

Architecturally, Apple's AI glasses would capture information about the environment through their cameras and pass it to large models for visual analysis. Siri then interprets the result together with the user's instructions and decides how to respond, giving a hands-free, 'what you see is what you get' interaction.
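The flow described above (capture, visual analysis, then an assistant decision) can be pictured with a minimal Python sketch. Everything in it is an illustrative assumption rather than anything Apple has disclosed: `Frame`, `analyze_frame`, `assistant_decide`, and `handle_request` are hypothetical names, and the "model" is a trivial stand-in that only deduplicates labels.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Frame:
    """A captured camera frame; `labels` stands in for real pixel data (hypothetical)."""
    labels: List[str]


def analyze_frame(frame: Frame) -> Dict[str, List[str]]:
    """Hypothetical stand-in for the large vision model: returns a scene description."""
    return {"objects": sorted(set(frame.labels))}


def assistant_decide(scene: Dict[str, List[str]], instruction: str) -> str:
    """Hypothetical stand-in for the assistant: combine the scene with the spoken instruction."""
    words = instruction.lower().split()
    if "translate" in words and "sign" in scene["objects"]:
        return "translate_text"
    if "what" in words:
        return "describe:" + ",".join(scene["objects"])
    return "no_action"


def handle_request(frame: Frame, instruction: str) -> str:
    """Capture -> visual analysis -> assistant decision, with no screen involved."""
    scene = analyze_frame(frame)
    return assistant_decide(scene, instruction)


if __name__ == "__main__":
    print(handle_request(Frame(["sign", "street"]), "Translate this"))
    print(handle_request(Frame(["dog", "dog", "tree"]), "What is that"))
```

The point of the sketch is only the separation of stages: perception produces a scene description, and the decision step sees that description plus the user's instruction, never the raw camera data.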

On the phone, Visual Intelligence is mainly invoked through the camera button: the camera captures objects in the physical world, and large models interpret the scene in depth. From iOS on the phone to a 'GlassOS' on the smart glasses, the entry points for invoking Apple Intelligence would be unified, making Siri more convenient to use. This is not a simple port, however; the feature has to be rebuilt within the power and battery-life constraints of glasses.

Concept image of Apple's AI glasses, not an actual product, for reference only. Image source: Internet

Running the camera and AI visual perception all day places heavy demands on battery life and power consumption in a device as light as a pair of glasses. According to earlier leaks, Apple is developing a wearable chip for its first smart glasses. The chip is expected to be based on the processor used in the Apple Watch, customized for better energy efficiency, and to control the 'multi-camera' system Apple plans to build into the glasses. It is slated to enter mass production between 2026 and 2027.

Apple's aim is clearly to position the AI glasses as a pure Apple Intelligence device. To open up more advanced use cases, the glasses will also take a pivotal place in the wider Apple ecosystem. Apple has long emphasized synergy between devices, and its smart glasses are likely to serve as a perception entry point for Apple Intelligence, plugging into the existing iOS, iPadOS, and macOS ecosystems.

The industry once expected Apple to launch true AR glasses. But as the price, weight, and bulk of the Apple Vision Pro show, optics still face physical bottlenecks that will take time to overcome. The popularity of Ray-Ban Meta smart glasses has meanwhile given Apple fresh inspiration. By leaving a screen out of its first AI glasses and focusing on extending AI capabilities from the phone screen to the user's glasses, Apple gains a second 'weapon': multimodal situational awareness on the glasses, alongside Vision Pro's spatial computing. With both, it is poised to become a key contender against giants like Meta and Google for the next generation of human-computer interaction interfaces.

It is worth noting, however, that Apple has yet to release even its basic AI camera glasses, while Meta's first consumer-grade AR glasses with a display, the Meta Ray-Ban Display, have gained traction in the U.S. market since launching in the fourth quarter of last year, with supply struggling to meet demand. On the AI glasses product roadmap, Apple is effectively one generation behind.

04

Apple's Foray Accelerates Growth in the AI Glasses Industry

Apple's entry will undoubtedly have a profound impact on the fiercely competitive 'hundred glasses battle.'

The company most affected is undoubtedly Meta, whose Ray-Ban series gives it an 85.2% share of the global AI glasses market. Apple's arrival may disrupt this near-monopoly. Whereas Meta relies on EssilorLuxottica, Apple has chosen to design its frames itself. Gurman believes that Apple's brand strength, in-house chip technology, global retail network, and mature ecosystem should let it catch up from behind, just as it did with the Apple Watch.

For Apple itself, entering the AI glasses market is also a strategic move to find its next growth engine. By putting more resources into lightweight glasses that better fit consumer habits, Apple can expect AI glasses to penetrate the market faster than the heavier Vision Pro. According to Gyroscope Research Institute data, global AI glasses shipments reached 8.7 million units, a year-on-year increase of 322%, and are expected to surpass 10 million units in 2026. Apple's entry may accelerate this growth.
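To put the shipment figures in perspective, the short Python sketch below works through the arithmetic. It assumes that the 322% figure is an increase over the prior year (so the current total is 4.22 times the earlier one) and that the 8.7 million units are an annual total; the function names are illustrative, and the source does not state the base-year figure itself.

```python
def implied_base(current: float, yoy_growth_pct: float) -> float:
    """Prior-period value implied by a current value and its year-on-year growth."""
    return current / (1 + yoy_growth_pct / 100)


def growth_needed_pct(current: float, target: float) -> float:
    """Percentage growth required to move from `current` to `target`."""
    return (target / current - 1) * 100


shipments_m = 8.7   # million units, figure cited in the article
yoy_pct = 322.0     # year-on-year increase in percent, figure cited in the article
target_m = 10.0     # million units, threshold cited for 2026

base_m = implied_base(shipments_m, yoy_pct)             # assumption: 322% is an increase
needed_pct = growth_needed_pct(shipments_m, target_m)

print(f"Implied prior-year shipments: {base_m:.2f} million")
print(f"Growth needed to reach {target_m:.0f} million: {needed_pct:.1f}%")
```

Under these assumptions, the implied base is about 2.06 million units, and passing 10 million would take only about 14.9% further growth, a far smaller jump than the one already reported.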

The crux of future competition is the ability to integrate AI glasses seamlessly into a broader device ecosystem. The N50 is not an isolated device; by tying it closely to iPhone users, Apple further strengthens its ecosystem moat. AI glasses will serve as a new entry point into Apple's integrated 'hardware + software + services' ecosystem.

Apple is ushering in the next product of the 'spatial computing' era.