Samsung AI Glasses Unveiled, Featuring Audio Broadcasting Capability

04/23 2026

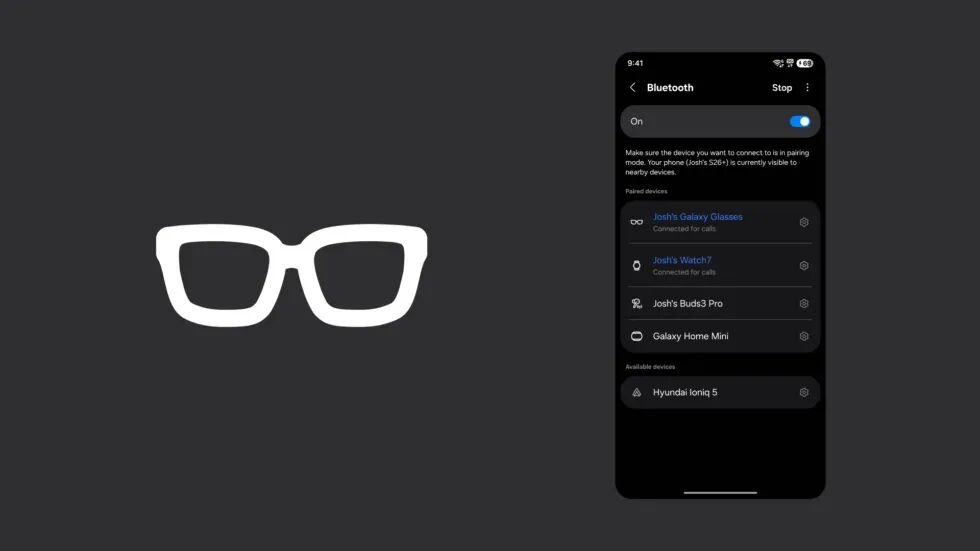

Foreign media outlets have recently published, in quick succession, UI images of Samsung's AI glasses. The first image, discovered deep within the system files of Samsung's One UI 8.5, is named "list_ic_glass 1." It shows a design with a white border, square lenses, and a minimalist frontal silhouette. The second image is a mockup created by developer Josh Skinner, depicting "Josh's Galaxy Glasses" appearing between the Galaxy Watch and Buds in the One UI Bluetooth pairing list.

Simulated UI interface of Samsung AI glasses. Image source: sammyguru

Concept image of Samsung AI glasses. Image source: AR Circle (Unauthorized reproduction prohibited).

In the same week, the global AI glasses sector saw two significant developments. Huawei launched its first HarmonyOS AI glasses, and Apple announced that Tim Cook would step down as CEO in September, to be succeeded by John Ternus, Senior Vice President of Hardware Engineering, a technocrat deeply involved in Apple's hardware product development and widely regarded as a "product-focused" leader. The AI glasses arena is quickly becoming a primary battleground for global tech giants, and Samsung has opted for the most understated, yet potentially the most solid, entry strategy.

Huawei AI glasses. Image source: Huawei

What Do They Look Like?

Judging from the leaked icons and available hardware information, the Galaxy Glasses pursue a radical "de-tech" aesthetic.

Samsung AI glasses icon. Image source: That Josh Guy

The icon itself serves as a compelling visual cue—square lenses, thick borders, and no visible modules make them nearly indistinguishable from ordinary plastic-framed glasses on the market. According to foreign media outlet Phandroid, these glasses weigh approximately 50 grams and may feature photochromic lenses that automatically darken outdoors while remaining transparent indoors.

According to the XR Research Institute, the Galaxy Glasses set to launch in 2026 will be pure AI glasses without a display, closely aligning with Meta Ray-Ban's product philosophy: prioritizing camera, voice interaction, and audio experiences over attempting to project information onto lenses.

The decision to omit a display follows a clear product rationale: extended battery life, reduced heat generation, and minimized technical risk. Samsung can first validate camera and AI interaction experiences with real users, leaving the most challenging hurdles for subsequent generations.

Core Hardware: 12MP Sensor, with Gemini as the Driving Force

According to Phandroid, the Galaxy Glasses feature a 12MP Sony IMX681 sensor supporting photography, video recording, QR code scanning, and gesture recognition. Connectivity options are expected to include Bluetooth and Wi-Fi, but not standalone cellular data. With a 245mAh battery, estimated battery life ranges from 6 to 8 hours, and significantly less under continuous camera operation.
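As a rough back-of-the-envelope check on those figures, the sketch below divides the reported 245mAh capacity by the 6 and 8 hour estimates to get the implied average current draw. It assumes the full rated capacity is usable and ignores voltage and conversion losses; the function name is illustrative and does not come from any Samsung tool.

```python
BATTERY_MAH = 245  # capacity reported by Phandroid


def average_draw_ma(capacity_mah: float, hours: float) -> float:
    """Average current (mA) that would drain the given capacity in `hours`.

    Assumption: the whole rated capacity is usable, with no losses.
    """
    if hours <= 0:
        raise ValueError("hours must be positive")
    return capacity_mah / hours


# Longer runtime implies a lower average draw, and vice versa.
low_draw = average_draw_ma(BATTERY_MAH, 8)
high_draw = average_draw_ma(BATTERY_MAH, 6)
print(f"Implied average draw: {low_draw:.1f} to {high_draw:.1f} mA")
```

In other words, the 6 to 8 hour estimate corresponds to an average draw of roughly 31 to 41 mA, which is why sustained camera use would shorten runtime noticeably.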

The Sony IMX681 is widely used in the smart glasses sector. A side-by-side comparison shows that Huawei's AI glasses also have a 12MP camera with first-person shooting and AI composition correction, and Meta Ray-Ban likewise uses a 12MP sensor. All three are on par in camera specifications, so the differences will ultimately come down to algorithms and ecosystems.

Meta Ray-Ban equipped with Sony 681 sensor. Image source: EssilorLuxottica

Omitting a display also offers a distinct advantage in battery life, since screens are among the largest power drains in smart glasses. Without one, the same battery capacity delivers significantly longer real-world usage time.

At the software level, the Galaxy Glasses are expected to integrate Gemini AI, supporting voice control, push notifications, and intelligent message processing across scenarios like hands-free calling, music playback, real-time translation, and navigation prompts. Third-party developers have already begun optimizing apps for the Android XR form factor, with Spotify among the earliest adopters.

The Smartphone as the True "Brain"

Understanding the Galaxy Glasses requires looking beyond the frame and camera to its host device—the Galaxy S26—and the behind-the-scenes preparations brought by One UI 8.5.

Glasses without a display possess extremely limited computing power and storage. They perceive the world and collect signals, but true processing and decision-making occur on the smartphone in your pocket. This is not a compromise but a deliberate architectural choice: reserving limited battery and space for sensors and audio while offloading computing pressure to the phone.

Several One UI 8.5 updates facilitate this "phone-as-brain, glasses-as-senses" division of labor.

Unified management in My Files aggregates files from Samsung phones, tablets, PCs, and TVs into a single interface. A practical scenario: you finish a presentation on your office computer, then say to your glasses during your commute, "Send today's presentation to Mr. Zhang." The phone automatically locates the file on your work computer and sends it. There is no need to pull out your phone, open an app, or even remember which device holds the file.
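One way to picture the lookup behind that request is a single index of file records tagged by device. The following is a minimal sketch under that assumption; `FileRecord`, `find_todays_presentation`, the extension list, and the device labels are all hypothetical and are not part of My Files or any Samsung API.

```python
from dataclasses import dataclass
from datetime import date, datetime
from typing import Iterable, Optional


@dataclass
class FileRecord:
    device: str         # hypothetical device label, e.g. "office-pc"
    name: str           # file name including extension
    modified: datetime  # last-modified timestamp


# Assumed set of presentation extensions, for illustration only.
PRESENTATION_EXTS = (".ppt", ".pptx", ".key")


def find_todays_presentation(
    index: Iterable[FileRecord], today: date
) -> Optional[FileRecord]:
    """Return the most recently modified presentation edited today, if any."""
    candidates = [
        f for f in index
        if f.name.lower().endswith(PRESENTATION_EXTS)
        and f.modified.date() == today
    ]
    return max(candidates, key=lambda f: f.modified, default=None)


# Usage: one index spanning several devices.
index = [
    FileRecord("phone", "notes.txt", datetime(2026, 4, 23, 8, 0)),
    FileRecord("office-pc", "Q2-review.pptx", datetime(2026, 4, 23, 17, 30)),
    FileRecord("tablet", "old-deck.pptx", datetime(2026, 4, 20, 9, 0)),
]
match = find_todays_presentation(index, date(2026, 4, 23))
print(match.device if match else "no presentation found today")
```

The point of the sketch is that the voice request never names a device: the search runs over the aggregated index, and the device is just a field on the result.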

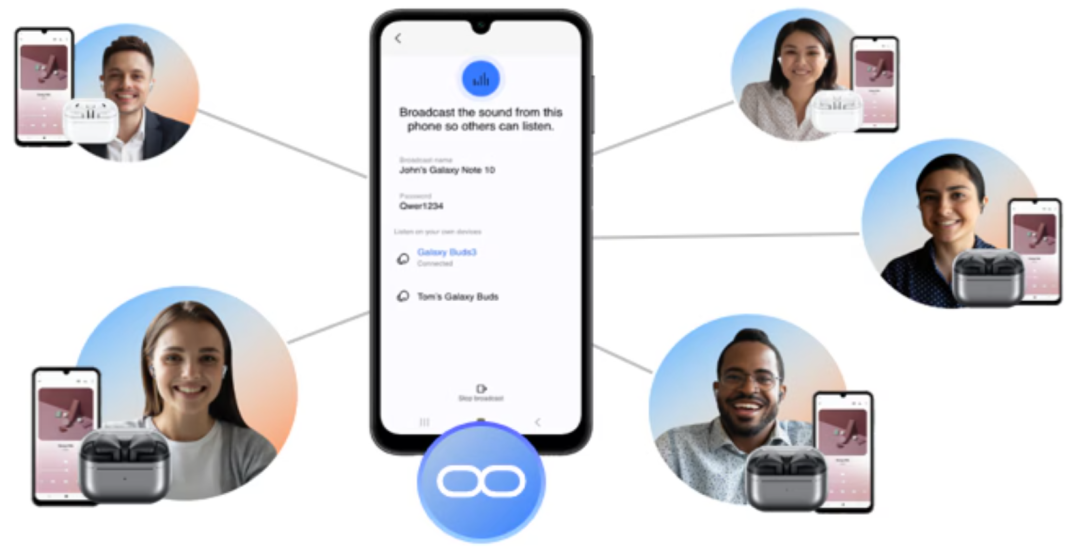

Auracast audio broadcasting lets the phone capture sound through its built-in microphone and broadcast it in real time to nearby Bluetooth devices. In the glasses scenario, the phone becomes the audio hub: during a meeting with foreign clients, the phone microphone picks up speech, performs the translation, and broadcasts the result to the glasses' speakers. The glasses themselves consume almost no power for translation. All the heavy lifting is done by the phone, with the glasses merely "announcing" the results.

Auracast audio broadcasting. Image source: Samsung

Bixby's natural language upgrade serves as the neural hub of the experience. Glasses without a touchscreen must rely on voice and AI. The problem with older voice assistants was that they required precisely formatted commands; the upgraded Bixby removes this limitation. When the glasses announce a calendar reminder, you can casually say, "Postpone this by two days," and Bixby understands that "this" refers to the reminder it just mentioned, modifying it directly without a full command. Passing a restaurant, you ask, "What's the rating here?" and Bixby answers over the music, "Dianping 4.2 stars, ¥68 per person." Natural, fast, and frictionless.
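The "this" in that reminder example depends on the assistant remembering what it last announced. The sketch below illustrates that idea only; it is a toy model, not how Bixby is implemented, and `Reminder`, `DialogueContext`, and the tiny number-word table are hypothetical.

```python
import re
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class Reminder:
    title: str
    due: date


# Small illustrative vocabulary; a real assistant would parse far more.
NUMBER_WORDS = {"one": 1, "two": 2, "three": 3, "four": 4, "five": 5}


class DialogueContext:
    """Remembers the last announced item so "this" can be resolved."""

    def __init__(self) -> None:
        self.last_mentioned: Optional[Reminder] = None

    def announce(self, reminder: Reminder) -> str:
        # Announcing an item makes it the referent of a later "this".
        self.last_mentioned = reminder
        return f"Reminder: {reminder.title} on {reminder.due.isoformat()}"

    def handle(self, utterance: str) -> Optional[Reminder]:
        """Apply "postpone this by N day(s)" to the last announced reminder."""
        m = re.search(r"postpone this by (\w+) days?", utterance.lower())
        if m is None or self.last_mentioned is None:
            return None  # nothing to resolve "this" against
        token = m.group(1)
        days = int(token) if token.isdigit() else NUMBER_WORDS.get(token)
        if days is None:
            return None
        self.last_mentioned.due += timedelta(days=days)
        return self.last_mentioned


# Usage: announce first, then refer back with "this".
ctx = DialogueContext()
ctx.announce(Reminder("Client meeting", date(2026, 4, 23)))
updated = ctx.handle("Postpone this by two days")
print(updated)
```

Without a preceding announcement, the same utterance has no referent and the handler returns `None`, which is the failure mode of command-only assistants that the article describes.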

DeX persistent window memory ensures the phone's display output to external monitors resumes its previous state with each connection. This reflects Samsung's broader intent: positioning the Galaxy S26 as a stable, content-distributing core node, with glasses serving as a natural endpoint in this chain.

Samsung hasn't explicitly stated, "This is designed for Galaxy Glasses," but these features collectively resemble a pre-laid infrastructure blueprint.

Deeply Bound to Google

Among all competitors, Samsung has chosen a unique path: fully entrusting the AI glasses' platform foundation to Google's Android XR.

Four "glasses" form factors covered by Android XR. Image source: LinkedIn

In the short term, this brings clear advantages. Gemini, embedded directly into the interaction layer as the AI engine, brings Google's real-time translation, Google Maps navigation, and multimodal understanding capabilities along with it. Android XR is an open platform, so third-party developers who adapt to it reach users across Google's entire ecosystem. That is a scale effect a proprietary Samsung platform could not match in the short term.

However, the trade-offs are equally clear. Samsung lacks autonomy at the platform level, with the product's core experience dictated by Google's technical roadmap and business decisions. Huawei chose HarmonyOS, Apple opted for a proprietary system—their ecosystems have boundaries, but platform destiny remains in their hands. From day one, Samsung's Galaxy Glasses are built on a foundation it cannot fully control.

This is not an unprecedented choice. Samsung phones have thrived in the Android ecosystem for years while contending with the stereotype of "strong hardware, weak software, ecosystem reliant on Google." The Galaxy Glasses follow the same path, leveraging Google's strength in the short term. However, if AI glasses truly become the gateway to next-gen personal computing, platform autonomy will grow increasingly critical. By then, the relationship between Google and Samsung may prove more complex than today.

This Field Is Already Crowded

Even before its official debut, the Galaxy Glasses face formidable opponents.

Meta Ray-Ban is currently the most successful AI glasses product on the market and has established category mindshare internationally. Samsung must carve out its position in a market already defined by a pioneer. Huawei has already made its move in China, with frames weighing just 35.5 grams and deep HarmonyOS ecosystem integration. Apple remains the wildcard: with new CEO John Ternus coming from hardware, an Apple AI glasses launch would redefine the category's pricing logic through brand premium and iOS ecosystem stickiness, forcing all players to reposition.

John Ternus to succeed as Apple CEO. Image source: appleinsider

Rumors suggest the Galaxy Glasses will start at $379, pricier than Meta Ray-Ban. The strategy is clear: avoid competing on price and instead offer deeper Galaxy ecosystem integration to retain existing Samsung users. The glasses haven't arrived, but the game has already begun.

Huawei has made the first move, Apple has appointed a hardware-centric new CEO, and Meta is vigorously defending its market share.

Samsung, meanwhile, has laid its groundwork through One UI 8.5's underlying restructuring, Galaxy S26's ecosystem positioning, and its deep Android XR platform integration with Google—all forming the stage for the Galaxy Glasses.

The glasses haven't launched, but the chessboard is set.
