Is Android 17 Underdeveloped? Android 17 Beta First Impressions: None of Google's Touted AI Features Have Come to Fruition

05/14 2026

Just recently, Google outlined a grand plan for Android's future.

Held a week prior to I/O, Google's Android Showcase was quite a spectacle. For a more comprehensive introduction, refer to the initial report by Leitech, titled "Android 17 Update: Google Speeds Up AI Integration on Smartphones and Launches a Premium PC." We won't delve deeper into that here.

However, it's crucial to note that the major updates announced by Google don't solely pertain to Android 17. They encompass at least three distinct layers:

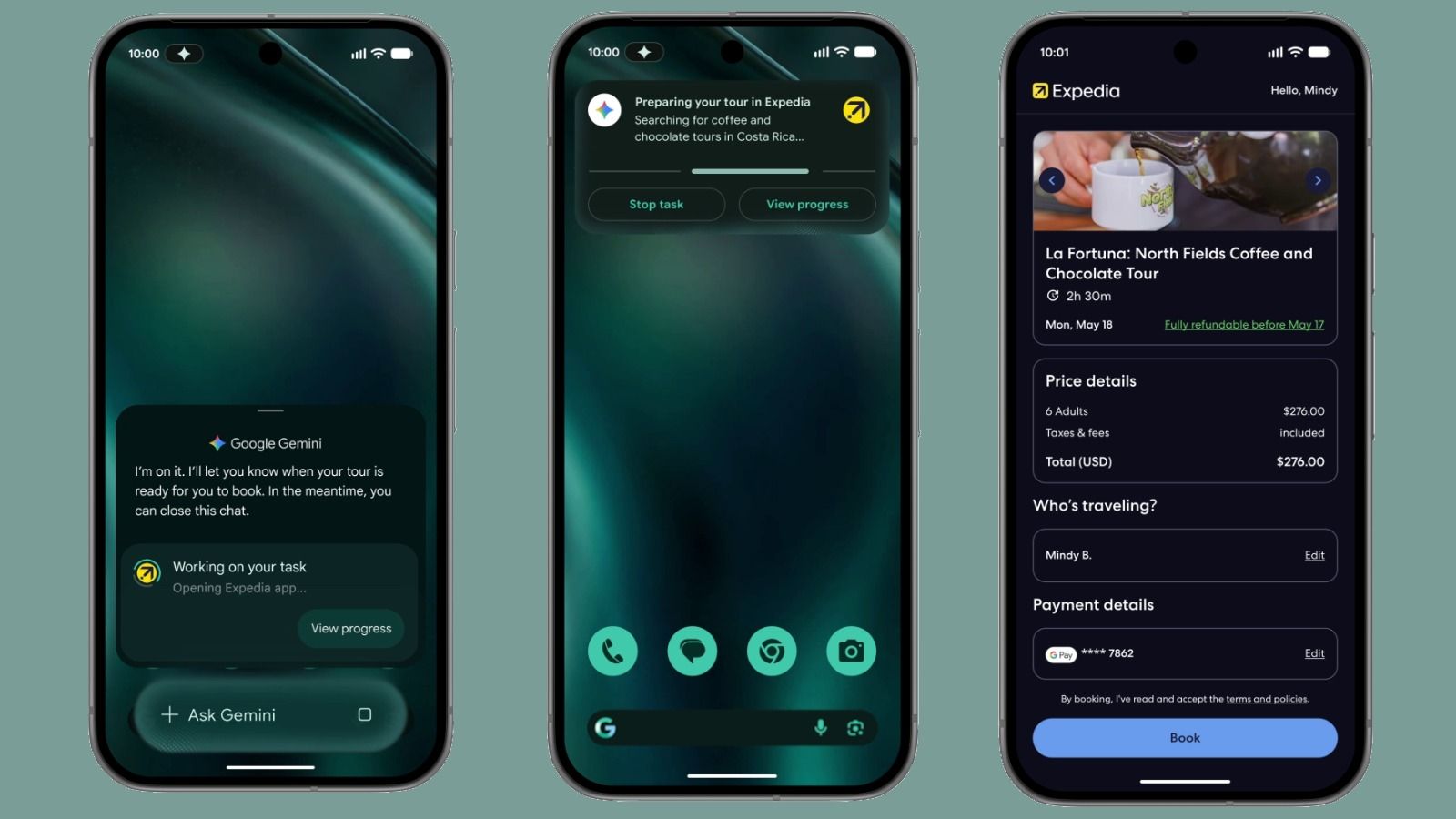

The first layer involves system updates for Android 17 itself, including enhancements to the user interface, notifications, security, windows, multitasking, and other core experiences, along with updates to the underlying framework. The second layer introduces the new AI feature set, Gemini Intelligence, which is tasked with contextual understanding, cross-application collaboration, and proactive task completion. The third layer pertains to the cross-device Agent ecosystem, exemplified by features like Agentic Browsing with Gemini in Chrome.

Image Source: Google

Naturally, Android 17 is breaking free from the confines of smartphones and will be available in various forms, including Googlebook, Google AI glasses, and XR headsets.

Over the past few years, people have wondered why Google, with flagship products like Android, Chrome, Gmail, Maps, Photos, YouTube, and Search, seems to lag in bringing AI to hardware products. Now, the answer is becoming clearer. Google's true ambition is to make Gemini an intelligent layer that spans systems, applications, and devices.

The question remains: How far along is Google's grand vision?

A Glimpse at Android 17 Beta: Much Remains Unfinished

Following the event, I upgraded my Pixel 8 to Android 17 QPR1 Beta 2. The experience is somewhat nuanced. Numerous changes are evident in the system layer of Android 17, and some features are indeed becoming available, but the most groundbreaking and forward-looking aspects of the Android Showcase, Gemini Intelligence and cross-device Agent capabilities, are mostly still in the planning, backend coding, hidden settings, or server-side deployment phases.

The most noticeable changes are in the system interface.

Google continues to refine the Material 3 Expressive design language. Compared to the more subdued and engineering-focused interface styles of previous Android iterations, this version emphasizes dynamism, hierarchy, and emotional resonance. The notification shade, quick settings, volume panel, power menu, and lock screen animations now feature more translucency, blurring, and elastic transitions.

Image Source: Leitech

This may not immediately make every user feel that the system is "brand new," but the system as a whole is clearly moving in a softer, more spatially aware direction.

The most apparent change is the Blur effect. Previously, Android's visual hierarchy was relatively straightforward—panels were distinct, notifications were clear, and system controls had defined boundaries. However, this could also make the interface feel rigid. With Android 17 QPR1 Beta 2, many system panels now feature a frosted glass-like background treatment. The quick settings and volume panels no longer feel like rigid overlays but instead have a subtle connection to the content beneath them.

The Android system has rarely undergone such a large-scale unified adjustment to its visual system over the years. Especially on Pixel devices, Google has been somewhat contradictory: on one hand, it wants Pixel to be the aesthetic benchmark for Android, but on the other hand, it can't fully control all Android devices like Apple can.

However, this transformation is not yet complete. Some pages have clearly been updated, while others still retain the previous generation's style.

In addition to the UI, some functional changes are also evident in QPR1 Beta 2. For instance, App Bubbles are beginning to take shape as a system-wide bubble window. Long-pressing an app brings up an entry point for opening it as a bubble, and any app's shortcut can also be converted into a bubble. In essence, Google is turning a design previously limited to messaging into a standard capability for all apps and extending it to broader multitasking scenarios.

Image Source: Leitech

This direction is quite logical. As smartphone screens enlarge, and foldables, tablets, and desktop modes push Android away from the traditional single-window logic, apps no longer have to open solely in full-screen mode but can instead float, be temporarily stored, and be accessed at any time in a more lightweight manner. Moreover, on desktop Android, there is theoretically even more room for development.

However, App Bubbles are far from mature at this stage. The UI design needs optimization, and the experience is unstable. We may need to wait for the official release in June to judge the feature properly.

Desktop Mode is in a similar state. Google has been promoting desktop mode for several years, and Android 17 shows continued progress in this area. The direction is clear: smartphones, tablets, external displays, and new PC form factors may all share a more flexible Android computing foundation in the future.

Especially when considering the newly announced Googlebook, Desktop Mode is not just a minor feature for connecting smartphones to external displays but also a part of Google's "roadmap" for Android PCs.

Android interface on Googlebook. Image Source: Google

However, in the current QPR1 Beta 2, it still feels more like a developer preview. Window management, app adaptation, input/output, and cross-screen continuity still have a long way to go. It is no longer just the experimental project it was a few years ago, but it is not yet at a stage where it can compete head-on with iPadOS, Windows, or macOS.

Live Updates are in a similar situation. Google clearly aims to extract continuous statuses like ride-hailing, food delivery, navigation, and timers from the notification shade and turn them into clearer, real-time system-level information. This is very similar to Apple's Live Activities, with the core goal of allowing users to track task progress without repeatedly opening apps.

Android 17 already has some prototypes, but the completeness and consistency are still lacking. It requires app ecosystem cooperation and stronger system-level guidelines from Google.

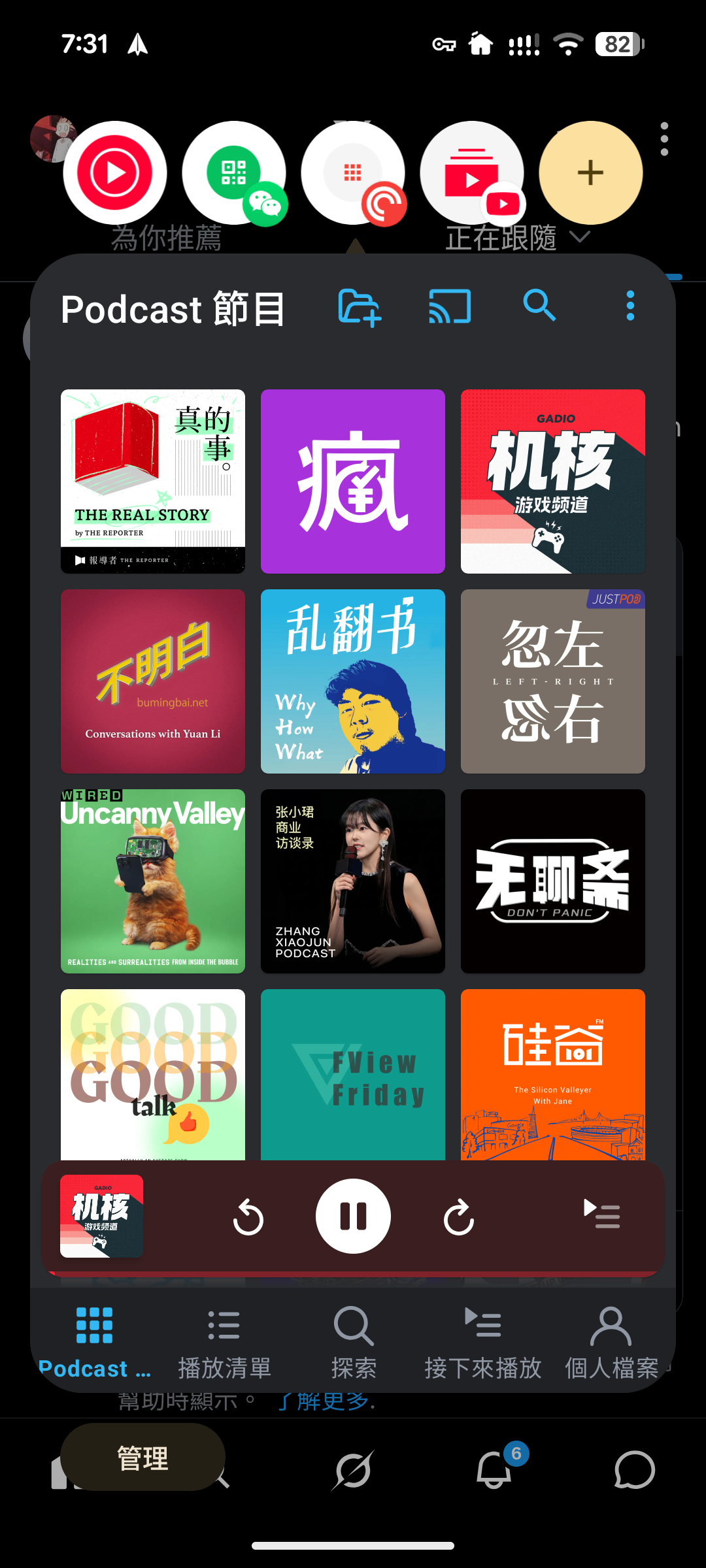

There are also some features that we can only glimpse, such as a native App Lock, more Pixel Launcher customization options, options to remove the fixed search bar and At a Glance, Action Corners, Universal Cursor, a new Contact Picker, and multi-device Handoff.

Image Source: Leitech

Some of these appear in code, strings, or hidden flags, while others can be traced through developer tools, but users can't really use them yet.

Standing on Apple's Shoulders: What Are We Expecting from Gemini Intelligence?

Google has integrated many elements into the system's foundation, but most of them haven't been officially activated yet. For us, these are mostly clues that help us judge where Google wants to take Android, but we can't truly incorporate them into our daily experience yet.

The biggest gap, however, lies in the most attractive part of the Android Showcase: Gemini Intelligence.

Image Source: Google

At the event, Google presented a future closer to reality than Apple Intelligence. Gemini is no longer just an app that you actively open but an intelligent layer that understands your current screen, apps, emails, calendar, photos, web pages, and device status. It can perform tasks across apps, automatically organize information, proactively suggest next steps based on context, conduct Agentic Browsing in Chrome, and continuously understand the real world through cameras and voice on future XR devices.

Additionally, Android 17 is planned to let Gemini freely generate widgets, offering users "widget freedom" that could narrow the gap with iOS's widget ecosystem and perhaps even surpass it.

Google has a strong hand. Android is the world's largest mobile operating system, Chrome is one of the world's largest browsers, and Gmail, Maps, Photos, YouTube, and Drive cover the digital lives of a vast number of users. Theoretically, Google is better suited than any AI company to create an Agent that "understands you, can operate, and can collaborate across devices."

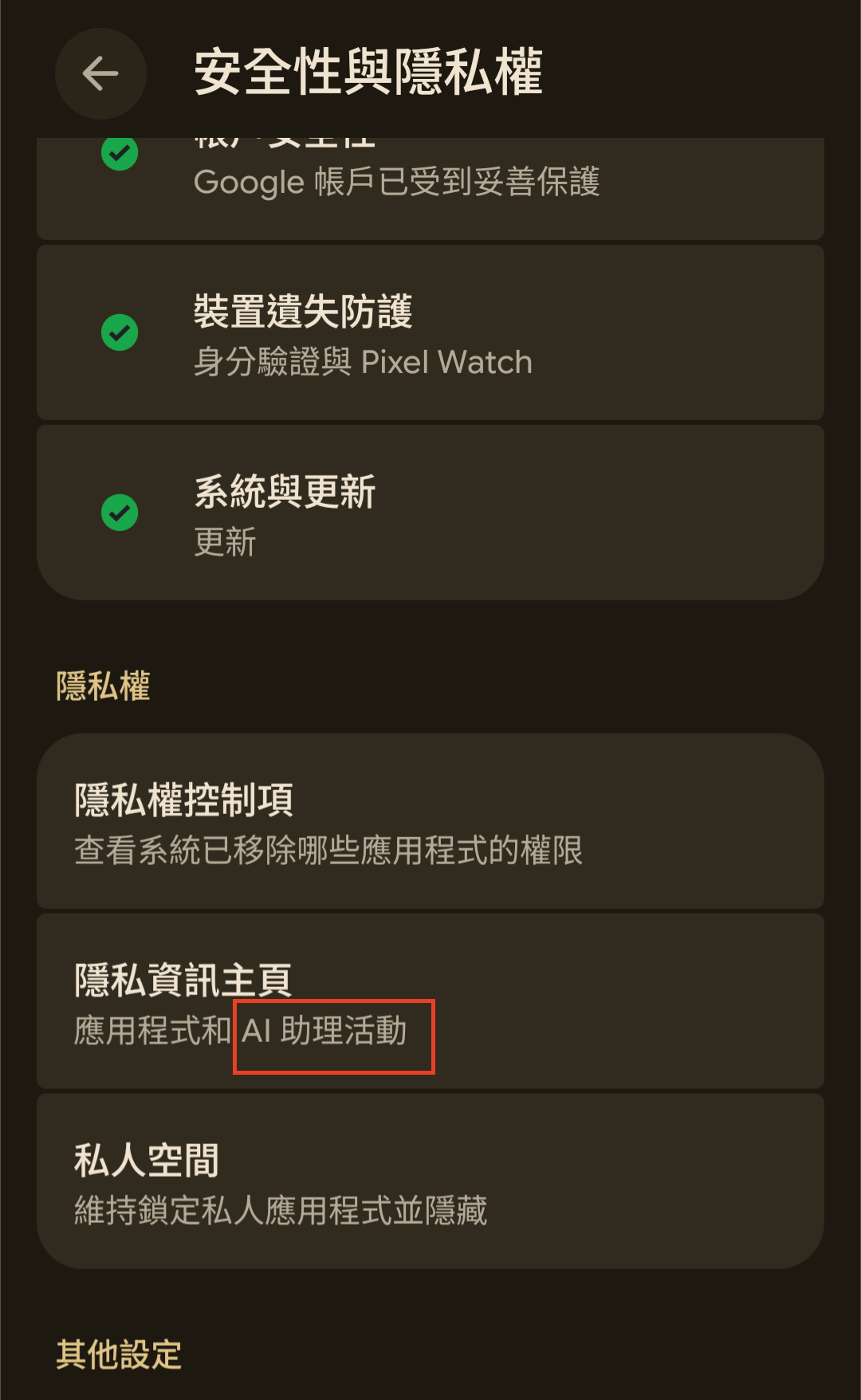

However, on Android 17 QPR1 Beta 2, these most critical AI Agent capabilities are still largely unimplemented. What we can see now are mostly interfaces and infrastructure. For example, the system permissions page now includes privacy management for AI assistant-related activities, and some services and system components seem to be preparing for deeper AI integration.

Image Source: Leitech

The boundaries between Google Play Services, the Gemini App, and the system are also becoming more blurred. However, this is still far from the intelligent agent presented at the event that can proactively complete complex tasks for users.

This is not surprising.

Gemini Intelligence is not just a single feature of Android 17 but a capability layer composed of the system, models, the cloud, Google services, and device manufacturers. Even next month's official release of Android 17 may not include a complete upgrade. Additionally, according to Google's current statements, Gemini Intelligence will initially be limited to newer Pixel and Samsung Galaxy devices.

In Conclusion

To summarize, we can now see that Google is refining the system visuals of Android 17, rebuilding multitasking logic, laying the groundwork for AI assistant permissions, and preparing to embed Gemini Intelligence more deeply into Android. This is the next-generation Android that Google truly envisions.

But don't get too excited just yet. It has been confirmed that the official version of Android 17 will be released in June, at which point Agentic Browsing with Gemini in Chrome will also be available, and we may see the official rollout of Gemini Intelligence. Perhaps we will have a different perspective by then.

Let's just hope it doesn't repeat the delays and half-baked launches of Apple Intelligence.

Source: Leitech
