Autonomous Driving: Achieving Hardware-Software Synergy, Li Auto’s Innovative Approach

![]() 03/06 2026

03/06 2026

![]() 439

439

In July 2016, Elon Musk ended Tesla’s collaboration with Mobileye, a provider of intelligent driving chips.

The split was rooted in a fundamental disagreement over the concept of a full-stack closed loop in autonomous driving technology. At the time, Tesla aimed to achieve full control over data and algorithms, but Mobileye consistently declined to provide full access. When negotiations failed, Musk decided to pursue a hardware-software integration strategy. In subsequent earnings calls, Musk reflected that this bold move—to “build our own chips”—enabled Tesla to establish an unparalleled competitive edge in autonomous driving.

Today, Chinese autonomous driving companies have also entered the hardware-software integration phase.

Standing at the crossroads of 2026, significant progress has been made in self-developed chips by numerous autonomous driving companies. Among them, NIO’s Shenji chip and XPeng’s Turing chip are already deployed in vehicles, while Li Auto’s Mach 100 chip is set to debut with the all-new Li L9.

However, a common challenge in the industry is the exorbitant development costs of self-developed chips and the difficulty of software adaptation. Chip tape-outs can cost billions of dollars each time, requiring algorithm teams to spend months on repeated adaptation and optimization. A slight miscalculation can lead to a situation where “the chip’s computational power is fully utilized, but actual efficiency falls short.”

If self-developed chips represent an inevitable trend in the autonomous driving industry, how can the pain points of high costs and hardware-software compatibility be addressed? Recently, Li Auto unveiled a research breakthrough that provides theoretical support for the integration of intelligent driving hardware and software.

Over the past few years, a key focus in autonomous driving has been the race for computational power. Consumers focus on hardware parameters, and automakers compete on TOPS (Tera Operations Per Second), seemingly believing that greater computational power equates to stronger intelligent driving capabilities. Throughout this development, we have witnessed intelligent driving chips evolve from NVIDIA Orin’s 254 TOPS to Thor’s 1000 TOPS, and then to even higher computational power in domestically self-developed chips, with data continuously being refreshed.

But is intelligent driving fully applicable to the Scaling Law?

Not entirely. For instance, as the industry enters the VLA (Vision-Language-Action) model era, autonomous driving faces unprecedented challenges. On one hand, as a logically self-consistent technical architecture, VLA requires higher cognitive intelligence to unleash its full potential. It must “understand scenes, interpret intentions, and make decisions” like a human driver. On the other hand, automotive intelligent driving differs significantly from cloud-based large models. In-vehicle chips are constrained by power consumption, heat dissipation, cost, real-time performance, and safety redundancy, making it impossible to blindly stack parameters and computational power. The result is that models become increasingly intelligent, but chips struggle to keep pace.

Li Auto’s proposed “Hardware-Software Synergy Design Law for On-Device Large Language Models” identifies the key to breaking this deadlock.

In this study, Li Auto addressed two core questions. First, a chip’s peak performance does not equal its actual system efficiency; effective computational power matters more. Second, through mathematical means, a quantifiable, predictable, and implementable mathematical framework can be constructed, turning “algorithm-defined chips” from mere talk into reality.

In summary, intelligent driving software and hardware can find the optimal solution for a given scenario. Simultaneously, compatible hardware and software can be discovered through collaborative design.

Based on this research, Li Auto plans to deploy its self-developed Mach 100 chip in the all-new Li L9, pushing the boundaries of automotive intelligence.

So, what exactly does Li Auto’s hardware-software synergy design law entail? What industry pain points does it aim to address? Let’s examine this research together.

Algorithms and Chips Need to ‘Grow Together’ Through Collaboration

Over the past few years, NVIDIA’s computing platform has been the de facto standard for high-end automotive intelligent driving. However, as intelligent driving technology advances, NVIDIA faces increasing competition. Among automakers, Li Auto, XPeng, NIO, and others have chosen to self-develop chips. On the chip manufacturer side, AMD and Qualcomm have also joined the “battlefield” in recent years, collectively vying for a share of NVIDIA’s “pie.”

Why are automakers choosing to switch computing platforms? Behind this transformation, autonomous driving technology has encountered two significant barriers.

The first barrier is the rapid evolution of large models versus the relatively slow iteration of chips, causing hardware iteration to struggle to keep pace. With VLA gradually becoming the mainstream technical paradigm, the parameter scale, training data, and capability boundaries of intelligent driving models are refreshed almost every few months. In contrast, automotive-grade chips typically require 3-5 years from design to tape-out, verification, and vehicle integration. For these new model demands, many new computing platforms now emphasize native support for MoE (Mixture of Experts) sparse computing, provide ultra-large KV cache capacities, or enable dynamic resource scheduling. These signs indicate that traditionally “accepted” computing platforms are increasingly unable to meet the performance demands of the VLA era.

The second barrier is the realization within the autonomous driving industry that general-purpose computing platforms cannot fully unlock a model’s capability potential. Intelligent driving models require specific performance parameters from chips, which general-purpose computing platforms struggle to provide. For example, when making decisions, intelligent driving models require extensive MoE invocation capabilities, but general-purpose computing platforms lack native support for sparse computing and quantization. Ensuring driving safety requires low-latency feedback, but general-purpose computing platforms may “block tasks,” failing to guarantee stable output. As a result, algorithm adaptation often ends up “fitting the model to the platform,” sacrificing either model accuracy or real-time responsiveness, or increasing redundant chips and driving up costs.

To address these two challenges, Li Auto argues in this paper that hardware-software collaborative design is the key to breaking the deadlock.

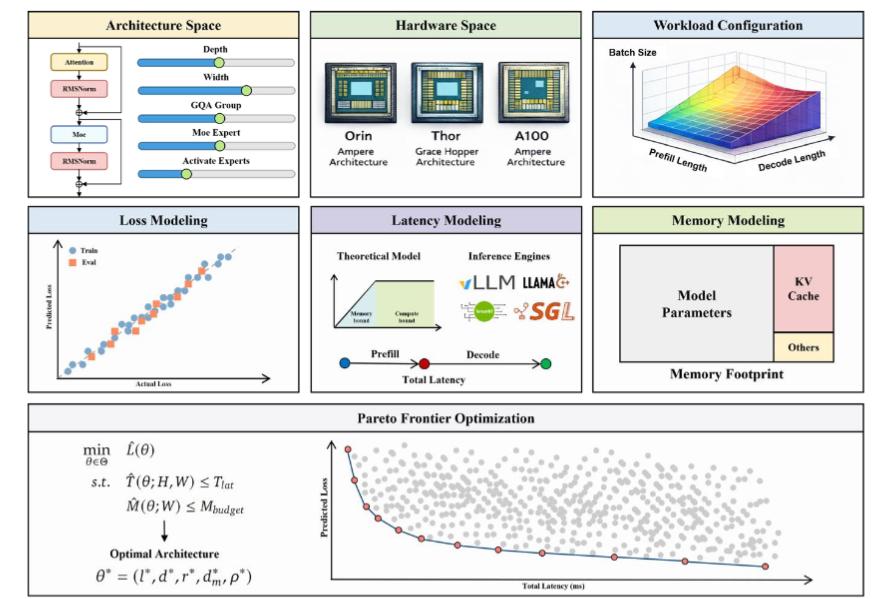

Specifically, Li Auto employs two core mathematical methods to achieve this synergy.

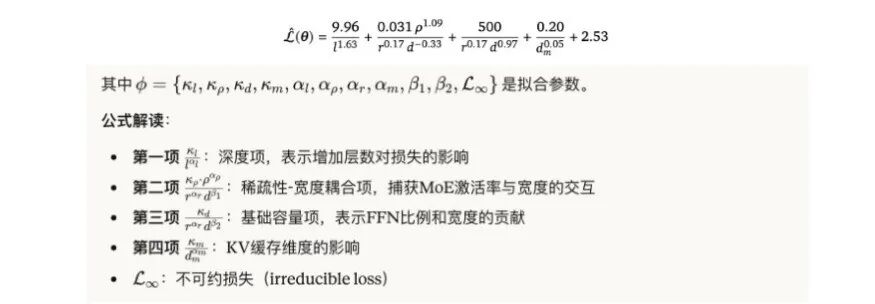

The first is the vehicle application of the loss function expansion law, using low-cost methods to “calculate” the upper limit of a model’s capabilities. This is a relatively common process in industry-scale large model research. The basic principle is that large models inherently have an “error rate,” with smaller models exhibiting higher error rates, but the growth curve of this error rate is predictable. This means that given a model’s hyperparameters (parameter count, layer count, FFN multiplier, etc.), its final accuracy can be predicted directly without complete training.

In simple terms, running a small model a few times can estimate “how intelligent a large model can potentially become,” thereby saving on exorbitant GPU electricity costs and time.

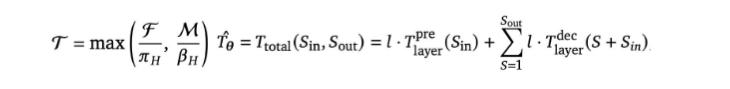

The second method is a vehicle-based innovation in Roofline performance modeling, “calculating” the key hardware parameters required by the model. Roofline was originally a visualization framework for performance analysis in HPC (High-Performance Computing), used to quantitatively assess bottlenecks in application processors. Li Auto has extended it for vehicle scenarios, adding unique demands of large models—such as KV cache (key information caching), MoE routing (mechanisms for assigning expert model operations), and attention mechanisms—beyond traditional considerations of computational and memory bandwidth balance. This calculates the model’s impact on the intelligent driving computing platform.

In simple terms, it “calculates” the level of intelligence the computing platform can support in a model.

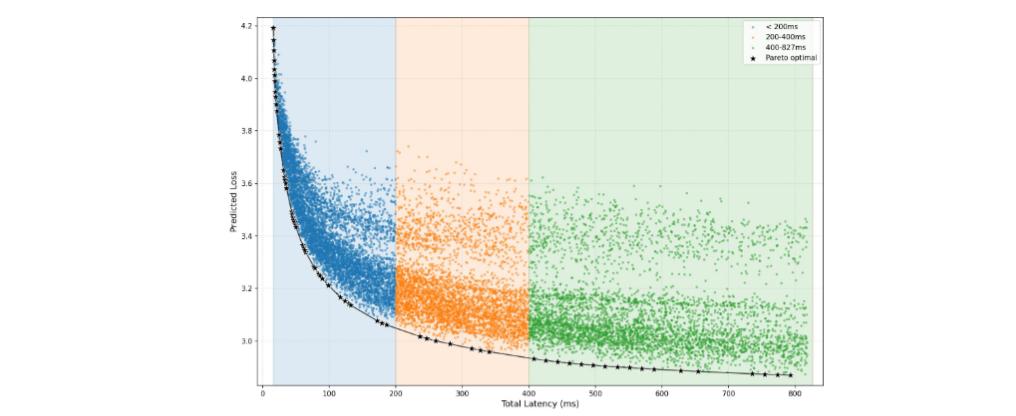

Building on these two approaches, the PLAS (Pareto-Optimal LLM Architecture Search) framework was born, enabling collaborative design. By inputting the chip’s computational power, bandwidth, cache hierarchy, and engineering constraints (e.g., latency <100ms, power consumption, memory), the framework automatically generates the optimal model architecture—finding the “boundary of highest accuracy and lowest latency on current hardware.” In simple terms, it simultaneously identifies the common optimal solution for algorithm capabilities and chip design.

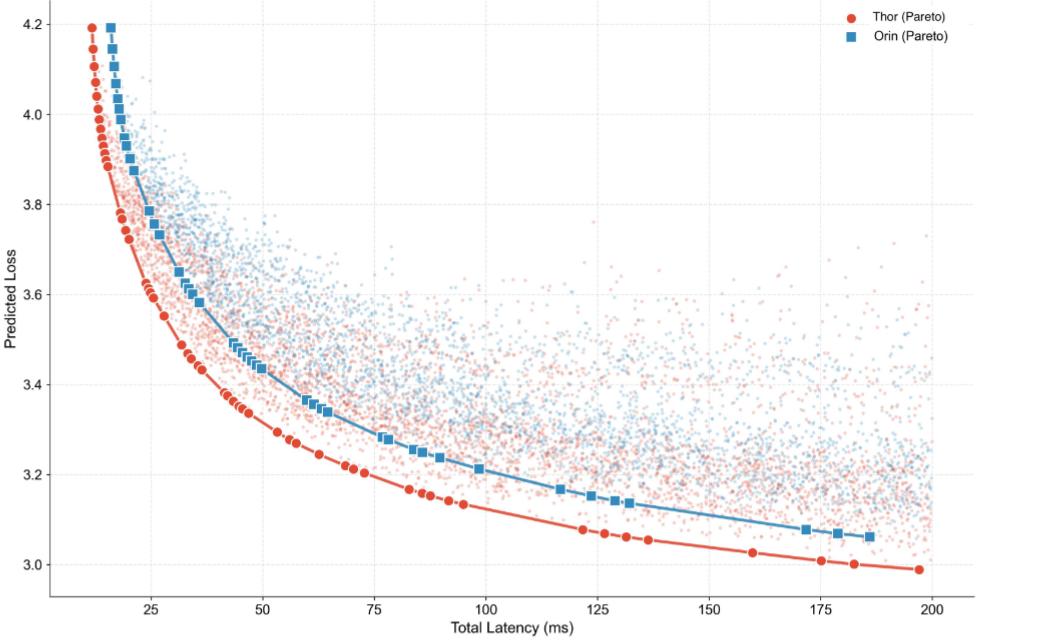

Additionally, Li Auto presented the Pareto-optimal frontiers on different hardware platforms (Jetson Orin/Thor), verifying the cross-hardware generalizability of the “Hardware Synergy Design Scaling Law” and identifying the performance limits of NVIDIA’s computing platforms.

The greatest value of this design paradigm is that it renders the industry’s previous fragmented processes—“designing chips first, then adapting algorithms” or “developing algorithms first, then finding chips”—obsolete.

“Originally, the Orin chip didn’t support running language models. But since NVIDIA didn’t have time, we wrote our own low-level inference engine,” said Li Auto founder and CEO Li Xiang in an interview.

Traditionally, chip engineers relentlessly pursued higher computational power while algorithm engineers strived for more intelligent models, only to discover “incompatibility” during integration, resulting in significant resource waste. Collaborative design aims to break down these silos, enabling chips and algorithms to work closely from the outset, allowing them to “grow together” through collaboration.

For players in the autonomous driving industry preparing to pursue hardware-software integration, Li Auto’s research undoubtedly provides the industry with a replicable key.

There Are No Universal Chips, Only Scenario-Optimized Chips

The mathematical calculations behind Li Auto’s collaborative design are not overly complex. However, in the AI era, the value of a good question far surpasses countless superficial pieces of information.

Why did Li Auto conduct research on collaborative design? Because it encountered the challenges of implementing autonomous driving technology early on.

“Deploying VLM on in-vehicle chips presents significant challenges, especially on mainstream Orin-X chips, which were not designed with large model applications in mind. Thus, we had to overcome numerous engineering hurdles during deployment,”

as stated by Zhan Kun, head of Li Auto’s foundational models, in 2024. As early as the era of NVIDIA Orin chips for high-end intelligent driving, Li Auto deeply experienced the pain of “hardware-software fragmentation.” To be fair, NVIDIA’s computing platforms did offer robust theoretical computational power, but when deploying large language models in practice, Li Auto’s technical teams frequently encountered the dilemma of “chip peak performance ≠ actual system efficiency.”

Meticulously designed model architectures often failed to fully leverage hardware characteristics, while compromises made for hardware adaptation could impair model intelligence. It was akin to an exquisite statue only being displayable in a damaged state. This sense of fragmentation motivated Li Auto to seek a fundamental solution.

The approach involved improving model performance while attempting to find a balance between model deployment time, hardware, and application costs. Specific goals included: reducing the model design and selection cycle from months to one week; eliminating the need to blindly use more expensive chips while still delivering a superior intelligent experience to users; and quickly selecting the most suitable model configuration based on application scenarios, thereby shortening the overall development cycle.

Based on this research, Li Auto distilled these goals into six core conclusions. Each directly addresses the pain points of deploying large in-vehicle models and elevates the necessity of self-developed chips to an imperative level.

First, sparse computing will become standard for in-vehicle AI. Under typical vehicle batch processing sizes of 1, MoE sparse architectures dominate efficiency frontiers. This means future in-vehicle chips will require native support for sparse computing and dynamic routing, rather than simply providing dense matrix multiplication capabilities. In short, the development direction of in-vehicle AI models differs from cloud-based “large and comprehensive” approaches; computing platforms need native support for “specialized and refined” architectures.

Second, memory subsystem design matters more than peak computational power. The paper points out that the optimal architecture form indicates that memory bandwidth and cache efficiency often determine actual system performance more than theoretical TOPS. This means chip memory hierarchy design must adapt to demand, such as reserving sufficient high-speed cache space specifically for KV cache and attention mechanisms.

Third, optimize the microarchitecture with phase awareness. During model operation, the Prefill and Decode phases have significantly different demands for hardware resources. The Prefill phase requires a large number of parallel computing units, while the Decode phase demands high memory bandwidth and space, which can leave computing power idle. In conventional GPU designs, these computing processes are typically fixed. However, automotive intelligent driving requires a balance between real-time performance and determinism. This necessitates the development of new chips capable of supporting dynamic microarchitecture reconstruction or resource allocation to ensure stable computation in both phases.

Fourth, break away from the fixed 4x FFN (Feed-Forward Network) pattern. Traditional Transformer architectures typically default to a 4x FFN expansion ratio, acting like a magnifying glass that enlarges dimensions by 4 times regardless of input complexity, and then compresses them back. However, in vehicle scenarios with relatively limited computing resources, 'running at full throttle' is equivalent to 'exploding fuel consumption.' This means that chip matrix multiplication units and activation function units require more flexible ratios to adapt to the actual load distribution of VLA models.

Fifth, quantization acceleration requires native hardware support. To meet the real-time, safety, and power consumption requirements of intelligent driving outputs, theoretically, quantizing intelligent driving models from FP16 or BF16 weights to INT8 should yield a 2-fold acceleration factor. However, Li Auto's actual tests showed only a 1.3 to 1.6-fold acceleration on conventional platforms due to resource consumption from nonlinear operators and precision conversions during the process. Thus, next-generation chips need native support for mixed-precision computing and operator fusion at the instruction set and computational unit levels.

Sixth, there are no universal chips, only scenario-optimized chips. Synthesizing the above conclusions, maximizing model capabilities requires reconfiguring hardware computational architectures, fundamentally proving the necessity of 'algorithm-defined chips.' Only by deeply understanding the demands of upper-layer algorithms can the most efficient specialized computational architectures be designed.

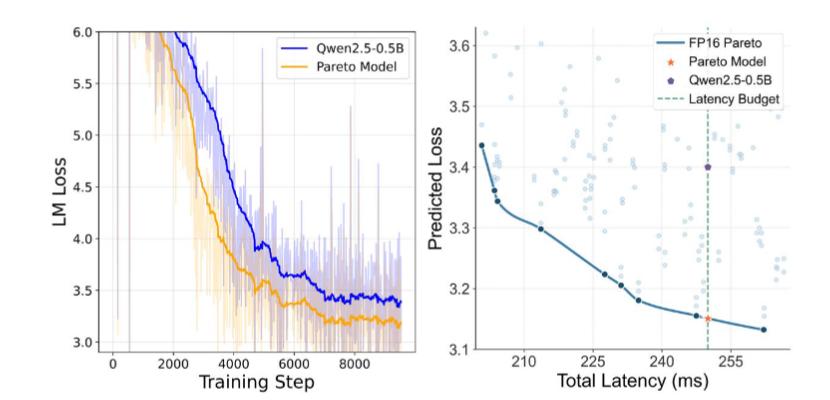

These findings are not merely theoretical exercises. To validate the collaborative design law, Li Auto conducted rigorous comparative tests on NVIDIA Jetson Orin/Thor platforms. The results showed that models optimized using the collaborative design law achieved a 19.42% accuracy improvement while maintaining identical latency to Qwen2.5-0.5B. This directly proves that hardware-software collaborative design can achieve 'equivalent hardware, superior performance,' immediately delivering quantifiable engineering benefits.

For product development, this discovery directly led to Li Auto's self-developed Mach 100 chip. As the first model to be equipped with the Mach 100, the all-new Li L9 was even touted by Li Xiang on Weibo as having 3 times the effective computing power of NVIDIA's Thor-U chip, making it the world's most powerful intelligent driving brain.

Having a self-developed chip not only signifies Li Auto's transition from "passive chip adaptation" to the stage of "algorithm-defined chips," but also provides Chinese autonomous driving companies with a ready-to-use theoretical framework in the VLA era.

Li Xiang's AI Engineering Methodology

The integration of hardware and software, along with collaborative development, has long been a mandatory course for every AI giant worldwide.

In 2013, Jeff Dean, then head of Google Brain, casually performed a calculation on a napkin. The results showed that to support users' use of speech recognition models, Google would need to double its data center cluster. These simple numbers sent chills down the spines of all the executives present.

To avert this crisis, Google swiftly initiated the TPU development project. The hardware was defined based on an old paper, designing the chip to match the matrix operations required by the algorithm. Fifteen months later, Google developed the TPU, freeing itself from being "held hostage" by GPUs. Today, through Google Cloud and Gemini, Google sells TPUs worldwide.

Google's practical actions prove that only through hardware-software synergy can every bit of computing power be utilized effectively. On this path, Li Auto has also found the direction for a closed-loop, full-stack autonomous driving technology.

Remember back in 2025, leading players in intelligent driving technology were still referencing DeepSeek's techniques, using distillation methods to bring AI large models "down from the cloud." At that time, Li Auto made a series of adjustments to pre-training, post-training, and reinforcement training for its intelligent driving large model, ultimately creating the "Driver Model"—VLA—that rivals human intelligence.

"We conducted extensive research and embraced Deepseek R1 from its launch to its open-sourcing. DeepSeek's speed exceeded expectations, so the arrival of VLA was faster than anticipated," Li Xiang once summarized.

Today, after achieving hardware-software integration, the "algorithm-native model" tailored for vehicles enables intelligent driving to optimize the entire chain of perception, decision-making, planning, and control within the same mathematical framework, further reducing system latency, improving accuracy, and enhancing energy efficiency.

This transformation is essentially an evolution of AI engineering capabilities. In the past, engineers relied on experience to fine-tune and iteratively test for optimal solutions. Now, with the PLAS framework and mathematical laws, the optimal solution can be "generated with a single click."

"Whenever we want to change and enhance capabilities, the first step is always research, the second step is development, the third step is expressing those capabilities, and the fourth step is transforming them into business value," said Li Xiang.

Li Auto has put considerable effort into achieving this goal.

At the foundational research level, Li Auto's investment can be described as "extravagant." Over the past eight years, Li Auto has continuously increased its R&D investment. In 2025 alone, the company expects R&D spending to reach 12 billion yuan, with 6 billion yuan allocated to the field of artificial intelligence.

With this R&D investment, we can clearly see the growth trajectory of Li Auto's autonomous driving technology. From 2021 to November 2025, Li Auto has published nearly 50 papers in areas such as BEV (Bird's-eye-view), end-to-end models, VLM (Visual Language Model), VLA (Visual Language Action Model), reinforcement learning, world models, and AI foundation models, garnering over 2,500 citations. Among these, 32 papers were accepted by top conferences.

In foundational research, Li Auto's organizational structure has also evolved to better suit AI research. In January of this year, Li Auto took the lead in implementing a series of organizational adjustments. Among them, Zhan Kun, Li Auto's Senior Expert in Autonomous Driving Algorithms, took over the foundation model business, overseeing the overall development of Li Auto's VLA foundation models and fully integrating the relevant R&D teams. This marks Li Auto's intelligent driving entering the era of AI large models.

At the end of January, Li Xiang also made it clear internally that the company would significantly adjust the structure of its technology R&D teams, referencing the operational models of the most advanced AI companies and redefining personnel roles based on collaborative construction of silicon-based life forms. Through continuous optimization of its internal structure, Li Auto hopes to achieve deep synergy among its algorithm, chip, and OS teams, enabling research results to be rapidly transformed into mass-production capabilities.

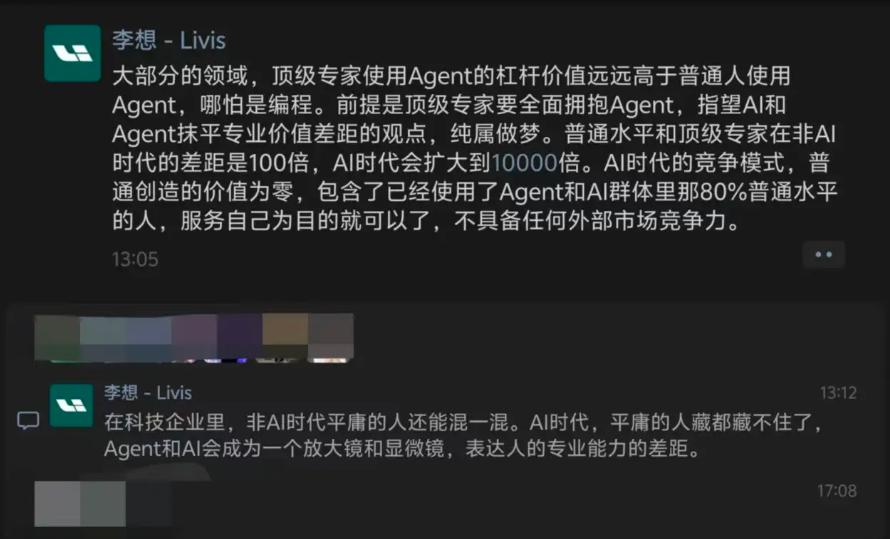

Based on his understanding of AI, Li Xiang has also become the CEO in the automotive circle who "most supports" AI development. Recently, Li Xiang explicitly remarked on WeChat Moments that learning to use Agents can amplify the gap between top experts and ordinary people.

Perhaps the most important rule in the AI era is to go ALL in on AI.

Once the global leader, Tesla's FSD (Tesla Autopilot) is gradually losing its "wow factor" as Chinese autonomous driving companies catch up with full-stack closed-loop technologies.

The law of hardware-software collaboration design (collaborative design) is just the beginning. Chinese smart car manufacturers are now defining the upper limits of automotive intelligence.