DeepRoute.ai's 40B VLA Autonomous Driving Foundation Model and Methodology

03/23 2026

As a rising star among China's ADAS and autonomous driving algorithm providers, DeepRoute has seen a significant increase in mass-production vehicle deployments over the past two years. It has secured partnerships with Great Wall Motors and Geely, and is rumored to have won business from the new energy vehicle brand Leapmotor. DeepRoute was also among the first to promote "VLA" technology and bring it to mass production.

DeepRoute is therefore a provider of both mass-production and forward-looking autonomous driving solutions. At GTC 2026, DeepRoute's CTO, Tongyi Cao, gave a talk titled *Redefining the Boundaries of Autonomous Driving with Foundation Model*, presenting the company's foundation-model-based VLA methodology and theory.

This article summarizes the core content and highlights of the talk, with added industry context.

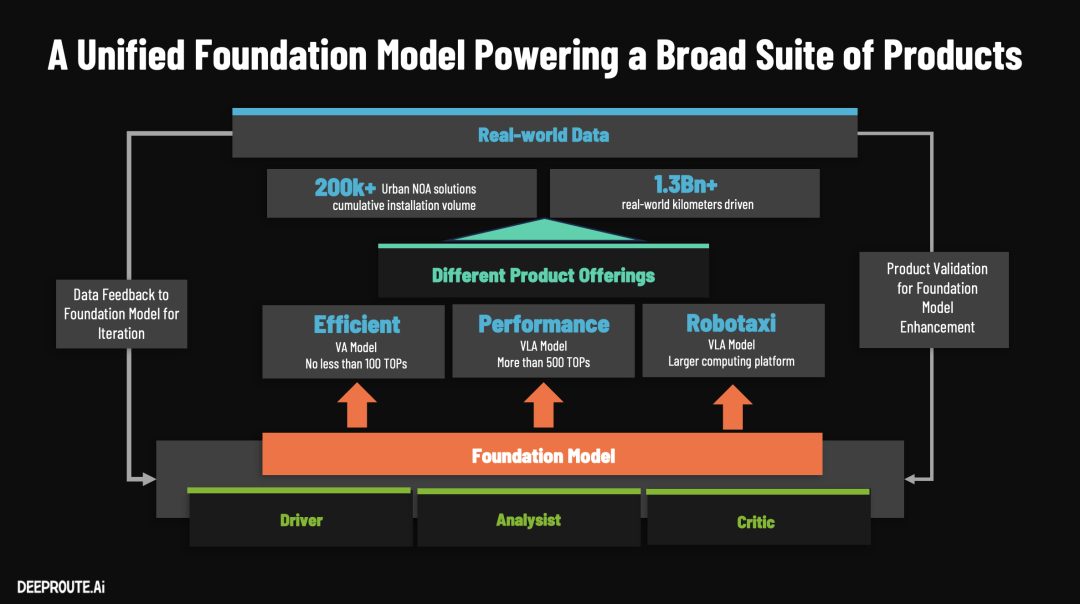

DeepRoute.ai's core approach to solving autonomous driving, and even to advancing toward Level 5 autonomy, rests on a firm belief in the "Scaling Law": growing model size and data scale together through a unified foundation model.

This also reflects the industry's current confidence in end-to-end technology, which many see as the dawn of autonomous driving. The industry's current focus is on optimizing algorithms, increasing model parameter counts, advancing compute chips, and refining engineering implementation.

Below are the technical highlights of DeepRoute's foundation model architecture and autonomous driving software methods:

I. Technical Highlights of the Foundation Model (40B VLA) Principles and Architecture

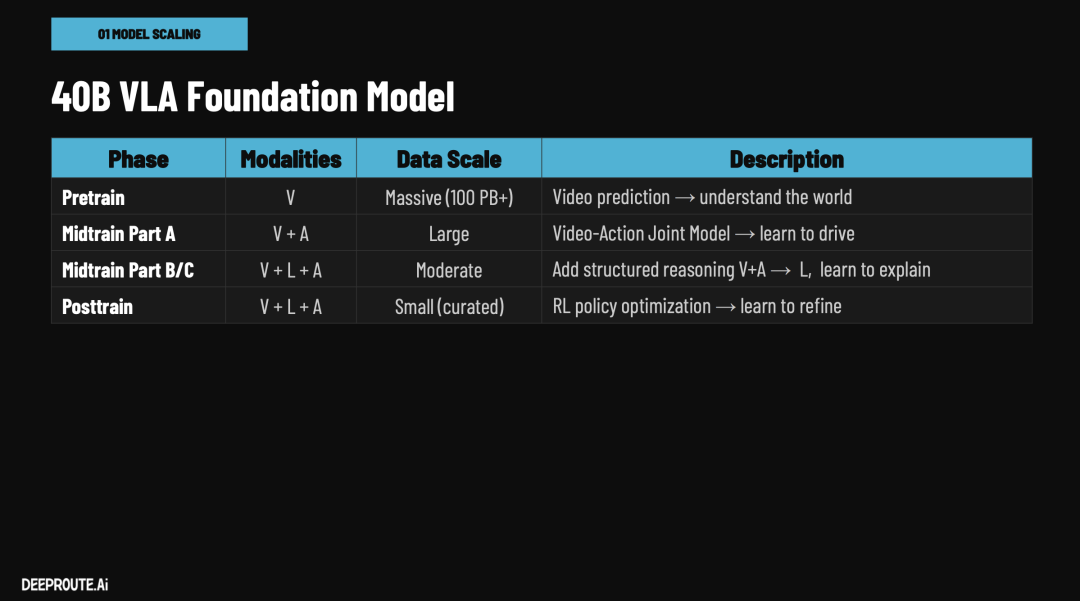

DeepRoute built a native 40B-parameter VLA (Vision-Language-Action) model trained on 100 million GB (about 100 PB) of video data. For comparison, XPENG announced at the end of last year that it had developed a 72B (72 billion)-parameter ultra-large-scale model trained on 200 million clips (approximately 10 billion GB of data).

DeepRoute stated that it has made the following underlying innovations in training mechanisms and edge deployment:

1. Architectural Innovation: The "Trinity" Model Role

This large model breaks away from the single role of being merely a "driver." It integrates three capabilities within a single model: driver, analyst, and commentator/referee. This capability reuse not only enables shared cognition and scene understanding but also improves performance on the driving task itself. In simpler terms, the model can interpret sensor input streams such as video, reason about them, and ultimately judge whether an outcome is good or bad.

2. Breakthrough in Pre-training Principles: From "Trajectory Supervision" to "Video Prediction"

Traditional end-to-end models typically rely on driving trajectories for supervised training, but this wastes most of the data: out of 1 PB of driving video, trajectory data accounts for only about 10 GB, a data utilization rate of just 0.001%. DeepRoute instead adopts video prediction as the pre-training task so that the model learns to understand the world. Every pixel in the video then serves as a supervisory signal, which brings data utilization to 100% and gives large-parameter models ultra-high-quality representations of the physical world.
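The utilization figures above can be checked with a few lines of arithmetic. The sketch below assumes decimal units (1 PB = 1,000,000 GB); the function names are illustrative, and the per-pixel mean squared error is only one plausible form of a video prediction loss, not a description of DeepRoute's actual objective.

```python
# Assumption: decimal units, so 1 PB = 1,000,000 GB.
GB_PER_PB = 1_000_000


def utilization_percent(supervised_gb: float, total_gb: float) -> float:
    """Share of the raw data that carries a supervisory signal, in percent."""
    return 100.0 * supervised_gb / total_gb


def next_frame_mse(predicted, actual):
    """Toy video prediction loss: mean squared error over every pixel
    of a flattened frame, so each pixel contributes to the loss."""
    if len(predicted) != len(actual):
        raise ValueError("frames must have the same number of pixels")
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)


total_video_gb = 1 * GB_PER_PB  # 1 PB of driving video (figure quoted in the talk)
trajectory_gb = 10              # about 10 GB of trajectory data (figure quoted in the talk)

# Trajectory supervision only touches the trajectory data.
trajectory_only = utilization_percent(trajectory_gb, total_video_gb)
# Video prediction supervises on the video itself.
video_prediction = utilization_percent(total_video_gb, total_video_gb)

print(f"trajectory supervision: {trajectory_only:.3f}%")
print(f"video prediction:       {video_prediction:.0f}%")
```

The gap between the two numbers is a factor of 100,000, which is the core of the argument for pre-training on video prediction.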

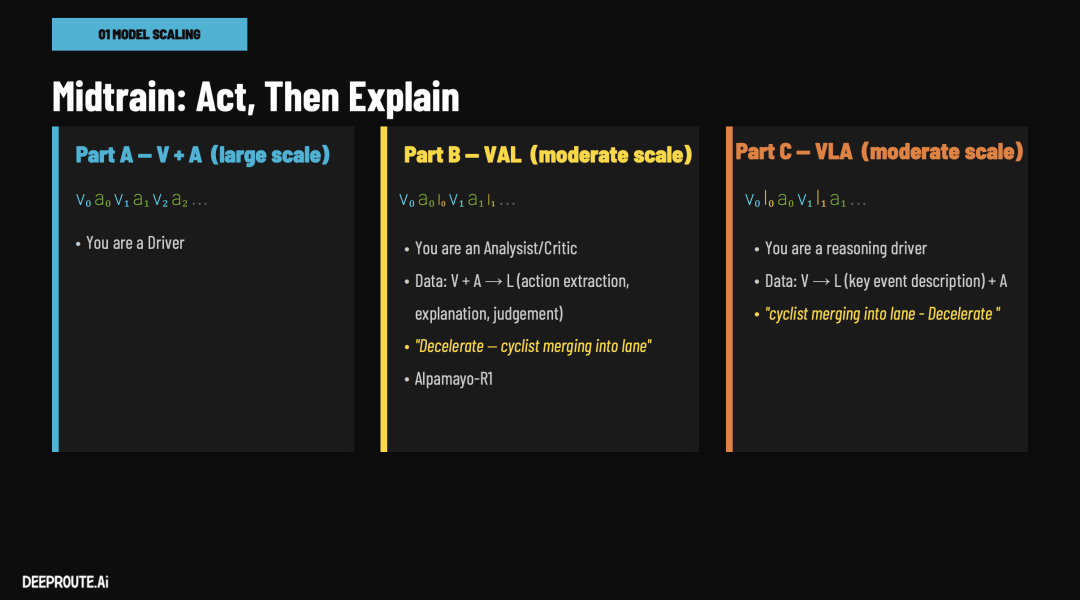

3. Cross-Modal Reasoning Integration in Mid-training

After acquiring an understanding of the world, the model undergoes joint training on three core tasks:

V+A (Vision+Action): Learning conventional driving with a typical end-to-end architecture, from visual input to action.

V+A -> L (Post-Action Explanation): Activating the analyst and referee roles, inputting visual and action sequences to output abstract descriptions of key events, causal explanations of behaviors, and evaluations of good or bad outcomes.

V -> L+A (Multimodal Logical Reasoning): Training a driver with reasoning capabilities. Given visual input, the model uses Chain-of-Thought (CoT) to first output a linguistic description of key events and its decision-making logic, and only then outputs a specific driving trajectory.
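One way to picture the three tasks is as three different input/target splits over the same clip. The sketch below is an illustration only: the `Clip` structure, the placeholder token strings, and the `build_sample` function are hypothetical and do not come from the talk.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Clip:
    """Hypothetical training clip with three token streams."""
    vision: List[str]    # placeholder visual tokens
    action: List[str]    # placeholder trajectory tokens
    language: List[str]  # placeholder explanation / reasoning tokens


def build_sample(clip: Clip, task: str) -> Tuple[List[str], List[str]]:
    """Return (model input, training target) for one of the three tasks."""
    if task == "V+A":
        # Conventional end-to-end driving: see the scene, predict the action.
        return clip.vision, clip.action
    if task == "V+A->L":
        # Post-action explanation: see scene and action, produce the analysis.
        return clip.vision + clip.action, clip.language
    if task == "V->L+A":
        # Reasoning driver: see the scene, reason in language first, then act.
        return clip.vision, clip.language + clip.action
    raise ValueError(f"unknown task: {task}")


clip = Clip(
    vision=["<img0>", "<img1>"],
    action=["<wp0>", "<wp1>"],
    language=["<cut-in ahead>", "<slow down>"],
)
for task in ("V+A", "V+A->L", "V->L+A"):
    model_input, target = build_sample(clip, task)
    print(task, "| input:", model_input, "| target:", target)
```

Note that in the V -> L+A case the language tokens come before the action tokens in the target, which mirrors the "reason first, then drive" ordering described above.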

4. Ultimate On-Vehicle Deployment Optimization and Mass-Production Distillation

According to Tongyi Cao's presentation at GTC, DeepRoute's VLA currently achieves 10-15 Hz real-time closed-loop control on the vehicle (for the importance of real-time closed-loop control, refer to our previous article *Revealing Tesla FSD's Core: The "Three Challenges" and "Unique Solutions" of End-to-End Algorithms, and Thoughts on Voice-Controlled Driving*).

DeepRoute stated that it has introduced KV Cache (which avoids recomputing historical features, a technique also adopted by Li Auto, as mentioned at GTC 2026), Multi-Token Prediction (MTP), quantization, and a customized inference engine. Together these keep single-step processing latency within 60-85 milliseconds for sequences containing 1,000 visual tokens and dozens of reasoning tokens. For reference, a 10-15 Hz control loop leaves roughly 67-100 milliseconds per cycle. The foundation model can also be flexibly "distilled" to match the compute available on the onboard chip: a pure driving VA model on 100 TOPS platforms, and a VLA model with logical reasoning capabilities on 500 TOPS platforms.
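The idea behind a KV cache can be shown with a toy single-head attention step. This is a minimal sketch under stated assumptions, not DeepRoute's inference engine: the `project` function is a made-up fixed projection standing in for learned key/value weights, the query is the raw token, and all names are illustrative.

```python
import math
from typing import List, Tuple

Vector = List[float]


def project(token: Vector) -> Tuple[Vector, Vector]:
    """Toy stand-in for learned key/value projections (an assumption)."""
    key = [2.0 * x for x in token]
    value = [x + 1.0 for x in token]
    return key, value


def attend(query: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Scaled dot-product attention of one query over all keys and values."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total for i in range(dim)]


class KVCache:
    """Keeps keys/values of past tokens so each step projects only the new token."""

    def __init__(self) -> None:
        self.keys: List[Vector] = []
        self.values: List[Vector] = []
        self.projections = 0  # number of tokens projected so far

    def step(self, token: Vector) -> Vector:
        key, value = project(token)
        self.projections += 1
        self.keys.append(key)
        self.values.append(value)
        return attend(token, self.keys, self.values)


def uncached_step(history: List[Vector]) -> Tuple[Vector, int]:
    """Recomputes keys/values for the whole history at every step."""
    pairs = [project(t) for t in history]
    keys = [k for k, _ in pairs]
    values = [v for _, v in pairs]
    return attend(history[-1], keys, values), len(history)


tokens = [[0.1, 0.2], [0.3, -0.1], [0.5, 0.4], [-0.2, 0.6]]

cache = KVCache()
cached_outputs = [cache.step(t) for t in tokens]

uncached_outputs = []
uncached_projections = 0
for i in range(len(tokens)):
    output, count = uncached_step(tokens[: i + 1])
    uncached_outputs.append(output)
    uncached_projections += count

print("projections with cache:   ", cache.projections)
print("projections without cache:", uncached_projections)
```

Both paths produce the same outputs, but the uncached path repeats the projection work for every earlier token at every step. With around 1,000 visual tokens per step, that repeated work is what the cache removes.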

II. Highlights of Autonomous Driving Software and Data Methods

At the software and data engineering level, DeepRoute has completely rebuilt its data closed loop and simulation system, addressing industry pain points such as "boring data harming model performance" and inefficient manual intervention:

1. Rapid Data Closed Loop Fully Managed by Large Models

Traditional data closed loops (problem identification, diagnosis, mining, labeling, training) rely heavily on manual work or small rule-based models, often take 5 days (over 100 hours) per cycle, and accumulate no reusable capability. DeepRoute instead uses its foundation model, drawing on its analyst and referee capabilities, to manage the entire process, including data mining, automatic diagnosis, Chain-of-Thought (CoT) labeling, and action scoring. This shortens the closed-loop cycle from 5 days to just 12 hours. More importantly, all manual reviews and machine labeling results generated along the way become new inputs for the model's mid-training, creating a flywheel effect for AI capability growth.
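The shape of such a model-managed loop can be sketched as follows. Everything here is hypothetical: `StubFoundationModel` replaces real model inference with hand-written rules, and the log fields (`hard_brake`, `cut_in`) are invented for illustration. Only the stage names and the cycle times come from the description above.

```python
from typing import Dict, List


class StubFoundationModel:
    """Hypothetical stand-in for the analyst/referee roles.
    Simple rules replace real model inference."""

    def mine(self, logs: List[Dict]) -> List[Dict]:
        # Data mining: keep only clips that look problematic.
        return [log for log in logs if log["hard_brake"]]

    def diagnose(self, log: Dict) -> str:
        # Automatic diagnosis of the likely cause.
        return "late reaction to cut-in" if log["cut_in"] else "unknown cause"

    def label_cot(self, log: Dict, diagnosis: str) -> str:
        # Chain-of-Thought style label explaining what should have happened.
        return f"Observed: {diagnosis}. Safer plan: slow down earlier."

    def score(self, log: Dict) -> float:
        # Action scoring: lower is worse.
        return 0.2 if log["hard_brake"] else 0.9


def run_closed_loop(model: StubFoundationModel, logs: List[Dict],
                    mid_training_set: List[Dict]) -> List[Dict]:
    """Mine, diagnose, label, and score; feed results back as training inputs."""
    for log in model.mine(logs):
        diagnosis = model.diagnose(log)
        mid_training_set.append({
            "clip_id": log["clip_id"],
            "diagnosis": diagnosis,
            "cot": model.label_cot(log, diagnosis),
            "score": model.score(log),
        })
    return mid_training_set


logs = [
    {"clip_id": "a", "hard_brake": True, "cut_in": True},
    {"clip_id": "b", "hard_brake": False, "cut_in": False},
]
new_samples = run_closed_loop(StubFoundationModel(), logs, [])

# Cycle time figures from the talk: 5 days versus 12 hours.
speedup = (5 * 24) / 12
print(len(new_samples), "new mid-training sample(s); cycle speedup:", speedup)
```

The point of the structure is the last step: the labeled and scored clips go back into the mid-training set, so each cycle leaves the model with more analyst and referee training data than before.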

2. Data Synthesis Technology for Breaking Through Long-Tail Scenarios

Facing rare and high-risk scenarios (Long-Tail Scenarios) that are difficult to collect in reality, DeepRoute employs advanced generative and synthetic techniques:

3D Reconstruction and Style Transfer: Using NVIDIA's 3DGUT for high-fidelity reconstruction and the Cosmos model for weather and lighting style transfer, for example turning daytime footage into rainy or nighttime variants.

DiPIR Insertable Editing: A self-developed technology by DeepRoute that seamlessly inserts generated 3D pedestrians, cyclists, or animals (such as sheep suddenly darting onto the highway) into real road videos, automatically matching lighting and shadows to systematically generate "extremely dangerous and hard-to-capture" training data in bulk.

3. Reinforcement Learning (RL) Self-Evolution in Simulated Environments

During simulation backtesting, DeepRoute's model no longer relies solely on human-defined standard answers, since humans struggle to label perfect trajectories in extreme scenarios. Instead, the foundation model "samples" multiple different driving solutions in reconstructed simulation scenarios (e.g., choosing between abrupt braking and lateral avoidance when another vehicle cuts in illegally). The model's internal "commentator" (critic) then analyzes and scores these trajectories against preset safety and comfort rules. Through continuous iteration of this closed-loop reinforcement learning (RL policy optimization), the model can output safer and more precise decisions in highly complex edge scenarios.
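The sample-then-score step can be illustrated with a small example. This is a sketch under assumptions: the candidate fields, the 2.0 m safe gap, the 0.7/0.3 weighting, and the use of group-mean-relative advantages are all illustrative choices, not DeepRoute's actual rules or optimizer.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    """One sampled driving solution in a reconstructed cut-in scenario."""
    name: str
    min_gap_m: float          # closest distance to the cutting-in vehicle
    peak_decel_mps2: float    # strongest braking along the trajectory
    peak_lat_acc_mps2: float  # strongest lateral acceleration


def critic_score(c: Candidate, safe_gap_m: float = 2.0) -> float:
    """Rule-based critic: weighted sum of a safety and a comfort term in [0, 1]."""
    safety = min(c.min_gap_m / safe_gap_m, 1.0)
    comfort = 1.0 - min((c.peak_decel_mps2 + c.peak_lat_acc_mps2) / 10.0, 1.0)
    return 0.7 * safety + 0.3 * comfort


def advantages(scores: List[float]) -> List[float]:
    """Score of each sample relative to the group mean. Positive values mark
    samples a policy update would make more likely (one common choice)."""
    mean = sum(scores) / len(scores)
    return [s - mean for s in scores]


# Fixed candidates stand in for trajectories sampled from the model.
candidates = [
    Candidate("hard_brake", min_gap_m=1.5, peak_decel_mps2=7.0, peak_lat_acc_mps2=0.5),
    Candidate("lateral_avoid", min_gap_m=2.5, peak_decel_mps2=2.0, peak_lat_acc_mps2=3.0),
    Candidate("keep_speed", min_gap_m=0.3, peak_decel_mps2=0.5, peak_lat_acc_mps2=0.0),
]

scores = [critic_score(c) for c in candidates]
advs = advantages(scores)
best = max(zip(scores, candidates), key=lambda pair: pair[0])[1]

for c, s, a in zip(candidates, scores, advs):
    print(f"{c.name:14s} score={s:.3f} advantage={a:+.3f}")
print("preferred:", best.name)
```

No human-labeled "correct" trajectory appears anywhere in this loop. The preference comes entirely from the critic's rules, which is what lets the approach cover scenarios where humans cannot easily label a perfect answer.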

The above represents the core content shared by DeepRoute.ai at GTC 2026. We welcome comments to discuss more algorithm details behind the core technologies.

References and Images

*Redefining the Boundaries of Autonomous Driving with Foundation Model* - Tongyi Cao, DeepRoute.ai

*Unauthorized reproduction or excerpting is strictly prohibited.*