Intelligent Driving Isn't an Either-Or Choice: VLA + World Model Is the Only Right Path to L4

03/24 2026

Intelligent Driving Enters the Dual-Engine Era.

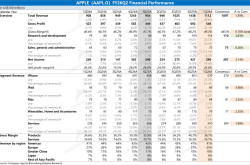

The intelligent driving industry has been abuzz lately, with various advanced intelligent driving solutions making their debuts, each more capable than the last:

Huawei ADS unveiled an 896-line dual-light-path LiDAR, taking ADS 4.0's intelligent driving capabilities to new heights;

Xpeng launched VLA 2.0, a next-generation advanced intelligent driving system, directly targeting L4-level capabilities;

Momenta will debut its R7 World Model intelligent driving on the ID.ERA 9X, achieving a leap from L2+ to L4-level intelligent driving;

Li Auto released the MindVLA-o1 VLA solution, also aiming for L4-level advanced intelligent driving;

Horizon Robotics' HSD takes an inclusive approach, being integrated into the iCAR V27 Falcon 700.

In terms of model architecture choices for intelligent driving, companies generally fall into two camps: Li Auto and Xpeng favor the VLA model architecture, while Huawei ADS and Momenta bet on the World Model architecture.

These two paths could have developed in parallel, but advocates on both sides have engaged in heated debates.

Some argue that the World Model demands high chip computing power and has weak interaction capabilities, making it less friendly for mass-market vehicle models. Others feel that VLA's physical accuracy is generally lacking, which might affect the vehicle's real-time decision-making abilities.

(Image source: Weibo livestream screenshot)

Is it truly the case, as the debate suggests, that one must choose between VLA and the World Model? How do companies address the technical pain points of advanced intelligent driving models?

VLA Mimics Human-Like Driving Logic, While the World Model Excels in Physical Simulation

Before discussing whether a choice must be made between the two intelligent driving paths, we should first understand their underlying technical differences to make an objective judgment.

Let's start with VLA, which stands for Vision, Language, and Action integration. This technical route follows a path from image perception to semantic definition, then to logical decision-making, and finally to action output. The entire driving decision-making process closely aligns with how a real person drives.

For example, when approaching an intersection without traffic lights, VLA first checks for uncertain factors such as pedestrians or non-motorized vehicles suddenly appearing. If none are present, it decides to slow down, yield where required, and proceed, following traffic rules such as yielding to pedestrians and giving priority to straight-moving traffic.

This process resembles the “look, assess, proceed” driving logic we usually follow, involving thought and reasoning to make decisions. Even in unfamiliar scenarios, the vehicle can make reasonable judgments based on logical generalization.

(Image source: Dianchetong production)

Now, let's look at the World Model, whose underlying logic is based on dynamic simulation through a physics engine, operating entirely differently.

From the process perspective, the World Model first uses LiDAR and cameras to simultaneously scan the surrounding environment, constructing a real-time road condition model around the vehicle for the intelligent driving chip. The chip then completes physical simulations and ultimately issues action decisions. The entire process resembles a highly precise “traffic simulator.”

The World Model makes deductions by training on physical rules from massive amounts of data, excelling in standardized scenarios with extremely high precision. However, it tends to struggle with decision-making rigidity when encountering non-standard scenarios outside its training database.

For instance, when it encounters pedestrians crossing the road, it doesn't prioritize yielding as a human would. Instead, it precisely calculates quantities such as the pedestrian's walking speed, the vehicle's braking distance, and the time gap between the two reaching the same point, then plans an optimal driving trajectory. As a result, it often fails to proactively yield to pedestrians.
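The kind of calculation described above can be illustrated with basic kinematics. The following is a minimal sketch, not any vendor's implementation: the class name, field names, and the 6 m/s² deceleration limit are all assumptions chosen for illustration, and a real system would work with full predicted trajectories rather than two scalar distances.

```python
from dataclasses import dataclass


@dataclass
class CrossingScenario:
    """Hypothetical snapshot of a vehicle approaching a crossing pedestrian."""
    vehicle_speed_mps: float        # current vehicle speed, m/s
    distance_to_crossing_m: float   # vehicle's distance to the conflict point
    pedestrian_speed_mps: float     # pedestrian walking speed, m/s
    pedestrian_distance_m: float    # pedestrian's distance to the conflict point
    max_decel_mps2: float = 6.0     # assumed braking limit, not a measured value


def braking_distance(speed_mps: float, decel_mps2: float) -> float:
    """Stopping distance under constant deceleration: v^2 / (2a)."""
    return speed_mps ** 2 / (2.0 * decel_mps2)


def arrival_gap_s(s: CrossingScenario) -> float:
    """Pedestrian arrival time minus vehicle arrival time at the conflict point.

    Positive means the vehicle would get there first at its current speed.
    """
    t_vehicle = s.distance_to_crossing_m / s.vehicle_speed_mps
    t_pedestrian = s.pedestrian_distance_m / s.pedestrian_speed_mps
    return t_pedestrian - t_vehicle


def can_stop_before_crossing(s: CrossingScenario) -> bool:
    """True if the vehicle can brake to a halt before the conflict point."""
    return braking_distance(s.vehicle_speed_mps, s.max_decel_mps2) <= s.distance_to_crossing_m


scenario = CrossingScenario(
    vehicle_speed_mps=10.0,        # 36 km/h
    distance_to_crossing_m=30.0,
    pedestrian_speed_mps=1.5,
    pedestrian_distance_m=6.0,
)
stop_dist = braking_distance(scenario.vehicle_speed_mps, scenario.max_decel_mps2)
gap = arrival_gap_s(scenario)
print(f"braking distance: {stop_dist:.2f} m, arrival gap: {gap:.2f} s")
```

In this example the vehicle could stop in time, but it would also reach the conflict point about one second before the pedestrian. A planner that only optimizes on these numbers might choose to pass ahead, which is the behavior the article describes as failing to yield proactively.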

VLA Excels in Urban Scenarios, While the World Model Offers Greater Precision

In practical usage scenarios, VLA's core strength is handling unknown, constantly changing road conditions, whereas the World Model is better suited to idealized, predictable road conditions.

China's urban roads present some of the richest and most complex driving environments, often featuring sudden construction zones, non-standard intersections, temporary traffic controls, and unexpectedly appearing pedestrians and electric bikes, all of which pose significant challenges to intelligent driving technology. VLA is better suited to adapt to such situations.

Relying on human-like logical reasoning, VLA can quickly handle these sudden events, proactively planning detour routes when obstructed rather than stubbornly adhering to a preset trajectory. From a scenario adaptability perspective, VLA is undoubtedly more suitable for complex urban road driving.

This is why, when showcasing VLA 2.0 technology, Xpeng didn't choose ideal testing environments like spacious new development zones or satellite cities. Instead, they drove a test vehicle equipped with VLA 2.0 directly into Guangzhou's most complex and challenging urban villages, maximizing the difficulty level. The actual test results demonstrated that VLA 2.0 intelligent driving technology could effectively handle driving in non-standard scenarios.

However, VLA technology has a pain point of generally lacking physical precision. In driving scenarios requiring high precision, its performance falls short of the World Model. This limitation stems precisely from its architectural foundation.

VLA makes judgments and decisions based on “semantic thinking.” Objects identified by cameras are converted into language tokens, and decisions are made through large model reasoning.

This operational model results in VLA outputting descriptive information like “there's a car ahead,” “the distance is a bit far,” or “the pedestrian is about to cross.” However, what the intelligent driving chip actually needs is precise quantitative feedback like “distance: 3.72 meters,” “speed: 42.5 km/h,” or “intersection in 1.2 seconds.” The information dimensionality difference directly leads to VLA's insufficient physical precision.
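The information loss described here can be shown with a toy example. This sketch only illustrates the article's argument: the function name and the distance thresholds are invented, and it does not reflect how any production VLA model actually encodes its outputs.

```python
def describe_distance(distance_m: float) -> str:
    """Map a measured distance to a coarse verbal label (hypothetical thresholds)."""
    if distance_m < 5.0:
        return "very close"
    if distance_m < 20.0:
        return "close"
    if distance_m < 50.0:
        return "a bit far"
    return "far"


# Four distinct measurements collapse into two labels.
readings_m = [3.72, 4.9, 21.0, 48.5]
labels = [describe_distance(d) for d in readings_m]

for reading, label in zip(readings_m, labels):
    print(f"{reading:>6.2f} m -> {label}")
```

Here 21.0 m and 48.5 m both become "a bit far", so a controller that receives only the label cannot tell them apart, even though the stopping margin differs by more than a factor of two.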

In contrast, physical precision is precisely the World Model's core strength. Therefore, in end-to-end advanced intelligent driving scenarios, vehicles equipped with World Model technology can easily achieve precise predictive driving from parking spot to parking spot while demonstrating superior performance in energy consumption control and driving safety management.

However, the World Model's applicable scenario range is relatively limited, far less broad than VLA's. It is more suitable for standardized roads with regular conditions and fewer variables, such as highways, closed campuses, and urban expressways.

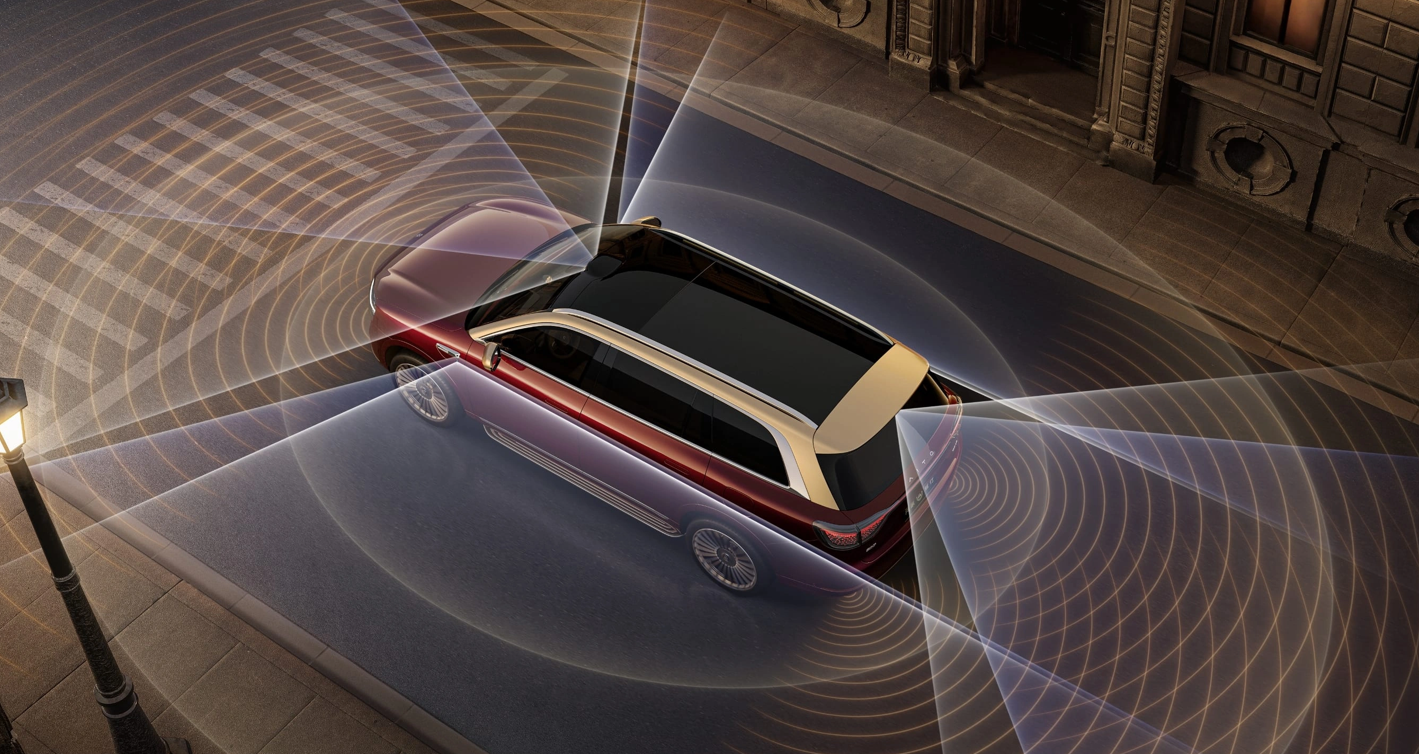

(Image source: Harmony Intelligent Driving official website)

Besides limited scenario adaptability, the World Model has two other significant disadvantages: high dependence on high-computing-power chips and weak natural language interaction capabilities.

Due to the need for large-scale real-time data simulation, the World Model consumes enormous computing power and places extremely high performance demands on intelligent driving chips. This directly results in overall high pricing for intelligent driving vehicle models based on the World Model, making them difficult to popularize.

Of course, this disadvantage is gradually being mitigated through technological upgrades.

A current example is Huawei ADS's recently released 896-line LiDAR, already used in the 200,000-yuan-range Shangjie Z7/Z7T and Aito M6 models. Through lower hardware costs and continuous optimization of computing architectures, the World Model, which originally placed extremely high demands on chips, can now be deployed reliably in mainstream price-range vehicles.

Even among end-to-end intelligent driving solutions, Horizon Robotics' HSD solution, based on the World Model, has brought advanced intelligent driving to mainstream family vehicles priced around 150,000 yuan. This breaks the entrenched perception that World Model-based intelligent driving is costly and difficult to popularize. With this cost-effective intelligent driving solution, Horizon Robotics' Journey chips have surpassed 10 million cumulative shipments and supported the launch of over 500 vehicle models, making the popularization of World Model technology possible.

On the other hand, the World Model lags behind VLA in natural language interaction capabilities. For example, when using intelligent driving, drivers might issue commands like “the car ahead is too slow, find an opportunity to overtake,” “don't follow large vehicles too closely,” or “pull over and stop ahead,” which might not receive timely responses from the World Model. It would still “drive on its own” according to the preset route, lacking flexibility.

Dual-Engine Synergy and Complementarity: The Trend Toward Achieving L4 Intelligent Driving

Since the VLA and World Model technical routes each have their strengths and weaknesses while complementing each other in scenario adaptability, can we combine their advantages to create a more comprehensive advanced intelligent driving solution?

The answer is clearly yes, and the industry has already begun deploying such integrated technical paths.

Xpeng VLA 2.0, Li Auto MindVLA-o1, and Momenta R7 Reinforcement Learning World Model are representative solutions that integrate both approaches, also known in the industry as the intelligent driving “dual-engine” mode.

Take Momenta R7 as an example. This large model introduces the World Model based on reinforcement learning, enabling AI to gradually understand the physical nature of the world, including objects' physical properties, causal relationships in motion, and potential interactions. It goes beyond simply imitating driving actions.

In this collaborative architecture, the World Model serves as the “foundational infrastructure,” relying on LiDAR and computing power support to construct high-precision physical simulation environments in the cloud. It generates massive amounts of long-tail extreme scenarios, completes physical trajectory planning and foundational data training, and establishes a precise execution foundation for intelligent driving.

VLA, on the other hand, focuses on “upper-level decision-making.” Leveraging the World Model's precise physical predictions and combining its own human-like semantic logical reasoning capabilities, it specifically handles complex road social scenarios and non-standard sudden road conditions, making decisions that better align with human driving habits.
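One way to picture this division of labor is a two-stage selection: a physical layer scores candidate maneuvers, and a decision layer picks among the ones that pass a safety check. The sketch below is an assumption-laden illustration, not the architecture of Momenta R7, Xpeng VLA 2.0, or Li Auto MindVLA-o1. `Candidate`, `physically_safe`, `choose`, and the 1.5-second gap threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Candidate:
    """A maneuver with metrics a physical prediction layer might supply (hypothetical)."""
    name: str             # maneuver label, e.g. "yield" or "pass_ahead"
    min_gap_s: float      # smallest predicted time gap to any other road user
    travel_time_s: float  # predicted time to clear the scene


def physically_safe(c: Candidate, min_gap_required_s: float = 1.5) -> bool:
    """Physical-layer filter: reject maneuvers whose predicted gap is too small."""
    return c.min_gap_s >= min_gap_required_s


def choose(candidates: List[Candidate], prefer_yielding: bool) -> Candidate:
    """Decision-layer choice among physically safe candidates.

    prefer_yielding stands in for a semantic judgment such as
    "a pedestrian is waiting to cross, so yield".
    """
    safe = [c for c in candidates if physically_safe(c)]
    if not safe:
        raise ValueError("no physically safe candidate; caller should fall back to stopping")
    if prefer_yielding:
        yielding = [c for c in safe if c.name == "yield"]
        if yielding:
            return yielding[0]
    # Without a semantic preference, pick the fastest safe maneuver.
    return min(safe, key=lambda c: c.travel_time_s)


candidates = [
    Candidate("squeeze_by", min_gap_s=0.8, travel_time_s=3.5),
    Candidate("pass_ahead", min_gap_s=2.0, travel_time_s=4.0),
    Candidate("yield", min_gap_s=5.0, travel_time_s=9.0),
]

physics_only = choose(candidates, prefer_yielding=False)
with_semantics = choose(candidates, prefer_yielding=True)
print(physics_only.name, with_semantics.name)
```

With the same physical predictions, the physics-only choice is to pass ahead, while the semantic preference selects the yielding maneuver. The unsafe option is excluded in both cases, which mirrors the idea that the decision layer builds on the physical layer rather than overriding it.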

When these two “engines” combine in actual usage scenarios, it might look like this: The driver activates end-to-end intelligent driving, and the vehicle exits the parking spot. Encountering pedestrians on the road, it yields and then proceeds. During the intelligent driving process, the driver wants to stop by the roadside to buy a bottle of water and issues a voice command for the vehicle to pull over and wait. After the driver returns, the vehicle automatically drives to the destination parking spot, completing the entire intelligent driving process without requiring driver takeover.

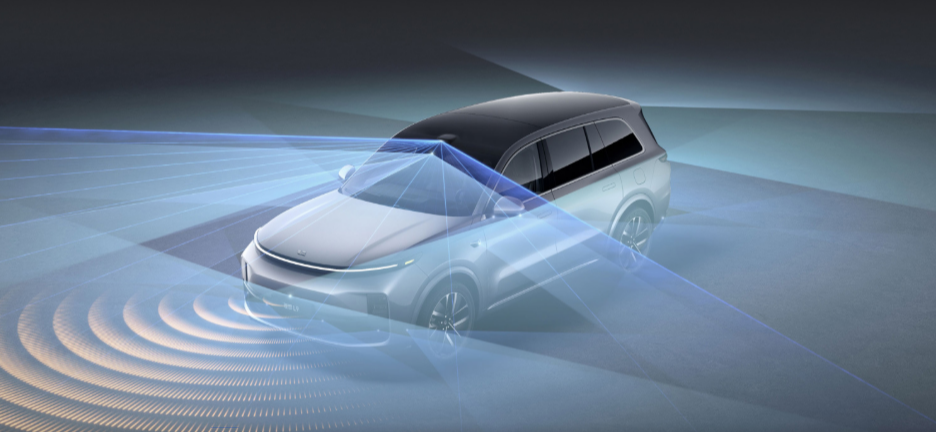

(Image source: Li Auto official website)

This integrated architecture solves the World Model's rigidity problem while compensating for VLA's limited physical precision, enabling the intelligent driving system to both precisely calculate physical trajectories and flexibly understand social rules, truly approaching human-like driving capabilities.

This represents the charm of combining VLA and the World Model, as well as the vision for L4-level advanced intelligent driving—and it may achieve even more.

(Image source: Li Auto official website)

This also confirms that the development direction of next-generation intelligent driving technology has never been about choosing between mutually exclusive paths. Instead, it involves hierarchical collaboration and refined optimization, enabling a single system to adapt to complex road conditions across all scenarios. Relying on continuously improving closed-loop data iteration, the intelligent driving system improves with use, becoming increasingly intelligent.

It's foreseeable that within the next 1-2 years, dual-engine intelligent driving solutions will inevitably become the choice of most leading automakers. The industry's competitive focus will no longer revolve around single technical route comparisons.

Moreover, some automakers and intelligent driving companies like Horizon Robotics are already focusing on this approach. It likely won't take long for advanced intelligent driving to truly integrate into daily driving, no longer being exclusive to high-priced vehicles. It will both calculate accurately and drive steadily while flexibly responding to various sudden situations, genuinely meeting ordinary users' driving needs.

(Cover image source: Dianchetong production)

Source: Leikeji

All images in this article come from the 123RF licensed image library