Can Pure Vision Autonomous Driving Systems Identify Highly Transparent Glass Walls?

![]() 02/25 2026

02/25 2026

![]() 618

618

Recently, during discussions on whether pure vision autonomous driving systems can recognize 3D images, some buddies (a colloquial term retained for cultural context; could also be translated as 'friends' or 'colleagues') raised the question of whether they can identify highly transparent glass walls. Today, Intelligent Driving Frontier will briefly delve into this topic with everyone.

Before we dive into today's discussion, I'd like to clarify that the likelihood of encountering a highly transparent glass wall in front of a vehicle under normal driving conditions is extremely low. If such a scenario does occur, it would indeed be a rare edge case. Today's discussion will solely analyze the technical feasibility of pure vision autonomous driving systems recognizing highly transparent glass walls.

In urban architectural design, transparent glass walls are extensively used in shopping malls, office buildings, and various public spaces due to their aesthetic appeal and transparency. However, this material, which is visually attractive to humans, poses a significant challenge for autonomous driving perception, acting as an 'invisible killer.'

For pure vision autonomous driving systems, which rely solely on cameras and eliminate LiDAR, accurately identifying highly transparent glass walls presents a major test of the underlying logic of computer vision.

Physical Barriers and Optical Illusions in Visual Perception

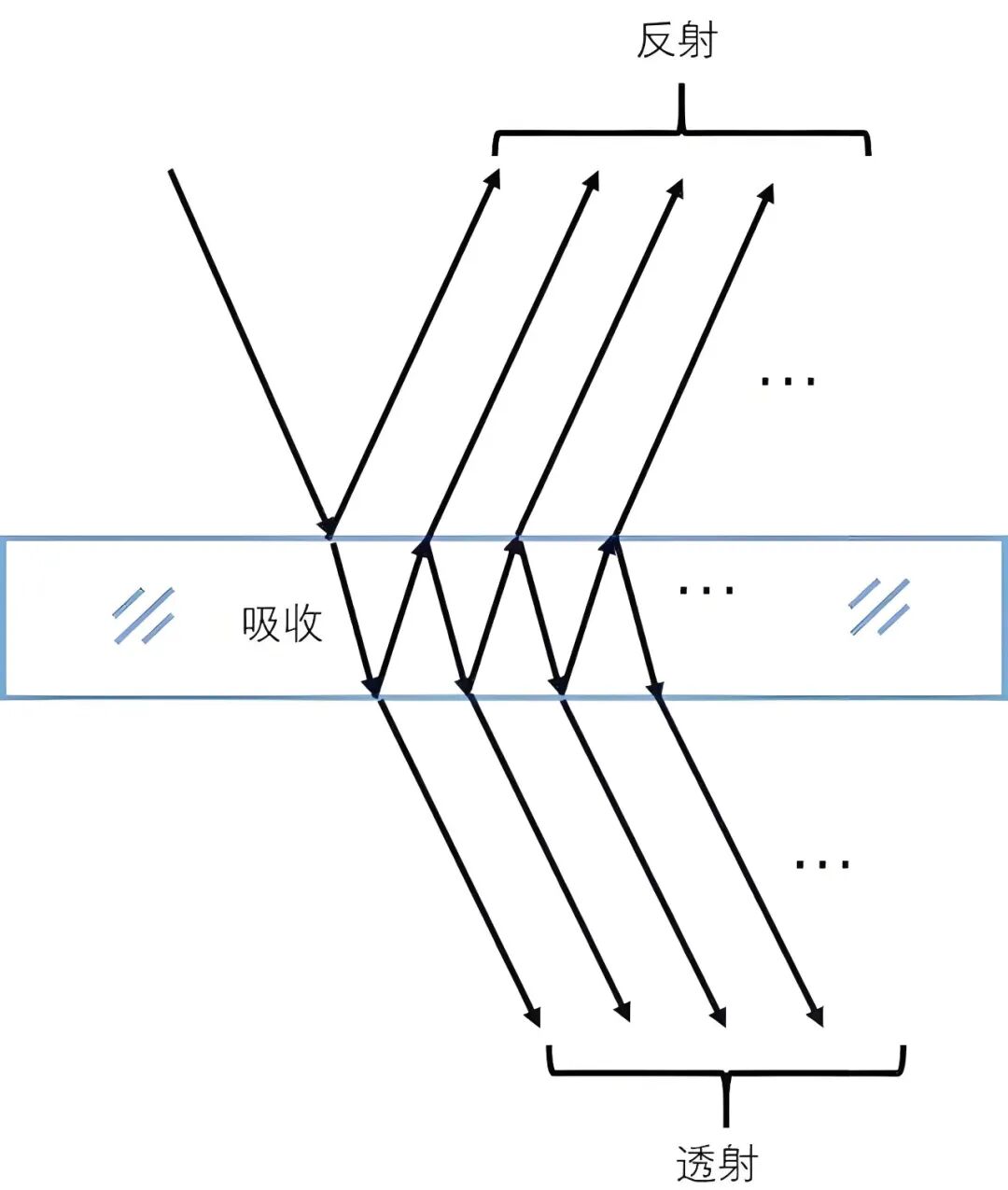

To explore the capability of pure vision systems to recognize glass, it's crucial to understand the physical nature of light interaction with glass. Glass's high transparency is due to its extremely high transmittance of visible light, meaning only a very small portion of light undergoes diffuse reflection and returns to the camera sensor.

For traditional computer vision algorithms, the essence of an image lies in changes in pixel brightness and color. If a region lacks distinct texture, color differences, or edge features, the algorithm will perceive it as an open space.

Humans rely on subtle reflections, fingerprints, oil stains, or even slight refraction displacements of objects behind the glass when their line of sight moves to identify glass. In contrast, pure vision systems must employ highly complex mathematical models to reconstruct these subtle visual signals.

Glass processes light according to the laws of reflection and refraction. When light enters a glass medium from the air, the proportion of reflected light is significantly influenced by the angle of incidence, as described by the Fresnel equations. At certain specific angles, specular reflection becomes very strong, forming 'virtual images' that can interfere with perception.

For pure vision autonomous driving systems, these virtual images are highly misleading. The system may misinterpret reflections of shopping mall chandeliers or moving pedestrians on the glass surface as real physical objects ahead, leading to unnecessary emergency braking.

If light completely penetrates the glass, traditional monocular or stereo depth estimation techniques will lock the depth value onto background objects behind the glass, causing the vehicle's calculated 'drivable space' to include the glass wall itself. This failure in depth perception is a direct cause of collision accidents.

Image Source: Internet

In indoor scenarios with complex artificial lighting, such as shopping malls, the direction and intensity of light change dramatically, making the reflection patterns on glass surfaces even more unpredictable. Pure vision systems can no longer rely solely on traditional feature point matching in these scenarios.

Due to the lack of texture on glass surfaces, feature matching algorithms cannot find sufficient anchor points in the image to construct a three-dimensional spatial structure. This may lead to centimeter- or even decimeter-level errors in the system's judgment of obstacle distances during low-speed cruising or parking.

To compensate for this shortcoming, the technical approach must shift from 'detecting objects' to 'understanding the environment.' By analyzing associated structures around the glass wall, such as seams in the floor, edges of the ceiling, and continuity of the wall surface, the system can indirectly infer the presence of a transparent plane.

Evolution from Feature Recognition to Spatial Occupancy Networks

Early autonomous driving algorithms primarily relied on object detection models, which identified specific objects (such as cars, pedestrians, traffic signs) in images and assigned them three-dimensional bounding boxes.

However, glass walls, as non-standardized architectural elements with varied forms and lacking fixed classification features, pose a challenge for this 'box-based' detection logic when dealing with transparent obstacles.

The emergence of occupancy networks has steered pure vision autonomous driving toward a more fundamental spatial representation.

Occupancy networks divide the three-dimensional space around a vehicle into billions of tiny voxels. Instead of attempting to define 'this is a glass wall,' the system predicts whether each voxel is occupied by matter or is free space.

This shift from 'object-centric' to 'space-centric' thinking provides a new approach to recognizing transparent objects. Even if the glass itself is invisible, if light passing through the region exhibits unnatural refraction patterns or if cross-validation from multiple camera perspectives reveals physical exclusivity in the three-dimensional coordinate system, the occupancy network will increase the occupancy weight of that voxel at the probabilistic level.

In pure vision architectures, Transformer models play a crucial role. Since glass recognition heavily relies on global context, the attention mechanism of Transformers allows the system to observe every pixel in the image simultaneously and establish long-range associations.

For example, when the system observes that the tile texture on the ground exhibits mirror symmetry along a vertical line or that the ceiling lines exhibit slight refraction bending in mid-air, the Transformer can aggregate these subtle, scattered anomalous signals throughout the image and infer the presence of a planar transparent medium ahead.

To achieve high-precision recognition, occupancy networks from companies like Tesla have achieved sub-voxel-level refinement. When processing narrow spaces such as parking lots or shopping malls, the system can dynamically increase the default voxel resolution of 33 centimeters to 10 centimeters or even lower.

This level of detail enables the algorithm to capture information about the slight frames or sticker thicknesses at the edges of glass. In this way, glass walls that were originally 'invisible' in the visual field are reconstructed in the system's digital model as a set of spatially meaningful barriers.

Although this probabilistic prediction-based modeling approach is significantly more computationally expensive than traditional algorithms, it endows pure vision systems with the ability to handle 'long-tail scenarios' (i.e., extremely rare cases), enabling vehicles to make correct obstacle avoidance maneuvers based on physical space occupation logic when encountering unfamiliar glass designs.

This technological evolution also brings about a profound change: the management of 'uncertainty.' When perceiving glass, autonomous driving systems often receive conflicting signals, such as geometric ranging indicating an open path ahead while semantic reasoning suggests the presence of glass.

At this stage, pure vision frameworks have introduced probabilistic distribution prediction. Instead of providing a definite 'yes or no,' the system outputs a distribution model containing mean and variance.

If the variance is too high, it means the system lacks confidence in its judgment of that region. In such cases, the decision-making layer will trigger a conservative strategy, such as reducing speed or prompting the driver to take over.

This 'self-awareness' of the system's own perceptual limitations is a key indicator of the maturity of pure vision solutions.

Collaborative Reasoning of Motion Parallax and Semantic Context

When dealing with stationary transparent glass, the information provided by a single frame in a pure vision system is insufficient. To simulate human behavior of moving the head to confirm glass positions, autonomous driving systems incorporate motion parallax and structure from motion techniques.

When the vehicle is in motion, the camera captures a continuous stream of images. According to geometric optics principles, objects closer to the camera displace faster in the image than background objects farther away.

For glass walls, although their main bodies are transparent, reflections, dust, or fingerprints on the surface produce unique displacement patterns as the vehicle moves.

By analyzing the displacement differences between these reflective points and background objects, the algorithm can calculate the depth of the glass plane. This method, known as 'parallax analysis,' is the cornerstone of pure vision systems for acquiring distance information without relying on LiDAR.

When dealing with glass walls with frames, structure from motion techniques can track the trajectories of frame feature points across multiple frames and reverse-engineer the camera's motion trajectory and the obstacle's 3D coordinates. This process involves extensive matrix operations aimed at finding an optimal spatial model that explains all pixel displacements.

Semantic context is another powerful reasoning tool for recognizing highly transparent glass walls. For example, in shopping mall environments, the presence of glass walls follows certain architectural patterns.

For instance, glass doors are embedded between solid walls, or storefront windows are located at the junctions of marble floors. Through deep learning training, the perception system can acquire these 'environmental commonsense.' Semantic segmentation models classify pixels in the image into categories such as 'floor,' 'wall,' 'ceiling,' and 'potential transparent obstacle.'

If the system detects an interruption in the continuity of the floor at a certain point or regular distortions in the reflection of ceiling lights on the glass surface, the semantic model will label that region as 'high-probability glass.'

This reasoning logic can even extend to analyzing 'absences.' If the vehicle's forward-facing camera detects rich background details along a certain path, but the side-facing cameras detect discontinuous image blocks at the same location (due to refraction or reflection), the system will infer the presence of a transparent interfering source at the intersection of viewpoints. This cross-view collaborative verification significantly enhances the robustness of pure vision systems in complex indoor environments.

Perception Boundaries and Safety Redundancy Driven by Data

The upper limit of pure vision autonomous driving solutions largely depends on the scale and diversity of their training data. For the task of glass recognition, which heavily relies on 'experience,' if the neural network has never encountered transparent objects under specific lighting or angles during training, it is highly prone to missed detections during real-world deployment.

To address this, some technical solutions attempt to use physically based rendering (PBR) techniques to generate highly realistic synthetic data.

These simulated data can not only model perfect glass but also simulate special transparent materials with cracks, stains, condensation droplets, or different refractive indices.

By generating tens of millions of video clips containing glass scenarios in simulators, the model can learn extremely subtle optical features on glass surfaces under various natural and artificial lighting conditions.

This 'digital twin' training method compensates for the data scarcity caused by the vast variety of glass types and high collection costs in the real world.

Currently, publicly available datasets specifically targeting transparent objects, such as Trans10K and ClearGrasp, are driving improvements in algorithm accuracy.

The Trans10K dataset contains over 10,000 real-world images of transparent objects and provides fine-grained annotations for 'things' (such as glasses, bottles) and 'stuff' (such as glass walls, windows).

The application of these datasets enables visual algorithms to achieve precise pixel-level segmentation of glass by learning the Fresnel effect at object edges and background distortions, with their mIoU (mean Intersection over Union) metrics continuously improving.

Final Thoughts

With the introduction of end-to-end (End-to-End) large models, autonomous driving's recognition of glass will no longer be divided into independent steps such as detection, tracking, and prediction. Instead, raw pixels will be directly mapped to driving actions.

In this mode, the system can gain a deeper understanding of the causal relationships in the physical world—that is, recognizing that an area that appears open ahead actually possesses impassable physical resistance. This enhancement in cognition marks the transition of autonomous driving perception technology from mere mathematical simulation to more advanced artificial intelligence reasoning.

-- END --