Qianwen, Doubao, and Yuanbao: What Should They Compete for in the Next Phase?

03/05 2026

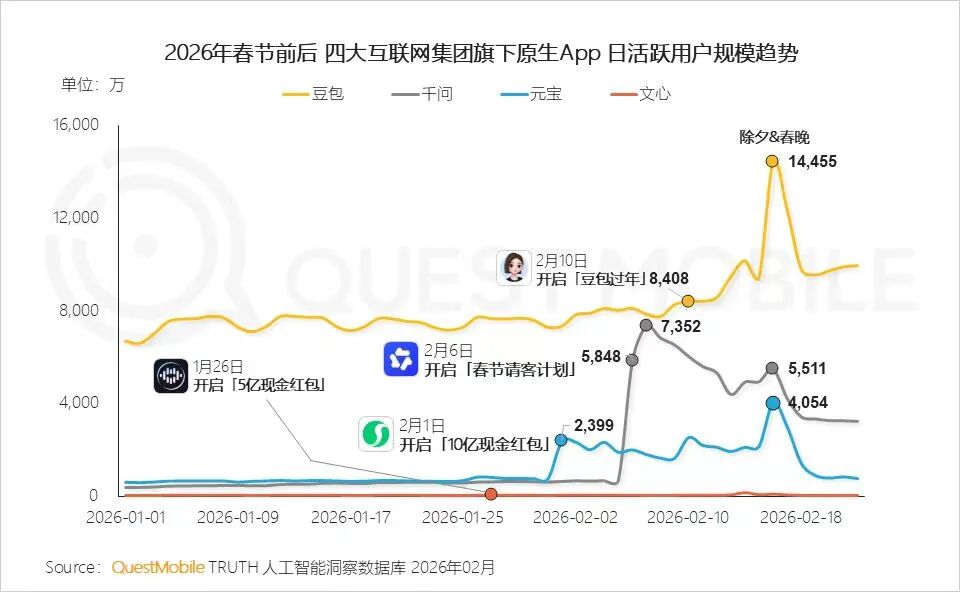

During the Spring Festival, Qianwen, Doubao, and Yuanbao all entered a phase of intense competition almost simultaneously. The Spring Festival Gala, red envelope promotions, subsidies, trending searches, and collaborative events emerged in full force, transforming AI applications into a major festive attraction.

While the daily active user (DAU) figures are impressive, they don't paint the full picture. During the holiday season, users are more inclined to try new things, share their experiences, and spread the word. Much of this growth is driven by emotions and incentives rather than sustained, long-term demand.

The real challenge arises after the holiday—when users return to their daily routines, will AI continue to be a part of their lives?

According to QuestMobile statistics, amidst fierce marketing efforts during the Spring Festival, Doubao, Qianwen, and Yuanbao all reached their peak DAUs. On one particular day, Qianwen nearly matched Doubao's user numbers, while Doubao's Spring Festival Gala campaign was unparalleled. However, after the initial "money-throwing" effect subsided, all three saw a significant decline.

Image Source: QuestMobile

Meanwhile, once the Spring Festival battle ended, the spotlight on AI applications gave way to apologies to users and departures of core management. This left the market asking: after spending relentlessly to boost DAU, have these companies actually worked out their next steps?

The issue in the next phase is not just about retention rates but the underlying structure of retention.

The first challenge is cost.

If every conversation requires substantial computational power, scaling up will only lead to greater losses. Only those who can continuously reduce unit inference costs can talk about sustainable growth.

Next, gaps will widen in scenario construction.

For the domestic market, standalone AI chatbots may not be the ultimate battleground. Truly stable usage frequency is likely to come from real work processes—office tasks, searches, social interactions, e-commerce, customer service, and other system entry points.

Some Domestic AI Chatbots

New variables are emerging in terminal forms.

Shortly after the Spring Festival, Qianwen began promoting standalone AI hardware, signaling a clear shift: AI competition is moving from the application layer to the terminal layer. Voice, visual, and multimodal devices may become the new default entry points.

In other words: The Spring Festival primarily addressed awareness issues.

In the next phase, companies must compete on token costs, find an irreplaceable usage scenario as soon as possible, and move beyond theoretical plans for next-generation AI hardware.

Token Cost Curve: Who Can Turn 'High Volume, Low Cost' into a Competitive Edge First?

Subsidies could buy short-term growth in active users during the Spring Festival, but they cannot secure a long-term advantage.

The scalability of AI applications is fundamentally constrained by unit inference costs: Every generation, every multi-round conversation, and every multimodal understanding involves computational power, bandwidth, electricity, and scheduling expenses.

The recent trend of "self-built AI agents" around frameworks like OpenClaw has rapidly amplified this issue.

More and more companies and developers are building their own agents, automated workflows, and multi-step reasoning systems. AI is no longer just a single conversation but continuous invocation and long-chain execution.


This change is directly reflected in token usage.

Statistics from some developer platforms show that global AI inference token usage surged from about 6.4 trillion per week to 13 trillion per week in just one month at the beginning of 2026, roughly doubling. A significant portion of this growth comes from automatic invocations by agent systems.

Meanwhile, token consumption no longer grows linearly but exponentially with task complexity.

This means cost issues are shifting from "cost per conversation" to "system-level invocation costs." If every step relies on high-cost models, the more complex the AI agent, the faster costs rise.
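One way to see why system-level invocation costs climb faster than step counts is a minimal sketch like the one below. It assumes, purely for illustration, that an agent re-sends its entire accumulated history on every step and that each step adds a fixed number of tokens; the function name and the figures are hypothetical, and real agent frameworks may trim or cache context. Under that assumption the billed input tokens grow quadratically with the number of steps.

```python
def cumulative_input_tokens(steps: int, tokens_per_step: int, system_prompt: int = 0) -> int:
    """Total input tokens across one agent run.

    Assumption (hypothetical): every step re-sends the full history so far,
    and each step appends `tokens_per_step` new tokens to that history.
    """
    total = 0
    history = system_prompt
    for _ in range(steps):
        # The prompt for this step is everything accumulated so far plus the new content.
        total += history + tokens_per_step
        history += tokens_per_step
    return total


if __name__ == "__main__":
    single = cumulative_input_tokens(steps=1, tokens_per_step=1_000)
    chain = cumulative_input_tokens(steps=10, tokens_per_step=1_000)
    print(single, chain, chain / single)
```

With these illustrative numbers, a ten-step chain consumes 55 times the input tokens of a single call rather than 10 times, which is the kind of gap that turns growth into a cost problem.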

The industry's most common mistake over the past year has been treating "stronger models" as the only direction and "larger parameters" as the only metric while ignoring a more practical question: When invocation scale increases tenfold, will costs rise proportionally? If the answer is yes, growth becomes a financial stress test.

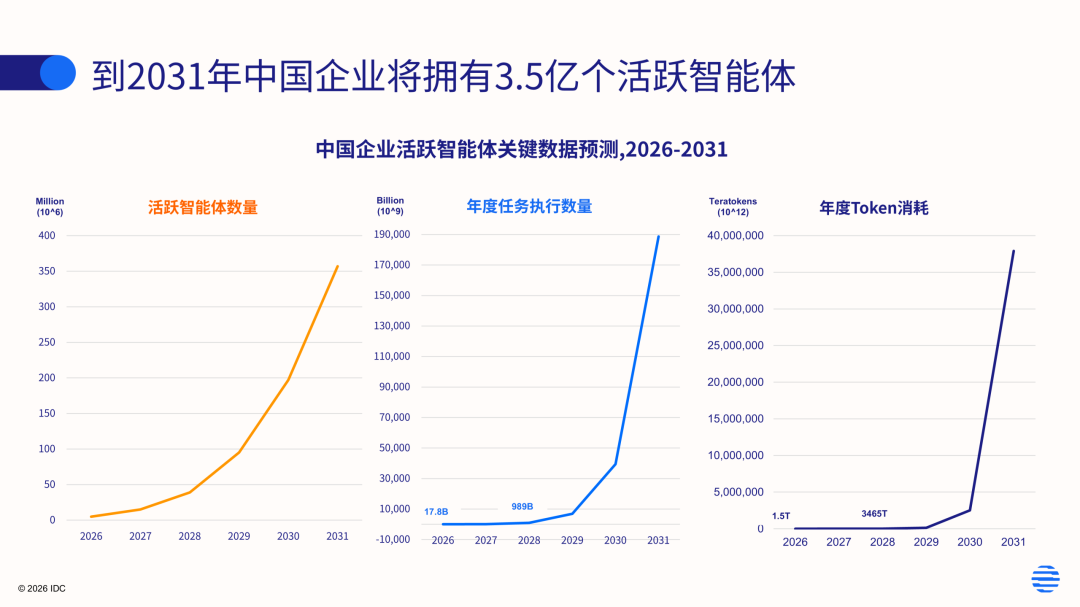

Image Source: IDC Consulting

In the next phase, whoever can push the token cost curve down fastest, and keep it low as volume grows, will have greater strategic flexibility.

The token cost curve has at least three layers—

The first is inference-side optimization: Can we achieve the same output quality more cost-effectively through quantization, distillation, compilation acceleration, KV caching, and parallel strategies?

The second is model routing capability: Can we automatically select "good enough" lightweight models for different tasks instead of always using the highest-tier model?

The third is computational power and supply chain organization: Can we find the optimal balance between in-house computational power, cloud computational power, domestic chip adaptation, and cross-regional scheduling?
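The second layer, model routing, can be sketched in a few lines. The tier names, prices, and the 1-to-5 difficulty score below are all hypothetical placeholders rather than any vendor's actual pricing or routing logic; the point is only to show how sending each task to the cheapest "good enough" tier changes the bill compared with always calling the top tier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    price_per_million_tokens: float  # hypothetical price, arbitrary currency
    max_difficulty: int              # highest task difficulty (1-5) this tier handles


# Ordered from cheapest to most expensive (all values are illustrative).
TIERS = [
    ModelTier("small", 0.5, 2),
    ModelTier("medium", 2.0, 4),
    ModelTier("large", 10.0, 5),
]


def route(difficulty: int) -> ModelTier:
    """Return the cheapest tier whose capability covers the task difficulty."""
    for tier in TIERS:
        if difficulty <= tier.max_difficulty:
            return tier
    return TIERS[-1]  # fall back to the top tier for anything harder


def workload_cost(tasks: list[tuple[int, int]], always_top: bool = False) -> float:
    """Cost of a list of (difficulty, tokens) tasks, with or without routing."""
    total = 0.0
    for difficulty, tokens in tasks:
        tier = TIERS[-1] if always_top else route(difficulty)
        total += tokens / 1_000_000 * tier.price_per_million_tokens
    return total


if __name__ == "__main__":
    tasks = [(1, 1_000_000), (3, 1_000_000), (5, 1_000_000)]
    print("routed:", workload_cost(tasks))
    print("always top tier:", workload_cost(tasks, always_top=True))
```

In practice the hard part is estimating task difficulty reliably; the sketch takes that score as given.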

Of course, the same curve has different implications for Qianwen, Doubao, and Yuanbao.


Qianwen, backed by Alibaba Cloud and the e-commerce ecosystem, can theoretically combine computational power with business operations for "bulk procurement + tiered invocation," turning cost control into a platform advantage.

Doubao, relying on ByteDance's content and distribution ecosystem, has advantages in training data and scenario richness but also faces higher and more fragmented invocation frequencies, making cost optimization more demanding.

Yuanbao, within Tencent's ecosystem, is naturally close to social and content scenarios, but social scenarios are more sensitive to latency, stability, and security compliance, requiring cost optimization to proceed alongside experience and risk control.

The token cost curve will soon become a competitive bottleneck because it determines the competitive approach: When costs are low enough, you can use more aggressive pricing strategies to expand; when costs are not low enough, you can only rely on subsidies or "restricted features" to control consumption, ultimately narrowing the user experience.

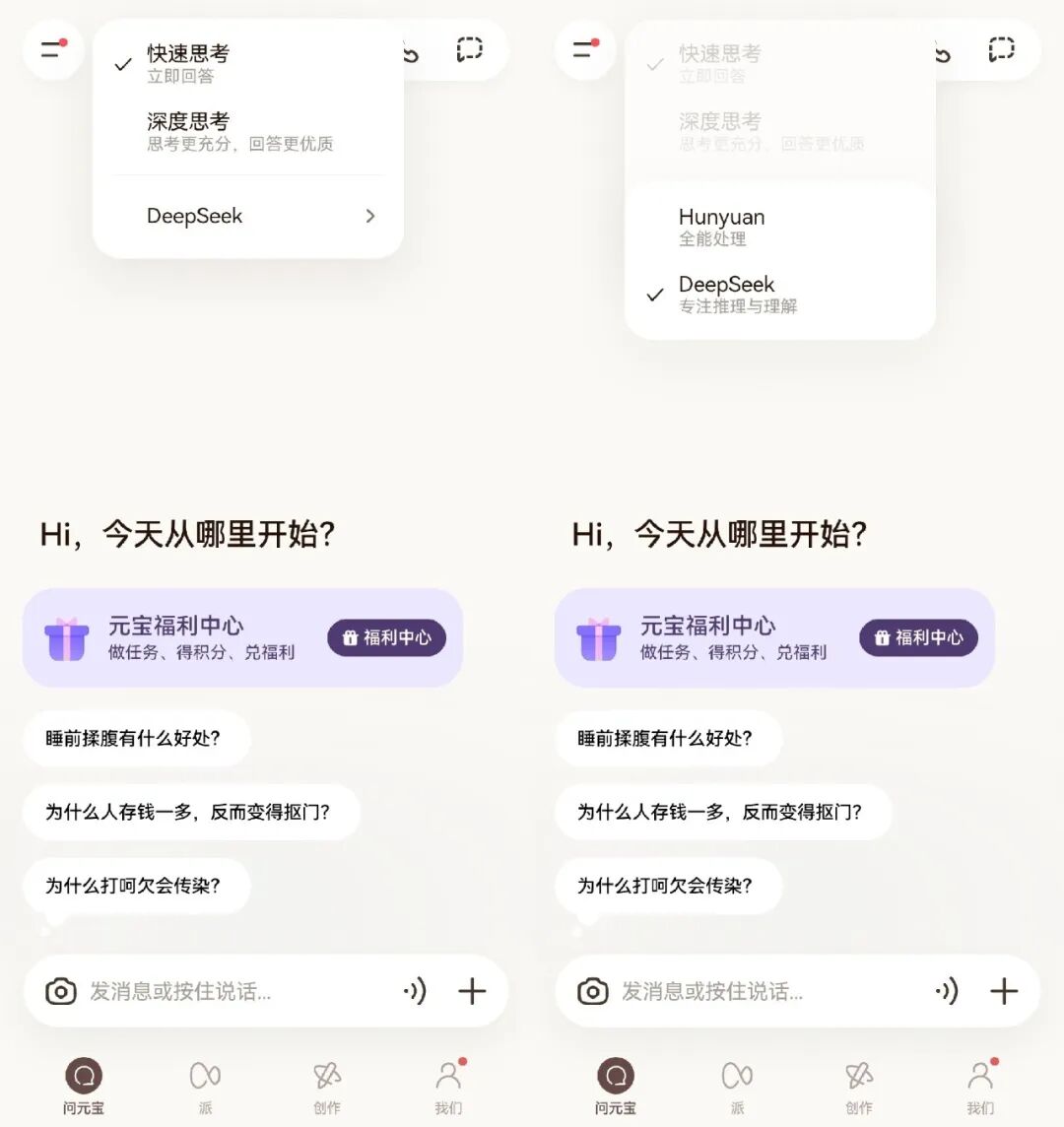

For example, Yuanbao still frequently falls back to DeepSeek in place of its own Hunyuan large model, apparently because of computational power bottlenecks.

Screenshot of Yuanbao APP

Or take the briefly popular Seedance 2.0, where even paid users often have to wait hours for a single generation: a stopgap forced by excessively high computational costs as the platform tries to contain expenses.

A better token cost curve will also determine whether AI can move from the consumer (C) side to the business (B) side and into transaction chains. Enterprise clients will not pay for a fun conversation, but they will pay for predictable efficiency gains, provided prices are transparent and quality is stable.

In this sense, the core of the next phase is not traditional internet product metrics like DAU or MAU but lower unit costs and TPD (tokens consumed per day).

From Scenarios to Entry Points: Hardware-Software Integration May Become the Norm

After the Spring Festival, competition among AI applications will increasingly resemble "phased battles": Leaderboards can be climbed, and DAU can be boosted, but these are unlikely to be decisive.

The real battleground lies in scenarios—whether AI can integrate into the paths users already follow daily and become a default underlying capability.

This is why "scenario integration" is even more critical than "retention rate." Even if users do not open the app, its model capabilities can still be invoked during daily use.


Scenario integration typically has three levels.

The first level is tool-based: Using AI as a basic tool for writing, translation, summarization, and search.

The second level is process-based: Integrating AI into office suites, customer service systems, and content production chains to participate in decision-making and collaboration.

The third level is transaction and execution: Enabling AI not just to answer but to directly invoke services, complete orders, appointments, payments, and after-sales.

Hardware's role here is to transform the entry point from one of many app options into a terminal "default."

The logic behind Qianwen's recent focus on AI hardware is not complicated: AI entry points on smartphone screens are too crowded, with distribution rights divided among operating systems, super apps, and content platforms. Wearable devices, earphones, glasses, and other terminals may offer more natural interaction, such as voice activation, casual photography, real-time translation, navigation, and information prompts.

Qianwen AI Glasses G1

Once these scenarios are established, users will not need to "open a specific AI product"—AI will appear automatically within the scenario.

But hardware does not win just by being made.

It pushes competition to the physical level—

First is localization capability: For devices to be "always available," they must rely on a certain degree of on-device inference and low-latency architecture.

Second is service orchestration capability.

Third is long-term operational capability: Once hardware is shipped, it enters a long-term battlefield of supply chain management, after-sales service, system updates, privacy, security, and compliance—far heavier than software.

For Doubao and Yuanbao, developing hardware sooner rather than later is advisable, especially since Doubao's smartphone efforts faced fierce resistance last year. For ByteDance, many rumored hardware projects now have better reasons to be launched.

Doubao Mobile Assistant

Beyond costs, scenarios, and hardware entry points, there is a more direct variable in the AI industry: talent competition.

In the early morning of March 4, Lin Junyang, the core technical leader of the Qianwen team, announced his departure, followed by several key researchers leaving around the same time.

Of course, leadership changes are not uncommon in the AI industry, but their symbolic significance is amplified when large model competition reaches a critical stage.

Large models are not traditional engineering pipelines. Beyond tens of thousands of GPUs, what truly determines model capability is the group of dozens to hundreds of core researchers responsible for model architecture, training strategies, inference optimization, and data systems.

Image Source: X

The departure of a core researcher often means adjusting or even migrating entire technological pathways.

Thus, competition among AI companies appears to be about products, computational power, and entry points on the surface but is fundamentally a sustained competition in talent density.

This is why poaching among domestic AI companies has been so frequent in recent years. From Alibaba to ByteDance, from Tencent to startups, the movement of top researchers almost always brings team migrations and changes in technological direction with it.

As model capabilities gradually converge and open-source ecosystems expand, what truly creates differentiation is often not parameter scale but the stability and innovation capacity of research teams.


Ultimately, this phase of competition is no longer just about Spring Festival-style peak growth but about whether four conditions can hold at once:

Token costs keep declining, AI capabilities penetrate real-world scenarios, terminal entry points achieve hardware-software collaboration, and talent density stays sufficiently high.

Only by simultaneously meeting these conditions can AI products transform from 'trendy applications during festivals' into long-lasting infrastructure.

The Spring Festival of 2026 is just the beginning; the long war of attrition lies ahead.