Who Is Being Caught in Big Tech's 'Lobster Claw' Grip?

03/12 2026

AI has transitioned from a mere 'conversationalist' to an 'executor' wielding the highest system privileges. The meteoric rise of OpenClaw has not only set off a carnival of technological innovation but also thrust data security, its 'Achilles' heel,' into the spotlight. Are we truly prepared for an era of intelligent agents that offer greater capabilities but also pose higher risks?

Author | Chen Rushi

Editor | Cindy

In March 2026, a red lobster ascended to the throne of the open-source world.

OpenClaw surpassed Linux with 273,000 GitHub stars, making it the most popular open-source project in the platform's history. NVIDIA CEO Jensen Huang hailed it as 'the most significant software release of our time.' This lobster has turned AI from a 'mouth' into a 'hand': instead of just chatting with you, it performs tasks on your behalf, such as reading and writing files, executing commands, calling APIs, and managing passwords.

But, paradoxically, it also represents a 'high-risk species.'

On March 10, the National Internet Emergency Center issued a security risk warning about OpenClaw, highlighting its core vulnerability: to achieve 'autonomous task execution,' the application is granted extensive system privileges, including accessing the local file system, reading environment variables, calling external APIs, and installing extensions. Because its default security configuration is extremely fragile, attackers who find an opening can easily gain full control of the system. This warning from a national-level security agency has moved OpenClaw's risks from technical community discussions onto the public safety agenda.

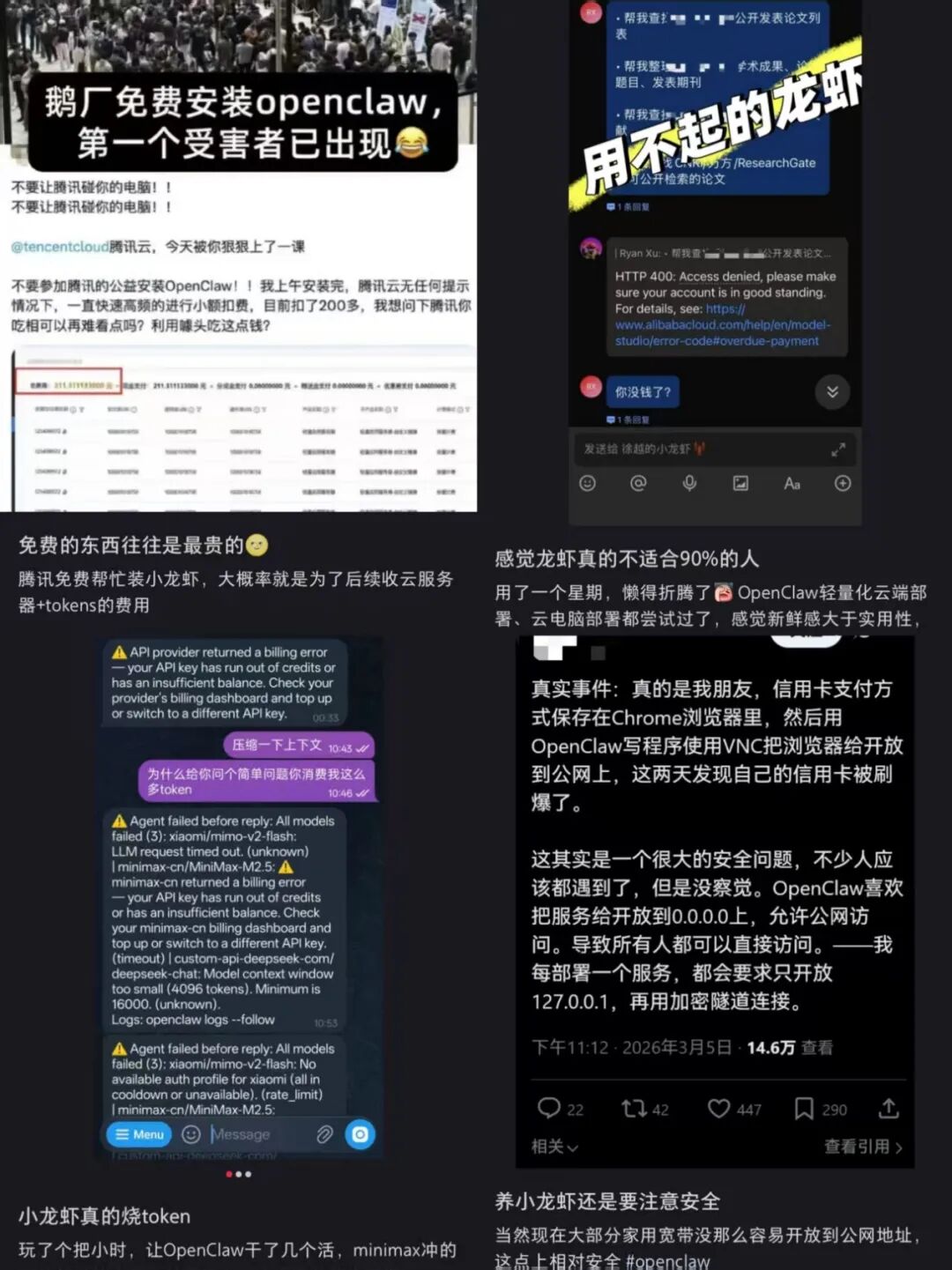

Users report adverse reactions after using 'Little Lobster' (Source: Xiaohongshu)

Moreover, the security community continues to uncover vulnerabilities and incidents, such as ClawJacked, the ClawHavoc 'poisoning' campaigns, and the Moltbook database left 'running naked' (exposed without protection). Experts repeatedly caution that "even when updated to the latest version, 'Lobster' still does not fully mitigate risks" and that 'this tool has no safety sheath.'

Despite these high risks, domestic tech giants are scrambling to join the fray. Relevant data indicates that over 20 vendors have officially entered the market, with more than 25 products already launched and many more in development. Tencent has introduced an 'AI Lobster Farming' suite; ByteDance created InStreet, a community where 'only AI can post'; Alibaba open-sourced HiClaw, which focuses on 'AI managing AI'; Xiaomi and Honor integrated 'Lobster' into their phones; and Baidu claims 'no need for on-site installation, four steps to get started.'

Vendors such as ZTE and Zhongguancun Kejin are actively pitching solutions to enterprises, and competition among local governments is heating up as well. Shenzhen Longgang recently released its 'Lobster Ten,' Wuxi High-Tech Zone raised single-project subsidies to 5 million yuan, and Foshan Chancheng District quickly launched free deployment services. Meanwhile, official media cautioned, in an article titled "Up to 10 Million Yuan in Subsidies: Local Governments Should Be Cautious About Following the 'Lobster Farming' Trend," that 'official entry brings both opportunities and challenges.'

This scenario is intriguing.

On one hand, security warnings are at an all-time high; on the other, giants are racing to stake claims while local governments invest heavily. When AI, this 'divine hand,' begins to control your system privileges, files, passwords, and API keys—who is footing the bill for this carnival? Are these rapidly entering companies truly prepared, or are they just scrambling for seats and worrying about the consequences later?

(Source: Pixabay)

What is clear is that when computer privileges are handed to AI, ordinary people end up bearing the cost of this carnival. Comedian Li Dan mentioned that some users have leveraged OpenClaw to automate tips and invitations to female streamers on social platforms, successfully meeting five people offline and crossing the boundary into 'social fraud.' More direct financial losses are also occurring: DoNews reported credit card fraud traced to an exposed OpenClaw control port, with reported losses of 1,400 yuan within two hours for a domestic user and 250,000 USD in crypto assets for an overseas engineer. Another user's AI, trusting a 'sob story' post in the community, automatically transferred money to the requester without verification.
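The report does not say how the control port came to be exposed. One common way a local service becomes reachable from outside is by listening on all network interfaces instead of only the loopback address. The sketch below is a minimal illustration of that distinction, not a description of OpenClaw's actual configuration; the function name `accepts_remote_connections` is hypothetical.

```python
import ipaddress


def accepts_remote_connections(bind_host: str) -> bool:
    """Return True if a service bound to bind_host can be reached from other machines.

    Assumption for illustration: bind_host is either "localhost", an empty
    string / "*" (often used to mean "all interfaces"), or a literal IP address.
    """
    if bind_host in ("", "*"):
        return True
    if bind_host == "localhost":
        return False
    addr = ipaddress.ip_address(bind_host)
    # 0.0.0.0 and :: mean "listen on every interface".
    if addr.is_unspecified:
        return True
    # Loopback addresses (127.0.0.0/8, ::1) only accept local connections.
    return not addr.is_loopback


if __name__ == "__main__":
    for host in ("127.0.0.1", "::1", "0.0.0.0", "192.168.1.5"):
        print(host, "reachable from other machines:", accepts_remote_connections(host))
```

A check like this only covers the listening address. It says nothing about authentication, firewalls, or port forwarding, each of which can also expose or protect a control port.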

At this point, many who paid to install it are now uninstalling the lobster—the speed of adoption and abandonment is as swift as a real lobster dinner, though whether they savored the flavor remains unknown to outsiders.

01

Racing to Stake Claims: Giants' 'Lobster Farming' Competition

Within a month, major domestic players have shifted from a wait-and-see approach to full-scale integration.

Tencent is the most active participant. On March 6, nearly a thousand developers lined up in front of Tencent Tower to install OpenClaw; by March 10, Tencent officially announced its comprehensive 'Lobster' matrix: deployment-free WorkBuddy, QClaw (the only version supporting WeChat conversations) in beta testing, integrated WeChat Work and QQ bots, and one-click deployment via Tencent Cloud Lighthouse. Tencent is deeply integrating AI agent capabilities with its IM ecosystem.

ByteDance chose to position itself in AI social networking. The Kouzi team launched InStreet—a platform where 'only Agents can post, while humans can only watch.' Within a day, nearly 800 Agents were already 'complaining about their human masters' in the community. More intriguingly, InStreet itself was built using AI generation tools, with ByteDance using AI to design a social platform for AI.

Alibaba entered through open source. Alibaba Cloud's open-source HiClaw focuses on a 'Team version,' using a Manager/Worker architecture to isolate credentials and permissions, and is aimed at developers and small-to-medium teams that want technical autonomy.
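The article gives no implementation details for HiClaw, so the following is only a generic sketch of what "a Manager isolating credentials from Workers" can mean in practice. All class and method names here (`Manager`, `Worker`, `sign`, `send`) are hypothetical and are not HiClaw's API. The idea shown is that the Manager keeps the raw secret and performs the sensitive step itself, so a Worker only ever receives the result.

```python
import hashlib
import hmac
from dataclasses import dataclass, field


@dataclass
class Manager:
    """Holds every secret; workers never receive the raw values."""
    _secrets: dict = field(default_factory=dict, repr=False)
    _grants: dict = field(default_factory=dict)

    def store(self, name: str, secret: bytes) -> None:
        self._secrets[name] = secret

    def grant(self, worker_id: str, name: str) -> None:
        # Record that this worker may use (but not read) the named credential.
        self._grants.setdefault(worker_id, set()).add(name)

    def sign(self, worker_id: str, name: str, payload: bytes) -> str:
        # Refuse any worker that was not explicitly granted this credential.
        if name not in self._grants.get(worker_id, set()):
            raise PermissionError(f"{worker_id} may not use {name}")
        return hmac.new(self._secrets[name], payload, hashlib.sha256).hexdigest()


@dataclass
class Worker:
    worker_id: str
    manager: Manager

    def send(self, credential: str, payload: bytes) -> dict:
        # The worker gets a signature back, never the secret itself.
        signature = self.manager.sign(self.worker_id, credential, payload)
        return {"payload": payload, "signature": signature}


if __name__ == "__main__":
    manager = Manager()
    manager.store("mail-api", b"example-secret")
    manager.grant("worker-1", "mail-api")
    print(Worker("worker-1", manager).send("mail-api", b"hello"))
```

In this sketch a compromised Worker can still misuse the credentials it was granted, but it cannot copy the secret out or use credentials granted to other Workers.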

Xiaomi and Honor chose the mobile battlefield. Xiaomi miclaw entered closed beta testing, attempting to use AI agents to control over 1 billion IoT devices on the Mi Home platform. Honor announced its 'Lobster Universe' at its latest launch event, supporting one-click 'lobster farming' across tablets, phones, and PCs.

Baidu chose to lower barriers and dispelled rumors that 'on-site installation' is required. Users can deploy it online in just four steps, fully integrating the Baidu App with local personal assistants.

To understand why tech giants are collectively flocking to this space, we must return to the fundamental competitive logic of platform-based enterprises.

During major technological shifts, market share always takes precedence over product maturity. This logic has been validated repeatedly throughout internet history, from Windows bundling IE to crush Netscape to app stores controlling the gateway in the mobile internet era: the winner-takes-all rule remains unchanged.

OpenClaw's explosion came at the perfect time. It marks AI's leap from 'conversation' to 'execution,' and whoever controls the entry point to the execution layer may seize the initiative in the next round of competition.

Tencent integrates 'Lobster' into QQ and WeChat Work; ByteDance builds InStreet—an AI-only social platform; Honor and Xiaomi embed Agents into phones to control IoT devices. These moves, while seemingly scattered, all aim to secure entry points before users form new habits. Once users grow accustomed to having AI handle emails in WeChat, manage documents via QQ bots, or control devices through voice commands on their phones, migration costs become prohibitively high, effectively locking out competitors.

The consumer market logic is only one side of the story. Competition in the enterprise sector is more multifaceted, and its keywords are 'control' and 'adaptability.' Alibaba's HiClaw chose open source to serve developers and small-to-medium teams that want technical autonomy; Tencent's ADP Claw leverages cloud ecosystems and channel resources for rapid deployment and IM integration; ZTE's Co-Claw emphasizes 'systematic survival' for large enterprises with complex IT systems; Zhongguancun Kejin's PowerClaw packages needs common across an industry into 'skill sets,' attempting to build barriers through scenario depth. That four vendors have taken four different approaches reflects the lack of unified standards in the enterprise AI agent market.

What truly propels this competition to new heights is the involvement of local government policy. When OpenClaw ecosystems are included in local industrial support catalogs, corporate decision-making shifts accordingly. Catching the window for policy dividends becomes as important as product maturity. Whoever lands demonstration projects in Longgang or secures Wuxi's 5 million yuan subsidy gains an extra edge in the next funding war.

One side sounds the alarm while the other opens the gates, and the two not only coexist but reinforce each other. Precisely because the technology is immature, there is greater urgency to stake claims before standards solidify; precisely because security isn't guaranteed, there is more incentive to shift risks onto users while leaving caution to competitors.

Historical experience shows that every technological paradigm shift goes through this 'board first, validate later' phase. The difference is that when AI agents possess system privileges, can read/write files, and call APIs, the cost of 'validating later' may no longer be product reputation but users' digital assets and security themselves.

02

The Cost: When AI Becomes the Highest-Privileged 'Insider Threat'

Business decisions never factor in morality—only profit and loss.

Enterprises bear 'R&D costs to fix vulnerabilities' and 'potential reputation damage if something goes wrong,' while users face real losses like data breaches, system takeovers, and asset theft. The former's ceiling is stock price fluctuations; the latter's floor is the complete collapse of their digital lives.

AI agents can amplify risks by several orders of magnitude—they can read your files, call your APIs, manage your passwords, and execute your commands. A compromised OpenClaw instance is equivalent to a hacker cultivating a highest-privilege 'insider threat' within your system.

The warning confirms this risk, but its target is subtle—it cautions 'users and developers,' not 'platforms and vendors.' Companies can issue statements claiming 'we take user security seriously' while pushing OpenClaw-integrated updates to your phone.

Reviewing vendors' security disclosures reveals a common thread: security features are all 'optional,' rarely 'mandatory.' Tencent PC Manager's 'AI Security Sandbox' offers a 'one-click protection' button, but whether to click it is up to the user. Xiaomi says 'high-sensitivity operations trigger confirmation boxes each time,' but what counts as 'high-sensitivity' and when boxes appear is determined by the product. Alibaba's HiClaw centralizes credential management, but Worker agents still automatically retrieve skills from the public network, so attackers can still get in by planting a malicious 'bomb' in ClawHub.

This means that, without mandatory standards, enterprises need only be 'legal,' not 'sufficiently secure.' Vendors set their own schedules for fixing risks; under compliance cost constraints, the rational choice is always to 'meet minimum review requirements.'
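The difference between an optional and a mandatory safeguard can be made concrete with a small sketch. In the version below, confirmation is the default for every action, and only an explicit allowlist is exempt, so an action the product has never heard of is still gated. This is an illustrative assumption about how such a gate could be built, not any vendor's implementation; `LOW_RISK_ACTIONS`, `run_action`, and the action names are hypothetical.

```python
from typing import Callable

# Hypothetical allowlist: only these actions run without asking the user.
LOW_RISK_ACTIONS = frozenset({"read_calendar", "summarize_text"})


def run_action(
    action: str,
    execute: Callable[[], str],
    confirm: Callable[[str], bool],
) -> str:
    """Run execute() only if the action is allowlisted or the user confirms it.

    There is deliberately no flag to switch the check off: anything not on
    the allowlist, including unknown actions, requires confirmation.
    """
    if action not in LOW_RISK_ACTIONS and not confirm(action):
        return f"blocked: {action}"
    return execute()


if __name__ == "__main__":
    always_no = lambda action: False
    print(run_action("read_calendar", lambda: "calendar read", always_no))
    print(run_action("send_payment", lambda: "payment sent", always_no))
```

The design choice is the direction of the default. A denylist of 'high-sensitivity' operations leaves the product to decide what is sensitive, whereas an allowlist forces every new capability through confirmation until someone deliberately exempts it.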

More terrifying than single-node compromises is node-to-node infection—where mature agents interact on public platforms using human-incomprehensible instructions, building an AI 'dark web' beyond regulatory visibility.

OpenClaw's third 'Achilles' heel,' the autonomous interaction of AI agents on platforms like Moltbook, has already shown how this risk evolves. A vulnerability allows attackers to alter platform posts, launching large-scale indirect prompt injections against other AI agents that read those posts. One Agent compromised, the entire ecosystem suffers. When millions of AI agents 'communicate' in InStreet using protocols humans can't understand, and when their conversation records are exfiltrated, tampered with, or injected with malicious instructions, a single node's compromise is no longer isolated. Who takes responsibility for the actions of these 'digital ghosts'? The enterprise? The user? Or the developer who built the platform with AI but misconfigured database permissions?

The warning from University of Melbourne cybersecurity researcher Shaanan Cohney bears repeating: 'Connecting large numbers of autonomous agents creates a chaotic dynamical system that humans currently cannot model.' Some already suspect that tech giants' collective entry, in the name of commercial expansion, is accelerating this chaotic system's scale toward unknown edges.

03

The Dilemma: The Boundary Between Commercial Sprint and Security Catch-Up

The AI agent paradigm represented by OpenClaw has become an irreversible technological trend. Major vendors' intensive deployments indicate that AI's shift from 'conversation' to 'execution' is the industry's consensus direction.

However, we must again note that the pace of commercial expansion is overwhelming prudent security governance. Risk warnings continue to be issued but fail to slow the rapid launch rhythm of enterprise Claw products.

This isn't just a domestic issue—OpenClaw founder Peter Steinberger responded to security questions by saying 'that's not my priority' before joining OpenAI; shortly after Moltbook's vulnerabilities were exposed, InStreet fully opened its beta testing. This rhythm itself reflects priority ordering: seize market share first, patch security later.

The mechanism for risk transfer remains largely unregulated. When the actual victims of security incidents are users rather than the platforms themselves, and in the absence of enforced regulatory standards, the rational choice for enterprises is inevitably to "launch first and address concerns later." This behavior is not a moral shortcoming of individual companies but rather a flaw in the design of incentive structures.

The magnitude of systemic risks is outpacing the predictive capabilities of current security models. Hundreds of millions of AI agents with system-level access are connecting to social networks, corporate systems, and government platforms. Their autonomous interactions may generate emergent behaviors that are beyond human anticipation. People's Daily has issued an urgent warning: Party and government agencies, enterprises, institutions, and individuals should exercise caution when using 'Lobster.'

Today, the security controversies surrounding OpenClaw have expanded beyond the realm of individual products and have become a public concern in the age of intelligent agents. This indicates that security governance cannot rely solely on voluntary corporate actions or user self-protection. In an era where AI agents have system-level access, individual security is synonymous with collective security; a vulnerability in one node represents a vulnerability in the entire network.

We must consider: Is there a need to establish mandatory safety standards for AI agents? Should the granting of system-level access be subject to regulatory oversight? Should "traffic rules" be established for the autonomous interactions between AI agents?

The controversy over OpenClaw, at its core, represents an AI-era iteration of a timeless question: When new technologies push boundaries, should commercial interests lead the way, or should safety considerations take precedence?

History shows that commercial interests have consistently taken the lead. The difference now is that, in the past, running too fast might have resulted in a drop in stock prices; this time, running too fast could erode an entire generation's trust in the digital realm.

Tech giants can, of course, continue to assert their dominance. However, the premise must be that someone will ultimately be held accountable for every advancement made. When AI agents truly hold the reins of the digital world, attempting to loosen that grip—like a lobster that no longer recognizes its master—may prove futile.

END

Producer: Huang Qiangqiang