NVIDIA's "Strategic Shift": A $26 Billion Bet on Open-Source AI, Marking a New Era of Industry Dominance

03/13 2026

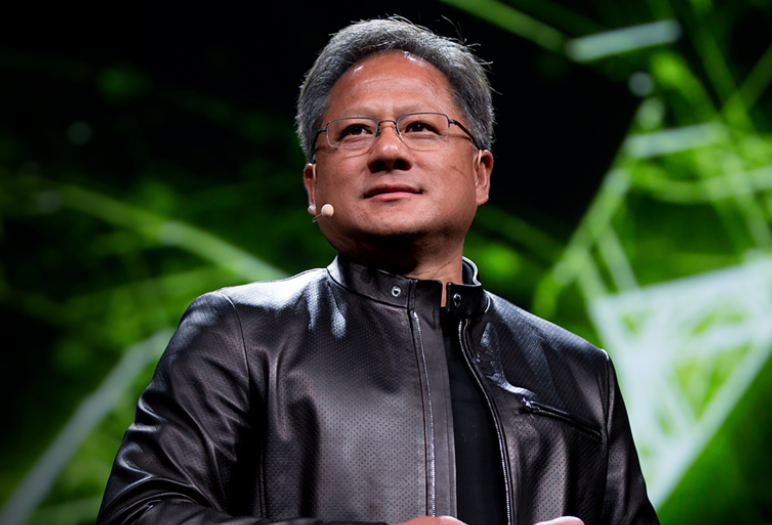

Jensen Huang, the CEO of NVIDIA, is renowned as one of the business world's most compelling storytellers.

This time, however, he skipped the narrative flair and went straight to the point with an SEC filing: a staggering $26 billion investment over five years in open-source large AI models, aiming to cover the entire industry chain.

What does this figure signify? Consider that OpenAI invested approximately $3 billion in training GPT-4. NVIDIA's $26 billion commitment is nearly ninefold that amount. This isn't merely an investment; it's a strategic offensive against the entire AI large model sector.
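The comparison above is simple arithmetic, and a minimal sketch makes it easy to check. The dollar figures are the ones quoted in this article (the GPT-4 training cost in particular is the article's estimate, not a verified number), and the even split across five years is an assumption made purely for illustration.

```python
# Figures as quoted in the article, in billions of US dollars.
# The GPT-4 training cost is the article's estimate, not a verified number.
NVIDIA_COMMITMENT_BN = 26.0
COMMITMENT_YEARS = 5
GPT4_TRAINING_COST_BN = 3.0


def multiple_of(baseline: float, amount: float) -> float:
    """Return how many times larger `amount` is than `baseline`."""
    return amount / baseline


def annual_average(total: float, years: int) -> float:
    """Average yearly spend, assuming an even split (an illustrative assumption)."""
    return total / years


ratio = multiple_of(GPT4_TRAINING_COST_BN, NVIDIA_COMMITMENT_BN)
per_year = annual_average(NVIDIA_COMMITMENT_BN, COMMITMENT_YEARS)

print(f"Commitment vs. quoted GPT-4 cost: {ratio:.1f}x")
print(f"Average per year if spread evenly: ${per_year:.1f} billion")
```

On these inputs the multiple is about 8.7, which is where "nearly ninefold" comes from, and the average comes to roughly $5.2 billion a year if the spending were spread evenly.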

Why would the "shovel seller" enter the fray?

For the past three years, NVIDIA has thrived as the "shovel seller" of the AI gold rush. As companies rushed to mine AI's potential, NVIDIA supplied the essential tools. With the H100 priced at $20,000 per chip, the H200 priced higher still, and the B200 at double that, NVIDIA watched OpenAI, Anthropic, Google, Baidu, ByteDance, and others train their models, all of them willingly filling NVIDIA's coffers.

By historical standards, this business model is close to perfect: NVIDIA doesn't need to win the AI race; it only needs everyone else to keep competing fiercely while it collects the profits.

Jensen Huang once remarked, "NVIDIA is the infrastructure of AI, akin to electricity and the internet." This sounds modest, but the underlying logic is audacious: whether you succeed or fail is of no consequence to me; I simply collect the tolls.

So, why the sudden shift in strategy?

Three layers of strategic rationale

The reasons come in three layers, each running deeper than the last.

Layer 1: Eroding competitive moats.

Groq's LPU inference chips, Cerebras' wafer-scale chips, and China's relentless push for domestic alternatives are all challenging NVIDIA's dominance in computing power. More ominously, OpenAI is developing its own chips, Google's TPU is maturing, and Amazon's Trainium is poised for mass adoption. In other words, NVIDIA's largest customers are all planning to reduce their reliance on NVIDIA.

When the shovel-selling business starts to look unstable, the best defense is a strong offense: if your model becomes the industry standard, who would dare leave your ecosystem?

Layer 2: Open-source as the ultimate competitive barrier.

Meta demonstrated this with Llama: when your open-source model becomes the industry foundation, everyone helps expand your ecosystem. Your moat doesn't get shallower; it gets deeper. NVIDIA's launch of Nemotron 3 Super, a 120-billion-parameter model designed for enterprise-grade multi-agent systems, isn't mere showmanship; it plants a stake in every B2B scenario: "only NVIDIA's full stack runs this most smoothly."

An open-source model paired with proprietary chip optimization is a strategy played out in the open. Everyone can see it, but few can resist it.

Layer 3: The AI battlefield is shifting from "training" to "inference."

This insight is pivotal. Training large models is a resource-intensive endeavor, but one open to anyone with sufficient capital. Inference, meaning serving models in real time to billions of users, is the true battleground of scale and the territory NVIDIA covets most.

With the most optimized inference chips (new inference chips integrating Groq LPU technology will be unveiled at GTC) and open-source models finely tuned for performance, NVIDIA aims to guide every global enterprise's AI deployment along the path it paves. This is the essence of ecosystem lock-in.

Navigating customer relationships

Yet this "strategic shift" comes at a cost.

NVIDIA's largest customers to date (OpenAI, Anthropic, and Google) now face a formidable new competitor. These companies not only buy NVIDIA's chips but also compete with NVIDIA's models for users and enterprise clients in the application market. Business history knows this dynamic as the "supplier turned competitor." Mishandle it, and the consequences could range from lost orders to joint boycotts. NVIDIA is acutely aware of this risk, which is why it has chosen "open source" as a shield: it is hard to accuse a company that gives its technology away for free of predatory competition. The stance is both a business strategy and a display of political acumen.

However, the deeper contradiction persists: when NVIDIA both sells shovels and mines gold, who defines the boundaries of its ecosystem power? That is a question $26 billion cannot resolve.

What qualifies NVIDIA to build models?

Another question looms large: what qualifies NVIDIA to build models?

Chipmaking demands hardware engineering prowess: precision manufacturing, physical limitations, yield control. Large model development requires AI research acumen: data engineering, algorithmic innovation, RLHF alignment, evaluation systems. These are two distinct organizational DNAs.

NVIDIA has the best chips, the strongest engineers, and practically limitless computational resources. But does it have a world-class AI research culture, and the "obsession" required to refine top-tier models?

Meta's Llama succeeded due to Zuckerberg's years of strategic patience and resource commitment. Anthropic's Claude thrived because of the team's unwavering belief in AI safety. NVIDIA builds models for commercial moats, not technical idealism. These motivations yield different textures in the final product.

Of course, this doesn't preclude NVIDIA's models from becoming industry infrastructure. After all, Linux wasn't created to upend the world, yet it did.

AI Capital Bureau's Perspective

Returning to that SEC filing: $26 billion, five years, open-source, full-industry-chain.

This isn't mere business expansion; it's a declaration of ecosystem imperialism. Jensen Huang is, in effect, asserting: In the AI era, it's not about whose model is smartest, but whose foundation is most unshakable. I'm not building a large model; I'm constructing a foundation no large model can bypass.

This logic mirrors Microsoft bundling IE with Windows or Google tying search to Chrome.

AI Capital Bureau is a professional platform dedicated to observing and analyzing capital market dynamics in the AI field. We closely monitor financing, listings, M&A, and other capital operations of AI and embodied intelligence companies, deeply analyze industry and enterprise development trends, and provide valuable insights for industry participants. We are committed to bridging AI innovation and capital markets, aiding Chinese hard-tech companies in achieving value discovery and growth.

Risk Warning and Disclaimer: The market is fraught with risks; invest cautiously. This article does not constitute investment advice or trading recommendations, and any trading risks are borne by the individual.