GTC 2026 | NVIDIA: Pioneering the Transformation of the World into an AI-Powered Computing Network

![]() 03/17 2026

03/17 2026

![]() 439

439

Produced by Zhineng Zhixin

In March 2026, the NVIDIA GTC conference kicked off at the SAP Center in San Jose, USA.

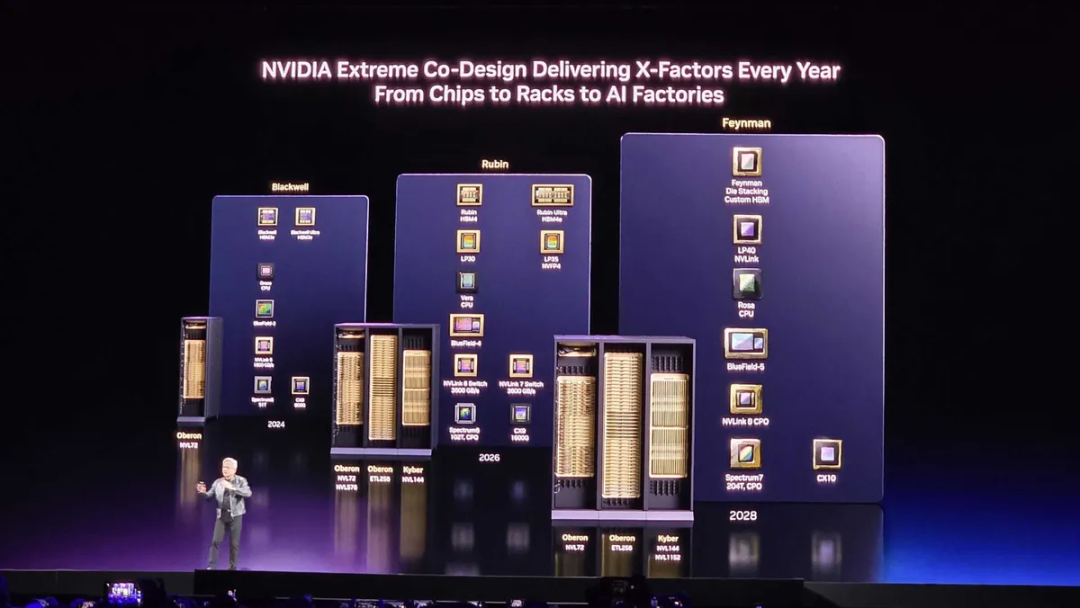

Over the past few years, this conference has evolved from a GPU technology symposium into the annual barometer for the global AI industry. With each new architectural release—such as Hopper and Blackwell—the landscape of AI computing undergoes a virtual redefinition. This year, NVIDIA unveiled its next-generation computing platform: Vera Rubin.

Jensen Huang comprehensively presented NVIDIA's AI technology roadmap for the next three years, covering everything from next-generation chip architectures to 'AI Factory' infrastructure, extending to robots and autonomous driving in the physical realm, and even venturing into space computing.

Part 1: Computing Leap—Vera Rubin and the AI Factory

● Vera Rubin: More Than Just a Chip, It's a System

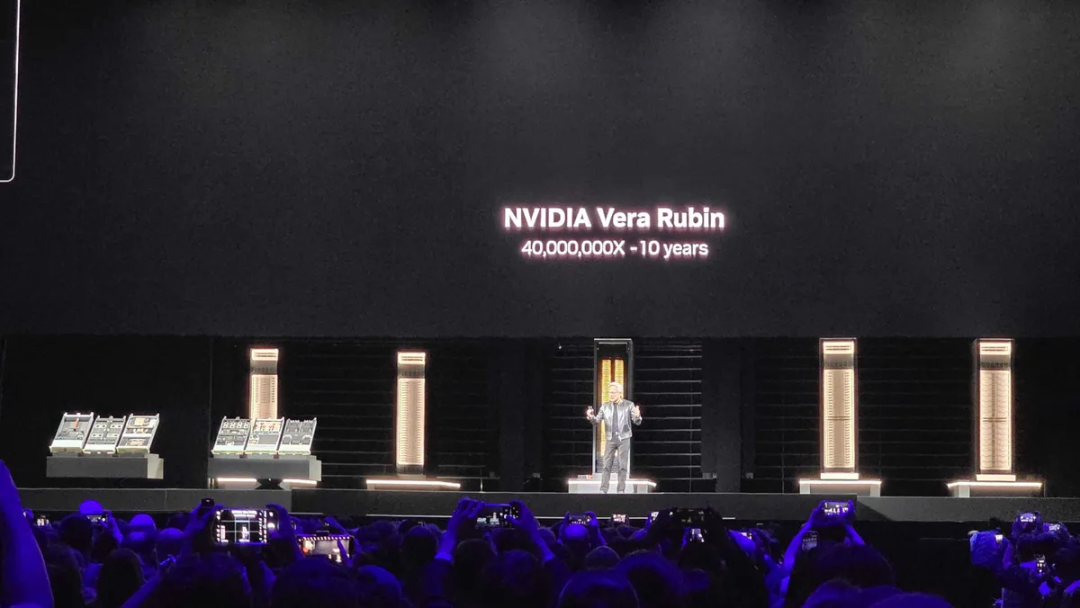

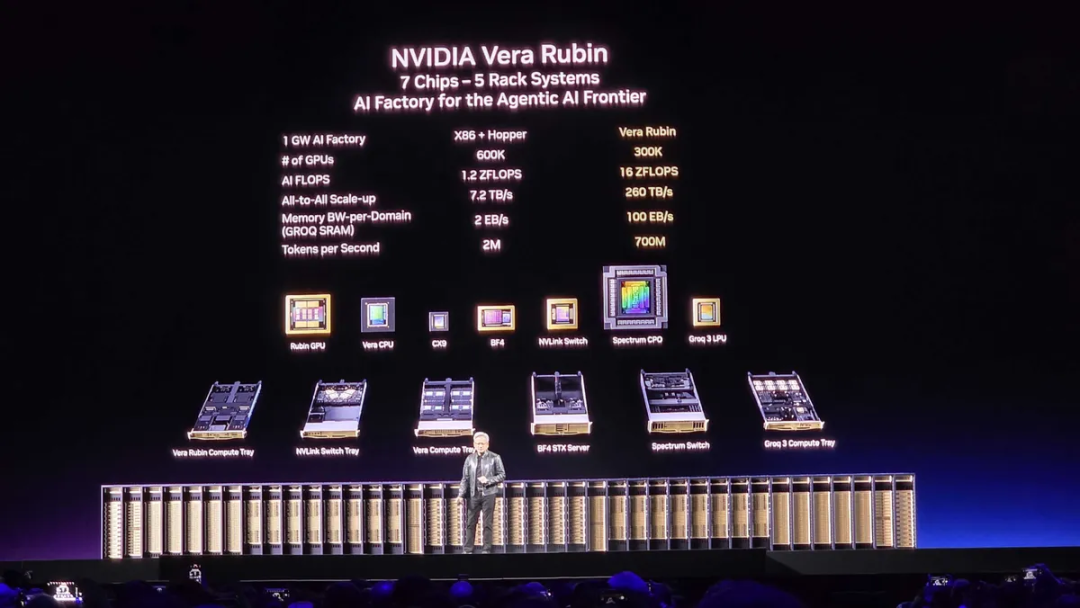

The most significant announcement at this GTC is NVIDIA's next-generation AI platform, Vera Rubin.

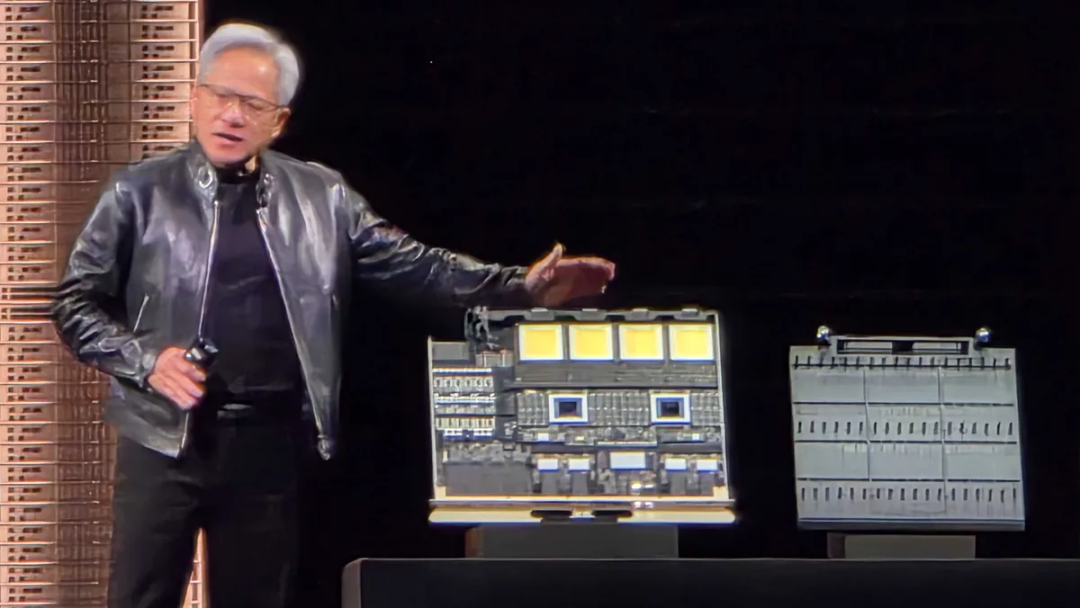

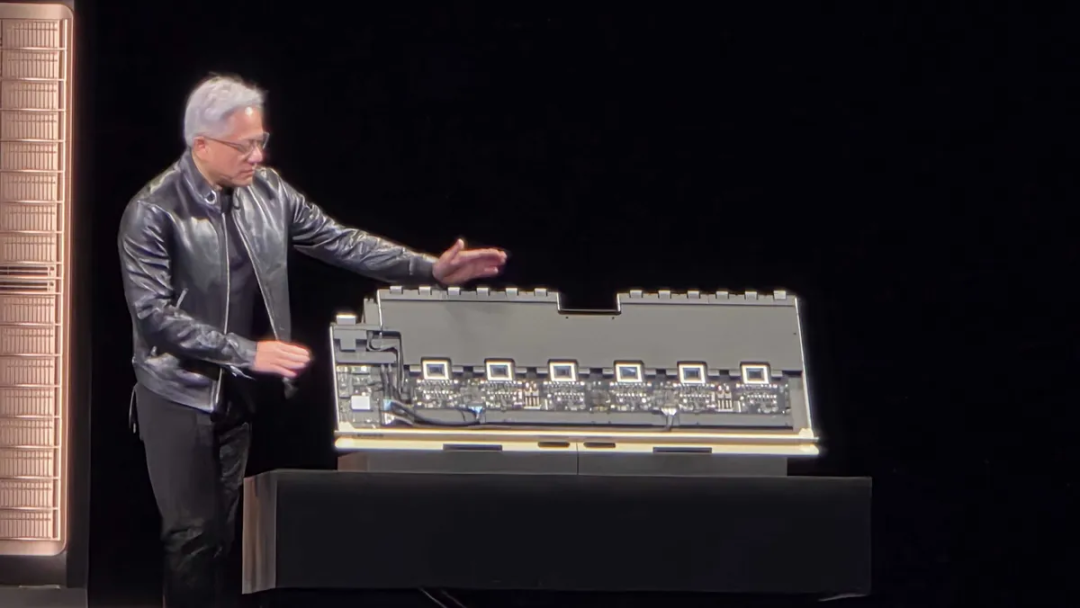

To fully grasp this release, one must first correct a common misconception: Vera Rubin is not merely a GPU but an entire computing system architecture. It encompasses the Vera CPU, Rubin GPU, BlueField-4 DPU, NVLink interconnect, Photonic Ethernet, and HBM4 high-bandwidth memory.

Every component is either developed in-house or deeply integrated by NVIDIA, with the overall design logic emphasizing collaborative optimization of computing, storage, and networking at the system level, rather than functioning independently.

Compared to Blackwell Ultra, the Vera Rubin platform will deliver several-fold improvements in AI inference performance, further increase computational density, and significantly reduce the cost per token for inference. Mass production is anticipated in the second half of 2026. Relying solely on scaling GPU computing power is increasingly inadequate to overcome AI computing bottlenecks.

The real limitations often stem from memory bandwidth, interconnect latency, and the coordination efficiency between computing and storage. The design of Vera Rubin is a systemic response to these bottlenecks.

● NVL144: A Single Rack, A Supercomputer

For rack-level deployment, NVIDIA introduced the NVL144 system, capable of integrating 144 Rubin GPUs and Vera CPU nodes within a single rack. The overall inference performance reaches the 3.6 ExaFLOPS level. In 2012, the world's fastest supercomputer, Titan, had a peak performance of approximately 27 PetaFLOPS. The inference computing power of NVL144 surpasses that of 100 Titans combined.

Of course, the definition of floating-point operation precision differs, so this comparison is not strictly precise, but it still underscores a point: the density of AI computing is now entering a scale previously achievable only by national-level supercomputers—now attainable with a standard rack.

● AI Factory: Redefining the Data Center

Huang repeatedly used the term 'AI Factory,' a conceptual framework that redefines the function of data centers.

The core function of traditional data centers is to store data and run software. In contrast, the core function of an AI Factory is to produce tokens—training and inference models that continuously output computational results.

Under this framework, computing power becomes an industrial resource akin to electricity, quantifiable, sellable, and dispatchable on demand.

Around this concept, NVIDIA introduced the DSX AI Factory reference architecture, providing a complete data center solution ranging from server design and network topology to power and cooling.

The accompanying Omniverse AI Factory Blueprint utilizes digital twin technology, allowing the entire data center's operational state—power distribution, GPU load scheduling, network bottlenecks—to be simulated in a virtual environment and optimized before construction.

This represents an industrial design mindset: designing data centers like cars or chips.

● From Training to Inference: A Structural Shift in Computing Demand

The AI industry is transitioning from the training era to the inference era. Over the past few years, the vast majority of AI computing power has been consumed in training large models.

However, as model capabilities stabilize, future computational growth will increasingly come from inference—deploying models into the real world to continuously provide services for AI search, AI assistants, autonomous driving, and enterprise agents.

The demand characteristics of inference are entirely different from those of training: low latency, high concurrency, and long-duration operation.

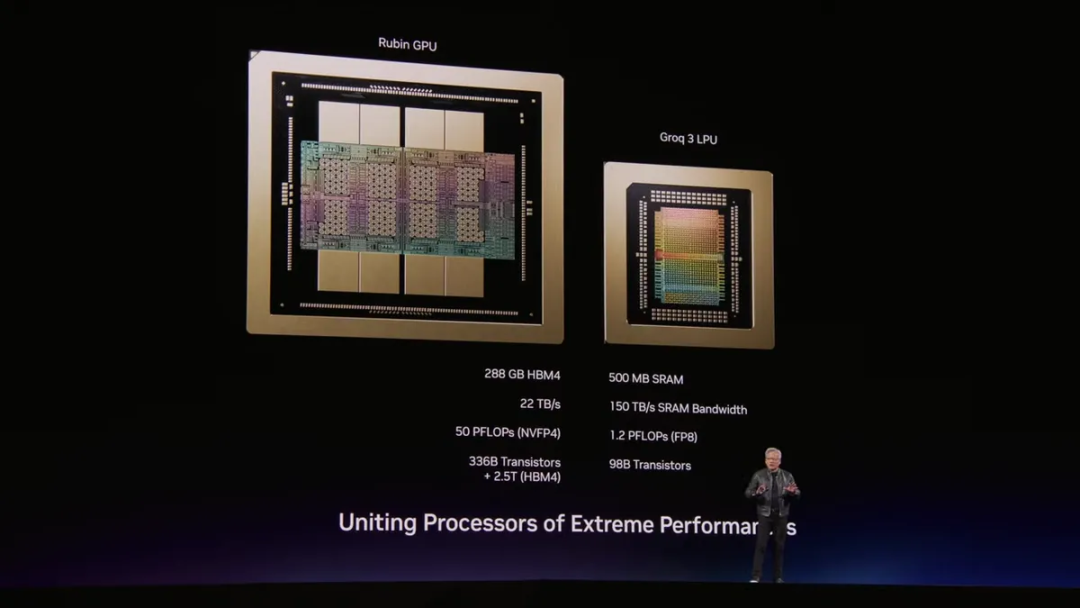

NVIDIA unveiled the Groq 3 LPX system, combining Rubin architecture GPUs with dedicated inference processors, aiming to achieve a throughput capacity of 700M tokens per second, representing an over 350-fold improvement over the previous architecture.

Training is like building a factory; inference is like operating the production line. NVIDIA's focus at this GTC has shifted from the former to the latter.

Part 2: AI Enters the Physical World

● Autonomous Driving: From Software to Steel

Automakers joining NVIDIA's autonomous driving ecosystem include BYD, Geely, Hyundai, and Nissan, covering the three major automotive industrial systems of China, Japan, and South Korea. The 'NVIDIA-ization' of autonomous driving is permeating from software into the entire vehicle supply chain.

NVIDIA's core value in autonomous driving lies not in cameras or radar but in its simulation system. The virtual driving environment constructed through the Omniverse platform can generate billions of training scenarios, covering rare long-tail situations that are difficult to encounter on real roads.

This is a logic of trading computing power for safety margins, with NVIDIA serving as the infrastructure provider for this logic.

● Robotics: Physical AI Data Factory

Robotics was one of the most extensive physical AI scenarios covered at this GTC.

Industrial robot manufacturers such as ABB, KUKA, and Universal Robots announced their entry into NVIDIA's ecosystem, backed by the infrastructure of NVIDIA's newly released Physical AI Data Factory—a data production system dedicated to training robotic vision and control models.

This system addresses the most challenging issue in robotic AI training: the scarcity of real-world data and the difficulty in covering all operating conditions.

By synthesizing training data on a large scale in a digital twin environment, the development cost for robots entering new scenarios can be significantly reduced. This follows the same logic as autonomous driving—NVIDIA is using the same infrastructure framework to connect the physical AI landscape from driving to manufacturing.

● DLSS 5: Neural Rendering Rewrites Graphics

NVIDIA did not overlook its traditional areas of strength this time.

DLSS 5 introduces the concept of neural rendering, directly incorporating AI models into the image generation pipeline to handle content such as human facial textures, hair details, and fabric materials that are difficult to render efficiently with traditional rasterization pipelines.

The traditional game rendering pipeline involves geometry, lighting, materials, and pixels, with each step being a deterministic mathematical calculation. Neural rendering allows AI to participate directly in this process, producing higher-quality images at a lower computational cost.

NVIDIA positions this as the most significant technological leap in graphics since real-time ray tracing, with an official release expected in autumn 2026.

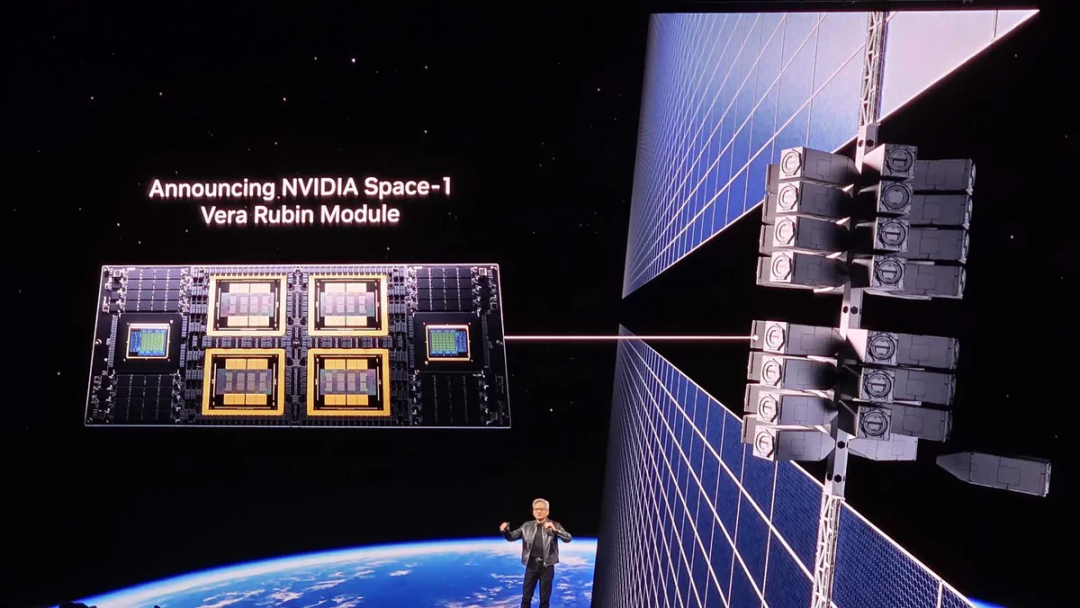

● Space Computing: Orbital Data Centers

NVIDIA showcased the Space-1 Vera Rubin Module—a plan to deploy AI computing into orbital data centers, achieving high-performance AI computing through solar power while maintaining low-latency communication with the ground.

This direction is still in its early stages, but its logic is noteworthy: as AI's demand for computing power continues to grow, the limitations of ground-based data centers in terms of land, power, and cooling will become increasingly apparent, while orbital computing offers a possibility of breaking free from physical constraints.

NVIDIA's choice to propose this direction at GTC is more about declaring boundaries—the AI infrastructure landscape extends beyond the Earth's surface.

Part 3: What Is NVIDIA Betting On?

● From GPU Company to AI Operating System

If we review NVIDIA's strategic evolution over the past decade, a clear path emerges: GPU computing → AI training platform → AI infrastructure → AI operating system. With each upgrade, NVIDIA has moved toward higher software layers while bringing more hardware capabilities under its ecological control.

CUDA was the starting point of this strategy, making NVIDIA's GPUs the de facto standard for AI research.

NVLink and InfiniBand were the next steps, bringing the organization of multi-GPU systems under NVIDIA's design purview.

Vera Rubin is the latest extension of this route: CPUs, GPUs, DPUs, and interconnect networks are all developed in-house or deeply integrated, with the entire computing system becoming an object that NVIDIA can define and optimize.

"It all starts here." This phrase carries deeper meaning than it sounds: NVIDIA is positioning itself as the computational origin point of the AI era, not just a supplier.

From 2025 to 2027, the AI hardware market is expected to reach $1 trillion, with NVIDIA anticipated to capture a significant share through its Blackwell and Rubin platforms.

Whether this figure holds depends on several premises: whether AI inference demand explodes as expected, whether data center capital expenditures continue to increase, and whether NVIDIA can maintain its market share amid competition.

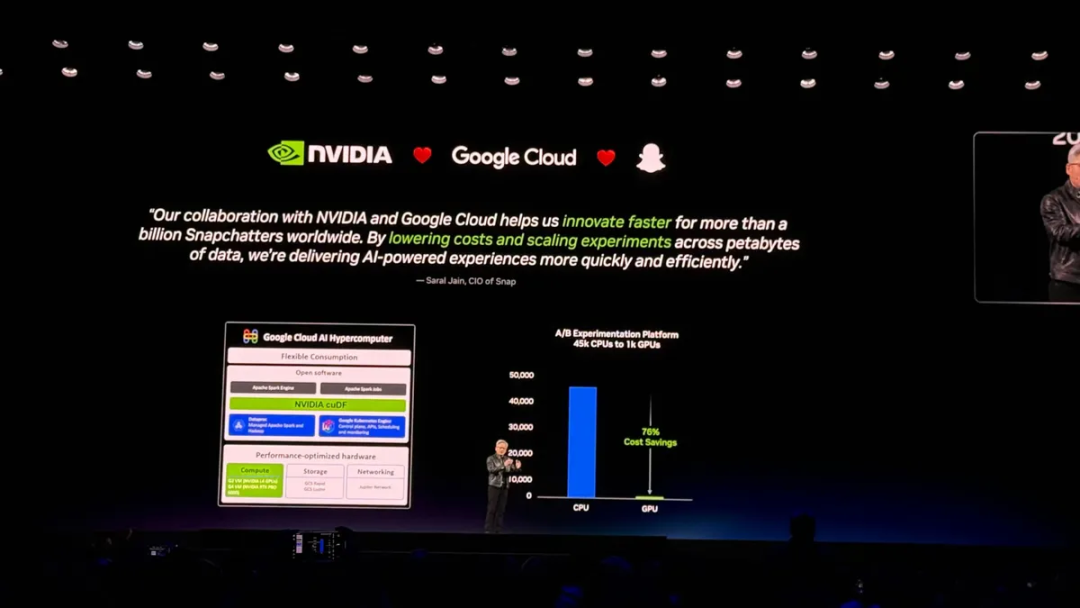

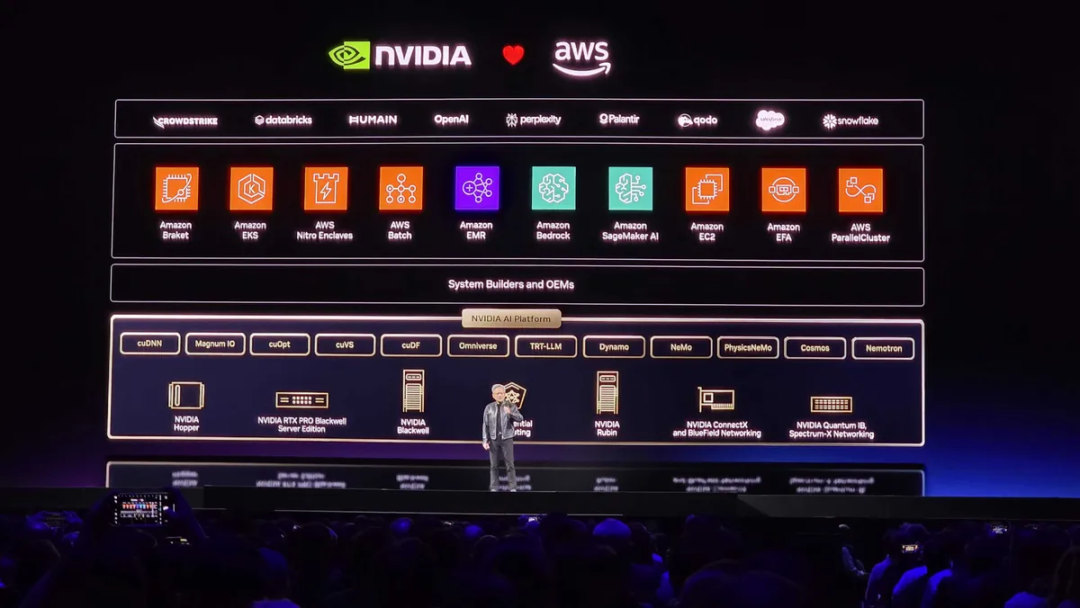

Currently, NVIDIA's AI ecosystem covers major cloud providers like AWS, Google Cloud, Microsoft, and Oracle, as well as vertical industries such as autonomous driving, healthcare, robotics, and manufacturing. However, the stability of this ecosystem is being tested by an increasing number of challengers.

● Competitive Landscape: The Silent Challengers

At this GTC, NVIDIA's competitors maintained a relatively low profile. However, this does not mean the threat is diminishing.

◎ AMD's MI400 series is launching an offensive against NVIDIA's high-end inference market, already demonstrating competitiveness in certain specific workloads.

◎ Google's TPU v5 and AWS Trainium 2 represent another route—hyperscale cloud providers are replacing purchased GPUs with in-house chips, gradually transforming from NVIDIA's largest customers into potential competitors.

This structural contradiction of "being both a buyer and a competitor" is one of the most challenging strategic issues NVIDIA will face in the coming years.

One of the core hardware components of the Vera Rubin platform is HBM4 high-bandwidth memory, a market currently dominated by three companies: SK Hynix, Samsung, and Micron.

The supply capacity of HBM directly determines the production capacity ceiling for AI chips. The tight supply of Blackwell over the past two years was partly due to constraints in HBM production capacity. As Vera Rubin enters mass production, the supply chain competition surrounding high-bandwidth memory will intensify further.

Whoever achieves large-scale mass production of HBM4 first will directly influence the ramp-up speed of NVIDIA and even the entire AI computing power market. This is a topic not mentioned on stage at GTC but widely discussed offstage.

Summary

Every year, GTC uses new terminology to describe an even grander ambition. From GPUs to accelerated computing, from accelerated computing to AI factories, from AI factories to Physical AI, and now to orbital data centers—with each expansion, NVIDIA pushes the boundaries of what "computing" means.