NVIDIA GTC, the "Spring Festival Gala" of the AI World: High Anticipation, but a Letdown in the End?

03/17 2026

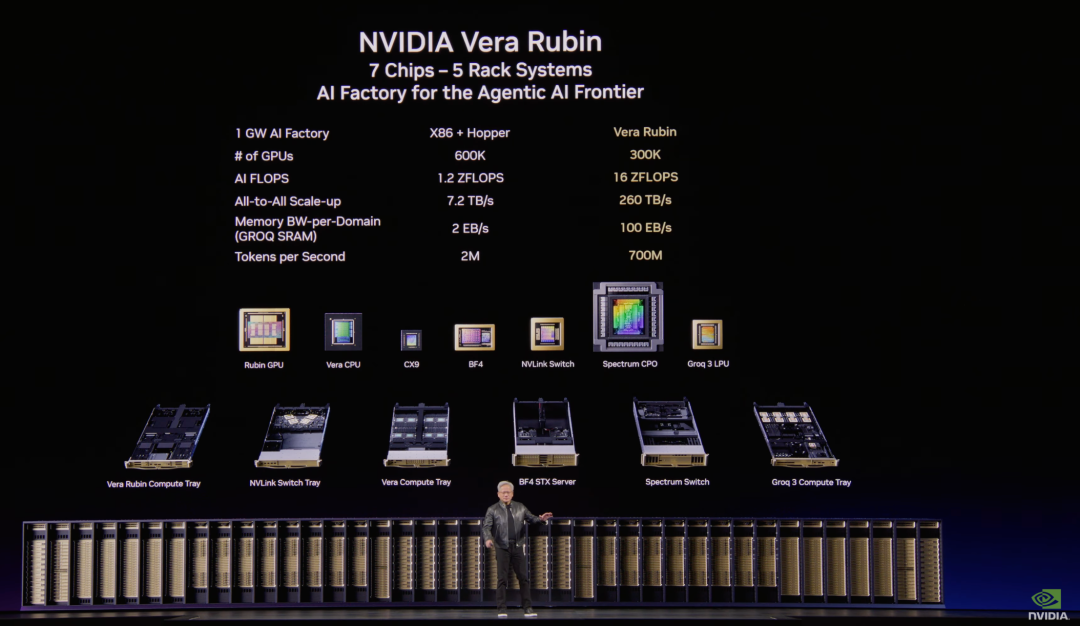

On March 16, 2026, NVIDIA founder and CEO Jensen Huang delivered a keynote speech at GTC 2026, covering core topics such as the 20th anniversary of the CUDA platform, the inflection point of inference and the explosion in computing power demand, the Vera Rubin system architecture, Groq integration, the OpenClaw agent revolution, and physical AI and robotics.

I. Key Highlights of GTC 2026

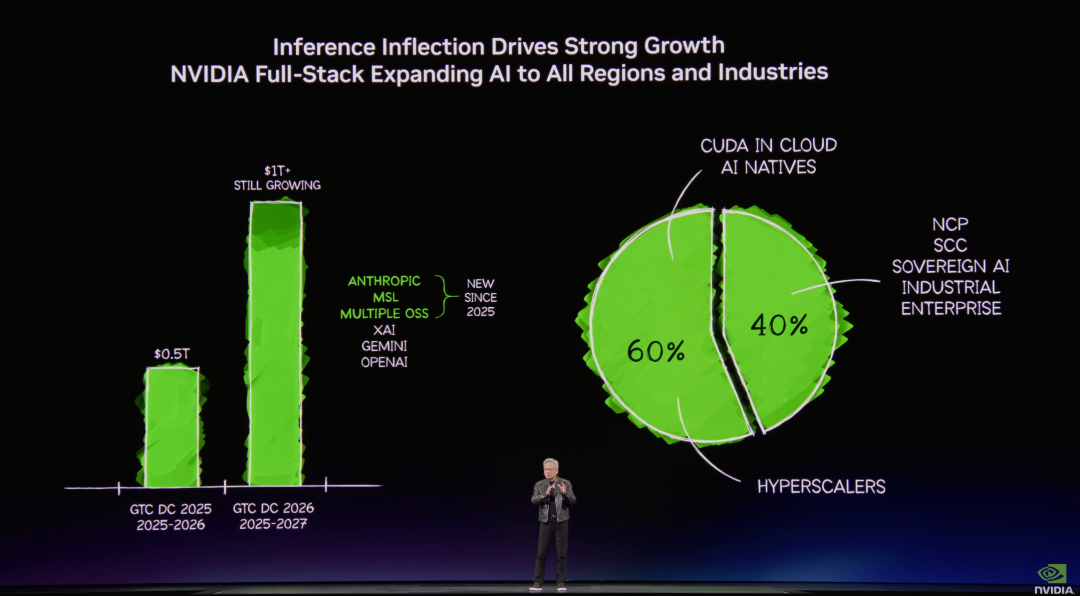

1) Data Center Revenue Outlook: Cumulative data center revenue from 2025 to 2027 is expected to reach $1 trillion (last year's GTC projected $500 billion in cumulative revenue for 2025 to 2026). This was in line with expectations: mainstream market estimates had already risen above $1 trillion, and investors were hoping instead for specific information on orders.

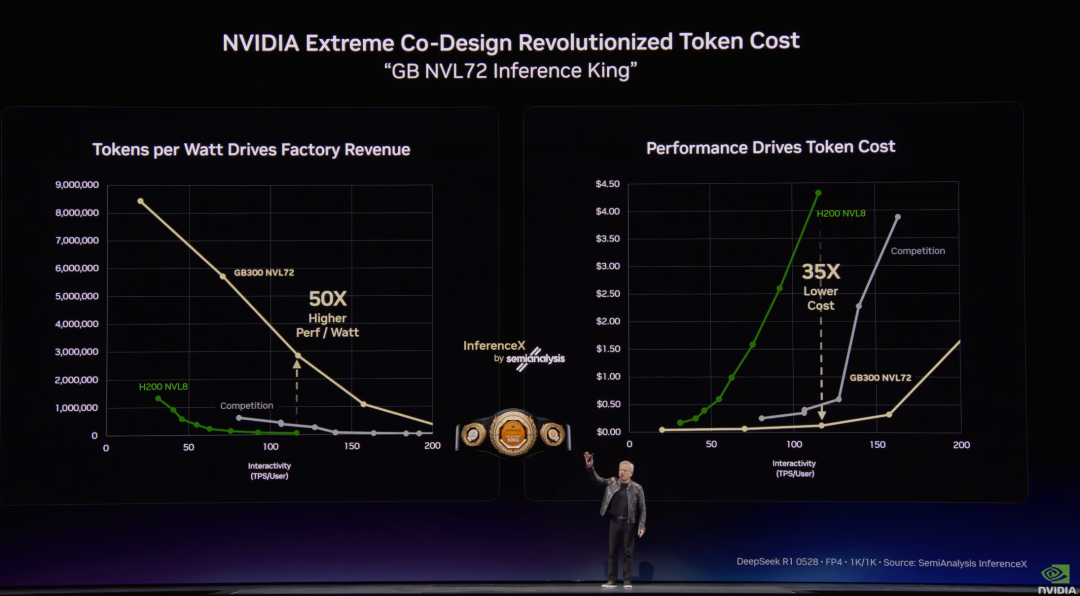

2) Performance and Cost: According to NVIDIA, it leads the world in both tokens per watt (throughput) and token speed (intelligence), giving it the lowest cost per token.

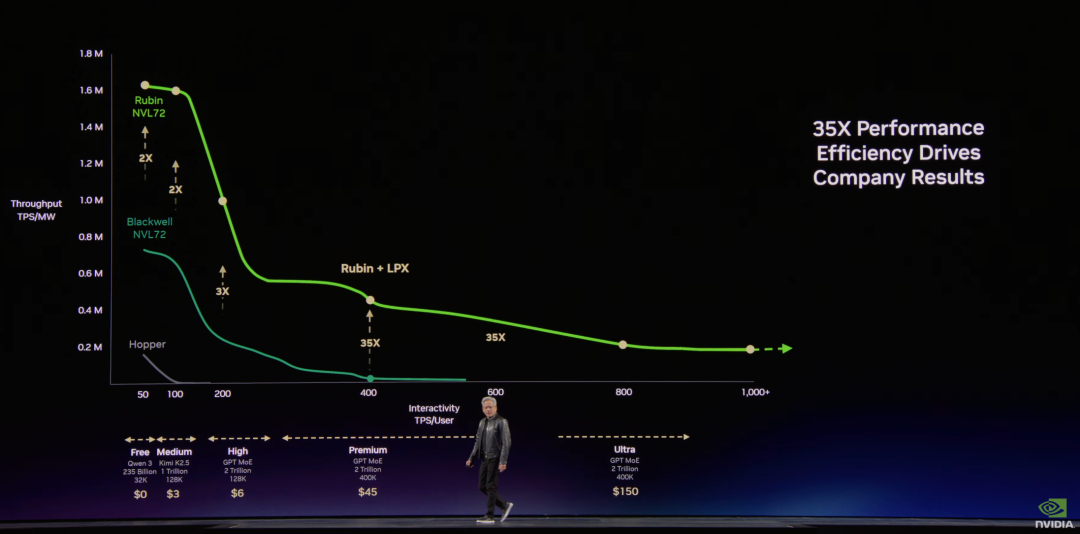

3) Data Centers as 'Token Factories': Each factory is constrained by its power budget (e.g., 1 GW) and must manage both the throughput and the speed of its token production.

Tokens will be segmented into tiers like commodities: Free tier (high throughput, low speed) -> $3/million tokens tier -> $6/million tokens tier -> $45/million tokens tier -> $150/million tokens tier (top-tier low-latency, high-bandwidth computing power).

Taking a 1 GW data center as an example, with 25% of its power allocated to each tier: Grace Blackwell can generate 5x the revenue of Hopper, and Vera Rubin can raise that by a further 5x over Grace Blackwell (roughly 25x Hopper).
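The tier arithmetic above can be made concrete with a small revenue model. This is a sketch under stated assumptions, not NVIDIA's methodology: the `Tier` fields, the per-MW throughput inputs, and the function name are hypothetical, and the article gives tier prices but no throughput figures. It shows why, at a fixed power budget, revenue scales with the tokens each megawatt produces at each price point, which is the lever behind the generation-over-generation multiples quoted above.

```python
from dataclasses import dataclass

SECONDS_PER_YEAR = 365 * 24 * 3600  # 31,536,000 s


@dataclass
class Tier:
    name: str
    price_per_million: float      # USD per million tokens
    tokens_per_sec_per_mw: float  # hypothetical throughput achieved per MW in this tier
    power_share: float            # fraction of the factory's power assigned to this tier


def annual_revenue(power_mw: float, tiers: list[Tier]) -> float:
    """Yearly token revenue (USD) of a power-limited 'token factory'."""
    if sum(t.power_share for t in tiers) > 1.0 + 1e-9:
        raise ValueError("power shares exceed the factory's power budget")
    revenue = 0.0
    for t in tiers:
        # Tokens produced in a year by the slice of power assigned to this tier.
        tokens = power_mw * t.power_share * t.tokens_per_sec_per_mw * SECONDS_PER_YEAR
        revenue += tokens / 1e6 * t.price_per_million
    return revenue
```

A 1 GW factory would be `annual_revenue(1000, tiers)` with `power_share=0.25` per tier. Because revenue is linear in `tokens_per_sec_per_mw`, hardware that delivers 5x the tokens per MW in every tier yields 5x the revenue from the same power.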

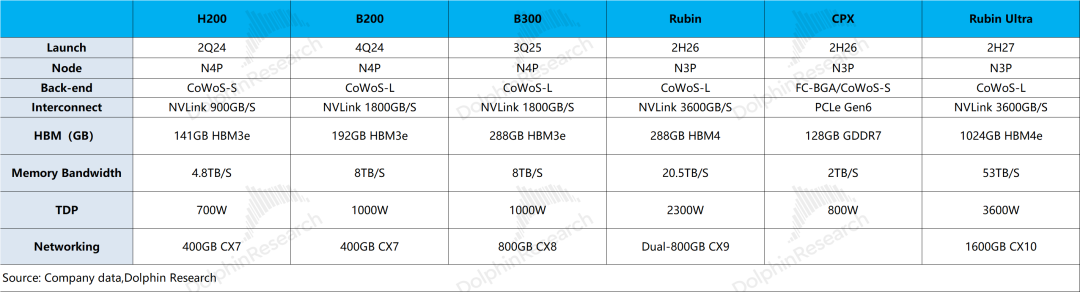

4) Vera Rubin: In addition to the six chips previously announced for the platform, a new chip, the Groq 3 LPU, has been added.

①Vera Rubin: 100% liquid-cooled (45°C hot water cooling), all cables eliminated, installation time reduced from two days to two hours;

②CPO (Co-Packaged Optics) Spectrum-X Switch: Now in full volume production, co-developed with TSMC;

③CPU: According to NVIDIA, Vera is the world's only data center CPU to use LPDDR5X memory. It will also be sold standalone and is expected to become a multi-billion-dollar business;

The Vera CPU tray targets agentic workloads. A single tray integrates eight Vera processors, each with 88 cores and 8-channel LPDDR5X memory delivering 1.2 TB/s of bandwidth per socket, plus two BlueField-4 DPUs.

④Vera Rubin: The first rack is already live on Microsoft Azure. NVIDIA's supply chain can now produce thousands of systems per week, adding AI factory capacity on the order of gigawatts each month;

⑤Rubin Ultra: Whereas Rubin slides horizontally into the cabinet, Rubin Ultra is inserted vertically into a new rack, Kyber, which places 144 GPUs in a single NVLink domain, with NVLink switches behind the midplane replacing copper cables.

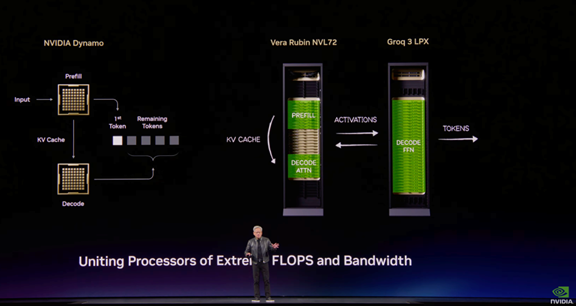

5) Groq 3 LPU (New Chip): As expected, the SRAM-based Groq LPU is used alongside HBM rather than replacing it.

The technology comes from the acquired Groq team. The Groq LP30 is manufactured by Samsung and is expected to ship in the third quarter.

A single Groq chip has 500 MB of SRAM, versus 288 GB of HBM on a single Rubin GPU, so Groq alone cannot hold the parameters and KV cache of mainstream large models.
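The capacity gap can be checked with simple arithmetic: 288 GB is 576 times 500 MB. The sketch below, with a hypothetical helper name and a hypothetical 70-billion-parameter model stored at 1 byte per parameter (FP8), counts the minimum number of chips needed just to hold the weights, ignoring the KV cache and activations.

```python
import math

GROQ_SRAM_BYTES = 500e6  # 500 MB of SRAM per Groq chip (article figure)
RUBIN_HBM_BYTES = 288e9  # 288 GB of HBM per Rubin GPU (article figure)


def chips_for_weights(num_params: float, bytes_per_param: float, bytes_per_chip: float) -> int:
    """Minimum number of chips whose combined memory can hold the weights alone.

    Deliberately optimistic: ignores the KV cache, activations, and any
    memory reserved by the runtime.
    """
    return math.ceil(num_params * bytes_per_param / bytes_per_chip)


# Hypothetical 70B-parameter model stored in FP8 (1 byte per parameter).
print(chips_for_weights(70e9, 1.0, GROQ_SRAM_BYTES))  # 140
print(chips_for_weights(70e9, 1.0, RUBIN_HBM_BYTES))  # 1
```

Even before counting the KV cache, such a model would have to be spread across at least 140 Groq chips, which is why the LPU is paired with HBM-rich GPUs rather than used on its own.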

Solution: NVIDIA's Dynamo software splits inference into separate stages:

1. Prefill stage: The model processes the user's input prompt in a batch. This stage is compute-heavy and runs on Vera Rubin;

2. Decoding attention phase: Computes the relationship between the token currently being generated and all previous tokens, which are stored in the KV cache (the model's memory of the conversation). This task is both compute- and memory-intensive, so it also runs on Vera Rubin, reading frequently from Rubin's HBM;

3. Decoding feed-forward network (FFN): Once the attention phase has established the context, the FFN layers process each token's representation, and the model then outputs a probability distribution over the next token and selects it, i.e., 'utters the word'.

At every decoding step, each layer must read its full set of weight parameters, yet each pass over the weights yields only one token per sequence. With the parameters stored in HBM, the compute units spent most of their time waiting for data to arrive from HBM; this is the real 'memory wall' bottleneck.
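The memory wall in step 3 can be quantified with a simple bandwidth bound. This is a minimal sketch assuming decoding is purely memory-bound: every decode step streams all weights once, and compute time, KV-cache reads, and communication are ignored. The function name and example numbers are hypothetical.

```python
def decode_speed_bounds(weight_bytes: float, bandwidth_bytes_per_s: float, batch_size: int = 1):
    """Idealized upper bounds for memory-bound decoding.

    Assumes each decode step streams every weight once from memory and that
    this transfer dominates the step time (compute, KV-cache reads, and
    communication are ignored).
    Returns (tokens/s for one user, aggregate tokens/s across the batch).
    """
    per_user = bandwidth_bytes_per_s / weight_bytes  # decode steps per second
    aggregate = per_user * batch_size  # one weight pass serves every sequence in the batch
    return per_user, aggregate


# Hypothetical: 70 GB of weights read at a hypothetical 8 TB/s.
per_user, aggregate = decode_speed_bounds(70e9, 8e12, batch_size=32)
print(f"{per_user:.0f} tokens/s per user, {aggregate:.0f} tokens/s in aggregate")
```

Under these assumptions the per-user ceiling is about 114 tokens per second no matter how large the batch is: batching raises total throughput, but per-user speed only improves if the weights are read from faster memory, which is the motivation for moving them into on-chip SRAM.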

With decoding split into these two phases in software, the model's working 'contextual memory' (the KV cache) stays in HBM, while most of the model parameters move to Groq's on-chip SRAM. SRAM can serve these weights with extremely low latency, easing the slow token-generation problem.

Rubin and Groq are tightly coupled over Ethernet, and a special RDMA connection mode cuts the communication latency between the two chips by about half.
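Why the link latency matters can be seen in a toy per-token latency model. This is a sketch under a labeled assumption, not a description of Dynamo's internals: it assumes activations cross the GPU-LPU link twice per layer (to the LPU after attention, back after the FFN) with no overlap between stages. The `LayerTimes` type, the function name, and all timings are hypothetical; only the phase placement comes from the article.

```python
from dataclasses import dataclass

# Phase placement as described in the article.
PLACEMENT = {
    "prefill": "Vera Rubin GPU",
    "decode_attention": "Vera Rubin GPU (KV cache in HBM)",
    "decode_ffn": "Groq LPU (weights in SRAM)",
}


@dataclass
class LayerTimes:
    attention_s: float  # attention time per layer on the GPU (hypothetical)
    ffn_s: float        # FFN time per layer on the LPU (hypothetical)
    link_s: float       # one-way GPU <-> LPU transfer latency (hypothetical)


def per_token_latency(num_layers: int, t: LayerTimes) -> float:
    """Decode latency for one token, assuming two link crossings per layer
    (GPU -> LPU after attention, LPU -> GPU after the FFN) and no overlap."""
    return num_layers * (t.attention_s + t.ffn_s + 2 * t.link_s)
```

In this model, halving `link_s` saves `num_layers * link_s` on every generated token, so a faster interconnect pays off in proportion to model depth.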

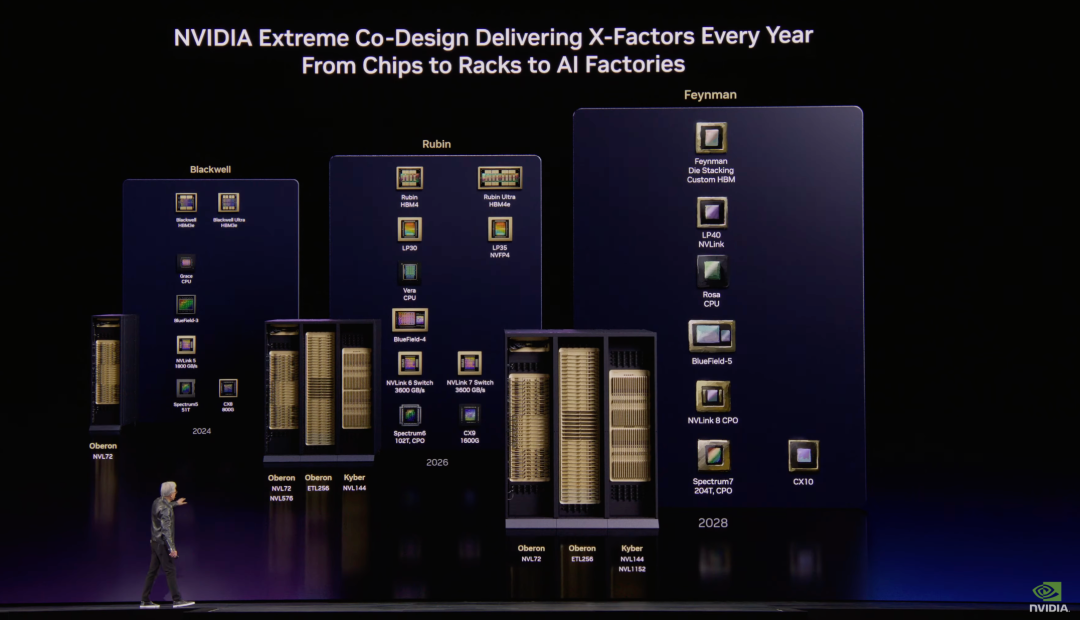

6) Feynman: A new GPU + LP40 (LPU) + Rosa CPU (named after Rosalind) + BlueField-5 + CX10.

Kyber copper cable scale-up + Kyber CPO scale-up (first time supporting both copper cable and CPO scale-up simultaneously). This means that even in the Feynman phase, a hybrid approach combining copper and CPO will be supported.

Although NVIDIA is bullish on the CPO solution in the long term, customers tend to maximize the use of copper cable solutions before switching to CPO (due to simpler deployment/maintenance).

7) Other Information:

①Space Data Centers: To address energy shortages, NVIDIA announced Vera Rubin Space-1, with plans to deploy data centers in space. This requires solving heat dissipation: in the vacuum of space there is no convection, and heat cannot be conducted away to the surroundings, so it can only be rejected by radiation;

②OpenClaw: Every SaaS company will become a GaaS company (Agent-as-a-Service).

Agent systems can access sensitive information, execute code, and communicate externally within enterprise networks—this requires enterprise-level security. NVIDIA has partnered with OpenClaw founder Peter Steinberger to launch NemoClaw (OpenClaw's enterprise security reference design), integrating OpenShell technology, including network guardrails and privacy routers, which can connect to the policy engines of various SaaS companies;

③Physical AI and Robotics: In autonomous driving, automakers such as BYD, Geely, Hyundai, and Nissan have joined the robotaxi effort in partnership with Uber. In robotics, manufacturers such as KUKA and ABB, along with many robot and drone platforms, are involved.
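The radiation-only constraint in the space data center item can be sized with the Stefan-Boltzmann law, where radiated power per unit area is emissivity × σ × T⁴. The sketch below is an idealized estimate; the function name, the 0.9 emissivity default, and the example load and temperature are illustrative assumptions, and it ignores absorbed sunlight, Earth's infrared, and view factors.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w: float, radiator_temp_k: float, emissivity: float = 0.9,
                     sink_temp_k: float = 0.0, radiating_sides: int = 1) -> float:
    """Area of an ideal flat radiator that rejects heat_w by thermal radiation alone.

    Uses the Stefan-Boltzmann law; ignores absorbed sunlight and Earth's infrared,
    view factors, and the temperature drop between chips and radiator.
    """
    flux = emissivity * STEFAN_BOLTZMANN * (radiator_temp_k**4 - sink_temp_k**4)  # W/m^2 per side
    if flux <= 0:
        raise ValueError("radiator must be hotter than the sink")
    return heat_w / (flux * radiating_sides)


# Hypothetical: reject 1 MW from a one-sided radiator at 300 K.
print(f"{radiator_area_m2(1e6, 300.0):.0f} m^2")
```

Under these assumptions, 1 MW needs roughly 2,400 m² of radiator. Because flux grows with the fourth power of temperature, running the radiator twice as hot cuts the area sixteenfold, which is why radiator temperature is a key design lever for orbital compute.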

Overall, beyond confirming that copper cables and CPO will be used together, the main addition at this launch was the option of Groq LPUs in servers. The market had fully anticipated this after the Groq acquisition, and even the three-year, $1 trillion revenue guidance had already been surpassed by market expectations.

Looking at NVIDIA's product iterations, its focus in recent years has shifted away from chip microarchitecture innovation. From Hopper to Blackwell, the main problems addressed were system composition and interconnect, as NVIDIA largely completed its transition from selling chips to selling systems and services.

From Blackwell to Rubin, both the newly added DPU (for NAND-based storage) and the LPU (SRAM) brought in through the Groq acquisition mainly address the memory wall, as AI enters the era of inference and agents.

II. NVIDIA's Recent Situation: Underwhelming Conference Guidance, in Need of a 'New Growth Story'

NVIDIA's stock price has largely oscillated within the $170-200 range over the past six months. Despite higher capital expenditures from major downstream customers and consistently better-than-expected results, the stock has failed to break out, mainly because of market concerns in several areas:

1) Sustainability of Major Players' Capital Expenditures: Companies such as Meta and Google have clearly raised their capital expenditures for 2026, and the four core cloud providers are expected to spend over $660 billion in 2026, up 60% year over year. However, capex as a share of revenue has already reached a relatively high level for these major players.

Taking Meta as an example, the company expects its capital expenditures to reach $115-135 billion in 2026, with capital expenditures/annual revenue exceeding 50%, leaving relatively limited room for further increases. Even though major players have increased their 2026 investment outlook, it's still difficult to allay market concerns about the sustainability of subsequent capital expenditure growth.
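The capex figures above can be cross-checked with two small calculations. This is a sketch with hypothetical helper names; the inputs are the article's figures in billions of USD. A 60% increase to $660 billion implies a 2025 base of about $412.5 billion, and Meta's $115-135 billion of capex exceeds 50% of revenue only if revenue is below twice that amount.

```python
def capex_intensity(capex: float, revenue: float) -> float:
    """Capital expenditure as a share of revenue."""
    return capex / revenue


def prior_year_value(current: float, yoy_growth: float) -> float:
    """Back out last year's value from this year's value and its year-over-year growth."""
    return current / (1.0 + yoy_growth)


def revenue_at_intensity(capex: float, intensity: float) -> float:
    """Revenue at which capex would equal the given share of revenue."""
    return capex / intensity


# Four core cloud providers: over $660B in 2026, up 60% YoY (article figures, $B).
print(round(prior_year_value(660, 0.60), 1))  # 412.5
# Meta: capex/revenue above 50% means revenue below twice capex.
print(revenue_at_intensity(115, 0.5), revenue_at_intensity(135, 0.5))  # 230.0 270.0
```

Once intensity sits near such a ceiling, capex can only keep growing as fast as the cloud providers' own revenue, which is the core of the sustainability concern.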

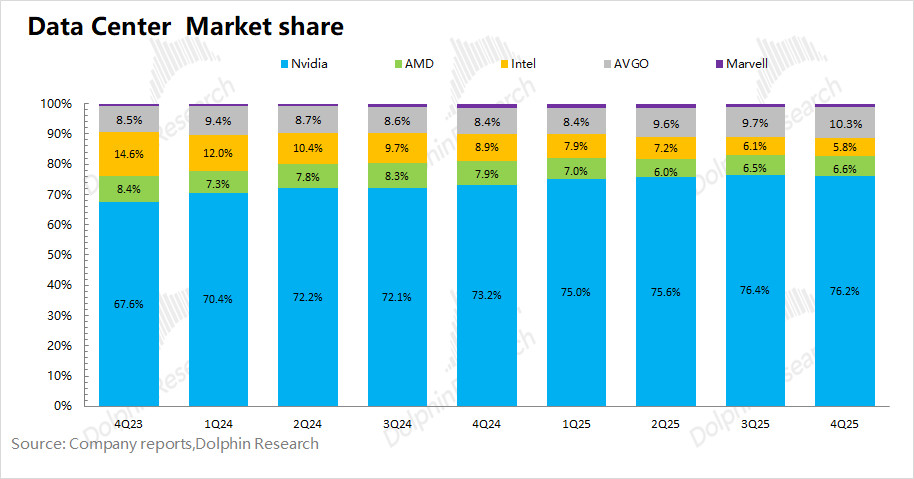

2) AI Chip Market Share: NVIDIA currently maintains over 75% market share in the AI chip market. Its high prices and 'near-monopoly' market structure have prompted downstream cloud providers to seek 'alternatives'.

Beyond Google's own TPUs, Broadcom (AVGO) has clearly secured large orders from Anthropic, OpenAI, and others, and multiple customers are also launching in-house solutions. Even with Rubin on the roadmap, the market generally expects NVIDIA's share of the AI chip market to decline gradually.

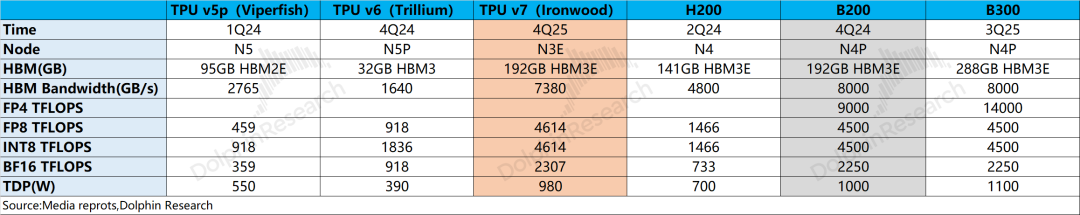

3) Product Competitiveness: Google's TPUv7 now performs roughly on par with NVIDIA's B200 (in mass production since Q4 2024) in areas such as FP8, which puts Google's TPU about a year behind NVIDIA.

NVIDIA introduced the NVFP4 format with the Blackwell series, which can double inference performance relative to FP8. However, FP8 already meets most current market demands, so TPUv7 has become a 'viable alternative'.
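NVFP4 stores each value as a 4-bit E2M1 number, with an FP8 (E4M3) scale factor shared by each block of 16 values and an additional per-tensor FP32 scale. The sketch below is a simplified, hypothetical illustration of that block scaling, not NVIDIA's implementation: it keeps the block scale as a Python float, omits the per-tensor scale, and breaks rounding ties toward the smaller magnitude. It also shows that the format averages about 4.5 bits per value, roughly 56% of FP8's footprint.

```python
# FP4 (E2M1) can represent these magnitudes; the sign is stored separately.
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
BLOCK_SIZE = 16  # NVFP4 shares one scale factor across 16 values


def quantize_block(values):
    """Simplified NVFP4-style quantization of one block of up to 16 values.

    Simplifications: the block scale stays a Python float (real NVFP4 encodes it
    as FP8 E4M3 and adds a per-tensor FP32 scale), and ties round toward the
    smaller magnitude instead of to-nearest-even.
    """
    if len(values) > BLOCK_SIZE:
        raise ValueError("block too large")
    amax = max((abs(v) for v in values), default=0.0)
    scale = amax / 6.0 if amax > 0 else 1.0  # map the largest magnitude onto 6.0, the E2M1 maximum
    codes = []
    for v in values:
        target = abs(v) / scale
        mag = min(E2M1_MAGNITUDES, key=lambda m: abs(m - target))  # nearest representable magnitude
        codes.append(mag if v >= 0 else -mag)
    return scale, codes


def dequantize_block(scale, codes):
    """Reconstruct approximate values from the block scale and 4-bit codes."""
    return [scale * c for c in codes]


def storage_bits_per_value(element_bits=4, scale_bits=8, block_size=BLOCK_SIZE):
    """Average bits per value including the shared per-block scale (per-tensor scale ignored)."""
    return element_bits + scale_bits / block_size
```

For example, `quantize_block([12.0, 5.6])` picks a scale of 2.0 and reconstructs `[12.0, 6.0]`, showing the rounding error that 4-bit formats trade for halved memory traffic.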

To counter competition, NVIDIA is locking in partners upstream and downstream through strategic investments and compute commitments: for example, its strategic investments in OpenAI ($30 billion) and Anthropic ($10 billion), tied to chip deployments, and its compute support of millions of GPUs for Meta's new AI lab, MSL, with some agreements implying price cuts to lock in customer demand.

Given these concerns, the company's valuation is relatively low. Dolphin Research estimates that, based on cumulative data center revenue of $1.15 trillion for FY25-27 (above the company's current $1 trillion guidance), NVIDIA's current market cap ($4.4 trillion) corresponds to a PE of about 13x FY2028 (roughly CY2027) net profit, assuming a two-year revenue CAGR of 64%, a gross margin of 72%, and a tax rate of 18%.
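The valuation arithmetic can be laid out step by step. This is a sketch of the general method, not Dolphin Research's exact model: the function names are hypothetical, and the operating expense ratio and the revenue base to which the CAGR applies are not given in the article, so they are left as inputs. From the stated figures alone, a $4.4 trillion market cap at about 13x implies FY2028 net profit of roughly $338 billion.

```python
def implied_net_profit(market_cap: float, pe: float) -> float:
    """Net profit implied by a market cap and a P/E multiple."""
    return market_cap / pe


def project_revenue(base_revenue: float, cagr: float, years: int) -> float:
    """Compound a revenue base forward at a constant annual growth rate."""
    return base_revenue * (1.0 + cagr) ** years


def net_profit(revenue: float, gross_margin: float, opex_ratio: float, tax_rate: float) -> float:
    """Simple P&L: (gross profit - operating expenses) after tax.

    opex_ratio (opex / revenue) is a hypothetical input; the article does not state it.
    """
    return revenue * (gross_margin - opex_ratio) * (1.0 - tax_rate)


# Article figures: $4.4T market cap at ~13x FY2028 net profit ($B).
print(round(implied_net_profit(4400, 13)))  # 338
```

With the article's 64% CAGR, two years of growth multiply the revenue base by about 2.69; the 72% gross margin and 18% tax rate then feed `net_profit`, and the resulting PE depends heavily on the assumed opex ratio.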

NVIDIA delivered better-than-expected results last quarter, but its stock price did not rise. This is mainly because 2027 revenue expectations are already fully priced in, and the market worries that, with downstream cloud providers' capex intensity already above 50%, there is very limited room for capex to keep rising.

In theory, because NVIDIA's revenue is derived from cloud providers' capex, if those customers merely hold capex steady at today's high level, NVIDIA's revenue from cloud customers would stop growing. The market is therefore reluctant to assign NVIDIA a high valuation beyond 2027, which is why the stock trades at only about 13x 2027 earnings and investors show little appetite for building positions.

Judging from this GTC, the 'over $1 trillion in cumulative data center revenue by 2027' given by Jensen Huang had already been surpassed by market expectations.

The keynote devoted more time to product promotion and roadmap planning, with the bigger implications falling on companies along the supply chain (CPO and copper will still be used together, and the LPU and HBM will handle different tasks), and offered relatively little incremental information about NVIDIA itself.

For NVIDIA's PE to rise again, Dolphin Research believes that, in addition to larger-scale and faster deployment of AI applications, a new "growth curve" will be needed to drive it, such as "physical AI" and "space computing power."

Dolphin Research will continue to update the detailed content of this GTC conference in the "Trends-Depth (Investment Research)" section of the Longbridge App. Stay tuned.

- END -

// Reprint with Permission

This article is an original piece from Dolphin Research. Reprinting is only allowed with authorization.

// Disclaimer and General Disclosure Notice

This report is intended solely for general comprehensive data purposes, designed for general viewing and data reference by users of Dolphin Research and its affiliated entities. It does not take into account the specific investment objectives, investment product preferences, risk tolerance, financial situation, or special needs of any individual receiving this report. Investors must consult with an independent professional advisor before making any investment decisions based on this report. Any person making investment decisions based on the content or information referenced in this report assumes all risks. Dolphin Research shall not be liable for any direct or indirect responsibilities or losses that may arise from the use of the data contained in this report. The information and data contained in this report are based on publicly available sources and are provided for reference purposes only. Dolphin Research strives to ensure, but does not guarantee, the reliability, accuracy, and completeness of the information and data.

The information or opinions mentioned in this report shall not, under any jurisdiction, be considered or construed as an offer to sell securities or an invitation to buy or sell securities, nor shall they constitute advice, inquiries, or recommendations regarding relevant securities or related financial instruments. The information, tools, and data contained in this report are not intended for, nor are they intended to be distributed to, jurisdictions where the distribution, publication, provision, or use of such information, tools, and data would contravene applicable laws or regulations, or would result in Dolphin Research and/or its subsidiaries or affiliated companies being subject to any registration or licensing requirements in such jurisdictions, or to citizens or residents of such jurisdictions.

This report merely reflects the personal views, insights, and analytical methods of the relevant creators and does not represent the stance of Dolphin Research and/or its affiliated entities.

This report is produced by Dolphin Research, and the copyright is solely owned by Dolphin Research. Without the prior written consent of Dolphin Research, no institution or individual shall (i) make, copy, reproduce, duplicate, forward, or create any form of copies or reproductions in any manner, and/or (ii) directly or indirectly redistribute or transfer them to other unauthorized persons. Dolphin Research reserves all related rights.