The New Battle of Shovel Sellers: Jensen Huang’s Vision of a Trillion-Dollar AI 'Token Factory'

03/18 2026

Editor | Zhang Lianyi

On the GTC 2026 stage, NVIDIA CEO Jensen Huang’s keynote shifted its focus from chips to a blueprint for a 'factory.'

This factory doesn’t produce steel or assemble cars. Its product is intangible: Tokens. Huang told the audience that by 2027, global demand for this factory’s output would reach at least $1 trillion. 'I’m certain actual compute demand will be far higher,' he added.

The forecast is consistent with the growth path he outlined on the FY2026 Q4 earnings call, a path he went on to dissect over the course of two hours.

With this, NVIDIA officially recast itself from a 'chip company' into an 'AI infrastructure and factory company.'

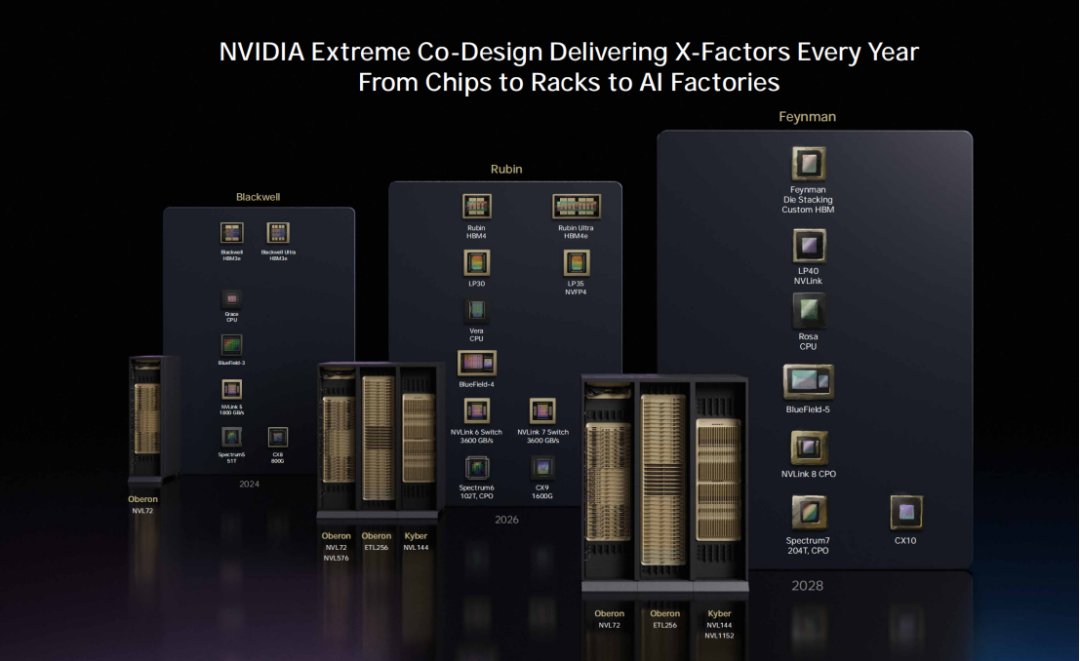

Of course, chips will still evolve. Huang teased the next-gen architecture, Feynman, built on TSMC’s 1.6nm process with optical interconnects and intended to deliver large gains in compute and energy efficiency to support a million-fold increase in compute demand.

01

NVIDIA’s Identity Evolution

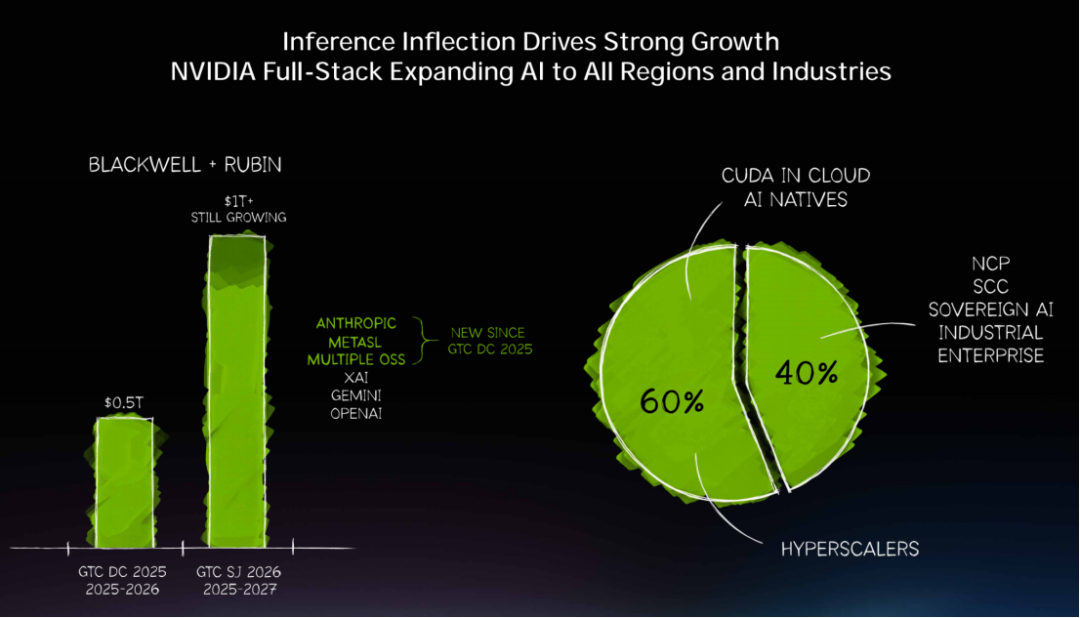

'This time last year, I said we saw $500 billion in high-conviction demand. Right here, right now, I see at least $1 trillion by 2027,' Huang stated during his speech.

However, the narrative shifted. Instead of GPU sales, he discussed building low-cost 'Token factories.'

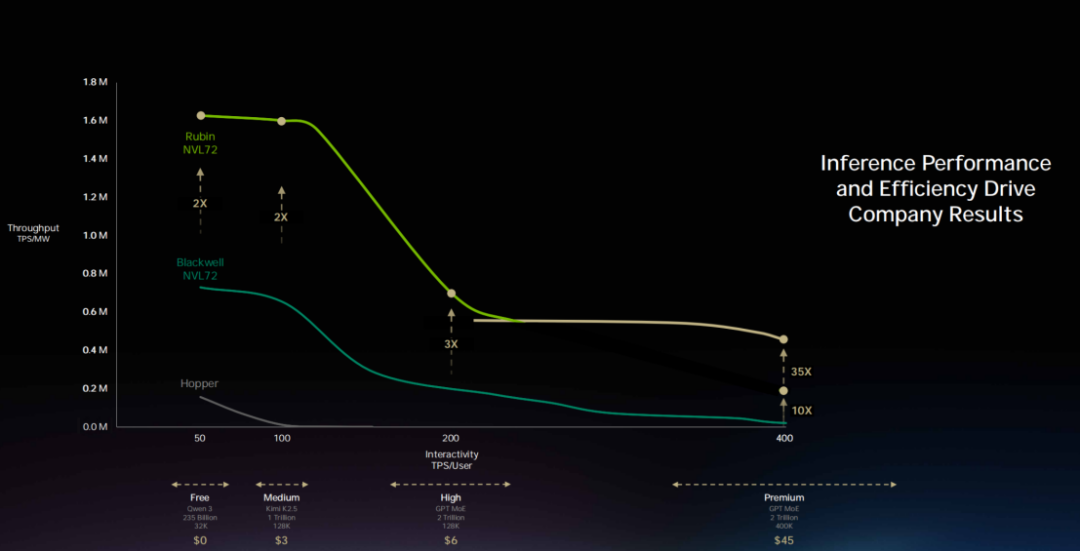

'Every data center, every factory, is fundamentally power-constrained. A 1GW factory will never become 2GW—that’s physics,' Huang said. 'At fixed power, whoever achieves the highest throughput per watt has the lowest production costs.'

This represents a radical mindset shift: Data centers are no longer warehouses for files but factories producing Tokens. NVIDIA is no longer just a supplier of 'production equipment' but the designer, builder, and standard-setter for entire factories.

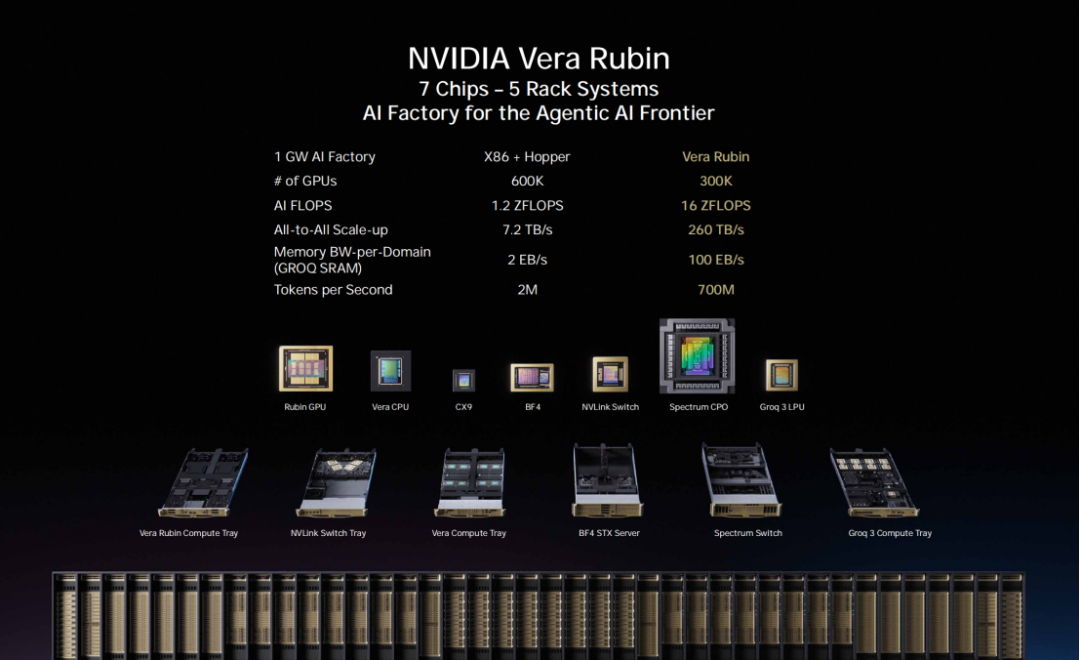

The launch of the Vera Rubin system embodies this transformation. 'With Hopper, I’d hold up a chip—that was cute. But with Vera Rubin, it’s the entire system,' Huang said.

This 100% liquid-cooled, cable-free system reduces rack installation time from two days to two hours. Critically, it boosts token generation rates in a 1GW AI factory from 2 million tokens/second to 700 million tokens/second—a 350x improvement. By comparison, Moore’s Law delivers just ~1.5x gains in the same period.
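The throughput claim can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted above (a 1GW power budget, 2 million and 700 million tokens per second); the constant and function names are illustrative and do not come from NVIDIA.

```python
# Figures quoted in the article; the names are illustrative only.
FACTORY_POWER_WATTS = 1e9            # 1 GW power budget
BASELINE_TOKENS_PER_SEC = 2e6        # quoted rate before Vera Rubin
VERA_RUBIN_TOKENS_PER_SEC = 700e6    # quoted rate with Vera Rubin


def tokens_per_joule(tokens_per_sec: float, power_watts: float) -> float:
    """Tokens/s divided by watts (J/s) gives tokens produced per joule."""
    return tokens_per_sec / power_watts


def speedup(new_rate: float, old_rate: float) -> float:
    """Ratio of the new throughput to the old one at the same power."""
    return new_rate / old_rate


print(speedup(VERA_RUBIN_TOKENS_PER_SEC, BASELINE_TOKENS_PER_SEC))
print(tokens_per_joule(BASELINE_TOKENS_PER_SEC, FACTORY_POWER_WATTS))
print(tokens_per_joule(VERA_RUBIN_TOKENS_PER_SEC, FACTORY_POWER_WATTS))
```

Because the power budget is fixed, the gain in tokens per second is also the gain in tokens per joule, which is the quantity Huang ties to production cost.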

This isn’t 'selling chips'—it’s 'selling entire factories.'

02

Tokens as the New Oil

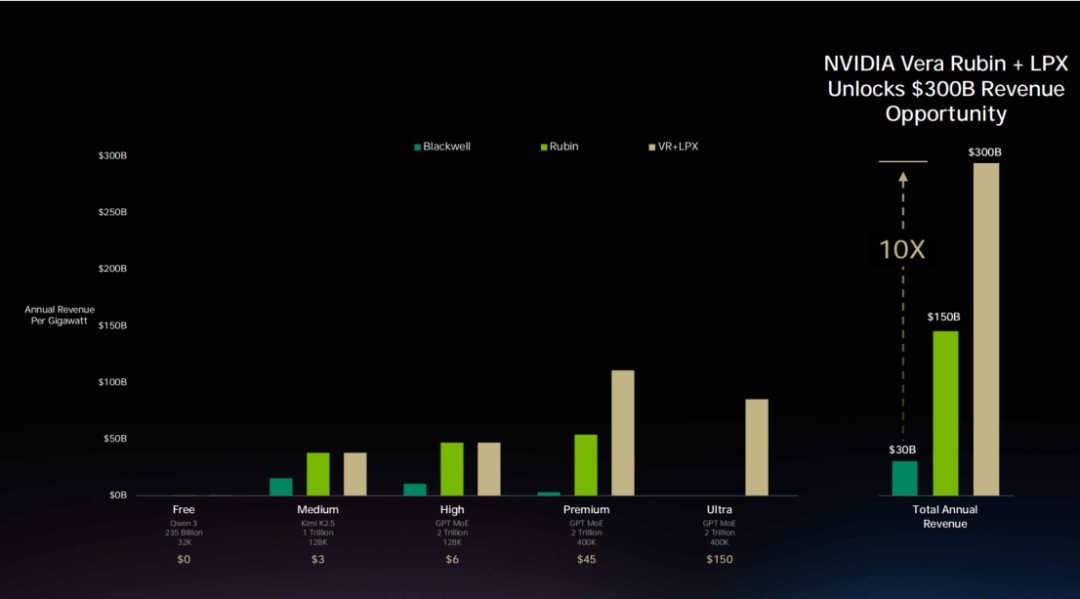

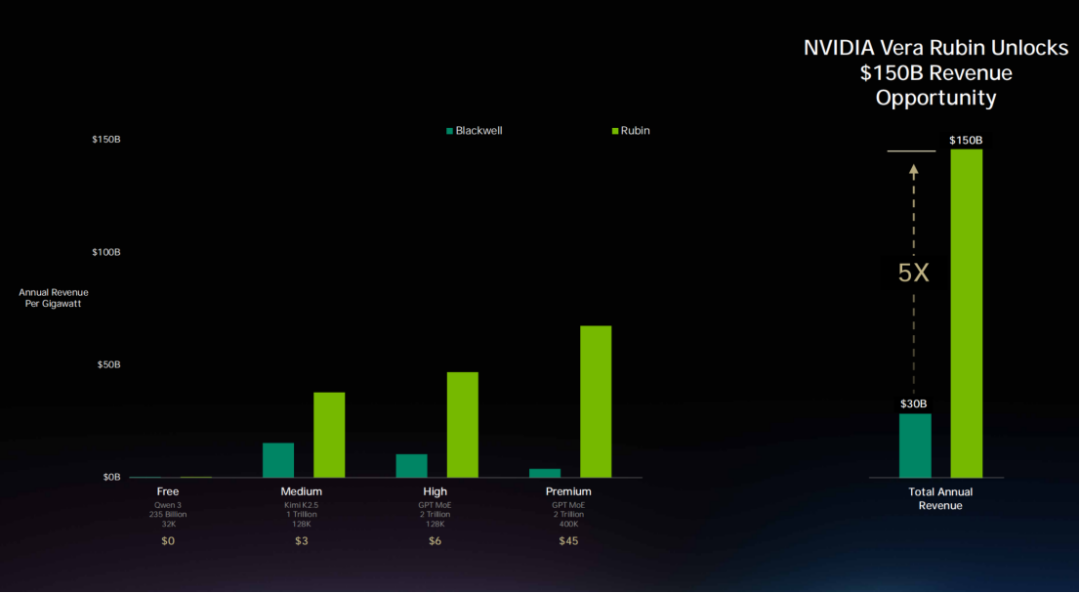

Underpinning this $1 trillion projection is Huang’s 'Token Factory Economics.'

He divided future AI services into five commercial tiers: Free (high throughput, low speed), Mid-tier (~$3 per million tokens), Premium (~$6 per million tokens), High-Speed (~$45 per million tokens), and Ultra-High-Speed (~$150 per million tokens).

'In this Token factory, your throughput and token generation speed directly translate into next year’s precise revenue,' Huang stated.

This framework turns compute into something that can be priced. Tokens become a commodity like oil or electricity, and NVIDIA’s architecture determines how cheaply clients can produce them.

In a simplified model that assigns a 25% share to each of four tiers, Grace Blackwell could generate 5x more revenue than Hopper. At the highest-value inference tier, the performance gain reaches 35x.
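A minimal sketch of how such a tiered revenue model might be computed follows. The per-million-token prices are the ones quoted above. Everything else is an assumption made for illustration: the article does not say which four tiers the model covers (the four paid tiers are assumed here), and the per-tier throughput numbers are hypothetical placeholders, not NVIDIA figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    usd_per_million_tokens: float        # price quoted in the article
    tokens_per_sec_at_full_power: float  # HYPOTHETICAL: not from the article


# Assumption: faster tiers yield fewer total tokens per second at fixed power.
PAID_TIERS = [
    Tier("Mid-tier", 3.0, 400e6),
    Tier("Premium", 6.0, 200e6),
    Tier("High-Speed", 45.0, 40e6),
    Tier("Ultra-High-Speed", 150.0, 10e6),
]


def revenue_per_second(tiers: list, share: dict) -> float:
    """Sum of (share of factory) x (tier throughput) x (tier price)."""
    if abs(sum(share.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    total = 0.0
    for tier in tiers:
        tokens = tier.tokens_per_sec_at_full_power * share[tier.name]
        total += tokens / 1e6 * tier.usd_per_million_tokens
    return total


# Equal 25% split across the four tiers, as in the simplified model.
equal_split = {tier.name: 0.25 for tier in PAID_TIERS}
print(revenue_per_second(PAID_TIERS, equal_split))
```

Comparing two hardware generations in this model amounts to swapping in a second set of throughput numbers and taking the ratio of the two results.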

This is what Huang meant by 'lowest-cost infrastructure.' Because NVIDIA hardware can run AI models across nearly every domain, clients’ infrastructure investments stay fully utilized and have long useful lives.

Currently, 60% of NVIDIA’s business comes from the top five hyperscalers, with the remaining 40% spread across regional clouds, sovereign clouds, enterprises, industries, robotics, edge computing, and more. 'AI’s broad coverage is its resilience,' Huang said. 'This is undoubtedly a new computing platform revolution.'

03

Hardware, Software, and Ecosystem Synergy

NVIDIA’s 'shovel' isn’t a single product but a trinity of hardware, software, and ecosystem—far harder to replace.

Hardware-wise, Vera Rubin is a complete, end-to-end optimized system designed for agentic workloads.

The new Vera CPU is designed for extreme single-thread performance and uses LPDDR5 memory for exceptional energy efficiency. It is the world’s only LPDDR5-based data center CPU and is well suited to the tool calls that AI agents make.

For storage, BlueField 4+CX 9 is a new AI-era storage platform adopted by 100% of the global storage industry.

The CPO Spectrum X switch, the world’s first co-packaged optical Ethernet switch, is in full production.

The Kyber rack is a new rack system supporting 144 GPUs in a single NVLink domain, with front-end compute and back-end NVLink switching forming a massive computer.

Rubin Ultra, the next-gen supercomputing node, features a vertical design for larger-scale NVLink interconnects with Kyber racks.

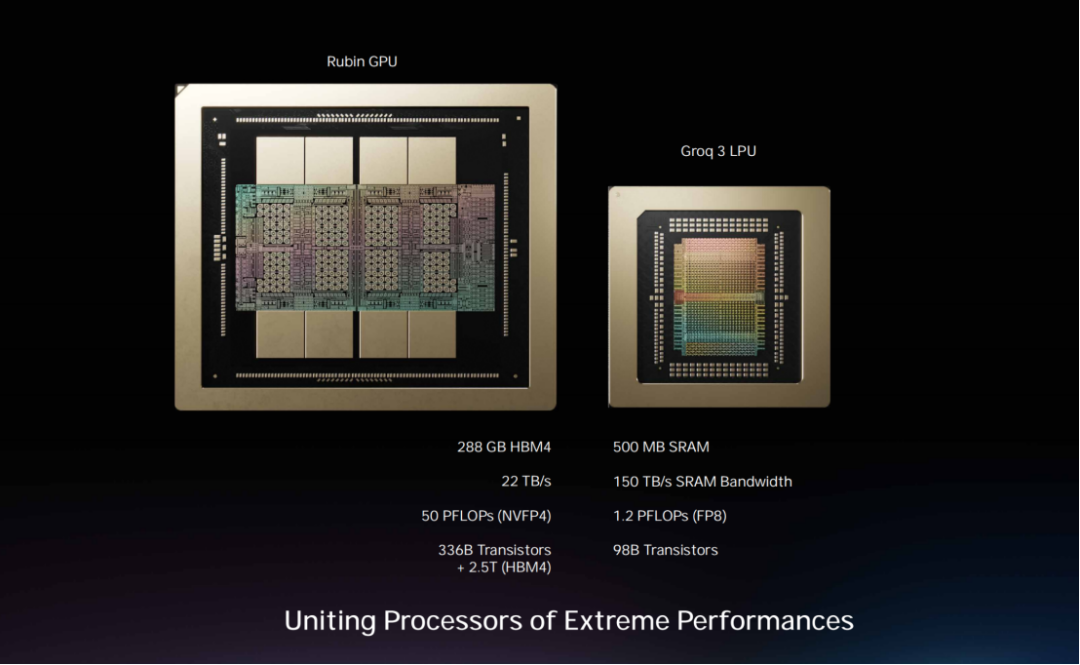

More notable is NVIDIA’s integration of Groq.

Groq chips have 500MB of SRAM, while a single Rubin chip has 288GB of memory—vastly different characteristics. NVIDIA’s Dynamo software system assigns computationally intensive 'prefill' stages to Vera Rubin and latency-sensitive 'decoding' stages to Groq.

'If your workload is high-throughput, use 100% Vera Rubin. If you need high-value programming-level token generation, allocate 25% of your data center to Groq,' Huang recommended.
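The split between the two stages can be sketched as a simple routing rule. This is not Dynamo's API: the `Phase`, `Pool`, `split_racks`, and `route` names are hypothetical, and measuring the data center in racks is an assumption made only to keep the example concrete.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Phase(Enum):
    PREFILL = "prefill"  # compute-intensive: processes the whole prompt
    DECODE = "decode"    # latency-sensitive: emits one token at a time


@dataclass(frozen=True)
class Pool:
    name: str
    racks: int


def split_racks(total_racks: int, decode_fraction: float) -> dict[Phase, Pool]:
    """Reserve a fraction of the racks for a dedicated decode pool."""
    if not 0.0 <= decode_fraction <= 1.0:
        raise ValueError("decode_fraction must be between 0 and 1")
    decode_racks = round(total_racks * decode_fraction)
    return {
        Phase.PREFILL: Pool("vera-rubin", total_racks - decode_racks),
        Phase.DECODE: Pool("groq", decode_racks),
    }


def route(phase: Phase, pools: dict[Phase, Pool]) -> Pool:
    """Send each phase to its pool; fall back to prefill hardware if the
    decode pool is empty (the '100% Vera Rubin' configuration)."""
    pool = pools[phase]
    if pool.racks == 0:
        return pools[Phase.PREFILL]
    return pool


pools = split_racks(total_racks=100, decode_fraction=0.25)
print(route(Phase.PREFILL, pools))
print(route(Phase.DECODE, pools))
```

Setting `decode_fraction` to 0 models the high-throughput case Huang describes, where every request stays on Vera Rubin.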

Samsung-manufactured Groq LP30 chips are in mass production, with shipments expected in Q3 this year, while the first Vera Rubin rack is already running on Microsoft Azure.

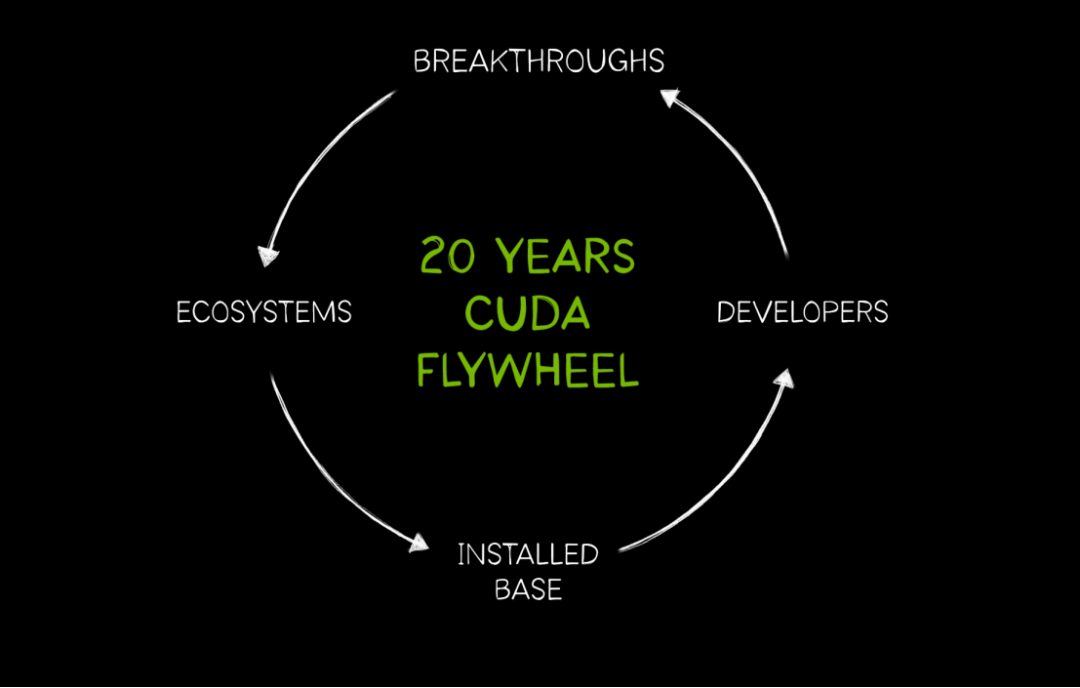

Software-wise, CUDA remains NVIDIA’s two-decade-long moat.

'This year marks CUDA’s 20th anniversary,' Huang emphasized in his speech.

Over two decades, hundreds of millions of CUDA-enabled GPUs and computing systems have been deployed globally, spanning all cloud platforms and serving nearly every computer vendor and industry.

'Installed base attracts developers, developers create new algorithms and breakthroughs, breakthroughs spawn new markets, new markets form ecosystems that attract more enterprises, expanding the installed base—this flywheel is accelerating,' Huang said.

The direct result: NVIDIA GPUs retain extremely high practical value. Huang cited a counterintuitive example: Cloud prices for Ampere architecture GPUs released six years ago are rising.

'The reason is clear: NVIDIA CUDA runs an incredibly rich ecosystem of applications covering every AI lifecycle stage, data processing platforms, and scientific solvers. Once installed, NVIDIA GPUs deliver immense value,' he explained.

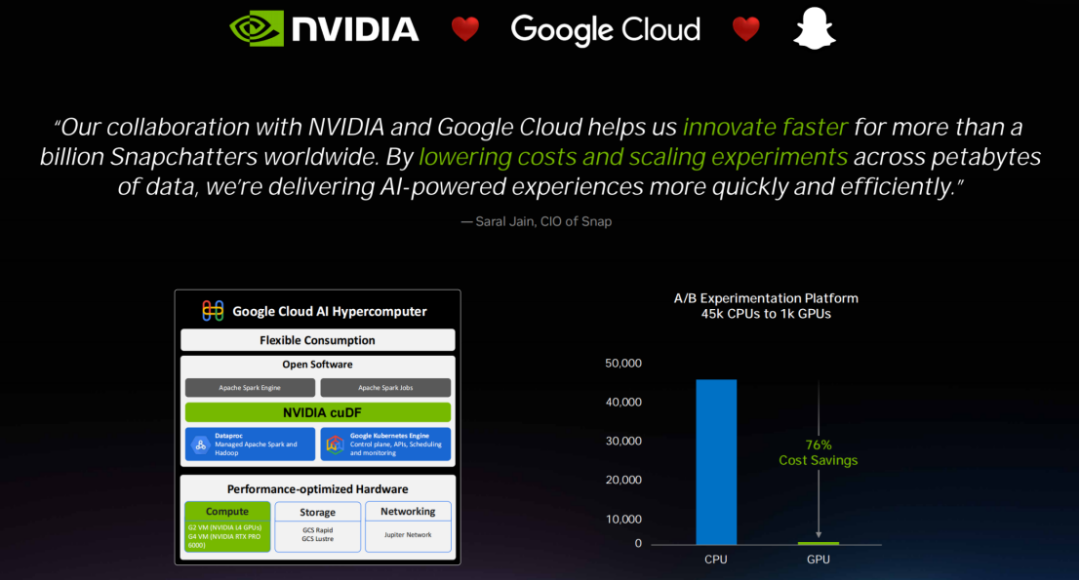

For structured data processing, NVIDIA launched the cuDF and cuVS libraries, partnering with IBM, Dell, and Google Cloud to help Snapchat reduce computing costs by nearly 80%. For unstructured data, support for vector databases and for PDF, video, and audio processing now turns previously unusable data into AI fuel.

Ecosystem-wise, deep cloud partnerships define NVIDIA’s strategy.

'NVIDIA and global cloud providers have a unique relationship—we bring customers to the cloud, creating a mutually beneficial ecosystem,' Huang said.

On Google Cloud, NVIDIA accelerates Vertex AI and BigQuery, integrating deeply with JAX/XLA to onboard clients like Base10, CrowdStrike, Puma, and Salesforce.

On AWS, NVIDIA accelerates EMR, SageMaker, and Bedrock, and will bring OpenAI to AWS this year to expand regional deployments and compute scale.

On Microsoft Azure, NVIDIA’s 100PFLOPS supercomputer—the first deployed on Azure—laid the foundation for its OpenAI partnership. NVIDIA GPUs are also among the first to support confidential computing, enabling secure deployments of OpenAI and Anthropic models across global cloud regions.

At Oracle, NVIDIA became the first AI customer. 'I’m proud to have explained AI cloud concepts to Oracle for the first time,' Huang said.

Additionally, CoreWeave became the world’s first AI-native cloud, while Palantir and Dell built AI platforms that can be deployed in any country and in any air-gapped environment.

'NVIDIA is the first vertically integrated yet horizontally open company,' Huang declared. 'We must understand applications, domains, algorithms, and deploy them anywhere—data centers, clouds, on-premises, edges, even robotics. Meanwhile, NVIDIA remains horizontally open, integrating technologies into any partner’s platform.'

04

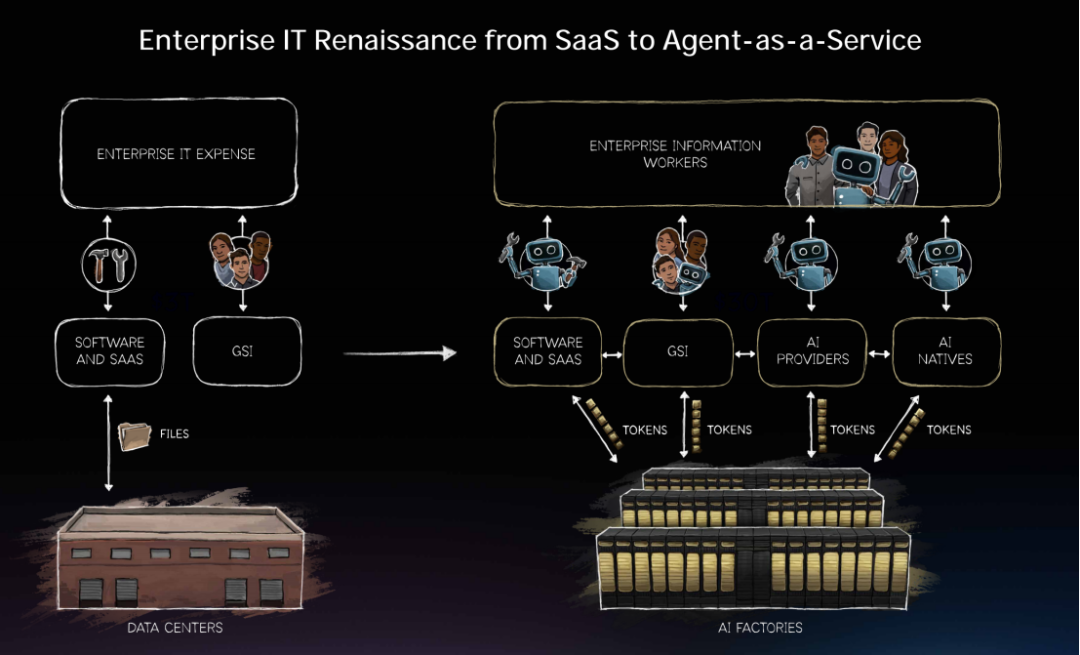

Redefining Enterprise IT and Workplace Rules

Huang described the open-source project OpenClaw as 'the most popular open-source project in human history,' claiming it surpassed Linux’s 30-year achievements in just weeks. 'OpenClaw is essentially the operating system for agentic computers,' he said.

OpenClaw manages resources, accesses tools, filesystems, and large language models, executes scheduling and timed tasks, decomposes problems into sub-agents, and supports arbitrary input/output modalities.

'Windows made personal computers possible. OpenClaw makes personal agents possible,' Huang said. 'Every enterprise needs an OpenClaw strategy, just like we needed Linux, HTML, and Kubernetes strategies.'

This amounts to a wholesale reshaping of enterprise IT. Huang asserted: 'Every SaaS company will become an AaaS (Agent as a Service) company.'

However, agents that can access sensitive data, execute code, and communicate externally pose entirely new security challenges. To address this, NVIDIA has worked with Peter Steinberger, OpenClaw's creator, to build security into the enterprise-grade version, introducing the NeMoClaw reference design and the OpenShield security layer.

'This is the Renaissance of enterprise IT,' Huang said. 'An industry originally worth $2 trillion is about to grow into one worth several trillion, shifting from providing tools to delivering specialized AI agent services.'

He even envisioned a new form of the future workplace: 'In the future, every engineer in our company will need an annual Token budget. Their base salary might be several hundred thousand dollars, and I will allocate roughly half of that amount as Token credits to enable them to achieve a 10-fold increase in efficiency. This has already become a new recruitment tool in Silicon Valley: How many Tokens come with your offer?'
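To give a sense of scale, the token budget can be converted into token counts using the tier prices quoted earlier in the article. The salary figure below is a hypothetical stand-in for 'several hundred thousand dollars,' and the function name is illustrative.

```python
# Tier prices in USD per million tokens, as quoted earlier in the article.
TIER_PRICES_USD_PER_MILLION = {
    "Mid-tier": 3.0,
    "Premium": 6.0,
    "High-Speed": 45.0,
    "Ultra-High-Speed": 150.0,
}

HYPOTHETICAL_BASE_SALARY_USD = 300_000  # assumption, not from the article
TOKEN_CREDIT_FRACTION = 0.5             # 'roughly half' of base salary


def tokens_for_budget(budget_usd: float, usd_per_million_tokens: float) -> float:
    """Number of tokens a dollar budget buys at a given per-million price."""
    return budget_usd / usd_per_million_tokens * 1_000_000


budget = HYPOTHETICAL_BASE_SALARY_USD * TOKEN_CREDIT_FRACTION
for name, price in TIER_PRICES_USD_PER_MILLION.items():
    print(f"{name}: {tokens_for_budget(budget, price):,.0f} tokens per year")
```

Under these assumptions, the same budget buys 50 times fewer tokens at the Ultra-High-Speed tier than at the Mid-tier, which is why the mix of tiers matters as much as the headline budget.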

Future software will no longer be about 'humans operating tools' but rather 'intelligent agents collaborating with humans,' and NVIDIA's technology serves as the core support for this entirely new software paradigm.

05

The Future Battlefield: Physical AI, Robots, and Space-Based Data Centers

Digital agents operate in the digital world, while physical AI represents embodied agents—robots.

A total of 110 robots appeared at this GTC, representing nearly every robotics R&D company in the world.

In autonomous driving, Huang announced four new partners for NVIDIA's Robotaxi Ready platform: BYD, Hyundai, Nissan, and Geely, with a combined annual production capacity of 18 million vehicles. They join existing partners Mercedes-Benz, Toyota, and General Motors. Huang also announced a major collaboration with Uber to deploy and integrate Robotaxi Ready vehicles across multiple cities.

For industrial robots, companies such as ABB, Universal Robots, and KUKA are partnering with NVIDIA to combine physical AI models with simulation systems.

Caterpillar and T-Mobile are also part of the collaboration. In telecommunications, future wireless base stations will no longer be mere communication nodes but will run NVIDIA Aerial AI-RAN, an intelligent edge computing platform capable of real-time traffic sensing, beamforming adjustment, and energy-efficiency optimization.

A particularly noteworthy debut was the Olaf robot, developed in collaboration with Disney. This snowman, which learned to walk in Omniverse, is powered by NVIDIA Warp's Newton solver (co-developed with Disney and DeepMind) and can adapt to the real physical world.

'Can you imagine? Future Disney parks—where all these robotic characters roam freely around the grounds,' Huang said.

Even more speculative is NVIDIA's foray into space. The Thor chip has passed radiation certification and is now operational in satellites. NVIDIA is also developing 'Vera Rubin Space-1,' a computer for space-based data centers that would extend AI computing power beyond Earth.

Looking back at the 19th-century Gold Rush, the sellers of shovels made the most money. But today's NVIDIA is far more than just a shovel seller.

Through a series of technological innovations and ecosystem development, it has convinced the world that mining gold requires its shovels.

In Huang's narrative, every future enterprise, whether it builds models, develops agents, manufactures robots, or operates data centers, will become a workshop within NVIDIA's 'Token Factory' system. And Huang, standing in the control room of this trillion-dollar factory, quietly watches as every kilowatt-hour of electricity and every Token ultimately turns into figures on NVIDIA's financial reports.
