Teasing Huang, Being Adorable! Olaf Robot Debuts at GTC: Is Embodied AI Finding Its 'Killer App'?

03/22 2026

IPs: The Shortcut to Robot Adoption

Have you heard of Olaf?

This beloved snowman, a character from Disney's blockbuster film Frozen and created by Queen Elsa with her ice magic, is both goofy and mischievous. With his adorable appearance and optimistic personality, Olaf has won the hearts of audiences across all age groups.

What if I told you that you might soon see him come to life?

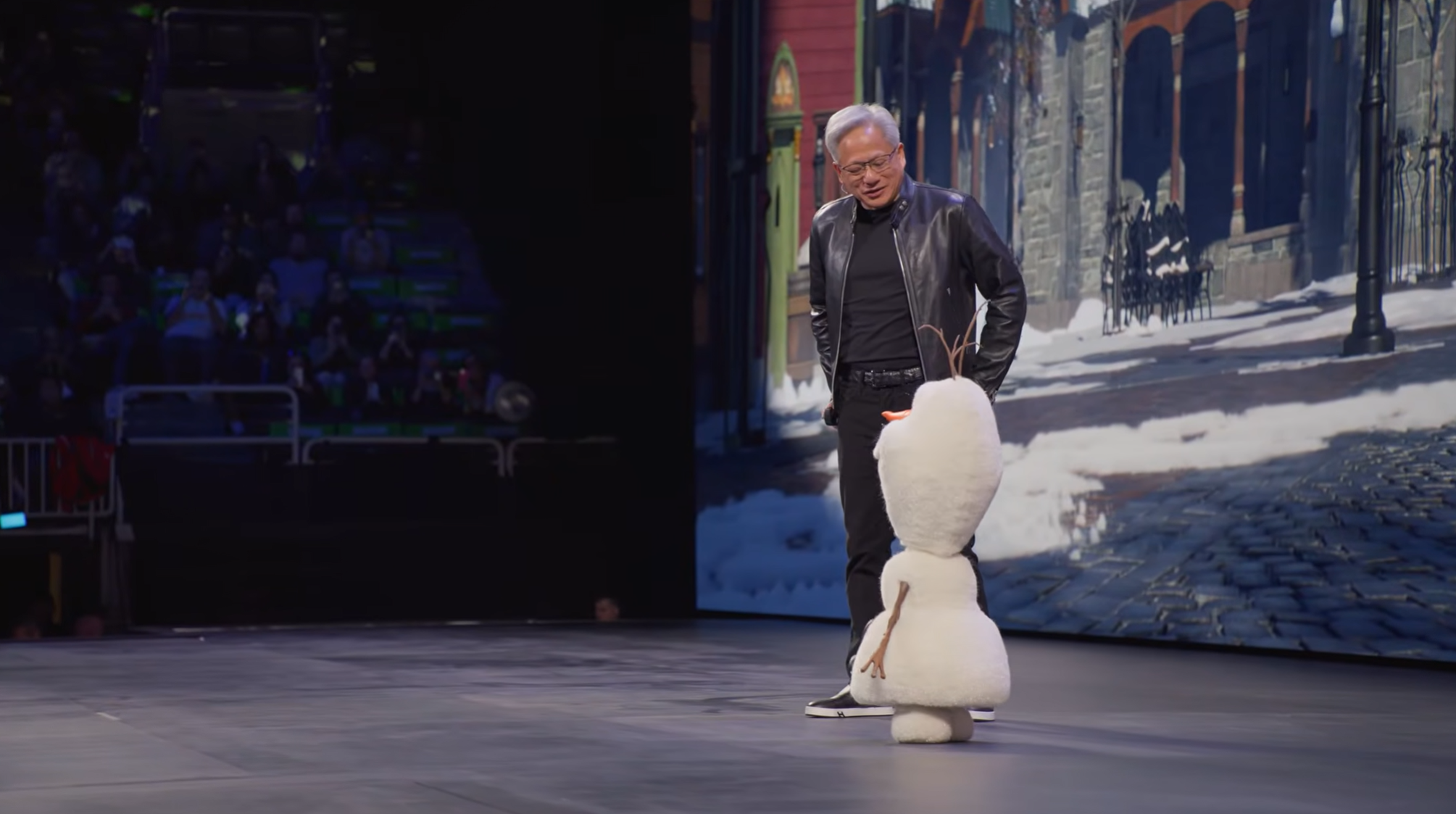

At the recently concluded GTC 2026 conference, NVIDIA CEO Jensen Huang, at the end of his keynote speech, introduced a special “guest” with a grand entrance.

Yes, it was Disney's Olaf.

This round, adorable robot not only walks, talks, and acts cute but also teases Jensen Huang. It blinks, lifts its arms, and shakes its head, and every interaction looks natural and smooth, blurring the line between animated characters and the real world.

(Image source: NVIDIA)

“Can you imagine? This is what the future of Disneyland will look like,” Huang said, gesturing to the Olaf robot beside him. “All robotic characters will move freely around the park.”

In my view, this Olaf robot, co-developed by the Disney Research and Imagineering teams in collaboration with NVIDIA and Google DeepMind, is not just a technical marvel. It also marks a new phase in giving animated IPs a physical form and opens a fresh path for entertainment robotics.

Bringing animated characters from the screen into reality is likely to become a major trend in the robotics industry.

From Animation to Reality: Disney's Challenge in Recreating a Physics-Defying Character

Putting aside its cute appearance, the Olaf robot, a showcase product jointly developed by Disney and NVIDIA, boasts a top-tier hardware configuration and training methods.

In the animated world, characters often have exaggerated proportions and move in ways that defy physical laws.

Olaf is a prime example: he has a giant head, a slender neck, and feet shaped like snowballs beneath his body.

(Image source: NVIDIA)

This character is far from being humanoid.

To transform such a “physics-defying” character into a robot capable of walking and interacting freely in the real world, engineers packed twenty-five movable joints and three microcomputers for data processing into its small body, including the Jetson computing platform designed specifically for edge computing and robotics.

In the official demonstration, the exposed robot skeleton looks quite eerie.

(Image source: NVIDIA)

Fortunately, once covered with its “skin,” the robot closely resembles the character.

In terms of design, to recreate the texture from the animated film, engineers dressed Olaf in a specially made elastic fabric that emits a crystalline, icy glow under lighting. It can flexibly deform with the robot's movements while maintaining the character's iconic appearance.

Even better, to recreate the hilarious scenes where Olaf often falls apart in the animation, his twig arms and carrot nose are all magnetically attached, allowing him to “lose his nose” at any moment in the amusement park, delivering maximum comedic effect.

(Image source: NVIDIA)

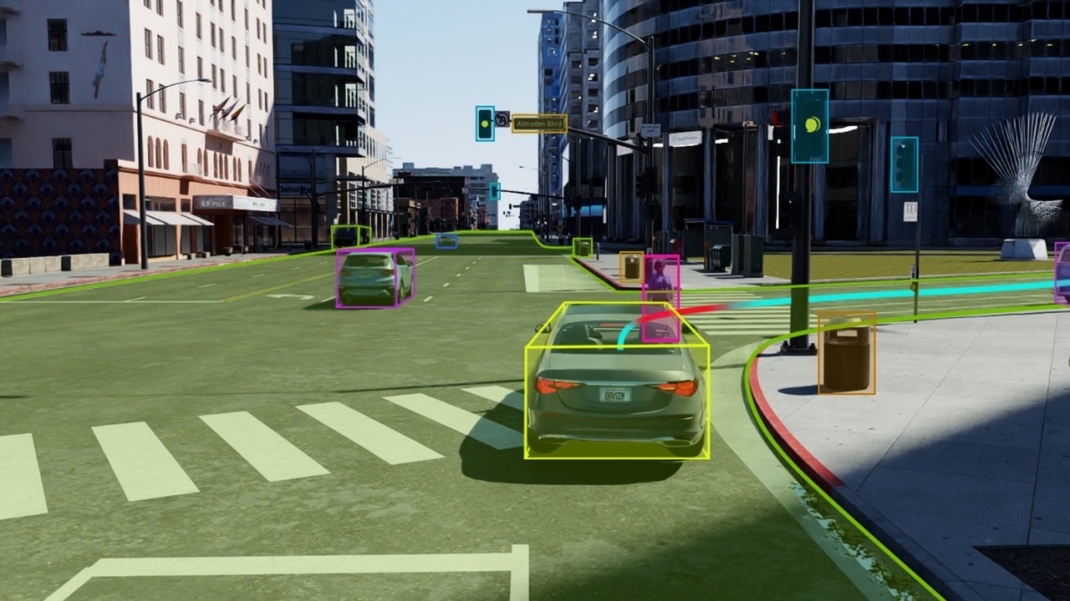

However, if it were just about having a well-made exterior, it would only be a high-end figurine. What truly gives it a “soul” is NVIDIA's simulation system, Omniverse.

According to reports, engineers simulated physical movements and behaviors in a virtual world on computers, creating a test environment that reproduces real-world physics. They then placed thousands of virtual Olafs inside to learn how to walk on their own, getting back up whenever they fell.

Through this massive trial and error, in just a few days, the system figured out how to maintain balance while walking in Olaf's signature comical gait and even how to walk on different surfaces like gravel paths and snow.
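To make the "thousands of virtual Olafs" idea more concrete, here is a minimal sketch of parallel trial and error. It is a toy and not Disney's or NVIDIA's actual pipeline: it assumes a one-joint inverted pendulum instead of a 25-joint robot, a simple keep-the-best-and-mutate search instead of whatever learning method the teams really used, and every name and number in it (`simulate`, `train`, the gain ranges) is made up for illustration.

```python
import math
import random


def simulate(gains, steps=200, dt=0.02):
    """Count how many steps a toy inverted pendulum stays upright.

    `gains` is a hypothetical (kp, kd) pair for a simple PD balance controller.
    """
    kp, kd = gains
    angle, velocity = 0.1, 0.0  # start slightly tilted, in radians
    for step in range(steps):
        torque = -kp * angle - kd * velocity
        # Gravity tips the pendulum over; the controller torque pushes back.
        accel = 9.81 * math.sin(angle) + torque
        velocity += accel * dt
        angle += velocity * dt
        if abs(angle) > 0.8:  # treat this as "fell over"
            return step
    return steps


def train(population=200, generations=5, seed=0):
    """Run many virtual attempts per generation and keep what balanced longest."""
    rng = random.Random(seed)
    candidates = [(rng.uniform(0, 8), rng.uniform(0, 1)) for _ in range(population)]
    history = []
    for _ in range(generations):
        ranked = sorted(candidates, key=simulate, reverse=True)
        elite = ranked[: population // 10]
        history.append(simulate(elite[0]))
        # Next generation: keep the elite and fill up with mutated copies.
        candidates = list(elite)
        while len(candidates) < population:
            kp, kd = rng.choice(elite)
            candidates.append(
                (max(0.0, kp + rng.gauss(0, 1.0)), max(0.0, kd + rng.gauss(0, 0.2)))
            )
    best = max(candidates, key=simulate)
    return best, history


if __name__ == "__main__":
    best, history = train()
    print("best gains:", best)
    print("best survival per generation:", history)
```

Because the best candidates are carried over unchanged, the best survival time can never get worse from one generation to the next. A real system would swap the pendulum for a full physics simulation of the robot and run the attempts in parallel on GPUs.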

(Image source: NVIDIA, Omniverse simulating snow)

Next, engineers simply loaded what the system had learned onto the robot's onboard chip, and the Olaf robot was ready to run.

Judging by the live demonstration, the results were impressive. When interacting, Olaf's eyes first look at you before his body turns, a design that aligns with biological instincts. Paired with exaggerated movements, it truly feels like an animated character has come to life.
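The "eyes first, body second" effect can be approximated with two rate limits. The sketch below is only an illustration of that idea, not Disney's controller: the function name `gaze_step`, the turn rates, and the eye travel limit are all assumed values chosen for the example.

```python
def gaze_step(target, body_yaw, eye_yaw, dt,
              eye_rate=300.0, body_rate=60.0, eye_limit=30.0):
    """Advance gaze by one time step. All angles are in degrees.

    `eye_yaw` is measured relative to the body, so the direction the
    character is looking in is body_yaw + eye_yaw.
    """
    def move_toward(current, goal, max_delta):
        delta = max(-max_delta, min(max_delta, goal - current))
        return current + delta

    # The body turns slowly toward the target.
    new_body = move_toward(body_yaw, target, body_rate * dt)
    # The eyes cover whatever angle the body has not covered yet,
    # clamped to their (assumed) mechanical range.
    desired_eye = max(-eye_limit, min(eye_limit, target - new_body))
    new_eye = move_toward(eye_yaw, desired_eye, eye_rate * dt)
    return new_body, new_eye


if __name__ == "__main__":
    body, eye = 0.0, 0.0
    for tick in range(8):
        body, eye = gaze_step(20.0, body, eye, dt=0.05)
        print(f"tick {tick}: body={body:.1f} eye={eye:.1f} gaze={body + eye:.1f}")
```

With these assumed rates, the gaze lands on a target 20 degrees away within two ticks, while the body is still less than halfway through its turn. As the body catches up, the eyes drift back to center.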

(Image source: NVIDIA)

Now, take a look at footage shot by netizens at Disneyland Paris—this thing makes you feel like it's alive.

(Image source: X)

Hmm... too bad it's not real.

Despite using cutting-edge technology in motion control and learning algorithms, the Olaf robot itself is not fully autonomous but relies on human performers for operation.

According to an interview conducted by CNET producer Jesse Orrall at Disney's R&D headquarters, the robot's actions fall into two categories: behavioral scripts and dialogue. For the former, Disney pre-programs and records the movements, and AI plays them back according to the real-time situation; the latter must be selected by an operator, and the dialogue content is subject to certain limits.

Clearly, when it comes to safety and compliance, Disney and NVIDIA still have to tread carefully.
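The split between the two action types can be pictured roughly as follows. This is a hypothetical sketch based only on the description above: the clip names, the `Situation` fields, the distance threshold, and the dialogue lines are all invented for illustration and do not come from Disney.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Situation:
    """Hypothetical summary of what the robot currently perceives."""
    guest_distance_m: float
    guest_waving: bool


def pick_behavior(situation: Situation) -> str:
    """Automatically choose one of the pre-recorded motion clips."""
    if situation.guest_waving:
        return "wave_back"
    if situation.guest_distance_m < 2.0:
        return "look_at_guest"
    return "idle_wander"


# Dialogue is not generated freely: the operator picks from an approved list.
APPROVED_DIALOGUE = {
    "greeting": "Hello there!",
    "farewell": "See you soon!",
}


def speak(operator_choice: str) -> str:
    """Return the approved line, rejecting anything outside the list."""
    if operator_choice not in APPROVED_DIALOGUE:
        raise ValueError(f"'{operator_choice}' is not an approved line")
    return APPROVED_DIALOGUE[operator_choice]


if __name__ == "__main__":
    print(pick_behavior(Situation(guest_distance_m=1.2, guest_waving=False)))
    print(speak("greeting"))
```

The point of the design is that motion can react to the scene on its own, while anything the character says stays behind a human decision and a fixed list.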

NVIDIA's Collaboration with Disney: What's the Commercial Strategy?

You might wonder why Jensen Huang, CEO of a trillion-dollar chip giant, would invest so much effort into collaborating with Disney on a toy for amusement parks.

In fact, there are two commercial considerations behind this move.

As early as 2021, Huang explicitly stated that NVIDIA's next phase would focus on robotics and manufacturing, repeatedly emphasizing the strategic importance of Omniverse in his speeches.

However, after five years of effort, Omniverse has made little substantive progress, according to foreign media reports.

Although NVIDIA initially announced a long list of companies using Omniverse software, ranging from BMW and Siemens to Foxconn and Boston Dynamics, very few have actually signed up to run large-scale simulations on Omniverse Cloud servers.

Moreover, developers' feedback on Omniverse tools has been less than stellar. Those who have used Omniverse's scene-building and simulation tools often complain about the software's difficulty, frequent crashes, and poor experience when simulating flexible objects, fluids, or pipelines. Some even bluntly stated that Unity offers a better software experience.

(Image source: NVIDIA)

Huang is eager to turn this situation around. He hopes that by showcasing the Olaf robot as the grand finale, he can prove that Omniverse can assist in developing highly complex, non-standardized robots.

The logic is simple: if Omniverse can enable a structurally unstable snowman to perform confidently on stage, it can also enable industrial robots to operate flexibly in complex factory environments.

Besides endorsing Omniverse, this move also points the industry in a clear direction.

Here, I'd like to give two examples familiar to us in China: Robosen and YuanDian Intelligence.

Last year, I attended iFlytek's embodied AI product launch, where Robosen showcased Optimus Prime and Buzz Lightyear robots capable of automatic transformation.

(Image source: Robosen)

At their core, these products are electric toys with movable joints and voice interaction. However, by putting on the shell of a super IP like Transformers, their prices can skyrocket to thousands or even tens of thousands of dollars, with fans worldwide lining up to buy them.

Another example is my recent in-depth conversation with the YuanDian Intelligence team during AWE.

They are also aggressively advancing embodied AI technology, but given current technical and supply chain costs, making robots truly capable of household tasks like washing dishes, folding clothes, and cooking is extremely difficult. Who would want to spend over a hundred thousand dollars on a humanoid robot that can't even sweep the floor properly?

Therefore, they also chose the path of IP licensing, launching the W1 robot based on WALL-E's design.

In terms of functionality, this robot is almost equivalent to a power bank + outdoor speaker + surveillance camera. Its hands are largely ornamental rather than functional, and its main selling point is the ability to tow a camping cart behind it to carry heavy loads while traveling. Its fully tracked design also sets it apart from the popular quadruped/multi-legged all-terrain robots on the market.

But it looks like WALL-E, so it was quite popular at the exhibition and was even in short supply.

And that's the charm of a well-known IP.

Combining IPs with Robots Opens Up a New Industry Direction

In my view, combining robots with well-known IPs directly lowers the barrier to acceptance.

No one will demand that an Olaf robot help move bricks; as long as it can recognize you, chat with you, and perform a few comical actions, consumers are willing to pay for this emotional value, even if it only roams around an amusement park or sits at home as an electronic pet.

Moreover, leveraging cultural IPs to support high-tech R&D is an excellent commercial shortcut.

We can fully expect that in the next two to three years, a large number of tech companies and toy manufacturers will follow this path, accelerating the entry of robots into our living rooms and bedrooms.

Today, it's Disney's Olaf; tomorrow, it could be a Pikachu powered by a large language model that helps you with homework, a Marvel Iron Man figurine that recognizes when you're feeling down and comes over to comfort you, or even an AI lamp produced by Apple that resembles the Pixar mascot.

(Image source: Apple)

After all, compared to a cold, silver robot, who wouldn't want an animated character that has accompanied them since childhood in their home?

Source: Leikeji

Images in this article are from 123RF's licensed image library.