Farewell to the Subsidy Era: China's AI Cloud Services Usher in a New Cycle of 'Value Restoration'

03/22 2026

The essence of pricing restoration is to bring AI services back to a 'cost-value' matching range, driving the industrial chain from zero-sum competition toward a positive cycle. Only when cloud providers achieve sustainable profitability can they continuously invest in underlying technologies; only with reasonable profit expectations can they attract developers and ISVs to deeply cultivate scenarios; only with stable supply can enterprises dare to deploy AI at scale.

Ultimately, this restructuring, from pricing to ecosystem, will propel AI into an era of competing on 'cost-effectiveness' and become a critical pivot as China's AI industry moves from conceptual hype to large-scale implementation.

Author | Wei Doudou

Produced by | Industrial Insight

On March 18, two major domestic cloud providers, Alibaba Cloud and Baidu Intelligent Cloud, released product price adjustment announcements almost simultaneously. Unlike previous narratives centered around 'price cuts,' 'free offerings,' and 'price wars' for large models, the keyword this time has shifted to 'increase.'

Over the past year, China's large model industry has experienced a wave of intense price reductions. From Alibaba Cloud and Baidu Intelligent Cloud to ByteDance's Volcano Engine and Tencent Cloud, providers have continuously lowered token prices at the MaaS level, even offering free quotas, in a bid to quickly establish developer ecosystems and enterprise customer bases.

During this phase, computing costs were hidden beneath subsidies, serving as implicit investments to support market expansion. Now, as usage grows rapidly and industry applications move into production, the scarcity and true cost of computing resources are coming to the fore.

The simultaneous price adjustments by Alibaba Cloud and Baidu Intelligent Cloud are not entirely coincidental.

This seemingly technical price adjustment actually touches on the core issues of AI industry development: as large models transition from 'concept validation' to 'real deployment,' and as usage volumes leap from experimental to production levels, who will foot the bill for sustained computing consumption? And has the industry reached a stage where it can shed subsidies and move toward self-sufficiency?

The price hikes by Alibaba Cloud and Baidu Intelligent Cloud may well mark the beginning of an answer.

I. Simultaneous 30% Increase: Alibaba and Baidu Fire the First Shot in Monetizing Computing Power

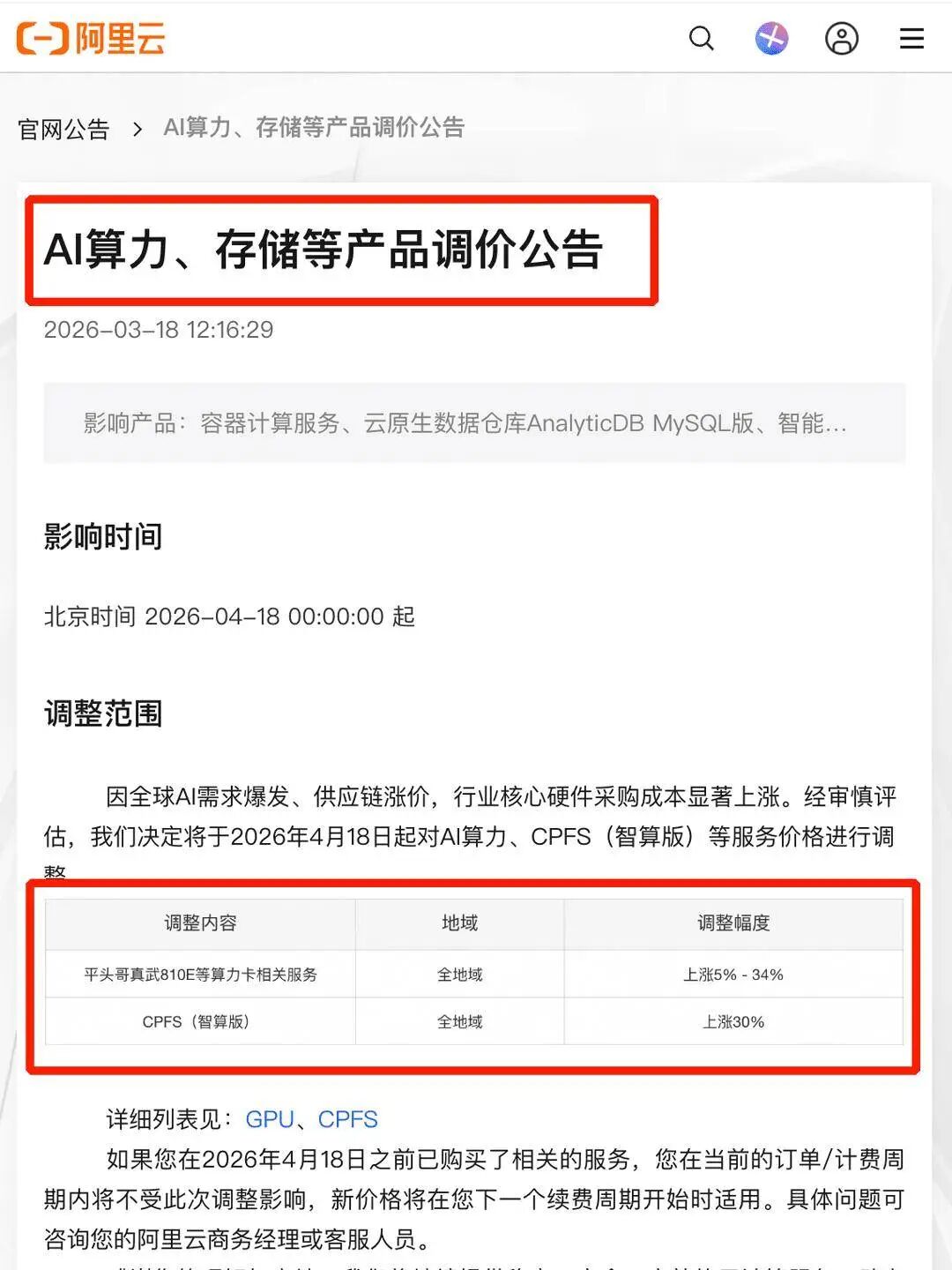

According to official announcement details, Alibaba Cloud took the lead by releasing its 'AI Computing Power and Storage Product Price Adjustment Announcement' at noon on March 18, explicitly stating that starting from April 18, 2026, it would adjust prices for its core AI-related products.

The specific adjustments cover two core areas. First, services related to computing cards such as the T-Head Zhenwu 810E will see price increases of 5%-34%, covering all global regions. Second, the CPFS (Intelligent Computing Edition) parallel file storage service for AI intelligent computing scenarios will have a uniform price increase of 30%.

That same afternoon, Baidu Intelligent Cloud released a similar price adjustment announcement, stating that starting from 0:00 on April 18, 2026, it would optimize prices for its AI-related products.

The specific adjustments closely mirror those of Alibaba Cloud. First, AI computing power-related product services will see price increases of approximately 5%-30%. Second, parallel file storage services for AI training and inference scenarios will increase by about 30%.

Baidu Intelligent Cloud noted in its announcement that the price adjustments are based on the industry backdrop of 'rapid global AI application development, sustained computing demand growth, and significant cost increases in core hardware and related infrastructure.' The goal is to 'ensure long-term stable platform operation and service quality.' Existing users will maintain current pricing for the duration of their current billing cycle, with new pricing applying only upon renewal.
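As a rough illustration of what the announced ranges could mean for a monthly bill, the sketch below applies the low and high ends of each provider's compute range, plus the 30% storage increase, to a hypothetical spend. The baseline figures (RMB 100,000 for compute, RMB 20,000 for storage) and the function name are assumptions for illustration, not numbers from either announcement; actual increases vary by product and region.

```python
# Announced compute increase ranges in whole percent (from the two announcements).
COMPUTE_RANGE_PCT = {"Alibaba Cloud": (5, 34), "Baidu Intelligent Cloud": (5, 30)}
STORAGE_PCT = 30  # parallel file storage: 30% (Baidu states "about 30%")

def adjusted_bill(compute, storage, compute_pct, storage_pct=STORAGE_PCT):
    """Return the new monthly bill after the percentage increases are applied."""
    return compute * (100 + compute_pct) / 100 + storage * (100 + storage_pct) / 100

# Hypothetical baseline spend in RMB per month (assumption, not from the source).
BASE_COMPUTE, BASE_STORAGE = 100_000, 20_000

bill_ranges = {
    provider: (adjusted_bill(BASE_COMPUTE, BASE_STORAGE, low),
               adjusted_bill(BASE_COMPUTE, BASE_STORAGE, high))
    for provider, (low, high) in COMPUTE_RANGE_PCT.items()
}

for provider, (low, high) in bill_ranges.items():
    print(f"{provider}: RMB {low:,.0f} to {high:,.0f} per month")
```

On this hypothetical mix, a RMB 120,000 bill would land somewhere between RMB 131,000 and RMB 160,000, depending on the provider and where a given product falls in the announced range.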

Notably, the scope of this price adjustment by both providers focuses on AI computing infrastructure and high-performance intelligent computing storage products at the IaaS layer, without involving MaaS-layer large model API token pricing. This fundamentally differs from previous token price adjustments by Tencent Cloud for its Hunyuan large model and third-party integrated models.

The former targets the most fundamental computing resources in the AI industry, serving as the base for all large model training and inference services; the latter targets model invocation services directly oriented towards developers and enterprises, belonging to the application service layer of the industrial chain.

At the same time, this price adjustment is not a blanket increase across all product lines but a structural adjustment targeting core AI scenarios. Both providers' general cloud computing services and non-AI storage products remain unaffected, which further ties this adjustment to the surge in AI demand.

From a product structure perspective, this round of adjustments reveals another key insight: the cost center of gravity of the AI industry is shifting from the models themselves to infrastructure.

Previously, improvements in large model capabilities relied heavily on algorithmic optimization and model parameter expansion, with cost discussions often centered on the training phase. Now, with the explosion in inference demand and continuous implementation of application scenarios, computing consumption from long-term operation has become a larger source of expenditure. Cloud providers' prioritization of computing power and storage price adjustments is a direct response to this structural change.

This shift implies that AI is no longer just an 'incremental business' that can be driven by subsidies but is becoming an infrastructure service requiring refined pricing and cost control. For the industry, this represents not just a price adjustment but a recalibration of AI's economic model.

II. Intelligent Agent Boom Ends 'Burning Money for Market Share'

This simultaneous price adjustment is not a random move by the two providers but an inevitable result of a phased transition in the entire AI cloud computing industry.

Over the past two years, the competitive logic in the large model race has been 'trading price for scale.' Cloud providers have hidden true computing costs beneath low token prices through internal cost absorption, long-term resource lock-ins, and even loss-making subsidies. The core goal was not short-term profitability but rapidly building developer ecosystems and capturing enterprise customer mindshare, essentially an upfront investment that trades capital for time.

However, demand has surged over the past year. A February 2026 report by Frost & Sullivan found that average daily invocations of enterprise-grade large models in China reached 37.0 trillion tokens in the second half of 2025, up 263% from 10.2 trillion tokens in the first half, or roughly 3.6 times the earlier volume within six months.
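The growth figure can be checked directly from the two volumes quoted. The short sketch below computes both the percentage increase and the multiple; the function and variable names are illustrative.

```python
def growth(before, after):
    """Return (percentage increase, multiple) when going from `before` to `after`."""
    multiple = after / before
    return (multiple - 1) * 100, multiple

# Average daily token volume in trillions, as quoted from the report.
h1_2025, h2_2025 = 10.2, 37.0
pct_increase, multiple = growth(h1_2025, h2_2025)
print(f"up {pct_increase:.0f}%, i.e. {multiple:.1f}x the first-half volume")
```

A 263% increase corresponds to about 3.6 times the first-half volume, since the percentage measures the gain on top of the original level.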

If cloud providers continue subsidizing at these volumes, their losses will only grow.

The proliferation of intelligent agent frameworks, exemplified by OpenClaw, has fundamentally restructured computing consumption models, further amplifying cost pressures. Before intelligent agents, large models were primarily used for single-round dialogues and one-time inferences, with computing consumption being instantaneous and low-volume. In contrast, OpenClaw's long-chain execution and persistent operation characteristics have caused computing consumption for single business tasks to surge exponentially.

A leading domestic finance and taxation SaaS provider's intelligent tax filing assistant built on OpenClaw saw daily token consumption per enterprise user skyrocket from 8,000 to 1.27 million, a more than 150-fold increase.

IDC estimates show that the decline in computing reuse rates due to persistent agent operation has raised cloud providers' unit effective computing supply costs by 120%-180%.
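To put the two figures above on a common footing, this sketch converts the per-user token jump into a multiple and the IDC percentage range into cost multipliers. The names are illustrative, and the calculation uses only the numbers quoted in the text.

```python
# Daily token consumption per enterprise user, before and after the agent rollout.
tokens_before, tokens_after = 8_000, 1_270_000
token_multiple = tokens_after / tokens_before

# IDC estimate: unit effective computing supply cost up 120% to 180%.
cost_increase_pct = (120, 180)
cost_multipliers = tuple((100 + pct) / 100 for pct in cost_increase_pct)

print(f"token consumption: {token_multiple:.2f}x")
print(f"unit supply cost: {cost_multipliers[0]}x to {cost_multipliers[1]}x")
```

Read this way, each unit of effective supply costs 2.2 to 2.8 times what it did before, while per-user consumption in the tax filing example rose by a factor of roughly 159.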

Simultaneously, OpenClaw's open-source proliferation has ignited the long-tail market. By late February 2026, cumulative downloads exceeded 1.2 million, with over 1.6 million intelligent agent applications launched, further exacerbating computing supply-demand tensions through fragmented demand.

Under such pressure, a business model shift has become almost inevitable.

Beyond this, a more critical reason lies in the shifting center of gravity of the AI cloud computing value chain.

Since 2023, the rapid development of China's large model industry has been accompanied by massive subsidies from cloud providers. To educate the market and lower usage barriers for enterprises and developers, leading domestic cloud providers and large model companies have engaged in successive 'price wars,' with large model API token prices dropping by over 90% in two years. They also offered substantial free trial quotas and computing subsidies, and in some cases prices inverted, with inference priced below its computing cost. The core aim was to cultivate the market rapidly and establish the MaaS ecosystem.

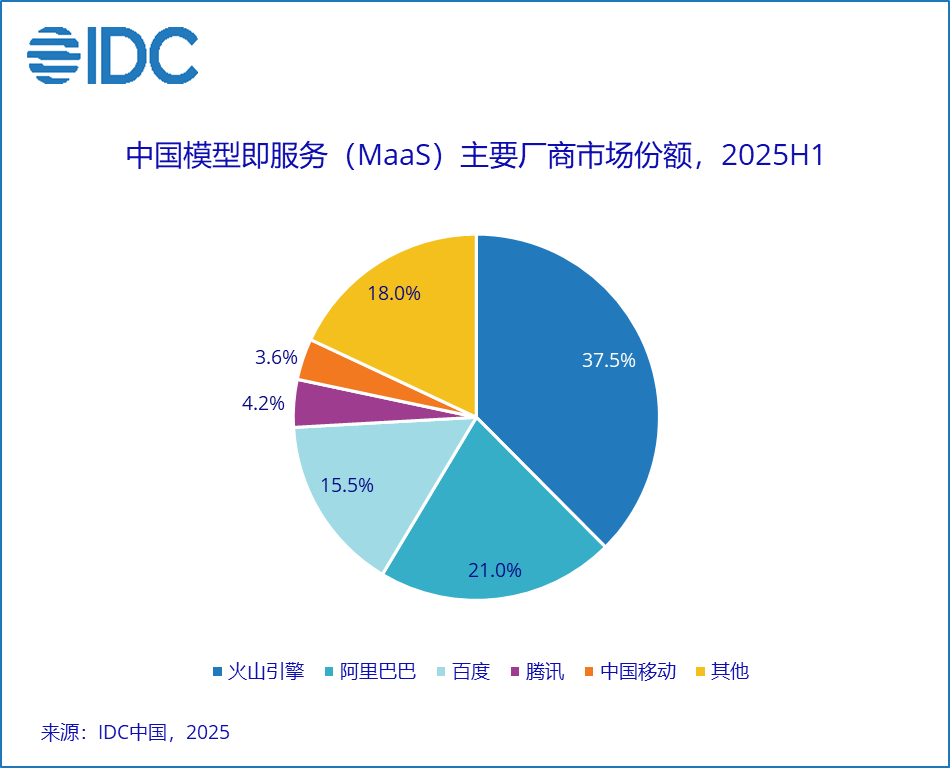

After nearly three years of market cultivation, China's MaaS ecosystem has fully matured, completing its historical mission of market education. According to IDC's 'China Model-as-a-Service (MaaS) and AI Large Model Solution Market Tracker, H1 2025' report, China's MaaS market reached RMB 1.29 billion in the first half of 2025, surging 421.2% year-on-year, while the AI large model solution market hit RMB 3.07 billion, up 122.1% year-on-year.
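For context on scale, the prior-year base implied by each growth rate can be backed out from the quoted figures. The sketch below does this arithmetic; the results are derived estimates, not numbers reported by IDC, and the function name is illustrative.

```python
def implied_base(current, yoy_pct):
    """Back out the prior-year value from a current value and YoY growth in percent."""
    return current / (1 + yoy_pct / 100)

# H1 2025 market sizes in RMB billion with YoY growth, as quoted from IDC.
maas_h1_2024 = implied_base(1.29, 421.2)
solutions_h1_2024 = implied_base(3.07, 122.1)

print(f"implied H1 2024 MaaS market: RMB {maas_h1_2024:.2f} billion")
print(f"implied H1 2024 solution market: RMB {solutions_h1_2024:.2f} billion")
```

The implied bases, roughly RMB 0.25 billion and RMB 1.38 billion, show that the MaaS growth rate is measured from a small starting point.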

More importantly, the MaaS market landscape has largely stabilized, with share concentrated among the leading providers, which removes the need to fight for market share through subsidies.

CCID Consulting data shows that by the end of 2025, over 80,000 Chinese enterprises had completed large model pilots or production deployments, covering nearly all core industries including finance, government affairs, manufacturing, and retail. The primary motivation for enterprises to use large models has shifted from 'enhancing product experience' in the early stages to 'improving operational efficiency and R&D effectiveness,' with AI transforming from a 'novelty application' into a core infrastructure for enterprise operations.

At this juncture, the industry's core challenge has shifted from 'how to get enterprises to use AI' to 'how to provide stable, high-quality, and sustainable AI services for enterprises.' Continuous low-price subsidies are no longer necessary and may even undermine long-term service quality and stability. Faced with the steep growth in computing demand brought by intelligent agents, the subsidy model can no longer keep pace with the industry's development, making a return of pricing to value inevitable.

In summary, behind the price hikes lies not just cost increases but the emergence of a turning point where AI cloud computing shifts from 'burning money for market share' to 'monetizing computing power.'

III. Industrial Chain Bids Farewell to Involution: AI Enters an Era of Competing on 'Cost-Effectiveness'

A key question arises: Does this mean AI cloud services are entering an overall price hike cycle? And what chain reactions will this change trigger in the industrial chain?

Objectively, structural price increases will become a long-term norm but by no means a blanket rise across all categories.

The fundamental reason is that hard constraints on both the supply and demand sides are reshaping pricing logic. On the supply side, core hardware such as high-end AI chips and HBM faces long capacity expansion cycles, with tight supply expected to persist for 2-3 years, making hardware cost reductions unlikely. On the demand side, AI is shifting from consumer use to enterprise production, with computing demand sustaining rapid growth. The long-term existence of this supply-demand gap means core intelligent computing services cannot return to the old trajectory of 'only decreases, no increases.'

But this does not equate to across-the-board price hikes.

Future industry pricing will show clear differentiation. On one end, lightweight models and general-purpose computing for entry-level scenarios will maintain low or even free pricing to support AI accessibility. On the other end, high-end intelligent computing and heavy-duty models for complex enterprise (B-side) scenarios that support long-chain intelligent agent tasks will be priced more closely in line with costs and actual value, entering a reasonable profitability range.

This change aligns closely with current technological evolution trends. With the rise of edge AI and 'physical world AI,' massive real-time, lightweight inference tasks are shifting downward, freeing clouds from repetitive execution to take on 'central' roles in global decision-making and complex reasoning, significantly boosting the value density per unit of computing power and making price-to-value alignment inevitable.

Deeper drivers stem from the maturation of intelligent agent applications.

Frameworks like OpenClaw have moved from concept to large-scale implementation, with millions of intelligent agent applications in use by early 2026. While significantly increasing computing consumption and cloud service costs, these agents directly create quantifiable commercial value, reshaping enterprise payment logic. For example, after introducing intelligent agents, a cross-border e-commerce enterprise saw cloud costs rise 20% but labor costs drop 12%, with overall ROI improving 37%. In such cases, enterprises are far less sensitive to price than to capability and stability.
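The example gives percentage changes but not the underlying cost bases, so the 37% ROI figure cannot be reproduced here. What can be sketched is the cost side: whether a 20% rise in cloud costs and a 12% drop in labor (human) costs lowers total spend depends on the cloud share of the combined budget. The share values below are hypothetical, as are the function and variable names.

```python
CLOUD_RISE_PCT = 20   # cloud costs up 20% (from the example)
LABOR_DROP_PCT = 12   # labor costs down 12% (from the example)

def net_cost_change_pct(cloud_share):
    """Percent change in combined cloud + labor cost for a given cloud share (0..1)."""
    return cloud_share * CLOUD_RISE_PCT - (1 - cloud_share) * LABOR_DROP_PCT

# Cloud share at which the two effects cancel out.
break_even_share = LABOR_DROP_PCT / (CLOUD_RISE_PCT + LABOR_DROP_PCT)

for share in (0.10, 0.25, 0.50):  # hypothetical cost mixes
    print(f"cloud share {share:.0%}: total cost change {net_cost_change_pct(share):+.1f}%")
print(f"break-even cloud share: {break_even_share:.1%}")
```

Under these assumptions, combined spend falls only when cloud accounts for less than 37.5% of the total; any ROI gain beyond that has to come from revenue or output effects, which the example does not break down.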

Against this backdrop, this round of price adjustments is unlikely to remain an isolated move by individual providers but may evolve into an industry consensus.

Over the past few years, China's cloud computing industry has been mired in low-price competition of 'subsidies for scale,' universally facing the dilemma of 'revenue growth without profitability.' This model not only fails to cover high hardware and R&D investments but also undermines technological and service competition, squeezes ecosystem partners, and ultimately damages the industry's foundation.

Therefore, the essence of pricing restoration is to bring AI services back to a 'cost-value' matching range, driving the industrial chain from zero-sum competition toward a positive cycle. Only when cloud providers achieve sustainable profitability can they continuously invest in underlying technologies; only with reasonable profit expectations can they attract developers and ISVs to deeply cultivate scenarios; only with stable supply can enterprises dare to deploy AI at scale.

Ultimately, this restructuring, from pricing to ecosystem, will propel AI into an era of competing on 'cost-effectiveness' and become a critical pivot as China's AI industry moves from conceptual hype to large-scale implementation.