Tesla AI5 Chip: Latest Progress Summary

04/20 2026

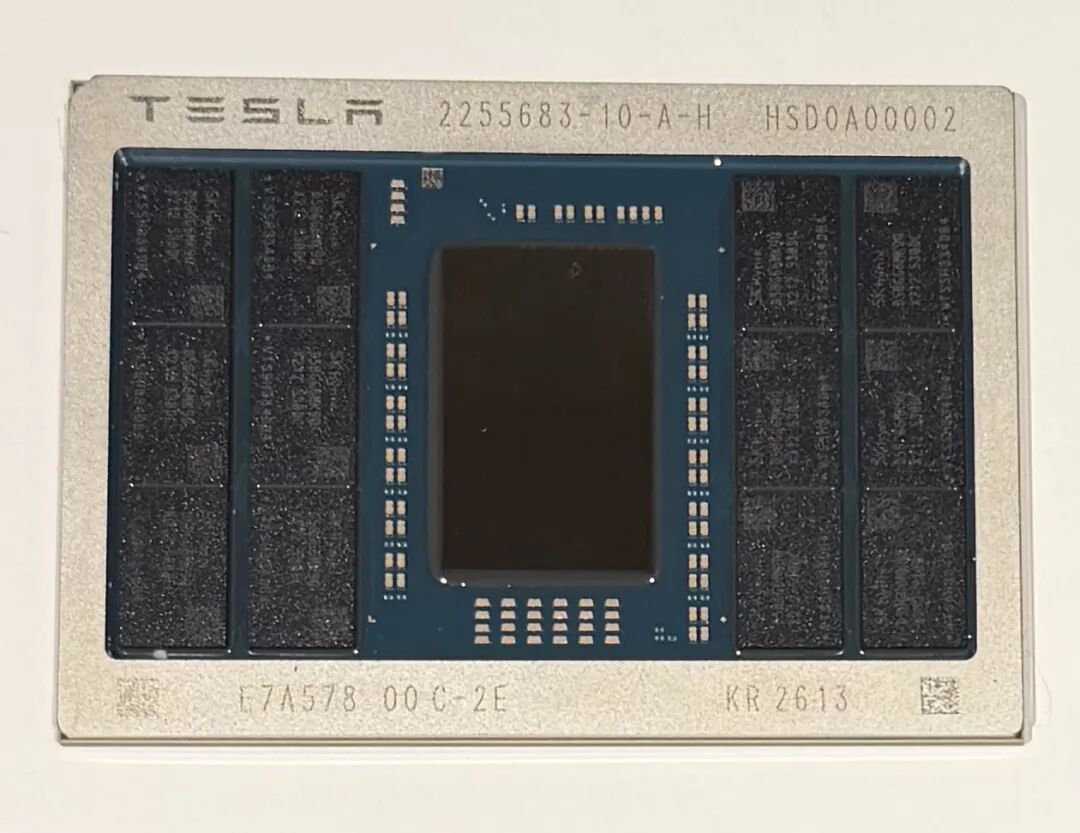

On April 15, 2026, Musk released a photograph of the packaged Tesla AI5 chip, showing its physical form after tape-out. The large central die and the surrounding DRAM memory modules are clearly visible.

The tape-out milestone is exciting, but the industry should keep a level-headed perspective. Tesla's custom chip program carries substantial execution risk. How Tesla handles that risk will test its capacity for innovation, and success could push the wider AI hardware ecosystem toward greater efficiency and vertical integration.

Below is a detailed summary encompassing the latest progress (including a comprehensive product roadmap timeline), technical specifications, performance parameters, manufacturing capacity, commercial development, and industry impact. It also incorporates an objective analysis of risks and drawbacks, aiming to offer comprehensive and insightful perspectives for the automotive technology sector.

1. Latest Progress: Tape-Out Milestone Achieved, Entering Manufacturing Preparation Stage; Detailed Product Roadmap Timeline

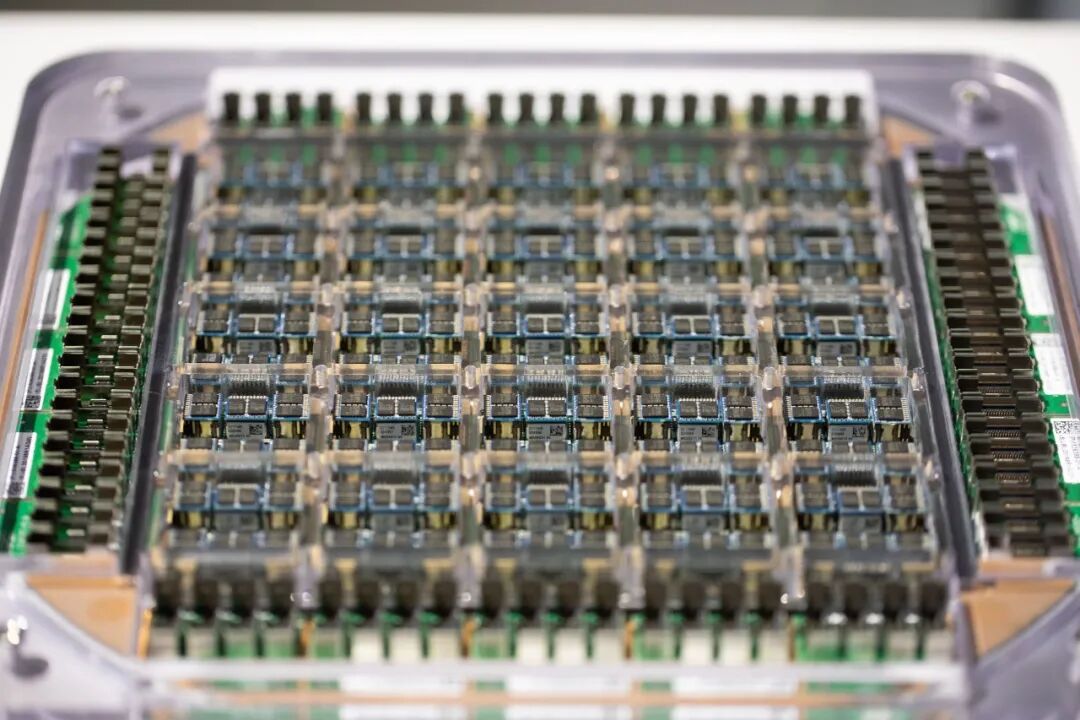

On April 15, 2026, Tesla CEO Elon Musk announced on the X platform that Tesla's AI chip design team had completed the tape-out of the AI5 chip (the handoff of the final design to the foundry, the last step before chip fabrication). This milestone marks the transition from design to manufacturing: the physical design, verification, and layout are complete and ready for submission to the foundry. Musk also shared images of the packaged chip and thanked TSMC and Samsung for their support, stating that the AI5 will be one of the highest-volume AI chips ever produced. The AI6 is already in its early stages, and development of Dojo3 (the training cluster chip) has resumed, with a stated goal of eventually reaching a 9-month chip design cycle.

Detailed Product Roadmap Timeline (based on Musk's latest public statements and Tesla's historical execution pace):

2024-2025: Accelerated design phase for AI5 (Musk personally dedicated multiple Saturdays to this endeavor), with AI6 entering its initial stages.

April 15, 2026: Official tape-out of AI5 for chip fabrication (milestone achieved).

Late 2026: Engineering samples and small-batch trial production will emerge.

Mid-2027: High-volume production will commence (requiring the stockpiling of hundreds of thousands to millions of AI5 motherboards to facilitate the transition of entire vehicle production lines). Priority will be given to deploying the Cybercab/Robotaxi and the initial rollout of Optimus, with a gradual switch for passenger vehicles.

2027-2028: AI6 mass production target (planned tape-out around late 2026, volume production around mid-2028, achieving a true doubling of performance over AI5). Subsequent chips like AI7/Dojo3 will enter development.

Long-term (post-2028): 9-month iteration cycle (AI7, AI8, AI9...), targeting an annual demand of 200 billion chips to support GW-scale AI computing power.

However, this milestone must be viewed in the context of Tesla's historical execution pace. An initial plan for 2026 mass production has been postponed to mid-2027. The risk of timeline delays is a significant concern in the industry: Tesla's chip development history has witnessed multiple deviations from optimistic schedules, with subsequent validation, yield ramp-up, and automotive-grade certification typically requiring an additional 12-18 months. Further delays could postpone the deployment of larger neural networks, resulting in missed opportunities for significant advancements in FSD/robot capabilities.
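As a rough check on the timeline above, the gap between the announced tape-out and the volume production target can be compared with the 12-18 month window cited for validation, yield ramp-up, and certification. This is a minimal sketch: the tape-out date comes from the article, while July 1, 2027 is an assumed stand-in for "mid-2027" and the helper name `months_between` is purely illustrative.

```python
from datetime import date


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end (day of month is ignored)."""
    return (end.year - start.year) * 12 + (end.month - start.month)


# Tape-out date as stated in the article.
tape_out = date(2026, 4, 15)

# ASSUMPTION: "mid-2027" is not a precise date; July 1 is a placeholder.
volume_production = date(2027, 7, 1)

gap_months = months_between(tape_out, volume_production)

# The article cites 12-18 months as the typical post-tape-out window.
within_cited_window = 12 <= gap_months <= 18

print(f"Tape-out to volume production: {gap_months} months")
print(f"Inside the 12-18 month window: {within_cited_window}")
```

Under that assumption the mid-2027 target sits inside the cited window, which leaves little slack if validation or yield ramp-up runs long.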

This serves as a reminder to industry professionals that the AI5 is not merely a technological breakthrough but also a test of execution. Successfully navigating this challenge will greatly inspire the industry to accelerate custom silicon iterations.

2. Product Technical Specifications and Performance Highlights (including detailed performance parameters)

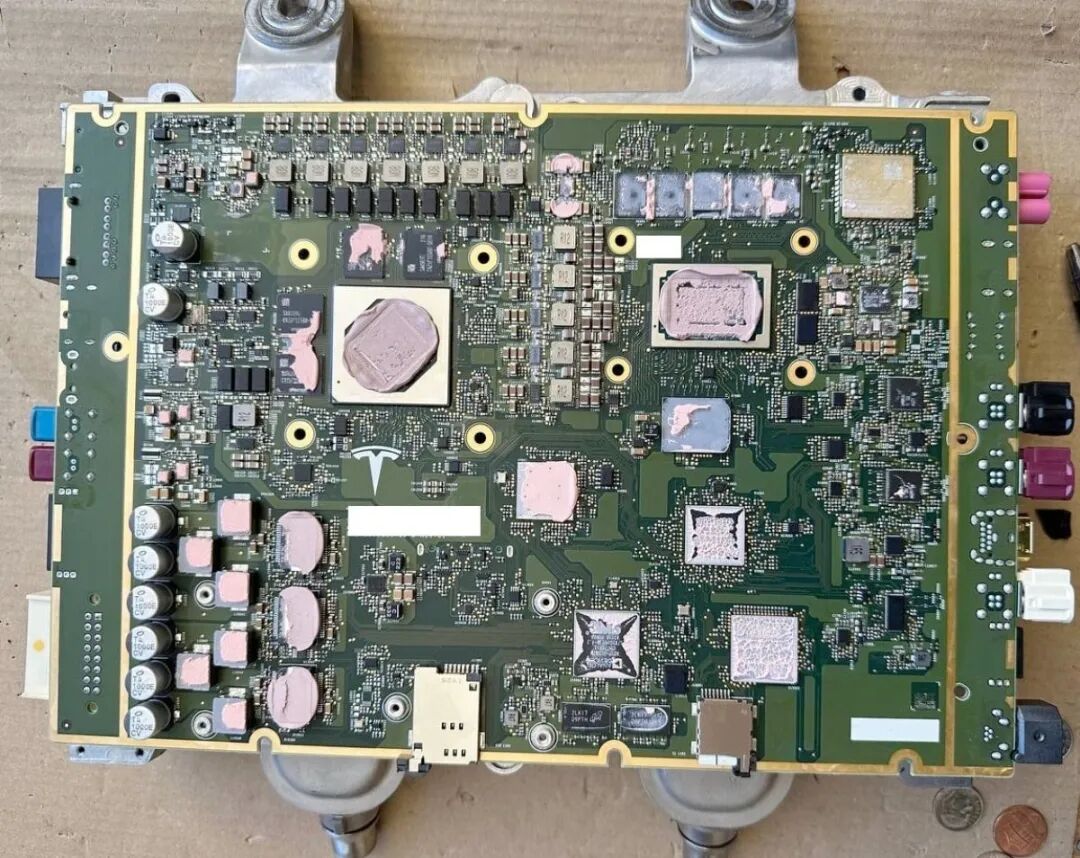

The AI5 is a dedicated edge inference chip optimized for Tesla's proprietary AI software stack (neural networks), with an emphasis on maximizing the utilization of every circuit. It adopts a half-reticle design (as detailed in our previous article, "Technical Details and Implications of Tesla's AI5 Chip") and eliminates legacy blocks such as the traditional GPU and the image signal processor (ISP), so that the entire chip effectively functions as an AI inference GPU. The design is intended to be manufacturable on both TSMC and Samsung processes. Software and hardware are co-designed, so performance on Tesla's specific workloads is expected to far exceed that of general-purpose chips.

First, let's revisit the baseline parameters of the AI4, the current HW4 dual-SoC system (based on industry teardowns and Musk-confirmed data):

Memory Capacity: Approximately 16 GB (GDDR6)

Memory Bandwidth: Approximately 384 GB/s

Useful Compute (INT8 equivalent): Approximately 400-500 TOPS (dual-SoC system, industry estimates)

Raw Compute: No separate figure is given; the useful compute estimate above serves as the baseline.

Power Consumption: Typical 80-100 W, peak around 160 W

Next are the detailed performance parameters of the AI5, inferred from Musk's latest statements and industry leak estimates, with a direct comparison to the AI4:

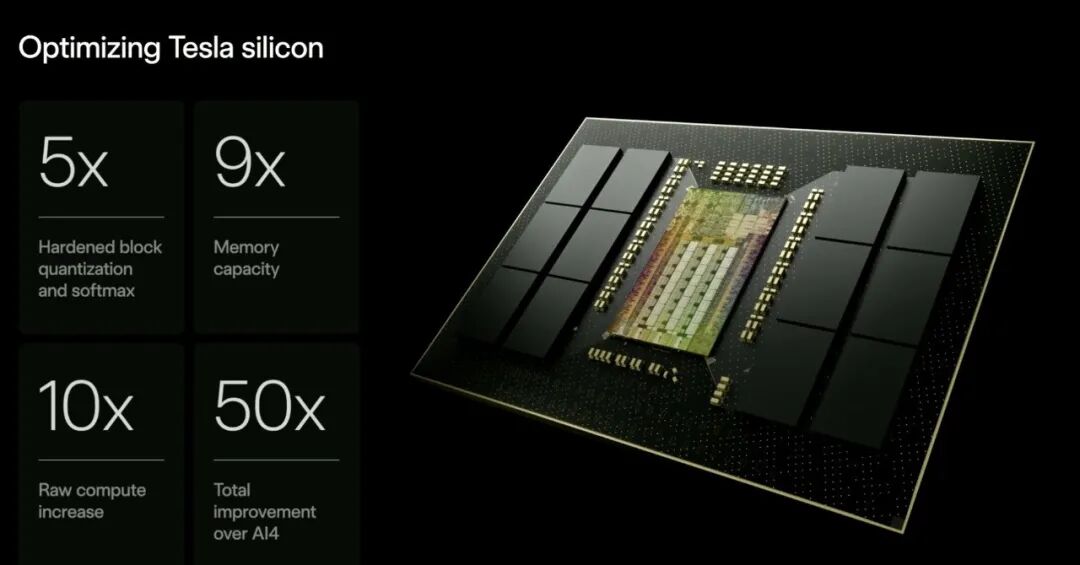

Useful Compute: A single AI5 is approximately 5 times that of the AI4 dual-SoC, i.e., around 2000-2500 TOPS (industry estimates, comparable to NVIDIA H100 under Tesla's specific workloads).

Raw Compute: Approximately 8 times that of the AI4 dual-SoC.

Memory: Approximately 9 times, i.e., around 144 GB (HBM-level, supporting larger neural networks).

Bandwidth: Approximately 5 times, i.e., around 1920 GB/s.

Key Optimization Metrics: Up to 40-50 times improvement in certain scenarios, primarily due to hardware-native acceleration of operations like SoftMax (the AI4 requires about 40 emulation steps, while the AI5 completes the operation in a few steps), mixed-precision support, and fine-grained quantization optimization.

Energy Efficiency and Power Consumption: A single SoC is described as roughly equivalent to NVIDIA Hopper (H100), with dual SoCs approaching Blackwell (B100/B200), at far lower cost and with significantly lower power consumption than comparable GPUs. The Optimus configuration is optimized for around 250 W, while peak TDP may approach 800 W (higher than the AI4's peak, but with better efficiency).
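The SoftMax point in the list above is easier to appreciate with the operation written out. The following is a minimal sketch of the standard numerically stable softmax in plain Python; it is textbook math, not Tesla's hardware implementation or its emulation sequence on the AI4. It shows that one softmax call involves a max reduction, an exponential per element, a sum reduction, and a division per element, which is the kind of multi-stage work that becomes costly when hardware has to emulate it.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax over a list of scores."""
    # Stage 1: max reduction, used to avoid overflow in exp().
    peak = max(logits)
    # Stage 2: one exponential per element (the expensive part).
    exps = [math.exp(x - peak) for x in logits]
    # Stage 3: sum reduction over all exponentials.
    total = sum(exps)
    # Stage 4: one division per element to normalize.
    return [e / total for e in exps]


probs = softmax([1.0, 2.0, 3.0])
print([round(p, 4) for p in probs])
```

Attention layers call this on every row of the attention score matrix, so a dedicated hardware path for it can matter far more than the headline compute multiplier suggests.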

Industry comparisons suggest that the AI5 is highly cost-effective within Tesla's ecosystem, with early estimates putting inference costs at as little as one-tenth of NVIDIA's.
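The AI5 figures quoted above follow directly from applying the stated multipliers to the AI4 baseline. The sketch below reproduces that arithmetic. The dictionary keys and the `scale` helper are illustrative names, not anything from Tesla. The TOPS-per-watt lines go one step further than the article: pairing the full useful compute with the roughly 250 W Optimus figure is an assumption made here for illustration, so those ratios should be read as a rough indication only.

```python
# AI4 dual-SoC baseline as listed in the article (industry estimates).
AI4 = {
    "memory_gb": 16,
    "bandwidth_gb_s": 384,
    "useful_tops": (400, 500),  # low and high estimate
}

# Approximate multipliers quoted for a single AI5.
MULTIPLIER = {"memory_gb": 9, "bandwidth_gb_s": 5, "useful_tops": 5}


def scale(value, factor):
    """Scale a single number or a (low, high) range by factor."""
    if isinstance(value, tuple):
        return tuple(v * factor for v in value)
    return value * factor


ai5 = {key: scale(AI4[key], MULTIPLIER[key]) for key in AI4}
print(ai5)

# ASSUMPTION: efficiency is estimated by dividing useful compute by the
# typical power figures; the article does not state TOPS/W directly.
ai4_typical_watts = (80, 100)
ai5_optimus_watts = 250

ai4_tops_per_watt = (
    AI4["useful_tops"][0] / ai4_typical_watts[1],  # pessimistic pairing
    AI4["useful_tops"][1] / ai4_typical_watts[0],  # optimistic pairing
)
ai5_tops_per_watt = tuple(t / ai5_optimus_watts for t in ai5["useful_tops"])

print(ai4_tops_per_watt, ai5_tops_per_watt)
```

Because the multipliers are themselves approximate, the derived values carry the same uncertainty as the inputs.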

However, risks in technology and performance realization do exist. Actual benefits are highly dependent on collaborative optimization with the software stack. If neural networks do not scale accordingly or if silicon bugs emerge, performance improvements may fall short of expectations. Power consumption and thermal control in edge inference scenarios also pose challenges in real-world vehicle and Optimus deployments. Core limitations of FSD (such as camera performance in adverse weather) cannot be fully resolved by the chip alone; the AI5 serves as an enabler rather than a panacea.

These potential shortcomings are precisely the areas Tesla continuously addresses through closed-loop iteration. Overcoming them will further demonstrate the unique value of vertical integration in edge AI scenarios, inspiring more companies to explore software-defined hardware paths.

3. Manufacturing Capacity and Production Plans

Tesla has adopted a dual-foundry strategy (U.S. localization): TSMC's Arizona plant + Samsung's Texas plant for dual-source production. Initially, TSMC will be the primary source (possibly utilizing the 3nm N3P process), with Samsung serving as a secondary supplier. The objective is to mitigate supply chain risks while promoting the revival of U.S. semiconductor manufacturing. The capacity ramp-up path is clear:

Late 2026: Engineering samples, small-batch trial production;

2027: High-volume production will commence, initially meeting Cybercab/Robotaxi refresh and Optimus's initial demand, then gradually transitioning passenger vehicle production lines.

In the long term, Tesla's annual chip demand could reach 200 billion, with the AI5 becoming the highest-volume AI chip. Tesla also plans to rely on Terafab (see "Terafab Interpretation: Computing Power is Escaping Earth") and other self-built or partnered foundries to support GW-scale operations.

However, risks in manufacturing capacity and yield ramp-up cannot be overlooked. While the dual-factory strategy reduces geopolitical risks, yield stabilization, process differences, and ramp-up challenges at new facilities persist. Samsung has faced issues at advanced nodes in the past, and TSMC's Arizona plant, as a new facility, also faces initial production uncertainties. Global memory shortages and supply chain bottlenecks may further constrain the transition pace. Tesla needs to stockpile millions of chips for its vehicle production lines, and any interruptions could lead to delivery delays.

These risks are a microcosm of the industry's supply chain reshaping. If Tesla can efficiently ramp up, it will not only benefit itself but also drive explosive demand for upstream materials and equipment, becoming a landmark case for the revival of U.S. semiconductors.

4. Commercialization and Business Development

The AI5 primarily serves Tesla's own businesses: FSD vehicles, Robotaxi (Cybercab is expected to switch in 2027), Optimus robots, and Dojo training clusters. There are no public plans for external sales or licensing, but production at this scale may still give Tesla ecosystem-level influence. The chip is not merely a hardware upgrade but the cornerstone of Tesla's transformation from an electric vehicle company into an AI and robotics platform company. Musk has repeatedly emphasized its existential significance: supporting rapid software iteration, reducing reliance on external GPUs, and potentially unlocking significant gross margin opportunities.

Capital expenditures and financial pressure are another critical consideration. AI5 design and Terafab self-building plans (estimated at $20-25 billion) significantly increase spending. Against the backdrop of weak EV demand and margin pressure, such capital-intensive investments may dilute short-term profitability.

Supply chain dependence and the drawbacks of a closed ecosystem also matter. Tesla will continue to rely on TSMC and Samsung in the short term, and while a closed ecosystem ensures control, it struggles to achieve the scale effects of an open platform. These challenges test Tesla's capital allocation discipline. If the AI5 launches successfully, it will unlock much of the commercial potential of Robotaxi and Optimus, demonstrate the feasibility of combining vertical integration with massive in-house use, and give the industry an example of moving from buying chips to making them.

5. Industry Impact and Research Outlook

The AI5 strengthens Tesla's lead in AI inference for vehicles and robots, differentiating it from NVIDIA by focusing on low-power, cost-effective edge scenarios, and accelerating the industry trend toward closed loops of custom silicon and software. Meanwhile, Tesla's close ties with TSMC and Samsung, together with their U.S. foundry footprint, are likely to shift where supply chain activity concentrates.

Strategic execution and external competition risks also warrant attention. Tesla has limited semiconductor manufacturing experience, and plans like Terafab represent high-risk gambles. NVIDIA still dominates the training side, and if the AI5 cannot sustain its lead, it may be surpassed by hybrid solutions. Regulatory, geopolitical, and talent factors are additional variables. In quantitative terms, the probability of delay between tape-out and mass production for automotive-grade AI accelerators is estimated at around 30-50%, and Tesla's HW3 and HW4 faced similar challenges in the past.

Despite these risks and drawbacks, the AI5's progress remains highly inspiring. It signifies that aggressive vertical integration, while accompanied by execution pressure, may forge a competitive moat that is difficult to replicate. In 2027, Robotaxi and Optimus will be the first to benefit from larger model real-time inference, with FSD capabilities expected to leap significantly. In the medium to long term, a 9-month iteration cycle + massive capacity may enable Tesla to form a moat in humanoid robots and autonomous driving scale-up. History has shown that Tesla consistently achieves breakthroughs at seemingly high-risk junctures. After successful mass production of the AI5, Tesla is poised to become one of the world's largest self-producing and self-using AI chip enterprises and drive the entire industry toward a paradigm shift from general-purpose computing to specialized, edge-optimized approaches.

Summary:

The tape-out of the AI5 is the most critical milestone for Tesla's AI hardware in 2026. Technically, it represents a leapfrog upgrade over the AI4: about 5 times the useful compute (around 2000-2500 TOPS), 8 times the raw compute, 9 times the memory (around 144 GB), and 5 times the bandwidth (around 1920 GB/s). On capacity, it secures a dual-foundry supply chain based in the U.S. The roadmap points to high-volume production in 2027 followed by 9-month iterations, and commercially it paves the way for large-scale Robotaxi and Optimus deployments.

From an industry research perspective, it not only increases the AI weight in Tesla's valuation but may also reshape the global automotive AI chip supply chain landscape. Risks and challenges coexist, but it is precisely these high-risk, high-reward traits that inspire Tesla to continuously iterate and provide valuable insights for global AI hardware practitioners. True innovation often emerges from the ultimate test of execution and resilience. Subsequent tracking of sample verification in late 2026, 2027 mass production yields, and AI6 progress will serve as key signals for risk mitigation and opportunity realization. Let us witness the acceleration of this physical AI era together.

*Unauthorized reproduction or excerpting is strictly prohibited.*