OpenAI and Anthropic: If You're My Brother, Come and Challenge Me

04/24 2026

When facing a 'Bro,' you must pursue relentlessly and strike with lethal intent.

Author | Xue Xingxing

Editor | Zhang Wen

Cover | Generated by Nano Banana

Chinese people love to talk about brotherhood, but often, it's the brothers who are the most ruthless. In ancient times, there was the Xuanwu Gate Incident; today, there's 'Brother Dong doesn't deserve to be my brother.' Kuaishou streamers like to use the term 'laotie,' which means 'iron buddy,' but it's often these 'iron buddies' who are the most severely exploited in live-streamed shopping: the closer the relationship, the more ruthless the exploitation.

Apart from Brother Dong, tech moguls rarely talk about brotherhood. Perhaps because they understand that while brotherly affection is precious, stock options are even more valuable. Mr. Yu should have a deep understanding of this, as not only have his decade-long comrades proven unreliable, but even his younger brothers are untrustworthy.

Westerners rarely shout 'Bro,' and their emotions seem the most distant. Probably only black guys who don't speak the same language like to say 'Bro.' With 'Bro' this and 'Bro' that, amplified by local hip-hop 'laotie' culture, now even sisters on Xiaohongshu are saying 'Bro'—to hedge against the 'jimei' (sister) that 'Bros' mock all day.

True 'Bros' never say 'Bro' out loud; they stab you in the back instead. OpenAI founder Sam Altman might be the person who understands the lethal power of 'Bro' best in the world today. Loved ones, close friends, brothers—they all sound nice in words. When facing a 'Bro,' you must pursue relentlessly and strike with lethal intent.

Last night, Sam Altman's former 'Bro' and now rival, Dario Amodei, released Anthropic's latest flagship model, Claude Opus 4.7, just over two months after their previous model release.

Anthropic Releases Claude Opus 4.7

However, considering the numerous discussions in the community over the past few days about the perceived intelligence degradation of Claude Opus 4.6, the release of the new model was not entirely unexpected. Of course, Chinese AI media outlets still performed steadily, once again claiming that '700 million people are about to lose their jobs.'

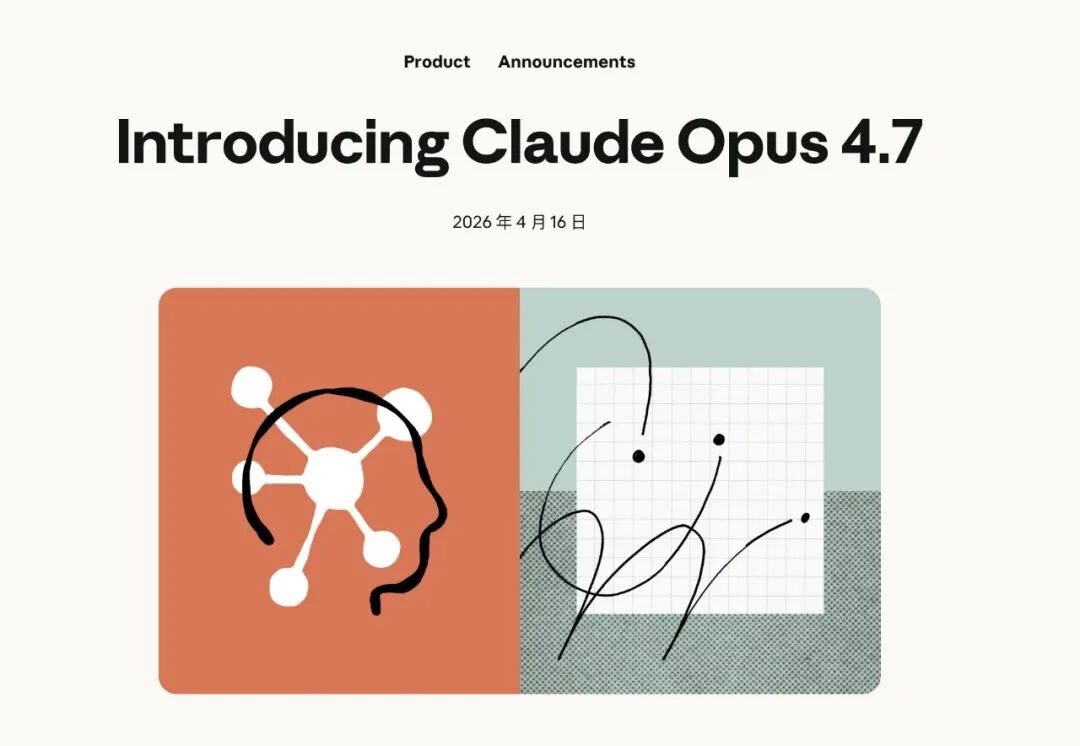

What was truly surprising was that just two or three hours after the release of Claude Opus 4.7, OpenAI announced a major update to its coding application, Codex, declaring 'Codex for (almost) everything.'

OpenAI Releases Updated Version of Codex

Not only that, but many ChatGPT users also discovered that OpenAI was conducting a large-scale internal test of the GPT-image-2 model, with effects that were quite impressive. X was flooded with screenshots of female streamers generated by GPT.

To be honest, April 16th wasn't a particularly significant date for either OpenAI or Anthropic. OpenAI's rush to announce the new Codex version on the same day as Anthropic's new model release seemed to carry a hint of rivalry and attention-grabbing. Coupled with the fact that the performance of Claude Opus 4.7 didn't fully satisfy developers, Codex's counter-update seemed even more effective.

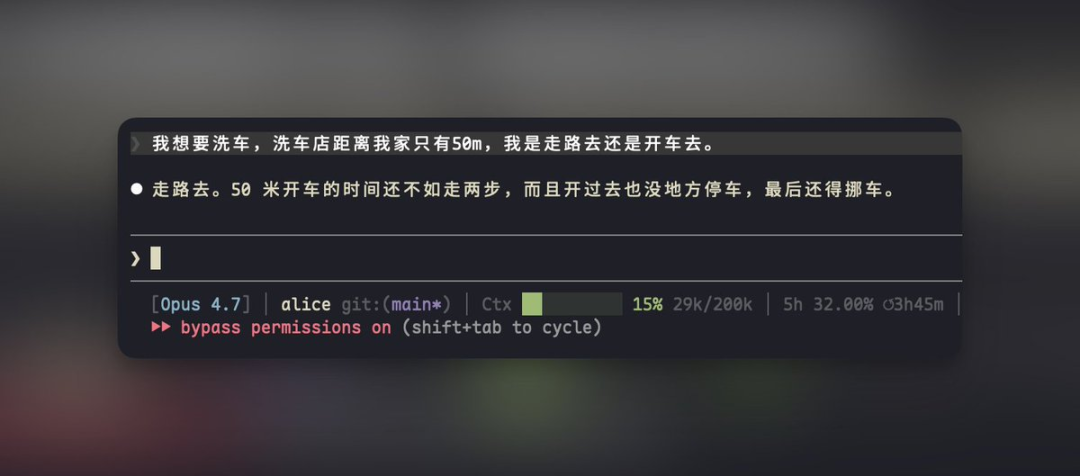

Many developers found that Opus 4.7 couldn't recognize car wash traps

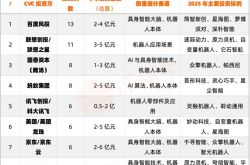

Forget the mindless hype from Chinese AI media for a moment; Claude Opus 4.7 wasn't a comprehensive model iteration. The upgrades in Opus 4.7 focused on coding, visual capabilities, and Agent workflows, but some metrics were weaker than those of the previous model, Opus 4.6. Against GPT-5.4 and Gemini 3.1 Pro, it traded wins and losses without a decisive advantage.

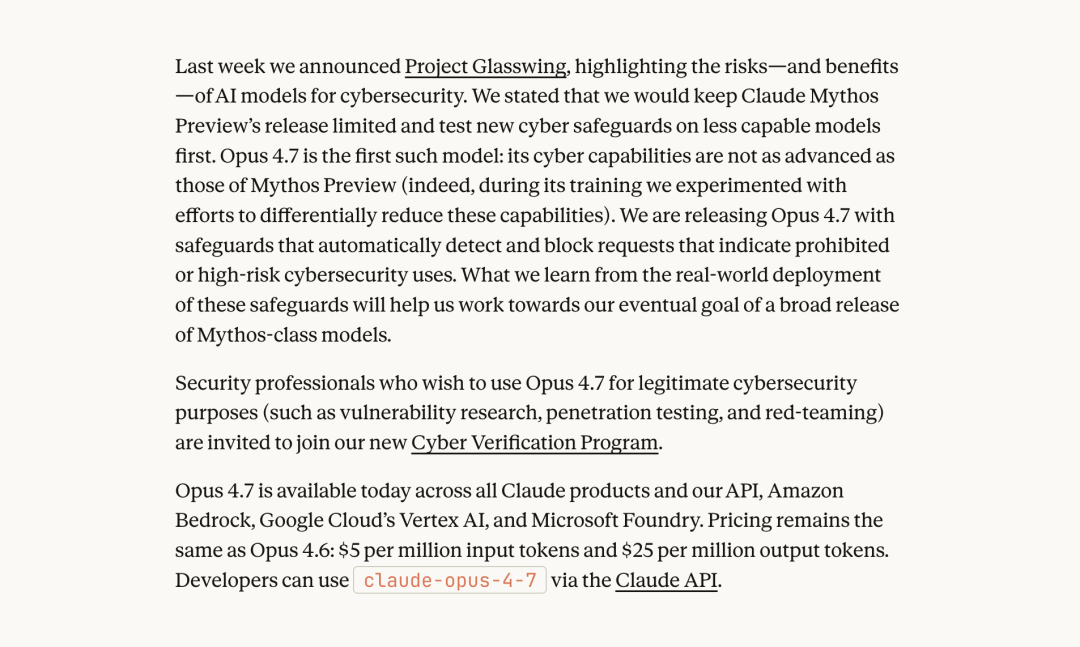

Even Anthropic themselves admitted that Opus 4.7 wasn't their most powerful model; their pride still rested with the yet-to-be-released Mythos Preview.

Anthropic claims to have tried multiple methods to reduce the cybersecurity capabilities of Opus 4.7

However, because Mythos Preview was deemed too powerful, Anthropic believed that releasing it hastily 'would have serious consequences for the economy, public safety, and national security,' so they decided to only open it up to a few partners for testing temporarily. The newly released Opus 4.7 was a version with intentionally reduced cybersecurity capabilities.

In comparison, while OpenAI maintained its usual sluggish pace and didn't release any new models, the latest Codex update, which added background computer control, a built-in browser, a memory feature, and access to numerous plugins, delighted many developers. Some even called it the desktop version of Doubao Mobile.

Some jokingly called this 'the revenge of the Father of Lobster,' Peter Steinberger, on Anthropic. When Steinberger first released the Lobster project, he named it Clawdbot, but later changed it to OpenClaw after receiving trademark complaints from Anthropic. In February of this year, OpenAI hired Steinberger. The latest Codex update also bears traces of OpenClaw.

Some time ago, Anthropic seemed as productive as a donkey in a work team, releasing almost one new feature every two days and putting pressure on OpenAI. This time, OpenAI's Codex update on the day of Anthropic's new model release stole the spotlight.

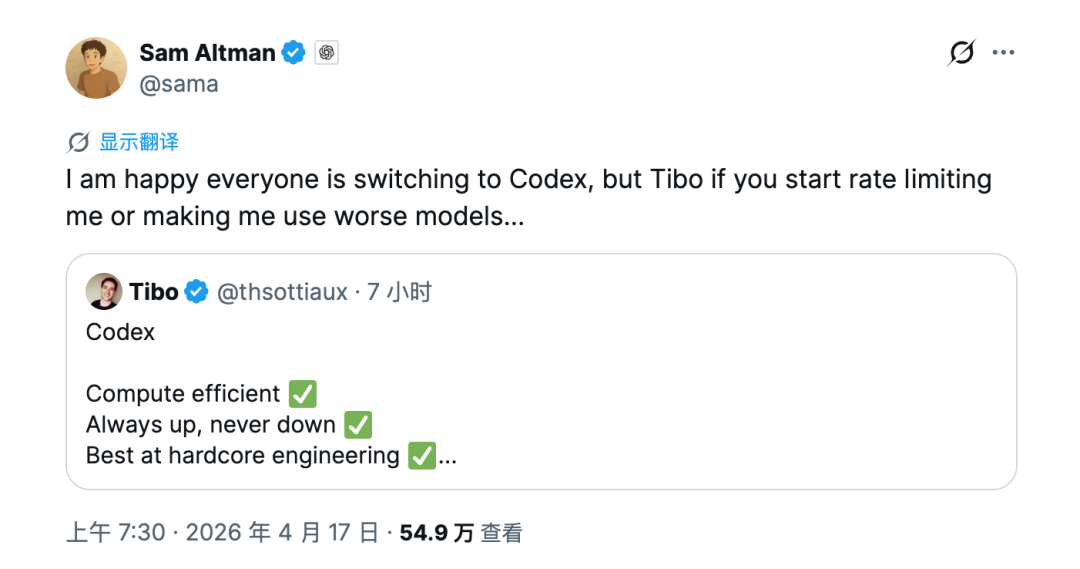

Sam Altman also seemed to finally emerge from the shadow of the attack, even finding time to joke on social media with Codex leader Thibault Sottiaux, 'I'm glad everyone is switching to Codex, but Tibo, if you start rate-limiting me or making me use worse models...'

Sam Altman's Tweet

Onlookers unaware of the inside story might not fully understand the love-hate rivalry between OpenAI and Anthropic. Anthropic's founders, the Amodei siblings, were originally senior executives at OpenAI, with brother Dario Amodei serving as Vice President of Research.

However, in 2021, the Amodei siblings and Sam Altman disagreed on OpenAI's development direction. After failed negotiations, they exited angrily and founded Anthropic.

To use a familiar Chinese saying, Anthropic can be seen as OpenAI's sibling after a family split. After all, apart from the Amodei siblings, Anthropic's early founding team also came almost entirely from OpenAI.

Like all siblings who go their separate ways, Anthropic has been at odds with OpenAI since its inception, clashing on every front. While there are certainly ideological differences among the founding teams, such fierce rivalry and ongoing battles are rare.

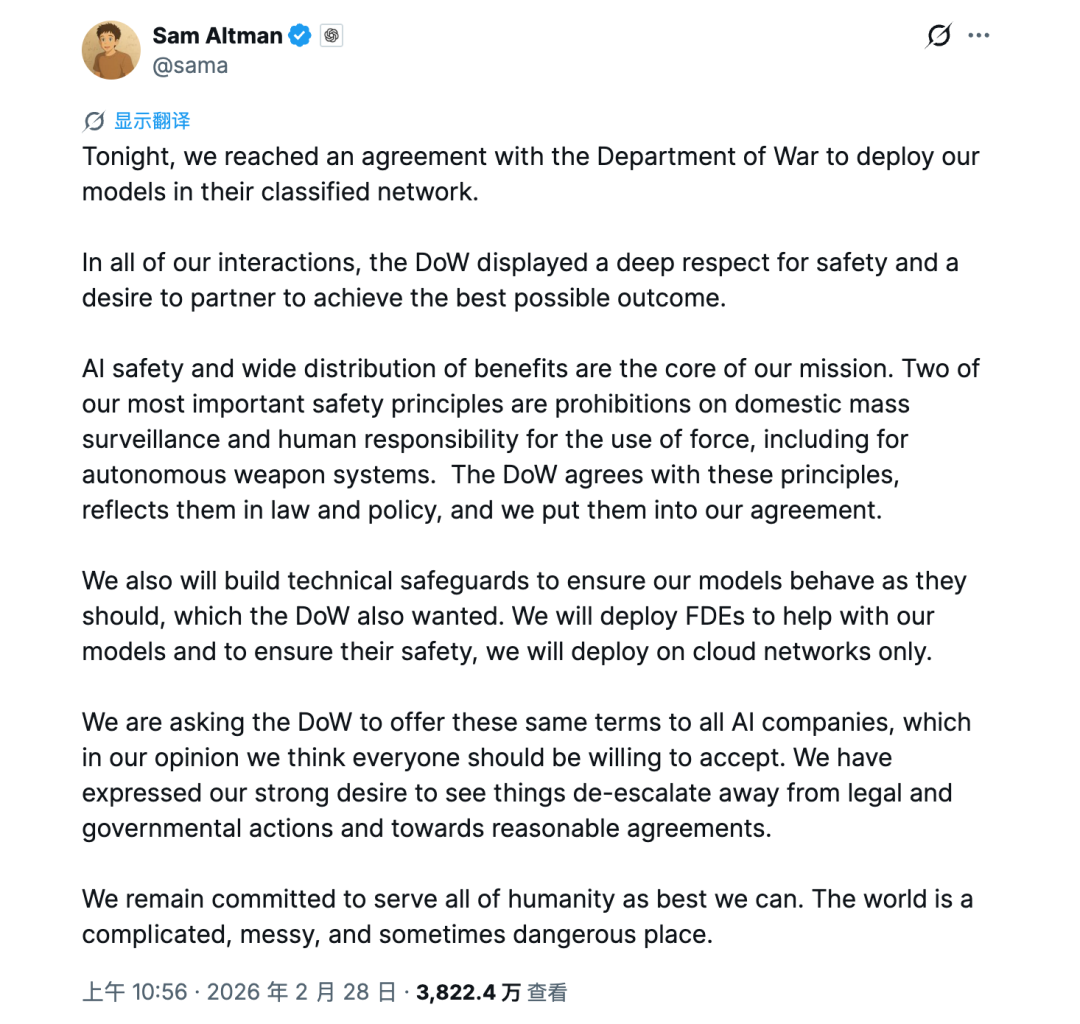

For example, earlier this year, Anthropic clashed with the U.S. Department of Defense. Negotiations broke down, the Trump administration banned the company, and Anthropic lost hundreds of millions of dollars in contracts. Shortly after, the Trump administration directly designated Anthropic as a 'supply chain risk.' This was the first time a U.S.-based company had been designated as such, and Dario Amodei, who had long promoted 'China threat' theories, finally got a taste of what it's like to be a Chinese company. Trump's original words were cruder: 'I fired (them) like dogs.'

Logically speaking, facing the iron fist of the Trump administration should have been a moment for U.S. AI peers to unite and fight against the evil capitalist government. But just hours after Anthropic's negotiations broke down, Sam Altman announced that OpenAI had reached a cooperation agreement with the U.S. Department of Defense. OpenAI even emphasized in its press release that, compared to Anthropic's previous cooperation agreement, its own solution had more safety measures in place.

Sam Altman Announces OpenAI's Cooperation with the U.S. Department of Defense

What is a true brother? A true brother is someone who steps up when his brother is in trouble and then stabs him twice. Anthropic founder Dario Amodei was furious at OpenAI's opportunistic move and, inside his own company, mocked OpenAI's statement as hypocritical and showy. He even called some of Sam Altman's public remarks outright lies and emotional manipulation.

It's not just that Sam Altman was too quick to strike at his former brother; Anthropic itself is no saint. Dario Amodei constantly mocks OpenAI, both openly and covertly.

For example, late last year, after Sam Altman raised a red alert within the company due to the threat from Gemini, Dario Amodei quickly stated that Anthropic didn't need red alerts to drive product releases. He also mocked some companies in the industry for 'YOLOing' (going all in) and blindly overbuilding data centers—a critique widely seen as aimed at OpenAI's massive investments.

Earlier this year, Anthropic's first advertisement during the U.S. Super Bowl directly targeted OpenAI. In the ad, Anthropic depicted a future where AI ads seamlessly invaded people's lives. For example, when you ask someone in the park how to exercise, after giving a mechanical AI-style response, they suddenly start reciting an advertisement for insoles, reminiscent of a Black Mirror episode.

At the end of the ad, Anthropic displayed a bold line: 'Ads are coming to AI. But not to Claude.' Considering that OpenAI had just started incorporating ads into ChatGPT, it was clear who Anthropic was targeting.

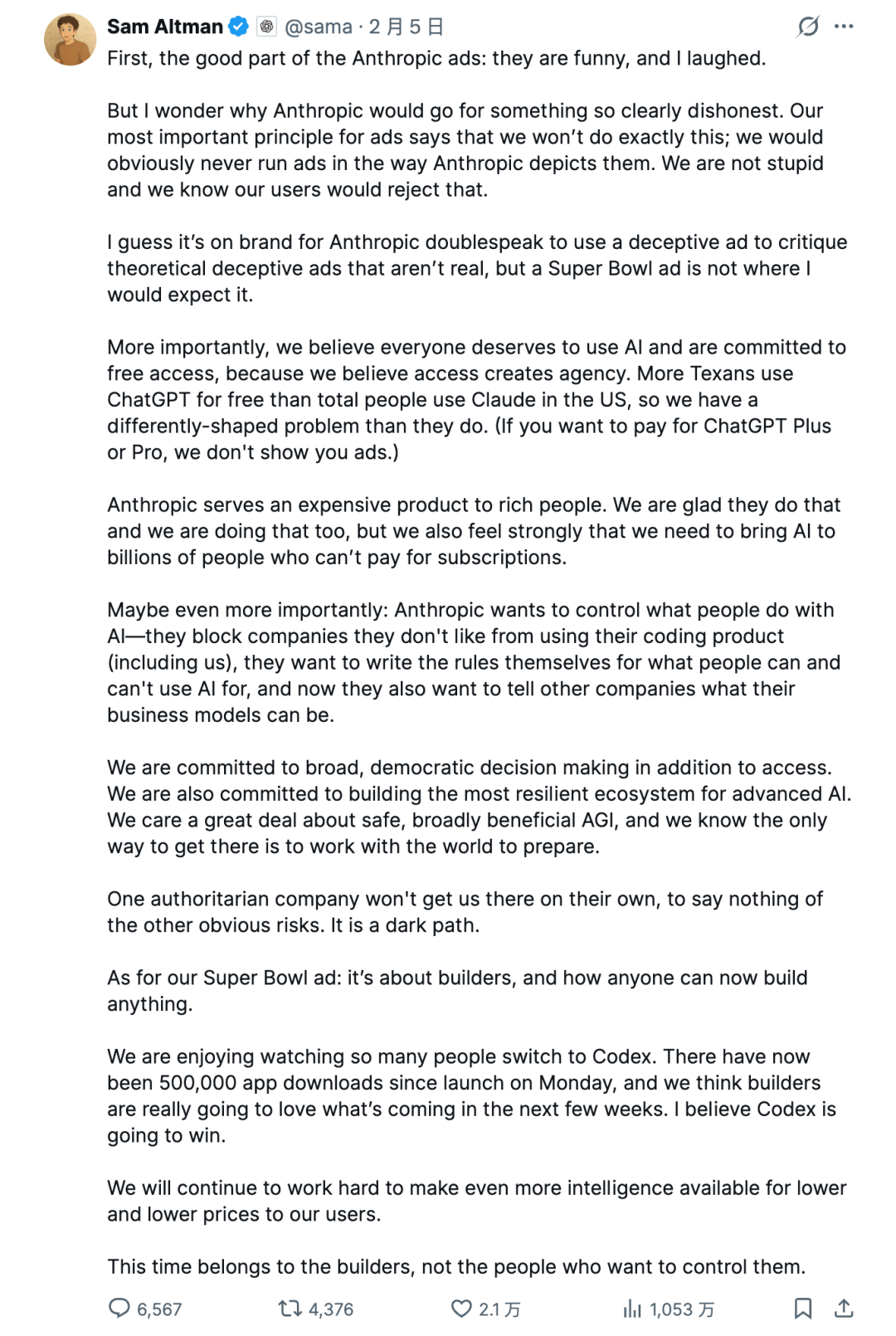

Sam Altman, unprompted, posted a lengthy tweet on X, emphasizing, 'We obviously won't place ads in the way Anthropic depicted.' He said that using a misleading ad to criticize non-existent, hypothetical misleading ads was very much in line with Anthropic's usual double standards.

Sam Altman's Tweet

The two former good brothers and close 'Bros' have almost reached the point of enmity. Sometimes, the two old friends don't even bother to put on a show, refusing even physical contact when attending events together.

At the India AI Summit in February, there was a global tech leaders' group photo where participants had to hold hands and raise their arms to symbolize unity. By coincidence, Sam Altman and Dario Amodei were standing together. By tacit agreement, neither held the other's hand; they only touched elbows and raised their fists. It was said that they had zero eye contact and zero physical interaction throughout.

Now, with both OpenAI and Anthropic accelerating their preparations for IPOs, competition and rivalry between the two companies are intensifying. Marketing campaigns, product updates, and the verbal sparring between founders are just minor skirmishes; the real battle is for the title of the 'first U.S. AI stock.'

In recent months, U.S. media have closely tracked the two companies' IPO preparations, and every move either company makes affects the confidence of potential investors.

When Anthropic announced that its annualized revenue had exceeded $30 billion, media outlets interpreted this as Anthropic having surpassed OpenAI. OpenAI executive Denise Dresser quickly pushed back internally, accusing Anthropic of deliberately inflating its figures by counting distribution income from cloud service providers as its own revenue, which she said overstated annualized revenue by approximately $8 billion.

Barring any unexpected developments, both OpenAI and Anthropic are expected to go public within the year, potentially marking the largest IPOs in history, bar none. As the final IPO showdown approaches, the rivalry between OpenAI and Anthropic will only grow more intense.

There are only certain moments when OpenAI and Anthropic stand side by side.

In February of this year, Anthropic accused three Chinese large model companies, DeepSeek, Moonshot AI (Yuezhi Anmian), and MiniMax, of launching 'industrial-scale distillation attacks' against its Claude model. During the same period, in a report submitted to the U.S. Congress, OpenAI similarly accused DeepSeek of distilling GPT series models through obfuscation tactics.

The two companies truly are good 'bros' in the U.S.—fighting behind closed doors but presenting a united front in public.

© All rights reserved by Shangshan. Reproduction without authorization is prohibited.