An Alternative Reading of the AI Bubble Thesis: The Sustainability of High Valuations from the Perspective of Capital Allocation Trends

04/24 2026

Graphic & Text | Tang Sister

Many investors following the AI sector may have noticed that numerous Agent products on the market are converging in terms of both business models and product positioning.

On April 9, 2026, OpenAI set the ChatGPT Pro tier at $100 per month, precisely aligning with the price point of Anthropic's Claude Max, launched a year earlier, with an identical three-tier structure of $20/$100/$200. Five days later, on April 14, Bloomberg revealed that Anthropic had received funding offers valuing the company at $800 billion, nearly on par with OpenAI.

Does something seem amiss? Logically, two competing companies in their early growth stages should strive to differentiate themselves or leverage unique advantages for growth. Yet the rivalry between these two firms increasingly resembles the battle between Uber and Didi, though it has not yet reached the price-war stage.

This product-side homogenization is only the surface; the pricing mechanism underneath warrants closer examination.

Starting in 2014, Uber and Didi burned through billions in subsidies while their valuations soared from billions to hundreds of billions over the same period; the food delivery and bike-sharing sectors followed the same playbook. In those cases, valuations rested on a narrative about the future: the monopoly rents that would accrue to the last player standing. In AI's case, the $800 billion valuation is not a bet on a future monopoly, since everyone knows these two companies are unlikely to merge.

If not for monopoly, then for what?

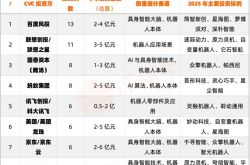

SoftBank, NVIDIA, Amazon, Middle Eastern sovereign funds, Tiger Global, and Fidelity hold trillions in capital that must be deployed into AI, driven by benchmarks, strategic narratives, and investor commitments. Yet within the AI sector, only a handful of independent targets can absorb tens of billions in a single investment: OpenAI, Anthropic, and xAI (the last of which merged into SpaceX in February 2026).

With abundant capital and few deployable targets, capital allocation pressures mount.

Thus, valuations are not derived from company fundamentals (under which neither would justify $800 billion). Trillions must be invested, and valuations rise to meet funding needs, with differentiation or winners/losers being secondary. As long as a target can absorb the capital, the valuation holds.

Once this is understood, the product-side strategies make sense. Foundation model capabilities are rapidly homogenizing, open-source large models are closing the gap, and enterprise clients demand functional parity and substitution rights in their contracts. High-value paid scenarios are limited to coding and deep research, which forces both companies into the same space with nearly identical product moves.

If you're betting long on AI, you must ask who ultimately pays. The ultimate payers behind AI infrastructure, power companies, private credit, and cloud providers are few. The real risk lies in where the money flows, who retains it, and which segment collapses first.

01 Everything Seems Fine

A key insight: Fundamental analysis inadequately reflects company risks in this AI wave, especially within investment frameworks focused on the AI supply chain.

Oracle is one of the most dramatic turnarounds in the AI cycle. Its Q3 FY26 earnings (released March 10) showed cloud infrastructure revenue surging over 80% YoY, with contract reserves hitting an all-time high, prompting a ~10% stock jump overnight. For a legacy database company, this marks a complete transformation and a second growth curve long awaited by the market.

However, in September 2025, Oracle signed a five-year, $300 billion cloud services contract with OpenAI. That is equivalent to $60 billion annually and accounts for over half of Oracle's total contract reserves. Yet OpenAI's operating cash flow remained negative, and the company relied on its next financing round to cover day-to-day expenses.

To fulfill contracts with major clients like OpenAI, Oracle announced in February 2026 it would raise $45–50 billion via debt and equity. A supplier leveraging itself to serve clients with unstable cash flows means Oracle's revenue narrative for years hinges on a money-losing company fulfilling payments.

In most supply chains, contract sizes rarely far exceed what current cash flows can cover. Automotive orders span a quarter to a year, in line with vehicle delivery cycles; multi-year enterprise software contracts match predictable IT budgets; internet platforms' ad and subscription revenues are settled in the current period, so contract sizes track current income. Fundamental analysis does not need to trace each layer's counterparty or credit basis, because contract sizes naturally align with operational rhythms.

This misalignment isn’t unique to Oracle, nor a strategic choice or accounting quirk—it’s pervasive across the AI supply chain.

What if capital runs short? At the 1999 internet bubble peak, Lucent's revenue neared $38 billion, yet it extended $8.1 billion in vendor financing to clients, roughly a fifth of revenue. Nortel and Cisco did the same, lending to cash-strapped telecom operators so they could buy their equipment. McKinsey counted $25.6 billion in client loans across nine telecom equipment suppliers.

In September 2025, NVIDIA pledged $100 billion to OpenAI, mostly for purchasing NVIDIA's own AI chips. Four months later, Jensen Huang clarified that it was "never a firm commitment" and would be assessed round by round based on deployment progress. This mirrors Lucent's approach in 1999: staged funding intentions were marketed at their full headline value until client defaults triggered the collapse.

When the bubble burst in 2001, clients defaulted en masse. The bad debt ratio on Lucent's loan portfolio soared from single digits to over 40%, and dozens of telecoms went bankrupt in 2001–2002. Lucent's revenue collapsed from ~$38 billion to $8 billion by 2006, and the company was sold to Alcatel at $3/share; Nortel's stock plummeted from $86.75 to $0.18, ending in bankruptcy.
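To put the scale of these figures in proportion, the percentages can be recomputed from the numbers quoted above. This is a minimal sketch in Python; the helper `pct_change` and the variable names are illustrative assumptions, and the inputs are simply the rounded figures from the text.

```python
def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    if start == 0:
        raise ValueError("start must be non-zero")
    return (end - start) / start * 100.0


# Figures quoted in the text (revenue in billions of USD, share price in USD).
vendor_financing_share = 8.1 / 38.0 * 100.0    # Lucent client loans vs. peak revenue
lucent_revenue_change = pct_change(38.0, 8.0)  # peak revenue to 2006 revenue
nortel_price_change = pct_change(86.75, 0.18)  # peak share price to trough

print(f"Lucent vendor financing: {vendor_financing_share:.1f}% of revenue")
print(f"Lucent revenue change:   {lucent_revenue_change:.1f}%")
print(f"Nortel share price:      {nortel_price_change:.1f}%")
```

On these inputs, client loans come to roughly 21% of Lucent's peak revenue, revenue later fell by about 79%, and Nortel's share price lost about 99.8% of its value.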

The AI supply chain is repeating this script. Multiple key nodes lock in future growth with contracts far exceeding current cash flows. Among compute providers, CoreWeave had ~$5.1 billion in 2025 revenue but ~$88 billion in contract reserves by April 2026 (about two-thirds from Meta and OpenAI).

Of the top two AI startups, OpenAI alone has cumulative contract commitments of $1.15 trillion across seven suppliers (Broadcom, Oracle, Microsoft, NVIDIA, AMD, Amazon, CoreWeave), yet its annualized revenue is just $24 billion.
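One way to make this gap concrete is a coverage multiple: contracted commitments divided by current annual revenue. The sketch below uses only the figures quoted above; the function name `coverage_multiple` and the data layout are illustrative assumptions. Note that the two cases are not symmetric: CoreWeave's reserves are money owed to it, while OpenAI's commitments are money it owes, so this is a rough comparison of scale rather than a rigorous metric.

```python
# Figures are the ones quoted in the text, in billions of USD.
# "commitments" means contract reserves for CoreWeave (amounts owed to it)
# and cumulative supplier commitments for OpenAI (amounts it owes).
nodes = {
    "CoreWeave": {"commitments": 88.0, "annual_revenue": 5.1},
    "OpenAI": {"commitments": 1150.0, "annual_revenue": 24.0},
}


def coverage_multiple(commitments: float, annual_revenue: float) -> float:
    """Return how many years of current revenue the commitments represent."""
    if annual_revenue <= 0:
        raise ValueError("annual_revenue must be positive")
    return commitments / annual_revenue


multiples = {
    name: coverage_multiple(d["commitments"], d["annual_revenue"])
    for name, d in nodes.items()
}

for name, multiple in multiples.items():
    print(f"{name}: commitments are about {multiple:.1f}x current annual revenue")
```

On these inputs the multiples come out at roughly 17x for CoreWeave and roughly 48x for OpenAI, far beyond the quarter-to-a-year horizons described for ordinary supply chains.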

Fundamental analysis obscures the gap between contract sizes and current cash flows, as contracts appear only as "contract reserves" on reports—not audited assets or income statement items. When issues arise, recovery depends on upstream payments; ultimately, only self-sufficient giants like Google and Microsoft can weather the storm, with others reliant on private markets for liquidity.

Thus, while individual nodes' financials appear sound, they share a hidden assumption: Capital will keep flooding into AI at current rates, and downstream clients will keep paying. This is where fundamental analysis falls short—it misses the interlayer dependencies and how "good businesses" rely on others' shaky contracts.

02 Money Flows

CoreWeave is a fascinating case in the AI supply chain.

Its 2025 annual report shows $5.1 billion in revenue, 67% from Microsoft. These numbers suggest a stable B2B business reliant on a creditworthy tech giant.

Yet Microsoft accounts for only a fraction of its $88 billion in contract reserves. The bulk comes from post-2025 mega-deals: Meta signed $14.2 billion in September 2025, then added $21 billion in April 2026, more than doubling its total commitment to $35.2 billion (40% of reserves). OpenAI accounts for $22.4 billion (25%).

Nearly two-thirds of CoreWeave's future growth hinges on payments from Meta and OpenAI. Meta appears more stable than OpenAI, with independent ad cash flows. But Meta funds its AI data centers indirectly: it contributes 20% of the equity, and Blue Owl-led JVs borrow the rest, keeping hundreds of billions in debt off Meta's books. One client is the money-losing OpenAI; the other relies on external financing for expansion. Neither's payment capacity is entirely self-contained.
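The concentration figures above can be reproduced with a few lines of arithmetic. This is a minimal sketch using only the numbers quoted in the text; the variable names are illustrative assumptions.

```python
# Contract reserve figures quoted in the text, in billions of USD.
TOTAL_RESERVES = 88.0
meta_commitments = [14.2, 21.0]  # September 2025 deal, April 2026 add-on
openai_commitment = 22.4

meta_total = sum(meta_commitments)
shares = {
    "Meta": meta_total / TOTAL_RESERVES,
    "OpenAI": openai_commitment / TOTAL_RESERVES,
}
combined_share = sum(shares.values())

# How much the April 2026 add-on scaled Meta's original commitment.
meta_growth_multiple = meta_total / meta_commitments[0]

for name, share in shares.items():
    print(f"{name}: {share:.1%} of contract reserves")
print(f"Combined: {combined_share:.1%}")
print(f"Meta commitment grew {meta_growth_multiple:.2f}x")
```

The combined share works out to about 65%, which is where the "nearly two-thirds" figure comes from, and Meta's add-on scaled its original commitment by roughly 2.5x.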

Other beneficiaries include power equipment makers and plant contractors. GE Vernova, spun off from GE in April 2024, makes gas turbines and grid gear. Its stock surged from ~$140 at listing to ~$1,000 by April 2026 (about 7x in two years). Argan, an EPC contractor for gas plants, jumped from $130 to $600+ in a year (~5x).

Yet their gains defy traditional fundamentals. The issue is a severe mismatch between capacity and contracts: GE Vernova's gas turbine reserves soared from tens of GW in 2024 to 83 GW by late 2025 (target: 100 GW by end-2026), but annual capacity won't reach 20 GW until mid-2026, so orders will take four to five years to fulfill. Argan's project pipeline ballooned from $1.4 billion to $2.9 billion (about 3x its annual revenue).
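The "four to five years" estimate follows from dividing the order reserves by annual capacity. A minimal sketch, assuming capacity stays flat at 20 GW per year and ignoring new orders and cancellations (both simplifying assumptions not made in the text); the function name is illustrative.

```python
def years_to_fulfill(backlog_gw: float, annual_capacity_gw: float) -> float:
    """Years needed to deliver a backlog at a constant annual capacity."""
    if annual_capacity_gw <= 0:
        raise ValueError("annual_capacity_gw must be positive")
    return backlog_gw / annual_capacity_gw


ANNUAL_CAPACITY_GW = 20.0  # capacity the text says is reached by mid-2026

current_years = years_to_fulfill(83.0, ANNUAL_CAPACITY_GW)   # late-2025 reserves
target_years = years_to_fulfill(100.0, ANNUAL_CAPACITY_GW)   # end-2026 target

print(f"Late-2025 reserves: {current_years:.2f} years of capacity")
print(f"End-2026 target:    {target_years:.2f} years of capacity")
```

The two results, about 4.2 and 5.0 years, bracket the range quoted above.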

These reserves represent future revenue, not current assets. If hyperscalers cut back AI data center expansion and orders are delayed or renegotiated, the conversion of reserves into revenue can slow immediately. Even where contracts carry default clauses, actual payouts usually come through heavily discounted renegotiations rather than full compensation.

Combining these examples, supply chain nodes fall into three categories:

Hyperscalers (Microsoft, Google, Meta, Amazon, Oracle): Their core businesses (ads, search, e-commerce, databases, enterprise software) predate AI and generate independent cash flows. Going long on them requires stress-testing: Strip out AI-driven valuation premiums—what remains is the true margin of safety.

OpenAI and Anthropic: They survive on capital allocation pressures, with creditworthiness tied to the success of their next funding round and continued valuation growth. Their shares cannot yet be bought directly on public markets; instead they serve as "leading indicators" (e.g., private valuation trends) of supply chain health.

Midstream nodes (compute providers, data centers, power companies, grid equipment makers, private credit funds): The most complex category. Position weightings should reflect counterparty composition. Contracts with hyperscalers (e.g., Constellation's power deals with Microsoft) can be defensive. But nodes with high exposure to OpenAI/Anthropic, or to hyperscalers using SPV structures (e.g., ~66% of CoreWeave's reserves), warrant caution.

03 Conclusion

Another critical signal: Liquidity at the chain's end.

AI is the largest asset allocation theme, and sovereign funds and mega-capital are still deploying. But private market inflows are not constant. The signals to watch: Can next-round private valuations keep rising? Are employees eagerly selling their shares in internal sales? Are large private credit funds facing redemptions? Three recent events at Blue Owl show that even deep pools of capital tighten at times.

Blue Owl, a top U.S. private credit manager with >$300 billion AUM as of late 2025, holds much AI infrastructure-linked structured debt. From February to April 2026, three events occurred: OBDC II suspended quarterly share repurchases in mid-February; on February 18, it sold $1.4 billion in direct loans to boost liquidity; on April 2, a non-traded private credit fund hit 4.999% in quarterly redemption requests (just below the 5% mandatory limit).
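How close the April 2 figure came to the limit can be checked with exact decimal arithmetic. A minimal sketch, taking the 4.999% request level and the 5% quarterly limit as stated above; the variable names are illustrative, and `Decimal` is used only to avoid floating-point rounding noise at this precision.

```python
from decimal import Decimal

# Quarterly redemption figures as stated in the text, in percent of fund shares.
REDEMPTION_LIMIT_PCT = Decimal("5")
requested_pct = Decimal("4.999")

headroom_pct = REDEMPTION_LIMIT_PCT - requested_pct    # remaining room before the limit
utilization = requested_pct / REDEMPTION_LIMIT_PCT     # fraction of the limit used

print(f"Headroom: {headroom_pct} percentage points")
print(f"Limit utilization: {utilization:.2%}")
```

On these inputs, requests used 99.98% of the quarterly limit, leaving a thousandth of a percentage point of headroom.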

The April 2 incident could be blamed on geopolitical tensions (Iran), but February's events had already flashed warning signs. Going long on AI remains valid: it is the dominant trend, top-tier funding pressure is intact, and the growth narrative is unfinished.

But every long position must answer: Who ultimately pays for this segment? Answer that, and you understand both where your confidence comes from and where the risk lies.

Disclaimer: This article is for learning and discussion only and does not constitute investment advice.