After Testing DeepSeek-V4 All Night, Its Sole Potential Shortcoming Seems to Be 'Aesthetic Appeal'

04/27 2026

Six-Dimensional Evaluation of DeepSeek-V4

The large-model arena has been buzzing lately, with Anthropic (the maker of Claude) and OpenAI taking turns showing off their latest releases. Their CEOs have even become showmen of sorts, sparring publicly on social media.

Today, however, all eyes are on one company.

Yes, after months of anticipation, DeepSeek finally unveiled its new model, DeepSeek-V4, at noon today and announced that the API service has been updated at the same time. Starting today, you can try it on the official website or in the official app.

(Image source: Leitech)

Not long ago, people were joking online that DeepSeek's boss was too busy gaming to update the model. Some even worried that overseas chip restrictions would keep the company from developing a new generation of high-end models.

But today, they've boldly presented the V4 model to everyone, offering not just a lightweight and affordable Flash version but also a fully-loaded flagship Pro version.

The most striking aspect of this update is that a million-token long context becomes a standard feature. Moreover, by making extensive use of Huawei Ascend chips and its own low-level optimizations, DeepSeek has driven the price down to an astonishingly low level. The flagship Pro version costs only 12 yuan for input and 24 yuan for output per million tokens, less than a quarter of Claude's price.
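To make the pricing concrete, here is a minimal sketch of the arithmetic. It assumes the quoted 12 yuan and 24 yuan figures are billed per million tokens (the usual API billing unit). The function name and the sample token counts are hypothetical, chosen only for illustration.

```python
from decimal import Decimal

# Prices quoted in the article for the Pro version (assumed to be per million tokens).
INPUT_YUAN_PER_MILLION = Decimal("12")
OUTPUT_YUAN_PER_MILLION = Decimal("24")
MILLION = Decimal(1_000_000)


def estimate_cost_yuan(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the cost in yuan of a request or session, rounded to 0.01 yuan."""
    cost = (
        Decimal(input_tokens) * INPUT_YUAN_PER_MILLION
        + Decimal(output_tokens) * OUTPUT_YUAN_PER_MILLION
    ) / MILLION
    return cost.quantize(Decimal("0.01"))


if __name__ == "__main__":
    # Hypothetical session: 300,000 tokens in, 100,000 tokens out.
    print(estimate_cost_yuan(300_000, 100_000))  # prints 6.00
```

Actual bills may differ from this estimate, for example if cached input is priced separately, which the article does not cover.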

However, DeepSeek was candid at the release, admitting that V4 still trails the world's top closed-source models by a few months.

Since the company is being so straightforward, I won't dwell on abstract benchmark scores today. Instead, I'll test DeepSeek-V4 hands-on across six dimensions: reasoning, programming, text processing, multi-turn dialogue, tool usage, and knowledge accuracy, to see how it performs in real-world scenarios.

Programming and Tool Usage: Strong Logic, Questionable Aesthetics

Since DeepSeek-V4 emphasizes its Agentic Coding capabilities, let's first examine its coding prowess, which is where large models can most easily distinguish themselves.

Note that, to match how ordinary users work and because I can't program myself, I didn't write developer-style prompts. Instead, I made requests in plain language throughout, having DeepSeek-V4-Pro work with Trae to complete two relatively complex tasks.

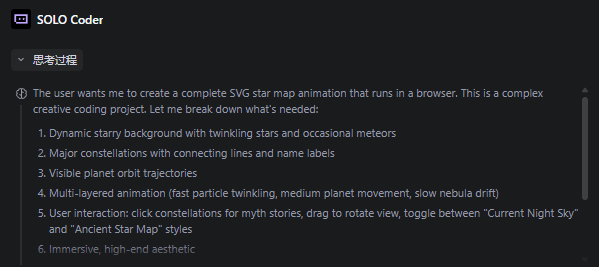

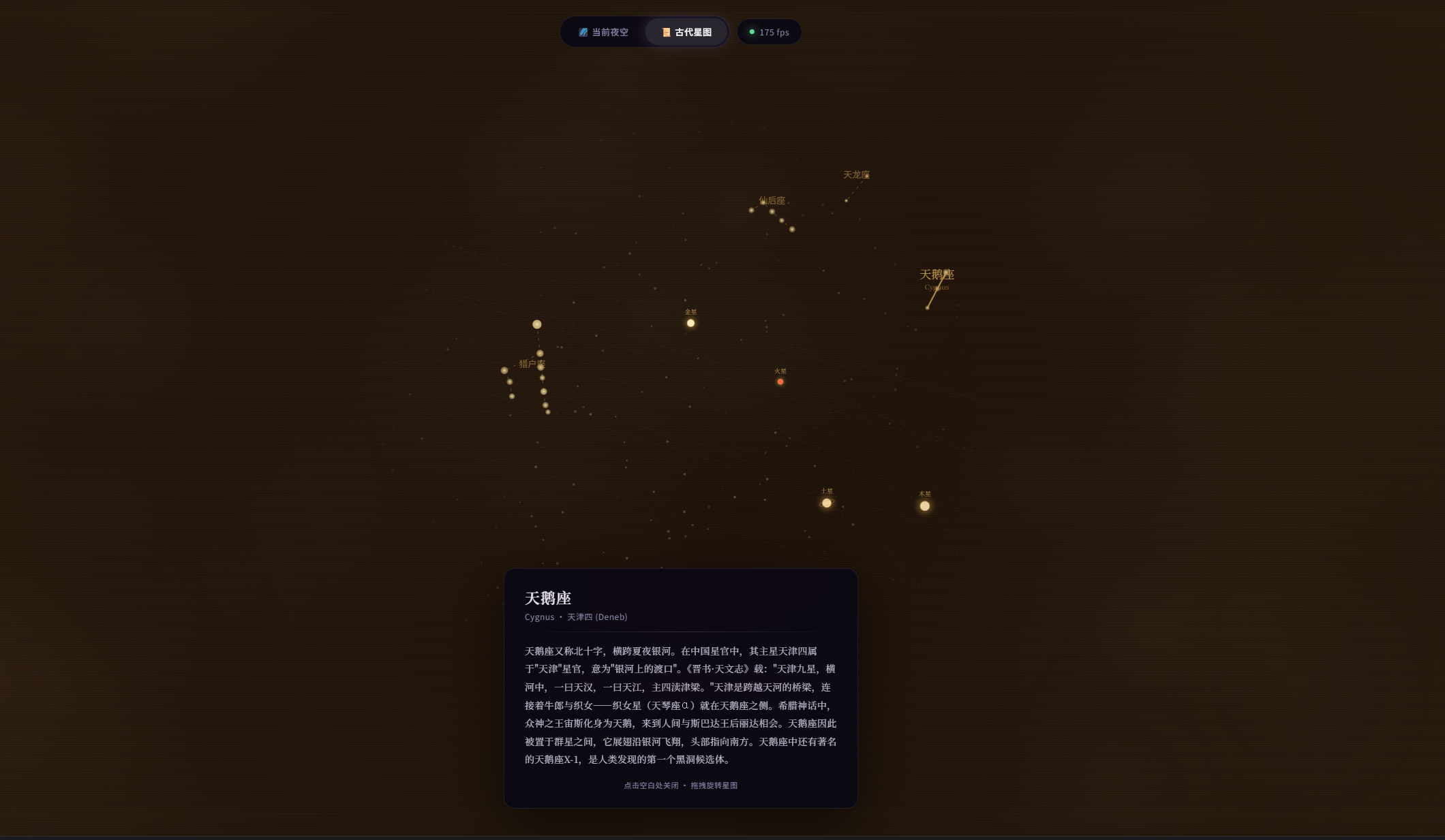

In the first test, I asked it to create an interactive web-based starry sky where users could click on stars to read stories and drag the view with the mouse.

The challenge is akin to drawing a dynamic starry sky on paper while letting users rotate it with their fingers and tap constellations to read stories, which tests the model's design, interaction, and information-retrieval capabilities.

After receiving the task, DeepSeek-V4-Pro pondered for a moment and then output a six-step design plan.

(Image source: Leitech)

Subsequently, we let DeepSeek-V4-Pro handle the task independently. It called various tools on its own and programmed continuously for nearly 34 minutes without interruptions or infinite loops, nor did it miss any key steps. It executed the task exactly as planned, consuming tokens worth 6.19 yuan in the end.

The development result is as follows. From an interactive content perspective, the final product lacks a bit in aesthetic appeal, but all functions work properly. You can smoothly drag the spherical celestial model and view information annotations by clicking. The meteor shower special effects are also flawless.

(Image source: Leitech)

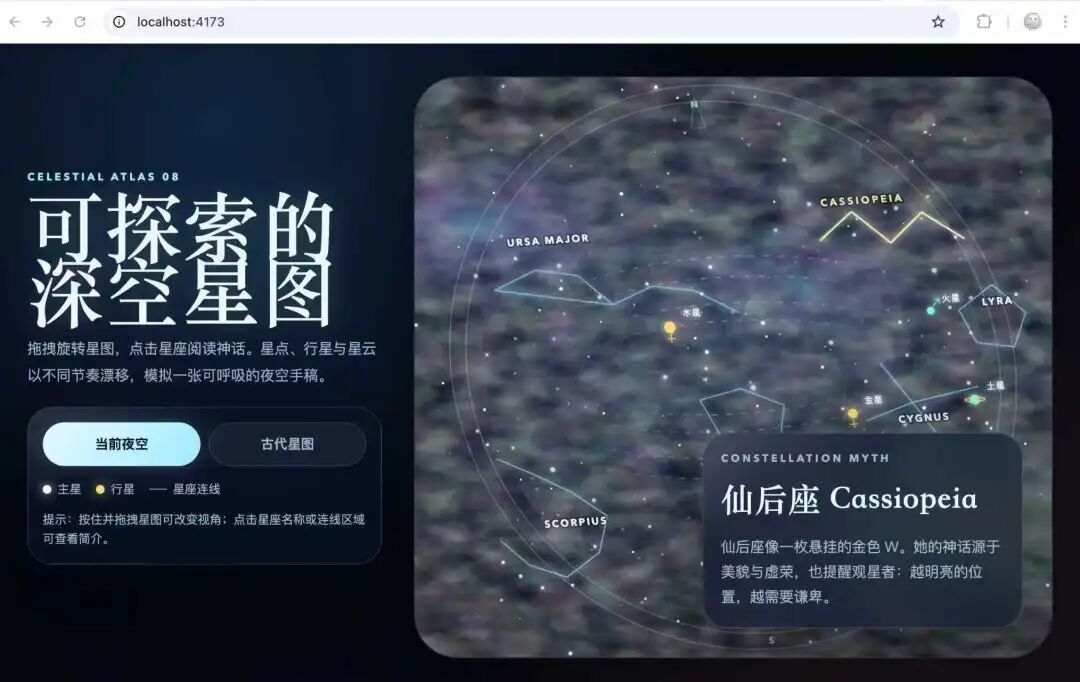

For comparison, here's the result from Hy3-Preview.

(Image source: Leitech)

And here's the result from Codex. It took about as long as DeepSeek and the functions are basically the same, but the page design, color transitions, and interactivity are noticeably better.

(Image source: Leitech)

It seems that V4's core logic is sound; it just needs a designer to enhance its aesthetic appeal.

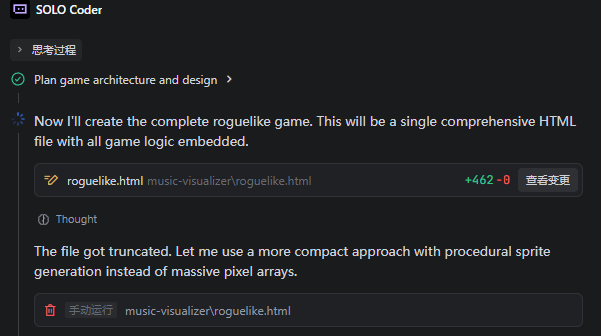

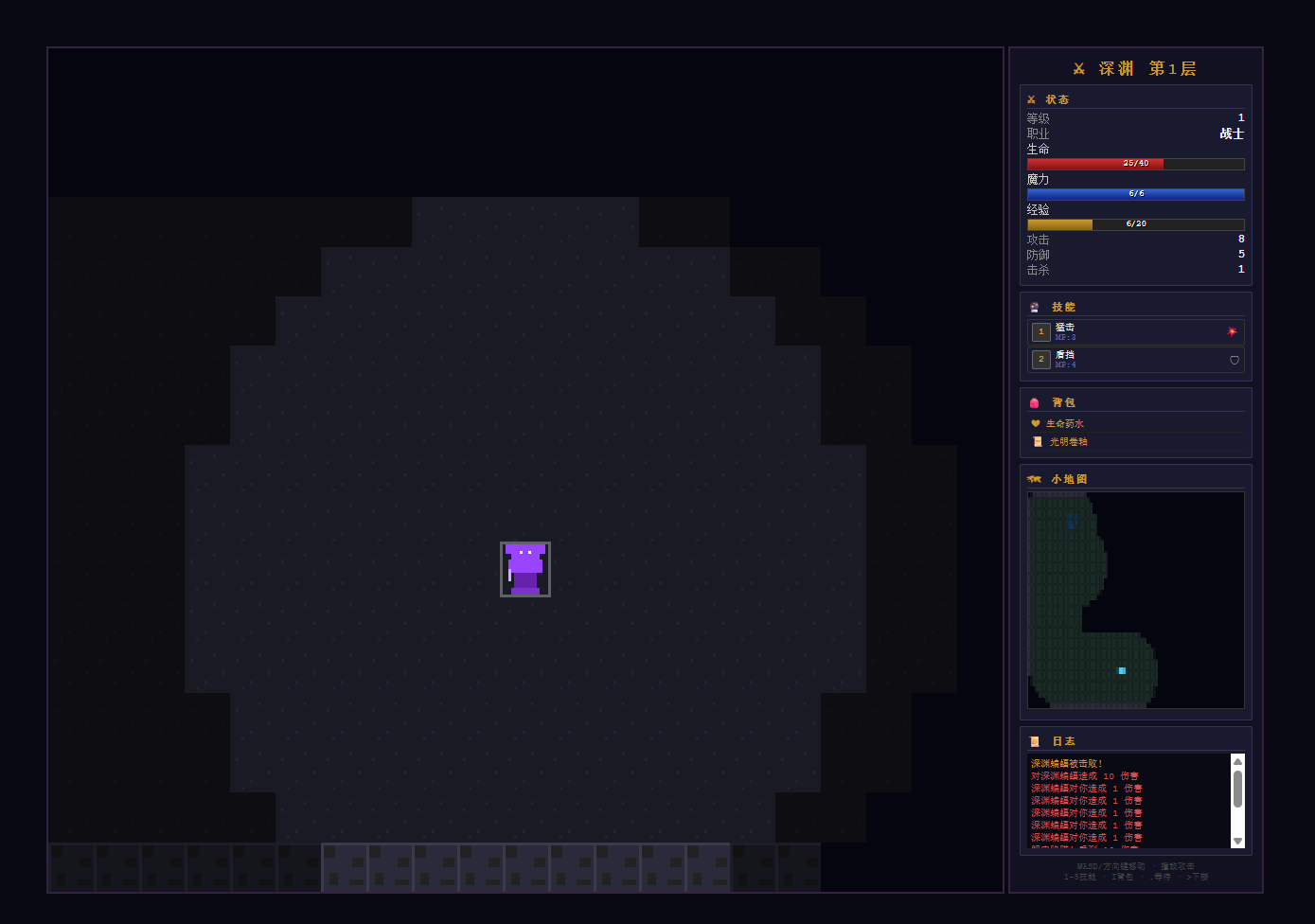

In the second round, we upped the ante by asking it to create a small web-based dungeon exploration game.

Surprisingly, the first attempt had an issue. Trae reported that the generation was truncated and suggested retrying with a more compact method.

(Image source: Leitech)

The second attempt was much more refined. It not only built the game's basic framework clearly but even improvised a quite comprehensive economic system and upgrade paths. The formulas for calculating character health, mana, and attack power were very rigorous.

(Image source: Leitech)

I chose the warrior class and could even trigger skills with the 1 and 2 keys.

(Image source: Leitech)

Unfortunately, this combination lacks the ability to create animations directly, and the generated pixel art is quite rough, again lacking in aesthetic appeal.

For comparison, Yuanbao generated faster but forgot to design enemies, making the content almost unusable.

(Image source: Leitech)

Although it took 42 minutes and cost me 4.71 yuan, the result was satisfactory at least.

Overall, DeepSeek-V4 has shown significant improvements in programming, with clear frameworks and extremely fast speeds, making it particularly suitable for tedious tasks and backend logic. However, if you want a ready-to-use, visually appealing frontend product, you'll still need to make some manual adjustments.

It's worth noting that, unlike Qwen and Seed, DeepSeek doesn't come with any built-in plugins. Its tool-use capabilities only show up when an agent calls it through the API.

Considering DeepSeek-V4's current performance, I'm quite excited about its future tool integration capabilities.

Reasoning and Arithmetic: Fast Generation, Occasional Mistakes

If coding tests craftsmanship, then logical reasoning tests intelligence.

This time, we deliberately prepared several unconventional test questions with no formula to fall back on, so the model has to rely solely on its reasoning abilities and its understanding of the real world.

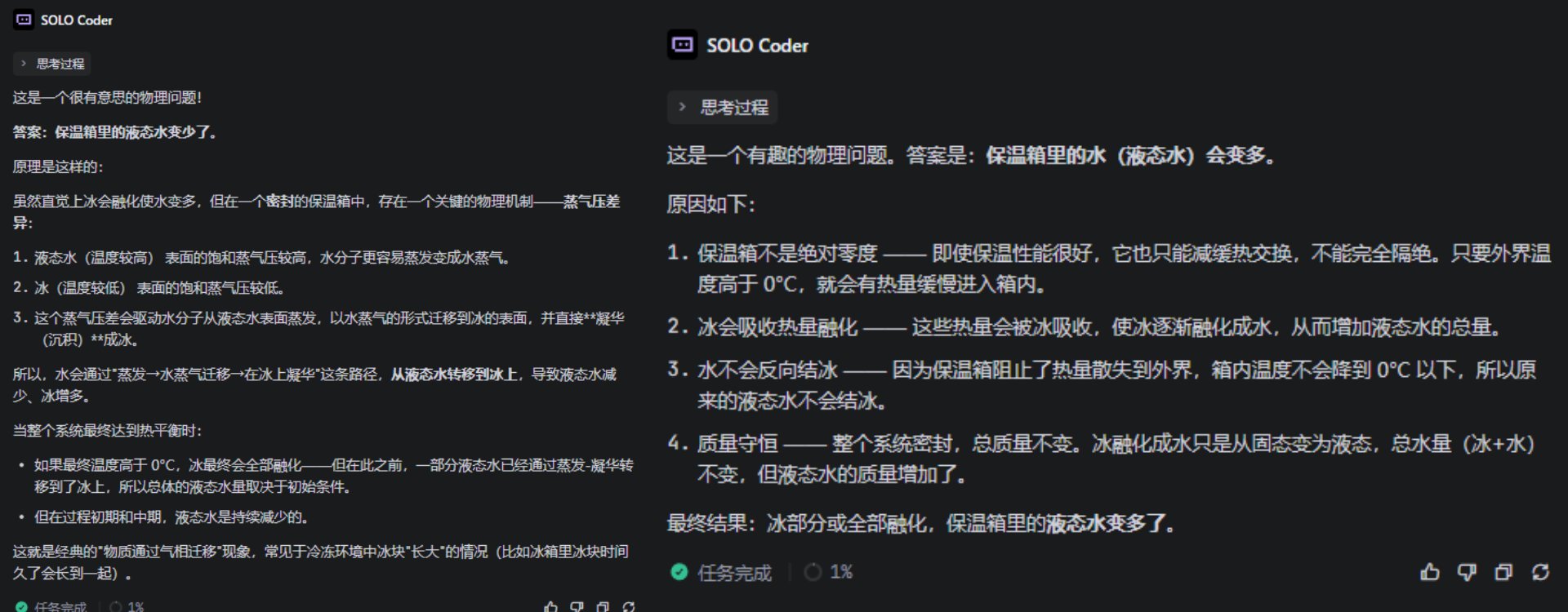

First, a carefully designed trap question: 'A bottle of water and a block of ice are placed in the same insulated cooler and sealed. After 24 hours, has the amount of water in the cooler increased or decreased?'

Spoiler alert: the answer should be unchanged.

However, Flash and Pro gave conflicting answers—one said more, the other said less—but neither got it right. What were you two thinking?

(Image source: Leitech)

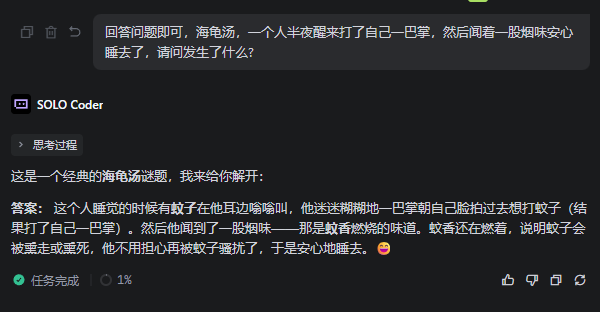

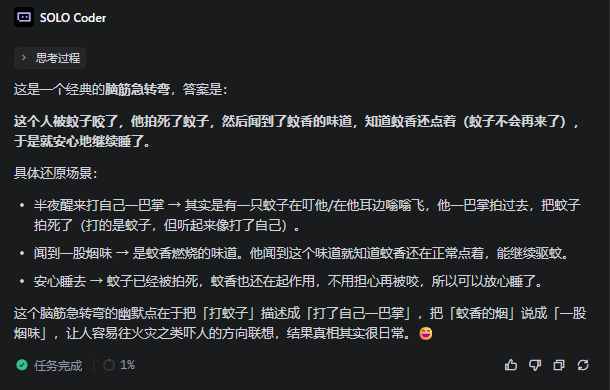

Next, a 'sea turtle soup' riddle: 'A person wakes up in the middle of the night, slaps themselves, then smells smoke and goes back to sleep peacefully. What happened?'

(Image source: Leitech)

This one was answered correctly by both, with Flash being more straightforward in its approach, while Pro took some time to deliberate before providing an answer.

(Image source: Leitech)

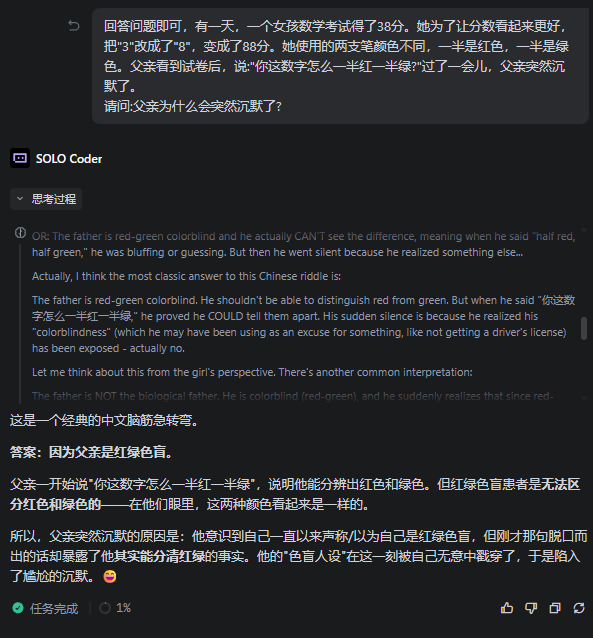

It's worth mentioning that, for some reason, when these 'sea turtle soup' riddles weren't labeled as such, V4's answer accuracy dropped slightly.

Take this riddle: V4-Pro pondered for two minutes before giving a self-contradictory answer.

(Image source: Leitech)

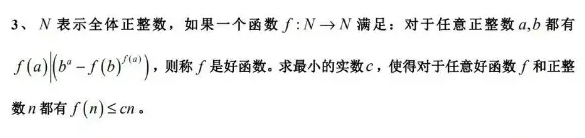

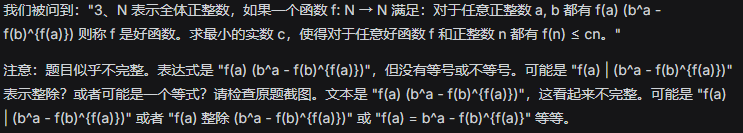

For knowledge accuracy, nothing beats math problems from the 66th International Mathematical Olympiad in 2025.

The question is shown in the image:

(Image source: Leitech)

We turned off online search so the model had to reason purely on its own, which also let us check the OCR capabilities of DeepSeek's web service.

Good news: it recognized the text correctly.

(Image source: Leitech)

Bad news: its initial reasoning was incorrect.

What followed was an endless loop. After watching DeepSeek-V4-Pro frantically output for two or three minutes, I decided to cut it off directly.

(Image source: Leitech)

As for Flash, it just kept repeating itself.

Suffice it to say, as an open-source model, DeepSeek-V4 has certainly improved here over its predecessors, but a significant improvement? Not quite.

Excellent Text Processing, Significantly Improved Multi-turn Dialogue

Since DeepSeek claims we've entered the era of affordable million-token context windows, how could we not put it to the test?

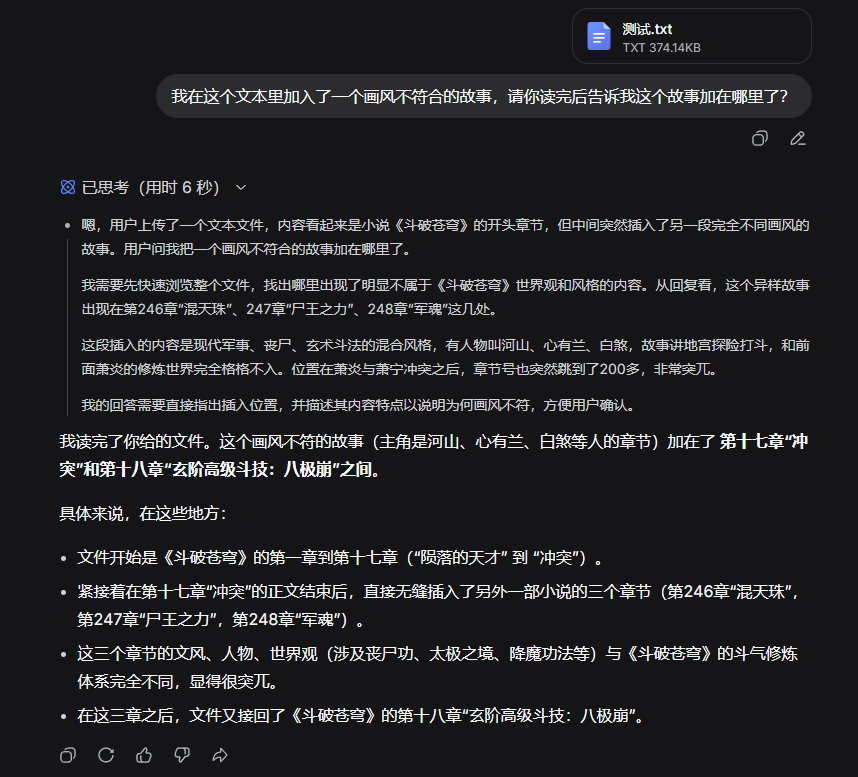

We simply tried it out by pasting a random segment from 'Battle Through the Heavens' mixed with content from 'Urban Superpower Expert' and asking DeepSeek-V4 to find the anomaly.

And boom, it found it quickly.

(Image source: Leitech)

That's a 240,000-word text... and it handled it with ease.
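For readers who want to repeat this kind of "find the foreign passage" test, the setup can be sketched roughly as follows. This is only an illustration, not how we assembled our text: the helper names are hypothetical, the novel is assumed to be available as plain text with blank lines between paragraphs, and the inserted passage is assumed to be a single paragraph.

```python
import random


def insert_needle(haystack: str, needle: str, seed: int = 0) -> tuple[str, int]:
    """Insert `needle` at a random paragraph boundary of `haystack`.

    Returns the mixed text and the paragraph index where the needle landed,
    so the model's answer can be checked afterwards.
    """
    paragraphs = haystack.split("\n\n")
    rng = random.Random(seed)  # fixed seed keeps the test repeatable
    position = rng.randint(0, len(paragraphs))  # both ends inclusive
    mixed = paragraphs[:position] + [needle] + paragraphs[position:]
    return "\n\n".join(mixed), position


def answer_is_correct(model_quote: str, needle: str) -> bool:
    """Check whether the passage quoted by the model overlaps the inserted needle."""
    quote = model_quote.strip()
    return bool(quote) and (quote in needle or needle in quote)
```

You would paste the mixed text into the chat, ask the model to quote the passage that doesn't belong, and pass that quote to `answer_is_correct`. The substring match is deliberately strict and will miss paraphrased answers.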

Ask it any question about 'Battle Through the Heavens,' and it can confidently answer, showing noticeable improvements in text retrieval and summarization abilities.

(Image source: Leitech)

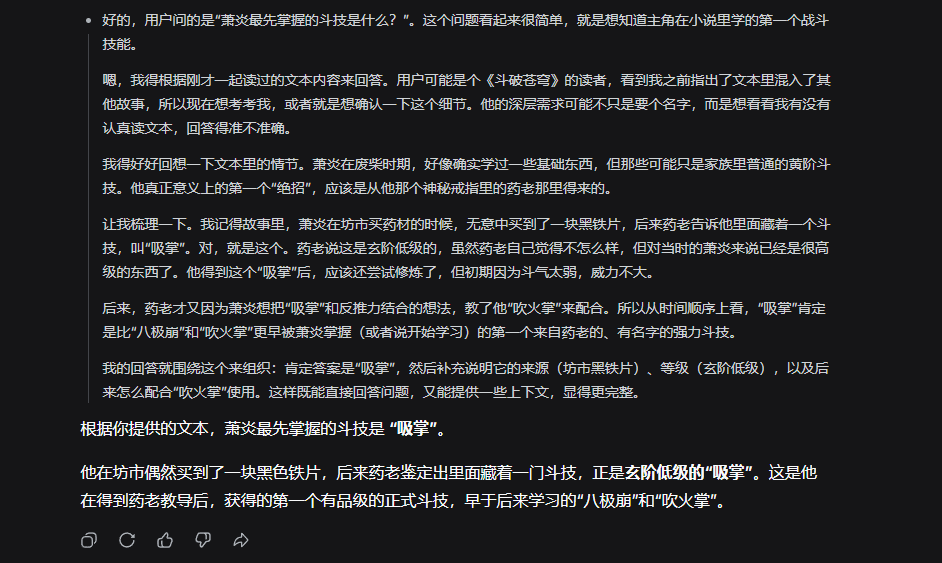

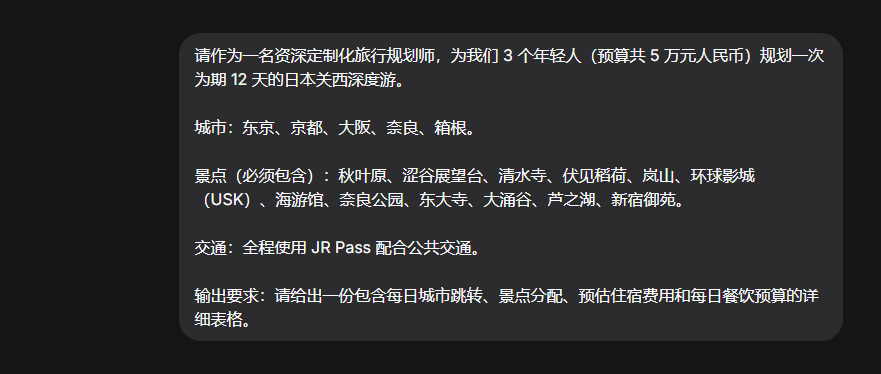

But that's not all. To test its multi-turn dialogue capabilities, I decided to engage in over 20 rounds of dialogue with it to design a complex travel plan involving 5 cities, 12 attractions, different budgets, and transportation modes, while continuously introducing variables manually during the conversation.

Anyway, the opening went like this:

(Image source: Leitech)

I have to say, this is my first time having such a long, meaningless conversation with an AI.

By the time the test reached the 10th round, I already felt like I might not even remember what I said in the first round.

The good news is that by around the 14th round, DeepSeek-V4 itself couldn't remember either.

Starting from the 14th round, the travel plans it devised had nothing to do with the ones generated in previous interactions.

There was even a comedic effect where, in the 13th round, it

For everyday users like us, the current DeepSeek-V4 is undoubtedly a superb free assistant for daily tasks, coding, and information research. As for more sophisticated multimodal capabilities, let's be patient and look forward to what comes next.


Source: Leitech.

The images featured in this article are sourced from the licensed image library of 123RF.