Trend | The Future Direction of AI Inference Is a System-Wide Computing Solution

04/27 2026

Foreword:

In early April, a notable acquisition emerged in the AI infrastructure sector: d-Matrix, a pioneer in generative AI inference computing, announced that it would acquire the data center business of Carlsbad, California-based GigaIO. The two companies began collaborating in 2025, when d-Matrix integrated its Corsair inference platform into GigaIO's SuperNODE architecture, creating a solution that supports dozens of Corsair accelerators in a single node. The deal now brings GigaIO's FabreX PCIe memory fabric and SuperNODE platform fully into d-Matrix's product portfolio. Founder and CEO Sid Sheth stated the rationale plainly: "Inference is bigger than any single chip. It's now a systems problem."

Author | Fang Wensan

Image Source | Internet

From Single Chips to Rack-Level Infrastructure

What is a "system-wide computing solution"? It means that competition in AI inference no longer turns on the compute specifications of a single chip. It shifts instead to end-to-end capability spanning accelerators, networking, memory interconnects, software stacks, and even entire racks. The GigaIO acquisition, which builds on the collaboration that began in 2025, is meant to strengthen d-Matrix's ability to deliver system-level AI infrastructure rather than discrete silicon.

GigaIO's FabreX is a composable memory fabric built on the PCIe standard. It supports disaggregated compute and memory pools across nodes, so resources can be configured dynamically at the rack or cluster level. The technology rounds out d-Matrix's existing stack: Corsair inference accelerators, JetStream networking, Aviator software, and the SquadRack rack-level reference architecture co-developed with Broadcom and Arista. Across the industry, the system-wide approach has become a consensus among leading companies. At the 2026 GTC conference, NVIDIA's product form factor had already evolved from single GPUs to integrated "chip-rack-data center" systems, marking a shift in the focus of compute competition to data center-scale platforms. d-Matrix's acquisition strategy is consistent with this trend.
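The idea of composing logical nodes from shared rack-level pools can be pictured with a toy model. The sketch below is purely illustrative: `FabricPool`, `compose`, `release`, and all sizes are hypothetical names and numbers chosen for this example, not part of FabreX or any GigaIO or d-Matrix API.

```python
from dataclasses import dataclass, field


@dataclass
class FabricPool:
    """Toy model of a rack-level pool of devices reachable over a shared fabric."""
    free_accelerators: int
    free_memory_gb: int
    nodes: dict = field(default_factory=dict)

    def compose(self, name: str, accelerators: int, memory_gb: int) -> bool:
        """Carve a logical node out of the pool; return False if it cannot be built."""
        if name in self.nodes:
            return False
        if accelerators > self.free_accelerators or memory_gb > self.free_memory_gb:
            return False
        self.free_accelerators -= accelerators
        self.free_memory_gb -= memory_gb
        self.nodes[name] = (accelerators, memory_gb)
        return True

    def release(self, name: str) -> None:
        """Return a logical node's devices to the shared pool."""
        accelerators, memory_gb = self.nodes.pop(name)
        self.free_accelerators += accelerators
        self.free_memory_gb += memory_gb


# Illustrative sizes only (assumptions, not product specifications).
pool = FabricPool(free_accelerators=32, free_memory_gb=4096)
pool.compose("chat-serving", accelerators=24, memory_gb=2048)
pool.compose("batch-summaries", accelerators=8, memory_gb=1024)
print(pool.free_accelerators, pool.free_memory_gb)  # 0 1024

pool.release("batch-summaries")
print(pool.free_accelerators, pool.free_memory_gb)  # 8 2048
```

The point of the model is that accelerators and memory are accounted for at the pool level rather than fixed per server, so capacity freed by one workload can be reassigned to another without physically re-cabling anything.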

d-Matrix's Forward-Looking Judgment: Memory Bandwidth is the Real Bottleneck

d-Matrix has chosen a technical path starkly different from the GPU camp. After NVIDIA established its dominance in AI training in 2019, founder Sheth chose not to bet on training chips and focused on inference instead. As he put it: "Unless you have substantial differentiation, trying to do something there would be a foolish endeavor."

d-Matrix's core insight is that for Transformer-based inference, the bottleneck has never been computation but the movement of weights: most latency comes from shuttling data between compute cores and memory. To address this, the company developed digital in-memory computing, in which matrix multiplication takes place directly within the memory cells, so the memory blocks themselves serve as compute blocks, and embedded adder trees perform the summation. Corsair is built on SRAM rather than HBM and customized for Transformer workloads. It combines large-capacity SRAM with LPDDR5X, keeping matrix operations as close to storage as possible to cut the energy and latency of data movement. d-Matrix also plans to introduce 3D-stacked DRAM, which extends memory capacity into the third dimension. The company says this will run AI models 10x faster while using up to 90% less energy than HBM4, the current industry standard.
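The in-memory multiply followed by an adder-tree summation can be illustrated in software. This is a conceptual sketch only, not d-Matrix's hardware design: the function names are hypothetical, and ordinary Python lists stand in for memory arrays. Weights stay in place, activations are broadcast to them, and the partial products are reduced pairwise, level by level.

```python
def adder_tree_sum(values):
    """Sum values by pairwise reduction, level by level, like a hardware adder tree."""
    level = list(values)
    if not level:
        return 0
    while len(level) > 1:
        if len(level) % 2:
            level.append(0)  # pad odd levels so every adder has two inputs
        level = [level[i] + level[i + 1] for i in range(0, len(level), 2)]
    return level[0]


def in_memory_matvec(weights, activations):
    """Multiply each stored weight row by the broadcast activations,
    then reduce that row's partial products with an adder tree."""
    return [
        adder_tree_sum(w * a for w, a in zip(row, activations))
        for row in weights
    ]


weights = [[1, 2, 3, 4], [0, -1, 0, 1], [2, 2, 2, 2]]  # resident in "memory"
activations = [1, 0, 2, 1]                              # the only data that moves
print(in_memory_matvec(weights, activations))           # [11, 1, 8]
```

A tree of this shape reduces n partial products in about log2(n) adder levels, which is why it suits a fixed hardware pipeline placed next to the memory array.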

This ground-up architectural redesign reflects a clear understanding of what inference workloads actually require. d-Matrix describes "three major obstacles" to fast, efficient, high-performance AI inference, and considers memory bandwidth the most critical of them. Sheth's remarks lay out the logic behind the system-wide approach: "We knew we needed something special, something more efficient, not just solving compute problems but also addressing compute, memory, memory bandwidth, memory capacity, and all these issues."
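A back-of-the-envelope bound shows why memory bandwidth dominates. The sketch below assumes a simplified model in which every decode step streams all model weights from memory once and that single pass serves the whole batch. It ignores KV-cache traffic and compute limits, and the bandwidth figures are illustrative assumptions, not vendor specifications.

```python
def max_decode_tokens_per_second(params, bytes_per_param,
                                 bandwidth_bytes_per_s, batch_size=1):
    """Upper bound on decode throughput when each step streams all weights once.

    One pass over the weights produces one token for every sequence in the
    batch, so the bound scales linearly with batch size.
    """
    weight_bytes = params * bytes_per_param
    return batch_size * bandwidth_bytes_per_s / weight_bytes


# Assumed example: a 70-billion-parameter model stored at 1 byte per parameter.
params = 70e9
baseline = max_decode_tokens_per_second(params, 1, 3.5e12)  # 3.5 TB/s (assumed)
faster = max_decode_tokens_per_second(params, 1, 35e12)     # 10x the bandwidth

print(baseline)  # 50.0 tokens/s for a single stream
print(faster)    # 500.0 tokens/s for a single stream
```

Under this model, adding compute does not raise the single-stream ceiling at all. Only more bandwidth, fewer bytes per parameter, or larger batches do, which is consistent with the article's emphasis on keeping weights close to where the arithmetic happens.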

Market Signals: Financing Rhythm and Customer Positioning

d-Matrix's system-wide approach has attracted substantial capital. In November 2025, the company closed a $275 million Series C round at a $2 billion valuation, bringing its cumulative financing to $450 million. Participants included European tech investment firm Bullhound Capital, Singapore's sovereign wealth fund Temasek, Microsoft's venture capital arm M12, the Qatar Investment Authority, and EDBI. The involvement of these investors signals confidence in d-Matrix's technical roadmap and commercial prospects.

At the product level, the Corsair platform's reported performance figures are notable. On the Llama 70B model, it achieves 30,000 tokens/second at 2 ms latency per token; on the Llama 8B model, a single server delivers 60,000 tokens/second at 1 ms latency per token. In performance mode, the solution is said to cut interactive latency by up to 10x compared with HBM-based alternatives. Sheth claims it beats GPUs by 2-3x on cost, 5-10x on energy efficiency, and nearly 10x on speed.
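The throughput and latency figures can be related with Little's law (concurrency = throughput × latency). The sketch below assumes that the quoted throughput is aggregate across all users and that the quoted latency is the time between tokens within one stream. The source does not state this, so treat the result as an interpretation rather than a published figure.

```python
def per_stream_tokens_per_second(latency_ms_per_token):
    """Token rate seen by a single user if each token takes latency_ms to arrive."""
    return 1000 / latency_ms_per_token


def concurrent_streams(aggregate_tokens_per_s, latency_ms_per_token):
    """Little's law: streams in flight = aggregate throughput x per-token latency."""
    return aggregate_tokens_per_s * latency_ms_per_token / 1000


# Figures quoted in the article.
print(per_stream_tokens_per_second(2))  # 500.0 tokens/s per stream (Llama 70B)
print(concurrent_streams(30_000, 2))    # 60.0 streams in flight
print(per_stream_tokens_per_second(1))  # 1000.0 tokens/s per stream (Llama 8B)
print(concurrent_streams(60_000, 1))    # 60.0 streams in flight
```

Read this way, both configurations would serve about 60 simultaneous streams, with the smaller model giving each user twice the token rate.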

Target customers include hyperscale cloud providers, frontier AI labs, and enterprise deployments. Partners such as Supermicro are bringing d-Matrix's solutions to market. Sheth expects the acquisition to accelerate revenue growth and to support new pricing models for heterogeneous rack configurations.

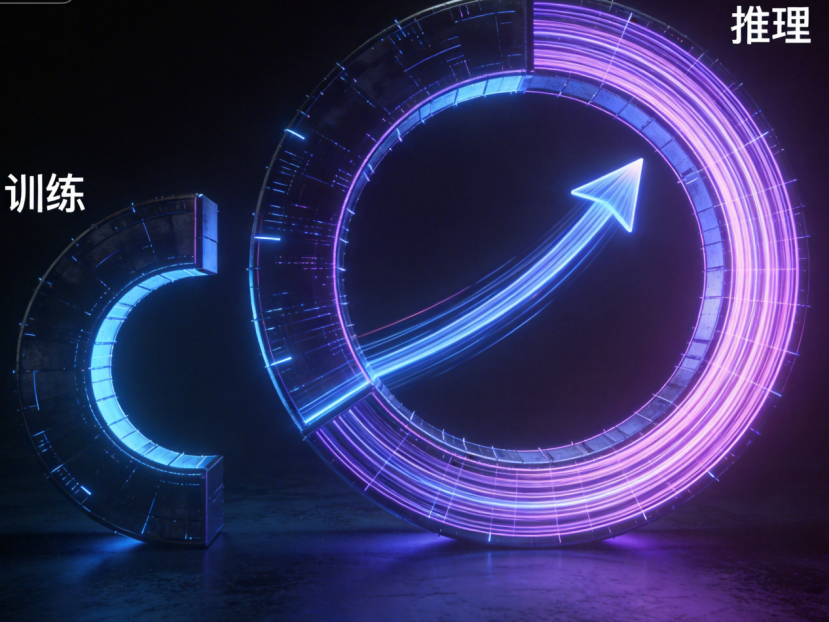

The Inflection Point of AI Inference and the Strategic Significance of the System-Wide Path

This acquisition merits attention primarily because the demand structure of the AI industry is undergoing a fundamental shift. Deloitte projects that inference's share of global AI compute will rise from about one-third in 2023 to about two-thirds by 2026. NVIDIA further notes that global compute demand has grown a million-fold over the past two years, driven by rapidly increasing inference tasks.

It is at this structural inflection point that system-wide computing solutions show their advantages. As inference workloads become increasingly distributed and heterogeneous across CPUs, GPUs, and inference accelerators, data must move efficiently and in real time between chips, nodes, racks, and entire data centers. Companies with complete system stacks can offer solutions with lower latency, better energy efficiency, and more competitive cost. China Galaxy Securities states explicitly that compute competition has shifted from the chip level to data center-scale platforms. In the words of d-Matrix CEO Sheth: "Inference is bigger than any single chip. It's now a systems problem."

Conclusion: The acquisition of GigaIO's data center business, the foundational advances in digital in-memory computing, and the structural surge in inference compute demand all point the same way: the future of AI inference lies in system-level optimization. The 2026 acquisition is merely the prologue to this system-level competition.

Online References:

Alibaba Cloud: "Defining the 2026 Intelligent Computing Era: Deconstructing the Underlying Protocols for Enterprise AI Applications' Transition from 'Experimental' to 'Production' States"

Smart Finance: "GF Securities: AI Inference Efficiency Innovation and Agent Resonance Open a Trillion-Dollar Market Space"

Sina Finance: "Digital Economy Weekly: GTC2026 Highlights—AI Shifts from Chip Competition to System Competition"

China Science and Technology Network: "The Year of Token Explosion! 2026 Zhongguancun Forum Annual Sub-Forum Discusses New Visions for AI Large-Scale Inference Services"