The AI Industry Outlook Post-DeepSeek-V4 Launch

05/07 2026

Several days have passed since DeepSeek-V4's official unveiling. Have you noticed how understated its presence has been compared with the R1 phenomenon? The industry had pinned high hopes on DeepSeek's latest offering, anticipating another groundbreaking release that would either dominate social media discourse or send NVIDIA's soaring stock price plummeting.

Clearly, V4 has not sparked the anticipated waves or widespread public debate, nor has it generated any viral sensations. Yet, this absence of fanfare does not imply that the new model has gone unnoticed, nor does it suggest that High-Flyer Quantitative has lost its edge. In fact, within my professional circles and industry groups, V4 has garnered significant attention and discussion. However, the perspectives and focal points of these discussions differ markedly from those during the R1 era.

I've synthesized the industry landscape following V4's release, offering insights into what leading domestic AI companies are prioritizing and the emerging trends in the large model sector.

R1's meteoric rise and DeepSeek's subsequent iconic status were largely attributed to R1's impact on NVIDIA's stock price, which fell sharply upon R1's release.

By contrast, following V4's launch, NVIDIA's stock price did not fall; it rose by over 4%, and the typically volatile Wall Street remained notably calm.

It's worth noting that R1 ran on NVIDIA clusters, essentially using overseas computing power to burst the bubble that this same hardware had helped create. In contrast, DeepSeek-V4 was trained entirely on domestic clusters, and NVIDIA was not granted any exclusive testing or priority adaptation rights beforehand. Logically, this should have had a more pronounced effect and punctured NVIDIA's inflated industry bubble more thoroughly. So, why didn't it?

Many might initially wonder: Is DeepSeek-V4 not cutting-edge enough? We'll address this later. One overlooked factor is the changing times.

Previously, domestic model training was almost exclusively reliant on NVIDIA, with domestic computing power primarily utilized for inference. Thus, any shifts in this dynamic would inevitably squeeze NVIDIA's commercial space.

However, over the past two years, domestic chips have made significant strides. Following V4's release, eight mainstream domestic AI chip manufacturers (Huawei Ascend, Cambricon, Hygon Information, Moore Threads, MetaX, Kunlunxin, T-Head, and Iluvatar CoreX) completed compatibility verification and technical adaptation for DeepSeek-V4. NVIDIA completed its adaptation as well.

With the dilution of monopolistic threats, the overreaction in capital markets naturally subsided. Thus, DeepSeek's "NVIDIA effect" quietly faded into the background.

In late April, semiconductor stocks, both overseas and domestic, surged dramatically, indirectly confirming the market's general optimism about demand in the global computing power sector. The industry landscape has shifted from dominance by a single player to the coexistence of multiple players pursuing independent efforts. This may indeed mark the end of an era.

Following DeepSeek-V4's release, many industry media professionals shared a common doubt: given the lack of explosive buzz compared to last year, were they simply overlooking something? After comparing notes in private, they realized it wasn't just them; the entire industry had collectively settled into a more subdued state. Articles analyzing V4 also received minimal traffic.

What does DeepSeek-V4 represent when it no longer commands widespread public attention or a flurry of self-media analyses?

It no longer wields the power to dominate both the B-end (enterprise) and C-end (consumer) markets as R1 did. V4's reception has split between general users on one side and B-end developers and enterprise users on the other.

On the C-end, concurrent overseas GPT product iterations have been overwhelmingly impressive, with performance breakthroughs capturing most of the online spotlight. In contrast, DeepSeek-V4 did not deliver a jaw-dropping performance that would elicit exclamations of "Wow, this is incredible!" C-end users did not perceive a strong impact, resulting in fewer viral talking points.

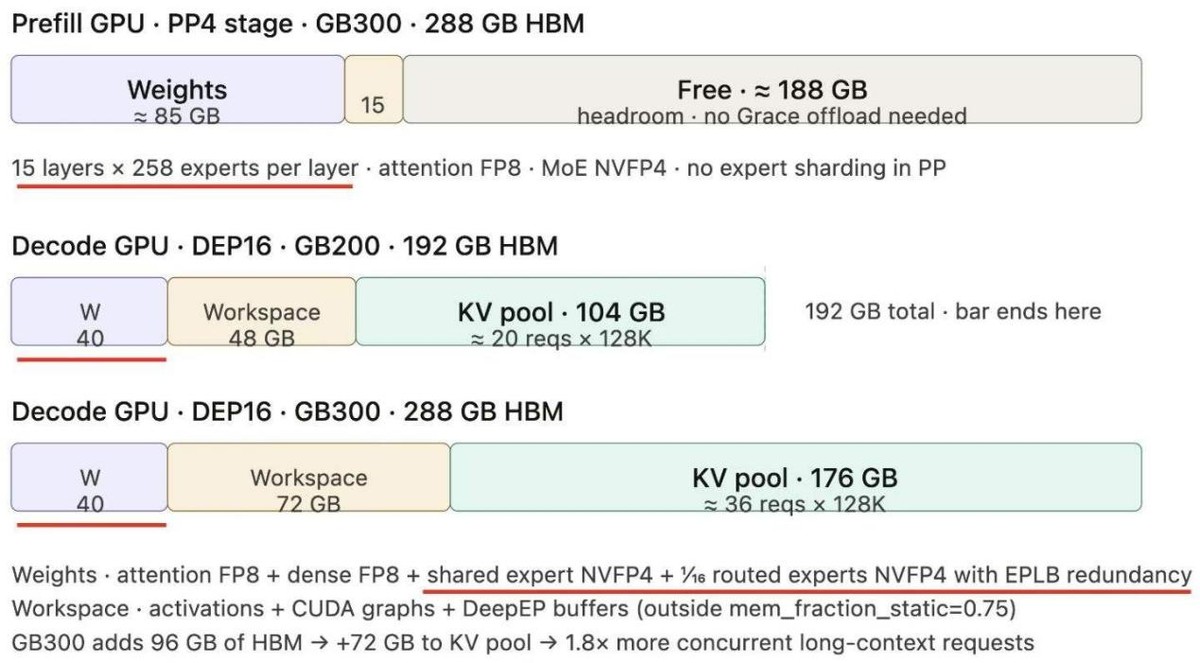

However, the B-end response has been overwhelmingly positive. Many enterprise developers tested the model hands-on right away, and according to their feedback, they were most impressed with two aspects of V4: cost-efficiency and application-level adaptability. With a 1-million-token context, DeepSeek-V4-Pro requires only 27% of the per-token inference FLOPs of its predecessor V3.2, while KV cache usage drops to just 10% of V3.2's. This reduction in inference compute and KV cache translates directly into substantial savings in HBM memory, driving down overall storage costs and creating significant cost-saving opportunities for the industry.
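
To put the two ratios above in more familiar terms, here is a minimal Python sketch that converts them into reduction factors. Only the 27% and 10% figures come from the article; the function names and the 80 GB baseline are hypothetical, chosen purely for illustration.

```python
# Ratios quoted in the article for DeepSeek-V4-Pro vs. V3.2 at a 1M-token context.
FLOPS_RATIO = 0.27      # per-token inference FLOPs, V4-Pro / V3.2
KV_CACHE_RATIO = 0.10   # KV cache usage, V4-Pro / V3.2


def reduction_factor(ratio: float) -> float:
    """Return how many times smaller the new value is than the baseline."""
    if not 0 < ratio <= 1:
        raise ValueError("ratio must be in (0, 1]")
    return 1.0 / ratio


def scaled_kv_cache_gb(baseline_gb: float, ratio: float = KV_CACHE_RATIO) -> float:
    """Scale a baseline KV cache size (in GB) by the quoted ratio."""
    return baseline_gb * ratio


if __name__ == "__main__":
    print(f"FLOPs per token: {reduction_factor(FLOPS_RATIO):.1f}x lower")
    print(f"KV cache: {reduction_factor(KV_CACHE_RATIO):.1f}x smaller")
    # Hypothetical baseline (an assumption, not a figure from the article):
    # 80 GB of KV cache for a long-context request on V3.2.
    print(f"80 GB baseline shrinks to {scaled_kv_cache_gb(80.0):.1f} GB")
```

Read this way, the same HBM budget could in principle hold roughly ten times as many long-context KV caches, which is where the storage savings described above would come from.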

Regarding application-level adaptability, V4 is highly compatible with a range of AI agents. It integrates smoothly with mainstream agent tools like OpenClaw and OpenCode, at a price just 1/58th of Opus and 1/70th of GPT5.5. Given that agents are notorious for consuming tokens, this aspect has garnered significant attention from developers and enterprises.
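
As a rough illustration of what those price ratios could mean for a token-hungry agent workload, the sketch below rescales a reference bill. It assumes the workload consumes the same number of tokens on every model and that the quoted ratio applies uniformly to input and output tokens; the $1,000 monthly bill and the function name are hypothetical.

```python
# Price ratios quoted in the article: V4 costs 1/58 of Opus and 1/70 of GPT5.5.
PRICE_DIVISOR = {"Opus": 58, "GPT5.5": 70}


def v4_equivalent_cost(reference_cost: float, reference_model: str) -> float:
    """Estimate V4 spend for a workload costing `reference_cost` on another model.

    Assumes identical token usage and a uniform price ratio (a simplification).
    """
    return reference_cost / PRICE_DIVISOR[reference_model]


if __name__ == "__main__":
    monthly_bill = 1000.0  # hypothetical agent bill in USD, not from the article
    for model in PRICE_DIVISOR:
        cost = v4_equivalent_cost(monthly_bill, model)
        print(f"${monthly_bill:,.0f} on {model} -> about ${cost:,.2f} on V4")
```

Actual bills would depend on how each model tokenizes text and on how many calls an agent needs to finish a task, so this should be treated as an order-of-magnitude estimate only.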

Overall, V4 did not deliver an explosive experience for general users, causing its social media buzzworthiness to fade rapidly. However, this does not diminish the model's value. Instead, it indicates that the industry is moving away from hype and shifting towards in-depth B-end industrial implementation.

Recalling the previous R1 craze, social media was inundated with stunning copywriting, poems, and novels generated by DeepSeek, leaving everyone in awe of AI's capabilities. This time, however, the atmosphere in my social circle is entirely different. After DeepSeek-V4's release, the most shared and discussed content has been a motto from a technical article: "Not swayed by praise, not deterred by criticism, stay true to the path, and remain upright."

The model's capabilities no longer shock us. What resonates most with practitioners now is an attitude—an idealistic declaration. This shift in social media discourse is quite intriguing. I believe the industry's collective unconscious may recognize that the AI sector has reached a stage where it should shed hype and embrace steady progress, rather than continuing a senseless computing power arms race and model redundancy.

The reason for this perspective is that recently, the entire AI sector has been fiercely competing over tokens. The agent craze has fueled the token economy, bringing infinite application potential and commercial imagination but also generating immense anxiety that spreads from developers to enterprises and ordinary people. Everyone feels exhausted: technological tools are iterating faster and faster, computing power costs are rising, yet no one dares to stop using or learning them. When will this relentless pursuit end? Can society expect a real return on the massive investments in AI? These doubts likely linger in many people's minds.

Under such circumstances, the industry no longer craves model "bombs" with even more exaggerated parameters and capabilities. What everyone truly desires are technological solutions that offer cost controllability and clearer ROI.

The statement made during DeepSeek-V4's release—"Not swayed by praise, not deterred by criticism, stay true to the path, and remain upright"—embodies a pragmatic and focused work ethic. Compared to flashy technological demonstrations, it more precisely addresses the industry's pain points and resonates deeply with everyone.

Taken together, these observations suggest that the landscape after DeepSeek-V4's release partially mirrors the current reality of the AI sector: the ecosystem of domestic computing power and large models is thriving, while the bubble of trend-chasing and hype is dissipating. C-end excitement cannot match tangible ROI. Focusing on practical work, reducing costs, and seeking returns from large models have become mainstream trends and the new competitive focus for AI model makers.

Regardless of praise or criticism, users will form their own judgments about V4. However, no one can deny that DeepSeek remains a pivotal player in the domestic large model landscape, and its choices partially reflect the direction of China's AI advancement.