The Super Node Era Dawns: Four Pioneering Domestic Solutions Redefine Independent Computing Power

04/15 2026

— Today's 'Chip' Insights —

In 2026, China's computing power landscape is undergoing a seismic transformation.

As AI model parameters soar from billions to trillions, super nodes have emerged as the strategic high ground and new growth engine for domestic computing power providers vying for market dominance.

Against this backdrop, Chinese vendors have released a wave of super node systems. In April 2025, Huawei launched its CloudMatrix384 super node; Alibaba Cloud followed with PanGu AL128 in September; in November, Sugon set a new global benchmark for computing density with scaleX640; and in 2026, Tsingmicro unveiled its reconfigurable super node, pushing the boundaries of scale and technology further.

What Exactly is a Super Node?

The 'Super Aircraft Carrier' of AI Computing

01

A super node represents a revolutionary computing paradigm characterized by ultra-high integration, minimal latency, and unified global scheduling. It breaks free from traditional discrete networking models by integrating tens, hundreds, or even thousands of computing chips (GPUs/NPUs/RPUs) into a single, logically unified computing behemoth through innovative chip-level interconnection and system architecture design.

Core Advantages

Exponential Computing Density: Computing power per cabinet reaches several times to dozens of times that of traditional clusters.

Ultra-Low Communication Latency: Cross-chip communication latency drops from milliseconds to microseconds or even nanoseconds.

Optimized Total Cost of Ownership: Fewer switches and cables lower deployment and operating costs.

Enhanced Cluster Efficiency: Removing multi-hop forwarding losses substantially improves performance in large-scale model training and inference.

In China's fiercely competitive computing power market, four benchmark vendors stand out, each with distinct strengths. The sections below examine these four domestic super node pioneers and what each contributes to China's computing power push.

In-Depth Analysis of Four Domestic Super Node Solutions

02

Huawei Ascend:

The 10,000-Card Behemoth Targeting Trillion-Parameter Models

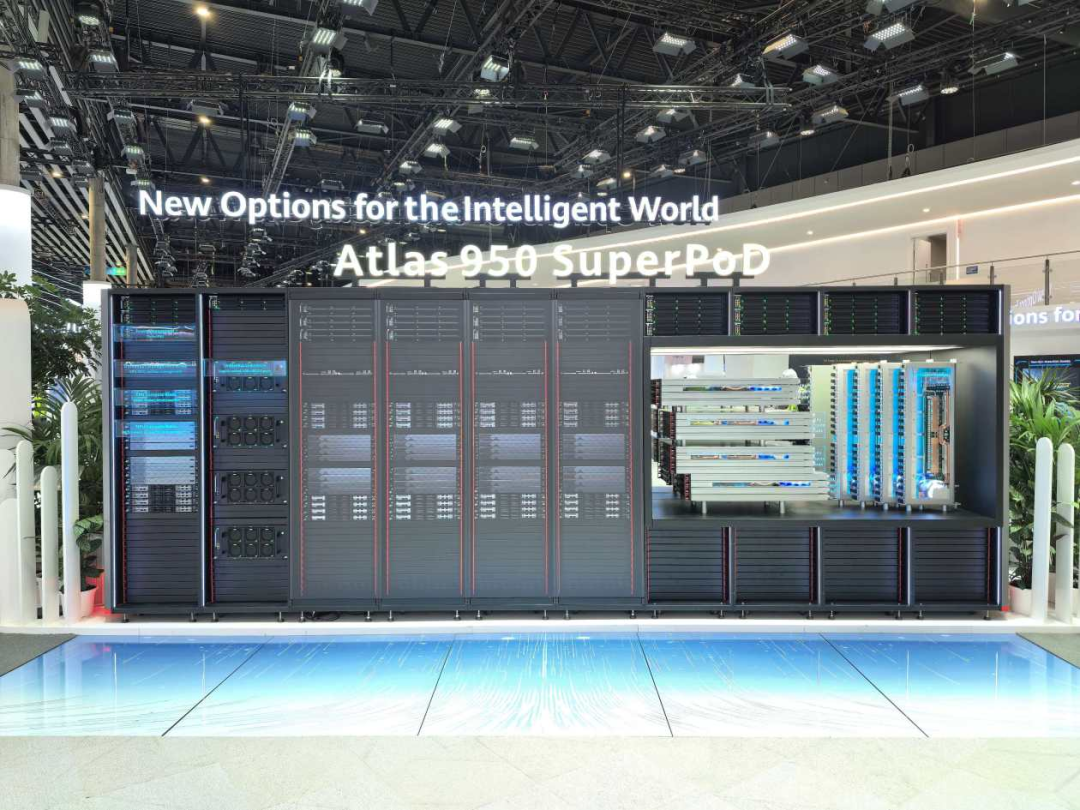

Flagship Solutions: Atlas 950 Super Node / CloudMatrix 384

Core Technologies:

Ultra-Large-Scale Integration: Atlas 950 supports 8,192 Ascend NPUs, directly addressing trillion-parameter model requirements.

Lingqu Interconnect Protocol: Proprietary high-speed interconnection achieves microsecond-level latency and 2 TB/s bandwidth.

Edge-Cloud Synergy: Complete Ascend ecosystem enables end-to-end deployment from training to inference.

Key Metrics:

Single Cluster Scale: Exceeds 8,000 cards

Total Computing Power: 6.7× that of international flagship solutions

Memory Capacity: 15× that of international flagship solutions

Interconnect Bandwidth: 62× that of international flagship solutions

Application Scenarios:

Ultra-large model training in national smart computing centers, supercomputing facilities, top research institutions, and major internet enterprises.

Technical Positioning:

Ecosystem Leader leveraging complete chip-software-ecosystem integration to create China's 'national team' solution for AI computing infrastructure.

Sugon:

Cable-Free Affordable Computing Power Democratizing Super Nodes

Flagship Solutions: scaleX640 / scaleX40

Core Technologies:

Orthogonal Cable-Free Architecture: Direct node interconnection eliminates all cables and optical modules.

High-Density Cost-Effective Design: scaleX40 integrates 40 cards, targeting the sweet spot of mid-market computing needs.

'One-Drag-Two' System Architecture: scaleX640 achieves an unprecedented density of 640 cards per cabinet.

Key Metrics:

scaleX40: Deployment cost on par with five 8-card servers, while delivering 120% higher training performance and 330% higher inference performance

Reliability: 99.99% availability, with operations and maintenance time reduced from hours to minutes

Latency: One-way communication <100 nanoseconds
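The scaleX40 comparison above reduces to simple arithmetic. As a rough sketch, assuming the quoted gains are measured against the five-server baseline at equal deployment cost (the function name `relative_value` and the `cost_ratio` parameter are illustrative, not Sugon's own terms), the performance per unit of cost works out as follows:

```python
# Baseline from the article: five 8-card servers, i.e. 40 cards in total.
BASELINE_CARDS = 5 * 8


def relative_value(gain_percent: float, cost_ratio: float = 1.0) -> float:
    """Performance per unit of cost relative to the baseline (1.0 = parity).

    gain_percent: quoted improvement over the baseline, e.g. 120 for +120%.
    cost_ratio:   system cost divided by baseline cost (assumed 1.0 here,
                  since the article states the deployment cost is matched).
    """
    return (1.0 + gain_percent / 100.0) / cost_ratio


training = relative_value(120)   # +120% training performance
inference = relative_value(330)  # +330% inference performance

print(f"baseline cards: {BASELINE_CARDS}")
print(f"training value: {training:.1f}x, inference value: {inference:.1f}x")
```

Under these assumptions, the same budget buys roughly 2.2 times the training throughput and 4.3 times the inference throughput of the 40-card baseline.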

Application Scenarios:

Cost-effective, easy-to-deploy solutions for SME AI inference, university research, and traditional industry intelligent upgrades.

Technical Positioning:

Engineering Optimization specialist solving physical deployment challenges to transform premium computing power into mass-market accessibility.

Tsingmicro:

Reconfigurable Computing 'Transformers' Revolutionizing Interconnect Economics

Flagship Solution: 4K Reconfigurable Intelligent Computing Super Node

Core Technologies:

Reconfigurable Dataflow Architecture (RPU Chips): Built on 20 years of R&D at Tsinghua University, the hardware logic adapts dynamically to AI tasks, combining GPU-like versatility with ASIC-like efficiency.

TSM-Link Direct Chip Interconnection + 2D-Torus Topology: Integrates 4,096 RPUs with intelligent routing capabilities, eliminating dependence on high-end switches.

3D Memory-Computing Integration + Chiplet Technology: Three-dimensional architecture dramatically boosts throughput and computing density.
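To make the 2D-Torus idea concrete, here is a minimal sketch of how chips in such a topology connect directly to their neighbors without a central switch. It assumes the 4,096 RPUs form a square 64 × 64 grid and that traffic follows shortest paths; neither detail is stated in the article, and the names `torus_neighbors` and `torus_hops` are hypothetical helpers, not part of TSM-Link.

```python
from typing import List, Tuple

Coord = Tuple[int, int]

# Assumption: 4,096 chips laid out as a square 64 x 64 grid. The real
# dimensions of the topology are not given in the article.
WIDTH = 64
HEIGHT = 64


def torus_neighbors(node: Coord, width: int = WIDTH, height: int = HEIGHT) -> List[Coord]:
    """Return the four directly linked neighbors of a node, with wraparound."""
    x, y = node
    return [
        ((x - 1) % width, y),
        ((x + 1) % width, y),
        (x, (y - 1) % height),
        (x, (y + 1) % height),
    ]


def torus_hops(a: Coord, b: Coord, width: int = WIDTH, height: int = HEIGHT) -> int:
    """Minimum number of chip-to-chip hops between two nodes on the torus."""
    dx = abs(a[0] - b[0])
    dy = abs(a[1] - b[1])
    # Each ring can be traversed in either direction, so take the shorter way.
    return min(dx, width - dx) + min(dy, height - dy)


total_chips = WIDTH * HEIGHT
# Every chip has 4 links and each link is shared by 2 chips.
total_links = total_chips * 4 // 2
# The farthest node is half a ring away in each dimension.
diameter = WIDTH // 2 + HEIGHT // 2

print(total_chips, total_links, diameter)
print(torus_neighbors((0, 0)))
print(torus_hops((0, 0), (63, 63)))
```

In this layout every chip needs only four direct links, and the wraparound edges keep the worst-case path at 64 hops. That is the trade-off a torus makes: no high-end switches, at the cost of multi-hop routing between distant chips.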

Key Metrics:

Single Cluster Performance: >500 PFLOPS (FP8)

Interconnect Cost: 90% lower than international solutions

Total Cost: 50% reduction with 3× energy efficiency improvement
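For a sense of scale, the cluster figure can be converted into an average per-chip number. This is a back-of-the-envelope sketch only: it assumes the ">500 PFLOPS" figure refers to the full 4,096-RPU configuration, which the article implies but does not state, and the helper `per_chip_tflops` is illustrative.

```python
CLUSTER_PFLOPS_FP8 = 500.0  # quoted lower bound for the cluster (">500 PFLOPS")
RPU_COUNT = 4096            # RPUs in the 4K super node (assumed to match the figure)


def per_chip_tflops(cluster_pflops: float, chips: int) -> float:
    """Average throughput per chip in TFLOPS (1 PFLOPS = 1,000 TFLOPS)."""
    return cluster_pflops * 1000.0 / chips


floor_tflops = per_chip_tflops(CLUSTER_PFLOPS_FP8, RPU_COUNT)
print(f"at least {floor_tflops:.1f} TFLOPS (FP8) per RPU on average")
```

Because the quoted cluster figure is a lower bound, the result (about 122 TFLOPS per RPU) is a floor on the average, not a chip specification.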

Application Scenarios:

Core component of China's 'East Data, West Computing' initiative and smart computing center construction. Excels in cost-sensitive and latency-sensitive applications, including large-scale model training, high-concurrency inference, edge-cloud collaboration, and embodied AI.

Technical Positioning:

Original Architecture Innovator reconstructing computing paradigms from the chip level, pioneering 'software-defined hardware' as the benchmark for independent, controllable domestic computing power.

Alibaba Cloud PanGu:

Cloud-Native Super Nodes Meeting Elastic Computing Demands

Flagship Solution: PanGu AI Infra2.0 AL128

Core Technologies:

Cloud-Native Converged Architecture: Deeply optimized for Alibaba Cloud's distributed scheduling, enabling elastic resource scaling.

High-Density GPU Cluster: Optimized per-cabinet GPU deployment for cloud-based AI training.

Cold Plate Liquid Cooling: Efficient thermal management supports stable operation of high-power chips.

Key Features:

Seamless Alibaba Cloud platform integration with minute-level computing power delivery

Supports multi-tenancy and mixed workloads for maximum resource utilization

Standardized intelligent computing services across public, private, and hybrid clouds

Application Scenarios:

Cloud-based training, elastic inference, and SaaS AI services for cloud providers, internet firms, and AI startups.

Technical Positioning:

Cloud Services Integrator embedding super node capabilities into cloud computing to deliver 'plug-and-play' computing power solutions.

Conclusion

03

Super nodes are far more than hardware aggregation: they mark a fundamental paradigm shift in computing architecture. Led by these four domestic innovators, China is moving from follower to parallel competitor, and even leader, in global computing power through original technological breakthroughs.