Large Models Charge Ahead, but Does the Network Lag? Two Sessions Hot Topic: The Next AI Computing Bottleneck Lies in ‘Connectivity’

03/11 2026

As the 2026 sessions of the National People's Congress and the Chinese People's Political Consultative Conference got underway, the intelligent economy emerged as a hot topic among delegates and members. The government work report explicitly outlined plans to "forge a new paradigm for the intelligent economy" and identified the "implementation of ultra-large-scale intelligent computing clusters" as a pivotal focus for new infrastructure development.

Undoubtedly, this strategic move underscores a heightened demand for AI infrastructure. While the industry remains fixated on boosting chip computing power, a more fundamental technological hurdle has surfaced: the network's carrying capacity.

Today, AI model parameter counts have reached the trillion scale, and training has moved from single-card setups to clusters of ten thousand or even a hundred thousand cards. This shift places immense strain on network communication. Large model training requires synchronizing massive volumes of parameters within microseconds, imposing unprecedented demands on network latency, packet loss rates, and scalability. Excessive network latency leaves computing resources idle while they wait for data, and packet loss can interrupt training tasks, wasting substantial resources.
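To make the idle-compute point concrete, here is a minimal sketch of a deliberately simplified model in which each training step consists of compute time plus whatever communication time is not hidden behind compute. The function name, its parameters, and the model itself are illustrative assumptions, not measurements from any real cluster.

```python
def gpu_utilization(compute_ms: float, comm_ms: float, overlap: float = 0.0) -> float:
    """Fraction of one training step that accelerators spend computing.

    Assumed toy model: step time = compute time + exposed communication time.
    `overlap` is the share of communication hidden behind compute (0 to 1).
    """
    if compute_ms <= 0:
        raise ValueError("compute_ms must be positive")
    if comm_ms < 0:
        raise ValueError("comm_ms must not be negative")
    if not 0.0 <= overlap <= 1.0:
        raise ValueError("overlap must be between 0 and 1")
    # Only communication that is not overlapped with compute stalls the step.
    exposed_comm_ms = comm_ms * (1.0 - overlap)
    return compute_ms / (compute_ms + exposed_comm_ms)


if __name__ == "__main__":
    # Hypothetical numbers: 100 ms of compute, 25 ms of exposed communication.
    print(gpu_utilization(100.0, 25.0))
```

Under this model, a step with 100 ms of compute and 25 ms of exposed communication keeps the accelerators busy 80% of the time, and every extra millisecond of network delay lowers that share further.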

Fu Sidong, Tencent's optical network architect, highlighted that from the Pascal architecture in 2016 to the Blackwell architecture in 2024, AI computing power has surged roughly 1000-fold over eight years. In contrast, network bandwidth has only quadrupled during the same period. This disparity, where "computing power rockets while the network crawls," compels the industry to reassess the strategic importance of network technology.
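The size of this gap can be worked out directly from the two quoted figures. The sketch below takes the roughly 1000-fold and fourfold numbers at face value and assumes both apply to the same eight-year window. The constant and function names are illustrative.

```python
YEARS = 8                 # 2016 (Pascal) to 2024 (Blackwell), as quoted above
COMPUTE_GROWTH = 1000.0   # roughly 1000-fold, as quoted above
BANDWIDTH_GROWTH = 4.0    # fourfold, as quoted above


def annual_factor(total_growth: float, years: int) -> float:
    """Constant yearly multiplier that compounds to total_growth over years."""
    return total_growth ** (1.0 / years)


# How much faster compute grew than bandwidth over the whole period.
gap = COMPUTE_GROWTH / BANDWIDTH_GROWTH

compute_per_year = annual_factor(COMPUTE_GROWTH, YEARS)
bandwidth_per_year = annual_factor(BANDWIDTH_GROWTH, YEARS)

if __name__ == "__main__":
    print(f"compute outgrew bandwidth by {gap:.0f}x")
    print(f"compute:   about {compute_per_year:.2f}x per year")
    print(f"bandwidth: about {bandwidth_per_year:.2f}x per year")
```

On these figures, compute outpaced bandwidth by a factor of 250, which corresponds to compute growing about 2.37x per year against about 1.19x per year for bandwidth.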

Against this backdrop, "strengthening computing through networking" has become an urgent challenge for the industry.

Recently, NVIDIA's fiscal year 2026 report offered a valuable case study for the industry. The data revealed an unprecedented surge in NVIDIA's networking business, with annual revenue surpassing $31 billion, marking a more than tenfold increase from fiscal year 2021 when it acquired Mellanox. In the fourth quarter alone, networking business revenue reached $11 billion, a year-on-year spike of 263%.

This growth is attributed to the widespread adoption of InfiniBand (IB) technology in ultra-large-scale AI clusters. IB networks use a credit-based flow control mechanism: a sender transmits only after the receiving end has confirmed that sufficient buffer resources are available, which effectively eliminates packet loss caused by congestion. Switching delays can be as low as 100 nanoseconds. IB is widely recognized as the gold standard in high-performance computing.
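The idea behind credit-based flow control can be sketched in a few lines. The toy model below is an illustration only: the `Receiver` and `Sender` classes are hypothetical, and real InfiniBand hardware tracks credits at the link level rather than in software objects like these. What the sketch shows is the core rule that a sender never transmits without a credit, so the receive buffer cannot overflow.

```python
from collections import deque


class Receiver:
    """Hypothetical receiver with a fixed number of buffer slots."""

    def __init__(self, buffer_slots: int) -> None:
        self.buffer_slots = buffer_slots
        self.buffer = deque()

    def accept(self, packet: str) -> None:
        # With correct credit accounting this branch is never reached.
        if len(self.buffer) >= self.buffer_slots:
            raise OverflowError("receive buffer full: packet would be dropped")
        self.buffer.append(packet)

    def drain(self, count: int = 1) -> int:
        """Process up to count buffered packets; return the credits freed."""
        freed = 0
        while self.buffer and freed < count:
            self.buffer.popleft()
            freed += 1
        return freed


class Sender:
    """Hypothetical sender that transmits only while it holds credits."""

    def __init__(self, receiver: Receiver) -> None:
        self.receiver = receiver
        self.credits = receiver.buffer_slots  # initial credit advertisement
        self.pending = deque()

    def submit(self, packet: str) -> None:
        self.pending.append(packet)

    def grant(self, credits: int) -> None:
        """Receive a credit update after the receiver frees buffer slots."""
        self.credits += credits

    def pump(self) -> int:
        """Send queued packets until credits run out; return packets sent."""
        sent = 0
        while self.pending and self.credits > 0:
            self.receiver.accept(self.pending.popleft())
            self.credits -= 1
            sent += 1
        return sent


if __name__ == "__main__":
    rx = Receiver(buffer_slots=2)
    tx = Sender(rx)
    for i in range(5):
        tx.submit(f"pkt{i}")
    print(tx.pump())          # two packets fit the advertised credits
    print(tx.pump())          # no credits left, so nothing is sent
    tx.grant(rx.drain(1))     # receiver frees one slot and returns one credit
    print(tx.pump())          # exactly one more packet goes out
```

In this sketch the sender waits rather than dropping anything: packets that cannot be sent stay queued until credits return. This is the behavior that lossless fabrics rely on.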

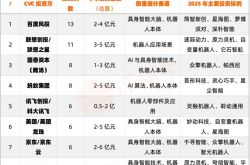

However, for domestic intelligent computing clusters, core technologies have long been dominated by NVIDIA, resulting in a highly concentrated supply chain. Amid the ongoing push for information technology innovation, there remains a significant market void for domestic IB solutions.

In contrast, the RoCE solution seeks to replicate IB's lossless transmission capabilities on a general-purpose Ethernet architecture, offering substantial cost advantages. Yet, a closer look at current mainstream RoCE solutions reveals that despite rapid progress in localizing switch brands, their core switching chips still predominantly rely on Broadcom, while network interface card chips are dominated by Mellanox.

In high-speed interconnection at 200G and above, RoCE-related I/O link technologies are still playing catch-up: they currently support only the 100G level, a generation behind the mainstream 400G solutions in IB networks, which makes it difficult to meet the interconnection needs of large computing power clusters.

This also means that core technologies for high-end interconnection in AI data centers (AIDC) remain under the control of overseas manufacturers. To meet the demands of ultra-large-scale intelligent computing clusters, the industry must tackle the localization of the IB technology route head-on.

"NVIDIA's networking business boom confirms a fundamental truth: in the era of ultra-large-scale intelligent computing clusters, high-performance networks have taken center stage," remarked an industry insider. He noted that while RoCE is a pragmatic solution in the early stages of domestic intelligent computing infrastructure development, relying solely on a technical path grafted onto general-purpose Ethernet makes it difficult to fundamentally break through the performance ceiling in large-scale cluster network interconnection.

Therefore, promoting the independent development of IB networks is not merely a technological endeavor but also a strategic imperative in the era of large-scale AI computing power. Its significance extends beyond partial optimization of the existing domestic technology system: it aims to address the core shortcoming in high-performance networks, establishing a technological foundation that is autonomous, controllable, and efficient to use, and creating a truly internationally competitive new infrastructure for intelligent computing power.

The policy guidance on ultra-large-scale intelligent computing clusters during the Two Sessions not only provides a strong impetus for the AI industry's development but also presents a more rigorous test for the entire industrial chain. As competition in AI computing power intensifies, network connectivity is emerging as one of the core variables determining cluster efficiency. To unblock the "meridians and collaterals" of intelligent computing clusters, the industry urgently needs to move from external dependence to self-reliance in core network technologies. This path may be arduous and lengthy, but it is a crucial step in unlocking the door to the AI era.