NVIDIA's Q4 Financial Report: Falling Short of Expectations? The Growth Trajectory Continues

02/27 2026

Editor | Zhang Lianyi

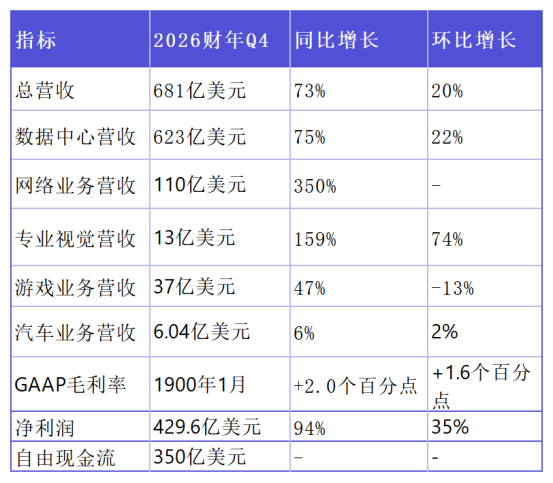

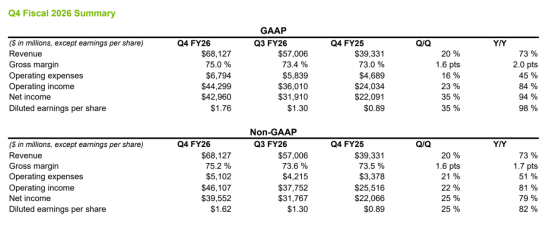

In the fourth quarter of fiscal year 2026, NVIDIA reported revenue of $68.1 billion, a 73% year-over-year (YoY) increase that surpassed market expectations of $66.23 billion.

NVIDIA has projected its revenue for the first quarter of fiscal year 2027 to reach $78 billion.

NVIDIA's total revenue for fiscal year 2026 amounted to $215.9 billion. Annualizing the $78 billion projection, that is, assuming four quarters at that level, would put fiscal year 2027 revenue at roughly $310 billion.
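The annualization above is simple arithmetic, and a short sketch makes the underlying assumption explicit. The function name `annualize` is illustrative, and the flat run rate (every quarter equal to the Q1 guidance) is a simplification rather than a forecast.

```python
# Figures quoted in the article, in billions of US dollars.
FY2026_REVENUE = 215.9
Q1_FY2027_GUIDANCE = 78.0


def annualize(quarterly_revenue: float, quarters: int = 4) -> float:
    """Naive run rate: assume every quarter matches the given one."""
    return quarterly_revenue * quarters


run_rate = annualize(Q1_FY2027_GUIDANCE)
# Growth of the annualized figure over the reported FY2026 total.
implied_growth = run_rate / FY2026_REVENUE - 1

print(f"Annualized run rate: ${run_rate:.0f}B")
print(f"Implied growth over FY2026: {implied_growth:.1%}")
```

The run rate works out to $312 billion, which the article rounds to $310 billion. Any sequential growth during the year would push the actual total above this flat estimate.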

During the subsequent earnings call, NVIDIA's CEO Jensen Huang and CFO Colette Kress repeatedly highlighted that the development of AI has reached a critical inflection point, with an explosive surge in demand for computing power.

Huang concluded by stating that the AI infrastructure buildout has not yet peaked, implying that NVIDIA's growth is not only unimpeded but accelerating.

For a more intuitive comparison: Huawei reported total revenue of RMB 880.9 billion (approximately $122 billion) for 2025 but did not disclose its profit figures. Even assuming a gross margin of 47.5%, in line with Huawei's first-half performance, that falls far short of NVIDIA's 71% gross margin for fiscal year 2026.
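To put the margin gap in absolute terms, the sketch below converts both margins into implied gross profit. It relies on the article's own assumption that Huawei's full-year gross margin matches its first-half 47.5%, so the Huawei figure is an illustration, not a reported number. The variable names are illustrative.

```python
# Figures quoted in the article, in billions of US dollars.
huawei_revenue = 122.0         # RMB 880.9 billion, converted as in the article
huawei_assumed_margin = 0.475  # ASSUMPTION: first-half margin applied to the full year
nvidia_revenue = 215.9
nvidia_margin = 0.71


def gross_profit(revenue: float, gross_margin: float) -> float:
    """Gross profit implied by a revenue figure and a gross margin."""
    return revenue * gross_margin


huawei_gp = gross_profit(huawei_revenue, huawei_assumed_margin)
nvidia_gp = gross_profit(nvidia_revenue, nvidia_margin)

print(f"Huawei implied gross profit: ${huawei_gp:.1f}B")
print(f"NVIDIA implied gross profit: ${nvidia_gp:.1f}B")
print(f"Ratio: {nvidia_gp / huawei_gp:.1f}x")
```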

The lion's share of peak profits in the tech industry remains firmly in the hands of U.S. tech firms.

01

High Margins, Robust Cash Flow

NVIDIA has sustained rapid growth over multiple quarters, demonstrating strong performance across all business segments.

Data center revenue soared to $62.3 billion in the fourth quarter, representing a 75% YoY increase and a 22% quarter-over-quarter (QoQ) rise. For the entire fiscal year, data center revenue reached $193.7 billion, up 68% YoY. Since ChatGPT sparked the AI boom in 2023, NVIDIA's data center business has expanded nearly 13-fold.

Networking revenue hit $11 billion in the fourth quarter, more than tripling YoY. Full-year networking revenue exceeded $31 billion, growing over tenfold from fiscal year 2021 (the year of the Mellanox acquisition).

Professional visualization revenue surpassed the $1 billion mark for the first time, reaching $1.3 billion, up 159% YoY and 74% QoQ.

Gaming and AI PC revenue amounted to $3.7 billion, up 47% YoY.

Automotive and robotics revenue reached $604 million, up 6% YoY. Notably, "Physical AI" contributed over $6 billion to NVIDIA's revenue for fiscal year 2026.

Source: NVIDIA Financial Reports
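The growth rates quoted for the segments above can be inverted to recover the approximate prior-period figures, shown here for data center revenue. This is a minimal sketch: `prior_period` is an illustrative helper, and because the published percentages are rounded, the derived values are approximations rather than reported numbers.

```python
def prior_period(current: float, growth: float) -> float:
    """Back out the earlier period's value from the current value and its growth rate."""
    return current / (1.0 + growth)


# Data center revenue quoted in the article, in billions of US dollars.
q4_data_center = 62.3
fy_data_center = 193.7

q4_year_ago = prior_period(q4_data_center, 0.75)  # 75% YoY
q3_current = prior_period(q4_data_center, 0.22)   # 22% QoQ
fy_year_ago = prior_period(fy_data_center, 0.68)  # 68% YoY

print(f"Implied Q4 a year earlier: ${q4_year_ago:.1f}B")
print(f"Implied previous quarter:  ${q3_current:.1f}B")
print(f"Implied prior fiscal year: ${fy_year_ago:.1f}B")
```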

In terms of profitability, NVIDIA's margins rebounded significantly as production of the Blackwell chip ramped up. The fourth-quarter GAAP gross margin was 75.0%, while the non-GAAP gross margin was 75.2%, closely aligning with market expectations. Fourth-quarter net profit reached an astonishing $42.96 billion, up 94% YoY, with a quarterly net margin exceeding 63%.

Source: NVIDIA Financial Reports

For fiscal year 2026, NVIDIA's GAAP net profit was $120 billion. According to its cash flow statement, free cash flow (FCF), which is operating cash flow less capital expenditures (CapEx), reached $96.57 billion. NVIDIA returned $41.1 billion to shareholders through stock buybacks and dividends, accounting for 43% of annual FCF. As of the end of the fourth quarter, $58.5 billion remained authorized for future buybacks.
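The quarterly net margin and the share of FCF returned to shareholders can both be checked directly from the figures quoted in this section. The sketch below uses illustrative variable names and only the numbers stated in the article.

```python
# Figures quoted in the article, in billions of US dollars.
q4_revenue = 68.1
q4_net_profit = 42.96
fy_free_cash_flow = 96.57
fy_shareholder_returns = 41.1  # buybacks plus dividends


def share(part: float, whole: float) -> float:
    """Fraction of `whole` represented by `part`."""
    return part / whole


net_margin = share(q4_net_profit, q4_revenue)
payout_ratio = share(fy_shareholder_returns, fy_free_cash_flow)

print(f"Q4 net margin: {net_margin:.1%}")
print(f"Share of FCF returned to shareholders: {payout_ratio:.1%}")
```

Both results agree with the article: a net margin just above 63% and a payout of about 43% of annual FCF.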

02

Computing Power Equals Revenue, Tokens Equate to Currency

Jensen Huang expressed unwavering confidence in NVIDIA's future revenue prospects.

During the earnings call, Huang declared:

"In this new AI-driven world, computing power is synonymous with revenue. Without computing power, you cannot generate tokens; without tokens, you cannot achieve revenue growth."

Traditionally, cloud providers purchased servers to "host" data and run legacy applications. However, the paradigm has now shifted. In Huang's vision, every GPU that cloud providers acquire is now engaged in "mining"—not for cryptocurrency, but for AI tokens. These tokens directly translate into revenue, whether through Claude Code subscriptions, OpenAI API calls, or Meta's AI-driven ad click-through rates.

An analyst posed a pointed question during the call: "Cloud providers' capital expenditures (CapEx) are approaching $700 billion this year. Many investors are concerned about growth prospects for next year, and some clients' cash flows are already under strain. If their CapEx does not increase, can NVIDIA still sustain its growth?"

Huang responded with confidence: "I am very confident in their cash flow growth."

His rationale is straightforward: the inflection point for Agentic AI has arrived. AI agents such as OpenAI's Codex, Anthropic's Claude Code, and GitHub's Copilot are no longer confined to the lab but have become genuine productivity tools. For every dollar that cloud providers invest in computing power, they generate more than a dollar in revenue, so they have no reason to stop investing.

In essence, cloud providers are not merely "spending" but "investing," and the return on that investment (ROI) may surpass that of any previous technological revolution.

03

Agentic AI: The Repeatedly Emphasized "Inflection Point"

One term dominated the discourse of this earnings report and call: Agentic AI. Jensen Huang mentioned it at least three times across various contexts, clearly stating: "We have reached the inflection point for Agentic AI."

Agentic AI refers to AI systems capable of autonomous decision-making and task execution. These are not chatbots that simply respond to queries but intelligent agents that function like employees—receiving tasks, breaking them down into steps, calling upon tools, and completing work.

OpenAI's GPT-5.3 Codex, Anthropic's Claude Code, Meta's Advanced AI Lab—all are pointing in the same direction: AI is evolving from a mere "tool" into an "employee."

Agentic AI has reached the "practical intelligence inflection point," marked by profitable token generation. This shift directly drives global demand for more computing capacity. Anthropic, for instance, saw its revenue grow tenfold within a year but remains severely constrained by computing capacity.

Huang calculated during the call: With hundreds of millions of knowledge workers worldwide, if even 1% adopt AI agents on a large scale, computing demand will multiply dozens of times. This is why he remains confident about growth prospects for 2027.

04

The Annual "Chip Arms Race"

To maintain its leadership position, NVIDIA must ensure product superiority through continuous iteration.

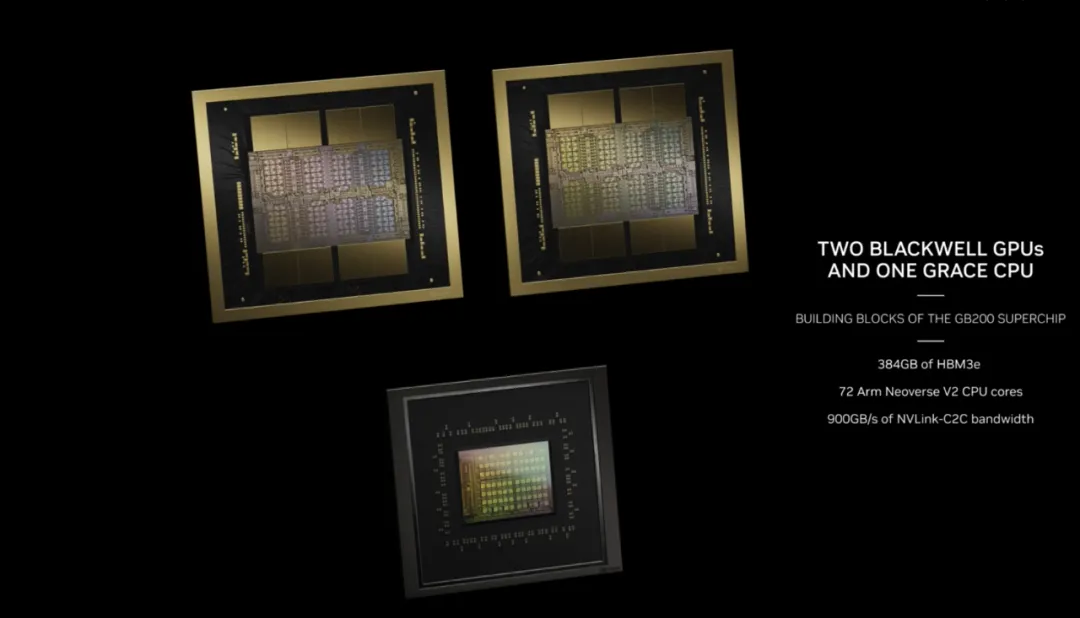

NVIDIA's current flagship architecture is Blackwell, with nearly 9 gigawatts deployed across core clients. The GB300 NVL72 achieved breakthrough inference performance, with rapid gains realized through CUDA optimization. The GB series contributed approximately two-thirds of data center revenue, becoming the primary growth engine.

Blackwell Chip


According to SemiAnalysis' InferenceX benchmark, the GB300 NVL72 delivers up to 50 times better performance per watt and 35 times lower cost per token compared to competitors.

In January 2026, NVIDIA unveiled the Rubin platform at CES. Its core advantages are that it can train Mixture of Experts (MoE) models with one-fourth the number of GPUs and cut inference token costs by up to ten times compared with Blackwell. In February 2026, NVIDIA shipped initial Vera Rubin samples to clients, with mass production slated for the second half of 2026. Throughout 2026, NVIDIA will sell both Blackwell and Rubin products.

Rubin Platform

05

Weaknesses, Threats, and Uncertainties

NVIDIA recently entered into a non-exclusive licensing agreement with Groq to integrate its low-latency inference technology and absorb its engineering team. The objective is to enhance AI infrastructure performance by addressing low-latency gaps in the inference stack.

Huang stated: "We will leverage Groq's innovations to expand NVIDIA's architecture, achieving new levels of AI infrastructure performance and value." This strategy echoes NVIDIA's approach when acquiring Mellanox—embedding external best-in-class technology into its full-stack system while maintaining architectural unity.

When asked about custom chips like Google TPU and Amazon Trainium, Huang gave a measured response: NVIDIA views them not as threats but as complementary elements within the ecosystem. "You have your dedicated accelerators; I have my universal platform. We can coexist."

Beyond competitors, the Chinese market poses uncertainties. While NVIDIA has received U.S. government approval for limited shipments of the H200 to Chinese clients, no revenue has been generated yet, and import approvals remain unclear. Thus, NVIDIA's outlook excludes data center computing revenue from China.

Regardless, NVIDIA continues to set records. Quarterly revenue of $78 billion, if achieved, would set yet another record. But this is merely the beginning.

Huang said during the call that he already sees paths to growth in 2027, which suggests that NVIDIA's orders are booked through next year and beyond.
