Surge in AIDC Orders: Which Sectors Stand to Benefit?

02/28 2026

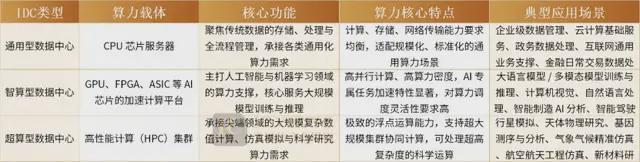

Generally, Internet data centers (IDCs) fall into three categories. General-purpose data centers provide computing power via CPU-based servers, focusing on traditional data storage, processing, and management with balanced requirements for computing, storage, and network transmission. Intelligent computing data centers rely on AI chip-accelerated platforms (GPU, FPGA, ASIC) for large-scale model training in AI and machine learning. Supercomputing data centers, composed of high-performance computing clusters, primarily serve cutting-edge scientific research such as planetary simulation, astrophysics, and genetic analysis.

Intelligent computing data centers are also known as AIDCs. Short for AI Data Center, AIDC refers to data centers dedicated to deploying and running AI computing workloads, particularly on large-scale clusters of GPUs and other AI accelerator chips.

As the evolution of generative AI drives exponential growth in demand for computing power, the shift from 'general computing' to 'intelligent computing', coupled with China's 'East Data, West Computing' policy, has positioned AIDC as core infrastructure for the digital economy, with sustained industry growth.

01

Intensive Order Placements by Tech Giants Reignite AIDC Momentum

In Q2 2025, multiple domestic AIDC projects faced delays due to NVIDIA's H20 chip supply disruption. The resumption of H200 chip supplies accelerated domestic AIDC demand. Simultaneously, large-scale listings of domestic AI chip companies provided robust cash flow, supporting R&D and production expansion.

As Chinese tech leaders strive to keep pace with U.S. rivals, ByteDance plans to expand its AI investments in 2026. Reports indicate higher capital expenditure on AI infrastructure, with 2026 capex projected at ~RMB 160 billion, up from ~RMB 150 billion in 2025. ByteDance's AI chip budget is ~RMB 85 billion, and it has placed test orders for 20,000 H200 chips. If it is approved to procure advanced chips, capex could rise further.
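To put the reported ByteDance figures in proportion, the short sketch below computes the implied year-over-year capex growth and the share of capex that the chip budget would represent. The article does not say which year the ~RMB 85 billion chip budget belongs to; treating it as part of the 2026 figure is an assumption made here for illustration only.

```python
# Approximate figures as quoted in the article (RMB billions).
capex_2025 = 150.0
capex_2026 = 160.0
chip_budget = 85.0  # ASSUMPTION: counted against 2026 capex

# Year-over-year growth of projected capex.
capex_growth = (capex_2026 - capex_2025) / capex_2025

# Share of 2026 capex that the chip budget would account for.
chip_share = chip_budget / capex_2026

print(f"Implied capex growth 2025 -> 2026: {capex_growth:.1%}")
print(f"Chip budget as share of 2026 capex: {chip_share:.1%}")
```

On these numbers the headline increase is modest (under 7%), while chips would make up a little over half of total spending, which is why approval for advanced chip procurement matters so much for the final figure.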

Alibaba has prioritized AI as a strategic focus, planning to invest over RMB 380 billion in R&D and infrastructure over three years, with potential upward revisions.

Dense bidding and project awards reflect AIDC's penetration from tech to traditional sectors:

China Mobile's 2025-2026 centralized procurement of AI general-purpose computing equipment (inference type) concluded after two rounds: 7,058 units in the first round and 441 units in a supplementary round, bringing total inference AI server purchases for 2025-2026 to 7,499 units. In January 2026, Kunlun Technology and others secured the 441-unit supplementary order, which was divided into two packages: CANN ecosystem equipment (Package 1) and CUDA-like ecosystem equipment (Package 2).
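The procurement totals above can be cross-checked with a few lines of arithmetic. This is only a sanity check on the quoted unit counts; the variable names are illustrative.

```python
# Unit counts as quoted for China Mobile's 2025-2026 inference server procurement.
first_round_units = 7058
supplementary_units = 441

total_units = first_round_units + supplementary_units

# Share of the total that came from the supplementary round.
supplementary_share = supplementary_units / total_units

print(f"Total units: {total_units}")
print(f"Supplementary round share: {supplementary_share:.1%}")
```

The two rounds sum to the stated 7,499 units, with the supplementary round contributing roughly 6% of the total.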

The intelligent transformation of traditional industries is also fueling AIDC demand. In January 2026, Alibaba Cloud won a RMB 1.856 million bid for COFCO's AI infrastructure platform (Phase II), which focuses on intelligent data query and agent platform development to support intelligent upgrades of the business, marking AIDC's expansion into traditional sectors.

02

Jensen Huang's 'Five-Layer Cake' Theory

NVIDIA CEO Jensen Huang recently proposed a 'Five-Layer Cake' structure for AI infrastructure at Davos: Energy Layer (AI's 'oxygen,' including power grids, renewable energy, and storage), Chip & Computing Layer (GPU-centric hardware, NVIDIA's domain), Cloud Data Center Layer (computing power leasing), AI Model Layer (large models), and Application Layer (economic value creation).

Layer 1: Energy Layer

As AI's 'oxygen,' this foundational layer encompasses power grids, renewables, and storage, providing stable energy for AIDC and computing devices. AI's massive computing demands drive soaring energy consumption, making stable supply and cost control critical for industry growth.

Layer 2: Chip & Computing System Layer

The core carrier of AI computing power, this layer features GPUs/CPUs as 'engines,' paired with accelerators for efficient computing. NVIDIA and other chipmakers hold pivotal positions here.

Layer 3: Infrastructure Layer

Comprising data centers, networks, storage, and cloud services, this layer integrates energy and chip resources to form 'factories' for intelligent computing power delivery. It bridges computing power with real-world applications, requiring robust software and hardware support.

Layer 4: AI Model Layer

AI's 'brain,' this layer includes large language models, multimodal models, and vertical industry models, enabling intelligent reasoning, decision-making, and content generation. Model innovation drives AI technological iteration, with domain-specific optimizations meeting diverse needs.

Layer 5: Application Layer

The top layer, where AI technologies generate commercial value across autonomous driving, smart manufacturing, healthcare, finance, education, and entertainment. By integrating AI models with business operations, intelligent solutions create tangible socioeconomic value.

Notably, most capital currently focuses on the model layer, but true economic value stems from applications. The energy and infrastructure base underpins the entire ecosystem, guiding AIDC's development path.

AIDC's high power density imposes stringent requirements on power supply and cooling systems, driving technological upgrades and talent demand in energy and infrastructure. 'New-era plumbers and electricians' (tradespeople skilled in high-voltage DC power, liquid cooling, and energy storage) are becoming invaluable, alongside professionals in power distribution and substation construction. Together they form the backbone of AI infrastructure deployment.

03

Multi-Sector Benefits Across the AIDC Industrial Chain

AIDC's boom, driven by 'computing demand + technological revolution,' fuels growth across core segments and radiates benefits throughout the industrial chain, fostering a collaborative ecosystem.

(1) Power Systems: High-Voltage DC Dominance

Drawing from automotive trends, NVIDIA unveiled an 800V DC power architecture at Computex 2025 to support megawatt-scale power demands for next-gen AI data center racks. NVIDIA's GB200 servers now include BBUs (Battery Backup Units) as standard, leveraging lithium batteries for high-energy-density, high-rate power supply with superior flexibility, efficiency, and lifespan compared to traditional UPS systems.
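The appeal of a higher distribution voltage follows from basic circuit relations: for a fixed power P, current is I = P / V, and resistive loss in a conductor scales with I². The sketch below compares an 800 V bus with a 54 V bus for a rack of the same power. The 1 MW rack power and the 54 V comparison voltage are assumptions chosen for illustration, not figures from the article.

```python
def bus_current_amps(power_watts: float, voltage_volts: float) -> float:
    """Current needed to deliver a given DC power at a given bus voltage (I = P / V)."""
    return power_watts / voltage_volts


def relative_conduction_loss(v_low: float, v_high: float) -> float:
    """How many times larger I^2 * R loss is at v_low than at v_high,
    for the same power and the same conductor resistance."""
    return (v_high / v_low) ** 2


RACK_POWER_W = 1_000_000.0  # ASSUMPTION: a 1 MW rack
LOW_VOLTAGE = 54.0          # ASSUMPTION: low-voltage in-rack bus used for comparison
HIGH_VOLTAGE = 800.0        # 800 V DC architecture named in the article

for voltage in (LOW_VOLTAGE, HIGH_VOLTAGE):
    amps = bus_current_amps(RACK_POWER_W, voltage)
    print(f"{voltage:>5.0f} V bus -> {amps:,.0f} A")

ratio = relative_conduction_loss(LOW_VOLTAGE, HIGH_VOLTAGE)
print(f"Conduction loss at {LOW_VOLTAGE:.0f} V is about {ratio:.0f}x that at {HIGH_VOLTAGE:.0f} V")
```

Under these assumptions, the same conductors would have to carry roughly fifteen times less current at 800 V, which is the main reason high-voltage DC becomes attractive as rack power approaches the megawatt range.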

(2) Liquid Cooling: Soaring Demand Amid Computing Density Surge

Power consumption of the GPUs and ASICs in AI servers is skyrocketing: NVIDIA's GB200/GB300 NVL72 systems reach a TDP of 130-140 kW per cabinet, beyond the limits of traditional air cooling. This is expanding the market for cooling modules, heat exchangers, and related components. Key cold plate suppliers include Cooler Master, AVC, BOYD, and Auras, three of which are expanding liquid cooling capacity in Southeast Asia to meet demand from U.S. CSPs.
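As a rough way to read the cabinet figure, the sketch below spreads the quoted 130-140 kW across the 72 GPUs of an NVL72 cabinet. This is an all-in average per GPU position, since the cabinet budget also covers CPUs, switches, and other components; it is not a per-chip TDP.

```python
GPUS_PER_CABINET = 72  # the "72" in NVL72

# Cabinet TDP range quoted in the article, in kilowatts.
cabinet_tdp_kw = (130.0, 140.0)

# Average cabinet power per GPU position (includes CPUs, switches, etc.).
per_gpu_kw = tuple(tdp / GPUS_PER_CABINET for tdp in cabinet_tdp_kw)

low, high = per_gpu_kw
print(f"Average cabinet power per GPU position: {low:.2f}-{high:.2f} kW")
```

Close to 2 kW of heat per GPU position, packed 72 to a cabinet, gives a sense of why cold plate and other liquid cooling approaches are displacing air cooling at this density.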

(3) Power Semiconductors: SiC and GaN Synergy

In AI server power supplies, silicon carbide (SiC) and gallium nitride (GaN) exhibit complementary strengths. SiC offers lower on-resistance and stable temperature performance, ideal for high-voltage, high-temperature AC-DC PFC applications. GaN enables ultra-low switching losses with zero reverse recovery charge (Qrr), excelling in high-density CRPS and DC-DC LLC converters.

(4) Photovoltaics: Green Computing Fuels Energy Synergy

Global AIDC computing demand is set to quadruple by 2030, with green data centers dominating in China and Europe. AIDC's power hunger accelerates green energy integration, aligning with China's 'East Data, West Computing' strategy. Photovoltaics, as a mainstream clean energy source, can directly supply AIDC's DC power systems, benefiting companies like Sungrow.

(5) Optical Modules: 1.6T Commercialization Begins

Since 2025, demand for 800G optical modules has surged and 1.6T modules have entered commercialization, shifting the industry's focus from speed to efficiency. Domestic leaders such as TFC Communication and Cambridge Industries reported >40% YoY profit growth, reflecting demand driven by AI infrastructure. The new cycle emphasizes technological iteration and supply-demand balance: 800G shipments doubled to ~20M units, 1.6T modules are making their debut, and leading firms are racing to secure capacity and core technologies. However, optical chip shortages persist, making supply chain control a competitive edge.

(6) Domestic AI Computing Cards: 10K-Unit Shipments Become Commonplace

China's AI chip landscape is diversifying, with Huawei Ascend, Hygon, Cambricon, and others. At least nine firms have shipped or ordered >10K units, including tech giants (Huawei Ascend, Hygon) and startups (Cambricon, Muxi, Moore Threads, Enflame). Prices range from RMB 30K to 200K per card, and shipments at the 10K-unit scale validate performance, stability, and TCO. The industry is moving from R&D to mass delivery, with 2026 poised for explosive growth in domestic inference chip shipments, shifting competition toward AIDC infrastructure capabilities.

AIDC's boom stems from 'computing demand + technological revolution.' From order fulfillment to localization, and from core chips to supporting infrastructure, the entire chain offers rich investment and growth opportunities.

While China holds energy and infrastructure advantages, breakthroughs in high-end chip R&D and core technology innovation remain critical. AI's ultimate winners will be ecosystems that integrate full-stack technologies, drive industrial collaboration, and embrace technological transformation. As Jensen Huang noted, 'Don't reduce AI to ChatGPT vs. DeepSeek—look at the entire cake.'

This AI-driven 'New Industrial Revolution' has just begun.