Having Lost Lin Junyang, Its Youngest P10, Will Alibaba Face a 'Qwen Dilemma'?

03/06 2026

Google's Management Expertise Continues to Accumulate

Is Alibaba Following Meta's Path?

On the evening of March 2, Alibaba officially open-sourced four Qwen 3.5 series models with native multimodal capabilities, achieving significant performance gains with a small parameter count. The release quickly ignited the AI community, with Elon Musk promptly liking the announcement and commenting on social media that the models' intelligence density was impressive.

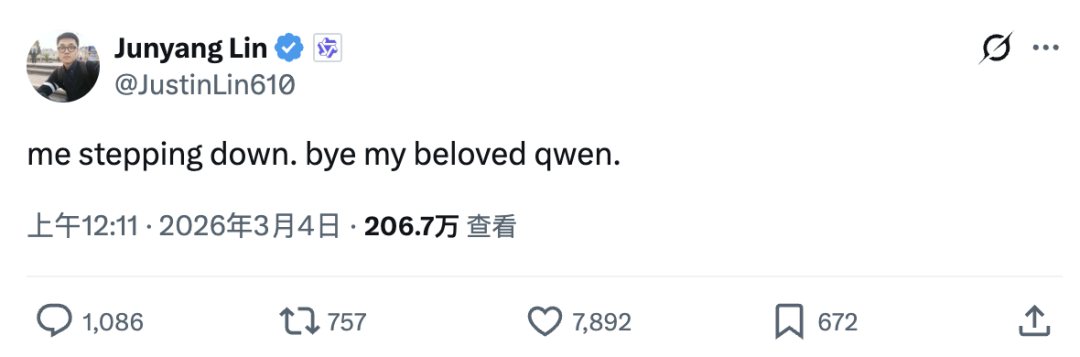

However, in the early hours of March 4, Qwen's technical lead, Lin Junyang, suddenly posted on social media: "me stepping down. bye my beloved qwen."

News of his resignation caused a stir in the community, as it was under his leadership that Alibaba's flagship Tongyi large model matured.

More specifically, according to LatePost, Lin formally submitted his resignation to Alibaba on the afternoon of March 3, and the Qwen team later shared this news within a small circle.

Sources close to the matter said some Qwen colleagues took the news of his departure hard and were "crying sadly."

Besides Lin, several other Qwen researchers, including Bowen Yu and Binyuan Hui, announced their resignations at the same time.

So how did Lin Junyang become Alibaba's youngest P10? What did he contribute to Tongyi? Why did he suddenly resign? And what underlying issues should corporate giants reflect on?

01

From Peking University's School of Foreign Languages to Tongyi's Infrastructure: An Atypical Genius's Cross-Disciplinary Advantage

In an era where computational power determines discourse, a genius's pivot often captivates more than a giant's charge.

As we attempt to dissect how Qwen surged ahead over the past two years to become a global leader in open-source large models, Lin Junyang emerges as an unavoidable name.

This young tech evangelist, born in 1993, is not only a core witness to the integration of Alibaba's DAMO Academy and Tongyi Lab but also the key architect who personally shaped Alibaba's open-source large model landscape.

His resume, rapid promotion, and the technical soul he infused into Qwen exemplify the rise of young tech leaders in China's AI wave.

Lin's academic background alone sets him apart from typical "genius prodigies."

After completing his undergraduate studies in computer science at Peking University, where he built a solid foundation in coding and algorithms, Lin pursued a master's degree in the School of Foreign Languages, focusing on foreign linguistics and applied linguistics.

To many, shifting from hardcore computer science to the more humanities-oriented field of linguistics seemed like a detour. However, on the eve of the large language model (LLM) boom, this choice proved strategic.

The essence of large models lies in the mathematical deconstruction and recombination of human language logic. While pure computer engineers often get lost in the maze of parameters and computational power, Lin's linguistic background gave him a deeper intuition about how machines understand human intent, context, and semantic connections.

According to Synced Media, during his university years Lin ran a test on a dataset of over three million English-German word pairs. He found that traditional machine translation reached an accuracy of barely 23%, while an early version of the Transformer architecture raised it to 27%.

This seemingly minor comparison convinced Lin of the technical potential of large models and set the direction for his later work in natural language processing and multimodal representation learning.

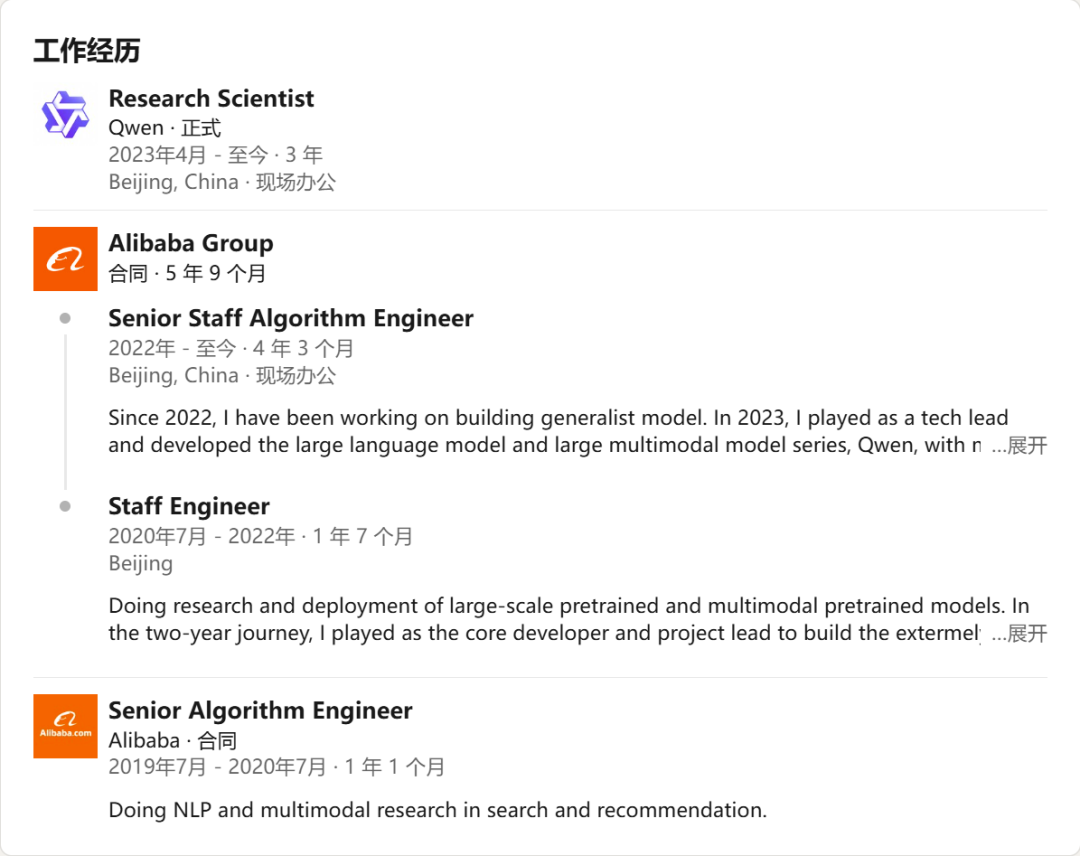

Armed with this interdisciplinary perspective, Lin joined Alibaba's DAMO Academy Intelligent Computing Lab in 2019 as a senior algorithm engineer, officially beginning his career.

At the time, Alibaba was actively exploring cutting-edge AI technologies. Lin quickly demonstrated astonishing technical prowess, deeply participating in core projects such as the ultra-large-scale pre-trained model M6, the unified multimodal pre-trained model OFA, and CogView.

This early body of work not only led to frequent publications at top academic conferences but also established him within Alibaba as a technical mainstay who could "both gaze at the academic stars and keep his feet planted on engineering ground."

Based on this solid and forward-looking technical foundation, Lin's promotion trajectory at Alibaba resembled a rocket launch.

Peng Tai, a former Alibaba employee, told Super Focus that on Alibaba's technical ladder, which runs from P4 to P14, P8 is already the career ceiling for many rank-and-file engineers and requires strong independent leadership and problem-solving abilities. P9 signifies director-level technical oversight, while P10 represents scientist or technical vice president status at the group level, a decision-making tier that can determine a business's survival and strategic direction.

Over six years at Alibaba, Lin ascended four ranks. Particularly between 2024 and 2025, thanks to the overwhelming open-source success of the Tongyi Qianwen team, he broke the traditional seniority system of internet giants, becoming Alibaba's youngest P10 technical executive at age 32.

This was not just Alibaba's tilt toward core talent in the fierce competition for large model experts but also a promotion earned through Lin's lines of code and technical decisions.

So, what exactly did Lin do for Qwen?

In short, he gave Qwen the confidence to compete head-on with top proprietary models like GPT and Claude on the global stage, and he personally built a thriving open-source ecosystem.

In late 2022, Alibaba underwent a profound organizational restructuring, merging DAMO's language and vision AI teams into Alibaba Cloud to formally establish Tongyi Lab. Lin was officially appointed technical lead for the Tongyi Qianwen series of large models.

When the team faced a strategic choice between closed-source and open-source routes, Lin steered it toward open source. As the core architect, he drove Tongyi Qianwen's inception and the iteration of the Qwen series, focusing not just on scaling model parameters but also on practical usability and multimodal capabilities.

Under his leadership, the Tongyi Qianwen family achieved full-modality coverage from text and images to video and audio. More importantly, Lin spearheaded Qwen's "full-size" open-source strategy.

His team ensured developers could find the most suitable Qwen model, whether on resource-constrained edge devices or compute-intensive cloud clusters.

Beyond code and model output, Lin's deeper contribution to Tongyi Qianwen lay in reshaping its technical philosophy.

In January of this year, Lin explicitly proposed the core concept of "models as products." He strongly advocated that building foundational models today cannot remain stuck in laboratory benchmarking; researchers must think like product managers, refining research outcomes into stable, usable systems in the real world.

This pragmatic orientation was embedded in Tongyi's DNA throughout his leadership and explains why it quickly became the most widely used foundation model in Chinese enterprise applications.

In six years, Lin transformed from a tech geek into a strategic leader for a major corporation, leaving Alibaba with a structurally complete, ecologically thriving, and globally competitive open-source family.

With the purity of a technologist and the acumen of a product manager, he guided Tongyi through its toughest years: building from scratch, catching up, and finally running alongside the leaders.

02

In the High-Pressure Chamber of Large Model Monetization, Is There Room for Pure Researchers?

Lin's departure is far from a peaceful personal career adjustment.

As news of his resignation spread through the community, more details emerged, and the picture they paint is a regrettable one.

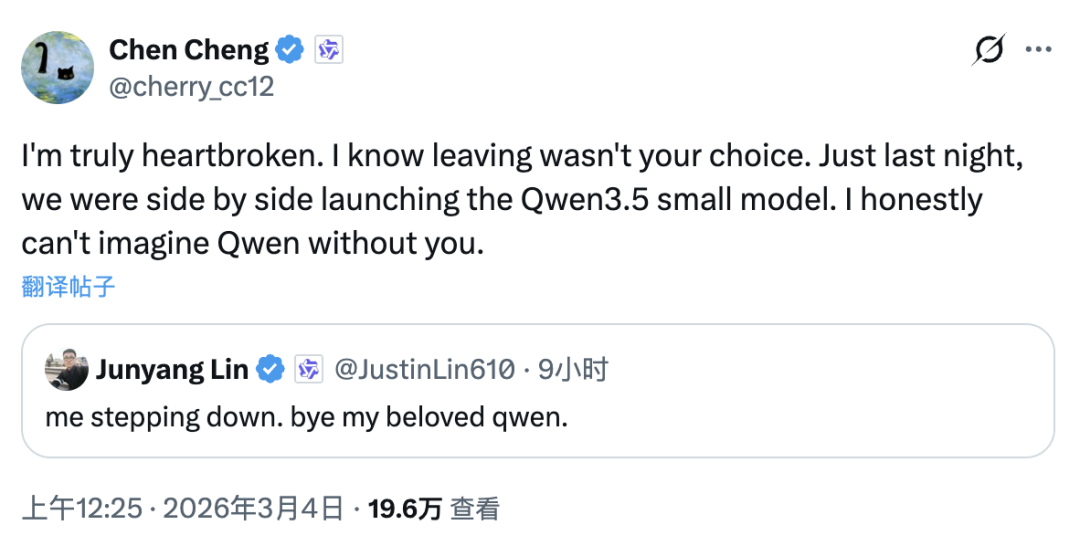

The immediate response from core Qwen contributors is thought-provoking: "Leaving wasn't your choice." Such wording almost explicitly suggests the passivity and suddenness behind this personnel earthquake.

According to a tally compiled by Bao Yu, a former Microsoft senior software engineer and tech expert, several other core members of the Qwen team have announced their departures besides Lin.

These include Binyuan Hui, a mainstay who was often online working through technical issues with others as late as 6 a.m., and Kaixin Li, a key contributor who led the development of multimodal and code models such as Qwen 3.5 and Qwen VL.

At least four confirmed departures represent the pillars who nurtured Tongyi Qianwen from inception to maturity, from small to large models. A flagship team fresh off victory and in absolute ascent now faces disintegration of its command system overnight.

According to LatePost reports and community discussions, the conflict centers on a fundamental shift in Alibaba Cloud's evaluation methods for the Qwen team.

Over the past year or two, Qwen's success largely rested on open-source community recognition, download volumes on code hosting platforms, and dominance across authoritative benchmarks. However, as the "Hundred Models War" entered its second half, business decision-makers began demanding that large models not only lead technically but also immediately prove their worth at the consumer level.

LatePost reported that Alibaba Cloud started using metrics like DAU (daily active users), typically applied to consumer-facing apps, to directly evaluate foundational model R&D teams. This is akin to holding aircraft engine engineers responsible for how many tickets are sold.

In China's consumer-facing large model application market, Qwen's presence remains relatively weak compared with competitors that benefit from heavy traffic support and strong operations. Faced with this, management's urgency to pivot toward closed commercialization and the application layer ultimately came down as an axe on the underlying R&D team.

Accompanying this strategic shift was a restructuring of management authority.

Sources say the new management architecture may have bypassed Lin, directly assuming team control, with new leaders more oriented toward reinforcement learning and commercialization.

When a geek team driven by frontier tech exploration is suddenly placed under a bureaucratic management system focused on traffic and monetization, friction, or even rupture, becomes inevitable. "Internal politics" and "management interference" emerged as frequently cited causes in the resignation fallout.

Thus, in open-source community discussions, a widespread concern is spreading: Without this cohort of pure technical stewards, could the Qwen 3 series become Tongyi Qianwen's swan song in the open-source world for a long time?

From a business logic standpoint, Alibaba's decision is not unfathomable. No company can indefinitely foot the bill for massive computational power that generates no direct economic returns; pushing to close the commercial loop is inevitable.

However, carrying out so abrupt a team overhaul at the peak of performance, just as the ecosystem has built up strong barriers to entry, may entail incalculable hidden costs.

The prosperity of open-source large models never relies solely on computational power stacking; it heavily depends on the core team's technical taste, open-source faith, and deep understanding of community ecology. One researcher even posted on WeChat: "Qwen is nothing without its people."

The shock of stripping away a team's leading figures and core members recalls Sam Altman's forced departure from OpenAI years ago. While contexts, scales, and outcomes may differ, both reveal the fragile yet tense relationship between capital, management, and tech believers amid the AI wave.

Where Lin and the departing core members will go next remains unknown. But in an era of acute scarcity of computational power and talent, if they stay together as an intact team, joining any open-source-friendly star startup would allow them to keep leaving their mark on the global AI landscape.

As for Qwen, whether this upheaval represents the growing pains of commercialization or a loss of core competitiveness, only time will tell.

03

Balancing Acts and Choices for Tech Giants

Lin's resignation is not an isolated case. Globally, many tech giants have experienced similar growing pains: the inherent mismatch between the urgent need for commercialization and the long-term nature of frontier tech research.

Looking back at Silicon Valley, Meta's large model journey paid a heavy price for this mismatch.

To accelerate AI commercialization, Meta took an extremely aggressive approach in 2025, luring engineering teams with exorbitant salaries to speed up monetization. However, this short-term maneuver directly disrupted the original pure research ecosystem, causing many core scientists and researchers who led early LLaMA development to leave over ideological clashes.

This hasty move threw Meta's internal R&D rhythm into chaos and incurred high talent attrition costs, while the market still takes a dim view of LLaMA 5's capabilities.

On the flip side stands Google.

Setting aside its past sluggishness in consumer products, Google remains AI's reigning "king" in terms of technical foundation strength.

This unshakable technical confidence stems from its long-standing reverence for and protection of basic research. Google's AI researchers enjoy tremendous exploration freedom, unburdened by short-term commercialization metrics.

Only such a pure and focused research atmosphere could nurture technological cornerstones and outstanding products like the Transformer architecture and Gemini 2/3 series that reshaped global AI progress.

The large model race is a marathon without a finish line. Commercialization is the lifeblood of corporate survival, but basic research determines a model's ceiling.

Alibaba may have lost its youngest P10, and its commercialization metrics may get a temporary boost in exchange. But without those starry-eyed purists working on the underlying technology, will Qwen's future path mirror that of ERNIE Bot?

- END -