Fudging Strategies for Bosses in the AI Era

04/15 2026

The OpenClaw craze has set off a chain reaction.

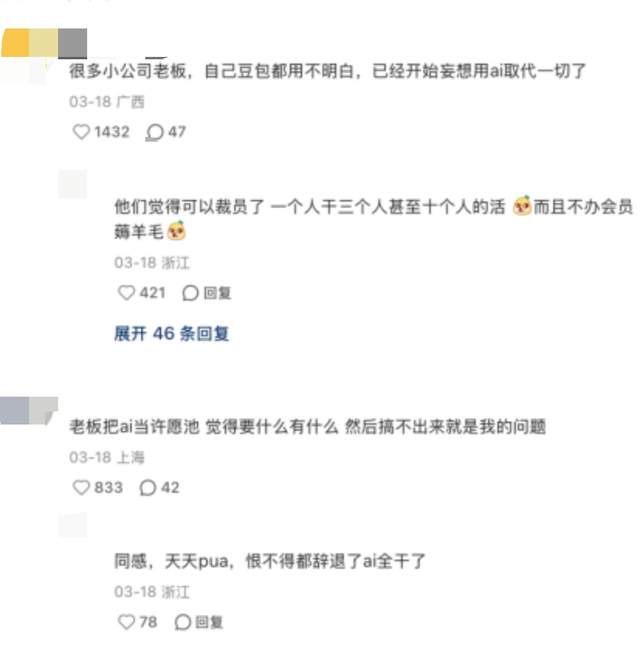

Overnight, countless bosses seem possessed by 'lobster anxiety' (Lobster being OpenClaw's popular nickname). Their WeChat Moments are flooded with claims like 'AI agents will replace 50% of jobs,' while industry groups buzz with warnings that 'companies not using OpenClaw will die within three years.' Panic spreads like the flu, and the first symptoms appear among tech-illiterate SME owners.

These bosses can't make sense of large models, fumble with APIs, and even need their subordinates' help to register accounts. Yet none of this stops them from demanding one thing: that their employees learn it.

Thus a grand 'lobster farming campaign' unfolds across workplaces. Employees are required to generate ten plans a day with Lobster, analyze competitor data with Lobster, and optimize customer service scripts with Lobster. Bosses can't articulate the practical value, but they demand visible usage. When asked for specifics, they deflect with 'Just start using it first.'

Employees know the reality: fragmented company data, manual processes, and lack of standardization make business integration impossible. But voicing this makes them appear resistant to new technology and 'out of touch.'

Hence a new discipline has emerged: teaching people to navigate between boss anxiety and workplace reality with minimal effort. Call it 'Fudging Strategies in the AI Era.'

The AI boom has created subtle workplace contradictions.

Some employees spend two weeks researching mainstream tools and drafting detailed implementation plans, only to be met with the boss's indifference. Meanwhile, others casually screenshot obscure tools and are praised as 'cutting-edge.'

Such scenarios repeat endlessly. Employees who try to reason with their bosses keep hitting walls. Explaining that a tool doesn't fit the business is treated as an attitude problem. Suggesting that the data foundation be built before implementing AI gets labeled 'resistance to technology.' Reminding bosses that flashy external success stories rely on dedicated teams and budgets gets dismissed as 'making excuses.'

Gradually, workers realize: boss anxiety has distorted evaluation criteria. Under this twisted standard, 'appearing diligent' matters more than 'actual effectiveness,' and 'seeming to follow trends' trumps 'genuine implementation.'

Thus fudging becomes mandatory. Workers consciously refine their tactics through daily experience, and three dominant strategies have taken shape in today's AI workplace:

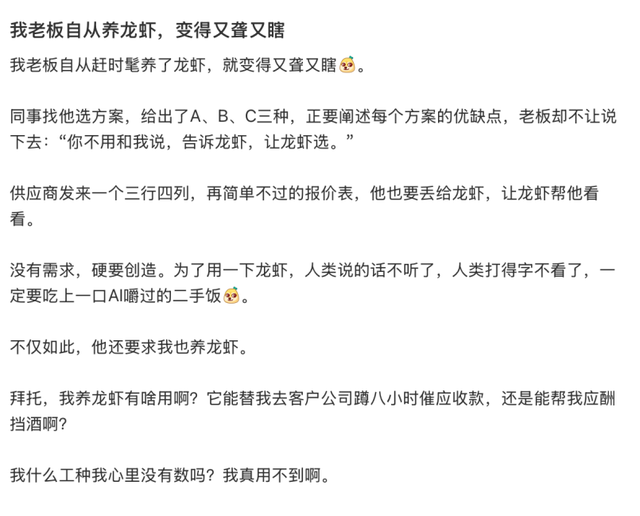

First Strategy: The Lobster Mouthpiece.

A netizen drafted a marketing plan that their boss called 'too conservative.' The revised version was deemed 'not innovative enough.' For the third attempt, they pasted the boss's requirements verbatim into Lobster and had it generate a plan.

Without changing a word, they reported: 'Boss, this is Lobster's suggestion.'

After review, the boss nodded: 'This approach works. Let's use it.'

Advanced version: let Lobster say what you dare not. Want to reduce your workload, push back on an unreasonable deadline, or explain why a project is infeasible? A direct request will likely be rejected.

But reframe it as 'Boss, Lobster's analysis suggests that current resource allocation makes this target unrealistic; it recommends adjusting priorities,' and bosses accept it, treating Lobster as an objective, rational, infallible authority.

The beauty lies not in changing the boss's opinion but in making them believe 'the idea came from Lobster.'

Second Strategy: Niche is Righteousness.

This counterintuitive tactic proves most effective.

While AI research logically favors mainstream tools, fudging strategies dictate the opposite: obscure, English-only tools impress bosses most.

A participant in a major firm's AI competition revealed: 'Using well-known tools makes you look lazy. But presenting something they've never heard of implies deep research, digging through foreign sources, and comparative analysis. What the tool actually does becomes irrelevant.'

Smart workers therefore seek out tools that require environment configuration, command-line operation, proxy setup, and a VPN to get past the firewall. Complexity breeds professional credibility.

When PPTs display terminal window screenshots with black backgrounds and green text, bosses think: 'I don't understand, but I'm impressed.' They dare not ask questions that would reveal their ignorance.

Thus, they nod and say: 'Good work. Proceed.'

This strategy offers bonus protection: the complexity of obscure tools keeps bosses from testing them personally, so your fudging stays safely concealed.

Third Strategy: Interface Fudging.

The lowest-cost, fastest-acting tactic of the three, this is Fudging 101.

Employees need not research tools, understand the technology, or produce anything of value. Simply open a chat with an AI tool, type in questions like 'How can we improve marketing conversion?', and screenshot the multi-round conversation to simulate 'deep engagement.'

Paste the screenshot into a PPT under a title like 'Business Optimization Exploration Based on XX Intelligent Agent.'

Veterans add 'reverse fudging': intentionally leave minor errors in AI-generated plans, then point them out during reports: 'Boss, I noticed this small issue and will refine it later.'

Bosses invariably respond: 'Good catch. Your attention to detail is commendable.'

This achieves a double fudge: it demonstrates that you use AI tools while proving that your judgment is superior to Lobster's. Bosses get exactly what they want: subordinates who use new tools without being replaceable by them.

However, fudging carries the risk of exposure. Some bosses suddenly demand a live demonstration of the AI in use.

Employees understand the tools' limitations better than their bosses do. The 'omnipotent AI agents' in the PPTs often fail to deliver any business value.

When the AI produces nonsense, employees must avoid bluntly declaring 'You're wrong' or 'This doesn't work.' That would imply 'the system you made me use is worthless,' and the consequences would be dire.

Thus, employees need subtle ways to convey 'This tool has barriers' while saving face. The principle: 'Never let bosses feel ignorant. Make them think they just haven't studied it deeply yet.'

Typical phrasing: 'Boss, this tool runs automatically in the background now.'

Bosses usually nod: 'Keep researching,' then leave satisfied. They receive plausible explanations and save face; employees avoid trouble and keep jobs. Everyone wins.

This discussion raises a fundamental question: Why do employees expend such effort fudging? Why not tell bosses directly, 'This doesn't suit us'?

Because in the AI era, boss anxiety supersedes business focus. Employees' tasks shift from problem-solving to anxiety alleviation.

Boss anxiety stems from three layers:

First Layer: Information overload creates loss of control.

AI development outpaces ordinary people's ability to keep up. New papers appear daily, with open-source implementations following the next day. A product launches one day and faces three competitors within a week. Bosses scrolling through WeChat and industry news fear missing an era with every blink. They can't grasp the technical details or tell GPT-5.4 from Claude 3.1, but they know one thing clearly: they are losing their grasp of AI and their control over it. This loss of control breeds panic, and panic fuels blind action: 'Use it first, worry about effectiveness later.'

Second Layer: Social pressure fuels comparison.

Bosses belong to various industry associations and chamber-of-commerce groups where 'AI adoption' has become the new social currency. Zhang's company has implemented AI customer service, Li's factory has adopted AI quality inspection, Wang's team uses Copilot for coding. At banquets, on golf courses, and in private board meetings, the conversation always turns to 'How's your AI implementation going?' Bosses without a compelling answer feel inferior. Not using AI signals backwardness, conservatism, and being out of touch. This social pressure causes more anxiety than any business challenge.

Third Layer: Fear of obsolescence.

Traditional industry bosses have watched too many giants fall (Nokia, Kodak, BlackBerry), all victims of missed technological shifts. They fear being next. They are unsure what AI will disrupt, when, or to what extent, but they know that if they do nothing, they won't even get a chance to fight back when disruption arrives. At its deepest level, this fear drives their blind pursuit of AI.

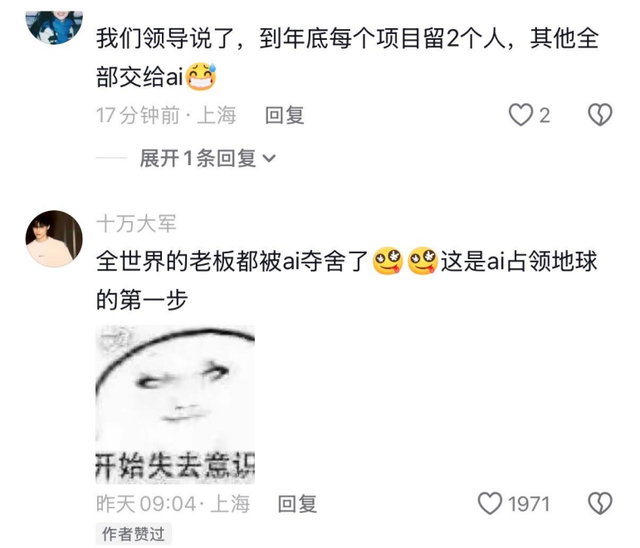

These anxieties form an inescapable cycle: anxious bosses demand AI implementation; employees fail to deliver results, which deepens the bosses' anxiety; and even more anxious bosses demand even more AI.

Trapped in this loop, employees' only escape is fudging.

Only through fudging can they balance 'meeting the boss's superficial demands' with 'preserving time for genuine work.'

But does this fudging occur only between bosses and employees?

If you think fudging happens only between employees and bosses, you're underestimating its scope.

Look one level up and you'll find a systemic, top-down collective performance.

Take OpenClaw's popularity: did major firms creating Lobster agent variants genuinely pursue AI?

Not entirely.

After Lobster's explosion, capital markets demanded stories. Firms without 'Lobster variants' faced stock drops, investor queries, and media reports declaring them 'laggards.' Thus, regardless of technical foundation or application scenarios, firms rushed out products.

Insufficient model capability? No problem: just rebrand. Take an open-source core, modify the interface, rename it, add some templates, and voilà: a 'self-developed Lobster agent' is born. Press conferences proceed, press releases go out, and stock prices rise.

More interestingly, corporate fudging ends up supplying the tools for employees' fudging.

Those 'niche tool' screenshots employees show Boss Zhang might actually be running on the APIs of major firms' rebranded models. Half-baked products made to fudge the capital markets pass through layers of packaging and retelling before appearing in Zhang's PPTs as evidence of keeping up with the trend.

Then, looking further, we see the boss deceiving the outside world.

Employees use lobster variants to deceive their bosses, submitting screenshots of these products and technical jargon to get by. The boss, armed with the PPTs created by employees, proclaims to the outside world, 'We are already fully applying AI technology,' speaking eloquently at industry summits and filling annual reports with claims of 'breakthrough progress in digital transformation.' Suppliers believe it, clients believe it, and the media believes it too. Only those inside the company know that the so-called 'AI middleware platform' holds little real value for actual business operations.

At this point, the full outline of the deception chain comes into view: big companies deceive the capital market by launching disguised Lobster variants; employees deceive their bosses with product screenshots and technical jargon; bosses deceive themselves and the outside world by proclaiming 'full application of AI technology.' Every link in the chain is performing, and every participant vaguely knows they are part of a performance, yet all tacitly choose not to expose it.

Because exposing it benefits no one. Big companies need stories to maintain their market value, bosses need stories to alleviate anxiety, employees need deception to keep their jobs, and the capital market needs stories to support expectations. Everyone on this chain is both a participant in and a beneficiary of the deception.

How long will this deception continue? No one knows. But everyone knows that tomorrow, it will go on.