Embodied AI Takes Off, Starting with a Crash Course in 'Perception'

04/23 2026

Author | Xiang Xin

A consensus in the embodied AI industry is that this year will mark the first year of mass production and deployment of humanoid robots.

As their scale expands, the environments robots face are also changing: structured scenarios in laboratories are gradually giving way to more open real-world settings.

Once robots enter real environments, the importance of the perception layer, a capability that has long been underestimated, quickly becomes apparent.

The robot's execution loop is "perception-decision-action." If perception falters, the decisions and actions that follow cannot be executed effectively.

The recent humanoid robot half-marathon serves as an example of an open scenario—prolonged outdoor running, changing lighting conditions, and uneven terrain exposed instability issues in the perception systems of many robots.

A humanoid robot suddenly turns and runs into the crowd as it nears the finish line.

A sufficiently stable and accurate perception system is a prerequisite for robots to work reliably in open environments.

As a result, the core components of the perception layer, which previously had a low profile, are being re-evaluated. The perception layer has become one of the key bottlenecks for robot deployment.

Following this logic, robot perception capabilities can be roughly divided into three layers: environmental perception, body state perception, and interaction and operation perception.

Seeing the World—Environmental Perception Sensors

Environmental perception is the first hurdle for robots entering real-world scenarios. It determines whether a robot can recognize objects, understand spaces, judge distances, and thus complete navigation, obstacle avoidance, and grasping localization.

At this layer, core hardware mainly includes two categories:

First, visual sensors, including RGB cameras, stereo cameras, depth cameras, and fisheye cameras, which primarily provide image, texture, and some depth information;

Second, spatial perception sensors, mainly LiDAR, which provide stable distance and spatial structure information.

Orbbec Gemini 330 Series Stereo 3D Camera

However, in real-world environments, collecting image information does not mean the robot can truly understand its surroundings.

For example, in environments with complex lighting, dynamic pedestrian flows, and alternating indoor-outdoor settings, images captured by RGB cameras are prone to distortion. Under backlit conditions, target areas may become dim and indistinguishable; strong reflections can weaken object edges and contour information; at night, image quality further declines.

Continuous movement of people and objects in the environment also increases recognition and localization difficulties, with visual systems prone to issues such as target loss and distance judgment errors.

Beyond the challenges of stability under complex lighting and dynamic scene understanding, there are two additional difficulties at the environmental perception layer.

One is the high demand for hand-eye coordination. Observations from visual and LiDAR sensors are subject to dynamic distortion, perspective jumps, and motion blur as the robot's limbs move, leading to instantaneous errors in target positioning and depth measurement.

The relative poses of the hand, eye, and object require real-time matching with sub-centimeter or even higher precision. Even slight deviations can result in grasping errors, collisions, or tracking loss.

The other is the high pressure on computing power and latency.

Both visual and LiDAR sensors generate large volumes of data. Cameras continuously output image streams, while LiDAR continuously outputs point clouds. Robots typically need to operate multiple sensors simultaneously, such as multi-camera setups, depth cameras, fisheye cameras, and LiDAR.

As a result, the volume of data collected at the frontend is enormous. At the same time, algorithms for multi-source information fusion, 3D mapping, target detection and tracking, and dynamic obstacle segmentation are highly complex, placing significant demands on edge computing power.
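To give a rough sense of the data volumes involved, the sketch below estimates uncompressed stream rates for a small sensor suite. Every resolution, frame rate, and point rate here is a hypothetical value chosen for illustration, not a figure from any vendor mentioned in this article.

```python
def camera_rate_mb_s(width, height, bytes_per_pixel, fps):
    """Uncompressed image stream rate in megabytes per second."""
    return width * height * bytes_per_pixel * fps / 1e6


def lidar_rate_mb_s(points_per_second, bytes_per_point):
    """Point cloud stream rate in megabytes per second."""
    return points_per_second * bytes_per_point / 1e6


# Hypothetical sensor suite; none of these figures come from a datasheet.
rgb = camera_rate_mb_s(1280, 720, 3, 30)    # one 720p RGB camera at 30 fps
depth = camera_rate_mb_s(1280, 720, 2, 30)  # one 16-bit depth stream at 30 fps
lidar = lidar_rate_mb_s(300_000, 16)        # 300k points/s, 16 bytes per point

# Two RGB cameras, one depth camera, one LiDAR.
total = 2 * rgb + depth + lidar

print(f"RGB camera:   {rgb:.1f} MB/s each")
print(f"Depth camera: {depth:.1f} MB/s")
print(f"LiDAR:        {lidar:.1f} MB/s")
print(f"Total:        {total:.1f} MB/s before any compression")
```

Even this modest assumed configuration produces a raw stream of a couple of hundred megabytes per second, and all of it must be fused and interpreted on the robot's onboard computer.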

Latency is the second pressure. If environmental information is not processed quickly enough, delays of even a few hundred milliseconds, compounded as data passes from layer to layer, can leave the system lagging behind real-time changes.

This can lead to deviations in path judgment, slower obstacle avoidance reactions, inaccurate grasping positions, and further affect the stability of the robot's overall movements.
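The effect of accumulated delay can be made concrete with a little arithmetic. The sketch below sums per-stage latencies and converts the total into how far a moving obstacle travels before the robot reacts. The stage names, their durations, and the 3 m/s closing speed are all assumptions made up for this example.

```python
def total_latency_ms(stage_latencies_ms):
    """End-to-end delay when stages run one after another."""
    return sum(stage_latencies_ms.values())


def stale_distance_m(relative_speed_m_s, latency_ms):
    """Distance an object moves while the system is still processing old data."""
    return relative_speed_m_s * latency_ms / 1000.0


# Hypothetical pipeline stages and durations (milliseconds).
stages = {
    "sensor_capture": 33,
    "fusion": 40,
    "detection": 60,
    "planning": 50,
    "control": 20,
}

latency = total_latency_ms(stages)
# Assumed closing speed between robot and obstacle: 3 m/s.
drift = stale_distance_m(3.0, latency)

print(f"End-to-end latency: {latency} ms")
print(f"Obstacle has moved about {drift:.2f} m by the time the robot acts")
```

Under these assumed numbers, roughly 200 ms of delay means the world model is already about 0.6 m out of date when the command reaches the motors, which is more than enough to cause a missed grasp or a late avoidance maneuver.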

Therefore, after robots enter real-world scenarios, environmental perception components require a significant upgrade—from basic visual capture to precise recognition, stable tracking, and spatial understanding capabilities.

Focusing on these issues, the industry's current priorities lie in two areas: depth perception and spatial understanding.

Depth perception enables robots to obtain not just target recognition but also distance, contour, and spatial hierarchy information.

Spatial understanding, building on this, involves forming a more complete judgment of scene structure, obstacle distribution, and the relationships between target objects and their surroundings.
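As one illustration of how distance can be recovered from images, the sketch below applies the standard pinhole stereo relation, depth = focal length × baseline / disparity. This is the textbook formula for a rectified stereo pair, not a description of any specific product's algorithm, and the focal length, baseline, and disparity values are hypothetical.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth in meters for a rectified stereo pair (pinhole model)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Hypothetical camera: 640 px focal length, 5 cm baseline.
FOCAL_PX = 640.0
BASELINE_M = 0.05

z_true = depth_from_disparity(FOCAL_PX, BASELINE_M, 16.0)  # correct match
z_off = depth_from_disparity(FOCAL_PX, BASELINE_M, 15.0)   # match off by 1 px

print(f"Depth at 16 px disparity: {z_true:.3f} m")
print(f"Depth at 15 px disparity: {z_off:.3f} m")
print(f"Error from a single-pixel mismatch: {z_off - z_true:.3f} m")
```

With these assumed parameters, a one-pixel matching error at about 2 m shifts the depth estimate by more than 10 cm, which helps explain why motion blur and poor lighting translate so directly into positioning errors.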

Along this path, the industry has developed two approaches:

Upgrading from 2D image understanding to 3D spatial understanding;

Evolving toward multi-sensor fusion: advancing from single-vision solutions to fused approaches combining vision + LiDAR, etc.

In this process, a group of representative companies has already begun deploying along different routes.

Orbbec focuses on depth vision capabilities. Its Gemini 330 series stereo 3D camera is equipped with the MX6800 depth engine chip, developed in-house for robotics scenarios. Combining active and passive imaging technologies, it can output relatively stable 3D data under widely varying lighting conditions, such as darkness and bright light.

Hesai's approach leans more toward spatial data acquisition and scene reconstruction. Its spatial intelligence AI hardware product, Kosmo, integrates customized LiDAR, multiple cameras, spatial perception algorithms, and AIGC capabilities into a compact device, capable of reconstructing the physical 3D world into corresponding digital 3D scenes.

RoboSense is advancing in the direction of multi-sensor fusion and system simplification. Its Active Camera, positioned as the "eyes of the robot," integrates three core perception modalities—depth, color, and pose—at the chip level, achieving millisecond-level spatiotemporal synchronization.

Compared to traditional multi-sensor stacking solutions, this approach reduces system complexity and helps improve consistency and response efficiency in perception results.
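To see why synchronization matters in a stacked multi-sensor setup, consider how software typically has to align independent streams after the fact. The sketch below is a generic nearest-timestamp matcher, offered only as an assumption about what such alignment might look like; it is not RoboSense's chip-level method, and the function names, sample rates, and 500 µs tolerance are hypothetical.

```python
from bisect import bisect_left


def nearest_index(sorted_ts, t):
    """Index of the timestamp in sorted_ts closest to t (sorted_ts non-empty)."""
    i = bisect_left(sorted_ts, t)
    if i == 0:
        return 0
    if i == len(sorted_ts):
        return len(sorted_ts) - 1
    before, after = sorted_ts[i - 1], sorted_ts[i]
    return i if (after - t) < (t - before) else i - 1


def pair_frames(camera_ts, imu_ts, max_skew):
    """Pair each camera frame with the closest IMU sample within max_skew."""
    if not imu_ts:
        return []
    pairs = []
    for cam_idx, t in enumerate(camera_ts):
        imu_idx = nearest_index(imu_ts, t)
        if abs(imu_ts[imu_idx] - t) <= max_skew:
            pairs.append((cam_idx, imu_idx))
    return pairs


# Timestamps in integer microseconds to avoid floating-point comparisons.
camera_ts_us = [0, 33_333, 66_667]          # hypothetical camera at about 30 fps
imu_ts_us = list(range(0, 100_000, 1_000))  # hypothetical IMU at 1000 Hz

pairs = pair_frames(camera_ts_us, imu_ts_us, max_skew=500)
print(pairs)
```

Frames whose closest sample falls outside the tolerance are simply dropped, so loosely synchronized hardware forces a trade-off between discarding data and fusing mismatched measurements. Synchronizing at the hardware level sidesteps that trade-off.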

While the priorities of various vendors differ, their goals align:

To enable robots to achieve sufficiently stable and precise spatial understanding capabilities in complex, ever-changing real-world scenarios.

Perceiving Oneself—Body State Perception Sensors

Basic environmental perception alone is not enough. For robots to maintain balance and exert precise force during movement, they also need another set of "internal senses"—to perceive themselves.

Humanoid robots are highly dynamic systems. When walking, turning, moving up or down slopes, experiencing disturbances, or landing their feet, they need real-time awareness of their own posture, speed, and force changes to maintain balance, control exertion, and execute more stable actions.

The core devices supporting this capability can be mainly divided into two categories:

One is inertial sensors, represented by IMUs, which act as the "cerebellum" and vestibular system of embodied AI robots, primarily measuring angular velocity and linear acceleration to support pose estimation and dynamic balance.

The other is torque and force sensors, including joint torque sensors, six-axis force sensors, and foot force sensors, responsible for perceiving force changes at joints, wrists, soles, and other locations.
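A common textbook way to turn angular velocity and linear acceleration into a pose estimate is a complementary filter, which blends the integrated gyroscope rate (smooth but drifting) with the tilt implied by gravity in the accelerometer reading (noisy but drift-free). The sketch below is a minimal one-axis version under stated assumptions: the axis convention, the 0.98 blend factor, the gyro bias, and the stationary test scenario are all hypothetical, and production IMU modules use more sophisticated estimators.

```python
import math


def complementary_filter(pitch_prev, gyro_rate, ax, az, dt, alpha=0.98):
    """One update step: blend integrated gyro rate with accelerometer tilt."""
    gyro_pitch = pitch_prev + gyro_rate * dt  # short-term, drifts with bias
    accel_pitch = math.atan2(ax, az)          # long-term, assumes gravity only
    return alpha * gyro_pitch + (1.0 - alpha) * accel_pitch


# Hypothetical scenario: robot standing still, tilted 0.1 rad.
G = 9.81
TRUE_PITCH = 0.1
GYRO_BIAS = 0.01          # rad/s of constant gyro error (assumed)
DT = 0.001                # 1000 Hz sample period
STEPS = 10_000            # 10 seconds of data

ax = G * math.sin(TRUE_PITCH)
az = G * math.cos(TRUE_PITCH)

fused = TRUE_PITCH
gyro_only = TRUE_PITCH
for _ in range(STEPS):
    fused = complementary_filter(fused, GYRO_BIAS, ax, az, DT)
    gyro_only += GYRO_BIAS * DT  # pure integration accumulates the bias

print(f"True pitch:      {TRUE_PITCH:.4f} rad")
print(f"Gyro only:       {gyro_only:.4f} rad")
print(f"Complementary:   {fused:.4f} rad")
```

In this assumed scenario, pure gyro integration drifts by about 0.1 rad over ten seconds, while the blended estimate stays within a fraction of a milliradian of the true tilt. The same structure breaks down when the body accelerates hard, which is one reason high-dynamic motion is so demanding for these sensors.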

The difficulties at the body state perception layer mainly concentrate on three points.

First, there are high demands for response speed and stability.

If body state perception is delayed, subsequent control can easily lag, disrupting the rhythm of movements. Meanwhile, during the execution of high-dynamic actions, vibrations, impacts, rapid turns, and landing feedback can amplify errors, affecting the entire control chain.

Second, consistency becomes a higher requirement during mass production.

A prototype that functions smoothly does not guarantee that mass-produced units will maintain the same stable performance over long periods. Once robots enter mass production, sensor consistency and reliability become even more critical.

Third, there are concurrent pressures for miniaturization, integration, and cost reduction.

Six-axis force and torque sensors often need to be installed in space-constrained locations such as wrists, gripper ends, or even dexterous hands. They must be small enough while maintaining measurement accuracy, structural strength, and system compatibility.

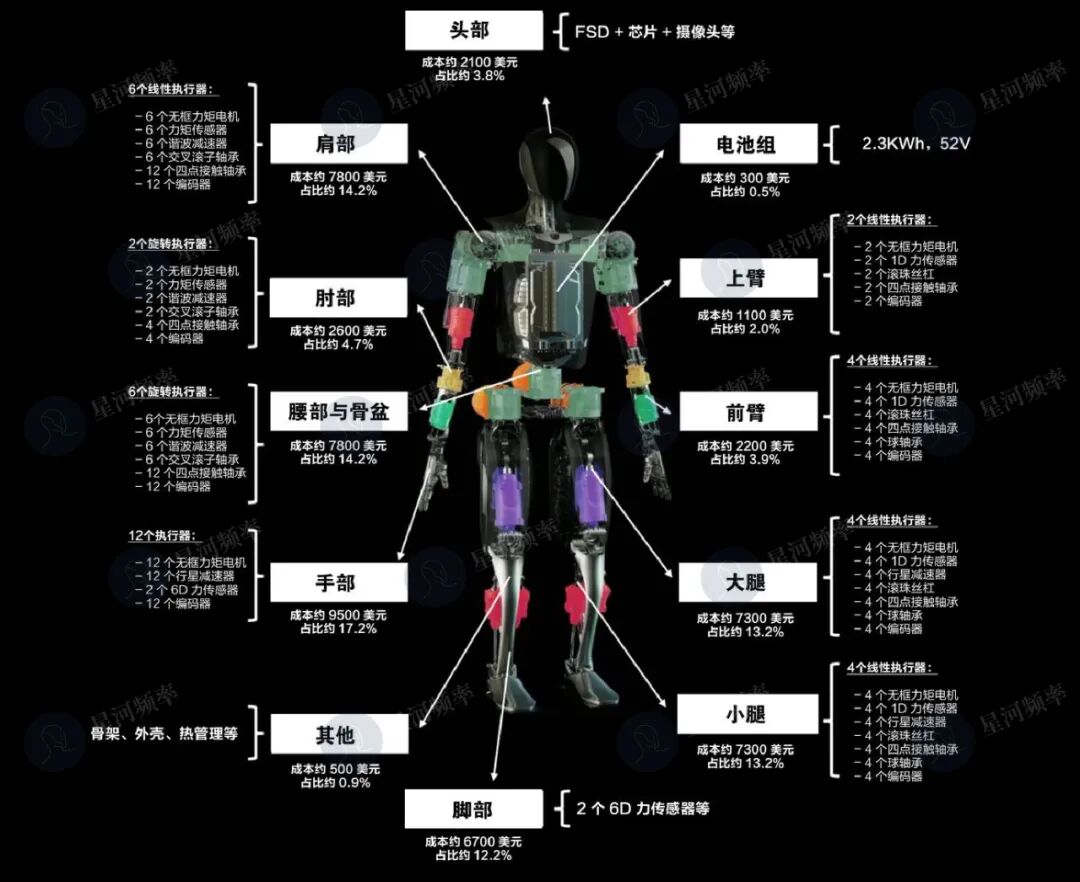

Moreover, the cost of such devices has remained high. For example, the two six-axis force sensors used in Tesla's robot feet cost $6,700.

Therefore, sensor miniaturization, high dynamic adaptability, and mass production consistency are current focal points for industry breakthroughs.

Within this field, two representative types of players have emerged.

One category consists of players that moved over from the intelligent driving sector and bring deep experience in automotive systems, represented by Dawn Technology.

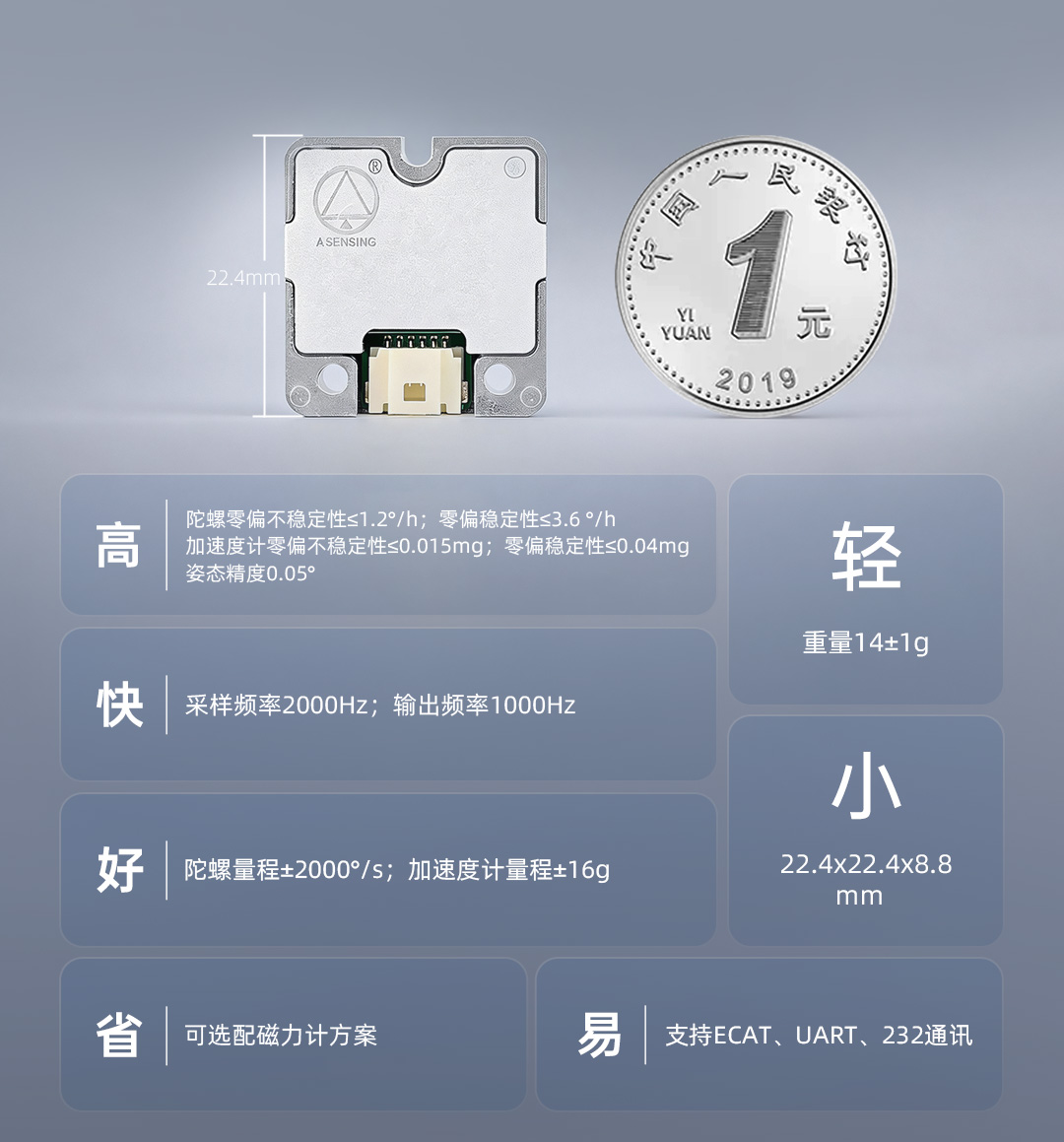

Dawn Technology has launched the automotive-grade IMU module IMU5146, which has been delivered to Galaxy General.

This IMU module achieves an attitude measurement accuracy of 0.05°, with an output frequency of 1000Hz and extremely low latency, enabling real-time capture of the robot's slight tilts and shakes and helping avoid imbalance caused by response lag.

More importantly, Dawn has brought automotive-grade reliability, consistency, and mass production capabilities to the robot's self-perception layer.

Its products support wide-temperature operation from -40°C to 105°C and can withstand extreme impacts of 2000g, meeting the perception demands of humanoid robots during high-intensity dynamics such as jumping and rolling.

Additionally, Dawn possesses full-stack capabilities spanning underlying chips, algorithmic software, module systems, and precision manufacturing, allowing it to define product logic from the chip level upward and offering high flexibility.

Based on this scalable, highly reliable, and cost-effective spatiotemporal intelligence solution, Dawn has expanded rapidly from automotive into robotics, construction machinery, renewable energy systems, and other fields.

The other category consists of professional force sensor manufacturers, represented by Kunwei Technology and Xin Jingcheng.

Kunwei Technology has launched the HRS humanoid series, designed specifically for the wrists and ankles of humanoid robots. With a minimum thickness of just 10 millimeters and repeatability better than 0.1%FS, it has been supplied in bulk to leading companies such as UBTECH, Zhiyuan, and Galaxy General.

Xin Jingcheng focuses on MEMS six-axis force sensors, having completed prototype verification and forming small-batch orders. It is establishing automated production lines covering three key areas—fingertips, wrists, and ankles—to build capabilities for subsequent large-scale supply.
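Specifications quoted in "%FS" are relative to the sensor's full-scale range, so the same percentage means different absolute errors on different sensors. The short sketch below converts a %FS figure into force units. The 500 N and 50 N ranges are hypothetical examples, not the rated ranges of any product named above.

```python
def percent_fs_to_abs(full_scale, percent_fs):
    """Absolute error bound implied by a percent-of-full-scale specification."""
    return full_scale * percent_fs / 100.0


# Hypothetical full-scale ranges in newtons.
ankle_bound = percent_fs_to_abs(500.0, 0.1)  # assumed 500 N ankle sensor
finger_bound = percent_fs_to_abs(50.0, 0.1)  # assumed 50 N fingertip sensor

print(f"0.1%FS on a 500 N sensor: within {ankle_bound:.2f} N")
print(f"0.1%FS on a 50 N sensor:  within {finger_bound:.3f} N")
```

Reading the figure this way shows why a sensor sized for ankle loads cannot simply be reused at the fingertip: the same relative repeatability leaves an absolute uncertainty ten times larger under these assumed ranges.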

Touching the World—Interaction and Operation Perception Sensors

With visual and body perception, robots can walk, avoid obstacles, and stand steadily. But to truly perform tasks such as plugging in connectors, picking up an egg, or folding soft clothing, they still lack one critical capability, the one closest to human skin: tactile perception.

Many high-value tasks in embodied AI involve fine manipulation—picking up, placing, plugging in, assembling, or grasping flexible objects—all of which rely on delicate tactile feedback.

Although tactile perception sensors are far less mature than environmental and self-perception sensors, they are likely to become a differentiator for dexterous operations in the next stage.

Common tactile sensors at this stage mainly include electronic skin, fingertip tactile sensors, array-based pressure sensors, and vision-based tactile sensors.

These are distributed on robot hands, grippers, and end-effectors, performing tasks such as contact detection, pressure perception, material recognition, and deformation judgment.

This field faces numerous challenges.

First, the tactile field currently lacks sufficiently mature products and data standards.

As one founder of an embodied AI company noted, there are currently no mature, scalable tactile sensor products on the market. Definitions and collection methods vary widely across different products and solutions, making data reuse difficult.

Second, durability remains a practical issue. Haptic sensors operate under continuous contact, friction, and compression, demanding high longevity and stability.

Moreover, the integration of haptic sensors is extremely challenging. Fingers and end-effectors have limited space, requiring sensors to be thin while maintaining sensitivity and stability.

Simultaneously, algorithm integration is difficult. The coordination between haptic signals, vision, and motion control remains complex, with ongoing exploration in algorithm fusion.

Lastly, cost remains a significant issue. Haptics has not yet reached the stage of large-scale, low-cost adoption like vision technology.

Therefore, in the realm of haptics, many companies are still addressing challenges related to durability, cost, and data.

Pasini Sensing focuses its strategy on two ends: one is the sensor products themselves, and the other is the data ecosystem built around haptics.

At the product level, Pasini has introduced the multi-dimensional haptic sensor PX-6AX-GEN3, capable of outputting six-dimensional force, force distribution, material properties, temperature, resilience, and other types of haptic information. It is abrasion- and puncture-resistant, with an industrial-grade lifespan of 10 million cycles, and delivers highly consistent haptic output across temperatures from 0 to 50°C.

Pasini is also constructing a full-modal data acquisition factory. In addition to the world's largest full-modal super data acquisition factory, Super EID Factory, which opened in Tianjin in April 2025, it plans to build four more super data acquisition factories in Suqian, Jiangsu; Wuhan, Hubei; Zigong, Sichuan; and Ganzhou, Jiangxi. It is also collaborating with cloud providers to advance a large-scale embodied intelligence data cloud marketplace.

Tactile Technology takes a more foundational approach, focusing on chip-level integration and perceptual capabilities.

Its in-house analog-digital hybrid AI tactile chip supports high-precision three-dimensional force perception and can identify certain material properties and near-contact characteristics.

Daimon Robotics, on the other hand, emphasizes the construction of haptic datasets. In collaboration with multiple academic institutions and enterprises, it released Daimon-Infinity, the world's largest full-modal embodied dataset of the physical world incorporating haptics.

Daimon claims that Daimon-Infinity provides the highest-quality haptic data in the industry.

The Daimon-Infinity dataset relies on Daimon's self-developed two-finger gripper and five-finger glove data acquisition devices. Equipped with 110,000 sensing units and 120Hz high-frequency visuotactile sensors, along with fisheye cameras, encoders, IMUs, and binocular cameras, these devices capture comprehensive information for the dataset, including haptics, vision, motion trajectories, executed actions, and voice text.

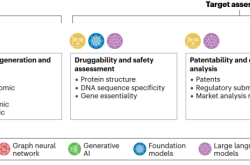

Overall, the advancement of robotic perceptual capabilities corresponds to three levels of competitive focus:

Vision-based environmental perception sensors serve as the gateway, enabling robots to see and understand their surroundings.

Force-based proprioceptive sensors represent the current bottleneck, determining whether robots can maintain stability, exert force, and interact safely in dynamic environments.

Haptics will be the breakthrough in the next stage, truly distinguishing "mobile robots" from "capable robots."

Whether on marathon courses, factory production lines, or in warehousing, sorting, and home services, the large-scale deployment of humanoid robots begins with perceptual systems.

The quality of information collected at the perceptual layer propagates through subsequent decision-making and action execution.

Robotic application scenarios are expanding from a handful of prototype validations and single-environment deployments to more complex real-world settings, which demand longer continuous operation and more frequent, larger-scale deployment.

Therefore, whether the entire perceptual chain can form stable, replicable, and mass-producible industrial capabilities will increasingly influence the speed at which robots enter real-world scenarios.

Breakthroughs at this level may be the key to determining the pace of robot industrialization.