AI Video Generation: A Whirlwind of Change in 90 Days

05/07 2026

Three months of rapid advancement in model capabilities and commercialization.

Over the past 90-plus days, the AI video generation sector has experienced intense competition and rapid change.

"Our product versions are updated daily—sometimes one in the morning and another in the afternoon," Liang Wei, co-founder of AI video creation platform MovieFlow, told Shuzhi Qianxian. Since the beginning of the year, their iteration speed has been extremely rapid. Shortly after launching an invite-only beta version, five or six more versions are already queued up for release.

This sense of urgency is not an isolated case.

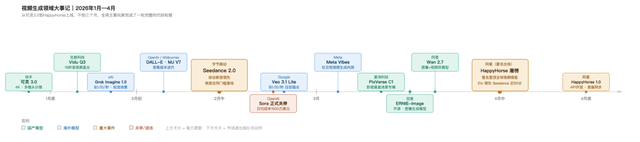

Foundational model providers are no exception. Seedance 2.0 set a new industry standard early in the year; OpenAI, the sector's trailblazer, shut down Sora; Kling AI disclosed ARR exceeding $300 million at Kuaishou's March earnings call; and the anonymous dark horse HappyHorse 1.0 topped the Artificial Analysis video arena rankings in early April before Alibaba claimed ownership. Over the past three months, the sector has seen intense upheaval in both model capabilities and commercialization.

As foundational models update weekly, the mid- and downstream segments of the multimodal generation supply chain have felt the ripple effects.

On one hand, advancements in foundational model capabilities are rapidly raising the floor for creators. Rapid multi-team collaboration, previously hindered by team limitations, is now becoming feasible, accelerating the arrival of industrialized workflows in AI filmmaking and other scenarios.

On the other hand, mid- and downstream application service providers are also accelerating their pace in this competitive race, with faster product launches and rapidly evolving industry conversion pathways. For example, the AI short drama sector is undergoing rapid reshuffling, with some companies exiting as soon as they enter. Downstream product users are also keenly tracking the latest model and product capabilities. One user told Shuzhi Qianxian that once they confirm superior performance and better pricing for a specific scenario, they "won't hesitate to switch overnight."

01

A Hundred Days of Dominance

Over the past three months, the multimodal generation sector has witnessed dramatic changes.

Early in the year, Seedance 2.0 made a splash, with its evaluation videos going viral. Many industry insiders hailed it as a groundbreaking product.

"This marks the first time in human history that AI video could potentially fully replace traditional human filmmaking workflows. AI has truly achieved automated integration of traditional processes like editing and post-production special effects in the early to mid-stages," said Jiang Lai, a Hollywood special effects veteran and current special effects director at an AI film and television company, on a podcast. Seedance 2.0 will significantly accelerate the industrialization of AI filmmaking.

Zhang Jin, a partner at CreatOK, an AI tool provider for e-commerce viral videos, also tested several real-world cases with Seedance 2.0 within a week of its release and was impressed by its SOTA (state-of-the-art) capabilities. Zhang told Shuzhi Qianxian, "Its realism is on par with Sora2, representing a qualitative improvement over the previous Seedance 1.5. Moreover, based on reference image/video demos, it can accurately reference character appearances and video actions to generate specific fight scenes, something other models couldn't do at the time."

However, the emergence of an industry leader does not mean the war is over. Iteration in this sector has accelerated at an unprecedented pace this year, with leadership turning over almost weekly.

From the late January rollouts of Kling 3.0 and Vidu Q3 to xAI Grok Imagine's low-cost entry into the streaming scene in early February, followed by Seedance 2.0's game-changing performance resetting industry expectations in mid-February—all this happened in less than four weeks.

In March, Google Veo 3.1 Lite and Grok simultaneously slashed overseas API pricing to $0.05 per second, while Sora officially shut down the same month. In April, Alibaba made a series of moves: Wan 2.7's rollout, HappyHorse's anonymous chart-topping debut followed by identity revelation, and the gray-launch of open APIs at month's end. Meanwhile, Aishi Technology released its film and television-specific model PixVerse C1, and Baidu open-sourced ERNIE-Image. In less than three months, nearly all major global players took action, completing a full generational turnover.

Capabilities introduced by leading models quickly became industry standards amid rapid iteration. Lip-syncing is one example: it debuted in Kling 2.6 late last year, was followed by Vidu Q3 and Kling 3.0 in January, peaked with Seedance 2.0's "native joint generation" in February, and gained an open-source option with HappyHorse in April. In less than six months, the feature went from a "differentiated selling point" to an industry standard.

Additionally, multi-reference mode, once Vidu's secret weapon for locking in consistency in film and television content production, was soon integrated into the latest versions by leading models like Seedance.

Sun Weizhe, head of enterprise services at Aishi Technology, described foundational model progress and rapid iteration as "each leading for a month or two" during an industry summit speech in March. Sun noted that rapid industry iteration is the norm in this field, stating, "The industry has been iterating quickly over the past two years, with time windows closing after just a month or two."

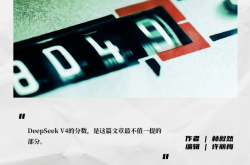

Coinciding with rapid foundational model iteration is an unprecedented commercialization challenge: OpenAI officially announced Sora's shutdown in late March. Although Sora was the pioneer of the video generation sector and a global benchmark for AI video products, Sora2 had reportedly seen its user retention rate fall to nearly zero 60 days after launch. Overseas media also reported Sora's daily inference costs at around $15 million, against total lifecycle revenue of only about $2.1 million.

Currently, benchmarks for large model deployment are in scenarios like code generation, with Anthropic's Claude Code exceeding $2.5 billion in ARR early this year. Some analysts suggest that one reason for OpenAI shutting down Sora was to focus on enterprise-grade products with more prominent revenue conversion, such as code generation.

With Sora shut down, and with computational costs in multimodal generation tens or even hundreds of times higher than for text-based large models, achieving better commercialization and application is a question every provider must answer. Sora's failure revealed how hard standalone consumer-grade video products are to sustain at current computational costs, forcing other providers to accelerate the construction of closed-loop ecosystems.

Overseas, Google announced at the Marketing Live conference in late March that it would officially integrate its generative video model Veo into Google Ads' Asset Studio. Among domestic providers, ByteDance first conducted internal testing in products like Jianying (CapCut's Chinese version) before integrating the Seedance model into the international version of CapCut. ByteDance adopted a dual enterprise (B-end) and consumer (C-end) strategy with tiered pricing plans, and it has adjusted and raised C-end model invocation prices several times as demand surged.

AI video startups are also prioritizing closer integration with industry and scenario-specific needs this year. Aishi Technology's Sun Weizhe mentioned that they began emphasizing deployment last year and accelerated scene and demand alignment this year, focusing on enterprise services as a key direction. They launched the C-series proprietary model for the film and television industry while deeply collaborating with content platforms like Mango TV and Zhangyue.

These moves signal that ecosystem advantages and closed loops linking content, traffic, and monetization are becoming increasingly critical in multimodal generation scenarios. An IDC report also pointed out that the era of showcasing technical prowess is over, with practicality, controllability, and deployability becoming the core competitiveness. Domestic model providers have shed their restless benchmarking anxiety, pushing the global sector into a more ruthless "self-developed elimination race."

02

Draw Rate Drops Tenfold, Prices Rise Nearly Fivefold

Accompanying rapid iteration in the foundational model sector and accelerated commercialization is rapid change in the mid- and downstream application ecosystem.

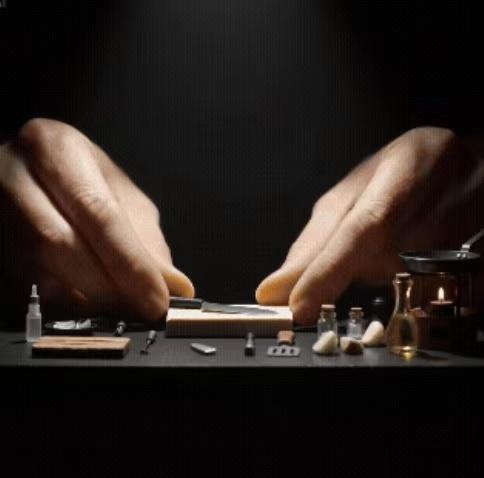

On one hand, with rapid model capability improvements, the industrialization process in film and television production scenarios has accelerated significantly, transitioning from "opening blind boxes" to "industrialized precision creation," which will broaden AI video application scenarios.

AI filmmaking creator Jiang Lai mentioned that previously, "rapid multi-team collaboration was complex and difficult, with inconsistent production quality and efficiency easily dragged down by individual limitations." However, with foundational model capability improvements, models like Seedance 2.0 now offer automatic storyboarding, lip-syncing, and multi-shot narrative capabilities. "The model's shot language already surpasses the quality of average storyboard artists," Jiang said.

This enables standardized collaboration among multiple teams centered around dynamic storyboarding, greatly accelerating the industrialization of AI filmmaking. While the AI video field was previously a competition among self-media creators, professional film and television production teams will enter the fray this year. Jiang believes that 2026 will be the year when professional AI practitioners widen the skill gap with individual creators.

Yang Chenliang, founder of Yishen Film and Television, told Shuzhi Qianxian that Seedance 2.0 has significantly reduced the draw rate, transforming film and television production scenarios from "opening blind boxes" to precision creation. "After Seedance 2.0's release, the operational model of the entire AI short drama supply chain has changed," Yang said.

Previously, insufficient model capabilities required a high draw rate to achieve desired scenes, with ratios as poor as 1:20 at their worst. This necessitated a large number of draw specialists for short drama or comic drama production. Under this mechanism, some production companies even collaborated with universities, using interns to reduce labor costs for drawing.

With substantial model capability improvements, the draw ratio has improved to around 1:2, so one or two draws are often enough to get the desired scene and visuals. This has made dedicated draw specialists unnecessary, but the person responsible for generation now needs to be an executive director with directorial thinking and a command of shot composition and visual aesthetics.

"The draw rate has dropped significantly, but the cost per invocation has increased. For example, Seedance 2.0 costs around 1 yuan per second for 720P quality—about five times more expensive than before. The Waste rate (Chinese term meaning "waste rate") has decreased tenfold, with significantly improved usability," Yang said. However, Yang also believes that despite continuous tool iteration, film and television production still requires assets, storyboarding, etc. Regardless of tool iteration speed, the professional film and television production capabilities of personnel ultimately determine production quality.

"From this year to next, AI-generated scenes will account for about 50% of traditional film and television," observed MovieFlow co-founder Liang Wei. He noted that foundational model capability improvements are not unique to Seedance—the rapid global evolution of multimodal models will likely bring significant changes to film and television production methods. Previously, the process from script handover to shooting took months, but now a film can be fully pre-visualized with AI in just five days before determining which parts to shoot for real.

The same logic applies to e-commerce advertising scenarios. Advances in multimodal foundational model capabilities have enabled complex instruction control for long-duration, free-form digital humans. This year, e-commerce digital humans are evolving from "standing and reading scripts" to "holding products and changing outfits in real-time." An e-commerce live-streaming digital human service provider previously told Shuzhi Qianxian that industry estimates suggest the live-streaming digital human market will double in size by 2026 compared to last year.

On the other hand, rapid foundational model iteration and expanded content supply capabilities have attracted a more diverse range of entrants, leading to a dramatic increase in model invocation volumes and content production capacity. However, demand has not grown proportionally, making downstream commercial monetization fiercely competitive.

Tencent Video's Sun Zhonghuai previously mentioned that in the AI era, content supply will grow tenfold or even a hundredfold, but viewer attention will not increase proportionally.

DataEye data shows that 60,946 comic dramas were released in 2025, with only 96 surpassing 100 million views—a hit rate of just 0.16%. By February 2026, the number of ongoing comic dramas had surged to 120,000, with monthly volume doubling last year's annual total, but fewer than 150 works surpassed 100 million views, further dropping the hit rate to 0.12%.
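The hit rates above follow directly from the quoted counts. A minimal sketch of the arithmetic, using only the figures in the paragraph (the function name is hypothetical, and because the 2026 figure is stated as "fewer than 150", the second value is an upper bound rather than an exact rate):

```python
def hit_rate_percent(hits: int, total: int) -> float:
    """Share of titles that passed 100 million views, as a percentage."""
    return hits / total * 100


# 2025: 96 hits out of 60,946 comic dramas released.
rate_2025 = hit_rate_percent(96, 60_946)

# February 2026: fewer than 150 hits out of roughly 120,000 ongoing titles,
# so 150 gives an upper bound on the rate.
upper_bound_2026 = hit_rate_percent(150, 120_000)

print(f"2025 hit rate: {rate_2025:.2f}%")
print(f"2026 hit rate upper bound: {upper_bound_2026:.3f}%")
```

The 2025 figure rounds to the 0.16% cited, and the 2026 bound of about 0.125% is consistent with the reported 0.12%.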

This has produced a survival squeeze in the AI video sector over the past few months: token invocation volumes are climbing, generation queues are long, and invocation prices keep rising, yet AI comic drama revenue-sharing ratios are declining and over 90% of AI short drama companies are operating at a loss.

The entire sector is advancing rapidly, with upstream and downstream supply chains quickly aligning. "The industry is iterating so fast that you can't even establish a model in such a short time. By the time you have a preliminary familiar model, the technology has already iterated, and the model needs to change again," Yang said.

03

Blowing Up Balloons in Cracks and an Expanding Market

AI video creation applications have also rapidly adjusted and adapted to market changes over the past few months.

Sora's exit prompted some application providers to quickly adjust their product introductions and positioning. Shuzhi Qianxian observed that some vendors previously promoted Sora2 workflows to serve downstream e-commerce users but swiftly revised their product introduction pages after Sora2's discontinuation, emphasizing product capabilities and achieved effects rather than foundational models.

Rapid foundational model capability improvements are also encroaching on the model-harnessing capabilities that once belonged to the application layer, requiring AI video applications to quickly adjust their business workflows.

CreatOK's Zhang Jin explained that previously, insufficient model consistency and low storyboard comprehension meant complex sequences could only be generated fragment by fragment using keyframes, then combined. Over the past few months, most scenes can now be generated at once, prompting them to abandon their previous stitching workflow and delegate more of the generation process to models. "This is good—it helps us avoid investing in things that will inevitably be overshadowed by models as early as possible," Zhang said.

Under rapid market evolution, application providers are also accelerating their product update speeds and frequencies. MovieFlow's recently launched Studio version is a high-end product line for professional creators, offering fine control over film and television industrialization workflows and supporting full-link operations from pre-visualization and storyboard planning to multi-shot narratives. Liang told Shuzhi Qianxian that while the Studio version is currently in invite-only beta testing, five or six more versions are already queued up for release. "We're updating daily—sometimes one version in the morning and another in the afternoon. Our website now pushes updates every day," Liang said.

This iteration speed has become a defining characteristic of large model application entrepreneurship today. Liang believes that while only a small fraction of society currently perceives AI capabilities, the speed at which the technological singularity is approaching is unprecedentedly rapid, imposing immense pressure on entrepreneurs to keep pace cognitively and operationally. "You have to withstand this immense rhythm and pressure," Liang said.

Observers have also noted that foundational model providers are making significant moves in the AI application space to build closed-loop ecosystems, potentially squeezing the survival space of mid-tier application providers. For example, ByteDance's Jimeng, Jianying, and Doubao (recently launched paid membership version) all integrate Seedance 2.0's core video generation capabilities, while Alibaba's Qianwen integrates Wan and HappyHorse capabilities.

This tests how application platforms can build differentiated products and services between market demands and foundational model capabilities.

However, Liang Wei believes that no matter how much large models continue to advance, there is still a gap between reaching real-world users and becoming a product that users can truly use. This gap may narrow over time and with improvements in model capabilities, but it will always exist.

"We're like blowing up a balloon in a crack. The challenge is how quickly we can build that balloon," he used a metaphor, suggesting that application developers need to find a balance between technology and the market and then act quickly.

Zhang Jin also admitted that the value of solely focusing on generation will gradually diminish. Models can generate everything, but what users always want are results delivered in specific scenarios. In the future, model capabilities will be equalized, and the competition will be about who can better harness the model and apply it in the right places. "What we need to do and accumulate is a deeper understanding of user scenarios, more accumulation of public and user data, making it easier for models to obtain high-quality user context, while leaving the generation capabilities entirely to the models."

Application service providers do not see only pressure and challenges; there is also a vast market space that is opening up.

Although that gap is narrowing as models advance, the room for growth across the whole ecosystem of multimodal generative models and application development continues to expand. The application market for multimodal models is only beginning to open up, and many practitioners believe the application layer is far from the point of cutthroat saturation.

For example, Liang Wei sees that multimodal capabilities have the potential to become a new artistic medium, creating new ways of content consumption. He believes that by the third quarter of this year, video multimodal models will completely solve performance issues, making AI-generated character performances indistinguishable from those of real humans. With multimodal capabilities in place, in addition to enabling pre-visualization in film and television production, significantly enhancing the industrialization level of filmmaking, many stories that never had the chance to be presented may also be brought to the public based on multimodal capabilities, opening up new market spaces.

"Comic dramas may only represent 1% of the applications of multimodal generation capabilities; the potential of the other 99% has not truly been demonstrated yet," Liang Wei mentioned. In addition to rapid product development since the second quarter of this year, they are also increasing their market promotion and marketing efforts.

Whether it's mass-market scenarios, corporate video production needs, or advertising production scenarios, the penetration of multimodal model capabilities has only just begun. "The Nolans, Zhang Yimous, and Guo Fans of the AI video era have not yet emerged," Liang Wei said.