Just Now! Anthropic Establishes TAI Institute to Address AI Threats: The AI Version of 'Don't Be Evil' Arrives?

05/08 2026

This is far more important than a powerful model.

Last night, AI upstart Anthropic (hereafter 'A Corp') did not release a new Claude model. Instead, it launched something that looks distinctly 'boring': The Anthropic Institute (TAI for short).

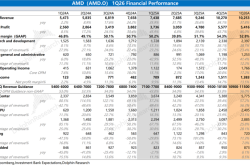

Compared with Harness Engineering, the hot topic of 2026, TAI takes on grander challenges. According to Anthropic's published research agenda (anthropic-institute-agenda), TAI focuses on four key areas: economic diffusion, threats and resilience, real-world AI systems, and AI-driven research and development. TAI has also issued a global open call, recruiting researchers to work on these problems together.

(Image source: Official X@Anthropic)

In other words, A Corp has established an internal organization primarily focused on studying how humans coexist with AI:

How will AI impact employment and the economy?

What new security risks will it bring?

Will human behavior and judgment change after real-world AI use?

When AI begins assisting in developing even stronger AI, how should this acceleration process be understood and constrained?

Many readers may perceive this as just another routine move by an AI company. However, Leitech believes this could be A Corp's most noteworthy action in recent times. TAI's positive impact on the AI industry and humanity is akin to Google's 'Don't Be Evil' mantra for the internet industry. That's why Leitech AGI says this 'release' is no less significant than a major model upgrade.

AI's Profound Impact on the Economy: Not Just Job Security for Workers

TAI's primary research direction is Economic Diffusion.

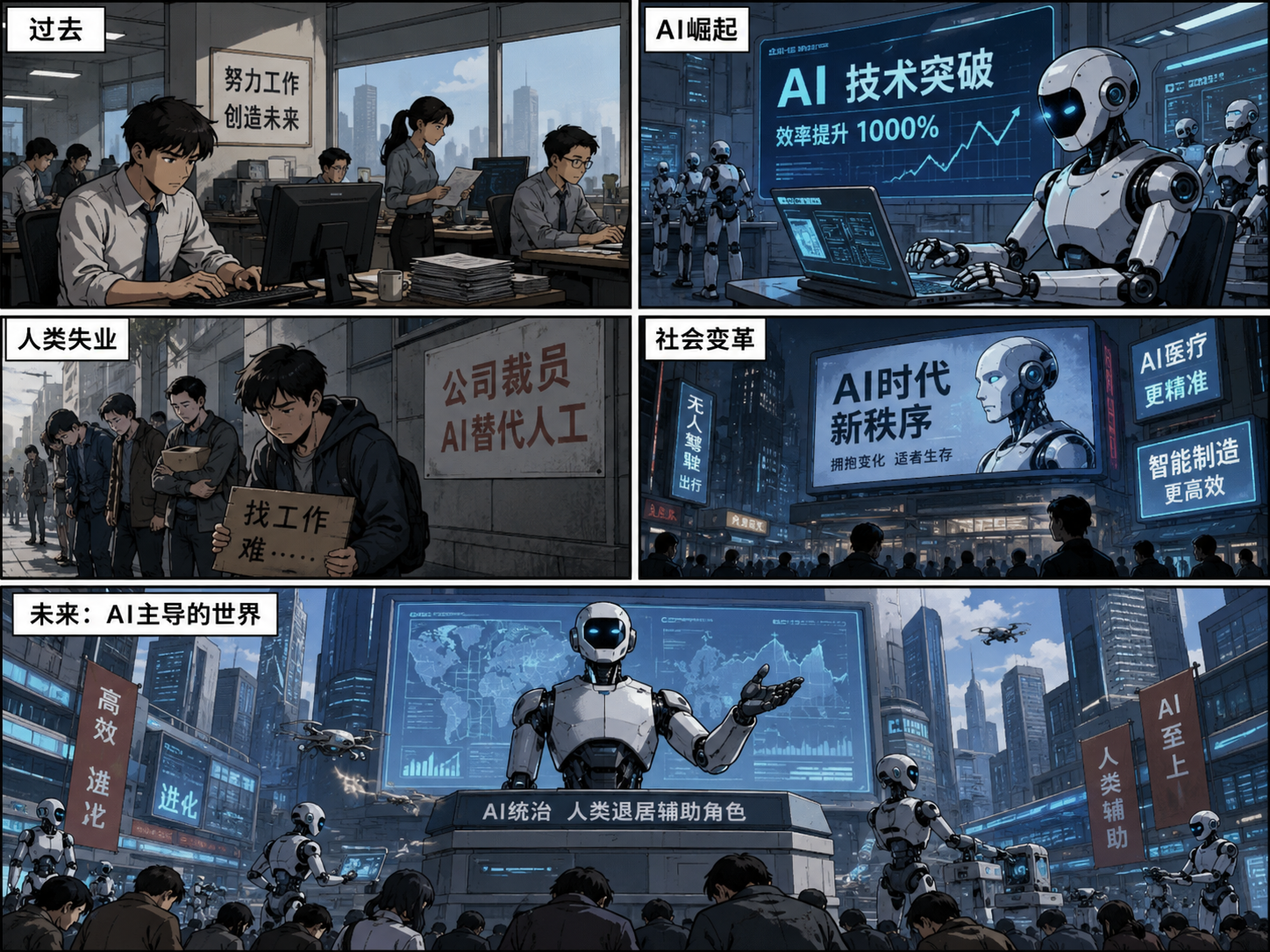

The first three industrial revolutions, whether driven by the spinning jenny, the roaring steam engine, or later electricity and assembly lines, essentially replaced cheap, repetitive manual labor. The fourth industrial revolution, sparked by AI, is vastly different: it reaches directly into the intellectual work humans take the most pride in.

The core contradiction TAI points out is this: as tools upgrade, workers' situations worsen.

In its agenda, TAI asks: if three people using large models can do the work that once took 300, what happens to the company?

Designers can use AI to instantly handle the most tedious layers and materials, while programmers can use AI for Vibe Coding... Suppose AI lets a worker finish tasks five times faster. That won't compress the human workweek from 40 hours (or even 996) to 8 hours. Instead, the same worker may be expected to deliver five times as much work.
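The arithmetic behind this scenario can be sketched in a few lines of Python. This is an illustration only: the function names are hypothetical, and the assumption that output scales linearly with time saved is ours, not TAI's. It shows that shrinking a 40-hour week to 8 hours corresponds to a fivefold speedup (80% less time per task), whereas a 75% time saving would correspond to a 10-hour week, or four times the output at unchanged hours.

```python
def speedup_from_time_saved(time_saved: float) -> float:
    """Convert a fractional time saving (0.8 = 80% less time per task)
    into a speedup factor. Assumes output scales linearly with time."""
    if not 0 <= time_saved < 1:
        raise ValueError("time_saved must be in [0, 1)")
    return 1 / (1 - time_saved)


def hours_needed(baseline_hours: float, speedup: float) -> float:
    """Hours needed for the same output when work goes `speedup` times faster."""
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return baseline_hours / speedup


if __name__ == "__main__":
    # Illustrative inputs: a 40-hour week and two candidate time savings.
    for saved in (0.75, 0.80):
        s = speedup_from_time_saved(saved)
        print(
            f"{saved:.0%} time saved: {s:.1f}x speedup, "
            f"{hours_needed(40, s):.1f} h for the same output, "
            f"or {s:.1f}x the output in 40 h"
        )
```

The article's point is which of the two outcomes materializes: the same output in fewer hours, or a multiple of the output in the same hours.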

TAI is concerned with the new logic that 'with AI, your workload must multiply.' To quantify this, TAI relies on a dedicated dataset: the Anthropic Economic Index. A Corp states it won't just publish obscure academic papers but intends to extract real data to show clearly: In which industries is AI quietly replacing human jobs? Will newcomers be 'eliminated' right out of the gate?

(Image source: AI-generated)

Moreover, TAI looks at this impact in real-world terms. Large models are insatiable 'money pits': every time we use AI to generate text, images, or video, or even to answer a simple query, it burns a large number of tokens. Underneath lie computing power, chips, storage, and electricity, and beneath those, carbon emissions and capital. Resources are always finite. When society pours vast resources into AI, other industries will inevitably feel the squeeze.

In 2026, the most noticeable effect is that AI-induced memory and storage shortages have directly led to widespread price hikes in consumer electronics. Even smartphone manufacturers have been forced to scale back new-model launches. At the same time, every smartphone manufacturer hopes to use AI to reshape product logic and extend product lifecycles, and OpenAI's native AI smartphone is also on the agenda. While everyone benefits from AI, more and more industries are being profoundly affected by it, for better or worse.

TAI aims to use the 'economic index' to turn AI's economic impact from abstract impressions into data models: Only by clarifying the problem can we solve it.

The Ultimate Crisis: Humans Are 'Outsourcing' Their Brains

If job loss is a slow torture, then AI's transformation of human thinking is direct harm.

The internet will suffer first. You may notice that today's internet is becoming a 'shit mountain.' Searching for travel guides used to yield many posts about avoiding pitfalls. Now, it's full of AI-generated, beautifully formatted but utterly fabricated nonsense.

Worse still, AI has lowered the barrier to entry for gray industries: swapping faces to spread malicious rumors, cloning loved ones' voices for telecom fraud. Scammers can destroy ordinary lives by burning a few tokens.

TAI also highlights a deeper crisis: AI is unknowingly making humans dumber.

In one case, a Chinese user who came across an unfamiliar mushroom in the wild took a photo and asked AI, 'Can I eat this?' AI confidently identified a highly toxic mushroom as an 'edible, delicious morel.' In another incident, a child asked AI about a mousetrap. AI described it as a 'square, metal-structured abandoned go-kart toy.' The curious child touched it and got a finger caught.

These stories sound like dark humor, but they reveal a phenomenon: AI's defining trait isn't intelligence but 'mysterious confidence.' AI can never be 100% accurate. Google Gemini's latest model achieves about 91% factual accuracy, which is already top-tier. Yet many users, without noticing, stop thinking for themselves and habitually 'outsource' all decision-making to a string of code.
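To see why roughly 91% accuracy is a weak basis for habitually delegating decisions, consider how errors compound over many questions. The following is a minimal sketch, assuming each answer is independently correct with the same probability (a simplification, since real errors are likely correlated). The 0.91 figure is the one quoted above; the function name is hypothetical.

```python
def prob_all_correct(accuracy: float, n_answers: int) -> float:
    """Probability that n answers are ALL correct, assuming each answer is
    independently correct with probability `accuracy` (a simplification)."""
    if not 0 <= accuracy <= 1:
        raise ValueError("accuracy must be in [0, 1]")
    if n_answers < 0:
        raise ValueError("n_answers must be non-negative")
    return accuracy ** n_answers


if __name__ == "__main__":
    # 0.91 is the accuracy figure cited in the article.
    for n in (1, 5, 10, 20):
        print(f"{n:>2} answers: {prob_all_correct(0.91, n):.1%} chance all are correct")
```

Under this assumption, ten delegated decisions in a row are all correct only about 39% of the time, which is why a single confident answer about a mushroom or a mousetrap should not be taken on faith.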

TAI poses a thought-provoking question: When a significant portion of society seeks advice from just two or three large models, how badly will human thinking patterns and problem-solving methods be 'homogenized'? You think you're using AI tools to boost productivity and cognitive levels, but in reality, you're 'outsourcing your brain.' In other words, if everyone starts relying on AI, humanity risks losing the ability to think autonomously, reducing all brains to copies of a single mold.

AI's Dual-Use Capabilities: How to Prevent an Intelligence Explosion?

TAI introduces a new concept: Dual-Use Capabilities. The official explanation is that if an AI model becomes more capable in biology, it can not only develop new drugs but also create extremely lethal biological weapons. If an AI excels at writing code, it's not just a good programmer but also a hacker capable of easily infiltrating national networks.

(Image source: Official Anthropic)

When such 'dual-use' monsters are integrated at scale into self-driving car brains, factory robotic arms, or even security systems and drone swarms, what disasters could ensue? On a phone, AI can simply say, 'Sorry, I made a mistake.' In the physical world, a one-second recognition error means a real safety accident.

Not to mention large models iterate every few weeks, while humans take 'years' to amend regulations, laws, or insurance policies. This gap creates a defenseless 'naked period.' When AI-induced disasters strike, today's society lacks the 'resilience' to bear them.

To address this, TAI established the Frontier Red Team. Their task is simple to state yet hard to pin down: attack and bait their own AI agents every day to gauge their real-world destructive potential. The goal is to build a line of defense before society's outdated systems collapse entirely.

Previously, human programmers set the pace of AI's evolution. But now, advanced large models can read papers and write code independently. Soon, they might develop newer large models themselves. As this self-improvement loop accelerates, technological evolution will outpace human cognition.

(Image source: AI-generated)

To prepare for this impending singularity, TAI proposes a new concept: Fire Drill Scenarios for intelligence explosions.

Simply put, TAI plans to conduct war games with top lab executives and governments: They must test humanity's ability to hit the brakes before an 'intelligence explosion' truly occurs.

Developing While Governing: A Corp Hits the Brakes Seriously

At a time when the entire industry is sprinting blindly, Anthropic's move to establish TAI is indeed admirable.

Neighboring OpenAI dominates headlines not for technological breakthroughs but for executive infighting and lawsuits with Musk. Many AI companies, despite poor performance, manipulate rankings and seek funding, absorbing social capital through inflated valuations. While the industry discusses TAI's topics, most AI giants adopt a 'develop first, worry later' attitude. Amid this frenetic atmosphere, A Corp slams the brakes, exposing these dirty secrets openly, signaling a new AI ethos: develop while governing.

A Corp isn't acting out of charity or saintliness but playing a shrewd commercial game. Today's powerful investors and governments are terrified of AI mishaps: when they buy a model, they worry less about performance fluctuations than about catastrophic failures. A Corp's TAI positions it as a 'rational' player, earning user trust and global confidence.

(Image source: AI-generated)

TAI's article concludes by stating that all its research findings and early warnings will directly feed into Anthropic's core institution—the Long-Term Benefit Trust (LTBT). LTBT's mission is to monitor the company's commercial decisions, ensuring every Anthropic move serves humanity's long-term interests rather than short-term financial gains.

This mirrors Google's iconic 'Don't Be Evil' mantra: Through TAI, A Corp tells the world that while peers race blindly, they're not just keeping pace but studying how to stop.

Expecting tech giants to self-regulate is absurd. But in an era where everyone floors the gas pedal blindfolded, having a leading player voluntarily establish TAI—investing in economic indices, simulating intelligence explosions, and studying brain degradation—is itself noteworthy. That's why Leitech argued at the outset that TAI's release matters more than A Corp launching a new model.

Appendix: TAI's Official Agenda, Translated by Google Gemini

At The Anthropic Institute (TAI), we leverage insights from cutting-edge labs to study AI's global impact and share our findings publicly. Here, we outline the questions driving our research agenda.

Our research focuses on four key areas:

Economic Diffusion

Threats and Resilience

Real-World AI Systems

AI-Driven Research and Development

In 'Core Views on AI Safety,' we argue that effective safety research requires close engagement with frontier AI systems. The same logic applies to studying AI's security, economic, and social impacts.

At Anthropic, we've already witnessed foundational changes in software engineering and internal economic structures. Our systems face new threats, and we see early signs of AI accelerating its own R&D. To maximize AI's benefits, we aim to share this information broadly. We're studying how these dynamics affect the external world and how the public can help guide these transformations.

At TAI, we examine AI's real-world impact from a frontier lab perspective and publish our findings to help external organizations, governments, and the public make informed AI development decisions.

We will share research findings, data, and tools to make it easier for individual researchers and institutions to pursue these lines of inquiry. Specifically, we will share:

More granular, higher-frequency information from the Anthropic Economic Index, to understand AI's impact on and use in the labor market. We aim for it to serve as an early warning signal for major changes and disruptions.

Research into which areas of society most need investment to improve their resilience against the new security risks posed by AI.

A more detailed account of how Anthropic is using new AI tools to accelerate its work, and of the implications of potential recursive self-improvement in AI systems.

TAI will influence decision-making at Anthropic. This may manifest in the company sharing data it otherwise wouldn’t (e.g., economic indices) or releasing technology in different ways (e.g., cyber threat analyses that inform programs like Project Glasswing).

We anticipate that the research conducted by TAI will increasingly serve as a critical reference for Anthropic’s Long-Term Benefit Trust (LTBT). The LTBT’s mission is to ensure Anthropic continuously optimizes its actions for the long-term benefit of humanity. We developed this research agenda in collaboration with the LTBT and employees from various departments at Anthropic.

This is a dynamic agenda, not a static one. We will refine these questions as evidence accumulates and expect new questions—not yet covered today—to emerge. We welcome feedback on this agenda and will revise it based on what we learn through discussion.

If you’re interested in helping us answer these questions, we encourage you to apply to become an Anthropic Researcher. The Researcher Program is a four-month opportunity, mentored by members of the TAI team, to study one or more of these questions. You can learn more and apply for the next cohort here.

Our Research Agenda:

Last updated: May 7, 2026

Economic Diffusion

Understanding how the deployment of increasingly powerful AI systems transforms the economy is critical. We also need to develop the economic data and forecasting capabilities necessary to choose AI deployment approaches that benefit the public.

To address the questions in this pillar of our research, we will refine data in the Anthropic Economic Index. We will also explore other methods to improve our models of how powerful AI impacts society, whether through job displacement, unprecedented economic growth, or other effects.

AI Adoption and Diffusion

Who adopts AI? AI research and development is concentrated in a few companies across a handful of countries, but its deployment is global. What determines whether a country, region, or city gains access to AI? If they gain access, how do they extract economic value from it? What policies or business models could effectively alter this dynamic? How might free or open-weight models contribute to this?

AI adoption at the firm level: Why do firms adopt AI, and what are the consequences? How does AI alter the scale at which firms or teams can achieve maximum efficiency? How concentrated is AI adoption across firms? How do changes in AI adoption concentration translate into profit margins and labor shares? How would industrial organization change if a 3-person team or company could now do what once took 300? Or if firms could more easily centralize knowledge and doing so offered scale efficiencies, would we see larger, more sprawling firms with greater incentives to systematically monitor workers?

Is AI a general-purpose technology? Does AI follow the pattern of past “general-purpose technologies,” which diffuse fastest in lucrative commercial applications and slowest where social returns exceed private returns? Are there policies or decisions that could alter this trend?

Productivity and Economic Growth

Productivity growth: What will be AI’s impact on the pace of innovation and productivity growth across the economy?

Sharing gains: What pre-distribution or redistribution mechanisms could effectively spread the gains from AI development and deployment more broadly?

Market transaction costs: How will AI affect transaction systems and costs in markets? When does having an agent negotiate on your behalf enhance market efficiency and equitable outcomes? When does it not?

Broad Labor Market Impacts

AI and employment: How will AI alter employment across sectors of the economy? As AI automates existing parts of the economy, what new tasks and jobs might emerge? How will these shifts differ across regions and countries? Our Anthropic Economic Index Survey will provide monthly insights into how people perceive AI’s impact on their jobs and their expectations for the future. We will also update the Economic Index to share higher-frequency, more granular data.

Can the pace of AI diffusion be modulated? Central banks use “levers” like policy rates and forward guidance to curb inflation. Could AI companies (in coordination with governments, at the industry level) employ similar levers to control the pace of AI diffusion sector by sector? Would doing so yield significant public benefits?

The Future of Work and Workplaces

Worker perspectives on work: How do workers across industries perceive occupational change? How much agency do they have over these changes? Can “worker power” be preserved or transformed?

Professional development pipelines: Many industries rely on entry-level roles (e.g., paralegals, junior analysts, and associate developers) to train future senior practitioners. If AI displaces the work through which expertise was historically accumulated, how will people become experts in the first place? What does this imply for a field’s long-term stock of senior talent?

Future-ready learning: What should people learn today to prepare for tomorrow? What will future occupations look like? How will AI transform how we learn and develop expertise?

The role of paid work: If AI significantly reduces the centrality of paid work in human life, under what conditions will people redirect their time and energy to other sources of meaning? What can we learn from historical or contemporary groups for whom work was scarce or optional? How should society navigate this transition?

Threats and Resilience

AI systems often advance multiple capabilities simultaneously, including dual-use ones. For example, an AI system that improves in biology is also better at creating biological weapons. An AI system that excels at computer programming is also better at hacking computer systems. If society can better understand the threats AI systems might amplify, it can more easily adapt to this shifting threat landscape.

We pose these questions to help build partnerships that strengthen the world’s capacity to navigate transformative AI and establish early warning systems for new threats that may arise. Many of these questions will guide our Frontier Red Teaming research agenda.

Assessing Risks and Dual-Use Capabilities:

Dual-use technologies: Powerful AI is inherently dual-use: a tool for improving healthcare and education or for surveillance and repression. Can we build observability tools to understand if and how this is happening?

Pricing risk appropriately: What are effective, market-driven approaches to improving societal resilience to anticipated threats from AI systems? Can we develop new methods for pricing risk or create technical tools and human organizations that improve resilience in advance of predictable threats (e.g., rising AI cyberattack capabilities)?

Offense-defense balance: Will AI-enabled capabilities fundamentally favor attackers in domains like cyberspace and biosecurity? Does AI also favor attackers when applied to more traditional domains, such as through increasing integration with command-and-control systems? More broadly, how will AI alter the nature of human conflict?

Developing Risk Mitigations:

Crisis response planning: During the Cold War, U.S. presidents had a direct hotline to the Kremlin for use in a nuclear crisis. What geopolitical infrastructure would be needed if AI systems triggered a crisis? This infrastructure might not be between nations—it could also be between companies or other entities.

Faster defenses: AI capabilities can advance significantly in months, while regulatory, insurance, and infrastructure responses take years. How can we bridge this gap? Can defensive mechanisms—like automated patching, AI threat detection, or pre-deployed response capabilities—keep pace with the speed and scale of AI attacks? Or is this asymmetry structural? How can we deploy these defenses as effectively as possible?

Intelligence Capabilities for Surveillance

AI’s impact on surveillance: How will AI transform how surveillance operates? Will it reduce costs, increase efficiency, or both?

AI Systems in Practice

Interactions between humans, organizations, and AI systems will be a major source of societal change. Understanding how AI systems might alter the people and institutions that interact with them is a core research area for our Societal Impact team. To study these shifts, we’re improving existing tools and developing new ones—ranging from software that improves platform observability to tools for conducting large-scale qualitative surveys.

AI’s Impact on Individuals and Society:

Group epistemology: What happens to our epistemology when large portions of the population reference the same few models? Can we find ways to measure large-scale shifts in beliefs, writing styles, and problem-solving approaches caused by shared AI use?

Critical thinking: As AI systems become increasingly capable and trustworthy, how can we detect and avoid degradation in human critical thinking skills due to growing reliance on AI’s judgment?

Technological interfaces: The interface of a technology determines how people interact with it—television made people passive viewers, while computers made it easier to be creative producers. What kinds of interfaces can we build so that AI systems improve and facilitate human autonomy?

Managing human-AI collaboration systems: How can humans effectively manage teams composed of humans and AI systems? Conversely, how should AI systems manage teams composed of humans, AI, or both?

Identifying Significant Impacts of AI:

Behavioral impacts: Just as social media has led to changes in human behavior, AI may shape human actions as well. What monitoring or measurement approaches could help researchers understand this dynamic?

Enabling research: Are there transparency mechanisms and tools that would allow a broad population (not just frontier AI companies) to easily study real-world AI applications?

Understanding and Governing AI Models:

System “values”: What “values” do AI systems express, and how are they connected to how the system is trained? More concretely, how can we measure the impact of an AI’s “composition” on its behavior post-deployment? We will expand on prior research addressing these questions.

Governance of autonomous agents: What aspects of existing laws, governance systems, and accountability mechanisms could apply to autonomous AI agents? For example, how maritime law handles abandoning ships is relevant to how law might handle unsupervised agents. Conversely, are there aspects of existing law that already apply to AI agents but really shouldn’t?

Agent reliability: What aspects of autonomous AI agents could be adjusted to fit within existing laws, governance systems, and accountability mechanisms? For instance, can we ensure AI agents have unique and reliable identities, even without direct human control?

AI governing AI: How can we effectively use AI to govern AI systems? In what areas of AI regulation do humans have a comparative advantage or are legally or normatively required to “be in the loop”?

Agent interactions: What norms will emerge from interactions between AI agents? How will different agents express different preferences, and how will those preferences affect other agents?

AI-Driven R&D

As AI systems become more capable, scientists are using them to conduct an increasing share of research. This means more scientific research is happening autonomously or semi-autonomously, with less human intervention. In AI research itself, increasingly capable systems may be used to develop subsequent versions of themselves. We sometimes refer to this paradigm as “AI-driven AI R&D.”

AI-driven AI R&D may be a “natural dividend” of building more intelligent and capable systems. Just as advances in coding capabilities have given rise to dual-use cyber capabilities and advances in scientific capabilities may give rise to dual-use biological capabilities, advances in sophisticated technical work may naturally produce AI systems capable of self-developing AI systems.

AI-driven AI R&D carries significant potential risks. For policymakers evaluating possible measures, it will be critical to understand trends in the pace of AI development and whether AI research will begin to compound on itself.

AI for AI R&D

Governance of AI R&D: If AI systems are used to autonomously develop and improve themselves, how can humans effectively understand and control these systems? Ultimately, what will govern these systems?

Intelligence explosion drills: How do we conduct intelligence explosion drills? How can we run tabletop exercises that actually test decision-making by lab leadership, boards, and governments?

AI R&D telemetry: How can we measure the overall pace of AI R&D? What telemetry and underlying technical support would be needed to gather this information? How could metrics related to AI R&D serve as early warning signals for recursive self-improvement?

Controlling AI takeoff speeds: If an intelligence explosion is coming, what intervention points could slow or shape its speed? Assuming humans could intervene, what entities should wield this ability—governments? Companies?

AI in R&D Beyond AI—Research in Other Domains Driven by AI:

Technology trees: AI accelerates certain scientific fields far more than others, depending on data availability, evaluation metrics, and how much knowledge is tacit or institutionally bounded. How uneven is this gradient of development? What human problems does the resulting variation in scientific progress imply will be prioritized?

Jagged frontiers: Model capabilities are stronger in some domains than others. Fields with massive positive externalities—like drug discovery and materials science—are underinvested in relative to their value. Markets guide model improvements based on private returns, but can we improve model performance for social externalities?


Source: Leikeji

Images in this article are from 123RF’s licensed image library.