Anthropic's Financial AI Agents 'Take Over' Wall Street

05/08 2026

Source | 01Caijing

Text | Yu Tu

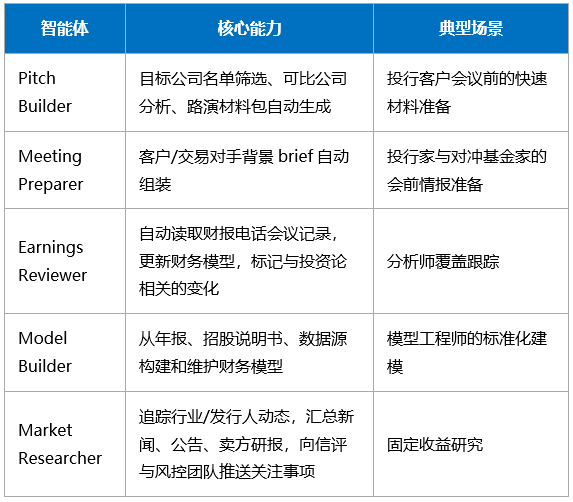

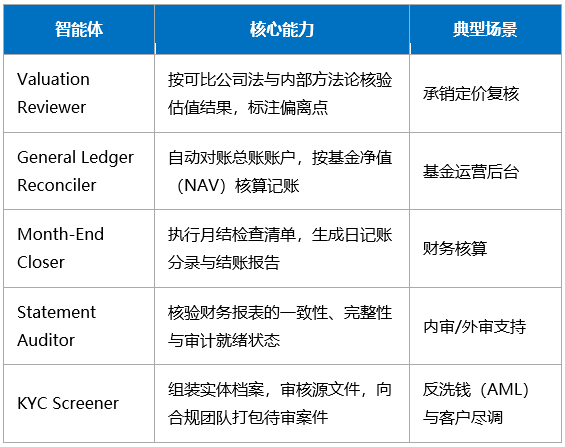

In early May, Anthropic announced in New York the launch of 10 AI agents specifically designed for the financial services industry, covering core scenarios such as investment banking research, valuation and pricing, financial operations, compliance due diligence, and audit risk control.

At the same time, the company announced a joint venture backed by $1.5 billion from top-tier global investment institutions including Blackstone, Goldman Sachs, and Apollo. The venture aims to promote the adoption of AI technology (especially Anthropic's Claude model) at companies held by private equity firms.

These agents are not general-purpose but are pre-designed and optimized for specific workflows or task types, offering out-of-the-box functionality that significantly lowers deployment barriers.

Based on business scenarios, these agents can be divided into two major categories:

1. Research and Customer Service

2. Finance and Operations

01

Anthropic's Financial AI Agent Architecture: Three-Tier Modular Design

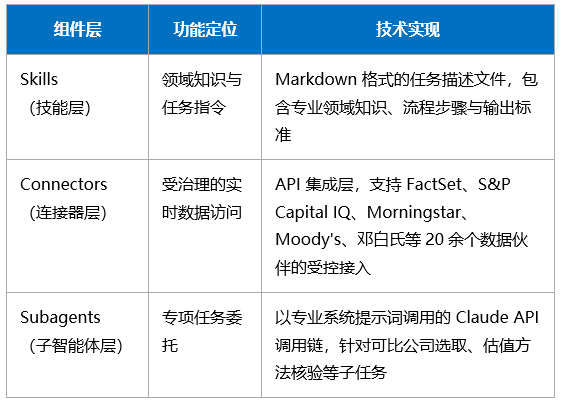

Anthropic encapsulates each financial agent template into a standardized architecture consisting of a skill layer, connector layer, and sub-agent layer.

The Register described this architecture as 'sounding complex but essentially just multi-step API calls.' Critics argue that Anthropic's terminology is over-packaged, while supporters believe the encapsulation lowers enterprise deployment barriers.
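To make the three layers concrete, here is a minimal Python sketch of how a skill, a connector, and a sub-agent might compose into one agent template. All class names, the `stub_filings` connector, and the `pitch-builder-sketch` template are hypothetical illustrations, not Anthropic's actual API; the point is only that a template reduces to an ordered chain of calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Skill:
    """Skill layer: a named, reusable instruction for one task type (hypothetical)."""
    name: str
    instructions: str


@dataclass
class Connector:
    """Connector layer: a governed data source the agent may query (hypothetical)."""
    name: str
    fetch: Callable[[str], dict]


@dataclass
class SubAgent:
    """Sub-agent layer: one step pairing a skill with the connectors it needs."""
    name: str
    skill: Skill
    connectors: List[Connector] = field(default_factory=list)

    def run(self, query: str) -> dict:
        # Query every connector, then return the data tagged with the skill used.
        data = {c.name: c.fetch(query) for c in self.connectors}
        return {"agent": self.name, "skill": self.skill.name, "data": data}


@dataclass
class AgentTemplate:
    """A template is an ordered chain of sub-agents, i.e. a multi-step call sequence."""
    name: str
    steps: List[SubAgent]

    def run(self, query: str) -> List[dict]:
        return [step.run(query) for step in self.steps]


def stub_filings(ticker: str) -> dict:
    """Stub standing in for a real data provider; returns made-up numbers."""
    return {"ticker": ticker, "revenue": 100.0}


comps = SubAgent(
    name="comps-builder",
    skill=Skill("comparable-company-analysis", "Build a comps table."),
    connectors=[Connector("filings", stub_filings)],
)
template = AgentTemplate(name="pitch-builder-sketch", steps=[comps])
results = template.run("ACME")
```

In a real deployment each `run` would wrap a model call; the sketch leaves that out so the layering itself stays visible.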

Anthropic offers two deployment paths:

Plugin Mode (Claude Cowork/Claude Code): Agents operate as assistants within analysts' desktop environments. For example, Pitch Builder can simultaneously output an Excel comparable company model, a PowerPoint pitch deck, and an Outlook follow-up email in a single task, with automatic context transfer between applications.

Hosted Agent Mode (Claude Managed Agent): Fully autonomous operation of large-scale or scheduled tasks on the Claude Platform, equipped with fine-grained tool permission controls, a credential vault, and complete operational audit logs. Each tool call and decision result can be retrospectively reviewed by compliance and engineering teams in the Claude Console.
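The combination of tool permission controls and audit logs can be sketched as follows. This is an illustrative Python sketch under stated assumptions, not the Claude Platform's implementation: `GovernedToolbox`, `ToolNotPermitted`, and the tool names are hypothetical, and the credential vault is omitted.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List, Set


class ToolNotPermitted(Exception):
    """Raised when an agent calls a tool outside its allow-list."""


@dataclass
class GovernedToolbox:
    allowed: Set[str]                      # tools this agent may call
    tools: Dict[str, Callable[..., Any]]   # all registered tools
    audit_log: List[dict] = field(default_factory=list)

    def call(self, agent: str, tool: str, **kwargs: Any) -> Any:
        permitted = tool in self.allowed and tool in self.tools
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "tool": tool,
            "args": kwargs,
            "permitted": permitted,
        }
        # Record the attempt before acting, so denied calls are logged too.
        self.audit_log.append(entry)
        if not permitted:
            raise ToolNotPermitted(f"{agent} may not call {tool}")
        result = self.tools[tool](**kwargs)
        entry["result"] = result
        return result


toolbox = GovernedToolbox(
    allowed={"read_ledger"},
    tools={
        "read_ledger": lambda account: {"account": account, "balance": 42.0},
        "post_journal_entry": lambda account, amount: "posted",
    },
)
balance = toolbox.call("month-end-closer", "read_ledger", account="1000")
try:
    toolbox.call("month-end-closer", "post_journal_entry", account="1000", amount=5.0)
    denied = False
except ToolNotPermitted:
    denied = True
```

After these two calls, `toolbox.audit_log` holds one permitted and one denied entry, which is the kind of record a compliance team could review after the fact.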

The underlying model supporting the entire solution is Claude Opus 4.7, which Anthropic claims leads the industry with a score of 64.37% in Vals AI's Finance Agent evaluation.

However, The Register commented, 'This failure rate would be grounds for immediate dismissal if it occurred in humans,' implying that an error rate of about 36% (100% minus the 64.37% score) remains unacceptable for financial professionals.

02

Regulatory Compliance: The Core Prerequisite for Financial AI Implementation

Anthropic explicitly makes human-in-the-loop (HITL) collaboration a design prerequisite for all financial agents: users must review, iterate, and approve agent outputs before submission to clients, regulatory filings, or execution.
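A human-in-the-loop gate of this kind can be sketched in a few lines of Python. The names `AgentOutput`, `Status`, and `submit` are hypothetical and only illustrate the principle that nothing leaves the system without a recorded human approval; the source does not describe Anthropic's actual mechanism at this level of detail.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class AgentOutput:
    content: str
    status: Status = Status.DRAFT
    reviewer: Optional[str] = None

    def review(self, reviewer: str, approve: bool) -> None:
        # Record who made the decision along with the decision itself.
        self.reviewer = reviewer
        self.status = Status.APPROVED if approve else Status.REJECTED


def submit(output: AgentOutput) -> str:
    """Refuse to release any output that a named human has not approved."""
    if output.status is not Status.APPROVED or output.reviewer is None:
        raise PermissionError("Agent output needs human approval before submission")
    return f"submitted (approved by {output.reviewer})"


draft = AgentOutput("Q1 valuation memo")
try:
    submit(draft)          # still a draft, so this is blocked
    blocked = False
except PermissionError:
    blocked = True

draft.review("analyst@example.com", approve=True)
receipt = submit(draft)
```

Placing the check inside `submit` rather than in the agent means the agent cannot bypass it, whatever it generates.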

Specific compliance mechanisms include: full operational auditing, fine-grained permission controls, credential vaults, governed data connections, and compliance adaptability.

Current U.S. financial regulatory requirements for AI agents can be summarized into three principles:

Transparent Disclosure: Enterprises must move from boilerplate language to substantive disclosures that accurately reflect what their AI can actually do; 'AI washing' is prohibited.

Adequate Oversight: AI governance must be deeply integrated with existing oversight frameworks, which means developing technology-specific procedures, monitoring third-party suppliers, and preventing AI errors and data misuse.

Human-Led: Oversight responsibilities cannot be fully delegated to algorithms; enterprises must maintain human-led, documented supervision that can systematically catch errors.

Outside the U.S., the EU AI Act classifies AI systems used for credit scoring as high-risk applications (Annex III expressly excepts systems used to detect financial fraud), requiring specialized documentation, transparency mechanisms, human review interfaces, and explainability pipelines.

Some of the connectors in this release (such as Dun & Bradstreet business identity verification and Moody's credit rating access) are precisely data governance layers designed to meet such regulatory requirements.

U.S. banking regulators (under SR 11-7) and the UK's Prudential Regulation Authority (under SS1/23) require formal model risk management for AI models, including independent validation, performance monitoring, and documentation.

However, most agent frameworks on the market do not ship with such governance infrastructure built in. Enterprises must construct it themselves after purchase, which creates significant compliance friction in practice.

03

Wall Street Welcomes Agents

Wall Street is receptive to agent applications.

JPMorgan Chase runs one of the largest financial AI deployments, with a 2026 technology budget of $19.8 billion and about $1.2 billion in incremental AI-specific spending. Its core systems include:

OmniAI Platform: Runs 450+ production models, uniformly managing feature definitions, model versions, and deployment pipelines;

LLM Suite: Provides a unified generative AI portal for 200,000+ global employees, with simultaneous access to OpenAI GPT and Anthropic Claude;

IndexGPT: A thematic investment tool combining large language models with natural language processing for wealth management clients.

JPMorgan Chase's proprietary data accumulated from processing nearly $10 trillion in daily cross-border payments is considered its core competitive moat in fraud detection and risk management.

CEO Jamie Dimon revealed at Anthropic's launch event that he personally created a comprehensive dashboard in 20 minutes using Claude Code.

JPMorgan Chase publicly disclosed that it expects AI to generate $1.5–2 billion in annual business value.

Goldman Sachs, working with Cognition Labs, deployed the autonomous programming agent Devin across more than 12,000 developers, achieving a 3–4x productivity improvement over GitHub Copilot.

Goldman Sachs CIO Marco Argenti proposed a 'hybrid workforce' framework: 'This is the first time what you're buying is no longer infrastructure but "intelligence" itself... It penetrates deeper into how we operate and think.'

04

Practices of Some Institutions

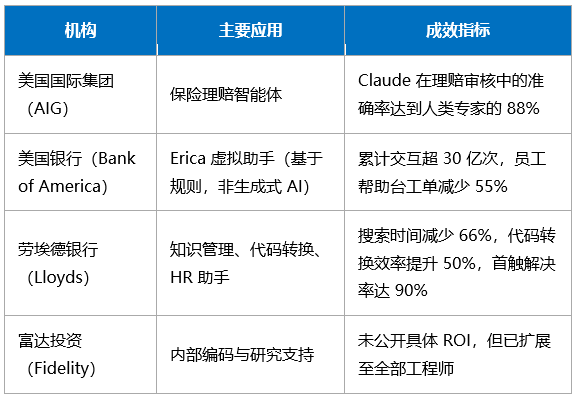

A growing number of financial institutions are putting AI agents into live business operations and achieving quantifiable gains in accuracy, efficiency, and cost. The figure above summarizes application cases from representative international institutions.

The case of Bank of America's Erica reveals an important distinction: Erica does not use generative AI or large language models but selects responses from predefined answer sets based on natural language intent classification.
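The difference from a generative model can be illustrated with a toy Python sketch of intent classification over a fixed answer set. This is not Erica's implementation: the intents, keywords, and responses are invented for illustration, and a production system would use a trained classifier rather than keyword overlap. What matters is that every reply is picked from a predefined table and never generated.

```python
import re
from typing import Dict, Set

# Hypothetical intents and keywords, invented for this sketch.
INTENT_KEYWORDS: Dict[str, Set[str]] = {
    "check_balance": {"balance", "much", "money"},
    "report_lost_card": {"lost", "stolen", "card"},
}

# Every possible reply is written in advance; nothing is generated.
RESPONSES: Dict[str, str] = {
    "check_balance": "Here is your current balance.",
    "report_lost_card": "I can lock your card and order a replacement.",
    "fallback": "Sorry, I did not understand. Let me connect you to an agent.",
}


def classify_intent(utterance: str) -> str:
    """Pick the intent whose keywords overlap most with the utterance."""
    tokens = set(re.findall(r"[a-z]+", utterance.lower()))
    scores = {intent: len(tokens & kws) for intent, kws in INTENT_KEYWORDS.items()}
    best = max(scores, key=lambda intent: scores[intent])
    return best if scores[best] > 0 else "fallback"


def respond(utterance: str) -> str:
    """Look up the canned answer for the classified intent."""
    return RESPONSES[classify_intent(utterance)]
```

Because the output space is closed, such a system cannot hallucinate a reply, but it also cannot answer anything outside its predefined intents.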

Gartner predicts that by the end of 2026, 30% of large financial institutions will deploy at least one production-grade AI agent in core business processes.

Bank CIOs are under pressure to prioritize AI agents, autonomous operations, and programmable money in their strategies, but regulatory uncertainty, data governance complexity, and talent gaps remain the three core obstacles.
