Price Hikes! Price Hikes! Price Hikes! A Global Computing Power and Storage 'Earthquake' Triggered by AI

03/19 2026

By 2026, artificial intelligence has evolved from a cutting-edge technology in laboratories to a ubiquitous presence in the daily operations of countless industries: businesses use AI for data analysis, intelligent customer service, and automated production; individuals rely on AI for writing copy, editing images, and making plans; governments leverage AI to optimize public services, urban management, and public safety.

Every AI interaction, computation, and data processing relies on the support of computing power and storage. Computing power serves as the 'brain' of AI, responsible for processing data and running models; storage acts as AI's 'memory bank,' storing data, model parameters, and operational logs.

For over a decade, cloud computing services have followed the trend of becoming 'cheaper with more usage,' while storage chips have seen consistent price reductions due to technological advancements and expanded production capacity. Both businesses and individuals have grown accustomed to low-cost cloud services and digital products.

However, starting in the second half of 2025, the global market suddenly witnessed a reversal: mainstream cloud providers such as Amazon AWS, Microsoft Azure, Alibaba Cloud, and Tencent Cloud raised prices for AI computing power, cloud storage, and server leasing, with some products seeing increases of up to 34%. Storage chip giants like Samsung, SK Hynix, and Micron followed suit, with prices for DRAM memory, NAND flash memory, and HBM high-bandwidth memory skyrocketing. Some storage chips experienced price hikes exceeding 500% within six months, driving up the costs of memory and hard drives for ordinary smartphones and computers as well.

This sudden wave of price increases is not a short-term market fluctuation or malicious price gouging by manufacturers. Instead, it stems from a structural transformation in the industrial chain triggered by the explosive global demand for AI computing power. AI, like a 'super money pit,' is voraciously consuming computing power and storage resources, leading to a supply shortage in upstream hardware production capacity and soaring costs for midstream cloud services, ultimately rippling across the entire market.

Explosive Demand for AI Computing Power: Why Is the World 'Competing for Computing Power'?

Computing power, simply put, refers to a computer's ability to process data and run programs, akin to the thinking speed of the human brain—the faster the brain works, the more efficient it is at solving problems.

Traditional computing power is primarily used for basic operations like daily office tasks, web browsing, and video playback. In contrast, AI computing power is high-performance computing designed specifically for training and inference of artificial intelligence models. It requires processing vast amounts of text, image, audio, and video data, running trillion-parameter large models, and demands computational speed, data transmission speed, and hardware performance tens or even hundreds of times greater than traditional computing power.

To draw an analogy, traditional computing power is like a family sedan, suitable for daily commuting; AI computing power is like an F1 race car, requiring extreme speed and performance to accomplish 'extreme tasks' such as training large AI models and processing multimodal data.

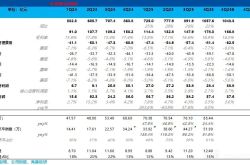

According to data from authoritative institutions such as the China Academy of Information and Communications Technology (CAICT), IDC, and Inspur Information, global demand for AI computing power is growing explosively in 2026. In terms of global scale, total computing power reaches 12.8 EFLOPS in 2026, a 58% year-on-year increase. Intelligent computing power accounts for over 80% of that total, officially overtaking general-purpose computing power as the dominant form of computing worldwide and marking the industry's full entry into the 'era of intelligent computing power.'

China's intelligent computing power reaches 1,590 EFLOPS, a 72% year-on-year increase and the highest growth rate globally. However, a 25%-30% gap remains in high-end computing power resources, and public intelligent computing centers are operating at near-full capacity. Global AI server shipments are projected to reach 3.2 million units in 2026, a 35% year-on-year increase. China's AI server market is expected to reach 350 billion yuan, up more than 40% year-on-year, with the computing power demand of a single AI server 8-10 times that of a traditional server.

In the past, AI computing power was primarily used for large model training. By 2026, with the widespread adoption of AI applications, inference computing power accounts for over 70% of demand, becoming the core driver. Every user interaction with AI agents, AI assistants, and industry-specific AI applications continuously consumes inference computing power, with token (the smallest unit of data processed by AI) usage growing exponentially. In February 2026, China's weekly AI model token calls surpassed 5 trillion, exceeding the U.S. for the first time.

Global AI-related spending is projected to reach $480 billion in 2026, a 33% year-on-year increase. The total capital expenditure of the world's top eight cloud providers exceeds $600 billion, a 40% annual increase, all allocated to building AI computing centers, servers, and storage infrastructure.

At the 2026 GTC conference, NVIDIA CEO Jensen Huang predicted that global market demand for AI computing power would reach $1 trillion by 2027—double the 2026 forecast—highlighting the frenzied demand for AI computing power.

Since the advent of ChatGPT in 2023, global tech giants and AI companies have been locked in an 'arms race' for large models. Firms like OpenAI, Google, Microsoft, Baidu, Alibaba, and ByteDance have continuously rolled out larger, more powerful models, scaling from hundred-billion to trillion and even ten-trillion parameters.

Training a trillion-parameter model requires tens of thousands of high-end AI chips, runs continuously for months, and consumes hundreds of millions of kilowatt-hours of electricity—demanding tens of thousands of times more computing power than traditional software. Moreover, large models require ongoing iteration and optimization, with training being a long-term, repetitive process that further drives up demand for computing power.

If large model training represents a 'one-time surge in computing power demand,' the deployment of AI applications signifies sustained computing power consumption. By 2026, AI has permeated every industry: Consumer side: AI chatbots, AI painting, AI writing, and AI office assistants handle billions of daily interactions. Enterprise side: AI drives smart manufacturing, logistics, finance, and healthcare, automating data processing, workflow optimization, and quality inspection. Industry side: Autonomous driving, smart cities, and industrial internet require real-time processing of massive sensor data.

These applications all demand real-time inference computing power. Just as smartphones constantly require battery power, AI applications continuously need computing support, transforming computing power demand from a 'niche training need for a few enterprises' into a 'universal societal need.'

In 2026, AI evolves from single-modal text processing to multimodal processing integrating text, images, audio, video, and 3D models, with each interaction processing volumes of data dozens of times greater than text alone. Simultaneously, the explosive growth of AI agents—which can autonomously complete tasks, access data, and iterate—results in token consumption per task being 5-10 times (or even 100 times in complex scenarios) that of traditional AI conversations, further exacerbating the computing power shortage.

While demand explodes, supply lags, plunging the world into a computing power deficit. Delivery cycles for high-end AI chips (NVIDIA H100, H200, AMD MI300) have stretched to 6-12 months, so even buyers with money in hand cannot obtain them. Demand for racks in intelligent computing centers outstrips supply, and leading cloud providers' AI computing resources are running at full capacity, forcing new customers to wait in line. Small and medium-sized enterprises (SMEs) cannot afford to build their own computing power and must rely on cloud services, which pushes demand for cloud computing even higher.

The computing power shortage directly serves as the core catalyst for price hikes in cloud services and storage chips—insufficient computing power necessitates server expansion and increased storage, but soaring hardware costs ultimately force price increases onto the market.

The Underlying Logic Behind the Shift from 'Cheaper with More Usage' to 'Across-the-Board Price Hikes'

From the second half of 2025 to 2026, the global cloud services market broke its decade-long tradition of 'only price decreases' and entered a comprehensive price hike cycle.

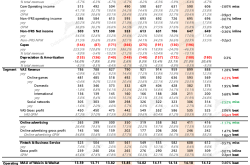

Among overseas providers, Amazon AWS led the way by raising prices for EC2 machine learning, cloud storage, and server leasing, with some AI computing power services seeing increases exceeding 20%. Microsoft Azure increased prices for AI inference and cloud databases by 15%-30%. Google Cloud followed suit, raising prices for high-end computing power resources by over 25%.

In China, Alibaba Cloud announced price hikes in March 2026 for AI computing power, object storage, and GPU server leasing, with maximum increases reaching 34%. Tencent Cloud, Baidu Intelligent Cloud, and Huawei Cloud subsequently adjusted their AI-related cloud service prices, with core computing power products generally seeing increases of 10%-25%.

In their price hike announcements, cloud providers consistently cited the same reasons: explosive demand for AI computing power, soaring upstream hardware costs, and substantial increases in infrastructure investment. This is not a case of cloud providers wanting to raise prices but of cost pressures becoming untenable.

Three core reasons lie behind the cloud service price hikes. First, upstream hardware costs have soared, with chip, storage, and server prices doubling. The core infrastructure of cloud services (servers, AI chips, and storage devices) saw dramatic price increases in 2025-2026. Prices for high-end GPU chips surged by over 50%, and with supply falling short of demand, cloud providers were forced to compete for limited stock at premium prices. Prices for HBM high-bandwidth memory, DDR5 memory, and enterprise-grade SSDs increased by over 60% within six months, with some products rising by more than 500%. An AI server costs 5-10 times as much as a traditional server; the 32 64GB memory sticks for a single H100 server alone cost more than 300,000 yuan to procure.

Hardware costs account for over 60% of cloud providers' total investments. With hardware prices doubling, cloud providers' operational costs skyrocket, necessitating price hikes to cover expenses.
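The pass-through logic above can be checked with simple arithmetic. The sketch below is illustrative only: it assumes hardware makes up exactly 60% of a provider's cost base (the article says "over 60%" of total investment), that hardware prices exactly double, and that every other cost stays flat. The function name `total_cost_multiplier` is hypothetical.

```python
def total_cost_multiplier(hardware_share: float, hardware_price_multiplier: float) -> float:
    """Return the new total cost as a multiple of the old total cost.

    Assumes only hardware prices change; every other cost stays flat.
    """
    if not 0.0 <= hardware_share <= 1.0:
        raise ValueError("hardware_share must be between 0 and 1")
    other_share = 1.0 - hardware_share
    return hardware_share * hardware_price_multiplier + other_share


# Assumed inputs, rounded from the article's figures.
multiplier = total_cost_multiplier(hardware_share=0.60, hardware_price_multiplier=2.0)
print(f"Total cost rises by about {(multiplier - 1) * 100:.0f}%")
```

Under these assumptions the total cost base rises by about 60%; a smaller hardware share or a smaller hardware price increase lowers the result proportionally.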

Second, infrastructure investment has surged. To meet AI computing power demand, cloud providers must build intelligent computing centers at scale, and construction costs run more than ten times those of traditional data centers. Traditional data center cabinets operate at 6-8kW, while AI intelligent computing center cabinets require 50-120kW, roughly 10-20 times higher. A 1GW computing power cluster consumes approximately 7,000 GWh of electricity annually, equivalent to the total annual electricity consumption of a medium-sized city. AI chips, with their massive power consumption, require liquid cooling systems, whose procurement and operational costs are 3-5 times those of traditional air cooling. Intelligent computing centers also demand larger sites and higher-bandwidth networks, with infrastructure investments reaching tens or even hundreds of billions of yuan.
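The electricity figure for a 1GW cluster can be sanity-checked with a short calculation. This is a minimal sketch under one assumption: that "1GW" refers to the cluster's power capacity, so annual energy equals capacity times hours per year times an average load factor. The names `annual_energy_gwh` and `implied_load_factor` are illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def annual_energy_gwh(capacity_gw: float, load_factor: float = 1.0) -> float:
    """Energy drawn in one year by a cluster with the given power capacity."""
    if not 0.0 <= load_factor <= 1.0:
        raise ValueError("load_factor must be between 0 and 1")
    return capacity_gw * HOURS_PER_YEAR * load_factor


# Upper bound: a 1 GW cluster running flat out all year.
full_load_gwh = annual_energy_gwh(1.0)

# Average load factor implied by the article's figure of about 7,000 GWh.
implied_load_factor = 7_000 / full_load_gwh

print(f"Full-load energy: {full_load_gwh:,.0f} GWh/year")
print(f"Implied average load: {implied_load_factor:.0%}")
```

On this reading, 7,000 GWh per year corresponds to an average load of roughly 80% of the full 1GW capacity.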

In 2026, China's 'East Data, West Computing' project added 800 billion yuan in infrastructure investment for computing power, while global cloud providers' investments in intelligent computing centers reached astronomical figures, all of which are ultimately passed on to cloud service prices.

Third, supply and demand have reversed. In the past, cloud services operated in a market where supply exceeded demand, with providers relying on low prices to capture market share. By 2026, explosive demand for AI computing power has turned high-end computing power and storage resources from 'general production materials' into 'scarce strategic resources,' creating a 'seller's market.'

Cloud providers use price hikes to both alleviate cost pressures and optimize resource allocation, prioritizing scarce computing power for high-value clients to avoid waste. For example, Alibaba Cloud allocated scarce AI computing power to token-based businesses, ensuring core operations' computing supply through price adjustments.

The costs of cloud service price hikes ultimately trickle down throughout the market: AI companies face soaring costs for large model training and inference, with SMEs in the AI sector struggling under 'computing power costs crushing enterprises,' forcing some to reduce R&D and lower service quality. SMEs relying on cloud services for operations see a 10%-30% increase in cloud service expenditures, driving up operational costs. Individual users face higher prices for cloud storage, cloud services, and AI tool memberships, increasing personal usage costs. Enterprises undergoing digital transformation in manufacturing, finance, and retail see increased cloud computing expenditures, raising digitalization costs.

The Global Storage 'Earthquake' Triggered by AI's 'Capacity Grab'

If cloud service price hikes represent the 'surface phenomenon,' then storage chip price increases are the core root of this computing power crisis. Storage chips serve as AI's 'data granary'—without them, even the most powerful computing power cannot process data or run models. From 2025-2026, the global storage chip market entered a 'super price hike cycle,' with DRAM, NAND, and HBM prices surging across the board, becoming the focal point of the global tech industry.

According to data from TrendForce, CFM Flash Market, and SK Hynix, contract prices for standard DRAM rose 55%-70% quarter-on-quarter in the first quarter of 2026, a cumulative increase of more than 500% over June 2025 levels, while inventories covered only about four weeks of demand, a historic low. Prices for enterprise-grade and consumer-grade NAND flash memory increased 40%-55% quarter-on-quarter, with Kioxia and Samsung selling out their entire 2026 NAND production capacity. Prices for HBM3 and HBM3E, specialized for AI servers, surged by over 100%; SK Hynix and Samsung sold out their 2026 HBM capacity in advance, and orders are booked through the end of 2027. SK Hynix's operating profit for fiscal 2025 reached 47.21 trillion won, with a profit margin of 49%, a record high, making storage chip manufacturers the biggest beneficiaries of this price hike cycle.
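Two of the figures above are easier to interpret once converted. The sketch below shows what a 500% increase means as a price multiple, and what revenue the SK Hynix numbers imply. It assumes the 49% figure is an operating margin (operating profit divided by revenue), which the article does not state explicitly; the function names are illustrative.

```python
def price_multiple(percent_increase: float) -> float:
    """Convert a percentage increase into a multiple of the starting price."""
    return 1.0 + percent_increase / 100.0


def implied_revenue(operating_profit: float, operating_margin: float) -> float:
    """Back out revenue from operating profit and operating margin."""
    if operating_margin <= 0:
        raise ValueError("operating_margin must be positive")
    return operating_profit / operating_margin


# A 500% increase means the price is 6 times its starting level, not 5 times.
dram_multiple = price_multiple(500)

# Assumption: 49% is operating profit / revenue. Units are trillion won.
revenue_trillion_won = implied_revenue(47.21, 0.49)

print(f"DRAM price multiple since June 2025: at least {dram_multiple:.0f}x")
print(f"Implied SK Hynix revenue: about {revenue_trillion_won:.1f} trillion won")
```

Because the article says prices rose by "more than" 500%, the 6x multiple is a lower bound.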

AI servers' demand for storage chips is exponentially higher than that of traditional servers. A single AI server requires 8-10 times more DRAM memory and three times more NAND flash memory than a traditional server. An NVIDIA H100 AI server needs 640GB of HBM, 2TB-4TB of DDR5, and 32TB-132TB of NAND, imposing extremely high quantity and performance requirements on storage chips. In 2026, global AI server shipments exceeded 3.2 million units, consuming 53% of the global monthly memory production capacity and directly squeezing storage capacity for traditional consumer electronics.
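The per-server memory figures can be reproduced from component counts. This sketch assumes a common 8-GPU server layout with 80 GB of HBM per H100 (neither is stated in the article), and reuses the article's earlier example of 32 memory sticks of 64GB each costing more than 300,000 yuan. All constant names are illustrative.

```python
GPUS_PER_SERVER = 8           # assumption: 8-GPU server configuration
HBM_PER_GPU_GB = 80           # assumption: 80 GB HBM variant of the H100
DIMM_COUNT = 32               # from the article's H100 server example
DIMM_SIZE_GB = 64             # from the article's H100 server example
DIMM_SET_COST_YUAN = 300_000  # article says "exceeding", so a lower bound

hbm_total_gb = GPUS_PER_SERVER * HBM_PER_GPU_GB
ddr5_total_gb = DIMM_COUNT * DIMM_SIZE_GB
ddr5_total_tb = ddr5_total_gb / 1024
cost_per_dimm_yuan = DIMM_SET_COST_YUAN / DIMM_COUNT

print(f"HBM per server:  {hbm_total_gb} GB")
print(f"DDR5 per server: {ddr5_total_gb} GB ({ddr5_total_tb:.0f} TB)")
print(f"Cost per 64GB stick: at least {cost_per_dimm_yuan:,.0f} yuan")
```

Under these assumptions the totals match the 640GB of HBM and the lower end of the 2TB-4TB DDR5 range quoted above.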

The explosive demand for AI servers has transformed storage chips from a 'supporting role' into a core strategic resource in the AI industrial chain, with global storage giants prioritizing AI servers' storage needs.

Storage chip production capacity is finite, requiring high-end wafer fabs and cleanrooms, with capacity expansion taking 2-3 years and unable to scale up rapidly in the short term. Driven by the high profits of AI demand, the three major storage giants—Samsung, SK Hynix, and Micron—actively adjusted their capacity structures: Shifting 70%-80% of their advanced production capacity to high-margin, AI-specific storage products like HBM, DDR5, and enterprise-grade SSDs. Drastically reducing capacity for traditional products like DDR4 and consumer-grade NAND, causing supply shortages and price increases for traditional storage chips.

This 'capacity tilt toward AI' strategy directly triggered a supply-demand imbalance across all storage chip categories, with both AI-specific high-end storage and consumer-grade storage for smartphones and computers facing supply tightness.

The core bottleneck in storage chip manufacturing is cleanroom space—an essential environment for storage chip production, with extremely high construction costs and long lead times, making short-term expansion impossible. SK Hynix explicitly stated: Given the explosion in AI demand and limited cleanroom space, storage chip supply cannot meet global demand in 2026, with price hikes inevitable throughout the year.

Meanwhile, the storage industry was in a downturn from 2023-2024, with manufacturers generally cutting capacity and reducing capital expenditures, resulting in no new capacity releases in 2025-2026 and further exacerbating supply shortages.

In the past, the storage industry operated as a 'cyclical industry,' with prices fluctuating based on supply and demand and manufacturers competing on low prices to capture market share. By 2026, AI demand had transformed the storage industry into a 'quasi-foundry' model, with cyclicality significantly weakened: Cloud providers and AI companies signed multi-year long-term contracts with storage giants, shifting from 'locking in both price and volume' to 'locking in volume but not price' to prioritize capacity supply. Storage manufacturers abandoned price wars, instead relying on high-end products and capacity advantages to generate profits, further driving up storage chip prices.

Storage chips are the 'staple food' of the electronics industry, and the ripple effects of price hikes have spread across the entire industrial chain. Consumer electronics price increases: For products such as mobile phones, computers, tablets, and solid-state drives, storage costs account for 10%-40% of material costs. Rising storage chip prices have led to increases in the prices of end-user products, making mobile phones and computers more expensive for ordinary consumers. Data center costs soar: Data centers of cloud providers and internet companies require massive amounts of storage chips. The rise in storage costs directly drives up the prices of cloud services and servers.

Automotive electronics prices rise: Autonomous driving and smart vehicles require terabyte-scale storage chips. The increase in storage prices has led to higher costs for vehicle intelligence.

Accelerated domestic substitution: The global storage shortage has created an opening for Chinese storage manufacturers. Products from companies such as Yangtze Memory Technologies (YMTC) and ChangXin Memory Technologies (CXMT) have shifted from 'alternatives' to 'viable options,' rapidly increasing the market share of Chinese-made storage.

No one—whether part of the industrial chain, businesses, or individuals—can remain unaffected.

The surge in AI computing power, cloud services, and storage chip prices is not an issue confined to a single industry. Instead, it represents a transformation that the global technology sector, real economy, and ordinary individuals must all confront, with impacts spanning upstream, midstream, and downstream sectors and touching every aspect of production, life, and consumption.

Upstream storage and chip manufacturers are entering a 'super boom cycle.' Storage chip and AI chip manufacturers have emerged as the biggest winners in this wave of price hikes, experiencing explosive revenue and profit growth while further consolidating industry concentration. Samsung, SK Hynix, and Micron monopolize the global HBM and high-end DRAM markets, wielding unprecedented pricing power. NVIDIA and AMD dominate the high-end AI chip market, with their market valuations continuing to climb. Semiconductor equipment and material manufacturers are benefiting from the expansion of storage and chip production capacity, with orders surging.

At the same time, the global semiconductor industrial chain is shifting from being 'consumer electronics-driven' to **'AI computing power-driven,'** with production capacity, technology, and capital all tilting toward the AI sector. Traditional consumer electronics supply chains are being squeezed as a result.

Midstream cloud providers are transitioning from 'low-price competition' to 'value competition.' Cloud providers are bidding farewell to the era of 'low prices to capture market share' and entering **'a value competition phase centered on computing power, technology, and service'**: Leading cloud providers are further increasing their market share by leveraging their computing power resources and technological advantages, while smaller cloud providers face elimination. Cloud providers are accelerating the development of self-designed chips, self-designed storage, and intelligent computing centers to reduce reliance on upstream hardware. Computing power leasing and MaaS (Model as a Service) have become new profit growth points for cloud providers. By 2026, the Chinese computing power leasing market is expected to reach RMB 260 billion.

The AI application industry is undergoing a brutal shakeout. Leading AI companies, leveraging their financial and computing power advantages, continue to iterate their products and dominate the market.

Small and medium-sized AI companies, burdened by high computing power costs and resource shortages, are being forced to exit the market or be acquired. The cost of digital transformation in traditional industries is rising, leading some companies to temporarily halt their AI initiatives and slow their digitalization progress.

Large enterprises are increasing their investments in computing power to seize the AI initiative. Large technology companies and industry leaders, with the financial resources and capabilities to build their own computing power centers and secure storage capacity, are turning AI computing power into a core competitive advantage. Internet giants, financial giants, and manufacturing giants are all building their own intelligent computing centers to reduce reliance on cloud services. They are signing long-term contracts with storage and chip manufacturers to secure capacity and control costs. They are accelerating the implementation of AI technologies to reduce costs and increase efficiency, offsetting the pressure of rising computing power and storage costs.

Small and medium-sized enterprises (SMEs) are the most direct victims of this wave of price hikes. Their spending on cloud services and AI tools has increased by 10%-30%, driving up operational costs.

Unable to afford the costs of building their own computing power infrastructure, they can only scale back their AI usage or opt for low-cost alternatives. Some micro and small enterprises that rely on AI are being forced to halt their AI-related businesses due to excessive costs.

Traditional industries such as manufacturing, retail, and agriculture, which originally relied on cloud computing and AI for digital transformation, are now facing significantly higher transformation costs. Computing power and storage expenditures for smart manufacturing and intelligent logistics have increased, lengthening the investment return cycle. Some SMEs are temporarily halting their digital transformation efforts, slowing down the industry's digitalization progress.

Digital product price increases: The prices of products such as mobile phones, computers, solid-state drives, and USB drives have generally increased by 10%-30% due to rising storage chip prices, making digital products more expensive for ordinary consumers. Cloud service and AI tool price hikes: The prices of cloud storage memberships, AI writing tools, AI painting tools, and office software memberships have increased, raising the cost of digital services for individuals. Rising costs of life services: The AI operational costs of platforms such as food delivery, e-commerce, and transportation are increasing, with some of these costs being passed on to consumers, leading to slight price increases for life services.

Moreover, computing power has become a 'new dimension of national strength.' Countries worldwide are recognizing that computing power is the national strength of the digital age and are elevating it to a national strategic priority. China is advancing the 'East Data, West Computing' project and increasing investments in intelligent computing centers, domestic chips, and domestic storage. The United States has revised the CHIPS and Science Act, adding $200 billion in computing power R&D investments to support its domestic chip and storage industries. The European Union has released a computing power strategy aiming to achieve 70% self-sufficiency in computing power by 2030.

The 'de-risking' of the global technology supply chain is accelerating. Shortages in storage, chips, and computing power have made countries worldwide aware of the importance of autonomous and controllable supply chains. China is accelerating the shift to domestically produced storage, chips, and computing power, with companies such as Yangtze Memory Technologies, ChangXin Memory Technologies, and Huawei Ascend rapidly rising. The United States and the European Union are promoting supply chain reshoring to reduce reliance on overseas storage and chip production capacity. The global technology supply chain is shifting from 'global division of labor' to 'regionalization and autonomy,' with its structure being completely reconfigured.

When will the wave of price hikes end? Where are AI computing power and storage headed?

According to industry organizations and manufacturers, the current wave of cloud service and storage chip price hikes is expected to continue until the end of 2026 and gradually stabilize by 2027. The core basis for this judgment is as follows: From the second half of 2026 to 2027, new production capacity from storage giants and chip manufacturers will gradually come online, and the production capacity of domestic storage and domestic chips will rapidly increase, gradually alleviating the supply shortage.

On the demand side, by 2027, the growth rate of AI computing power demand will shift from 'exponential explosion' to 'steady growth.' The large model arms race will become more rational, and the deployment of AI applications will enter a stable phase, slowing down demand growth. The price inflection point for HBM and high-end storage is expected in early 2028, while prices for traditional storage and cloud services will gradually stabilize in 2027 and stop rising sharply.

SK Hynix, Samsung, and other manufacturers have clearly stated that 2026 will be the peak for storage prices, with prices gradually declining in 2027 as production capacity is released.

The future core formula for the AI industry will be: AI capability = (computing power × storage capability × network capability) / power consumption. Computing power, storage, networking, and power will achieve coordinated development. Computational storage integration technology will become widespread, reducing data transmission and improving computing efficiency. Green power and liquid cooling technologies will become standard, addressing the energy consumption bottleneck of computing power. Computing power networks will enable cross-regional scheduling, breaking down 'computing power silos.'
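The formula above can be written as a small function to show how its terms trade off. This is only a sketch: the article gives no units or scale for any term, so the inputs here are treated as dimensionless values relative to a baseline of 1.0, and the name `ai_capability_index` is hypothetical.

```python
def ai_capability_index(compute: float, storage: float, network: float, power: float) -> float:
    """Relative index: (compute x storage x network) / power consumption.

    All inputs are relative to an arbitrary baseline of 1.0 (an assumption;
    the article does not define units for any term).
    """
    if power <= 0:
        raise ValueError("power must be positive")
    return compute * storage * network / power


baseline = ai_capability_index(1.0, 1.0, 1.0, 1.0)
more_compute = ai_capability_index(2.0, 1.0, 1.0, 1.0)
more_compute_and_power = ai_capability_index(2.0, 1.0, 1.0, 2.0)

print(baseline, more_compute, more_compute_and_power)
```

In this form, doubling computing power doubles the index only if power consumption stays flat; if power consumption doubles as well, the index returns to its baseline, which is why the article pairs the formula with green power and liquid cooling.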

China's storage, chip, and computing power industries will rise rapidly. From 2026 to 2028, the market share of domestic storage is expected to increase to over 25%. Domestic AI chips will achieve substitution in the mid-to-low-end market and gradually break through in the high-end market. The global technology supply chain will form a 'Sino-U.S. dual-cycle' pattern, with autonomous control becoming the core trend.

With capacity expansion and technological innovation, AI computing power will become a universal infrastructure for society, much like water and electricity. Computing power costs will gradually decline, enabling SMEs and individuals to use AI computing power at low cost. Computing power services will become more inclusive, driving the deep deployment of AI across industries. Computing power and storage technologies will continue to iterate, improving performance and reducing costs to support the sustained progress of AI technology.

An inevitable transformation in the AI era, with both opportunities and challenges

The global surge in AI computing power demand, triggering collective price hikes in cloud services and storage chips, is not a short-term market fluctuation but an inevitable signal of the arrival of the AI era. AI has become the core driving force behind global technological, economic, and social development. Computing power and storage, as the 'infrastructure' of AI, are undergoing a fundamental transformation from 'supporting roles' to 'leading actors.'

This wave of price hikes has imposed short-term cost pressures on businesses and individuals but has also driven upgrades in the global technology industry. Storage and chip manufacturers are accelerating technological innovation, cloud providers are optimizing service efficiency, countries are increasing investments in core technology R&D, and domestic substitution is advancing rapidly. In the long run, this transformation will make AI computing power and storage more mature and inclusive, ultimately driving AI technology into every industry and every household, allowing artificial intelligence to truly benefit humanity.

This is a challenge, but even more so, an opportunity. By seizing the window of opportunity opened by the AI computing power transformation, optimizing costs, and deploying technologies, one can gain an early advantage in the AI era. For individuals, there is no need for excessive anxiety. As the industry matures, prices will eventually return to rational levels, and we will all enjoy the convenience and dividends brought by AI.

The curtain has risen on the AI era, and the transformation of computing power and storage is just beginning. This restructuring of the global technology industry will profoundly influence the development landscape of the next decade or even two decades, and each of us is a witness and participant in this transformation.